HP PA-8800, PA-8600, PA-8700 User Manual

QuickSpecs

HP 9000 Superdome Servers

(PA-8600, PA-8700 and PA-8800)

Overview

This subchapter pertains to all HP 9000 Superdome servers (running PA-8600, PA-8700 or PA-8800 processors) for all markets. With Superdome, HP launched a new strategy to ensure a positive Total Customer Experience (TCE) through industry-leading HP Services. Our experience has shown that large solution implementations most often succeed when appropriate skills and attention are applied to solution design and implementation. To address the implementation side, HP is responding to customer and industry feedback by delivering Superdome configurations through three pre-configured service levels: Critical Service, Proactive Service and Foundation Service. With Superdome, HP also introduced a new role, the TCE Manager, who manages the fulfillment of an integrated business solution based on customer requirements. For each customer account, the TCE Manager facilitates the selection of the appropriate configuration. For ordering instructions, please consult the ordering guide.

Superdome Service Solutions

Superdome continues to provide the same positive Total Customer Experience through industry-leading HP Services as existing Superdome servers. The HP Services component of Superdome is described here:

Solution Life Cycle

HP customers have consistently achieved higher levels of satisfaction when key components of their IT infrastructures are implemented using the Solution Life Cycle. The Solution Life Cycle focuses on rapid productivity and maximum availability by examining customers' specific needs at each of five distinct phases (plan, design, integrate, install, and manage) and then designing their Superdome solution around those needs.

DA - 11721 North America — Version 13 — April 1, 2005


Service Solutions

HP offers three pre-configured service solutions for Superdome that give customers a choice of lifecycle services to address their individual business requirements.

Foundation Service Solution: This solution reduces design problems, speeds time-to-production, and lays the groundwork for long-term system reliability by combining pre-installation preparation and integration services, hands-on training and reactive support. This solution includes HP Support Plus 24, which provides an integrated set of 24×7 hardware and software services as well as software updates for selected HP and third-party products.

Proactive Service Solution: This solution builds on the Foundation Service Solution by enhancing the management phase of the Solution Life Cycle with HP Proactive 24, which complements your internal IT resources with proactive assistance and reactive support. Proactive Service Solution helps reduce design problems, speed time-to-production, and lay the groundwork for long-term system reliability by combining pre-installation preparation and integration services with hands-on staff training and transition assistance. With HP Proactive 24 included in your solution, you can optimize the effectiveness of your IT environment with access to an HP-certified team of experts who can help you identify potential areas of improvement in key IT processes and implement the changes needed to increase availability.

Critical Service Solution: This solution maintains mission-critical environments by combining proactive and reactive support services to ensure maximum IT availability and performance for companies that cannot tolerate downtime without serious business impact. Critical Service Solution encompasses the full spectrum of deliverables across the Solution Life Cycle, with HP Critical Service as the core of the management phase. This total solution provides maximum system availability and reduces design problems, speeds time-to-production, and lays the groundwork for long-term system reliability by combining pre-installation preparation and integration services, hands-on training, transition assistance, remote monitoring, and mission-critical support. As part of HP Critical Service, a team of HP-certified experts assists with the transition process, teaches your staff how to optimize system performance, and monitors your system closely so that potential problems are identified before they can affect availability.

Other Services

HP's Mission Critical Partnership: This service offering gives you the opportunity to create a custom agreement with Hewlett-Packard to achieve the level of service you need to meet your business requirements. This level of service can help you reduce the business risk of a complex IT infrastructure by aligning IT service delivery with your business objectives, enabling a high rate of business change, and continuously improving service levels. HP works with you proactively to eliminate downtime and improve IT management processes.

Service Solution Enhancements: HP's full portfolio of services is available to enhance your Superdome Service Solution and address your specific business needs. Services spanning multiple operating systems, as well as other platforms such as storage and networks, can be combined to complement your total solution.


Standard Features

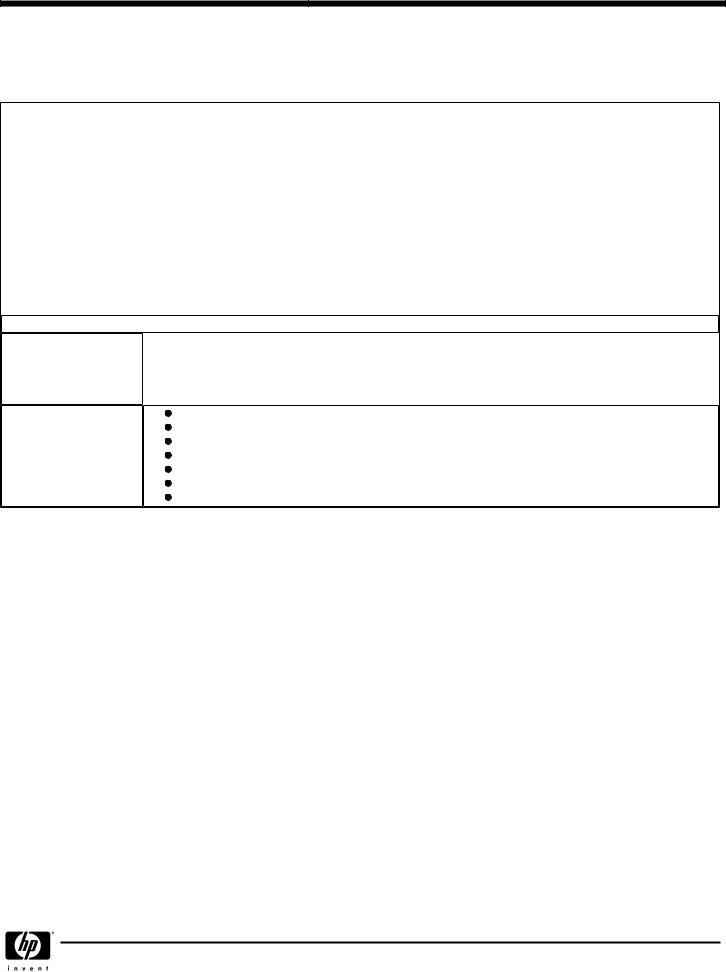

NOTE: Because PA-8600/PA-8700 are single-core processors and PA-8800 is a dual-core processor, the system sizes in this table are given as 16, 32 and 64 sockets. These correspond to 16-way, 32-way and 64-way Superdome PA-8600/PA-8700 systems and to 32-way, 64-way and 128-way Superdome PA-8800 systems.

| System Size | Component | Minimum System: PA-8600 or PA-8700 | Minimum System: PA-8800 | Maximum SPU Capacities: PA-8600 or PA-8700 | Maximum SPU Capacities: PA-8800 |
|---|---|---|---|---|---|
| 16 Sockets | CPUs | 1 | 2 | 16 | 32 |
| | Memory | 2 GB | 2 GB | 64 GB | 256 GB |
| | Cell Boards | 1 Cell Board | 1 Cell Board | 4 Cell Boards | 4 Cell Boards |
| | PCI Chassis | 1 12-slot chassis | 1 12-slot chassis | 4 12-slot chassis | 4 12-slot chassis |
| 32 Sockets | CPUs | 1 | 2 | 32 | 64 |
| | Memory | 2 GB | 2 GB | 128 GB | 512 GB |
| | Cell Boards | 1 Cell Board | 1 Cell Board | 8 Cell Boards | 8 Cell Boards |
| | PCI Chassis | 1 12-slot chassis | 1 12-slot chassis | 8 12-slot chassis | 8 12-slot chassis |
| 64 Sockets | CPUs | 6 | 6 | 64 | 128 |
| | Memory | 6 GB | 6 GB | 256 GB | 1024 GB (1) |
| | Cell Boards | 3 Cell Boards | 3 Cell Boards | 16 Cell Boards | 16 Cell Boards |
| | PCI Chassis | 1 12-slot chassis | 1 12-slot chassis | 16 12-slot chassis | 16 12-slot chassis |

Redundant Power Supply

Redundant Fans

HP-UX operating system with unlimited user license

Factory integration of memory and I/O cards

Installation Guide, Operator's Guide, and Architecture Manual

HP site planning and installation

One-year warranty with same-business-day on-site service response
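The maximum-capacity columns of the capacity table can be rederived from per-cell figures given in the cell notes of this QuickSpecs: four sockets per cell, one core per PA-8600/PA-8700 socket and two per PA-8800 socket, a per-cell memory ceiling of 16 GB (PA-8600/PA-8700) or 64 GB (PA-8800 with 2-GB DIMMs), and at most one I/O chassis per cell. The helper below is a hypothetical sketch for checking that arithmetic, not an HP configuration tool; the minimum-system columns are order rules and are not derived here.

```python
# Hypothetical helper: rederive the maximum-capacity columns of the table
# from per-cell figures stated in this QuickSpecs.
SOCKETS_PER_CELL = 4
CORES_PER_SOCKET = {"PA-8600": 1, "PA-8700": 1, "PA-8800": 2}
MAX_GB_PER_CELL = {"PA-8600": 16, "PA-8700": 16, "PA-8800": 64}


def max_capacity(sockets: int, processor: str) -> dict:
    """Return maximum cells, CPUs (cores), memory in GB and 12-slot PCI chassis."""
    if sockets not in (16, 32, 64):
        raise ValueError("Superdome sizes are 16, 32 or 64 sockets")
    cells = sockets // SOCKETS_PER_CELL
    return {
        "cells": cells,
        "cpus": sockets * CORES_PER_SOCKET[processor],
        "memory_gb": cells * MAX_GB_PER_CELL[processor],
        "pci_chassis": cells,  # at most one I/O chassis per cell
    }


for size in (16, 32, 64):
    print(size, max_capacity(size, "PA-8700"), max_capacity(size, "PA-8800"))
```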


Configuration

There are three basic building blocks in the Superdome system architecture: the cell, the crossbar backplane and the I/O subsystem. Please note that Superdome with PA-8800 is based on a different chip set (sx1000) than Superdome with PA-8600 or PA-8700. For more information on the sx1000 chip set, please refer to the HP Integrity Superdome QuickSpec.

Cabinets

A Superdome system can consist of up to four different types of cabinet assemblies:

At least one Superdome left cabinet. The Superdome cabinets contain all of the processors, memory and core devices of the system. They also house most (usually all) of the system's I/O cards. Systems may include both left and right cabinet assemblies, containing a left or right backplane respectively.

One or more HP Rack System/E cabinets. These 19-inch rack cabinets are used to hold the system peripheral devices such as disk drives.

Optionally, one or more I/O expansion cabinets (Rack System/E). An I/O expansion cabinet is required when a customer needs more PCI cards than can be accommodated in their Superdome cabinets.

Superdome cabinets are serviced from the front and rear only. This enables customers to arrange the cabinets of their Superdome system in the traditional row fashion found in most computer rooms. The cabinet is narrow enough to move through common doorways in the U.S. and Europe. The intake air to the main (cell) card cage is filtered; the filter can be removed for cleaning or replacement while the system is fully operational.

A status display is located on the outside of the front and rear doors of each cabinet, so customers and field engineers can determine the basic status of each cabinet without opening any cabinet doors.

For PA-8600 and PA-8700 processors (single core per processor):

Superdome 16-way: single cabinet (a left cabinet)

Superdome 32-way: single cabinet (a left cabinet)

Superdome 64-way: dual cabinet (a left cabinet and a right cabinet)

For PA-8800 processors (dual core per processor):

Superdome 32-way: single cabinet (a left cabinet)

Superdome 64-way: single cabinet (a left cabinet)

Superdome 128-way: dual cabinet (a left cabinet and a right cabinet)

Each Superdome cabinet holds up to eight cell boards (CPUs and memory) and up to four I/O chassis. See the following sections for the configuration rules that apply to each cabinet. The base configuration product numbers for each of the models are listed below.


Cells (CPUs and Memory)

A cell, or cell board, is the basic building block of a Superdome system. It is a symmetric multiprocessor (SMP) containing:

Four sockets that can hold PA-8600, PA-8700 or PA-8800 processors (only one processor type is allowed per cell)

Memory: up to 16 GB RAM with 512-MB DIMMs, 32 GB with 1-GB DIMMs, or 64 GB with 2-GB DIMMs (NOTE: 1-GB and 2-GB DIMMs are supported only with Superdome PA-8800)

One cell controller (CC)

Power converters

Data buses

Optional link to I/O card cage
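The three per-cell memory ceilings listed above are consistent with a fixed number of DIMM slots per cell. The number of slots is not stated in this document; the sketch below only infers it by dividing each ceiling by its DIMM size, assuming each ceiling is reached with DIMMs of a single size.

```python
# Per-cell memory ceilings from the list above, in MB, keyed by DIMM size in MB.
CEILINGS_MB = {512: 16 * 1024, 1024: 32 * 1024, 2048: 64 * 1024}


def implied_dimm_slots(dimm_mb: int) -> int:
    """DIMMs needed to reach the per-cell ceiling using only this DIMM size."""
    return CEILINGS_MB[dimm_mb] // dimm_mb


slots = {size: implied_dimm_slots(size) for size in CEILINGS_MB}
print(slots)  # {512: 32, 1024: 32, 2048: 32}
```

All three ceilings point to the same 32 slots, which suggests the ceiling scales with DIMM size rather than with slot count.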

Please note the following:

PA-8600 and PA-8700 processors can be mixed within a Superdome system, but they must reside on different cells and in different partitions. PA-8800 processors cannot be mixed with PA-8600 or PA-8700 processors within a Superdome system.

A Superdome PA-8800 system can support up to 1 TB of memory with 2-GB DIMMs. However, because of an HP-UX 11i limitation, the maximum amount of memory supported in a partition is 512 GB.

For PA-8600 and PA-8700 processors: The minimum configuration includes one active CPU and 2 GB of memory per cell board. The maximum configuration includes four active CPUs and 16 GB of memory per cell board. Each cell board ships with four PA-8600 or PA-8700 CPUs; depending on the number of active CPUs ordered, one through four CPUs are activated prior to shipment.

For PA-8800 processors: The minimum configuration includes four active processors and 2 GB of memory per cell board. The maximum configuration includes eight active processors and 64 GB of memory per cell board using 2-GB DIMMs. Each cell board ships with either four or eight PA-8800 CPUs. The minimum number of active processors on each cell board is four. Additional processors on the cell board can be activated individually (not in pairs).

512-MB, 1-GB and 2-GB (future) DIMMs can be mixed on a cell board in Superdome PA-8800 systems only.
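The notes above amount to a small set of configuration rules. The following is a minimal sketch, assuming a hypothetical data model (the `Cell` class and the `*_errors` functions are illustrative names, not an HP tool), that encodes the per-cell CPU and memory limits, the allowed DIMM sizes, the processor-mixing rules and the 512-GB HP-UX 11i per-partition limit (applied here to every partition).

```python
from dataclasses import dataclass, field

# Per-cell limits taken from the notes above (hypothetical encoding).
LIMITS = {
    "PA-8600": {"cpus": (1, 4), "mem_gb": (2, 16), "dimms_mb": {512}},
    "PA-8700": {"cpus": (1, 4), "mem_gb": (2, 16), "dimms_mb": {512}},
    "PA-8800": {"cpus": (4, 8), "mem_gb": (2, 64), "dimms_mb": {512, 1024, 2048}},
}
PARTITION_MAX_GB = 512  # HP-UX 11i per-partition memory limit


@dataclass
class Cell:
    processor: str
    active_cpus: int
    dimms_mb: list = field(default_factory=list)

    @property
    def mem_gb(self) -> float:
        return sum(self.dimms_mb) / 1024


def cell_errors(cell: Cell) -> list:
    lim = LIMITS[cell.processor]
    errs = []
    lo, hi = lim["cpus"]
    if not lo <= cell.active_cpus <= hi:
        errs.append(f"{cell.processor}: {cell.active_cpus} active CPUs outside {lo}-{hi}")
    lo, hi = lim["mem_gb"]
    if not lo <= cell.mem_gb <= hi:
        errs.append(f"memory {cell.mem_gb:g} GB outside {lo}-{hi} GB")
    bad = set(cell.dimms_mb) - lim["dimms_mb"]
    if bad:
        errs.append(f"unsupported DIMM sizes {sorted(bad)} MB for {cell.processor}")
    return errs


def partition_errors(cells: list) -> list:
    errs = [e for c in cells for e in cell_errors(c)]
    types = {c.processor for c in cells}
    if len(types) > 1:  # PA-8600 and PA-8700 must be in different partitions
        errs.append(f"mixed processor types in one partition: {sorted(types)}")
    total = sum(c.mem_gb for c in cells)
    if total > PARTITION_MAX_GB:
        errs.append(f"{total:g} GB exceeds the {PARTITION_MAX_GB} GB partition limit")
    return errs


def system_errors(partitions: list) -> list:
    errs = []
    types = {c.processor for p in partitions for c in p}
    if "PA-8800" in types and types & {"PA-8600", "PA-8700"}:
        errs.append("PA-8800 cannot be mixed with PA-8600/PA-8700 in one system")
    return errs + [e for p in partitions for e in partition_errors(p)]
```

For example, a fully populated 16-cell PA-8800 system (64 GB per cell) fails as a single partition at 1024 GB but passes as two 8-cell partitions of 512 GB each.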

Crossbar Backplane

Each crossbar backplane contains two sets of two crossbar chips that provide a non-blocking connection between eight cells and the other backplane. Each backplane cabinet can support up to eight cells, resulting in a Superdome PA-8600 or PA-8700 32-way or a Superdome PA-8800 64-way system. A backplane supporting four cells results in a Superdome PA-8600 or PA-8700 16-way or a Superdome PA-8800 32-way system. Two backplanes can be linked together with flex cables to produce a dual-cabinet system that supports up to 16 cells, resulting in a Superdome PA-8600 or PA-8700 64-way or a Superdome PA-8800 128-way system.
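The backplane paragraph maps a cell capacity to a cabinet count and a system "way" count. A minimal sketch of that mapping, assuming one backplane per cabinet (as the left/right cabinet descriptions imply) and counting PA-8800 ways as cores (four dual-core sockets, so eight per cell); the function name is hypothetical.

```python
CELLS_PER_BACKPLANE = 8  # one backplane per Superdome cabinet
CPUS_PER_CELL = {"PA-8600": 4, "PA-8700": 4, "PA-8800": 8}  # 4 sockets x cores per socket


def backplane_layout(max_cells: int, processor: str) -> dict:
    """Map a backplane cell capacity (4, 8 or 16) to cabinets and system 'ways'."""
    if max_cells not in (4, 8, 16):
        raise ValueError("backplane configurations support 4, 8 or 16 cells")
    backplanes = -(-max_cells // CELLS_PER_BACKPLANE)  # ceiling division
    return {
        "backplanes": backplanes,
        "cabinets": backplanes,  # two flex-cabled backplanes = left + right cabinet
        "ways": max_cells * CPUS_PER_CELL[processor],
    }


for cells in (4, 8, 16):
    print(cells, backplane_layout(cells, "PA-8700"), backplane_layout(cells, "PA-8800"))
```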


I/O Subsystem

Each I/O chassis provides twelve I/O slots. Superdome PA-8600 and Superdome PA-8700 support I/O chassis with 12 PCI 66-capable slots: eight supported via single (1x) ropes (266 MB/s peak) and four supported via dual (2x) ropes (533 MB/s peak). Superdome PA-8800 supports I/O chassis with 12 PCI-X 133-capable slots: eight supported via single enhanced (2x) ropes (533 MB/s peak) and four supported via dual enhanced (4x) ropes (1066 MB/s peak).
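Adding up the per-slot rope figures gives a rough sense of how much the enhanced ropes raise the slot-side peak of a single chassis. The sketch below is only that sum of quoted per-slot peaks; it is not a measured or guaranteed chassis throughput, and the capacity of the cell-to-chassis link, which may cap it, is not stated in this document.

```python
# Peak per-slot rope bandwidth quoted above, in MB/s. The "2x" entry covers both
# the PA-8600/PA-8700 dual rope and the PA-8800 single enhanced rope (533 MB/s each).
ROPE_MB_S = {"1x": 266, "2x": 533, "4x": 1066}


def chassis_peak_mb_s(slots_by_rope: dict) -> int:
    """Sum of per-slot peak rope bandwidth for one 12-slot I/O chassis."""
    if sum(slots_by_rope.values()) != 12:
        raise ValueError("an I/O chassis has 12 slots")
    return sum(count * ROPE_MB_S[rope] for rope, count in slots_by_rope.items())


pa8700 = chassis_peak_mb_s({"1x": 8, "2x": 4})  # PCI 66 chassis
pa8800 = chassis_peak_mb_s({"2x": 8, "4x": 4})  # PCI-X 133 chassis
print(pa8700, pa8800)  # 4260 8528
```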

Each Superdome cabinet supports a maximum of four I/O chassis. The optional I/O expansion cabinet can support three I/O chassis enclosures (ICE), each of which supports two I/O chassis, for a maximum of six I/O chassis per I/O expansion cabinet.

Each I/O chassis connects to one cell board, and the number of I/O chassis supported depends on the number of cells present in the system. A Superdome system can have more cells than I/O chassis; for instance, an 8-cell Superdome can have one to eight I/O chassis. Each partition must have at least one I/O chassis, and the number of I/O chassis cannot exceed the number of cells.

A 4-cell Superdome supports four I/O chassis for a maximum of 48 PCI slots.

An 8-cell Superdome supports eight I/O chassis for a maximum of 96 PCI slots. Since a single Superdome cabinet supports only four I/O chassis, an I/O expansion cabinet and two I/O chassis enclosures are required to support all eight I/O chassis.

A 16-cell Superdome supports 16 I/O chassis for a maximum of 192 PCI slots. Since two Superdome cabinets (left and right) support only eight I/O chassis, two I/O expansion cabinets and four I/O chassis enclosures are required to support all 16 I/O chassis. The four I/O chassis enclosures are spread across the two I/O expansion cabinets, either three ICE in one I/O expansion cabinet and one ICE in the other, or two ICE in each.
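The three worked cases above follow from a few capacity constants: four I/O chassis per Superdome cabinet, two per ICE, and three ICE per I/O expansion cabinet. A minimal sketch (hypothetical function name) that computes the minimum number of ICE and expansion cabinets; it does not choose between the 3+1 and 2+2 ICE placements mentioned above.

```python
import math

CHASSIS_PER_SD_CABINET = 4
CHASSIS_PER_ICE = 2
ICE_PER_IOX_CABINET = 3
SLOTS_PER_CHASSIS = 12


def io_expansion(cells: int, io_chassis: int, sd_cabinets: int) -> dict:
    """Minimum ICE and I/O expansion cabinets needed for a given I/O chassis count."""
    if sd_cabinets not in (1, 2) or not 1 <= cells <= 8 * sd_cabinets:
        raise ValueError("1 cabinet holds up to 8 cells; 2 cabinets up to 16")
    if not 1 <= io_chassis <= cells:
        raise ValueError("each I/O chassis attaches to its own cell")
    overflow = max(0, io_chassis - sd_cabinets * CHASSIS_PER_SD_CABINET)
    ice = math.ceil(overflow / CHASSIS_PER_ICE)
    return {
        "ice": ice,
        "iox_cabinets": math.ceil(ice / ICE_PER_IOX_CABINET),
        "pci_slots": io_chassis * SLOTS_PER_CHASSIS,
    }


print(io_expansion(8, 8, 1))    # one expansion cabinet, two ICE, 96 slots
print(io_expansion(16, 16, 2))  # two expansion cabinets, four ICE, 192 slots
```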

Core I/O

Superdome's core I/O provides the base set of I/O functions required by every Superdome partition. Each partition must have at least one core I/O card in order to boot. Multiple core I/O cards may be present within a partition (one core I/O card is supported per I/O backplane); however, only one may be active at a time. Core I/O uses the standard long-card PCI form factor but adds a second card cage connection to the I/O backplane for additional non-PCI signals (USB and utilities). This secondary connector does not prevent standard PCI cards from being used in the core slot when a core I/O card is not installed.

Any I/O chassis can hold the core I/O card that each independent partition requires. A system configured with 16 cells, each with its own I/O chassis and core I/O card, could support up to 16 independent partitions. Note that cells can be configured without an I/O chassis attached, but an I/O chassis cannot be configured in the system unless it is attached to a cell.

The core I/O card's primary functions are:

Partitions (console support) including USB and RS-232 connections

10/100Base-T LAN (general purpose)

Other common functions, such as Ultra/Ultra2 SCSI, Fibre Channel, and Gigabit Ethernet, are not included on the core I/O card. These functions are supported as normal add-in cards.
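The core I/O and I/O chassis rules can be checked per partition. The following is a minimal sketch under a hypothetical data model (`IOChassis`, `CellIO` and the two functions are illustrative names): an I/O chassis exists only as an attribute of a cell, each partition needs at least one chassis and at least one core I/O card, and a chassis holds at most one core I/O card.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class IOChassis:
    core_io_cards: int = 0  # at most one core I/O card per I/O backplane


@dataclass
class CellIO:
    # A cell may run without an I/O chassis; a chassis cannot exist without a cell.
    chassis: Optional[IOChassis] = None


def partition_io_errors(cells: List[CellIO]) -> List[str]:
    """Check the I/O chassis and core I/O rules for one nPartition."""
    errs = []
    chassis = [c.chassis for c in cells if c.chassis is not None]
    if not chassis:
        errs.append("partition has no I/O chassis")
    if any(ch.core_io_cards > 1 for ch in chassis):
        errs.append("more than one core I/O card in a single I/O chassis")
    if sum(ch.core_io_cards for ch in chassis) == 0:
        errs.append("partition has no core I/O card and cannot boot")
    return errs


def max_independent_partitions(cells: List[CellIO]) -> int:
    """Upper bound: one partition per cell whose chassis holds a core I/O card."""
    return sum(1 for c in cells if c.chassis is not None and c.chassis.core_io_cards >= 1)
```

With 16 cells, each carrying its own chassis and core I/O card, `max_independent_partitions` returns 16, matching the example above.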

The unified 100Base-T Core LAN driver checks whether a cable is connected to the RJ-45 port or to the AUI port. If no cable connection is found on the RJ-45 port, there is a 150 ms busy-wait pause while the driver checks for an AUI connection. Installing the loopback connector (listed below) in the RJ-45 port makes the driver detect an RJ-45 connection, so it does not go on to search for an AUI connection, eliminating the 150 ms busy wait:

| Product/Option Number | Description |
|---|---|
| A7108A | RJ-45 Loopback Connector |
| 0D1 | Factory integration RJ-45 Loopback Connector |
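The probe order described above can be modeled in a few lines to show why the loopback connector removes the delay. This is a hypothetical sketch: the driver's internals are not documented here, so the function only reproduces the stated behavior (RJ-45 checked first, a 150 ms busy wait before the AUI check) and returns the wait as a number instead of actually sleeping.

```python
AUI_PROBE_WAIT_MS = 150  # busy-wait figure quoted above


def probe_core_lan(rj45_link: bool, aui_link: bool) -> tuple:
    """Return (selected_port, wait_ms) following the probe order described above."""
    if rj45_link:  # a real cable or the A7108A loopback satisfies this check
        return "RJ-45", 0
    # No RJ-45 link: the driver busy-waits before checking the AUI port.
    return ("AUI" if aui_link else None), AUI_PROBE_WAIT_MS


print(probe_core_lan(rj45_link=False, aui_link=False))  # unused port: waits
print(probe_core_lan(rj45_link=True, aui_link=False))   # loopback fitted: no wait
```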

I/O Expansion Cabinet

The I/O expansion functionality is physically partitioned into four rack-mounted chassis: the I/O expansion utilities chassis (XUC), the I/O expansion rear display module (RDM), the I/O expansion power chassis (XPC) and the I/O chassis enclosure (ICE). Each ICE supports up to two 12-slot I/O chassis.


Factory Integration

When an I/O expansion cabinet is ordered as an upgrade to a Superdome system, it includes factory testing and integration of any components ordered at the same time as the cabinet, including any I/O chassis, PCI or PCI-X cards and peripherals. If it is ordered as an upgrade but not at the time of the Superdome system, additional installation assistance may be required; this assistance can be ordered as field installation products.

Field Racking

The only field-rackable I/O expansion components are the ICE and the 12-slot I/O chassis. Either component would be field installed when the customer has ordered additional I/O capability for a previously installed I/O expansion cabinet.

No I/O expansion cabinet components will be delivered for field installation in a customer's existing rack other than a previously installed I/O expansion cabinet. The I/O expansion components were not designed to be installed in racks other than Rack System E; in other words, they are not designed for Rosebowl I, pre-merger Compaq, Rittal, or other third-party racks.

The I/O expansion cabinet is based on a modified HP Rack System E, and all expansion components mount in the rack. Each component is designed to install independently in the rack. The Rack System E cabinet has been modified to allow I/O interface cables to route between the ICE and the cell boards in the Superdome cabinet. I/O expansion components are not designed for installation behind a rack front door; they are designed for use with the standard Rack System E perforated rear door.

I/O Chassis Enclosure (ICE)

The I/O chassis enclosure (ICE) provides expanded I/O capability for Superdome. Each ICE supports up to 24 I/O slots by using two 12-slot Superdome I/O chassis (an ICE supports one or two 12-slot I/O chassis). Installing an I/O chassis in the ICE puts the I/O cards in a horizontal position. The ICE is designed to mount in a Rack System E rack and consumes 9U of vertical rack space.

To provide online addition/replacement/deletion access to I/O cards and hot-swap access to I/O fans, all I/O chassis are mounted on a sliding shelf inside the ICE.

Four (N+1) I/O fans mounted in the rear of the ICE cool the chassis. Air is pulled in through the front and through the I/O chassis lid (on the side of the ICE) and exhausted out the rear. The I/O fan assembly is hot-swappable. An LED on each I/O fan assembly indicates that the fan is operating.

Cabinet Height and Configuration Limitations

Although the individual I/O expansion cabinet components are designed for installation in any Rack System E cabinet, rack size limitations have been agreed upon. IOX cabinets ship in either the 1.6-meter (33U) or the 1.96-meter (41U) cabinet. To allay service access concerns, the factory will not install IOX components higher than 1.6 meters from the floor. Open space in an IOX cabinet is available for peripheral installation.

Peripheral Support

All peripherals qualified for use with Superdome and/or for use in a Rack System E are supported in the I/O expansion cabinet as long as space is available. Peripherals not connected to or associated with the Superdome system to which the I/O expansion cabinet is attached may also be installed in the I/O expansion cabinet.

Server Support

No servers may be installed in an I/O expansion cabinet except those required for Superdome system management, such as the Superdome Support Management Station or ISEE.

Peripherals installed in the I/O expansion cabinet cannot be powered by the XPC. AC power for peripherals must be provided by a PDU or other means.

Standalone I/O Expansion Cabinet

If an I/O expansion cabinet is ordered alone, its field installation can be ordered via option 750 in the ordering guide (option 950 for Superdome Advanced Architect Program Channel partners).


DVD Solution

The DVD solution for Superdome requires the following components per partition. External racks A4901A and A4902A must also be ordered with the DVD solution.

NOTE: One DVD and one DAT are required per nPartition.

Superdome DVD Solutions

| Description | Part Number | Option Number |
|---|---|---|
| PCI Ultra160 SCSI Adapter or PCI Dual Channel Ultra160 SCSI Adapter | A6828A or A6829A | 0D1 |
| Surestore Tape Array 5300 | C7508AZ | |
| HP DVD+RW Array Module (one per partition) | Q1592A | 0D1 |
| HP DAT 40m Array Module | C7497A | 0D1 |
| HP DAT 72 Array Module (carbon) | Q1524B | |
| DDS-4/DAT40 (one per partition) | C7497B | 0D1 |
| PCI LVD/SE SCSI card | A5149A | 0D1 |
| Jumper SCSI Cable for DDS-4 (optional) (1) | C2978B | 0D1 |
| SCSI Cable 10m VHD/HDTS68 LVD/SE ILT M/M | C7556A | |
| SCSI cable 5 meter | C7520A | 0D1 |
| SCSI Terminator | C2364A | 0D1 |

NOTE: The HP DVD-ROM Array Module for the TA5300 (C7499B) is replaced by the HP DVD+RW Array Module (Q1592A), which gives customers read capability for loading software from CD or DVD, DVD write capability for small amounts of data (up to 4 GB), and offline hot-swap capability.

NOTE: A5149A supports the MSA30 SB/DB as a boot device on Superdome running HP-UX 11i.

(1) A 0.5-meter HD-HDTS68 cable is required if DDS-4 is used.

If you use a DAT72, HP recommends an A6829A dual-port SCSI adapter, with the DVD and the DAT72 daisy-chained on one port, leaving the second port available for a SCSI data storage device.
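Because the DVD and DAT are required per nPartition, the per-partition part counts scale linearly with the number of partitions. A minimal sketch with a hypothetical helper that counts only those per-partition items. It leaves out Tape Array 5300 enclosures, the A4901A/A4902A racks and the remaining cables and terminators, because their quantities depend on a physical layout not described here. Mapping the DDS-4 jumper requirement to C2978B follows this editor's reading of footnote (1).

```python
from collections import Counter


def dvd_solution_parts(npartitions: int, tape: str = "DAT72") -> Counter:
    """Per-nPartition DVD/DAT parts from the table above (hypothetical helper)."""
    if npartitions < 1:
        raise ValueError("need at least one nPartition")
    parts = Counter()
    parts["Q1592A"] += npartitions  # one HP DVD+RW Array Module per partition
    if tape == "DAT72":
        parts["Q1524B"] += npartitions  # HP DAT 72 Array Module
        parts["A6829A"] += npartitions  # dual-port adapter: DVD + DAT72 daisy-chained on one port
    elif tape == "DDS-4":
        parts["C7497B"] += npartitions  # DDS-4/DAT40, one per partition
        parts["A6828A"] += npartitions  # single-channel adapter (A6829A also allowed)
        parts["C2978B"] += npartitions  # jumper cable, see footnote (1)
    else:
        raise ValueError("tape must be 'DAT72' or 'DDS-4'")
    return parts


print(dvd_solution_parts(3))
```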

Partitions

Hardware Partitions

A hardware partition (nPar) consists of one or more cells that communicate coherently over a high-bandwidth, low-latency crossbar fabric. Individual processors on a single cell board cannot be separately partitioned. nPars are logically isolated from each other, so transactions in one nPar are not visible to other nPars within the same complex.

Each nPar runs its own independent operating system. Different nPars may run the same or different revisions of an operating system. On HP Integrity Superdome systems, nPars may run different operating systems altogether (HP-UX, Windows Server 2003 or Linux). Please refer to the HP Integrity Superdome section for details on these operating systems.

Each nPar has its own independent CPUs, memory and I/O resources, consisting of the resources of the cells that make up the nPar. Resources may be removed from one nPar and added to another without physically manipulating the hardware, simply by using commands that are part of the System Management interface.

Superdome HP-UX 11i supports static nPars, meaning that any nPar configuration change requires a reboot of that nPar. In a future HP-UX release, dynamic nPars will be supported, meaning that nPar configuration changes do not require a reboot of that nPar. Using the related capabilities of dynamic reconfiguration (i.e., online addition and online removal), new resources may be added to an nPar and failed modules may be removed and replaced while the nPar continues in operation.
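Under static nPars, moving a cell between partitions changes two configurations, so both affected nPars need a reboot. The sketch below models that rule with a hypothetical `move_cell` function over a mapping of nPar names to sets of cell numbers. It is not the HP-UX partition command set: on a real system the change is made through the System Management interface commands mentioned above.

```python
def move_cell(npars: dict, cell: int, dst: str) -> tuple:
    """Reassign one cell to nPar `dst` under static nPars.

    Returns (new_config, npars_to_reboot); the input mapping is not modified.
    """
    src = next((name for name, cells in npars.items() if cell in cells), None)
    if src is None:
        raise KeyError(f"cell {cell} is not assigned to any nPar")
    if src == dst:
        return npars, set()
    if len(npars[src]) == 1:
        raise ValueError("an nPar must keep at least one cell")
    new = {name: set(cells) for name, cells in npars.items()}
    new[src].discard(cell)
    new.setdefault(dst, set()).add(cell)
    # Static nPars: every nPar whose configuration changed must be rebooted.
    return new, {src, dst}


config = {"A": {0, 1}, "B": {2}}
print(move_cell(config, 1, "B"))
```

With dynamic nPars, the same change would leave the reboot set empty; the model would simply return `set()` for the second element.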

Virtual Partitions

A virtual partition (vPar) provides the capability of dividing a system into multiple HP-UX operating system images. For more details on vPars, please refer to Chapter 6 of this guide.

vPars is available on HP-UX 11i and can therefore run on Superdome PA-8600/PA-8700 servers.


High Availability

NOTE: Online addition/replacement of cell boards is not currently supported and will be available in a future HP-UX release. Online addition/replacement of individual CPUs and memory DIMMs will never be supported.

The Superdome high availability offering is as follows:

CPU: The features below nearly eliminate the downtime associated with CPU cache errors (which are the majority of CPU errors).

Dynamic processor resilience with Instant Capacity enhancement

CPU cache ECC protection and automatic de-allocation

CPU bus parity protection

Redundant DC conversion

Memory: The memory subsystem is designed so that a single SDRAM chip contributes no more than 1 bit to each ECC word. Therefore, the only way to get a multiple-bit memory error from SDRAMs is for more than one SDRAM to fail at the same time (a rare event). The system is also resilient to cosmic ray and alpha particle strikes, because these failure modes can only affect multiple bits in a single SDRAM. If a location in memory is "bad", the physical page is de-allocated dynamically and replaced with a new page without any OS or application interruption. In addition, a combination of hardware and software scrubbing is used for memory. The software scrubber reads and writes all memory locations periodically; however, it does not have access to "locked-down" pages, so a hardware memory scrubber is provided for full coverage. Finally, data is protected by address/control parity protection.

Memory DRAM fault tolerance (i.e., recovery of a single SDRAM failure)

DIMM address/control parity protection

Dynamic memory resilience (i.e., page de-allocation of bad memory pages during operation)

Hardware and software memory scrubbing

Redundant DC conversion

Cell COD
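The memory paragraph relies on each SDRAM supplying at most one bit of any ECC word, so a whole-chip failure looks like a single-bit error to the code. The sketch below illustrates that argument with the textbook Hamming(7,4) single-error-correcting code and a simulated chip failure, modeled as inverting every bit the chip supplies. Superdome's actual ECC word width and code are not given in this document, so this shows the principle only, not Superdome's implementation.

```python
def hamming74_encode(d: list) -> list:
    """Encode 4 data bits as a Hamming(7,4) codeword (positions 1..7)."""
    d1, d2, d3, d4 = d
    p1 = d1 ^ d2 ^ d4  # covers positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # covers positions 2, 3, 6, 7
    p4 = d2 ^ d3 ^ d4  # covers positions 4, 5, 6, 7
    return [p1, p2, d1, p4, d2, d3, d4]


def hamming74_decode(c: list) -> list:
    """Correct up to one flipped bit and return the 4 data bits."""
    syndrome = 0
    for pos, bit in enumerate(c, start=1):
        if bit:
            syndrome ^= pos  # XOR of set positions is 0 for a valid codeword
    c = list(c)
    if syndrome:
        c[syndrome - 1] ^= 1  # the syndrome names the flipped position
    return [c[2], c[4], c[5], c[6]]


def fail_chip(words: list, layout: list, chip: int) -> list:
    """Invert every bit supplied by `chip`; layout[i] is the chip feeding bit i."""
    return [[b ^ (layout[i] == chip) for i, b in enumerate(w)] for w in words]


data = [[(n >> k) & 1 for k in range(4)] for n in range(16)]
words = [hamming74_encode(d) for d in data]
spread = list(range(7))         # each chip supplies one bit per word
paired = [0, 0, 1, 2, 3, 4, 5]  # chip 0 supplies two bits of every word
ok_spread = all(hamming74_decode(w) == d for w, d in zip(fail_chip(words, spread, 3), data))
ok_paired = all(hamming74_decode(w) == d for w, d in zip(fail_chip(words, paired, 0), data))
print(ok_spread, ok_paired)  # True False
```

With one bit per chip, any single chip failure is corrected in every word; when one chip feeds two bits of a word, the same failure becomes a double-bit error that a single-error-correcting code miscorrects.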

I/O: Partitions configured with dual path I/O can be configured to have no shared components between them, thus preventing I/O cards from creating faults on other I/O paths. I/O cards in hardware partitions (nPars) are fully isolated from I/O cards in other hard partitions. It is not possible for an I/O failure to propagate across hard partitions. It is possible to dynamically repair and add I/O cards to an existing running partition.

Full single-wire error detection and correction on I/O links

I/O cards fully isolated from each other

Hardware for the prevention of silent corruption of data going to I/O

On-line addition/replacement (OLAR) for individual I/O cards, some external peripherals, SUB/HUB

Parity protected I/O paths

Dual path I/O

Crossbar and Cabinet Infrastructure:

Recovery of a single crossbar wire failure

Localization of crossbar failures to the partitions using the link

Automatic de-allocation of bad crossbar link upon boot

Redundant and hot-swap DC converters for the crossbar backplane

ASIC full burn-in and "high quality" production process

Full "test to failure" and accelerated life testing on all critical assemblies

Strong emphasis on quality for multiple-nPartition single points of failure (SPOFs)

System resilience to Management Processor (MP) failure

Isolation of nPartition failure

Protection of nPartitions against spurious interrupts or memory corruption

Hot-swap redundant fans (main and I/O) and power supplies (main and backplane power bricks)

Dual power source

Phone-Home capability

"HA Cluster-In-A-Box" Configuration: The "HA Cluster-In-A-Box" allows for failover of users' applications between hardware partitions (nPars) on a single Superdome system. All providers of mission critical solutions agree that failover between clustered systems provides the safest availability-no single points of failures (SPOFs) and no ability to propagate failures between systems. However, HP supports the configuration of HA cluster software in a single system to allow the highest possible availability for those users that need the benefits of a non-clustered solution, such as scalability and manageability. Superdome with this configuration will provide the greatest single system availability configurable. Since no single-system solution in the industry provides protection against a SPOF, users that still need this kind of safety and HP's highest availability should use HA cluster software in a multiple system HA configuration. Multiple Serviceguard or Serviceguard Extension for RAC clusters can be configured within a single Superdome system (i.e., two 4-node clusters configured within a 32-way Superdome system).


Multi-system High Availability

Any Superdome partition with PA-RISC processors that is protected by Serviceguard or Serviceguard Extension for RAC can be configured in a cluster with:

Another Superdome with PA-RISC processors

One or more standalone non-Superdome systems with PA-RISC processors

Another partition within the same single-cabinet Superdome (refer to "HA Cluster-In-A-Box" above for specific requirements)

Separate partitions within the same Superdome system can be configured as part of different Serviceguard clusters.

Please note that when you add nodes or initially create a cluster, all nodes must be at the same version of the operating system and Serviceguard. This means that you may have to load an operating system update for hardware enablement of the newer hardware, even on older systems. Please refer to the "Compatibility and Feature Matrix" at
http://docs.hp.com/hpux/onlinedocs/4076/SG%20SGeRAC%20EMS%20Support%20Matrix_10 3 03.htm
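The same-version requirement is easy to check from an inventory before adding nodes. A minimal sketch with a hypothetical `version_mismatches` function; the inventory format, hostnames and version strings are placeholders, not output of any HP tool. Consult the Compatibility and Feature Matrix for which versions are actually supported together.

```python
def version_mismatches(nodes: dict) -> dict:
    """Return the fields ("os", "serviceguard") whose values differ across nodes.

    nodes maps a hostname to {"os": <version>, "serviceguard": <version>}.
    """
    mismatches = {}
    for key in ("os", "serviceguard"):
        values = {info[key] for info in nodes.values()}
        if len(values) > 1:
            mismatches[key] = sorted(values)
    return mismatches


# Placeholder inventory: hostnames and version strings are illustrative only.
cluster = {
    "sd-npar1": {"os": "os-rev-2", "serviceguard": "sg-rev-1"},
    "sd-npar2": {"os": "os-rev-2", "serviceguard": "sg-rev-1"},
    "older-node": {"os": "os-rev-1", "serviceguard": "sg-rev-1"},
}
print(version_mismatches(cluster))  # {'os': ['os-rev-1', 'os-rev-2']}
```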


Geographically Dispersed Cluster Configurations

The following Geographically Dispersed Cluster solutions fully support cluster configurations using Superdome systems. The existing configuration requirements for non-Superdome systems also apply to configurations that include Superdome systems. An additional recommendation, when possible, is to configure the nodes of a cluster in each datacenter across multiple cabinets to allow local failover in the case of a single cabinet failure. Local failover is always preferred over remote failover to the other datacenter. The importance of this recommendation increases with the geographic distance between datacenters.

Extended Campus Clusters (using Serviceguard with Mirrordisk/UX)

MetroCluster with Continuous Access XP

MetroCluster with EMC SRDF

ContinentalClusters

From an HA perspective, it is always better to have the nodes of an HA cluster spread across as many system cabinets (Superdome and non Superdome systems) as possible. This approach maximizes redundancy to further reduce the chance of a failure causing down time.
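To make the cabinet-spreading advice concrete, the following Python sketch counts how many nodes would survive the worst single-cabinet failure. It is a hypothetical helper, not an HP tool: it models only whole-cabinet failures, and the node and cabinet names are illustrative.

```python
from collections import Counter
from typing import Dict, Tuple


def worst_cabinet_failure(node_cabinets: Dict[str, str]) -> Tuple[int, int]:
    """Given a hypothetical mapping of node name -> cabinet ID, return
    (number of cabinets used, nodes still running after the failure of the
    cabinet that hosts the most nodes)."""
    if not node_cabinets:
        raise ValueError("cluster has no nodes")
    nodes_per_cabinet = Counter(node_cabinets.values())
    largest_group = max(nodes_per_cabinet.values())
    return len(nodes_per_cabinet), len(node_cabinets) - largest_group


if __name__ == "__main__":
    # Two nPartitions in one single-cabinet Superdome ("HA Cluster-In-A-Box").
    in_a_box = {"par1": "superdome-A", "par2": "superdome-A"}
    # One partition in each of two Superdomes plus a standalone system.
    spread = {"par1": "superdome-A", "par2": "superdome-B", "rp8400": "rack-3"}
    print(worst_cabinet_failure(in_a_box))  # (1, 0)
    print(worst_cabinet_failure(spread))    # (3, 2)
```

For a single-cabinet "HA Cluster-In-A-Box", the worst case leaves no surviving node, which is the single point of failure described above; spreading the same cluster across cabinets keeps nodes available after any one cabinet fails.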


Management Features

Supportability and management features of the HP 9000 Superdome are described in this section.

Service Processor (MP)
The service processor (MP) utility hardware is an independent support system for nPartition servers. It provides a way for you to connect to a server complex and perform administration or monitoring tasks for the server hardware and its nPartitions. The main features of the service processor include the Command menu, nPartition consoles, console logs, chassis code viewers, and partition Virtual Front Panels (live displays of nPartition and cell states).

Access to the MP is restricted by user accounts. Each user account is password protected and provides a specific level of access to the Superdome complex and service processor commands. Multiple users can independently interact with the service processor because each service processor login session is private.

Up to 16 users can simultaneously log in to the service processor through its network (customer LAN) interface, and they can independently manage nPartitions or view the server complex hardware states.

Two additional service processor login sessions are supported by the local and remote serial ports: terminal access through the local RS-232 port and external modem access through the remote RS-232 port.
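The login limits above can be summarized as a small bookkeeping model. This Python sketch is a hypothetical illustration of the limits as stated (16 LAN sessions, plus one login session on each of the local and remote RS-232 ports); the class and method names are invented, and it does not describe how the MP firmware actually tracks sessions.

```python
from typing import Dict


class MPSessionModel:
    """Hypothetical bookkeeping model of the MP login limits described above."""

    LAN_LIMIT = 16                      # concurrent telnet sessions over the customer LAN
    SERIAL_PORTS = ("local", "remote")  # local RS-232 terminal, remote RS-232 modem

    def __init__(self) -> None:
        self.lan_sessions = 0
        self.serial_users: Dict[str, str] = {}  # serial port name -> user on that port

    def open_lan(self) -> bool:
        """Admit a new LAN (telnet) session unless all 16 are in use."""
        if self.lan_sessions >= self.LAN_LIMIT:
            return False
        self.lan_sessions += 1
        return True

    def close_lan(self) -> None:
        if self.lan_sessions == 0:
            raise RuntimeError("no LAN session is open")
        self.lan_sessions -= 1

    def open_serial(self, port: str, user: str) -> bool:
        """Each RS-232 port carries at most one login session."""
        if port not in self.SERIAL_PORTS:
            raise ValueError(f"unknown serial port: {port!r}")
        if port in self.serial_users:
            return False
        self.serial_users[port] = user
        return True

    def total_sessions(self) -> int:
        return self.lan_sessions + len(self.serial_users)
```

With all 16 LAN sessions and both serial ports in use, `total_sessions()` reaches 18, and further `open_lan()` calls return False until a LAN session is closed.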

In general, the service processor (MP) on Superdome servers is similar to the service processor on other HP servers, while providing enhanced features necessary for managing a multiple-nPartition server. For example, the service processor manages the complex profile, which defines nPartition configurations as well as complex-wide settings for the server. The service processor also controls power, reset, and TOC capabilities, displays and records system events (chassis codes), and can display detailed information about the various internal subsystems.

Functional capabilities: The primary features available through the service processor are:

The Service Processor Command Menu: provides commands for system service, status, access configuration, and manufacturing tasks.

Partition Consoles: Each nPartition in a server complex has its own console. Each nPartition's console provides access to the Boot Console Handler (BCH) interface and the HP-UX console for the nPartition.

Console Logs: Each nPartition has its own console log, which holds a history of the nPartition console's output, including boot output, BCH activity, and any HP-UX console login activity.

Chassis Log Viewers (Live and Recorded Chassis Codes): Three types of chassis code log views are available: activity logs, error logs, and live chassis code logs.

Virtual Front Panels: Each nPartition's Virtual Front Panel (VFP) displays the real-time boot status and activity of the nPartition, and details about all cells assigned to the nPartition. The VFP display updates automatically as cell and nPartition status changes.


Support Management Station (SMS)
The Support Management Station (SMS) runs the Superdome scan tools that enhance the diagnosis and testability of Superdome. The SMS and associated tools also provide for faster and easier upgrades and hardware replacement.

The purpose of the SMS is to provide Customer Engineers with an industry-leading set of support tools, and thereby enable faster troubleshooting and more precise problem root-cause analysis. It also enables remote support by factory experts who consult with and back up the HP Customer Engineer. The SMS complements the proactive role of HP's Instant Support Enterprise Edition (ISEE), which is offered to Mission Critical customers, by focusing on reactive diagnosis for both mission-critical and non-mission-critical Superdome customers.

The users of the SMS are the HP Customer Engineer and the HP Factory Support Engineer. The Superdome customer benefits from their use of the SMS through a faster return to normal operation of the Superdome server and more accurate fault diagnosis, resulting in fewer callbacks. HP can also offer better service through reduced installation time.

Functional Capabilities:
The SMS basic functional capabilities are:
- Remote access via customer LAN
- Modem access (PA-8800 SMS only)
- Ability to be disconnected from the Superdome platform(s) without disrupting their operation
- Ability to connect a new Superdome platform to the SMS and have it recognized by the scan software
- Support for up to sixteen Superdome systems
- Ability to support multiple, heterogeneous Superdome platforms (scan software capability)
- System scan and diagnostics
- Utility firmware updates
- Enhanced IPMI logging capabilities (Windows-based ProLiant SMS only)

Console Access
The optimal configuration of console device(s) depends on a number of factors, including the customer's data center layout, console security needs, customer engineer access needs, and the degree to which an operator must interact with server or peripheral hardware and a partition (e.g., changing disks or tapes). This section provides a few guidelines. However, the configuration that makes the most sense should be designed as part of site preparation, after consulting with the customer's system administration staff and the field engineering staff.

Customer data centers exhibit a wide range of configurations in terms of the preferred physical location of the console device. (The term "console device" refers to the physical screen/keyboard/mouse that administrators and field engineers use to access and control the server.) The Superdome server enables many different configurations through its flexible configuration of access to the MP and its support for multiple geographically distributed console devices.

Three common data center styles are:
- The secure site, where both the system and its console are physically secured in a small area.
- The "glass room" configuration, where all the systems' consoles are clustered in a location physically near the machine room.
- The geographically dispersed site, where operators administer systems from consoles in remote offices.

Each of these can drive a different solution to the console access requirement.

The following considerations apply to the design of console access to the server and must be addressed during site preparation:
- The Superdome server can be operated from a VT100 or an hpterm-compatible terminal emulator. However, some programs (including some of those used by field engineers) have a friendlier user interface when operated from an hpterm.
- LAN console device users connect to the MP (and thence to the console) using terminal emulators that establish telnet connections to the MP. The console device(s) can be anywhere on the network connected to either port of the MP.
- Telnet data is sent between the client console device and the MP "in the clear", i.e., unencrypted. This may be a concern for some customers and may dictate special LAN configurations.
- If an HP-UX workstation is used as a console device, an hpterm window running telnet is the recommended way to connect to the MP. If a PC is used as a console device, Reflection 1 configured for hpterm emulation and a telnet connection is the recommended way to connect to the MP.
- The MP currently supports a maximum of 16 telnet-connected users at any one time.


It is desirable, and sometimes essential for rapid time to repair, to provide a reliable way to get console access that is physically close to the server, so that someone working on the server hardware can see the results of their actions immediately. There are a few options to achieve this:
- Place a console device close to the server.
- Ask the field engineer to carry in a laptop, or to walk to the operations center.
- Use a system that is already in close proximity to the server, such as the Instant Support Enterprise Edition (ISEE) system or the Support Management Station (SMS), as a console device.

The system administrator is likely to want to run X applications or a browser from the same client used to access the MP and partition consoles, because the partition configuration tool, parmgr, has a graphical interface. The system administrator's console device(s) should therefore have X Window or browser capability and should be connected to the system LAN of one or more partitions.

Support
The following matrix describes the supported SMS and recommended console devices for all Superdomes.

SMS and Console Support Matrix

Superdome                         SMS                                       Console

PA-8700 (pre March 1, 2004)       Legacy UNIX SMS¹                          PC/workstation

PA-8700 (post March 1, 2004)      UNIX rx2600 bundle                        TFT5600 + Ethernet switch

PA-8700 upgraded to Integrity     Legacy UNIX SMS with software upgrade²    PC/workstation
  or PA-8800                      UNIX rx2600 bundle³                       TFT5600 + Ethernet switch
                                  Windows SMS/Console (ProLiant ML350)      (combined SMS and console)

Integrity or PA-8800              Windows SMS/Console (ProLiant ML350)      (combined SMS and console)
                                  UNIX SMS/Console (rx2600)                 (combined SMS and console)

¹ A legacy UNIX SMS could be an A400, A500, rp2430 or rp2470 bundle, depending on when it was ordered.

² For a legacy SMS to be upgraded to support Integrity or PA-8800 Superdomes, it must be running HP-UX 11.0 or later, as the sx1000 scan tools are not supported on HP-UX 10.20.

³ rx2600 SMS bundles ordered and installed prior to October 2004 require a software upgrade to support an sx1000-based Superdome. As of October 2004, all rx2600 SMS bundles support PA-8800 and Integrity Superdomes without this upgrade.
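Footnotes 2 and 3 amount to a small decision rule for reusing an existing SMS with an sx1000-based (PA-8800 or Integrity) Superdome. The Python sketch below encodes that rule under stated assumptions: the function name and return strings are hypothetical, the HP-UX release is passed as a (major, minor) tuple, and a single installation date stands in for "ordered and installed".

```python
from datetime import date
from typing import Tuple

# Legacy UNIX SMS models named in footnote 1.
LEGACY_UNIX_SMS = {"A400", "A500", "rp2430", "rp2470"}
# Footnote 3: rx2600 bundles from October 2004 on need no upgrade.
RX2600_CUTOFF = date(2004, 10, 1)


def sx1000_readiness(model: str, hpux: Tuple[int, int], installed_on: date) -> str:
    """Hypothetical helper: what an existing SMS needs before it can manage an
    sx1000-based (PA-8800 or Integrity) Superdome.

    hpux is the HP-UX release as a (major, minor) tuple, e.g. (10, 20),
    (11, 0) or (11, 23). installed_on is assumed to be the order/install date."""
    if model == "rx2600":
        return "software upgrade" if installed_on < RX2600_CUTOFF else "ready"
    if model in LEGACY_UNIX_SMS:
        if hpux >= (11, 0):
            return "software upgrade"
        # The sx1000 scan tools are not supported on HP-UX 10.20.
        return "not eligible until running HP-UX 11.0 or later"
    raise ValueError(f"model not covered by the footnotes: {model!r}")
```

For example, an A500 SMS running HP-UX 11.0 yields "software upgrade", while an rx2600 bundle installed in November 2004 yields "ready".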


PA-8700

Hardware Requirement
Customers ordering an SMS for the first time for a new PA-8700 Superdome should order the rx2600 SMS. The rx2600 SMS can also be used to manage PA-8800 and Integrity Superdomes.

Customers using the earlier-released A180 SMS must replace it with the rx2600 if they expect to use it with new Superdome or Integrity servers. Customers may use an existing rp2470 or A500 SMS to manage any new PA-8700 Superdome.

One rx2600 SMS can support up to 16 Superdomes using a switch. Note, however, that some datacenters are so large that the networking structure will not permit a single SMS to be shared across the entire datacenter. The SMS is connected to each PA-8700 Superdome system on a private LAN. It is beneficial to have the SMS in close physical proximity to the Superdome(s) because the Customer Engineer (CE) requires SMS access to service the Superdome hardware. The physical connection from the Superdome is a private Ethernet connection; thus, the absolute maximum distance is determined by the Ethernet specification.

The UNIX rx2600 SMS bundle consists of:
- HP rx2600 1.0GHz 1.5MB CPU server solution
- Factory rack kit for rx2600
- 1GB DDR memory
- 36GB 15K hot-plug Ultra320 HDD
- HP-UX 11i v2 Foundation OE
- 1 x HP Tape Array 5300 with DVD-ROM and DAT 40
- HP ProCurve Switch 2124
- CAT 5e cables

By default, the rx2600 SMS does not come with a display monitor or keyboard unless these are explicitly ordered to enable console access (the TFT5600 rack-mounted display/mouse/keyboard is the recommended solution). See the ordering guide for details on the additional components required to use the rx2600 SMS as a console.

Software Requirements
All SMS software is preloaded in the factory and delivered to the customer as a complete solution. The rx2600 SMS supports only HP-UX 11i at this time. Current versions of the SMS software have not been qualified for 64-bit Windows. To ensure that only optimal diagnostic solutions are used, an integrated Windows/Linux SMS/Console is not available for PA-8700 Superdomes.

SMS Connectivity
The PA-8700 Superdome requires scan traffic to be isolated from console traffic; therefore, two distinct networks are required for the SMS and/or console. The rx2600 SMS has two LAN connections on the integrated multifunction I/O that can connect to the two LAN interfaces on the Superdome MP: the Private LAN and the Customer LAN. These two LAN connections allow SMS and console operations to be performed remotely.

The 10/100Base-TX port on the rx2600 is required and is connected to the Private LAN on the Superdome MP. This connection is used solely for the various diagnostics supported by the SMS. The 10/100/1000Base-TX port on the rx2600 can optionally be connected to the Superdome MP's Customer LAN for console access to the MP (and the Superdome partitions) from the existing management network. More details on console use of the rx2600 are provided later in this chapter.

For use as an SMS only, the rx2600's 10/100Base-TX port is connected to the Private LAN port on the Superdome MP. This can be done with a direct-connect crossover cable or through an Ethernet switch. HP recommends the switched configuration for the rx2600 SMS so that the SMS can be shared with other Superdomes and accessed remotely.

The SMS can be accessed remotely from the Management LAN, or directly via RS-232 on an as-needed basis. If the customer chooses to access the SMS from the Customer Management LAN, the SMS traffic is on a distinct and private network. Console traffic goes to the Customer LAN port on the MP. This diagram assumes that the customer provides the console infrastructure. Note as well that the UNIX rx2600 SMS is supported for use on all current models of Superdome.

Provided the Ethernet switch is configured with the UNIX rx2600 SMS, additional Superdomes can easily be added to the existing infrastructure with minimal disruption and downtime. Each additional Superdome MP's Private LAN port should be connected to the Ethernet switch. Note that this shares SMS scan functionality only, not console access.
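The connectivity rules above can be expressed as a pre-installation checklist. The Python sketch below is a hypothetical illustration, not an HP configuration tool: `SmsCablingPlan` and `check_plan` are invented names, and treating overlapping IP subnets as "the same network" is only a rough, assumed proxy for the physical isolation of scan and console traffic that the PA-8700 Superdome requires.

```python
import ipaddress
from dataclasses import dataclass
from typing import List, Optional

MAX_SUPERDOMES_PER_SMS = 16  # limit stated for one rx2600 SMS using a switch


@dataclass
class SmsCablingPlan:
    """Hypothetical model of one rx2600 SMS cabling plan (names are illustrative)."""
    superdomes: List[str]                  # Superdomes whose MP Private LAN port the SMS reaches
    private_subnet: str                    # scan network on the 10/100Base-TX port
    customer_subnet: Optional[str] = None  # optional console network on the 10/100/1000Base-TX port
    uses_switch: bool = False              # False means a direct crossover cable


def check_plan(plan: SmsCablingPlan) -> List[str]:
    """Return a list of problems; an empty list means the plan passes these checks."""
    problems = []
    count = len(plan.superdomes)
    if count == 0:
        problems.append("SMS is not connected to any Superdome MP Private LAN")
    if count > 1 and not plan.uses_switch:
        problems.append("a crossover cable reaches one MP; sharing the SMS needs a switch")
    if count > MAX_SUPERDOMES_PER_SMS:
        problems.append(f"{count} Superdomes exceeds the limit of {MAX_SUPERDOMES_PER_SMS}")
    if plan.customer_subnet is not None:
        private = ipaddress.ip_network(plan.private_subnet)
        customer = ipaddress.ip_network(plan.customer_subnet)
        # Scan traffic must stay isolated from console traffic; overlapping
        # address ranges are used here as a rough proxy for "same network".
        if private.overlaps(customer):
            problems.append("private (scan) and customer (console) networks overlap")
    return problems
```

A switched plan such as `SmsCablingPlan(["sd1", "sd2"], "192.168.1.0/24", "10.0.0.0/16", uses_switch=True)` returns an empty list, whereas the same two Superdomes on a crossover cable with overlapping subnets would report two problems.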
