Page 1

HP StorageWorks

X1000 and X3000 Network Storage System

user guide

Part number: 5697-0185

First edition: November 2009

Page 2

Legal and notice information

© Copyright 1999, 2009 Hewlett-Packard Development Company, L.P.

Confidential computer software. Valid license from HP required for possession, use or copying. Consistent with FAR 12.211 and 12.212, Commercial Computer Software, Computer Software Documentation, and Technical Data for Commercial Items are licensed to the U.S. Government under vendor's standard commercial license.

The information contained herein is subject to change without notice. The only warranties for HP products and services are set forth in the express warranty statements accompanying such products and services. Nothing herein should be construed as constituting an additional warranty. HP shall not be liable for technical or editorial errors or omissions contained herein.

WARRANTY STATEMENT: To obtain a copy of the warranty for this product, see the warranty information website:

http://www.hp.com/go/storagewarranty

Intel, Itanium, Pentium, Intel Inside, and the Intel Inside logo are trademarks or registered trademarks of Intel Corporation or its subsidiaries in the United States and other countries.

Microsoft, Windows, Windows XP, and Windows NT are U.S. registered trademarks of Microsoft Corporation.

Adobe and Acrobat are trademarks of Adobe Systems Incorporated.

Java is a US trademark of Sun Microsystems, Inc.

Oracle is a registered US trademark of Oracle Corporation, Redwood City, California.

UNIX is a registered trademark of The Open Group.

Printed in the US

Page 3

Contents

About this guide ................................................................................. 13

Intended audience .................................................................................................................... 13

Related documentation .............................................................................................................. 13

Document conventions and symbols ............................................................................................. 13

Rack stability ............................................................................................................................ 14

HP technical support ................................................................................................................. 15

Customer self repair .................................................................................................................. 15

Subscription service .................................................................................................................. 15

HP websites ............................................................................................................................. 15

Documentation feedback ........................................................................................................... 16

1 Installing and configuring the server ................................................... 17

Setup overview ......................................................................................................................... 17

Determine an access method ............................................................................................... 17

Check kit contents ..................................................................................................................... 17

Locate and record the serial number ............................................................................................ 18

Install the storage system hardware ............................................................................................. 18

Access the storage system .......................................................................................................... 18

Power on the server and log on .................................................................................................. 19

Configure the storage system using the HP StorageWorks Rapid Startup Wizard ............................... 20

Complete system configuration ................................................................................................... 21

Additional access methods ......................................................................................................... 22

Using the remote browser method ......................................................................................... 22

Using the Remote Desktop method ........................................................................................ 23

Using the Telnet Server method ............................................................................................ 23

Enabling Telnet Server .................................................................................................. 23

Default storage settings .............................................................................................................. 23

Physical configuration ......................................................................................................... 23

Default boot sequence ........................................................................................................ 25

2 Storage system component identification ............................................. 27

HP X1400 Network Storage System and X3400 Network Storage Gateway hardware components ............................................................................................................................. 27

HP X1600 Network Storage System hardware components ............................................................ 29

HP X1800 Network Storage System and X3800 Network Storage Gateway hardware components ............................................................................................................................. 32

SAS and SATA hard drive LEDs ................................................................................................... 36

Systems Insight Display LEDs ...................................................................................................... 37

Systems Insight Display LED combinations .................................................................................... 39

3 Administration tools .......................................................................... 41

HP StorageWorks Automated Storage Manager ........................................................................... 41

Microsoft Windows Storage Server 2008 administration tools ........................................................ 41

Remote Desktop for Administration ....................................................................................... 41

Page 4

Share and Storage Management .......................................................................................... 42

Microsoft Services for Network File System ............................................................................. 42

Active Directory Lightweight Directory Services (ADLDS) ........................................................... 43

Configuring ADLDS ...................................................................................................... 43

Single Instance Storage ....................................................................................................... 44

Print Management .............................................................................................................. 45

4 Storage management overview .......................................................... 47

Storage management elements ................................................................................................... 47

Storage management example ............................................................................................. 47

Physical storage elements .................................................................................................... 48

Arrays ........................................................................................................................ 49

Fault tolerance ............................................................................................................. 50

Online spares ............................................................................................................. 50

Logical storage elements ..................................................................................................... 50

Logical drives (LUNs) .................................................................................................... 50

Partitions .................................................................................................................... 51

Volumes ..................................................................................................................... 51

File system elements ............................................................................................................ 52

File sharing elements .......................................................................................................... 52

Volume Shadow Copy Service overview ................................................................................ 52

Using storage elements ....................................................................................................... 53

Clustered server elements .................................................................................................... 53

Network adapter teaming .......................................................................................................... 53

Management tools .................................................................................................................... 53

HP Systems Insight Manager ................................................................................................ 53

Management Agents .......................................................................................................... 54

5 File server management .................................................................... 55

File services features in Windows Storage Server 2008 ................................................................. 55

Storage Manager for SANs ................................................................................................. 55

Single Instance Storage ....................................................................................................... 55

File Server Resource Manager .............................................................................................. 55

Windows SharePoint Services .............................................................................................. 55

File services management .......................................................................................................... 56

Configuring data storage .................................................................................................... 56

Storage management utilities ............................................................................................... 56

Array management utilities ............................................................................................ 57

Array Configuration Utility ............................................................................................ 57

Disk Management utility ............................................................................................... 58

Guidelines for managing disks and volumes .......................................................................... 58

Scheduling defragmentation ................................................................................................ 59

Disk quotas ....................................................................................................................... 59

Adding storage .................................................................................................................. 60

Expanding storage ...................................................................................................... 60

Extending storage using Windows Storage Utilities .......................................................... 61

Expanding storage for EVA arrays using Command View EVA ........................................... 61

Expanding storage using the Array Configuration Utility ................................................... 61

Volume shadow copies .............................................................................................................. 62

Shadow copy planning ....................................................................................................... 62

Identifying the volume .................................................................................................. 63

Allocating disk space ................................................................................................... 63

Identifying the storage area .......................................................................................... 64

Determining creation frequency ..................................................................................... 64

Page 5

Shadow copies and drive defragmentation ............................................................................ 65

Mounted drives .................................................................................................................. 65

Managing shadow copies ................................................................................................... 65

The shadow copy cache file .......................................................................................... 66

Enabling and creating shadow copies ............................................................................ 67

Viewing a list of shadow copies ..................................................................................... 68

Set schedules .............................................................................................................. 68

Viewing shadow copy properties ................................................................................... 68

Redirecting shadow copies to an alternate volume ........................................................... 69

Disabling shadow copies .............................................................................................. 69

Managing shadow copies from the storage system desktop ..................................................... 70

Shadow Copies for Shared Folders ....................................................................................... 70

SMB shadow copies .................................................................................................... 71

NFS shadow copies ..................................................................................................... 72

Recovery of files or folders ............................................................................................ 73

Recovering a deleted file or folder .................................................................................. 73

Recovering an overwritten or corrupted file ...................................................................... 74

Recovering a folder ...................................................................................................... 74

Backup and shadow copies .......................................................................................... 75

Shadow Copy Transport ...................................................................................................... 75

Folder and share management ................................................................................................... 75

Folder management ............................................................................................................ 76

Share management ............................................................................................................ 82

Share considerations .................................................................................................... 82

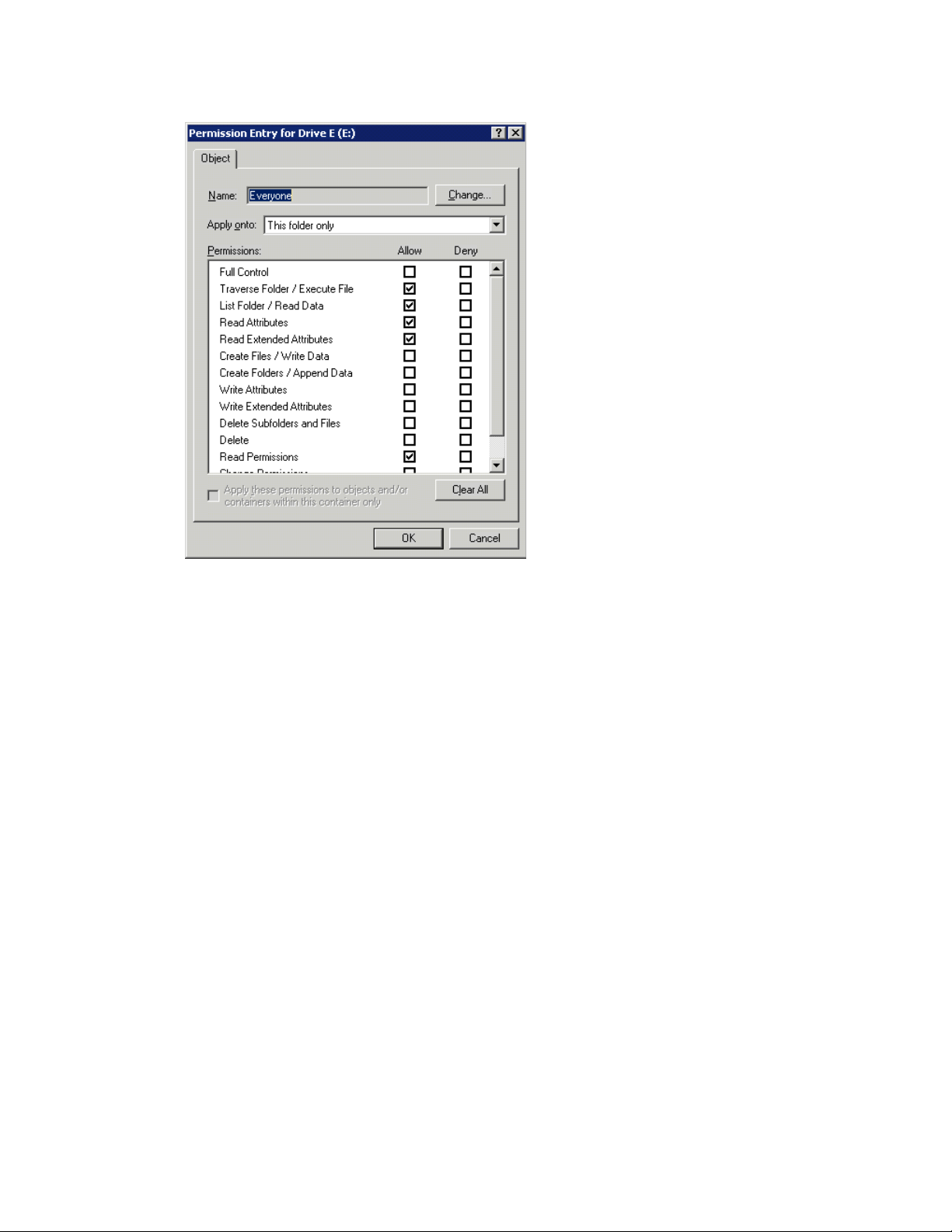

Defining Access Control Lists ......................................................................................... 83

Integrating local file system security into Windows domain environments ............................. 83

Comparing administrative (hidden) and standard shares ................................................... 83

Managing shares ........................................................................................................ 84

File Server Resource Manager .................................................................................................... 84

Quota management ........................................................................................................... 84

File screening management ................................................................................................. 85

Storage reports .................................................................................................................. 85

Other Windows disk and data management tools ......................................................................... 85

Additional information and references for file services .................................................................... 85

Backup ............................................................................................................................. 85

HP StorageWorks Library and Tape Tools .............................................................................. 85

Antivirus ............................................................................................................................ 86

6 Cluster administration ....................................................................... 87

Cluster overview ....................................................................................................................... 87

Cluster terms and components .................................................................................................... 88

Nodes .............................................................................................................................. 88

Resources .......................................................................................................................... 88

Cluster groups ................................................................................................................... 89

Virtual servers .................................................................................................................... 89

Failover and failback .......................................................................................................... 89

Quorum disk ..................................................................................................................... 89

Cluster concepts ....................................................................................................................... 90

Sequence of events for cluster resources ................................................................................ 90

Hierarchy of cluster resource components .............................................................................. 91

Cluster planning ....................................................................................................................... 91

Storage planning ............................................................................................................... 92

Network planning .............................................................................................................. 92

Protocol planning ............................................................................................................... 93

Page 6

Preparing for cluster installation .................................................................................................. 94

Before beginning installation ............................................................................................... 94

Using multipath data paths for high availability ...................................................................... 94

Checklists for cluster server installation .................................................................................. 94

Network requirements .................................................................................................. 94

Shared disk requirements .............................................................................................. 95

Cluster installation ..................................................................................................................... 95

Setting up networks ............................................................................................................ 96

Configuring the private network adapter ......................................................................... 96

Configuring the public network adapter .......................................................................... 96

Renaming the local area connection icons ...................................................................... 96

Verifying connectivity and name resolution ...................................................................... 96

Verifying domain membership ....................................................................................... 96

Setting up a cluster account .......................................................................................... 96

About the Quorum disk ................................................................................................ 96

Configuring shared disks .............................................................................................. 97

Verifying disk access and functionality ............................................................................ 97

Configuring cluster service software ............................................................................................. 97

Using Cluster Administrator ................................................................................................. 97

Creating a cluster ............................................................................................................... 97

Adding nodes to a cluster .................................................................................................... 98

Geographically dispersed clusters ........................................................................................ 98

Cluster groups and resources, including file shares ........................................................................ 98

Cluster group overview ....................................................................................................... 98

Node-based cluster groups ........................................................................................... 99

Load balancing ........................................................................................................... 99

File share resource planning issues ....................................................................................... 99

Resource planning ....................................................................................................... 99

Permissions and access rights on share resources ........................................................... 100

NFS cluster-specific issues ........................................................................................... 100

Non cluster aware file sharing protocols .............................................................................. 101

Adding new storage to a cluster ......................................................................................... 101

Creating physical disk resources .................................................................................. 101

Creating file share resources ....................................................................................... 101

Creating NFS share resources ..................................................................................... 102

Shadow copies in a cluster ................................................................................................ 102

Extend a LUN in a cluster .................................................................................................. 102

MSNFS administration on a server cluster ............................................................................ 102

Best practices for running Server for NFS in a server cluster ............................................. 103

Print services in a cluster .......................................................................................................... 103

Creating a cluster printer spooler ........................................................................................ 103

Advanced cluster administration procedures ............................................................................... 104

Failing over and failing back ............................................................................................. 104

Restarting one cluster node ................................................................................................ 105

Shutting down one cluster node .......................................................................................... 105

Powering down the cluster ................................................................................................. 105

Powering up the cluster ..................................................................................................... 106

7 Troubleshooting, servicing, and maintenance ..................................... 107

Troubleshooting the storage system ............................................................................................ 107

WEBES (Web Based Enterprise Services) ................................................................................... 107

Maintenance and service ......................................................................................................... 108

Maintenance updates ....................................................................................................... 108

System updates .......................................................................................................... 108

Page 7

Firmware updates ............................................................................................................. 108

Certificate of Authenticity ......................................................................................................... 108

8 System recovery ............................................................................. 109

The System Recovery DVD ........................................................................................................ 109

To restore a factory image ....................................................................................................... 109

Managing disks after a restoration ............................................................................................ 109

A Regulatory compliance notices ........................................................ 111

Regulatory compliance identification numbers ............................................................................ 111

Federal Communications Commission notice .............................................................................. 111

FCC rating label .............................................................................................................. 111

Class A equipment ..................................................................................................... 111

Class B equipment ..................................................................................................... 112

Declaration of Conformity for products marked with the FCC logo, United States only ............... 112

Modification .................................................................................................................... 112

Cables ............................................................................................................................ 112

Canadian notice (Avis Canadien) ............................................................................................. 112

Class A equipment ........................................................................................................... 112

Class B equipment ............................................................................................................ 113

European Union notice ............................................................................................................ 113

Japanese notices .................................................................................................................... 113

Japanese VCCI-A notice .................................................................................................... 113

Japanese VCCI-B notice .................................................................................................... 113

Japanese power cord statement .......................................................................................... 113

Korean notices ....................................................................................................................... 114

Class A equipment ........................................................................................................... 114

Class B equipment ............................................................................................................ 114

Taiwanese notices ................................................................................................................... 114

BSMI Class A notice ......................................................................................................... 114

Taiwan battery recycle statement ........................................................................................ 114

Laser compliance notices ......................................................................................................... 115

English laser notice ........................................................................................................... 115

Dutch laser notice ............................................................................................................. 115

French laser notice ........................................................................................................... 116

German laser notice ......................................................................................................... 116

Italian laser notice ............................................................................................................ 116

Japanese laser notice ........................................................................................................ 117

Spanish laser notice ......................................................................................................... 117

Recycling notices .................................................................................................................... 117

English notice .................................................................................................................. 117

Bulgarian notice ............................................................................................................... 118

Czech notice ................................................................................................................... 118

Danish notice .................................................................................................................. 118

Dutch notice .................................................................................................................... 118

Estonian notice ................................................................................................................ 119

Finnish notice ................................................................................................................... 119

French notice ................................................................................................................... 119

German notice ................................................................................................................. 119

Greek notice .................................................................................................................... 120

Hungarian notice ............................................................................................................. 120

Italian notice .................................................................................................................... 120

Latvian notice .................................................................................................................. 120

Lithuanian notice .............................................................................................................. 121

Page 8

Polish notice .................................................................................................................... 121

Portuguese notice ............................................................................................................. 121

Romanian notice .............................................................................................................. 121

Slovak notice ................................................................................................................... 122

Spanish notice ................................................................................................................. 122

Swedish notice ................................................................................................................. 122

Turkish notice ................................................................................................................... 122

Battery replacement notices ...................................................................................................... 123

Dutch battery notice .......................................................................................................... 123

French battery notice ........................................................................................................ 124

German battery notice ...................................................................................................... 124

Italian battery notice ......................................................................................................... 125

Japanese battery notice .................................................................................................... 125

Spanish battery notice ...................................................................................................... 126

Index ............................................................................................... 127

Page 9

Figures

1 HP StorageWorks Rapid Startup Wizard Welcome screen .................................... 21

2 HP X1400 and X3400 front panel components .................................... 27

3 HP X1400 and X3400 front panel LEDs .................................... 28

4 HP X1400 and X3400 rear panel components .................................... 28

5 HP X1400 and X3400 rear panel LEDs .................................... 29

6 HP X1600 front panel components and LEDs .................................... 30

7 HP X1600 rear panel components .................................... 31

8 HP X1600 (AW528A) rear panel components .................................... 32

9 HP X1800 and X3800 front panel components .................................... 33

10 HP X1800 and X3800 front panel LEDs and buttons .................................... 34

11 HP X1800 and X3800 rear panel components .................................... 34

12 HP X1800 and X3800 rear panel LEDs and buttons .................................... 35

13 SAS/SATA hard drive LEDs .................................... 36

14 Systems Insight Display LEDs .................................... 38

15 Storage management process example .................................... 48

16 Configuring arrays from physical drives .................................... 49

17 RAID 0 (data striping) (S1-S4) of data blocks (B1-B12) .................................... 49

18 Two arrays (A1, A2) and five logical drives (L1 through L5) spread over five physical drives .................................... 51

19 System administrator view of Shadow Copies for Shared Folders .................................... 66

20 Shadow copies stored on a source volume .................................... 66

21 Shadow copies stored on a separate volume .................................... 67

22 Accessing shadow copies from My Computer .................................... 70

23 Client GUI .................................... 72

24 Recovering a deleted file or folder .................................... 74

25 Properties dialog box, Security tab .................................... 77

26 Advanced Security settings dialog box, Permissions tab .................................... 78

27 User or group Permission Entry dialog box .................................... 79

28 Advanced Security Settings dialog box, Auditing tab .................................... 80

29 Select User or Group dialog box .................................... 80

30 Auditing Entry dialog box for folder name NTFS Test .................................... 81

31 Advanced Security Settings dialog box, Owner tab .................................... 82

Page 10

32 Storage system cluster diagram .................................... 88

33 Cluster concepts diagram .................................... 90

Page 11

Tables

1 Document conventions .................................... 13

2 HP Rapid Startup Wizard configuration options .................................... 20

3 Storage system RAID configurations .................................... 24

4 HP X1400 and X3400 front panel LED descriptions .................................... 28

5 HP X1400 and X3400 rear panel LED descriptions .................................... 29

6 HP X1600 front panel component and LED descriptions .................................... 30

7 HP X1800 and X3800 front panel LED and button descriptions .................................... 34

8 HP X1800 and X3800 rear panel LED and button descriptions .................................... 35

9 SAS and SATA hard drive LED combinations .................................... 36

10 Systems Insight Display LED descriptions .................................... 38

11 Systems Insight Display LEDs and internal health LED combinations .................................... 39

12 Summary of RAID methods .................................... 50

13 Tasks and utilities needed for storage system configuration .................................... 56

14 Sharing protocol cluster support .................................... 93

15 Power sequencing for cluster installation .................................... 95

Page 12

Page 13

About this guide

This guide provides information about installing, configuring, managing, and troubleshooting the

following HP StorageWorks Network Storage Systems:

• HP StorageWorks X1400 Network Storage System

• HP StorageWorks X1600 Network Storage System

• HP StorageWorks X1800 Network Storage System

• HP StorageWorks X3400 Network Storage Gateway

• HP StorageWorks X3800 Network Storage Gateway

Intended audience

This guide is intended for technical professionals with knowledge of:

• Microsoft® administrative procedures

• System and storage configurations

Related documentation

The following documents provide related information:

• HP StorageWorks X1000 and X3000 Network Storage System installation instructions

• HP StorageWorks X1000 Automated Storage Manager user guide

• HP Integrated Lights-Out 2 User Guide

To obtain HP StorageWorks X1000 and X3000 Network Storage System documentation, go to http://www.hp.com/go/nas, select your product family and product model, click Support for your product, and then click Manuals.

Document conventions and symbols

Table 1 Document conventions

| Convention | Element |
| --- | --- |
| Blue text: Table 1 | Cross-reference links and e-mail addresses |
| Blue, underlined text: http://www.hp.com | Website addresses |
| Bold text | Keys that are pressed; text typed into a GUI element, such as a box; GUI elements that are clicked or selected, such as menu and list items, buttons, tabs, and check boxes |
| Italic text | Text emphasis |
| Monospace text | File and directory names; system output; code; commands, their arguments, and argument values |
| Monospace, italic text | Code variables; command variables |
| Monospace, bold text | Emphasized monospace text |

Page 14

WARNING!

Indicates that failure to follow directions could result in bodily harm or death.

CAUTION:

Indicates that failure to follow directions could result in damage to equipment or data.

IMPORTANT:

Provides clarifying information or specific instructions.

NOTE:

Provides additional information.

TIP:

Provides helpful hints and shortcuts.

Rack stability

Rack stability protects personnel and equipment.

Page 15

WARNING!

To reduce the risk of personal injury or damage to equipment:

• Extend leveling jacks to the floor.

• Ensure that the full weight of the rack rests on the leveling jacks.

• Install stabilizing feet on the rack.

• In multiple-rack installations, fasten racks together securely.

• Extend only one rack component at a time. Racks can become unstable if more than one component

is extended.

HP technical support

For worldwide technical support information, see the HP support website:

http://www.hp.com/support

Before contacting HP, collect the following information:

• Product model names and numbers

• Technical support registration number (if applicable)

• Product serial numbers

• Error messages

• Operating system type and revision level

• Detailed questions
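The operating system type and revision level in the list above can be gathered with a short script. The following is a minimal Python sketch, assuming a Python interpreter is available on the machine being examined (this guide does not state that one is installed on the storage system). The function name `collect_os_info` is illustrative only.

```python
import platform


def collect_os_info():
    """Return the operating system type and revision details as a dict."""
    return {
        "os_type": platform.system(),      # operating system family
        "os_release": platform.release(),  # release name or number
        "os_version": platform.version(),  # build or revision string
        "machine": platform.machine(),     # CPU architecture
    }
```

Calling `collect_os_info()` and copying the returned values into the support request is one way to avoid transcription errors.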

Customer self repair

HP customer self repair (CSR) programs allow you to repair your StorageWorks product. If a CSR

part needs replacing, HP ships the part directly to you so that you can install it at your convenience.

Some parts do not qualify for CSR. Your HP-authorized service provider will determine whether a

repair can be accomplished by CSR.

For more information about CSR, contact your local service provider, or see the CSR website:

http://www.hp.com/go/selfrepair

Subscription service

HP recommends that you register your product at the Subscriber's Choice for Business website:

http://www.hp.com/go/e-updates

After registering, you will receive e-mail notification of product enhancements, new driver versions,

firmware updates, and other product resources.

HP websites

For additional information, see the following HP websites:

• http://www.hp.com

Page 16

• http://www.hp.com/go/storage

• http://www.hp.com/service_locator

• http://www.hp.com/support/manuals

• http://www.hp.com/support/downloads

Documentation feedback

HP welcomes your feedback.

To make comments and suggestions about product documentation, please send a message to

storagedocsFeedback@hp.com. All submissions become the property of HP.

Page 17

1 Installing and configuring the server

Setup overview

The HP StorageWorks X1000 Network Storage System comes preinstalled with the Microsoft

Windows® Storage Server™ 2008 Standard x64 Edition operating system with Microsoft iSCSI

Software Target and HP X1000 Automated Storage Manager (HP ASM) included.

The HP StorageWorks X3000 Network Storage System comes preinstalled with the Microsoft

Windows® Storage Server™ 2008 Enterprise x64 Edition operating system with Microsoft iSCSI

Software Target and a Microsoft Cluster Service (MSCS) license included.

IMPORTANT:

• Windows Storage Server 2008 x64 operating systems are designed to support 32-bit applications without modification; however, any 32-bit applications that are run on these operating systems should be thoroughly tested before releasing the storage system to a production environment.

• Windows Storage Server x64 editions support only x64-based versions of Microsoft Management Console (MMC) snap-ins, not 32-bit versions.

Determine an access method

Before you install the storage system, you need to decide on an access method.

The type of access you select is determined by whether or not the network has a Dynamic Host

Configuration Protocol (DHCP) server. If the network has a DHCP server, you can install the storage

system through the direct attachment or remote management methods. If your network does not have

a DHCP server, you must access the storage system through the direct attachment method.
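The decision described above can be summarized as a small lookup. The following Python sketch is illustrative only; the function name `install_access_methods` is hypothetical and is not part of any HP tool.

```python
def install_access_methods(network_has_dhcp):
    """Return the access methods that can be used to install the storage system."""
    if network_has_dhcp:
        # With a DHCP server, either installation method is available.
        return ["direct attach", "remote management"]
    # Without a DHCP server, only direct attach can be used.
    return ["direct attach"]
```

For example, `install_access_methods(False)` returns only the direct attach method.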

The direct attachment method requires a display, keyboard, and mouse. These components are not

provided with the storage system.

IMPORTANT:

Only the direct attach and remote management access methods can be used to install the storage

system. After the storage system installation process is complete and the system's IP address has been

assigned, you can then additionally use the remote browser and remote desktop methods to access

the storage system.

Check kit contents

Unpack the shipping box and verify that it contains all the components listed below. If components are missing, contact HP technical support.

• HP StorageWorks X1000 or X3000 Network Storage System (with operating system preloaded)

Page 18

• Power cord(s)

• Product Documentation and Safety and Disposal Documentation CD

• HP StorageWorks Storage System Recovery DVD

• End User License Agreement

• Certificate of Authenticity Card

• Slide rail assembly

• HP ProLiant Essentials Integrated Lights-Out 2 Advanced Pack

Locate and record the serial number

Before completing the installation portion of this guide, locate and write down the storage system's

serial number.

The storage system's serial number is located in three places:

• Top of the storage system

• Back of the storage system

• Inside the storage system shipping box

Install the storage system hardware

1. Install the rail kit by following the HP Rack Rail Kit installation instructions.

2. If connecting to the storage system using the direct attach method, connect the following cables

to the back panel of the storage system in the following sequence: keyboard, mouse, network

cable, monitor cable, and power cable.

NOTE:

• The keyboard, mouse, and monitor are not provided with the storage system.

• The X1600 does not include PS/2 ports for connecting a keyboard and mouse. You

must use USB-compatible keyboard and mouse devices with this storage system.

3. If connecting to the storage system using the remote management method, connect a network cable to a data port, a network cable to the iLO 2 port, and the power cable.

Access the storage system

Use either the direct attach or remote management method to connect to the storage system.

IMPORTANT:

Only the direct attach and remote management access methods can be used to install the storage

system. After the storage system installation process is complete and the system's IP address has been

assigned, you can then additionally use the remote browser and remote desktop methods to access

the storage system.

• Direct attach — Connect a monitor, keyboard, and mouse directly to the storage system. This access

method is mandatory if your network does not have a Dynamic Host Configuration Protocol (DHCP)

server.

Page 19

NOTE:

• The keyboard, mouse, and monitor are not provided with the storage system.

• The X1600 does not include PS/2 ports for connecting a keyboard and mouse. You must use

USB-compatible keyboard and mouse devices with this storage system.

• Remote management — Access the storage system using the Integrated Lights-Out 2 remote management method:

1. Ensure that a network cable is connected to the iLO 2 port located on the back of the storage

system.

2. Locate the iLO 2 Network Settings tag attached to the storage system and record the default

user name, password, and DNS name.

3. From a remote computer, open a standard Web browser and enter the iLO 2 management

hostname of the storage system.

NOTE:

By default, iLO 2 obtains the management IP address and subnet mask from your

network’s DHCP server. The hostname found on the iLO 2 tag is automatically registered

with your network’s DNS server.

4. Using the default user information provided on the iLO 2 Network Settings tag, log on to the

storage system.

For detailed instructions on using iLO 2, see the HP Integrated Lights-Out 2 User Guide.
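Because the remote management method depends on the iLO 2 hostname being registered with DNS, it can help to confirm that the name resolves before opening the browser. The following is a minimal Python sketch run from the remote computer. The function name `resolve_ilo_hostname` and the `resolver` parameter are illustrative assumptions, not part of iLO 2.

```python
import socket


def resolve_ilo_hostname(hostname, resolver=socket.gethostbyname):
    """Return the IPv4 address for hostname, or None if it does not resolve."""
    try:
        return resolver(hostname)
    except OSError:
        # socket.gaierror and socket.herror are both subclasses of OSError.
        return None
```

Pass the DNS name recorded from the iLO 2 Network Settings tag. A result of `None` suggests that the DHCP or DNS registration has not completed, or that the remote computer uses a different DNS server.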

Power on the server and log on

Power on the server after installing the hardware and connecting the cables. Powering on the server

for the first time initiates the storage system installation process.

1. Power on the system by pushing the power button on the front panel. If using iLO 2, click

Momentary Press on the Power Management page to power on the server, then click Launch on

the Status Summary page to open the iLO 2 Integrated Remote Console and complete the

installation process.

The storage system starts and displays an HP Network Storage System installation screen. The

storage system installation process takes approximately 10–15 minutes.

NOTE:

Your storage system comes pre-installed with the Microsoft Windows Storage Server 2008

operating system. There is no operating system installation required.

When the storage system installation process nears completion, the Windows Storage Server

2008 desktop displays the following message: The user's password must be changed before

logging on the first time. Log on to the storage system by establishing an Administrator password:

2. Click OK.

3. Type an Administrator password in the New password box.

Page 20

4. Re-type the Administrator password in the Confirm password box.

5. Click the blue arrow next to the Confirm password box.

6. Click OK.

After the Administrator password has been set, the storage system completes the installation

process and restarts.

7. When prompted, press CTRL+ALT+DELETE to log on to the system. If using iLO 2, on the iLO

2 Integrated Remote Console tab, click the button labeled CAD and then click the Ctrl-Alt-Del

menu item.

IMPORTANT:

After establishing the new Administrator password, be sure to remember it and record it in a safe

place if needed. HP has no way of accessing the system if the new password is lost.

After you log on for the first time, the Welcome screen of the HP StorageWorks Rapid Startup Wizard opens. Use the wizard to set up your system with basic configuration information.

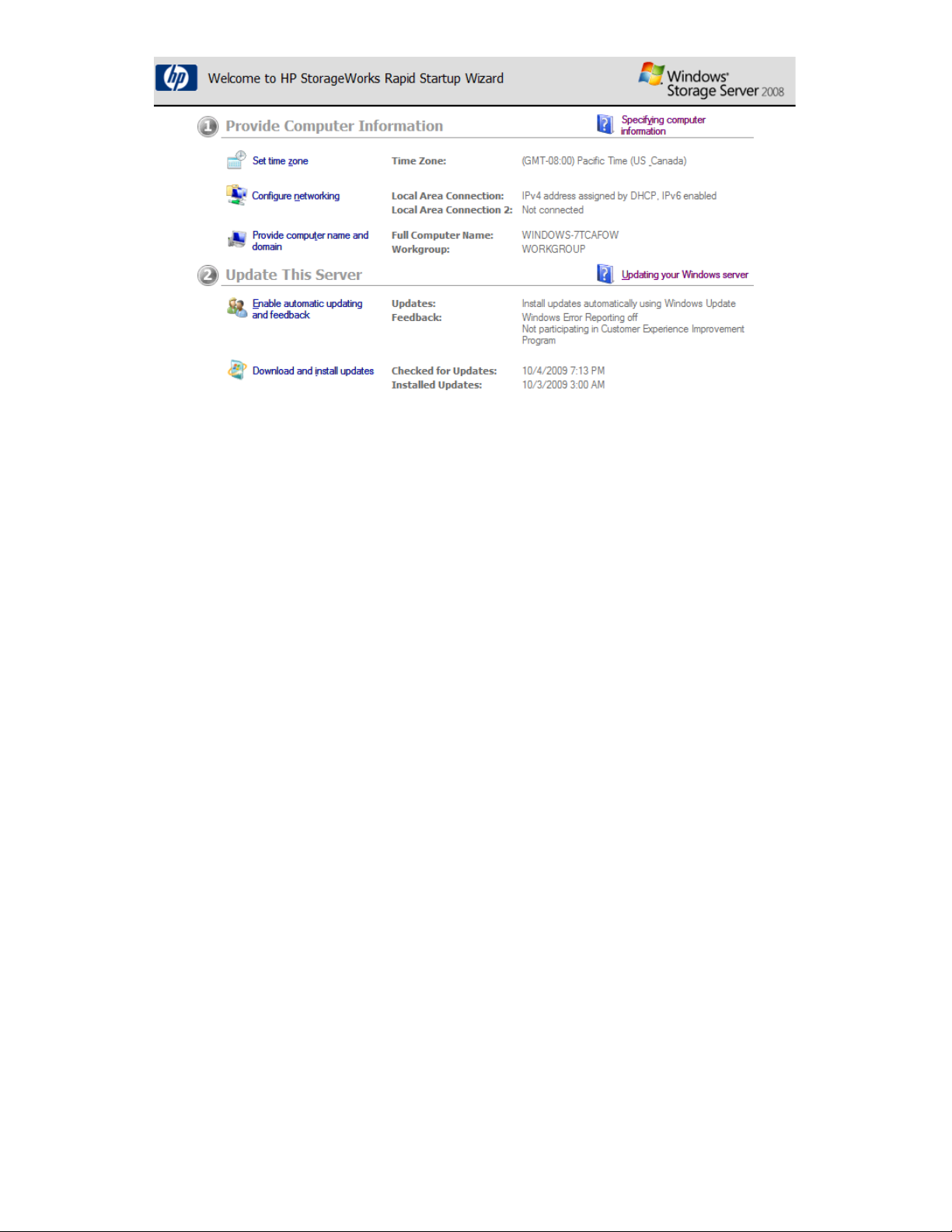

Configure the storage system using the HP StorageWorks Rapid Startup Wizard

The HP StorageWorks Rapid Startup Wizard opens the first time you log on and guides you through configuring system settings. The wizard provides the following options for configuring the storage system:

Table 2 HP Rapid Startup Wizard configuration options

| Rapid Startup Wizard Section | Configuration settings |
| --- | --- |
| Provide Computer Information | Set time zone, Configure networking, Provide computer name and domain |
| Update This Server | Enable automatic updating and feedback, Download and install updates |
| Customize This Server | Add roles, Add features, Enable Remote Desktop, Configure Windows Firewall |
| Configure HP Recommended Settings | Alert E-mail Notification, SNMP Settings, HP Lights-Out Configuration Utility |

Page 21

Figure 1 HP StorageWorks Rapid Startup Wizard Welcome screen

For detailed information about each of these configuration options, click the corresponding online

help link to the right of each section.

Complete system configuration

After the storage system is physically set up and the basic configuration is established, you must

complete additional setup tasks. Depending on the deployment scenario of the storage system, these

steps can vary. These additional steps can include:

• Running Microsoft Windows Update—HP highly recommends that you run Microsoft Windows

updates to identify, review, and install the latest, applicable, critical security updates on the storage

system.

• Creating and managing users and groups—User and group information and permissions determine

whether a user can access files. If the storage system is deployed into a workgroup environment,

this user and group information is stored locally on the device. By contrast, if the storage system

is deployed into a domain environment, user and group information is stored on the domain.

• Joining workgroups and domains—These are the two system environments for users and groups.

Because users and groups in a domain environment are managed through standard Windows or

Active Directory domain administration methods, this document discusses only local users and

groups, which are stored and managed on the storage system. For information on managing users

and groups on a domain, see the domain documentation available on the Microsoft web site.

If the storage system is deployed in a domain environment, the domain controller will store new

accounts on the domain; however, remote systems will store new accounts locally unless they are

granted permissions to create accounts on the domain.

• Using Ethernet NIC teaming (optional)—Select models are equipped with an HP or Broadcom

NIC Teaming utility. The utility allows administrators to configure and monitor Ethernet network

interface controller (NIC) teams in a Windows-based operating system. These teams provide options

for increasing fault tolerance and throughput.

• Activating iLO 2 Advanced features using a license key—The Remote Console feature of iLO 2

requires a license key. The key is included with the storage system inside the Country Kit. See the

iLO 2 Advanced License Pack for activation instructions.

• Adjusting logging for system, application, and security events.

Page 22

• Installing third-party software applications—For example, these might include an antivirus application that you install.

• Registering the server — To register the server, refer to the HP Registration website (http://register.hp.com).

Additional access methods

After the storage system installation process is complete and the system's IP address has been assigned,

you can then additionally use the remote browser, Remote Desktop, and Telnet Server methods to

access the storage system.

Using the remote browser method

The storage system ships with DHCP enabled on the network port. If the server is placed on a DHCP-enabled network and the IP address or server name is known, the server can be accessed through a client running Internet Explorer 5.5 (or later) on that network, using TCP port 3202.

IMPORTANT:

Before you begin this procedure, ensure that you have the following:

• Windows-based PC loaded with Internet Explorer 5.5 (or later) on the same local network as the

storage system

• DHCP-enabled network

• Server name or IP address of the storage system

To connect the server to a network using the remote browser method, ensure that the client is configured

to download signed ActiveX controls.

To connect the storage system to a network using the remote browser method

1. On the remote client machine, open Internet Explorer and enter https:// and the server name of the storage system followed by a hyphen (-), and then :3202. For example, https://labserver-:3202. Press Enter.

NOTE:

If you are able to determine the IP address from your DHCP server, you can substitute the

IP address for the server name. For example: 192.100.0.1:3202.

2. Click OK on the Security Alert prompt.

3. Log on to the storage system with the administrator user name and password.
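The address format used in step 1 can be sketched as a small helper. This Python example is illustrative only. The function name `remote_browser_url` is hypothetical, and the sketch assumes that the https:// prefix is also used with an IPv4 address and that the hyphen is omitted in that case, as the IP address example in the note suggests.

```python
import ipaddress


def remote_browser_url(host, port=3202):
    """Build the remote browser address for a server name or IPv4 address."""
    try:
        ipaddress.IPv4Address(host)
        is_ip = True
    except ValueError:
        is_ip = False
    # Server names take a trailing hyphen; IPv4 addresses do not (assumption).
    suffix = "" if is_ip else "-"
    return f"https://{host}{suffix}:{port}"
```

With the names used in this section, `remote_browser_url("labserver")` produces the address shown in step 1.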

IMPORTANT:

If you are using the remote browser method to access the storage system, always close the remote

session before closing your Internet browser. Closing the Internet browser does not close the remote

session. Failure to close your remote session impacts the limited number of remote sessions allowed

on the storage system at any given time.

Page 23

Using the Remote Desktop method

Remote Desktop lets you log on to and remotely administer your server from any client. Installed for remote administration, Remote Desktop allows only two concurrent sessions. Leaving a session running takes up one license and can affect other users. If two sessions are running, additional users are denied access.

To connect the storage system to a network using the Remote Desktop method

1. On the PC client, select Start > Run. At Open, type mstsc, then click OK.

2. Enter the IP address of the storage system in the Computer box and click Connect.

3. Log on to the storage system with the administrator user name and password.
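Steps 1 and 2 can also be combined by passing the address to mstsc on the command line with its `/v:` parameter. The following Python sketch only builds that command. The function name `mstsc_command` is illustrative, and the address must be replaced with the IP address of your storage system.

```python
def mstsc_command(address):
    """Return the argument list that opens Remote Desktop to the given address."""
    # /v: tells mstsc which remote computer to connect to.
    return ["mstsc", f"/v:{address}"]
```

On a Windows client, the returned list can be passed to `subprocess.run`. Because only two concurrent sessions are allowed, log off when finished rather than closing the window.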

Using the Telnet Server method

Telnet Server is a utility that lets users connect to machines, log on, and obtain a command prompt

remotely. Telnet Server is preinstalled on the storage system but must be activated before use.

CAUTION:

For security reasons, the Telnet Server is disabled by default. The service needs to be modified to

enable access to the storage system with Telnet.

Enabling Telnet Server

The Telnet Server service must be enabled before it can be accessed.

1. In Server Manager, expand the Configuration node in the left panel.

2. Click System and Network Settings.

3. Under System Settings Configuration, click Telnet.

4. Check the Enable Telnet access to this server check box and then click OK.
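After enabling Telnet Server, you can confirm from a client that the service accepts connections. The following is a minimal Python sketch. It assumes that Telnet Server listens on the standard Telnet port, TCP 23, which this guide does not state, and the function name `tcp_port_open` is illustrative.

```python
import socket


def tcp_port_open(host, port, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False
```

For example, `tcp_port_open("192.100.0.1", 23)` tests the example address used earlier in this chapter. A result of `False` can also mean that a firewall is blocking the port.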

Default storage settings

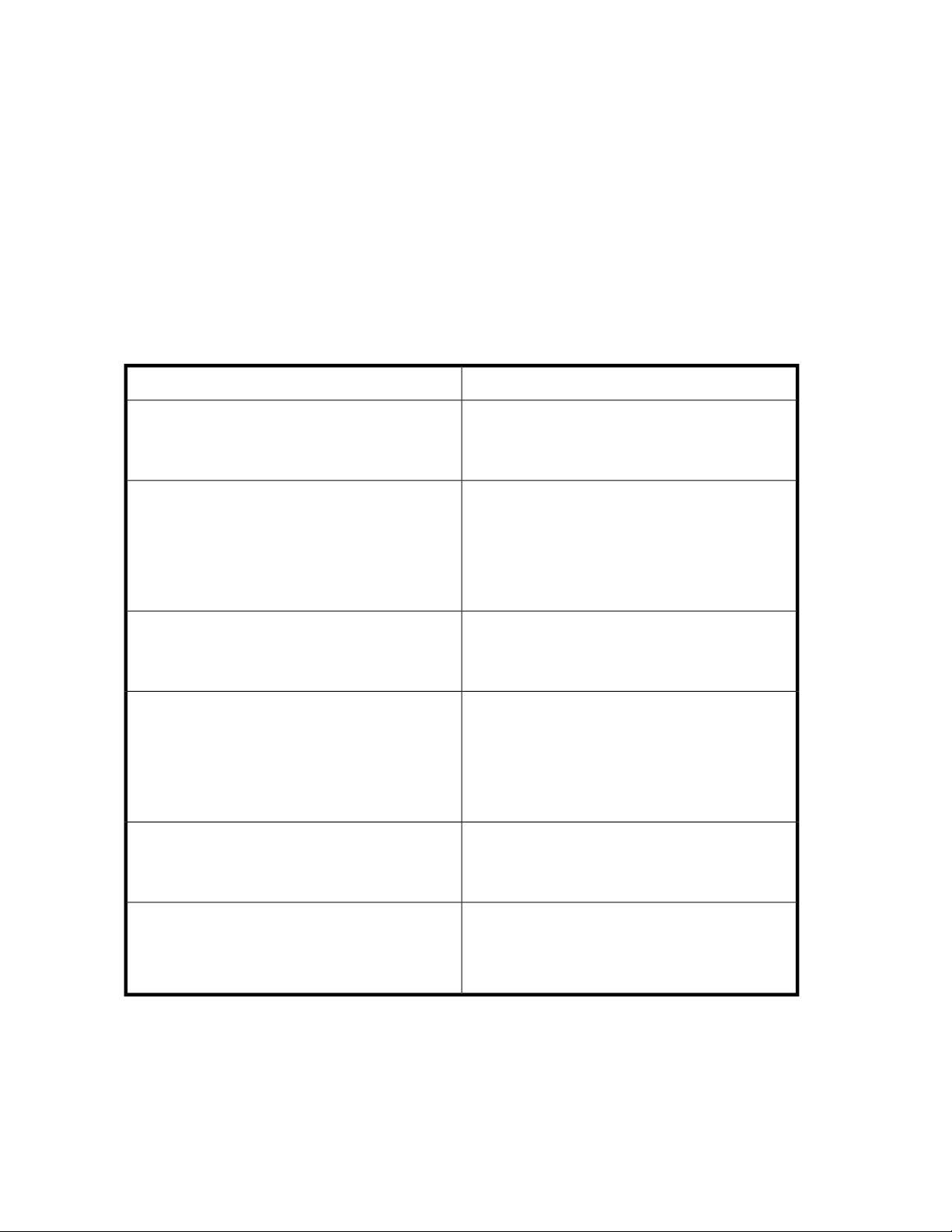

HP StorageWorks X1000 and X3000 Network Storage Systems are preconfigured with default storage

settings. This section provides additional details about the preconfigured storage.

Physical configuration

The logical disks reside on physical drives as shown in the table below.

As of the SWX image version 1.2, the DON'T ERASE volume that was created on earlier versions of

HP StorageWorks Network Storage System models is no longer used.

IMPORTANT:

The first two logical drives are configured for the storage system operating system.

Page 24

The Operating System volume default factory settings can be customized after the operating system

is up and running. The following settings can be changed:

• RAID level can be changed to any RAID level except RAID 0

• OS logical drive size can be changed to 40 GB or higher

If the Operating System volume is customized and the System Recovery DVD is run at a later time,

the System Recovery process will maintain the custom settings as long as the above criteria are met

(RAID level other than RAID 0 and OS logical drive size of 40 GB or higher) and the OS volume is

labeled System. If the storage system arrays are deleted and the System Recovery DVD is run, the

System Recovery process will configure the storage system using the factory default settings listed in

the table below.
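The conditions under which System Recovery keeps a customized Operating System volume can be expressed as a single check. This Python sketch is illustrative only. The function name `recovery_keeps_custom_settings` is hypothetical, RAID levels are written as strings such as "5" or "1+0", and an exact match on the volume label is an assumption.

```python
def recovery_keeps_custom_settings(raid_level, os_drive_gb, volume_label):
    """Return True if the custom OS volume settings meet the stated criteria."""
    return (
        raid_level != "0"             # any RAID level except RAID 0
        and os_drive_gb >= 40         # OS logical drive of 40 GB or higher
        and volume_label == "System"  # OS volume is labeled System
    )
```

For example, a RAID 1+0 volume of 60 GB labeled System satisfies all three criteria, while the same volume at RAID 0 does not.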

HP StorageWorks X1000 and X3000 Network Storage Systems do not include preconfigured data

volumes. The administrator must configure data storage for the storage system. See

“Configuring data storage” on page 56 for more information.

Table 3 Storage system RAID configurations

| Server model | Logical Disk 1 |
| --- | --- |
| HP StorageWorks X1400 Network Storage System (all models) | Operating System Volume; RAID 5; Physical Drives 0–3 |
| HP StorageWorks X1600 Network Storage System (base model); HP StorageWorks X1600 5.4TB SAS Network Storage System; HP StorageWorks X1600 12TB SATA Network Storage System | Operating System Volume; RAID 1+0; Physical Drives 0–1 |
| HP StorageWorks X1600 6TB SATA Network Storage System | Operating System Volume; RAID 5; Physical Drives 0–5 |
| HP StorageWorks X1800 Network Storage System (base model); HP StorageWorks X1800 3.6TB SAS Network Storage System; HP StorageWorks X1800 6TB SATA Network Storage System | Operating System Volume; RAID 1+0; Physical Drives 0–1 |
| HP StorageWorks X1800 2.4TB SAS Network Storage System | Operating System Volume; RAID 5; Physical Drives 0–7 |
| HP StorageWorks X3400 Network Storage Gateway; HP StorageWorks X3800 Network Storage Gateway | Operating System Volume; RAID 1+0; Physical Drives 0–1 |

Page 25

NOTE:

In the HP Array Configuration Utility (ACU), logical disks are labeled 1 and 2. In Microsoft Disk

Manager, logical disks are displayed as 0 and 1. For HP Smart Array configuration information, see

http://h18004.www1.hp.com/products/servers/proliantstorage/arraycontrollers/.

If the operating system fails, for example because of corrupt system files or a corrupt registry, or if the system hangs during boot, see “System recovery” on page 109.

Default boot sequence

The BIOS supports the following default boot sequence:

1. DVD-ROM

2. HDD

3. Bootable USB flash drive

4. PXE (network boot)
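The boot sequence above can be modeled as an ordered search. The following Python sketch assumes the usual BIOS behavior of booting from the first device in the list that holds bootable media. The names `BOOT_ORDER` and `first_boot_device` are illustrative.

```python
# Default boot sequence, in the order listed above.
BOOT_ORDER = ["DVD-ROM", "HDD", "Bootable USB flash drive", "PXE (network boot)"]


def first_boot_device(bootable):
    """Return the first device in BOOT_ORDER found in bootable, else None."""
    for device in BOOT_ORDER:
        if device in bootable:
            return device
    return None
```

This ordering is why a bootable disc left in the DVD drive, such as the System Recovery DVD, takes precedence over the OS logical drive.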

Under normal circumstances, the storage systems boot up from the OS logical drive.

• If the system experiences a drive failure, the drive displays an amber disk failure LED.

• If a single drive failure occurs, it is transparent to the OS.

Page 26

Page 27

2 Storage system component identification

This chapter provides illustrations of the storage system hardware components.

NOTE:

The keyboard, mouse, and monitor are used only for the direct attached method of accessing the server. They are not provided with your storage system.

HP X1400 Network Storage System and X3400 Network Storage Gateway hardware components

The following figures show components and LEDs located on the front and rear panels of the X1400 Network Storage System and X3400 Network Storage Gateway.

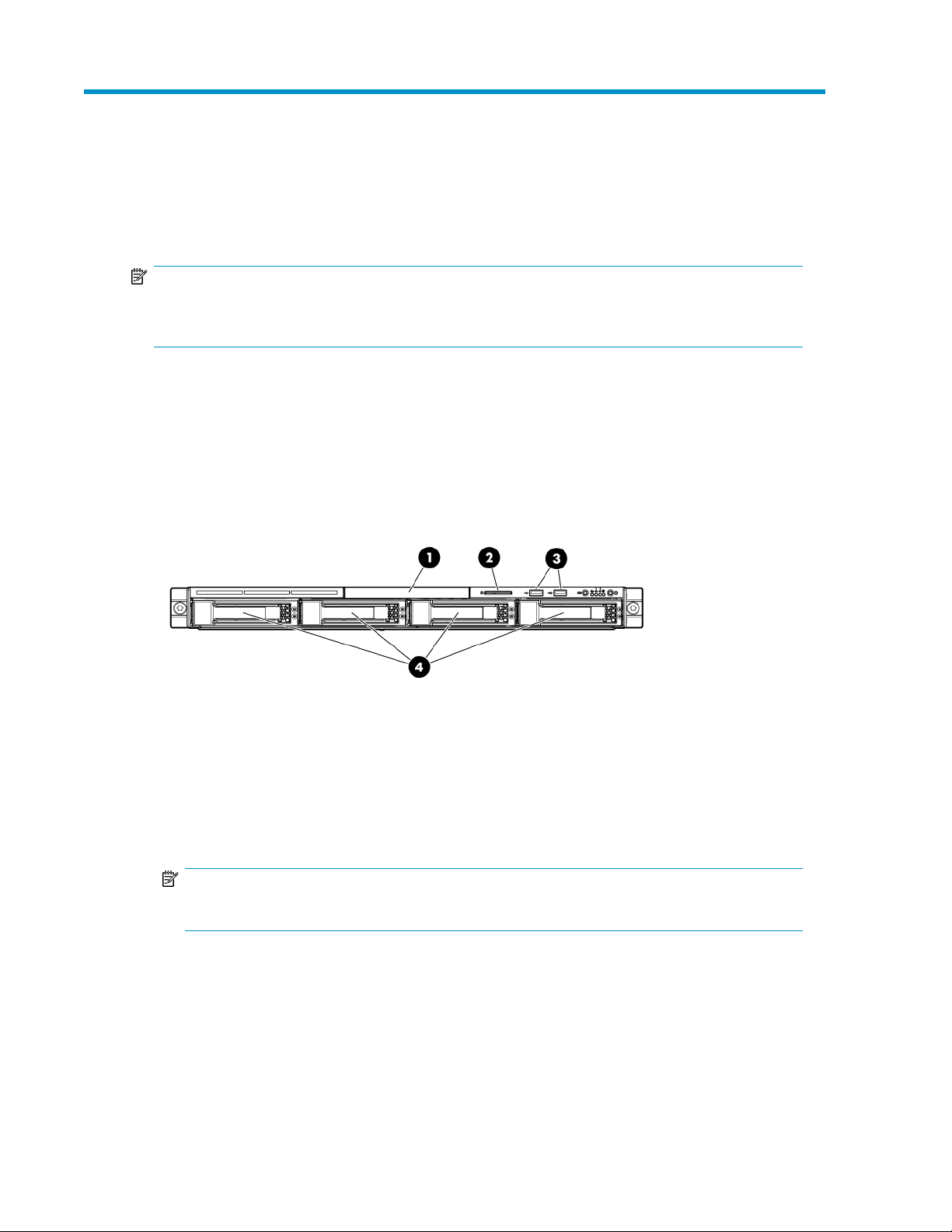

Figure 2 HP X1400 and X3400 front panel components

1. DVD-RW drive

2. Serial label pull tab

3. Two (2) USB ports

4. Four (4) 3.5” hot-plug SAS/SATA hard drive bays

NOTE:

See “SAS and SATA hard drive LED combinations” on page 36 for HDD LED status descriptions.

Page 28

Figure 3 HP X1400 and X3400 front panel LEDs

Table 4 HP X1400 and X3400 front panel LED descriptions

| Item / Description | Status |
| --- | --- |
| 1. Internal health LED | Green = System health is normal. Amber = System health is degraded. Red = System health is critical. Off = System health is normal (when in standby mode). |
| 2. NIC 1 link/activity LED | Green = Network link exists. Flashing green = Network link and activity exist. Off = No network link exists. |
| 3. NIC 2 link/activity LED | Green = Network link exists. Flashing green = Network link and activity exist. Off = No network link exists. |
| 4. Drive activity LED | Green = Drive activity is normal. Off = No drive activity exists. |
| 5. Power On/Standby button and system power LED | Green = Normal (system on). Amber = System is in standby, but power is still applied. Off = Power cord is not attached or the power supply has failed. |
| 6. UID button/LED | Blue = Identification is activated. Flashing blue = System is being managed remotely. Off = Identification is deactivated. |

Figure 4 HP X1400 and X3400 rear panel components

Page 29

1. Power cord connector

2. Mouse connector

3. 10/100/1000 NIC 1 connector/shared iLO 2 management port

4. 10/100/1000 NIC 2 connector

5. Serial connector

6. Low profile PCIe slot cover (x16 slot open)

7. Full-sized PCIe slot (occupied by Smart Array P212 controller)

8. Dedicated iLO 2 management port (this port is optional and must be purchased separately)

9. Video connector

10. USB connectors (2)

11. Keyboard connector

Figure 5 HP X1400 and X3400 rear panel LEDs

Table 5 HP X1400 and X3400 rear panel LED descriptions

| Item / Description | Status |
| --- | --- |
| 1. UID button/LED | Blue = Activated; Flashing = System is being managed remotely; Off = Deactivated |
| 2. NIC/iLO 2 link | Green = Link exists; Off = No link exists |
| 3. NIC/iLO 2 activity | Green or flashing green = Activity exists; Off = No activity exists |

HP X1600 Network Storage System hardware components

The following figures show components and LEDs located on the front and rear panels of the HP X1600 Network Storage System.

Page 30

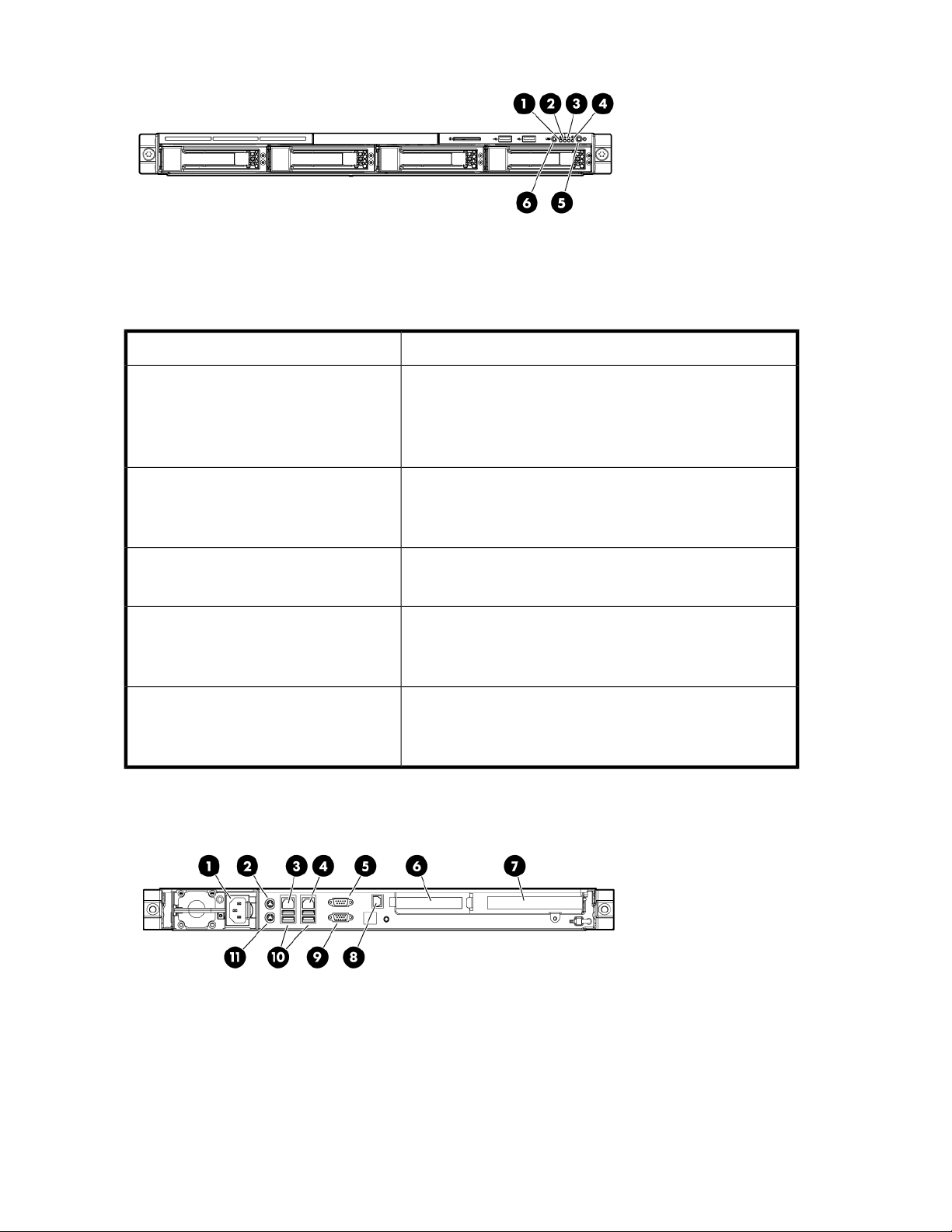

Figure 6 HP X1600 front panel components and LEDs

Table 6 HP X1600 front panel component and LED descriptions

| Item / Description | Status |
| --- | --- |
| 1–12. Twelve 3.5” (LFF) hot plug SATA/SAS hard drive bays | See “SAS and SATA hard drive LED combinations” on page 36 for HDD LED status descriptions. |
| 13. Front USB ports (2) | N/A |
| 14. Unit identification (UID) LED button | Green = Normal (system on); Flashing amber = System health degraded; Flashing red = System health critical; Off = Normal (system off) |
| 15. System health LED | Green = Normal (system on); Flashing amber = System health degraded; Flashing red = System health critical; Off = Normal (system off) |
| 16. NIC1 LED | Green = Network link; Flashing = Network link and activity; Off = No network connection |
| 17. NIC2 LED | Green = Network link; Flashing = Network link and activity; Off = No network connection |
| 18. HDD LED | Green = HDD install ready; Flashing green = Data access; Off = No access |
| 19. Power button | Green = System on; Amber = System off |

Page 31

Figure 7 HP X1600 rear panel components

1. Redundant hot-plug power supplies

2. Power supply cable socket

3. Rear DVD-RW drive

4. x8 full-length/full-height PCI-Express slot

5. x8 full-length/full-height PCI-Express slot (occupied by Smart Array P212 controller)

6. UID LED button

7. iLO 2 management port

8. LAN port

9. LAN port

10. Two (2) rear USB 2.0 ports

11. VGA port

12. Serial port

Page 32

Figure 8 HP X1600 (AW528A) rear panel components

The HP X1600 Network Storage System AW528A model includes two 2.5” Small Form Factor (SFF) SAS/SATA hot plug hard drives in the rear of the unit that are configured for the operating system. This allows up to twelve hard drives on the front of the unit to be configured for storage. In addition, the AW528A model includes two PCI-Express (PCIe) slots.

1. Redundant hot-plug power supplies

2. Power supply cable socket

3. Low profile PCIe slot (x16 slot open)

4. 2.5” SFF SAS / SATA hot plug hard drive

5. 2.5” SFF SAS / SATA hot plug hard drive

6. x8 full-length/full-height PCIe slot (occupied by Smart Array P212 controller)

7. UID LED button

8. iLO 2 management port

9. LAN port

10. LAN port

11. Two (2) rear USB 2.0 ports

12. VGA port

13. Serial port

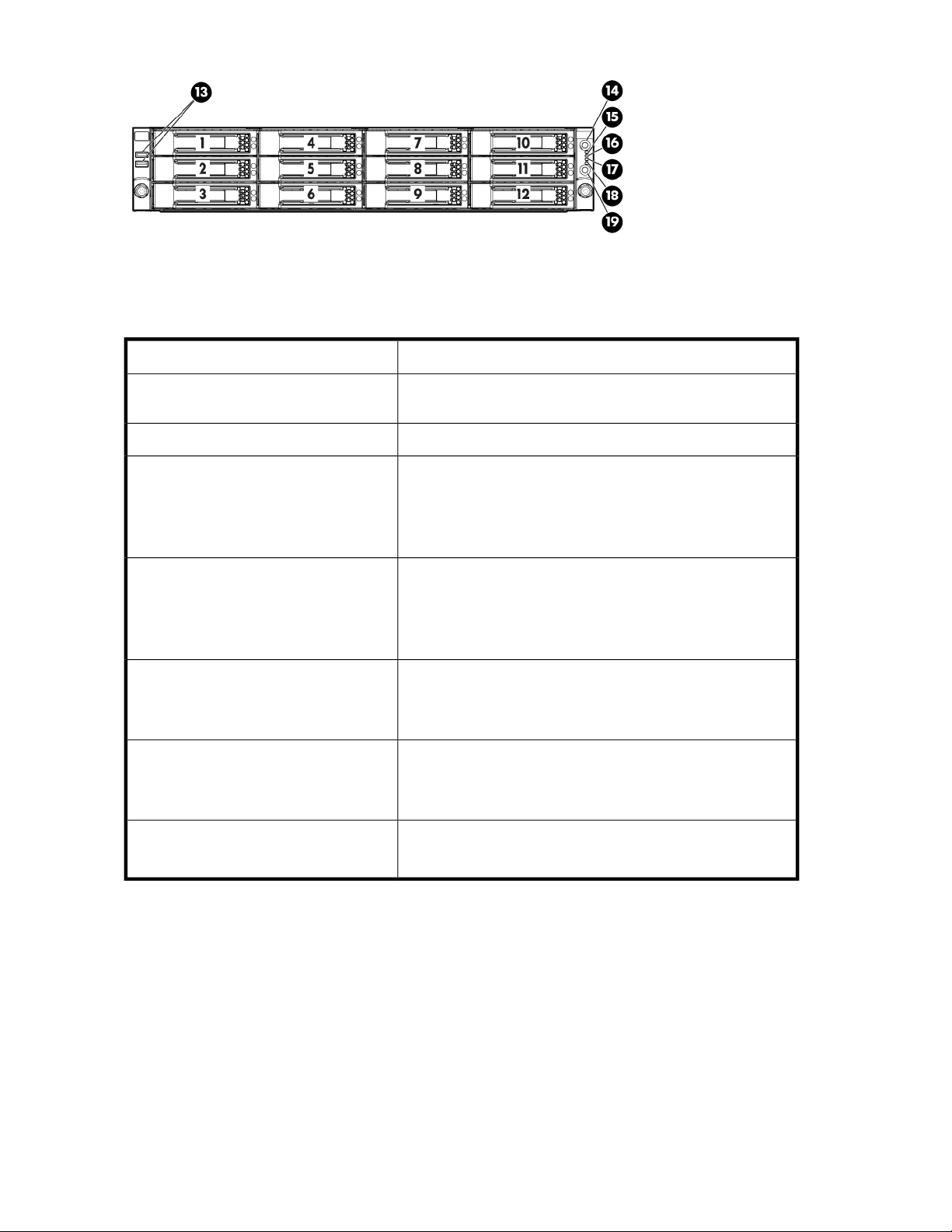

HP X1800 Network Storage System and X3800 Network Storage Gateway hardware components

The following figures show components and LEDs located on the front and rear panels of the X1800 Network Storage System and X3800 Network Storage Gateway.

Page 33

Figure 9 HP X1800 and X3800 front panel components

1. Quick release levers (2)

2. Systems Insight Display

NOTE:

See “Systems Insight Display LEDs” on page 37 and “Systems Insight Display LED combinations” on page 39 for LED status information.

3. Eight (8) 2.5” SFF SAS / SATA hot plug hard drive bays

NOTE:

See “SAS and SATA hard drive LED combinations” on page 36 for HDD LED status descriptions.

4. DVD-RW drive

5. Video connector

6. USB connectors (2)

Page 34

Figure 10 HP X1800 and X3800 front panel LEDs and buttons

Table 7 HP X1800 and X3800 front panel LED and button descriptions

| Item / Description | Status |
| --- | --- |
| 1. UID LED and button | Blue = Activated; Flashing blue = System being remotely managed; Off = Deactivated |
| 2. System health LED | Green = Normal; Amber = System degraded; Red = System critical. To identify components in degraded or critical state, see |
| 3. Power On/Standby button and system power LED | Green = System on; Amber = System in standby, but power is still applied; Off = Power cord not attached or power supply failure |

Figure 11 HP X1800 and X3800 rear panel components

1. PCIe slot 5

Page 35

2. PCIe slot 6

3. PCIe slot 4

4. PCIe slot 2

5. PCIe slot 3

6. PCIe slot 1 (occupied by Smart Array controller with external SAS ports for expandability)

7. Power supply 2 (standard)

8. Power supply 1 (standard)

9. USB connectors (2)

10. Video connector

11. NIC 1 connector

12. NIC 2 connector

13. Mouse connector

14. Keyboard connector

15. Serial connector

16. iLO 2 connector

17. NIC 3 connector

18. NIC 4 connector

Figure 12 HP X1800 and X3800 rear panel LEDs and buttons

Table 8 HP X1800 and X3800 rear panel LED and button descriptions

| Item / Description | Status |
| --- | --- |
| 1. Power supply LED | Green = Normal; Off = System is off or power supply has failed |
| 2. UID LED/button | Blue = Activated; Flashing blue = System being managed remotely; Off = Deactivated |
| 3. NIC/iLO 2 activity LED | Green = Network activity; Flashing green = Network activity; Off = No network activity |
| 4. NIC/iLO 2 link LED | Green = Network link; Off = No network link |

Page 36

SAS and SATA hard drive LEDs

The following figure shows SAS/SATA hard drive LEDs. These LEDs are located on all HP ProLiant hot plug hard drives.

Figure 13 SAS/SATA hard drive LEDs
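The LED combinations listed in Table 9 can be read as a lookup keyed on the state of the two LEDs. The following sketch restates the table in that form. The state strings, the shortened status summaries, and the function name are illustrative labels chosen for this example; they are not values reported by any HP utility. `ANY` stands for the table entry "On, off, or flashing".

```python
ANY = "any"  # matches "On, off, or flashing" in Table 9

# (online/activity LED, fault/UID LED, summary of the Status column)
LED_TABLE = [
    (ANY, "alternating amber/blue",
     "Failed or predictive failure alert; selected by a management application"),
    (ANY, "steady blue",
     "Operating normally; selected by a management application"),
    ("on", "amber flashing 1 Hz",
     "Predictive failure alert; replace the drive as soon as possible"),
    ("on", "off", "Online, but not active currently"),
    ("flashing 1 Hz", "amber flashing 1 Hz",
     "Do not remove: expansion or migration in progress, predictive failure alert received"),
    ("flashing 1 Hz", "off",
     "Do not remove: rebuilding, or expansion or migration in progress"),
    ("flashing irregularly", "amber flashing 1 Hz",
     "Active, but predictive failure alert; replace the drive as soon as possible"),
    ("flashing irregularly", "off", "Active and operating normally"),
    ("off", "steady amber",
     "Critical fault; drive placed offline; replace the drive as soon as possible"),
    ("off", "amber flashing 1 Hz",
     "Predictive failure alert; replace the drive as soon as possible"),
    ("off", "off", "Offline, a spare, or not configured as part of an array"),
]


def drive_status(online_led: str, fault_led: str) -> str:
    """Return the Table 9 summary for a pair of LED states."""
    for online, fault, status in LED_TABLE:
        if fault == fault_led and online in (ANY, online_led):
            return status
    return "Combination not listed in Table 9"


print(drive_status("flashing irregularly", "off"))  # Active and operating normally
```

The two rows that use `ANY` depend only on the fault/UID LED, which is why the lookup checks that LED first and treats the online/activity LED as a wildcard for those rows.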

Table 9 SAS and SATA hard drive LED combinations

| 1. Fault/UID LED (amber/blue) | 2. Online/activity LED (green) | Status |
| --- | --- | --- |
| Alternating amber and blue | On, off, or flashing | The drive has failed, or a predictive failure alert has been received for this drive; it also has been selected by a management application. |
| Steadily blue | On, off, or flashing | The drive is operating normally, and it has been selected by a management application. |
| Amber, flashing regularly (1 Hz) | On | A predictive failure alert has been received for this drive. Replace the drive as soon as possible. |
| Off | On | The drive is online, but it is not active currently. |
| Amber, flashing regularly (1 Hz) | Flashing regularly (1 Hz) | Do not remove the drive. Removing a drive may terminate the current operation and cause data loss. The drive is part of an array that is undergoing capacity expansion or stripe migration, but a predictive failure alert has been received for this drive. To minimize the risk of data loss, do not replace the drive until the expansion or migration is complete. |
| Off | Flashing regularly (1 Hz) | Do not remove the drive. Removing a drive may terminate the current operation and cause data loss. The drive is rebuilding, or it is part of an array that is undergoing capacity expansion or stripe migration. |
| Amber, flashing regularly (1 Hz) | Flashing irregularly | The drive is active, but a predictive failure alert has been received for this drive. Replace the drive as soon as possible. |
| Off | Flashing irregularly | The drive is active, and it is operating normally. |
| Steadily amber | Off | A critical fault condition has been identified for this drive, and the controller has placed it offline. Replace the drive as soon as possible. |
| Amber, flashing regularly (1 Hz) | Off | A predictive failure alert has been received for this drive. Replace the drive as soon as possible. |
| Off | Off | The drive is offline, a spare, or not configured as part of an array. |

Page 37

Systems Insight Display LEDs

The HP Systems Insight Display LEDs represent the system board layout. The display enables diagnosis with the access panel installed.

Page 38

Figure 14 Systems Insight Display LEDs

Table 10 Systems Insight Display LED descriptions

StatusItem / Description

Green = Network link

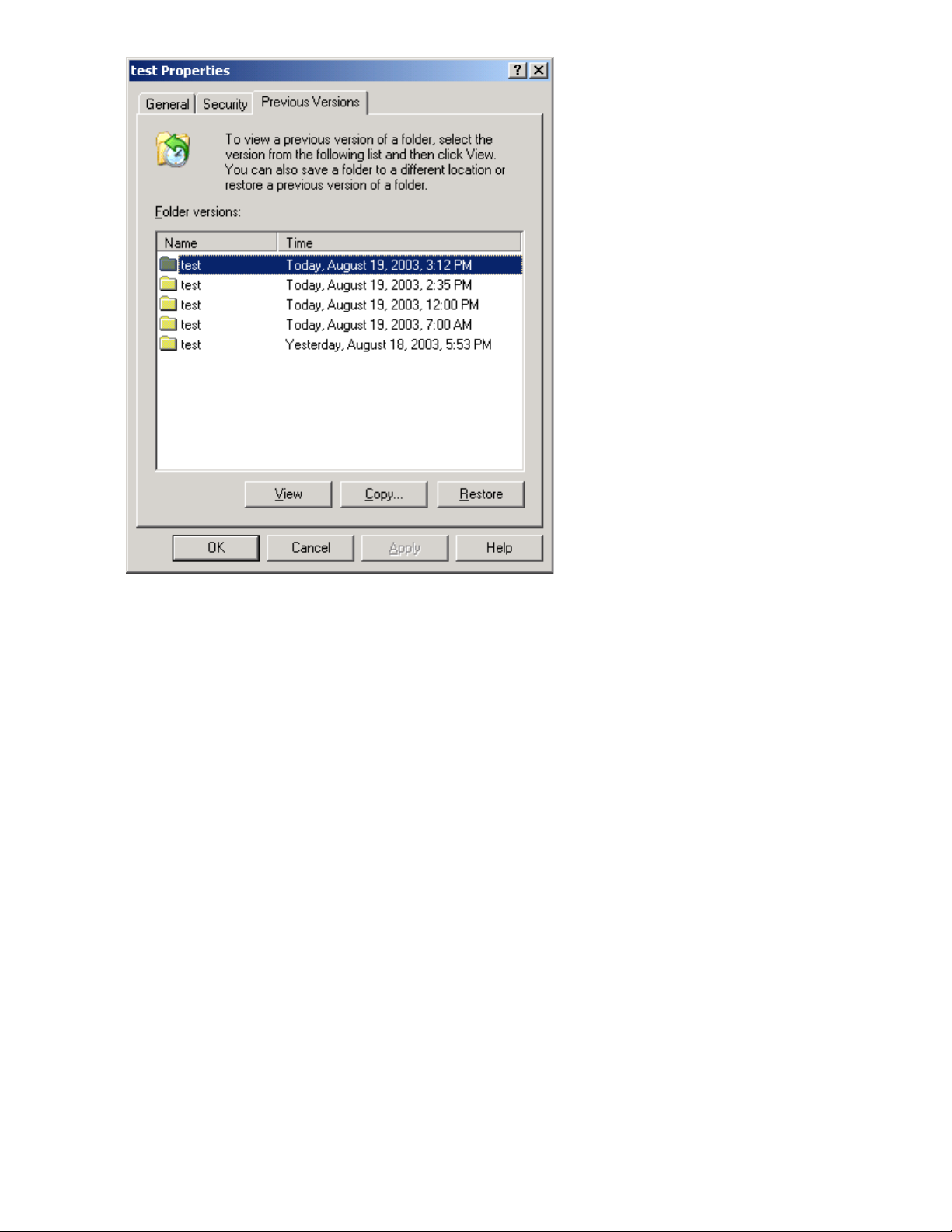

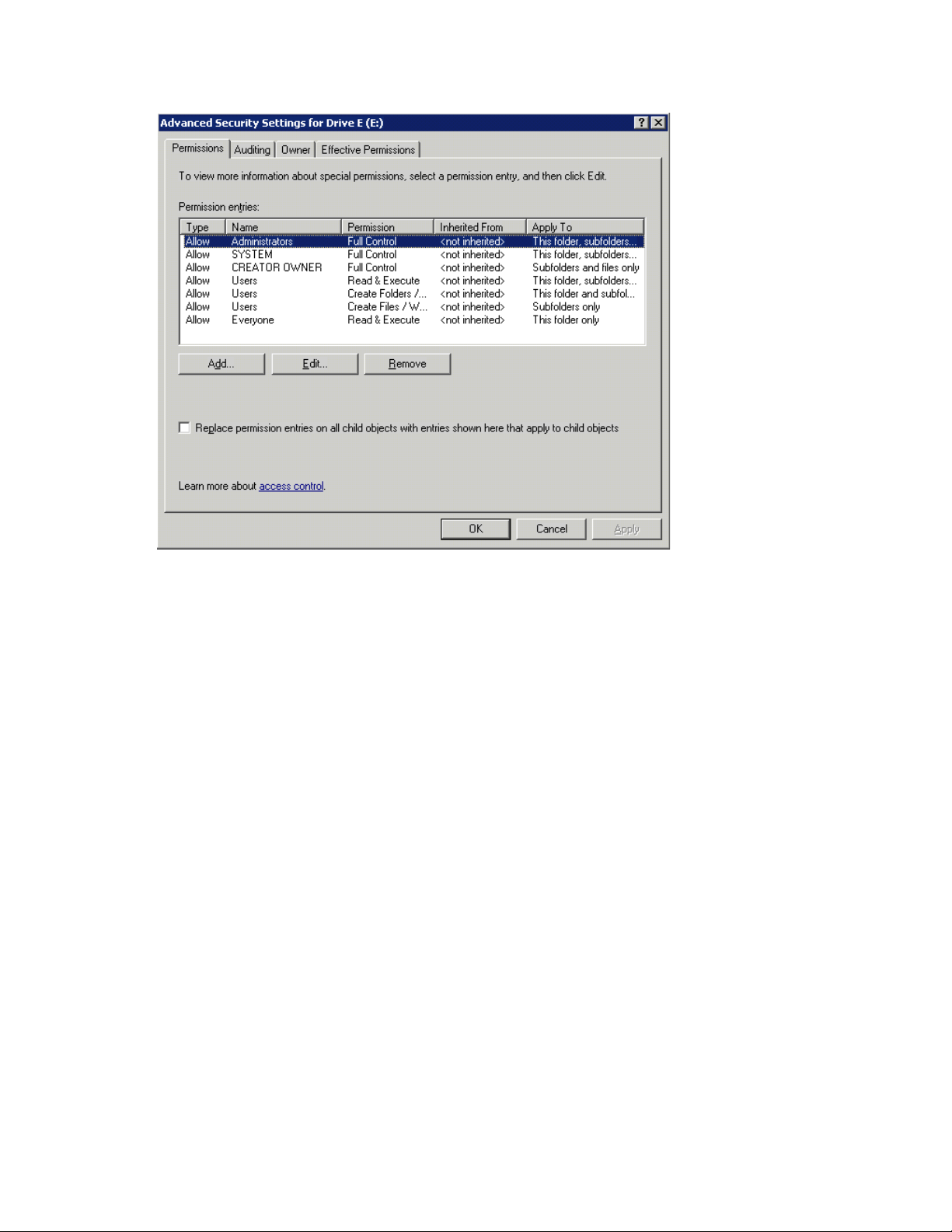

Flashing green = Network link and activity