VMware vSphere Big Data Extensions

Administrator's and User's Guide

vSphere Big Data Extensions 2.2

This document supports the version of each product listed and supports all subsequent versions until the document is replaced by a new edition. To check for more recent editions of this document, see http://www.vmware.com/support/pubs.

EN-001701-00

You can find the most up-to-date technical documentation on the VMware Web site at:

http://www.vmware.com/support/

The VMware Web site also provides the latest product updates.

If you have comments about this documentation, submit your feedback to:

docfeedback@vmware.com

Copyright © 2013 – 2015 VMware, Inc. All rights reserved. Copyright and trademark information.

This work is licensed under a Creative Commons Attribution-NoDerivs 3.0 United States License

(http://creativecommons.org/licenses/by-nd/3.0/us/legalcode).

VMware, Inc.

3401 Hillview Ave.

Palo Alto, CA 94304

www.vmware.com

Contents

About This Book

1 About VMware vSphere Big Data Extensions
  Getting Started with Big Data Extensions
  Big Data Extensions and Project Serengeti
  About Big Data Extensions Architecture
  About Application Managers
  Big Data Extensions Support for MapReduce Distribution Features
  Feature Support By Hadoop Distribution

2 Installing Big Data Extensions
  System Requirements for Big Data Extensions
  Unicode UTF-8 and Special Character Support
  The Customer Experience Improvement Program
  Deploy the Big Data Extensions vApp in the vSphere Web Client
  Install RPMs in the Serengeti Management Server Yum Repository
  Install the Big Data Extensions Plug-In
  Configure vCenter Single Sign-On Settings for the Serengeti Management Server
  Connect to a Serengeti Management Server
  Install the Serengeti Remote Command-Line Interface Client
  Access the Serengeti CLI By Using the Remote CLI Client

3 Upgrading Big Data Extensions
  Prepare to Upgrade Big Data Extensions
  Upgrade Big Data Extensions Virtual Appliance
  Upgrade the Big Data Extensions Plug-in
  Upgrade the Serengeti CLI
  Upgrade Big Data Extensions Virtual Machine Components by Using the Serengeti Command-Line Interface
  Add a Remote Syslog Server

4 Managing Application Managers
  Add an Application Manager by Using the vSphere Web Client
  Modify an Application Manager by Using the Web Client
  Delete an Application Manager by Using the vSphere Web Client
  View Application Managers and Distributions by Using the Web Client
  View Roles for Application Manager and Distribution by Using the Web Client

5 Managing Hadoop Distributions
  Hadoop Distribution Deployment Types
  Configure a Tarball-Deployed Hadoop Distribution by Using the Serengeti Command-Line Interface
  Configuring Yum and Yum Repositories
  Create a Hadoop Template Virtual Machine using RHEL Server 6.x and VMware Tools
  Maintain a Customized Hadoop Template Virtual Machine

6 Managing the Big Data Extensions Environment
  Add Specific User Names to Connect to the Serengeti Management Server
  Change the Password for the Serengeti Management Server
  Create a User Name and Password for the Serengeti Command-Line Interface
  Specify a Group of Users in Active Directory or LDAP to Use a Hadoop Cluster
  Stop and Start Serengeti Services
  Ports Used for Communication between Big Data Extensions and the vCenter Server
  Verify the Operational Status of the Big Data Extensions Environment

7 Managing vSphere Resources for Clusters
  Add a Resource Pool with the Serengeti Command-Line Interface
  Remove a Resource Pool with the Serengeti Command-Line Interface
  Add a Datastore in the vSphere Web Client
  Remove a Datastore in the vSphere Web Client
  Add a Network in the vSphere Web Client
  Modify the DNS Type in the vSphere Web Client
  Reconfigure a Static IP Network in the vSphere Web Client
  Remove a Network in the vSphere Web Client

8 Creating Hadoop and HBase Clusters
  About Hadoop and HBase Cluster Deployment Types
  Hadoop Distributions Supporting MapReduce v1 and MapReduce v2 (YARN)
  About Cluster Topology
  About HBase Database Access
  Create a Big Data Cluster in the vSphere Web Client
  Create an HBase Only Cluster in Big Data Extensions
  Create a Cluster with an Application Manager by Using the vSphere Web Client
  Create a Compute-Only Cluster with a Third Party Application Manager by Using vSphere Web Client
  Create a Compute Workers Only Cluster by Using the vSphere Web Client

9 Managing Hadoop and HBase Clusters
  Stop and Start a Cluster in the vSphere Web Client
  Delete a Cluster in the vSphere Web Client
  About Resource Usage and Elastic Scaling
  Scale a Cluster in or out by using the vSphere Web Client
  Scale CPU and RAM in the vSphere Web Client
  Use Disk I/O Shares to Prioritize Cluster Virtual Machines in the vSphere Web Client
  About vSphere High Availability and vSphere Fault Tolerance
  Change the User Password on All of the Nodes of a Cluster
  Reconfigure a Cluster with the Serengeti Command-Line Interface
  Recover from Disk Failure with the Serengeti Command-Line Interface Client
  Enter Maintenance Mode to Perform Backup and Restore with the Serengeti Command-Line Interface Client
  Log in to Hadoop Nodes with the Serengeti Command-Line Interface Client

10 Monitoring the Big Data Extensions Environment
  Enable the Big Data Extensions Data Collector
  Disable the Big Data Extensions Data Collector
  View Serengeti Management Server Initialization Status
  View Provisioned Clusters in the vSphere Web Client
  View Cluster Information in the vSphere Web Client
  Monitor the HDFS Status in the vSphere Web Client
  Monitor MapReduce Status in the vSphere Web Client
  Monitor HBase Status in the vSphere Web Client

11 Accessing Hive Data with JDBC or ODBC
  Configure Hive to Work with JDBC
  Configure Hive to Work with ODBC

12 Big Data Extensions Security Reference
  Services, Network Ports, and External Interfaces
  Big Data Extensions Configuration Files
  Big Data Extensions Public Key, Certificate, and Keystore
  Big Data Extensions Log Files
  Big Data Extensions User Accounts
  Security Updates and Patches

13 Troubleshooting
  Log Files for Troubleshooting
  Configure Serengeti Logging Levels
  Collect Log Files for Troubleshooting
  Troubleshooting Cluster Creation Failures
  Big Data Extensions Virtual Appliance Upgrade Fails
  Upgrade Cluster Error When Using Cluster Created in Earlier Version of Big Data Extensions
  Virtual Update Manager Does Not Upgrade the Hadoop Template Virtual Machine Under Big Data Extensions vApp
  Unable to Connect the Big Data Extensions Plug-In to the Serengeti Server
  vCenter Server Connections Fail to Log In
  Management Server Cannot Connect to vCenter Server
  Cannot Perform Serengeti Operations after Deploying Big Data Extensions
  SSL Certificate Error When Connecting to Non-Serengeti Server with the vSphere Console
  Cannot Restart or Reconfigure a Cluster For Which the Time Is Not Synchronized
  Cannot Restart or Reconfigure a Cluster After Changing Its Distribution
  Virtual Machine Cannot Get IP Address and Command Fails
  Cannot Change the Serengeti Server IP Address From the vSphere Web Client
  A New Plug-In Instance with the Same or Earlier Version Number as a Previous Plug-In Instance Does Not Load
  Host Name and FQDN Do Not Match for Serengeti Management Server
  Serengeti Operations Fail After You Rename a Resource in vSphere
  Big Data Extensions Server Does Not Accept Resource Names With Two or More Contiguous White Spaces
  Non-ASCII characters are not displayed correctly
  MapReduce Job Fails to Run and Does Not Appear In the Job History
  Cannot Submit MapReduce Jobs for Compute-Only Clusters with External Isilon HDFS
  MapReduce Job Stops Responding on a PHD or CDH4 YARN Cluster
  Cannot Download the Package When Using Downloadonly Plugin
  Cannot Find Packages When You Use Yum Search
  Remove the HBase Rootdir in HDFS Before You Delete the HBase Only Cluster

Index

About This Book

VMware vSphere Big Data Extensions Administrator's and User's Guide describes how to install VMware

vSphere Big Data Extensions™ within your vSphere environment, and how to manage and monitor Hadoop

and HBase clusters using the Big Data Extensions plug-in for vSphere Web Client.

VMware vSphere Big Data Extensions Administrator's and User's Guide also describes how to perform Hadoop

and HBase operations using the VMware Serengeti™ Command-Line Interface Client, which provides a

greater degree of control for certain system management and big data cluster creation tasks.

Intended Audience

This guide is for system administrators and developers who want to use Big Data Extensions to deploy and

manage Hadoop clusters. To successfully work with Big Data Extensions, you should be familiar with

VMware® vSphere® and Hadoop and HBase deployment and operation.

VMware Technical Publications Glossary

VMware Technical Publications provides a glossary of terms that might be unfamiliar to you. For definitions

of terms as they are used in VMware technical documentation, go to

http://www.vmware.com/support/pubs.

Chapter 1 About VMware vSphere Big Data Extensions

VMware vSphere Big Data Extensions lets you deploy and centrally operate big data clusters running on VMware vSphere. Big Data Extensions simplifies the Hadoop and HBase deployment and provisioning process, and gives you a real-time view of the running services and the status of their virtual hosts. It provides a central place from which to manage and monitor your big data cluster, and incorporates a full range of tools to help you optimize cluster performance and utilization.

This chapter includes the following topics:

• “Getting Started with Big Data Extensions”
• “Big Data Extensions and Project Serengeti”
• “About Big Data Extensions Architecture”
• “About Application Managers”
• “Big Data Extensions Support for MapReduce Distribution Features”
• “Feature Support By Hadoop Distribution”

Getting Started with Big Data Extensions

Big Data Extensions lets you deploy big data clusters. The tasks in this section describe how to set up

VMware vSphere® for use with Big Data Extensions, deploy the Big Data Extensions vApp, access the

VMware vCenter Server® and command-line interface (CLI) administrative consoles, and configure a

Hadoop distribution for use with Big Data Extensions.

Prerequisites

• Understand what Project Serengeti® and Big Data Extensions are so that you know how they fit into your big data workflow and vSphere environment.
• Verify that the Big Data Extensions features that you want to use, such as data-compute separated clusters and elastic scaling, are supported by Big Data Extensions for the Hadoop distribution that you want to use.
• Understand which features are supported by your Hadoop distribution.

Procedure

1 Do one of the following.

  • Install Big Data Extensions for the first time. Review the system requirements, install vSphere, and install the Big Data Extensions components: Big Data Extensions vApp, Big Data Extensions plug-in for vCenter Server, and Serengeti CLI Client.
  • Upgrade Big Data Extensions from a previous version. Perform the upgrade steps.

2 (Optional) Install and configure a distribution other than Apache Bigtop for use with Big Data Extensions.

Apache Bigtop is included in the Serengeti Management Server, but you can use any Hadoop

distribution that Big Data Extensions supports.

What to do next

After you have successfully installed and configured your Big Data Extensions environment, you can

perform the following additional tasks.

• Stop and start the Serengeti services, create user accounts, manage passwords, and log in to cluster nodes to perform troubleshooting.
• Manage the vSphere resource pools, datastores, and networks that you use to create Hadoop and HBase clusters.
• Create, provision, and manage big data clusters.
• Monitor the status of the clusters that you create, including their datastores, networks, and resource pools, through the vSphere Web Client and the Serengeti Command-Line Interface.
• On your Big Data clusters, run HDFS commands, Hive and Pig scripts, and MapReduce jobs, and access Hive data.
• If you encounter any problems when using Big Data Extensions, see Chapter 13, “Troubleshooting.”

Big Data Extensions and Project Serengeti

Big Data Extensions runs on top of Project Serengeti, the open source project initiated by VMware to

automate the deployment and management of Hadoop and HBase clusters on virtual environments such as

vSphere.

Big Data Extensions and Project Serengeti provide the following components.

Project Serengeti

An open source project initiated by VMware, Project Serengeti lets users deploy and manage big data clusters in a vCenter Server managed environment. The major components are the Serengeti Management Server, which provides cluster provisioning, software configuration, and management services; an elastic scaling framework; and command-line interface. Project Serengeti is made available under the Apache 2.0 license, under which anyone can modify and redistribute Project Serengeti according to the terms of the license.

Serengeti Management Server

Provides the framework and services to run Big Data clusters on vSphere. The Serengeti Management Server performs resource management, policy-based virtual machine placement, cluster provisioning, software configuration management, and environment monitoring.

Serengeti Command-Line Interface Client

The command-line interface (CLI) client provides a comprehensive set of tools and utilities with which to monitor and manage your Big Data deployment. If you are using the open source version of Serengeti without Big Data Extensions, the CLI is the only interface through which you can perform administrative tasks. For more information about the CLI, see the VMware vSphere Big Data Extensions Command-Line Interface Guide.

Big Data Extensions

The commercial version of the open source Project Serengeti from VMware, Big Data Extensions, is delivered as a vCenter Server Appliance. Big Data Extensions includes all the Project Serengeti functions and the following additional features and components.

• Enterprise level support from VMware.
• Bigtop distribution from the Apache community.
  NOTE VMware provides the Hadoop distribution as a convenience but does not provide enterprise-level support. The Apache Bigtop distribution is supported by the open source community.
• The Big Data Extensions plug-in, a graphical user interface integrated with vSphere Web Client. This plug-in lets you perform common Hadoop infrastructure and cluster management administrative tasks.
• Elastic scaling lets you optimize cluster performance and utilization of physical compute resources in a vSphere environment. Elasticity-enabled clusters start and stop virtual machines, adjusting the number of active compute nodes based on configuration settings that you specify, to optimize resource consumption. Elasticity is ideal in a mixed workload environment to ensure that workloads can efficiently share the underlying physical resources while high-priority jobs are assigned sufficient resources.

VMware, Inc. 11

Page 12

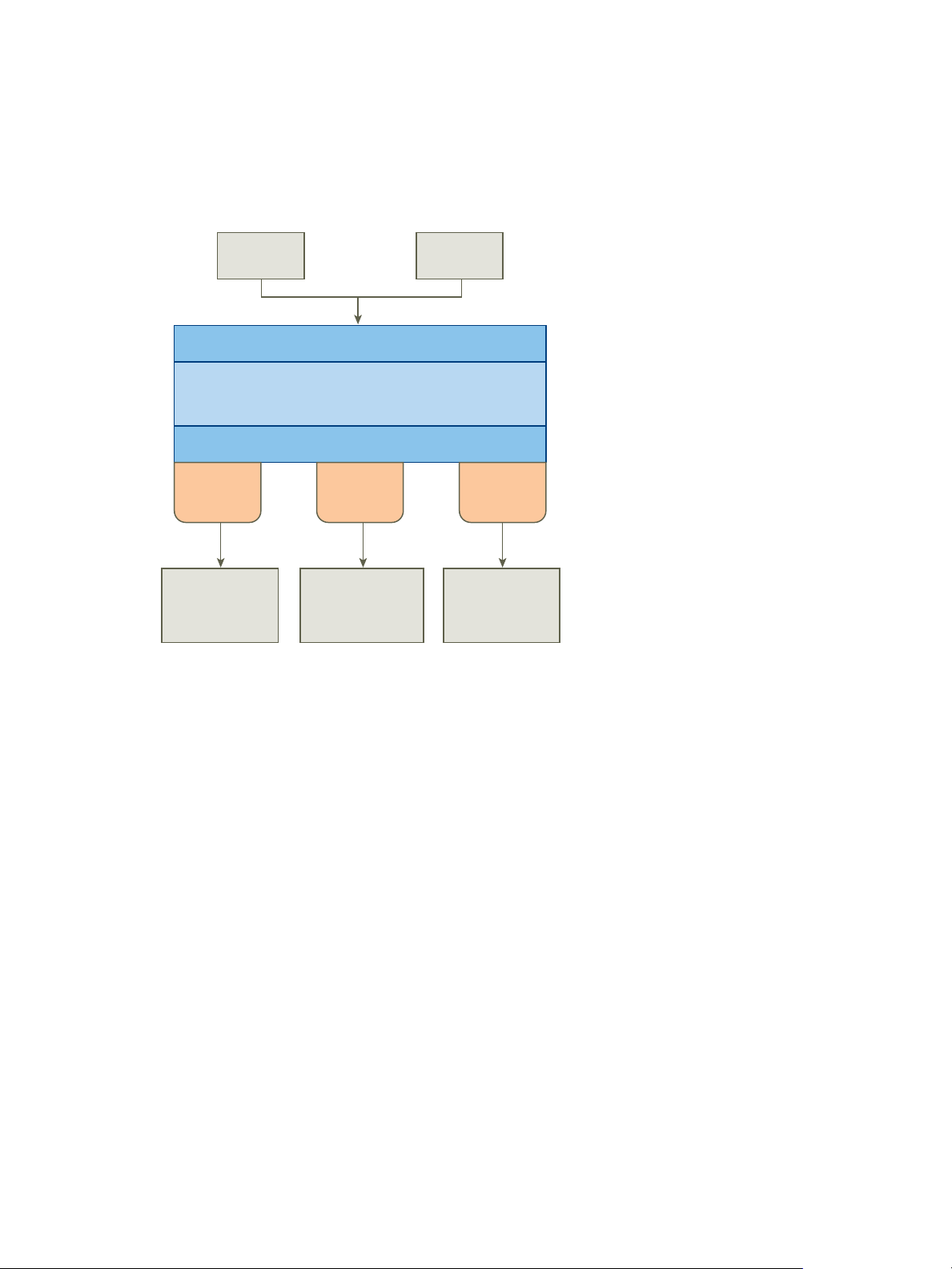

CLI GUI

Rest API

VM and Application

Provisioning Framework

Software Management SPI

Default

adapter

Cloudera

adapter

Ambari

adapter

Software

Management

Thrift Service

Cloudera

Manager

Server

Ambari

Server

VMware vSphere Big Data Extensions Administrator's and User's Guide

About Big Data Extensions Architecture

The Serengeti Management Server and Hadoop Template virtual machine work together to configure and

provision big data clusters.

Figure 1‑1. Big Data Extensions Architecture

Big Data Extensions performs the following steps to deploy a big data cluster.

1 The Serengeti Management Server searches for ESXi hosts with sufficient resources to operate the

cluster based on the configuration settings that you specify, and then selects the ESXi hosts on which to

place Hadoop virtual machines.

2 The Serengeti Management Server sends a request to the vCenter Server to clone and configure virtual

machines to use with the big data cluster.

3 The Serengeti Management Server configures the operating system and network parameters for the

new virtual machines.

4 Each virtual machine downloads the Hadoop software packages and installs them by applying the

distribution and installation information from the Serengeti Management Server.

5 The Serengeti Management Server configures the Hadoop parameters for the new virtual machines

based on the cluster configuration settings that you specify.

6 The Hadoop services are started on the new virtual machines, at which point you have a running

cluster based on your configuration settings.
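Step 1 of the sequence above can be pictured as a filter over candidate hosts. The following Python sketch is illustrative only: the `Host` fields, the per-node requirements, and the first-fit rule are assumptions made for this example, and they do not describe the placement logic that the Serengeti Management Server actually applies.

```python
from dataclasses import dataclass


@dataclass
class Host:
    """Hypothetical view of the free capacity on one ESXi host."""
    name: str
    free_cpu_cores: int
    free_memory_gb: float
    free_storage_gb: float


def select_hosts(hosts, node_count, cpu_per_node, memory_gb_per_node, storage_gb_per_node):
    """Return one host name per node, chosen with a simple first-fit pass.

    Raises ValueError when the hosts cannot hold every requested node.
    """
    # Work on copies so that the caller's inventory is left unchanged.
    remaining = [
        Host(h.name, h.free_cpu_cores, h.free_memory_gb, h.free_storage_gb)
        for h in hosts
    ]
    placement = []
    for _ in range(node_count):
        for host in remaining:
            fits = (
                host.free_cpu_cores >= cpu_per_node
                and host.free_memory_gb >= memory_gb_per_node
                and host.free_storage_gb >= storage_gb_per_node
            )
            if fits:
                # Reserve the capacity on this host for the new node.
                host.free_cpu_cores -= cpu_per_node
                host.free_memory_gb -= memory_gb_per_node
                host.free_storage_gb -= storage_gb_per_node
                placement.append(host.name)
                break
        else:
            # No host could take this node.
            raise ValueError("not enough host resources for the requested cluster")
    return placement
```

For example, `select_hosts([Host("esx-a", 4, 16, 200), Host("esx-b", 8, 32, 400)], 3, 2, 8, 100)` fills the first host before moving to the second. A real placement engine would also weigh the policies that this guide mentions, such as instances per host and group associations.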

About Application Managers

You can use Cloudera Manager, Apache Ambari, and the default application manager to provision and

manage clusters with VMware vSphere Big Data Extensions.

After you add a new Cloudera Manager or Ambari application manager to Big Data Extensions, you can

redirect your software management tasks, including monitoring and managing clusters, to that application

manager.

You can use an application manager to perform the following tasks:

• List all available vendor instances, supported distributions, and configurations or roles for a specific application manager and distribution.
• Create clusters.
• Monitor and manage services from the application manager console.

Check the documentation for your application manager for tool-specific requirements.

Restrictions

The following restrictions apply to Cloudera Manager and Ambari application managers:

• To add an application manager with HTTPS, use the FQDN instead of the URL.
• You cannot rename a cluster that was created with a Cloudera Manager or Ambari application manager.
• You cannot change services for a big data cluster from Big Data Extensions if the cluster was created with the Ambari or Cloudera Manager application manager.
• To change services, configurations, or both, you must make the changes from the application manager on the nodes.
  If you install new services, Big Data Extensions starts and stops the new services together with the old services.
• If you use an application manager to change services and big data cluster configurations, those changes cannot be synced from Big Data Extensions. The nodes that you create with Big Data Extensions do not contain the new services or configurations.

Services and Operations Supported by the Application Managers

If you use Cloudera Manager or Apache Ambari with Big Data Extensions, there are several additional

services that are available for your use.

Supported Application Managers and Distributions

Big Data Extensions supports certain application managers and Hadoop distributions.

Table 1‑1. Supported application managers and Hadoop distributions

| Supported Application Managers and Versions | Supported Distributions and Versions | Supported EMC Isilon OneFS |
|---|---|---|
| Cloudera Manager 5.3, 5.4 | CDH 5.3, 5.4 | Isilon OneFS 7.1, 7.2 |
| Apache Ambari 1.6, 1.7 | HDP 2.1, 2.2; PHD 3.0 | Isilon OneFS 7.1, 7.2 |
| Default | Apache Bigtop 0.8; CDH 5.2, 5.3; HDP 2.1; PHD 2.0, 2.1; MapR 4.0, 4.1 | Isilon OneFS 7.1, 7.2 |

Big Data Extensions does not support the provisioning of compute-only clusters with Ambari Manager. However, Ambari can provision compute-only clusters when using Isilon OneFS. Refer to the EMC Isilon Hadoop Starter Kit for Hortonworks documentation for information on configuring Ambari and Isilon OneFS.
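When you script cluster creation, it can help to encode Table 1‑1 as data and check a requested combination before you submit it. The sketch below is a convenience lookup with its values transcribed from the table; the `SUPPORTED` structure and the `is_supported` function are names invented for this example and are not part of Big Data Extensions or the Serengeti CLI.

```python
# Supported combinations transcribed from Table 1-1.
# Keys: application manager -> distribution -> supported distribution versions.
SUPPORTED = {
    "Cloudera Manager": {"CDH": {"5.3", "5.4"}},
    "Apache Ambari": {"HDP": {"2.1", "2.2"}, "PHD": {"3.0"}},
    "Default": {
        "Apache Bigtop": {"0.8"},
        "CDH": {"5.2", "5.3"},
        "HDP": {"2.1"},
        "PHD": {"2.0", "2.1"},
        "MapR": {"4.0", "4.1"},
    },
}


def is_supported(manager, distribution, version):
    """Return True when Table 1-1 lists this manager/distribution/version."""
    return version in SUPPORTED.get(manager, {}).get(distribution, set())
```

With this data, `is_supported("Apache Ambari", "HDP", "2.2")` is true, while `is_supported("Default", "HDP", "2.2")` is false because the default application manager row lists only HDP 2.1.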

Supported Features and Operations

The following features and operations are available when you use the Ambari application manager 1.6 and

1.7 (with versions HDP 2.1, 2.2, or PHD 3.0) and the Cloudera Manager application manager 5.3 and 5.4

(with versions CDH 5.3 and 5.4) on Big Data Extensions.

• Create Hadoop Cluster
• Create HBase Cluster
• Scale Cluster In/Out
• Cluster Delete
• Cluster Export (can only be performed with the Serengeti CLI)
• Cluster List
• Cluster Resume
• Cluster Start/Stop
• NameNode High Availability (available only with Ambari and Cloudera Manager)
  To use NameNode High Availability (HA) with Ambari, you must configure NameNode HA for use with your Hadoop deployment. See NameNode High Availability for Hadoop in the Hortonworks documentation.
• Hadoop Topology Awareness (RACK_AS_RACK, HOST_AS_RACK or HVE)
• vSphere Fault Tolerance
• vSphere High Availability

Services supported on Cloudera Manager and Ambari

Table 1‑2. Services supported on Cloudera Manager and Ambari

Service Name Cloudera Manager 5.3, 5.4 Ambari 1.6, 1.7

Falcon X

Flume X X

Ganglia X

HBase X X

HCatalog X

HDFS X X

Hive X X

Hue X X

Impala X

MapReduce X X

Nagios X

Oozie X X

Pig X

Sentry

Solr X

Spark X

Sqoop X X

Storm X

TEZ X

WebHCAT X

YARN X X

ZooKeeper X X

About Service Level vSphere High Availability for Ambari

Ambari supports NameNode HA; however, you must configure NameNode HA for use with your Hadoop deployment. See NameNode High Availability for Hadoop in the Hortonworks documentation.

About Service Level vSphere High Availability for Cloudera

The Cloudera distributions offer the following support for Service Level vSphere HA.

• Cloudera using MapReduce v1 provides service level vSphere HA support for JobTracker.
• Cloudera provides its own service level HA support for NameNode through HDFS2.

For information about how to use an application manager with the CLI, see the VMware vSphere Big Data

Extensions Command-Line Interface Guide.

Big Data Extensions Support for MapReduce Distribution Features

Big Data Extensions provides different levels of feature support depending on the distribution and version

that you configure for use with the default application manager.

Support for Hadoop MapReduce v1 Distribution Features

Table 1-3 lists the supported Hadoop MapReduce v1 distributions and indicates which features are

supported when you use the distribution with the default application manager on Big Data Extensions.

Table 1‑3. Big Data Extensions Feature Support for Hadoop MapReduce v1 Distributions

| | Cloudera | Hortonworks | MapR |
|---|---|---|---|
| Version | 5.3, 5.4 | 1.3 | 4.0, 4.1 |
| Automatic Deployment | Yes | Yes | Yes |
| Scale Out | Yes | Yes | Yes |
| Create Cluster with Multiple Networks | Yes | Yes | No |
| Data-Compute Separation | Yes | Yes | Yes |
| Compute-only | Yes | Yes | No |
| Elastic Scaling of Compute Nodes | Yes when using MapReduce v1 | Yes | No |
| Hadoop Configuration | Yes | Yes | No |
| Hadoop Topology Configuration | Yes | Yes | No |
| Hadoop Virtualization Extensions (HVE) | No | Yes | No |
| vSphere HA | Yes | Yes | Yes |
| Service Level vSphere HA | See “About Service Level vSphere High Availability for Cloudera” | Yes | No |
| vSphere FT | Yes | Yes | Yes |

Support for Hadoop MapReduce v2 (YARN) Distribution Features

Table 1-4 lists the supported Hadoop MapReduce v2 distributions and indicates which features are

supported when you use the distribution with the default application manager on Big Data Extensions.

Table 1‑4. Big Data Extensions Feature Support for Hadoop MapReduce v2 (YARN) Distributions

| | Apache Bigtop | Cloudera | Hortonworks | MapR | Pivotal |
|---|---|---|---|---|---|
| Version | 0.8 | 5.3, 5.4 | 2.1 | 4.0, 4.1 | 2.0, 2.1 |
| Automatic Deployment | Yes | Yes | Yes | Yes | Yes |
| Scale Out | Yes | Yes | Yes | Yes | Yes |
| Create Cluster with Multiple Networks | Yes | Yes | Yes | No | Yes |
| Data-Compute Separation | Yes | Yes | Yes | Yes | Yes |
| Compute-only | Yes | Yes | Yes | No | Yes |
| Elastic Scaling of Compute Nodes | No | No when using MapReduce 2 | Yes | No | No |
| Hadoop Configuration | Yes | Yes | Yes | No | Yes |
| Hadoop Topology Configuration | Yes | Yes | Yes | No | Yes |
| Hadoop Virtualization Extensions (HVE) | Support only for HDFS | Support only for HDFS | Support only for HDFS | No | Yes |
| vSphere HA | No | No | No | Yes | No |
| Service Level vSphere HA | No | See “About Service Level vSphere High Availability for Cloudera” | No | No | No |
| vSphere FT | No | No | No | Yes | No |

Feature Support By Hadoop Distribution

Each Hadoop distribution and version provides differing feature support. Learn which Hadoop

distributions support which features.

Hadoop Features

The table illustrates which Hadoop distributions support which features when you use the distributions

with the default application manager on Big Data Extensions.

Table 1‑5. Hadoop Feature Support

| | Apache Bigtop | Cloudera | Hortonworks | MapR | Pivotal |
|---|---|---|---|---|---|
| Version | 0.8 | 5.3, 5.4 | 2.1, 2.2 | 4.0, 4.1 | 2.0, 2.1 |
| HDFS1 | No | Yes | No | No | No |
| HDFS2 | Yes | Yes | Yes | No | Yes |
| MapReduce v1 | No | Yes | No | Yes | No |
| MapReduce v2 (YARN) | Yes | Yes | Yes | Yes | Yes |
| Pig | Yes | Yes | Yes | Yes | Yes |
| Hive | Yes | Yes | Yes | Yes | Yes |
| Hive Server | Yes | Yes | Yes | Yes | Yes |
| HBase | Yes | Yes | Yes | Yes | Yes |
| ZooKeeper | Yes | Yes | Yes | Yes | Yes |
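To answer the question "which distributions support feature X" without rereading Table 1‑5, you can keep the rows as data. The sketch below stores each row exactly as a Yes/No string in column order; `DISTRIBUTIONS`, `FEATURE_ROWS`, and `distributions_supporting` are illustrative names chosen for this example, not identifiers from Big Data Extensions.

```python
# Column order of Table 1-5.
DISTRIBUTIONS = ("Apache Bigtop", "Cloudera", "Hortonworks", "MapR", "Pivotal")

# One Yes/No flag per distribution, in the column order above.
FEATURE_ROWS = {
    "HDFS1": "No Yes No No No",
    "HDFS2": "Yes Yes Yes No Yes",
    "MapReduce v1": "No Yes No Yes No",
    "MapReduce v2 (YARN)": "Yes Yes Yes Yes Yes",
    "Pig": "Yes Yes Yes Yes Yes",
    "Hive": "Yes Yes Yes Yes Yes",
    "Hive Server": "Yes Yes Yes Yes Yes",
    "HBase": "Yes Yes Yes Yes Yes",
    "ZooKeeper": "Yes Yes Yes Yes Yes",
}


def distributions_supporting(feature):
    """Return the distributions whose Table 1-5 cell for the feature is Yes."""
    flags = FEATURE_ROWS[feature].split()
    return [name for name, flag in zip(DISTRIBUTIONS, flags) if flag == "Yes"]
```

Calling `distributions_supporting("MapReduce v1")` returns the Cloudera and MapR columns, which matches the table row. An unknown feature name raises `KeyError`, which keeps typing mistakes visible.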

Chapter 2 Installing Big Data Extensions

To install Big Data Extensions so that you can create and provision big data clusters, you must install the

Big Data Extensions components in the order described.

What to do next

If you want to create clusters on any Hadoop distribution other than Apache Bigtop, which is included in the Serengeti Management Server, install and configure the distribution for use with Big Data Extensions.

This chapter includes the following topics:

• “System Requirements for Big Data Extensions”
• “Unicode UTF-8 and Special Character Support”
• “The Customer Experience Improvement Program”
• “Deploy the Big Data Extensions vApp in the vSphere Web Client”
• “Install RPMs in the Serengeti Management Server Yum Repository”
• “Install the Big Data Extensions Plug-In”
• “Configure vCenter Single Sign-On Settings for the Serengeti Management Server”
• “Connect to a Serengeti Management Server”
• “Install the Serengeti Remote Command-Line Interface Client”
• “Access the Serengeti CLI By Using the Remote CLI Client”

System Requirements for Big Data Extensions

Before you begin the Big Data Extensions deployment tasks, your system must meet all of the prerequisites

for vSphere, clusters, networks, storage, hardware, and licensing.

Big Data Extensions requires that you install and configure vSphere and that your environment meets

minimum resource requirements. Make sure that you have licenses for the VMware components of your

deployment.

vSphere Requirements

Before you install Big Data Extensions, set up the following VMware products.

• Install vSphere 5.5 (or later) Enterprise or Enterprise Plus.
• When you install Big Data Extensions on vSphere 5.5 or later, use VMware® vCenter™ Single Sign-On to provide user authentication. When logging in to vSphere 5.5 or later you pass authentication to the vCenter Single Sign-On server, which you can configure with multiple identity sources such as Active Directory and OpenLDAP. On successful authentication, your user name and password are exchanged for a security token that is used to access vSphere components such as Big Data Extensions.
• Configure all ESXi hosts to use the same Network Time Protocol (NTP) server.
• On each ESXi host, add the NTP server to the host configuration, and from the host configuration's Startup Policy list, select Start and stop with host. The NTP daemon ensures that time-dependent processes occur in sync across hosts.
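The requirement that all ESXi hosts use the same NTP server is easy to verify once you have collected each host's NTP settings. The following sketch assumes that you have already exported those settings into a plain dictionary. The dictionary shape, the host names, and the `hosts_with_mismatched_ntp` function are hypothetical, and the sketch does not call any vSphere API.

```python
from collections import Counter


def hosts_with_mismatched_ntp(ntp_by_host):
    """Return the hosts whose NTP servers differ from the most common setting.

    ntp_by_host maps a host name to the list of NTP servers configured on it.
    """
    if not ntp_by_host:
        return []
    # Treat each host's server list as an unordered set.
    counts = Counter(frozenset(servers) for servers in ntp_by_host.values())
    expected, _ = counts.most_common(1)[0]
    return sorted(
        host for host, servers in ntp_by_host.items()
        if frozenset(servers) != expected
    )
```

An empty result means that every host in the inventory points at the same NTP servers. The sketch treats the most common setting as the intended one, which is a simplification; in practice you would compare against the server that you chose for the environment.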

Cluster Settings

Configure your cluster with the following settings.

• Enable vSphere HA and VMware vSphere® Distributed Resource Scheduler™.
• Enable Host Monitoring.
• Enable admission control and set the policy you want. The default policy is to tolerate one host failure.
• Set the virtual machine restart priority to high.
• Set the virtual machine monitoring to virtual machine and application monitoring.
• Set the monitoring sensitivity to high.
• Enable vMotion and Fault Tolerance logging.
• All hosts in the cluster have Hardware VT enabled in the BIOS.
• The Management Network VMkernel Port has vMotion and Fault Tolerance logging enabled.

Network Settings

Big Data Extensions can deploy clusters on a single network or use multiple networks. The environment determines how port groups that are attached to NICs are configured and which network backs each port group.

You can use either a vSwitch or vSphere Distributed Switch (vDS) to provide the port group backing a Serengeti cluster. vDS acts as a single virtual switch across all attached hosts while a vSwitch is per-host and requires the port group to be configured manually.

When you configure your networks to use with Big Data Extensions, verify that the following ports are open as listening ports.

• Ports 8080 and 8443 are used by the Big Data Extensions plug-in user interface and the Serengeti Command-Line Interface Client.
• Port 5480 is used by vCenter Single Sign-On for monitoring and management.
• Port 22 is used by SSH clients.
• To avoid opening a network firewall port to access Hadoop services, log in to the Hadoop client node and access your cluster from that node.
• To connect to the internet (for example, to create an internal yum repository from which to install Hadoop distributions), you may use a proxy.
• To enable communications, be sure that firewalls and web filters do not block the Serengeti Management Server or other Serengeti nodes.
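One quick way to confirm that the listening ports named above are reachable is a TCP connection test from the machine that will run the client. The sketch below uses only the Python standard library. It assumes that the ports are checked against the Serengeti Management Server, which the list above does not state explicitly, and the `check_ports` function and the host name in the usage note are placeholders invented for this example.

```python
import socket

# Ports named in the network settings above, with the purpose this guide gives.
REQUIRED_PORTS = {
    8080: "Big Data Extensions plug-in user interface and Serengeti CLI Client",
    8443: "Big Data Extensions plug-in user interface and Serengeti CLI Client",
    5480: "vCenter Single Sign-On monitoring and management",
    22: "SSH clients",
}


def check_ports(host, ports=REQUIRED_PORTS, timeout=2.0):
    """Return {port: True or False} for a TCP connection attempt to each port."""
    results = {}
    for port in ports:
        try:
            # A successful connection means something is listening on the port.
            with socket.create_connection((host, port), timeout=timeout):
                results[port] = True
        except OSError:
            # Refused, timed out, unreachable, or name resolution failed.
            results[port] = False
    return results
```

A call such as `check_ports("serengeti.example.com")` returns one entry per port. A `False` entry shows only that the connection attempt failed from where the script ran; it does not say whether a firewall, a web filter, or a stopped service is the cause.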

Direct Attached Storage

Attach and configure direct attached storage on the physical controller to present each disk separately to the operating system. This configuration is commonly described as Just A Bunch Of Disks (JBOD). Create VMFS datastores on direct attached storage using the following disk drive recommendations.

• 8-12 disk drives per host. The more disk drives per host, the better the performance.
• 1-1.5 disk drives per processor core.
• 7,200 RPM Serial ATA disk drives.

Do not use Big Data Extensions in conjunction with vSphere Storage DRS

Big Data Extensions places virtual machines on hosts according to available resources, Hadoop best practices, and user defined placement policies prior to creating virtual machines. For this reason, you should not deploy Big Data Extensions on vSphere environments in combination with Storage DRS. Storage DRS continuously balances storage space usage and storage I/O load to meet application service levels in specific environments. If Storage DRS is used with Big Data Extensions, it will disrupt the placement policies of your Big Data cluster virtual machines.

Migrating virtual machines in vCenter Server may disrupt the virtual machine placement policy

Big Data Extensions places virtual machines based on available resources, Hadoop best practices, and user defined placement policies that you specify. For this reason, DRS is disabled on all the virtual machines created within the Big Data Extensions environment. While this prevents virtual machines from being automatically migrated by vSphere, it does not prevent you from inadvertently moving virtual machines using the vCenter Server user interface. This may break the Big Data Extensions defined placement policy. For example, this may disrupt the number of instances per host and group associations.

Resource Requirements for the vSphere Management Server and Templates
- Resource pool with at least 27.5GB RAM.
- 40GB or more (recommended) disk space for the management server and Hadoop template virtual disks.

Resource Requirements for the Hadoop Cluster
- Datastore free space is not less than the total size needed by the Hadoop cluster, plus swap disks for each Hadoop node that is equal to the memory size requested.
- Network configured across all relevant ESXi hosts, and has connectivity with the network in use by the management server.
- vSphere HA is enabled for the master node if vSphere HA protection is needed. To use vSphere HA or vSphere FT to protect the Hadoop master node, you must use shared storage.
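The datastore requirement for the Hadoop cluster can be estimated with simple arithmetic. The following is a minimal sketch, assuming every node requests the same disk and memory size, which is a simplification. The function name and parameters are hypothetical.

```shell
# Estimate the minimum datastore free space (GB) for a Hadoop cluster:
# each node needs its data disk plus a swap disk equal to its requested memory.
# Assumes all nodes are the same size (illustrative simplification).
required_datastore_gb() {
  local nodes=$1 disk_gb_per_node=$2 mem_gb_per_node=$3
  echo $(( nodes * (disk_gb_per_node + mem_gb_per_node) ))
}

# Example: 5 nodes, each with a 50GB disk and 8GB of memory.
required_datastore_gb 5 50 8
```

For a cluster with mixed node groups, run the calculation once for each group and add the results.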


Hardware Requirements for the vSphere and Big Data Extensions Environment
Host hardware is listed in the VMware Compatibility Guide. To run at optimal performance, install your vSphere and Big Data Extensions environment on the following hardware.
- Dual quad-core CPUs or greater that have Hyper-Threading enabled. If you can estimate your computing workload, consider using a more powerful CPU.
- Use High Availability (HA) and dual power supplies for the master node's host machine.
- 4-8GB of memory for each processor core, with 6% overhead for virtualization.
- Use a 1 Gigabit Ethernet interface or greater to provide adequate network bandwidth.

Tested Host and Virtual Machine Support
The maximum host and virtual machine support that has been confirmed to successfully run with Big Data Extensions is 256 physical hosts running a total of 512 virtual machines.

vSphere Licensing
You must use a vSphere Enterprise license or above to use VMware vSphere HA and vSphere DRS.

Unicode UTF-8 and Special Character Support

Big Data Extensions supports internationalization (I18N) level 3. However, there are resources you specify

that do not provide UTF-8 support. You can use only ASCII attribute names consisting of alphanumeric

characters and underscores (_) for these resources.

Big Data Extensions Supports Unicode UTF-8

vCenter Server resources you specify using both the CLI and vSphere Web Client can be expressed with

underscore (_), hyphen (-), blank spaces, and all letters and numbers from any language. For example, you

can specify resources such as datastores labeled using non-English characters.

When using a Linux operating system, you should configure the system for use with UTF-8 encoding

specific to your locale. For example, to use U.S. English, specify the following locale encoding: en_US.UTF-8.

See your vendor's documentation for information on configuring UTF-8 encoding for your Linux

environment.
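As an illustration of the locale setting described above, the following minimal sketch sets U.S. English with UTF-8 encoding for the current shell session and confirms the choice. The helper function is hypothetical, and the sketch assumes that the en_US.UTF-8 locale is installed on your system. Persistent configuration differs by distribution.

```shell
# Return 0 if the given locale name ends in a UTF-8 codeset suffix.
is_utf8_locale() {
  case "$1" in
    *.UTF-8|*.utf8|*.utf-8|*.UTF8) return 0 ;;
    *) return 1 ;;
  esac
}

# Select U.S. English with UTF-8 encoding for the current shell session only.
export LANG=en_US.UTF-8

if is_utf8_locale "$LANG"; then
  echo "LANG=${LANG} uses UTF-8"
fi
```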

Special Character Support

The following vCenter Server resources can have a period (.) in their name, letting you select them using

both the CLI and vSphere Web Client.

- portgroup name
- cluster name
- resource pool name
- datastore name

The use of a period is not allowed in the Serengeti resource name.


Resources Excluded From Unicode UTF-8 Support

The Serengeti cluster specification file, manifest file, and topology racks-hosts mapping file do not provide

UTF-8 support. When you create these files to define the nodes and resources for use by the cluster, use only

ASCII attribute names consisting of alphanumeric characters and underscores (_).

The following resource names are excluded from UTF-8 support:

- cluster name
- nodeGroup name
- node name
- virtual machine name

The following attributes in the Serengeti cluster specification file are excluded from UTF-8 support:

- distro name
- role
- cluster configuration
- storage type
- haFlag
- instanceType
- groupAssociationsType

The rack name in the topology racks-hosts mapping file and the placementPolicies field of the Serengeti cluster specification file are also excluded from UTF-8 support.
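Names that must be ASCII-only can be checked before you add them to a specification file. The following is a minimal sketch, assuming a POSIX grep. The function name is hypothetical and the check is not performed by Big Data Extensions itself.

```shell
# Return 0 if the name consists only of ASCII letters, digits, and underscores.
# LC_ALL=C makes grep treat the bracket ranges as plain ASCII, so multibyte
# UTF-8 characters and periods are rejected.
is_ascii_name() {
  printf '%s\n' "$1" | LC_ALL=C grep -Eqx '[A-Za-z0-9_]+'
}

for name in hadoop_cluster01 my.cluster; do
  if is_ascii_name "$name"; then
    echo "${name}: valid"
  else
    echo "${name}: invalid"
  fi
done
```

The check is intentionally strict: it also rejects hyphens and blank spaces, which are allowed for vCenter Server resources but not for the files and names listed in this section.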

The Customer Experience Improvement Program

You can configure Big Data Extensions to collect data to help improve your user experience with VMware

products. The following section contains important information about the VMware Customer Experience

Improvement Program.

The goal of the Customer Experience Improvement Program is to quickly identify and address problems that might be affecting your experience. If you choose to participate in the Customer Experience Improvement Program, Big Data Extensions regularly sends anonymous data to VMware. VMware uses this data for product development and troubleshooting purposes.

Before collecting the data, VMware anonymizes all fields that contain information that is specific to your organization. VMware sanitizes fields by generating a hash of the actual value. When a hash value is collected, VMware cannot identify the actual value but can detect changes in the value when you change your environment.
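The following minimal sketch illustrates why a hashed field hides the original value but still reveals that the value changed. SHA-256 and the sha256sum utility are assumptions chosen for illustration. This guide does not state which hash function VMware uses, and the host names are placeholders.

```shell
# Hash a value so that it cannot be read back, while changes remain detectable.
# SHA-256 is an assumption for illustration; the guide does not name the algorithm.
anonymize_field() {
  printf '%s' "$1" | sha256sum | cut -d' ' -f1
}

before=$(anonymize_field "esx01.example.com")
same=$(anonymize_field "esx01.example.com")
after=$(anonymize_field "esx02.example.com")

# The same input always produces the same hash.
[ "$before" = "$same" ] && echo "unchanged value: identical hash"
# A different input produces a different hash, so the change is visible.
[ "$before" != "$after" ] && echo "changed value: different hash"
```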


Categories of Information in Collected Data

When you choose to participate in VMware’s Customer Experience Improvement Program (CEIP), VMware

will receive the following categories of data:

Configuration Data
Data about how you have configured VMware products and information related to your IT environment. Examples of Configuration Data include: version information for VMware products; details of the hardware and software running in your environment; product configuration settings; and information about your networking environment. Configuration Data may include hashed versions of your device IDs and MAC and Internet Protocol Addresses.

Feature Usage Data
Data about how you use VMware products and services. Examples of Feature Usage Data include: details about which product features are used; metrics of user interface activity; and details about your API calls.

Performance Data
Data about the performance of VMware products and services. Examples of Performance Data include: metrics of the performance and scale of VMware products and services; response times for User Interfaces; and details about your API calls.

Enabling and Disabling Data Collection

By default, enrollment in the Customer Experience Improvement Program is enabled during installation.

You have the option of disabling this service during installation. You can discontinue participation in the

Customer Experience Improvement Program at any time, and stop sending data to VMware. See “Disable the Big Data Extensions Data Collector,” on page 124.

If you have any questions or concerns regarding the Customer Experience Improvement Program for Big Data Extensions, contact bde-info@vmware.com.

Deploy the Big Data Extensions vApp in the vSphere Web Client

Deploying the Big Data Extensions vApp is the first step in getting your cluster up and running with

Big Data Extensions.

Prerequisites

- Install and configure vSphere.
- Configure all ESXi hosts to use the same NTP server.
- On each ESXi host, add the NTP server to the host configuration, and from the host configuration's Startup Policy list, select Start and stop with host. The NTP daemon ensures that time-dependent processes occur in sync across hosts.
- When installing Big Data Extensions on vSphere 5.1 or later, use vCenter Single Sign-On to provide user authentication.
- Verify that you have one vSphere Enterprise license for each host on which you deploy virtual Hadoop nodes. You manage your vSphere licenses in the vSphere Web Client or in vCenter Server.
- Install the Client Integration plug-in for the vSphere Web Client. This plug-in enables OVF deployment on your local file system.
  NOTE Depending on the security settings of your browser, you might have to approve the plug-in when you use it the first time.
- Download the Big Data Extensions OVA from the VMware download site.
- Verify that you have at least 40GB disk space available for the OVA. You need additional resources for the Hadoop cluster.
- Ensure that you know the vCenter Single Sign-On Lookup Service URL for your vCenter Single Sign-On service.
  If you are installing Big Data Extensions on vSphere 5.1 or later, ensure that your environment includes vCenter Single Sign-On. Use vCenter Single Sign-On to provide user authentication on vSphere 5.1 or later.
- Review the Customer Experience Improvement Program description, and determine whether you want to collect data and send it to VMware to help improve your user experience with Big Data Extensions. See “The Customer Experience Improvement Program,” on page 23.

Procedure

1 In the vSphere Web Client vCenter Hosts and Clusters view, select Actions > All vCenter Actions >

Deploy OVF Template.

2 Choose the location where the Big Data Extensions OVA resides and click Next.

Option            Description
Deploy from File  Browse your file system for an OVF or OVA template.
Deploy from URL   Type a URL to an OVF or OVA template located on the internet. For example: http://vmware.com/VMTN/appliance.ovf.

3 View the OVF Template Details page and click Next.

4 Accept the license agreement and click Next.

5 Specify a name for the vApp, select a target datacenter for the OVA, and click Next.

The only valid characters for Big Data Extensions vApp names are alphanumeric characters and underscores. The vApp name must be fewer than 60 characters. When you choose the vApp name, also consider how you will name your clusters. Together, the vApp and cluster names must be fewer than 80 characters.

6 Select a vSphere resource pool for the OVA and click Next.

Select a top-level resource pool. Child resource pools are not supported by Big Data Extensions even

though you can select a child resource pool. If you select a child resource pool, you will not be able to

create clusters from Big Data Extensions.

7 Select shared storage for the OVA and click Next.

If shared storage is not available, local storage is acceptable.

8 For each network specified in the OVF template, select a network in the Destination Networks column

in your infrastructure to set up the network mapping.

The first network lets the Management Server communicate with your Hadoop cluster. The second

network lets the Management Server communicate with vCenter Server. If your vCenter Server

deployment does not use IPv6, you can specify the same IPv4 destination network for use by both

source networks.


9 Configure the network settings for your environment, and click Next.

a Enter the network settings that let the Management Server communicate with your Hadoop

cluster.

Use a static IPv4 (IP) network. An IPv4 address is four numbers separated by dots as in

aaa.bbb.ccc.ddd, where each number ranges from 0 to 255. You must enter a netmask, such as

255.255.255.0, and a gateway address, such as 192.168.1.253.

If the vCenter Server or any ESXi host or Hadoop distribution repository is resolved using a fully

qualified domain name (FQDN), you must enter a DNS address. Enter the DNS server IP address

as DNS Server 1. If there is a secondary DNS server, enter its IP address as DNS Server 2.

NOTE You cannot use a shared IP pool with Big Data Extensions.

b (Optional) If you are using IPv6 between the Management Server and vCenter Server, select the

Enable Ipv6 Connection checkbox.

Enter the IPv6 address, or FQDN, of the vCenter Server. The IPv6 address size is 128 bits. The

preferred IPv6 address representation is: xxxx:xxxx:xxxx:xxxx:xxxx:xxxx:xxxx:xxxx where each x is

a hexadecimal digit representing 4 bits. IPv6 addresses range from

0000:0000:0000:0000:0000:0000:0000:0000 to ffff:ffff:ffff:ffff:ffff:ffff:ffff:ffff. For convenience, an IPv6

address may be abbreviated to shorter notations by application of the following rules.

- Remove one or more leading zeroes from any groups of hexadecimal digits. This is usually done to either all or none of the leading zeroes. For example, the group 0042 is converted to 42.
- Replace consecutive sections of zeroes with a double colon (::). You may only use the double colon once in an address, as multiple uses would render the address indeterminate. RFC 5952 recommends that a double colon not be used to denote an omitted single section of zeroes.

The following example demonstrates applying these rules to the address

2001:0db8:0000:0000:0000:ff00:0042:8329.

- Removing all leading zeroes results in the address 2001:db8:0:0:0:ff00:42:8329.
- Omitting consecutive sections of zeroes results in the address 2001:db8::ff00:42:8329.

See RFC 4291 for more information on IPv6 address notation.

10 Verify that the Initialize Resources check box is selected and click Next.

If the check box is not selected, the resource pool, datastore, and network connection assigned to the vApp are not added to Big Data Extensions.

If you do not add the resource pool, datastore, and network when you deploy the vApp, use the

vSphere Web Client or the Serengeti CLI Client to specify the resource pool, datastore, and network

information before you create a Hadoop cluster.

11 Enter the vCenter Single Sign-On Lookup Service URL to enable vCenter Single Sign-On.
- If you use vCenter 5.x, use the following URL: https://FQDN_or_IP_of_SSO_SERVER:7444/lookupservice/sdk
- If you use vCenter 6.0, use the following URL: https://FQDN_of_SSO_SERVER:443/lookupservice/sdk
If you do not enter the URL, vCenter Single Sign-On is disabled.

12 To disable the Big Data Extensions data collector, uncheck the Customer Experience Improvement

Program checkbox.


13 (Optional) To disable the Big Data Extensions Web plug-in from automatically registering, uncheck the

enable checkbox.

By default, the checkbox to enable automatic registration of the Big Data Extensions Web plug-in is selected. When you first log in to the Big Data Extensions Web client, it automatically connects to the Serengeti Management Server.

14 Specify a remote syslog server, such as VMware vRealize Log Insight, to which Big Data Extensions can send logging information across the network.

Retention, rotation and the splitting of logs received and managed by a syslog server are controlled by

that syslog server. Big Data Extensions cannot configure or control log management on a remote syslog

server. For more information on log management, see the documentation for the syslog server.

Regardless of the additional syslog configuration specified with this option, logs continue to be placed

in the default locations of the Big Data Extensions environment.

15 Verify the vService bindings and click Next.

16 Verify the installation information and click Finish.

vCenter Server deploys the Big Data Extensions vApp. When deployment finishes, two virtual

machines are available in the vApp.

- The Management Server virtual machine, management-server (also called the Serengeti Management Server), which is started as part of the OVA deployment.
- The Hadoop Template virtual machine, hadoop-template, which is not started. Big Data Extensions clones Hadoop nodes from this template when provisioning a cluster. Do not start or stop this virtual machine without good reason. The template does not include a Hadoop distribution.

IMPORTANT Do not delete any files under the /opt/serengeti/.chef directory. If you delete any of these

files, such as the serengeti.pem file, subsequent upgrades to Big Data Extensions might fail without

displaying error notifications.
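Step 5 of the procedure above limits the characters and length of the vApp name. The following minimal sketch checks a candidate name before you start the deployment wizard. It assumes that "alphanumeric" means ASCII letters and digits, and the function names are hypothetical.

```shell
# Return 0 if the vApp name uses only ASCII letters, digits, and underscores
# and is shorter than 60 characters. Treating "alphanumeric" as ASCII-only
# is an assumption made for this sketch.
is_valid_vapp_name() {
  local name=$1
  printf '%s\n' "$name" | LC_ALL=C grep -Eqx '[A-Za-z0-9_]+' || return 1
  [ "${#name}" -lt 60 ]
}

# Return 0 if the vApp and cluster names together are shorter than 80 characters.
names_fit_together() {
  local vapp=$1 cluster=$2
  [ $(( ${#vapp} + ${#cluster} )) -lt 80 ]
}

if is_valid_vapp_name "BDE_vApp_01" && names_fit_together "BDE_vApp_01" "hadoop_cluster01"; then
  echo "names are acceptable"
fi
```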

What to do next

Install the Big Data Extensions plug-in within the vSphere Web Client. See “Install the Big Data Extensions

Plug-In,” on page 28.

If the Initialize Resources check box is not selected, add resources to the Big Data Extensions server before

you create a Hadoop cluster.
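The Lookup Service URL entered in step 11 differs only in its port between vCenter versions. The following minimal sketch builds the URL from the two formats given in that step. The function name and its arguments are hypothetical, and sso.example.com is a placeholder host name.

```shell
# Print the Lookup Service URL for a vCenter major version and SSO server.
# The ports and path come from step 11 of the deployment procedure.
lookup_service_url() {
  local vcenter_major=$1 sso_server=$2
  case "$vcenter_major" in
    5) echo "https://${sso_server}:7444/lookupservice/sdk" ;;
    6) echo "https://${sso_server}:443/lookupservice/sdk" ;;
    *) echo "unsupported vCenter version: ${vcenter_major}" >&2; return 1 ;;
  esac
}

lookup_service_url 5 sso.example.com
lookup_service_url 6 sso.example.com
```

Note that the procedure shows a fully qualified domain name or IP address for vCenter 5.x, but only a fully qualified domain name for vCenter 6.0.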

Install RPMs in the Serengeti Management Server Yum Repository

Install the wsdl4j and mailx Red Hat Package Manager (RPM) packages within the internal Yum repository

of the Serengeti Management Server.

The wsdl4j and mailx RPM packages are not embedded within Big Data Extensions due to licensing agreements. For this reason you must install them within the internal Yum repository of the Serengeti Management Server.

Prerequisites

Deploy the Big Data Extensions vApp.

Procedure

1 Open a command shell, such as Bash or PuTTY, and log in to the Serengeti Management Server as the

user serengeti.


2 Download and install the wsdl4j and mailx RPM packages.

- If the Serengeti Management Server can connect to the Internet, run the commands as shown in the example below to download the RPMs, copy the files to the required directory, and create a repository.

    # Change to the RPM directory of the internal Yum repository.
    cd /opt/serengeti/www/yum/repos/centos/6/base/RPMS/
    # Download the mailx and wsdl4j packages into this directory.
    wget http://mirror.centos.org/centos/6/os/x86_64/Packages/mailx-12.4-7.el6.x86_64.rpm
    wget http://mirror.centos.org/centos/6/os/x86_64/Packages/wsdl4j-1.5.2-7.8.el6.noarch.rpm
    # Create the repository in the parent (base) directory.
    createrepo ..

- If the Serengeti Management Server cannot connect to the Internet, you must perform the following tasks manually.

  a Download the RPM files as shown in the example below.

    http://mirror.centos.org/centos/6/os/x86_64/Packages/mailx-12.4-7.el6.x86_64.rpm
    http://mirror.centos.org/centos/6/os/x86_64/Packages/wsdl4j-1.5.2-7.8.el6.noarch.rpm

  b Copy the RPM files to /opt/serengeti/www/yum/repos/centos/6/base/RPMS/.

  c Run the createrepo command to create a repository from the RPMs you downloaded.

    createrepo /opt/serengeti/www/yum/repos/centos/6/base/
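After either method, you may want to confirm that both packages are in place. The following is a minimal sketch of such a check. The function name is hypothetical, and the file-name patterns are assumptions based on the package names used above.

```shell
# Report whether both required RPM files are present in the given directory.
# Returns 0 when both are found, 1 when at least one is missing.
check_required_rpms() {
  local dir=$1 pkg missing=0
  for pkg in mailx wsdl4j; do
    # The glob matches any version of the package, for example mailx-12.4-7.el6.x86_64.rpm.
    if ! ls "${dir}/${pkg}"-*.rpm >/dev/null 2>&1; then
      echo "missing: ${pkg}"
      missing=1
    fi
  done
  return "$missing"
}
```

On the Serengeti Management Server, you would call the function with the directory from the procedure: check_required_rpms /opt/serengeti/www/yum/repos/centos/6/base/RPMS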

Install the Big Data Extensions Plug-In

To enable the Big Data Extensions user interface for use with a vCenter Server Web Client, register the plug-in with the vSphere Web Client. The Big Data Extensions graphical user interface is supported only when you use vSphere Web Client 5.1 and later.

The Big Data Extensions plug-in provides a GUI that integrates with the vSphere Web Client. Using the

Big Data Extensions plug-in interface you can perform common Hadoop infrastructure and cluster

management tasks.

NOTE Use only the Big Data Extensions plug-in interface in the vSphere Web Client or the Serengeti CLI

Client to monitor and manage your Big Data Extensions environment. Performing management operations

in vCenter Server might cause the Big Data Extensions management tools to become unsynchronized and

unable to accurately report the operational status of your Big Data Extensions environment.

Prerequisites

- Deploy the Big Data Extensions vApp. See “Deploy the Big Data Extensions vApp in the vSphere Web Client,” on page 24.
- By default, the Big Data Extensions Web plug-in automatically installs and registers when you deploy the Big Data Extensions vApp. To install the Big Data Extensions Web plug-in after deploying the Big Data Extensions vApp, you must have opted not to enable automatic registration of the Web plug-in during deployment. See “Deploy the Big Data Extensions vApp in the vSphere Web Client,” on page 24.
- Ensure that you have login credentials with administrator privileges for the vCenter Server system with which you are registering Big Data Extensions.
  NOTE The user name and password you use to log in cannot contain characters whose UTF-8 encoding is greater than 0x8000.
- If you want to use the vCenter Server IP address to access the vSphere Web Client, and your browser uses a proxy, add the vCenter Server IP address to the list of proxy exceptions.


Procedure

1 Open a Web browser and go to the URL of vSphere Web Client 5.1 or later.

https://hostname-or-ip-address:port/vsphere-client

The hostname-or-ip-address can be either the DNS hostname or IP address of vCenter Server. By default

the port is 9443, but this might have changed during installation of the vSphere Web Client.

2 Enter a user name and password that has administrative privileges on vCenter Server, and click Login.

3 Using the vSphere Web Client Navigator pane, locate the ZIP file on the Serengeti Management Server

that contains the Big Data Extensions plug-in to register to the vCenter Server.

You can find the Serengeti Management Server under the datacenter and resource pool to which you

deployed it.

4 From the inventory tree, select management-server to display information about the

Serengeti Management Server in the center pane.

Click the Summary tab in the center pane to access additional information.

5 Note the IP address of the Serengeti Management Server virtual machine.

6 Open a Web browser and go to the URL of the management-server virtual machine.

https://management-server-ip-address:8443/register-plugin

The management-server-ip-address is the IP address you noted in Step 5.

7 Enter the information to register the plug-in.

Option                                  Action
Register or Unregister                  Click Install to install the plug-in. Select Uninstall to uninstall the plug-in.
vCenter Server host name or IP address  Enter the server host name or IP address of vCenter Server. Do not include http:// or https:// when you enter the host name or IP address.
User Name and Password                  Enter the user name and password with administrative privileges that you use to connect to vCenter Server. The user name and password cannot contain characters whose UTF-8 encoding is greater than 0x8000.
Big Data Extensions Package URL         Enter the URL with the IP address of the management-server virtual machine where the Big Data Extensions plug-in package is located: https://management-server-ip-address/vcplugin/serengetiplugin.zip

8 Click Submit.

The Big Data Extensions plug-in registers with vCenter Server and with the vSphere Web Client.

9 Log out of the vSphere Web Client, and log back in using your vCenter Server user name and

password.

The Big Data Extensions icon appears in the list of objects in the inventory.

10 Click Big Data Extensions in the Inventory pane.

What to do next

Connect the Big Data Extensions plug-in to the Big Data Extensions instance that you want to manage by

connecting to the corresponding Serengeti Management Server. See “Connect to a Serengeti Management

Server,” on page 30.
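The two URLs used in the registration procedure above both derive from the Serengeti Management Server IP address noted in step 5. The following minimal sketch prints them for a given address. The function names are hypothetical, and 192.0.2.10 is a placeholder address.

```shell
# Print the URL of the plug-in registration page (step 6).
register_plugin_url() {
  echo "https://$1:8443/register-plugin"
}

# Print the plug-in package URL to enter on the registration page (step 7).
plugin_package_url() {
  echo "https://$1/vcplugin/serengetiplugin.zip"
}

register_plugin_url 192.0.2.10
plugin_package_url 192.0.2.10
```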


Configure vCenter Single Sign-On Settings for the Serengeti Management Server

If the Big Data Extensions Single Sign-On (SSO) authentication settings are not configured or if they change

after you install the Big Data Extensions plug-in, you can use the Serengeti Management Server

Administration Portal to enable SSO, update the certificate, and register the plug-in so that you can connect

to the Serengeti Management Server and continue managing clusters.

The SSL certificate for the Big Data Extensions plug-in can change for many reasons. For example, you

install a custom certificate or replace an expired certificate.

Prerequisites

- Ensure that you know the IP address of the Serengeti Management Server to which you want to connect.
- Ensure that you have login credentials for the Serengeti Management Server root user.

Procedure

1 Open a Web browser and go to the URL of the Serengeti Management Server Administration Portal.

https://management-server-ip-address:5480

2 Type root for the user name, type the password, and click Login.

3 Select the SSO tab.

4 Do one of the following.

Option                         Description
Update the certificate         Click Update Certificate.
Enable SSO for the first time  Type the Lookup Service URL, and click Enable SSO.

The Big Data Extensions and vCenter SSO server certificates are synchronized.

What to do next

Reregister the Big Data Extensions plug-in with the Serengeti Management Server. See “Connect to a Serengeti Management Server,” on page 30.

Connect to a Serengeti Management Server

To use the Big Data Extensions plug-in to manage and monitor big data clusters and Hadoop distributions,

you must connect the Big Data Extensions plug-in to the Serengeti Management Server in your

Big Data Extensions deployment.

You can deploy multiple instances of the Serengeti Management Server in your environment. However, you

can connect the Big Data Extensions plug-in with only one Serengeti Management Server instance at a time.

You can change which Serengeti Management Server instance the plug-in connects to, and use the

Big Data Extensions plug-in interface to manage and monitor multiple Hadoop and HBase distributions

deployed in your environment.

IMPORTANT The Serengeti Management Server that you connect to is shared by all users of the

Big Data Extensions plug-in interface in the vSphere Web Client. If a user connects to a different

Serengeti Management Server, all other users are affected by this change.


Prerequisites

- Verify that the Big Data Extensions vApp deployment was successful and that the Serengeti Management Server virtual machine is running.
- Verify that the Serengeti Management Server and the Big Data Extensions plug-in are the same version.
- Ensure that vCenter Single Sign-On is enabled and configured for use by Big Data Extensions for vSphere 5.1 and later.
- Install the Big Data Extensions plug-in.

Procedure

1 Use the vSphere Web Client to log in to vCenter Server.

2 Select Big Data Extensions.

3 Click the Summary tab.

4 In the Connected Server pane, click the Connect Server link.

5 Navigate to the Serengeti Management Server virtual machine in the Big Data Extensions vApp to

which to connect, select it, and click OK.

The Big Data Extensions plug-in communicates using SSL with the Serengeti Management Server.

When you connect to a Serengeti server instance, the plug-in verifies that the SSL certificate in use by

the server is installed, valid, and trusted.

The Serengeti server instance appears as the connected server on the Summary tab of the

Big Data Extensions Home page.

What to do next

You can add resource pool, datastore, and network resources to your Big Data Extensions deployment, and

create big data clusters that you can provision for use.

Install the Serengeti Remote Command-Line Interface Client

Although the Big Data Extensions Plug-in for vSphere Web Client supports basic resource and cluster management tasks, you can perform more management tasks by using the Serengeti CLI Client.

Prerequisites

- Verify that the Big Data Extensions vApp deployment was successful and that the Management Server is running.
- Verify that you have the correct user name and password to log into the Serengeti CLI Client. If you are deploying on vSphere 5.1 or later, the Serengeti CLI Client uses your vCenter Single Sign-On credentials.
- Verify that the Java Runtime Environment (JRE) is installed in your environment, and that its location is in your PATH environment variable.

Procedure

1 Use the vSphere Web Client to log in to vCenter Server.

2 Select Big Data Extensions.

3 Click the Getting Started tab, and click the Download Serengeti CLI Console link.

A ZIP file containing the Serengeti CLI Client downloads to your computer.


4 Unzip and examine the download, which includes the following components in the cli directory.

- The serengeti-cli-version JAR file, which includes the Serengeti CLI Client.
- The samples directory, which includes sample cluster configurations.
- Libraries in the lib directory.

5 Open a command shell, and navigate to the directory where you unzipped the Serengeti CLI Client

download package.

6 Change to the cli directory, and run the following command to open the Serengeti CLI Client:

java -jar serengeti-cli-version.jar

What to do next

To learn more about using the Serengeti CLI Client, see the VMware vSphere Big Data Extensions Command-Line Interface Guide.
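The JRE prerequisite for the Serengeti CLI Client can be confirmed from the command shell before you run the JAR file. The following is a minimal sketch. The helper function name is hypothetical.

```shell
# Return 0 if the named command can be found on the current PATH.
have_command() {
  command -v "$1" >/dev/null 2>&1
}

if have_command java; then
  # Print the version of the Java runtime that the shell will use.
  java -version
else
  echo "java not found on PATH; install a JRE or update PATH" >&2
fi
```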

Access the Serengeti CLI By Using the Remote CLI Client

You can access the Serengeti Command-Line Interface (CLI) to perform Serengeti administrative tasks with

the Serengeti Remote CLI Client.

Prerequisites

- Use the VMware vSphere Web Client to log in to the VMware vCenter Server® on which you deployed the Serengeti vApp.
- Verify that the Serengeti vApp deployment was successful and that the Management Server is running.
- Verify that you have the correct password to log in to Serengeti CLI. See the VMware vSphere Big Data Extensions Administrator's and User's Guide.
  The Serengeti CLI uses its vCenter Server credentials.
- Verify that the Java Runtime Environment (JRE) is installed in your environment and that its location is in your PATH environment variable.

Procedure

1 Download the Serengeti CLI package from the Serengeti Management Server.

Open a Web browser and navigate to the following URL: https://server_ip_address/cli/VMware-Serengeti-CLI.zip

2 Download the ZIP file.

The filename is in the format VMware-Serengeti-cli-version_number-build_number.ZIP.

3 Unzip the download.

The download includes the following components.

- The serengeti-cli-version_number JAR file, which includes the Serengeti Remote CLI Client.
- The samples directory, which includes sample cluster configurations.
- Libraries in the lib directory.

4 Open a command shell, and change to the directory where you unzipped the package.


5 Change to the cli directory, and run the following command to enter the Serengeti CLI.

- For any language other than French or German, run the following command.

    java -jar serengeti-cli-version_number.jar

- For French or German languages, which use code page 850 (CP 850) language encoding when running the Serengeti CLI from a Windows command console, run the following command.

    java -Dfile.encoding=cp850 -jar serengeti-cli-version_number.jar

6 Connect to the Serengeti service.

You must run the connect host command every time you begin a CLI session, and again after the 30

minute session timeout. If you do not run this command, you cannot run any other commands.

a Run the connect command.

connect --host xx.xx.xx.xx:8443

b At the prompt, type your user name, which might be different from your login credentials for the

Serengeti Management Server.

NOTE If you do not create a user name and password for the

Serengeti Command-Line Interface Client, you can use the default vCenter Server administrator

credentials. The Serengeti Command-Line Interface Client uses the vCenter Server login credentials

with read permissions on the Serengeti Management Server.

c At the prompt, type your password.

A command shell opens, and the Serengeti CLI prompt appears. You can use the help command to get help

with Serengeti commands and command syntax.

• To display a list of available commands, type help.

• To get help for a specific command, append the name of the command to the help command.

help cluster create

• Press Tab to complete a command.
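
The download and launch steps in this procedure can be scripted. The following Python sketch is illustrative only: the helper names cli_download_url and cli_launch_command are hypothetical and are not part of Big Data Extensions. It builds the download URL and the java command described above, and it does not download or run anything.

```python
# Hypothetical helpers for illustration; not part of Big Data Extensions.

# Languages for which the procedure calls for code page 850 (CP 850)
# when the CLI runs from a Windows command console.
CP850_LANGUAGES = {"french", "german"}


def cli_download_url(server_ip_address):
    """Return the CLI package URL on the Serengeti Management Server."""
    return "https://{}/cli/VMware-Serengeti-CLI.zip".format(server_ip_address)


def cli_launch_command(version_number, language="english"):
    """Return the java command, as an argument list, that starts the CLI."""
    command = ["java"]
    if language.lower() in CP850_LANGUAGES:
        # Assumes a Windows command console, as stated in the procedure.
        command.append("-Dfile.encoding=cp850")
    command += ["-jar", "serengeti-cli-{}.jar".format(version_number)]
    return command
```

You might pass the returned list to a process launcher of your choice after you change to the cli directory. The version number and IP address that you supply are placeholders for the values in your environment.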

3 Upgrading Big Data Extensions

You can use VMware vSphere® Update Manager™ to upgrade Big Data Extensions from earlier versions.

This chapter includes the following topics:

• “Prepare to Upgrade Big Data Extensions,” on page 35

• “Upgrade Big Data Extensions Virtual Appliance,” on page 37

• “Upgrade the Big Data Extensions Plug-in,” on page 40

• “Upgrade the Serengeti CLI,” on page 41

• “Upgrade Big Data Extensions Virtual Machine Components by Using the Serengeti Command-Line Interface,” on page 41

• “Add a Remote Syslog Server,” on page 42

Prepare to Upgrade Big Data Extensions

As a prerequisite to upgrading Big Data Extensions, you must prepare your system to ensure that you have

all necessary software installed and configured properly, and that all components are in the correct state.

Data from nonworking Big Data Extensions deployments is not migrated during the upgrade process. If

Big Data Extensions is not working and you cannot recover according to the troubleshooting procedures, do

not try to perform the upgrade. Instead, uninstall the previous Big Data Extensions components and install

the new version.

IMPORTANT Do not delete any files in the /opt/serengeti/.chef directory. If you delete any of these files,

such as the serengeti.pem file, subsequent upgrades to Big Data Extensions might fail without displaying

error notifications.

Prerequisites

• Install vSphere Update Manager. For more information, see the vSphere Update Manager documentation.

• Verify that your previous Big Data Extensions deployment is working normally.

• Verify that you can create a default Hadoop cluster.

Procedure

1 Install vSphere Update Manager on a Windows Server.

• Use the same version of vSphere Update Manager as vCenter Server. For example, if you are using vCenter Server 5.5, use vSphere Update Manager 5.5.

• vSphere Update Manager requires network connectivity with vCenter Server. Each vSphere Update Manager instance must be registered with a single vCenter Server instance.

2 Log in to vCenter Server with the vSphere Web Client.

3 Power on the Hadoop Template virtual machine.

4 If the Serengeti Management Server is configured to use a static IP network, make sure that the Hadoop

Template virtual machine receives a valid IP address.

You must have a valid IP address and be connected to the network for the Hadoop Template virtual

machine to connect to vSphere Update Manager.

5 Open a command shell and log in to the Serengeti Management Server as the user serengeti, and run

the following series of commands.

/usr/sbin/vmware-rpctool 'info-set guestinfo.vmware.vami.version 2010100'

/usr/sbin/vmware-rpctool 'info-set guestinfo.vmware.vami.features SUP'

/usr/sbin/vmware-rpctool 'info-set guestinfo.vmware.vami.https-port 5489'

/usr/sbin/vmware-rpctool 'info-set guestinfo.vmware.vami.http-port 5488'

/usr/sbin/vmware-rpctool 'info-set guestinfo.vmware.vami.httpsclientkeyfile /opt/vmware/etc/sfcb/file.pem'

/usr/sbin/vmware-rpctool 'info-set guestinfo.vmware.vami.httpsclienttruststorefile /opt/vmware/etc/sfcb/client.pem'

6 For each cluster that is in AUTO scaling mode, change the scaling mode to MANUAL.

a Open a command shell and log in to the Serengeti Management Server as the user serengeti.

b Set the scaling mode of the cluster to MANUAL and the --targetComputeNodeNum parameter value

to the number of provisioned compute nodes in the cluster.

cluster setParam --name cluster-name --elasticityMode manual --targetComputeNodeNum num-provisioned-compute-nodes

7 Verify that all Hadoop clusters are in one of the following states:

• RUNNING

• STOPPED

• CONFIGURE_ERROR

If the status of the cluster is PROVISIONING, wait for the process to finish and for the state of the

cluster to change to RUNNING.

8 Make sure that the host name of the Serengeti Management Server matches its fully qualified domain

name (FQDN).

9 Access the Admin view of vSphere Update Manager.

a Start vSphere Update Manager.

b On the Home page of the vSphere Web Client, select Hosts and Clusters.

c Click Update Manager.

d Open the Admin view.

Perform the upgrade tasks in the Admin view.
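
Some of the checks in this procedure lend themselves to a small script. The following Python sketch is illustrative only: the function names are hypothetical, and how you collect cluster names and states (for example, from Serengeti CLI output) is left to you. It covers the scaling mode command, the cluster state check, and the host name comparison described in the steps above.

```python
import socket

# Cluster states in which the procedure allows the upgrade to proceed.
UPGRADE_READY_STATES = {"RUNNING", "STOPPED", "CONFIGURE_ERROR"}


def clusters_not_ready(cluster_states):
    """Return the names of clusters whose state blocks the upgrade.

    cluster_states maps a cluster name to its state string.
    """
    return sorted(name for name, state in cluster_states.items()
                  if state.upper() not in UPGRADE_READY_STATES)


def manual_scaling_command(cluster_name, provisioned_compute_nodes):
    """Build the setParam command for one cluster in AUTO scaling mode."""
    return ("cluster setParam --name {} --elasticityMode manual "
            "--targetComputeNodeNum {}").format(cluster_name,
                                                provisioned_compute_nodes)


def hostname_matches_fqdn(hostname=None, fqdn=None):
    """Compare the host name with the FQDN, ignoring case.

    When an argument is omitted, the value reported by the local
    machine is used, so run this on the Serengeti Management Server.
    """
    hostname = hostname if hostname is not None else socket.gethostname()
    fqdn = fqdn if fqdn is not None else socket.getfqdn()
    return hostname.lower() == fqdn.lower()
```

For example, clusters_not_ready returns the clusters that are still in a state such as PROVISIONING, which you must wait for before you continue.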

Upgrade Big Data Extensions Virtual Appliance

You must perform several tasks to complete the upgrade of the Big Data Extensions virtual appliance.

Because the versions of the virtual appliance, the Serengeti CLI, and the Big Data Extensions plug-in must

all be the same, it is important that you upgrade all components to the new version.

Prerequisites

Complete the preparation steps for upgrading Big Data Extensions.

Procedure

1 Configure Proxy Settings on page 37

You must have access to the Internet to upgrade your Big Data Extensions virtual appliance. If your

site uses a proxy server to access the Internet, you must configure vSphere Update Manager to use the

proxy server.

2 Download the Upgrade Source and Accept the License Agreement on page 38

To start the Big Data Extensions upgrade process, you download the upgrade source and accept the

license agreement (EULA).

3 Create an Upgrade Baseline on page 38

When you upgrade Big Data Extensions virtual appliances, you must create a custom virtual appliance

upgrade baseline.

4 Specify Upgrade Compliance Settings on page 39

Upgrade compliance settings ensure that the upgrade baseline does not conflict with the current state

of your Big Data Extensions virtual appliance.

5 Configure the Upgrade Remediation Task and Run the Upgrade Process on page 39

The upgrade remediation task is the process by which vSphere Update Manager applies patches,

extensions, and upgrades to the Big Data Extensions virtual appliance. You configure and run the

remediation task to finish the Big Data Extensions virtual appliance upgrade process.

6 Replace the Hadoop Template Virtual Machine Under Big Data Extensions vApp on page 40

To complete the upgrade process, you must manually replace the virtual machine template after you

upgrade the Big Data Extensions vApp.
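
Because the virtual appliance, the Serengeti CLI, and the plug-in must all be at the same version, you might want to record the version of each component as you upgrade. The following Python sketch is illustrative only: the function names and the component labels are hypothetical, and you supply the version strings yourself.

```python
def versions_match(component_versions):
    """Return True when every component reports the same version string."""
    return len(set(component_versions.values())) == 1


def outdated_components(component_versions, target_version):
    """Return the components that are not yet at target_version."""
    return sorted(name for name, version in component_versions.items()
                  if version != target_version)
```

For example, if the plug-in is still at an earlier version than the virtual appliance and the CLI, versions_match returns False and outdated_components lists the plug-in.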

Configure Proxy Settings

You must have access to the Internet to upgrade your Big Data Extensions virtual appliance. If your site uses

a proxy server to access the Internet, you must configure vSphere Update Manager to use the proxy server.

If you do not use a proxy server, continue to “Download the Upgrade Source and Accept the License

Agreement,” on page 38.

Prerequisites

Verify that you have obtained the values for the proxy server URL and port from your network

administrator.

Procedure

1 In the Admin view of vSphere Update Manager, click Configuration and then select Download

Settings.

2 In the Proxy Settings section, click Use proxy.

3 Enter the values for the proxy URL and port.

4 Click Test Connection to ensure that the settings are correct.

5 If the settings are correct, click Apply.

vSphere Update Manager can now access the Web using the proxy server for your site.
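
Before you enter the proxy values, you might want to check that they are well formed. The following Python sketch is illustrative only: the function name proxy_endpoint is hypothetical, and the check is independent of vSphere Update Manager. It validates the port range and extracts the host name from the proxy URL. It does not test connectivity.

```python
from urllib.parse import urlsplit


def proxy_endpoint(proxy_url, port):
    """Return "host:port" for a proxy URL and port, or raise ValueError."""
    port = int(port)
    if not 1 <= port <= 65535:
        raise ValueError("port must be between 1 and 65535")
    # Add a scheme when it is missing so that urlsplit can find the host.
    if "://" not in proxy_url:
        proxy_url = "http://" + proxy_url
    host = urlsplit(proxy_url).hostname
    if not host:
        raise ValueError("proxy URL does not contain a host name")
    return "{}:{}".format(host, port)
```

Use Test Connection in the Admin view to confirm that the proxy server actually accepts connections.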

Download the Upgrade Source and Accept the License Agreement