
Intel® Xeon® Processor E5-2600 Product Family Uncore Performance Monitoring Guide

March 2012

Reference Number: 327043-001


INFORMATION IN THIS DOCUMENT IS PROVIDED IN CONNECTION WITH INTEL® PRODUCTS. NO LICENSE, EXPRESS OR IMPLIED, BY ESTOPPEL OR OTHERWISE, TO ANY INTELLECTUAL PROPERTY RIGHTS IS GRANTED BY THIS DOCUMENT. EXCEPT AS PROVIDED IN INTEL'S TERMS AND CONDITIONS OF SALE FOR SUCH PRODUCTS, INTEL ASSUMES NO LIABILITY WHATSOEVER, AND INTEL DISCLAIMS ANY EXPRESS OR IMPLIED WARRANTY, RELATING TO SALE AND/OR USE OF INTEL PRODUCTS INCLUDING LIABILITY OR WARRANTIES RELATING TO FITNESS FOR A PARTICULAR PURPOSE, MERCHANTABILITY, OR INFRINGEMENT OF ANY PATENT, COPYRIGHT OR OTHER INTELLECTUAL PROPERTY RIGHT. Intel products are not intended for use in medical, life saving, life sustaining applications.

A "Mission Critical Application" is any application in which failure of the Intel Product could result, directly or indirectly, in personal injury or death. SHOULD YOU PURCHASE OR USE INTEL'S PRODUCTS FOR ANY SUCH MISSION CRITICAL APPLICATION, YOU SHALL INDEMNIFY AND HOLD INTEL AND ITS SUBSIDIARIES, SUBCONTRACTORS AND AFFILIATES, AND THE DIRECTORS, OFFICERS, AND EMPLOYEES OF EACH, HARMLESS AGAINST ALL CLAIMS COSTS, DAMAGES, AND EXPENSES AND REASONABLE ATTORNEYS' FEES ARISING OUT OF, DIRECTLY OR INDIRECTLY, ANY CLAIM OF PRODUCT LIABILITY, PERSONAL INJURY, OR DEATH ARISING IN ANY WAY OUT OF SUCH MISSION CRITICAL APPLICATION, WHETHER OR NOT INTEL OR ITS SUBCONTRACTOR WAS NEGLIGENT IN THE DESIGN, MANUFACTURE, OR WARNING OF THE INTEL PRODUCT OR ANY OF ITS PARTS.

Intel may make changes to specifications and product descriptions at any time, without notice.

Designers must not rely on the absence or characteristics of any features or instructions marked “reserved” or “undefined.” Intel

reserves these for future definition and shall have no responsibility whatsoever for conflicts or incompatibilities arising from future

changes to them.

Code names represented in this document are only for use by Intel to identify a product, technology, or service in development,

that has not been made commercially available to the public, i.e., announced, launched or shipped. It is not a “commercial” name

for products or services and is not intended to function as a trademark.

The Intel® 64 architecture processors may contain design defects or errors known as errata, which may cause the product to deviate from published specifications. Current characterized errata are available on request.

Hyper-Threading Technology requires a computer system with an Intel® processor supporting Hyper-Threading Technology and an HT Technology enabled chipset, BIOS and operating system. Performance will vary depending on the specific hardware and software you use. For more information, see http://www.intel.com/technology/hyperthread/index.htm; including details on which processors support HT Technology.

Intel® Virtualization Technology requires a computer system with an enabled Intel® processor, BIOS, virtual machine monitor (VMM) and for some uses, certain platform software enabled for it. Functionality, performance or other benefits will vary depending on hardware and software configurations. Intel® Virtualization Technology-enabled BIOS and VMM applications are currently in development.

64-bit computing on Intel architecture requires a computer system with a processor, chipset, BIOS, operating system, device drivers and applications enabled for Intel® 64 architecture. Processors will not operate (including 32-bit operation) without an Intel® 64 architecture-enabled BIOS. Performance will vary depending on your hardware and software configurations. Consult with your system vendor for more information.

Contact your local Intel sales office or your distributor to obtain the latest specifications and before placing your product order.

Copies of documents which have an order number and are referenced in this document, or other Intel literature, may be obtained

by calling 1-800-548-4725, or by visiting Intel's Web Site.

Intel, Pentium, Intel Xeon, Intel NetBurst, Intel Core Solo, Intel Core Duo, Intel Core 2 Duo, Intel Core 2 Extreme, Intel Pentium D, Itanium, Intel SpeedStep, MMX, and VTune are trademarks or registered trademarks of Intel Corporation or its subsidiaries in the United States and other countries.

Copyright © 2012 Intel Corporation. All rights reserved.

*Other names and brands may be claimed as the property of others.


Contents

1 Introduction
1.1 Introduction
1.2 Uncore PMON Overview
1.3 Section References
1.4 Uncore PMON - Typical Control/Counter Logic
1.5 Uncore PMU Summary Tables
1.6 On Parsing and Using Derived Events
1.6.1 On Common Terms found in Derived Events
2 Intel® Xeon® Processor E5-2600 Product Family Uncore Performance Monitoring
2.1 Uncore Per-Socket Performance Monitoring Control
2.1.1 Setting up a Monitoring Session
2.1.2 Reading the Sample Interval
2.2 UBox Performance Monitoring
2.2.1 Overview of the UBox
2.2.2 UBox Performance Monitoring Overview
2.2.3 UBox Performance Monitors
2.2.3.1 UBox Box Level PMON State
2.2.3.2 UBox PMON state - Counter/Control Pairs
2.2.4 UBox Performance Monitoring Events
2.2.5 UBOX Box Events Ordered By Code
2.2.6 UBOX Box Performance Monitor Event List
2.3 Caching Agent (Cbo) Performance Monitoring
2.3.1 Overview of the CBo
2.3.2 CBo Performance Monitoring Overview
2.3.2.1 Special Note on CBo Occupancy Events
2.3.3 CBo Performance Monitors
2.3.3.1 CBo Box Level PMON State
2.3.3.2 CBo PMON state - Counter/Control Pairs
2.3.3.3 CBo Filter Register (Cn_MSR_PMON_BOX_FILTER)
2.3.4 CBo Performance Monitoring Events
2.3.4.1 An Overview:
2.3.4.2 Acronyms frequently used in CBo Events:
2.3.4.3 The Queues:
2.3.5 CBo Events Ordered By Code
2.3.6 CBO Box Common Metrics (Derived Events)
2.3.7 CBo Performance Monitor Event List
2.4 Home Agent (HA) Performance Monitoring
2.4.1 Overview of the Home Agent
2.4.2 HA Performance Monitoring Overview
2.4.3 HA Performance Monitors
2.4.3.1 HA Box Level PMON State
2.4.3.2 HA PMON state - Counter/Control Pairs
2.4.4 HA Performance Monitoring Events
2.4.4.1 On the Major HA Structures:
2.4.5 HA Box Events Ordered By Code
2.4.6 HA Box Common Metrics (Derived Events)
2.4.7 HA Box Performance Monitor Event List
2.5 Memory Controller (iMC) Performance Monitoring
2.5.1 Overview of the iMC
2.5.2 Functional Overview
2.5.3 iMC Performance Monitoring Overview
2.5.4 iMC Performance Monitors
2.5.4.1 MC Box Level PMON State
2.5.4.2 MC PMON state - Counter/Control Pairs
2.5.5 iMC Performance Monitoring Events
2.5.5.1 An Overview:
2.5.6 iMC Box Events Ordered By Code
2.5.7 iMC Box Common Metrics (Derived Events)
2.5.8 iMC Box Performance Monitor Event List
2.6 Power Control (PCU) Performance Monitoring
2.6.1 Overview of the PCU
2.6.2 PCU Performance Monitoring Overview
2.6.3 PCU Performance Monitors
2.6.3.1 PCU Box Level PMON State
2.6.3.2 PCU PMON state - Counter/Control Pairs
2.6.3.3 Intel® PCU Extra Registers - Companions to PMON HW
2.6.4 PCU Performance Monitoring Events
2.6.4.1 An Overview:
2.6.5 PCU Box Events Ordered By Code
2.6.6 PCU Box Common Metrics (Derived Events)
2.6.7 PCU Box Performance Monitor Event List
2.7 Intel® QPI Link Layer Performance Monitoring
2.7.1 Overview of the Intel® QPI Box
2.7.2 Intel® QPI Performance Monitoring Overview
2.7.3 Intel® QPI Performance Monitors
2.7.3.1 Intel® QPI Box Level PMON State
2.7.3.2 Intel® QPI PMON state - Counter/Control Pairs
2.7.3.3 Intel® QPI Registers for Packet Mask/Match Facility
2.7.3.4 Intel® QPI Extra Registers - Companions to PMON HW
2.7.4 Intel® QPI LL Performance Monitoring Events
2.7.4.1 An Overview
2.7.4.2 Acronyms frequently used in Intel® QPI Events:
2.7.5 Intel® QPI LL Box Events Ordered By Code
2.7.6 Intel QPI LL Box Common Metrics (Derived Events)
2.7.7 Intel® QPI LL Box Performance Monitor Event List
2.8 R2PCIe Performance Monitoring
2.8.1 Overview of the R2PCIe Box
2.8.2 R2PCIe Performance Monitoring Overview
2.8.3 R2PCIe Performance Monitors
2.8.3.1 R2PCIe Box Level PMON State
2.8.3.2 R2PCIe PMON state - Counter/Control Pairs
2.8.4 R2PCIe Performance Monitoring Events
2.8.4.1 An Overview
2.8.5 R2PCIe Box Events Ordered By Code
2.8.6 R2PCIe Box Common Metrics (Derived Events)
2.8.7 R2PCIe Box Performance Monitor Event List
2.9 R3QPI Performance Monitoring
2.9.1 Overview of the R3QPI Box
2.9.2 R3QPI Performance Monitoring Overview
2.9.3 R3QPI Performance Monitors
2.9.3.1 R3QPI Box Level PMON State
2.9.3.2 R3QPI PMON state - Counter/Control Pairs
2.9.4 R3QPI Performance Monitoring Events
2.9.4.1 An Overview
2.9.5 R3QPI Box Events Ordered By Code
2.9.6 R3QPI Box Common Metrics (Derived Events)
2.9.7 R3QPI Box Performance Monitor Event List
2.10 Packet Matching Reference


Figures

1-1 Uncore Sub-system Block Diagram of Intel Xeon Processor E5-2600 Family ................9

1-2 Perfmon Control/Counter Block Diagram...............................................................11

Tables

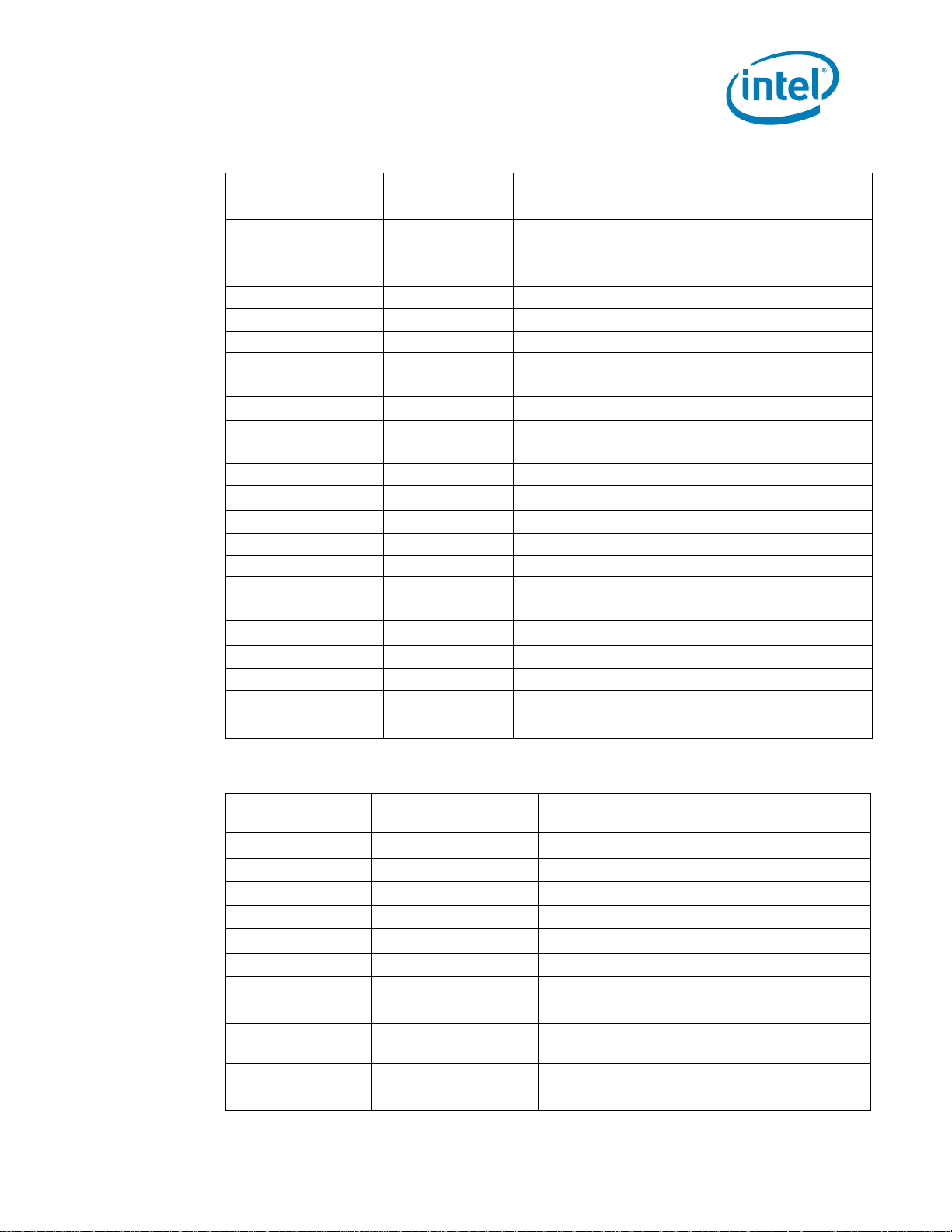

1-1 Per-Box Performance Monitoring Capabilities.........................................................10

1-2 MSR Space Uncore Performance Monitoring Registers.............................................12

1-3 PCICFG Space Uncore Performance Monitoring Registers ........................................13

2-1 UBox Performance Monitoring MSRs........................... .. .. ............................ ..........19

2-2 U_MSR_PMON_CTL{1- 0} Re g i s t er – F iel d Definitions .............................................20

2-3 U_MSR_PMON_CTR{1-0} Register – Field Definitions.............................................20

2-4 U_MSR_PMON_FIXED_CTL Register – Field Definitions ...........................................21

2-5 U_MSR_PMON_FIXED_CTR Register – Field Definitions...........................................21

2-8 CBo Performance Monitoring MSRs ......................................................................24

2-9 Cn_MSR_PMON_BOX_CTL Register – Field Definitions ............................................27

2-10 Cn_MSR_PMON_CTL{3-0} Register – Field Definitions............................................27

2-11 Cn_MSR_PMON_CTR{3-0} Register – Field Definitions ...........................................28

2-12 Cn_MSR_PMON_BOX_FILTER Register – Field Definitions........................................29

2-13 Opcode Match by IDI Packet Type for Cn_MSR_PMON_BOX_FILTER. opc ...................29

2-33 HA Performance Monitoring MSRs....................................... .. .. ........................... ..46

2-34 HA_PCI_PMON_BOX_CTL Register – Field Defin i tions ......................................... .. ..47

2-35 HA_PCI_PMON_CTL{3-0} Register – Field Definitions.............................................47

2-36 HA_PCI_PMON_CTR{3-0} Register – Field Definitions ............................................48

2-37 HA_PCI_PMON_BOX_OPCODEMATCH Register – Field Definitions............................48

2-38 HA_PCI_PMON_BOX_ADDRMATCH1 Register – Field Definitions...............................48

2-39 HA_PCI_PMON_BOX_ADDRMATCH0 Register – Field Definitions...............................49

2-59 iMC Performance Monitoring MSRs................................... ............................ .. .. ....60

2-60 MC_CHy_PCI_PMON_BOX_CTL Register – Field Definitions......................................60

2-61 MC_CHy_PCI_PMON_CTL{3-0} Register – Field Definitions .....................................61

2-62 MC_CHy_PCI_PMON_FIXED_CTL Register – Field Definitions ................................... 62

2-63 MC_CHy_PCI_PMON_CTR{FIXED,3-0} Register – Field Definitions ...........................62

2-73 PCU Performance Monitoring MSRs ......................................................................72

2-74 PCU_MSR_PMON_BOX_CTL Register – Field Definitions ..........................................73

2-75 PCU_MSR_PMON_CTL{3-0} Register – Field Definitions..........................................74

2-76 PCU_MSR_PMON_CTR{3-0} Register – Field Definitions .........................................75

2-77 PCU_MSR_PMON_BOX_FILTER Reg i s ter – F i el d De finitions......................................76

2-78 PCU_MSR_CORE_C6_CTR Register – Field Definitions.............................................76

2-79 PCU_MSR_CORE_C3_CTR Register – Field Definitions.............................................76

2-80 PCU Configuration Examples...............................................................................77

2-84 Intel® QPI Performance Monitoring Registers........................................................88

2-85 Q_Py_PCI_PMON_BOX_CTL Register – Field Definitions ..........................................89

2-86 Q_Py_PCI_PMON_CTL{3-0} Register – Field Definitions..........................................89

2-87 Q_Py_PCI_PMON_CTR{3-0} Register – Field Definitions .........................................90

2-88 Q_Py_PCI_PMON_PKT_MATCH1 Registers.............................................................91

2-89 Q_Py_PCI_PMON_PKT_MATCH0 Registers.............................................................91

2-90 Q_Py_PCI_PMON_PKT_MASK1 Registers...............................................................92

2-91 Q_Py_PCI_PMON_PKT_MASK0 Registers...............................................................92

2-92 Message Events Derived from the Match/Mask filters..............................................93

Reference Number: 327043-001 5

Page 6

2-93 QPI_RATE_STATUS Register – Field Definitions ......................................................94

2-104 R2PCIe Performance Monitoring Registers ..... ......................................................112

2-105 R2_PCI_PMON_BOX_CTL Register – Field Definitions ............................................112

2-106 R2_PCI_PMON_CTL{3-0} Register – Field Definitions............................................113

2-107 R2_PCI_PMON_CTR{3-0} Register – Field Definitions ...........................................113

2-118 R3QPI Performance Monitoring Registers ............................................................120

2-119 R3_Ly_PCI_PMON_BOX_CTL Register – Field Definitions .......................................120

2-120 R3_Ly_PCI_PMON_CTL{2-0} Register – Field Definitions.......................................121

2-121 R3_Ly_PCI_PMON_CTR{2-0} Register – Field Definitions ......................................121

2-142 Intel® QuickPath Interconnect Packet Message Classes ........................................131

2-143 Opcode Match by Message Class........................................................................131

2-144 Opcodes (Alphabetical Listing)...........................................................................132


Revision History

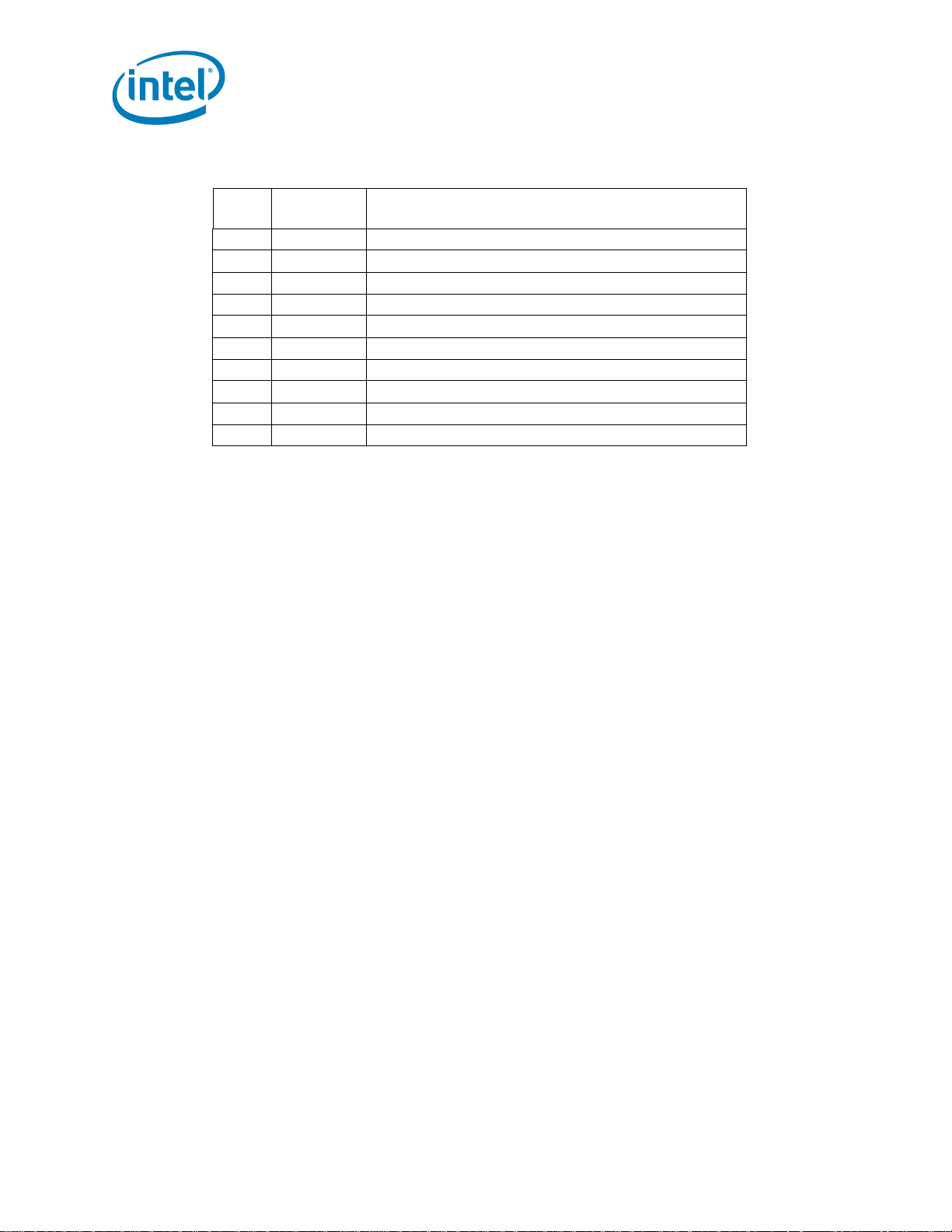

| Revision | Description | Date |
|---|---|---|
| 327043-001 | Initial release. | March 2012 |


Introduction

1 Introduction

1.1 Introduction

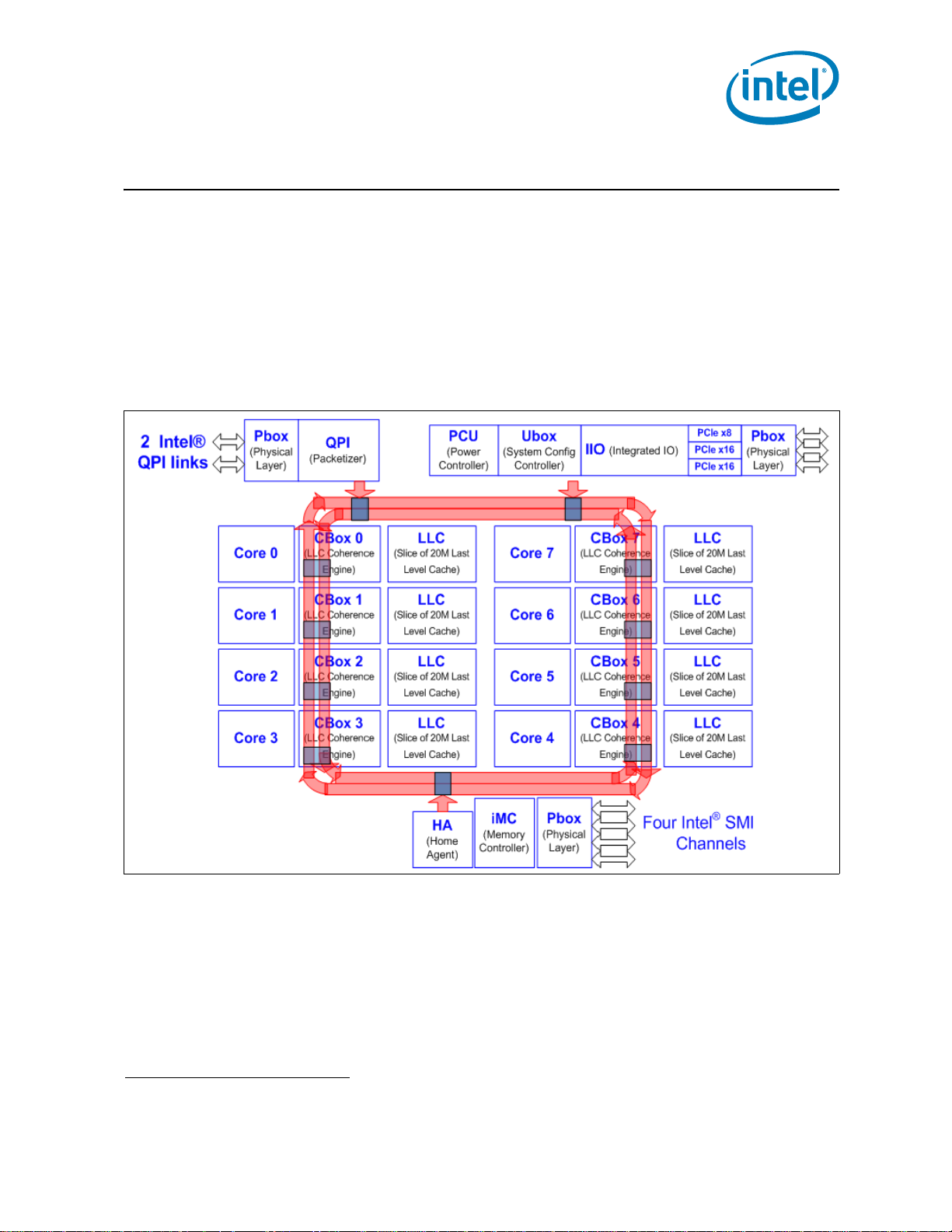

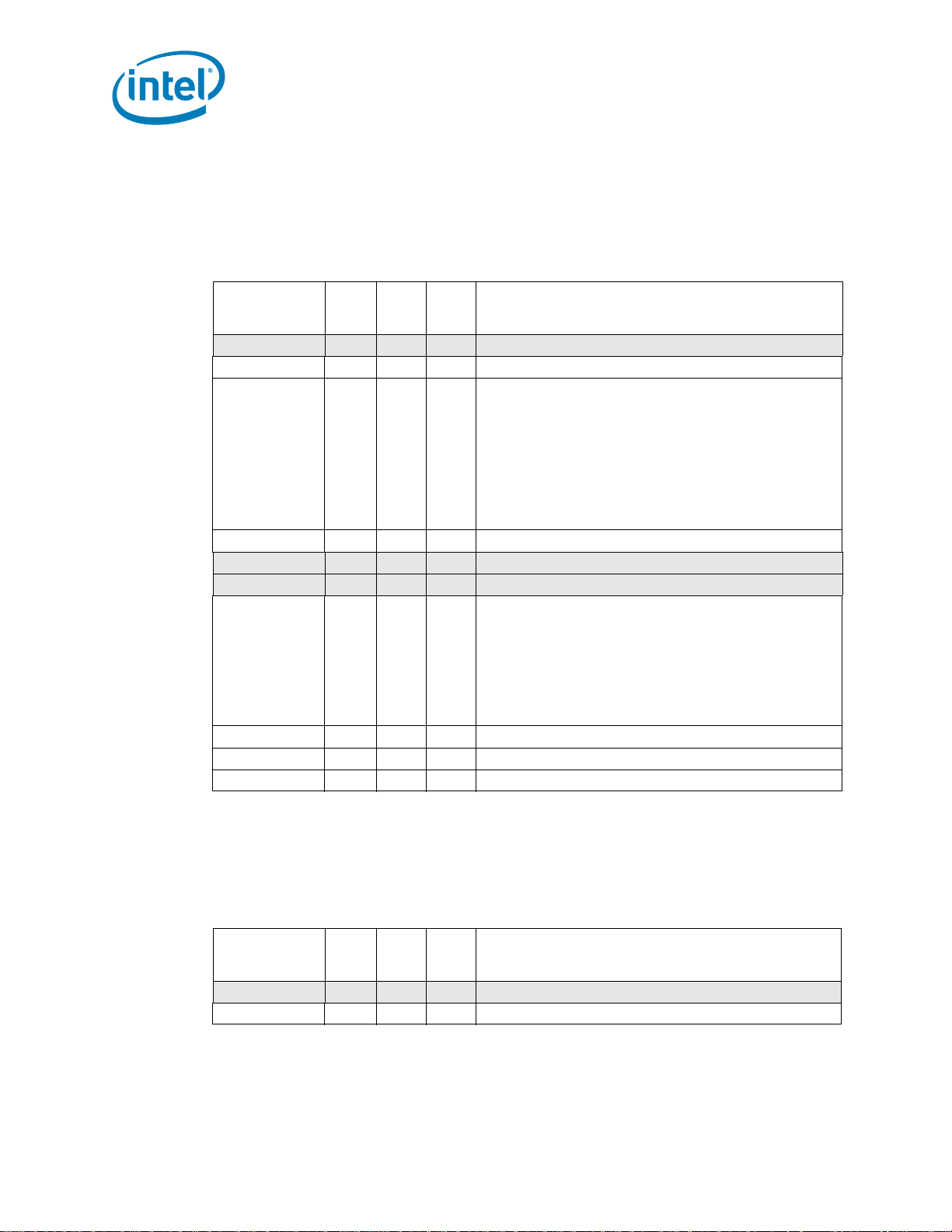

The uncore subsystem of the Intel® Xeon® processor E5-2600 product family is shown in Figure 1-1. The uncore subsystem also applies to the Intel® Xeon® processor E5-1600 product family in a single-socket platform (see footnote 1). The uncore sub-system consists of a variety of components, ranging from the CBox caching agent to the power controller unit (PCU), integrated memory controller (iMC) and home agent (HA), to name a few. Most of these components provide similar performance monitoring capabilities.

Figure 1-1. Uncore Sub-system Block Diagram of Intel Xeon Processor E5-2600 Family

1.2 Uncore PMON Overview

The uncore performance monitoring facilities are organized into per-component performance

monitoring (or ‘PMON’) units. A PMON unit within an uncore component may contain one or more

sets of counter registers. With the exception of the UBox, each PMON unit provides a unit-level

control register to synchronize actions across the counters within the box (e.g., to start/stop

counting).

1. The uncore sub-system in Intel® Core™ i7-3930K and i7-3820 processors is derived from the above, hence most of the descriptions in this document also apply.


Events can be collected by reading a set of local counter registers. Each counter register is paired with

a dedicated control register used to specify what to count (i.e. through the event select/umask fields)

and how to count it. Some units provide the ability to specify additional information that can be used

to ‘filter’ the monitored events (e.g., C-box; see Section 2.3.3.3, “CBo Filter Register

(Cn_MSR_PMON_BOX_FILTER)”).

Uncore performance monitors represent a per-socket resource that is not meant to be affected by context switches and thread migration performed by the OS. It is therefore recommended that the monitoring software agent establish a fixed affinity binding to prevent cross-talk of event counts from different uncore PMUs.
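As a minimal sketch of the fixed-affinity recommendation above, the following assumes a Linux host where Python's `os.sched_setaffinity` is available. The helper name `pin_to_cpu` is hypothetical, and choosing a logical CPU that belongs to the socket being monitored is left to the caller.

```python
import os


def pin_to_cpu(cpu):
    """Bind the calling process to a single logical CPU and return the new mask.

    Linux-only: os.sched_setaffinity is not available on every platform.
    """
    if not hasattr(os, "sched_setaffinity"):
        raise NotImplementedError("CPU affinity control is not available here")
    os.sched_setaffinity(0, {cpu})  # pid 0 means the calling process
    return os.sched_getaffinity(0)
```

A monitoring agent would typically call such a helper once, before programming any PMON registers, so that every later register access is issued from the same socket.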

The programming interface of the counter registers and control registers falls into two address spaces:

• PMON registers within the Cbo units, PCU, and U-Box are accessed through MSRs; see Table 1-2.

• PMON registers within the HA, iMC, Intel® QPI, R2PCIe and R3QPI units are accessed through PCI device configuration space; see Table 1-3.

Irrespective of the address-space difference and with only minor exceptions, the bit-granular layout of the control registers used to program event code, unit mask, start/stop, and signal filtering via threshold/edge detect is the same.

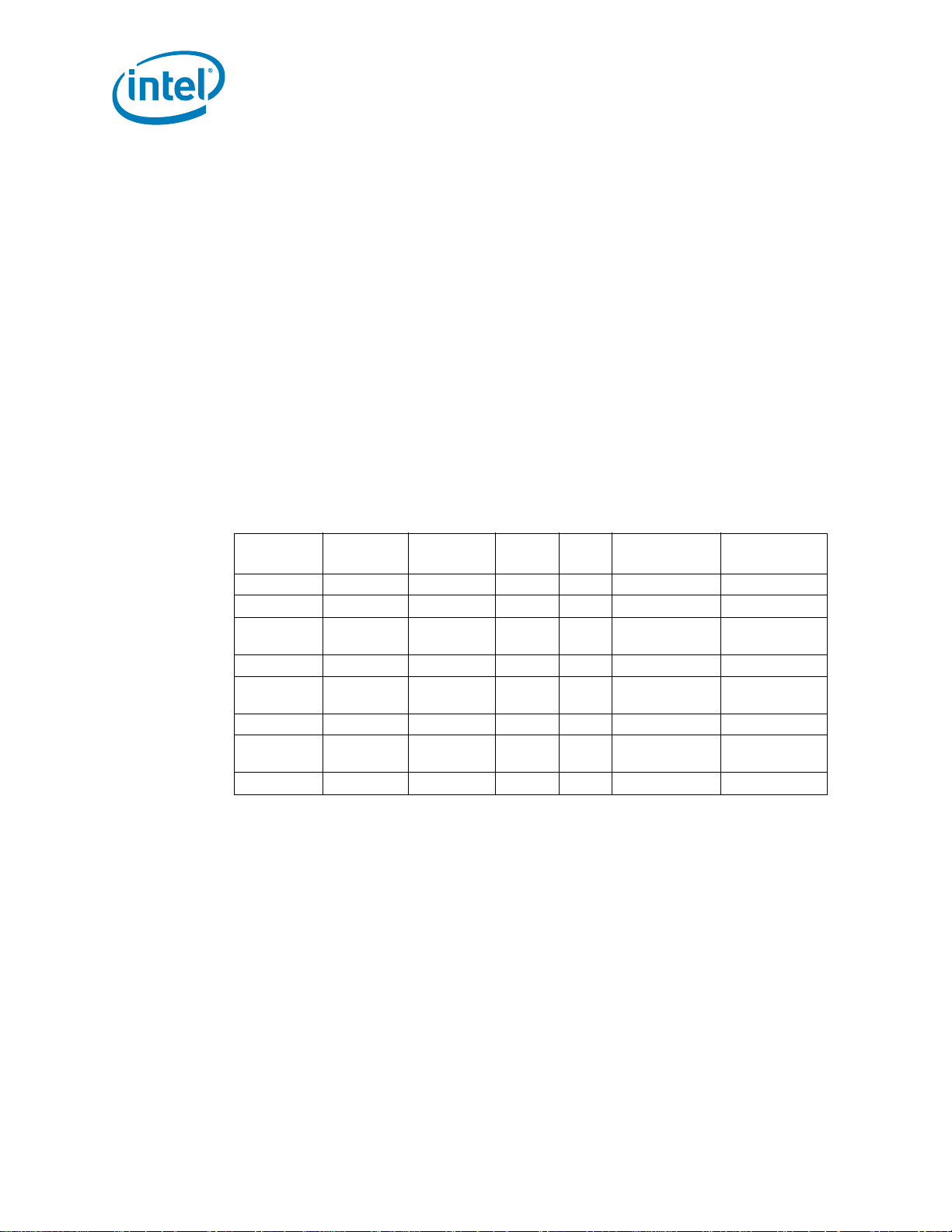

The general performance monitoring capabilities of each box are outlined in the following table.

Table 1-1. Per-Box Performance Monitoring Capabilities

| Box | # Boxes | # Counters/Box | # Queue Enabled | Bus Lock? | Packet Match/Mask Filters? | Bit Width |
|---|---|---|---|---|---|---|
| C-Box | 8 | 4 | 1 | N | Y | 44 |
| HA | 1 | 4 | 4 | Y | Y | 48 |
| iMC | 1 (4 channels) | 4 (+1) (per channel) | 4 | N | N | 48 |
| PCU | 1 | 4 (+2) | 4 | N | N | 48 |
| QPI | 1 (2 ports) | 4 (per port) | 4 | N | Y? | 48 |
| R2PCIe | 1 | 4 | 1 | N | N | 44 |
| R3QPI | 1 (2 links) | 3 | 1 | N | N | 44 |
| U-Box | 1 | 2 (+1) | 0 | N/A | N | 44 |

1.3 Section References

The following sections provide a breakdown of the performance monitoring capabilities for each box.

• Section 2.1, “Uncore Per-Socket Performance Monitoring Control”

• Section 2.2, “UBox Performance Monitoring”

• Section 2.3, “Caching Agent (Cbo) Performance Monitoring”

• Section 2.6, “Power Control (PCU) Performance Monitoring”

• Section 2.4, “Home Agent (HA) Performance Monitoring”

• Section 2.5, “Memory Controller (iMC) Performance Monitoring”

• Section 2.7, “Intel® QPI Link Layer Performance Monitoring”

• Section 2.9, “R3QPI Performance Monitoring”

• Section 2.8, “R2PCIe Performance Monitoring”

• Section 2.10, “Packet Matching Reference”


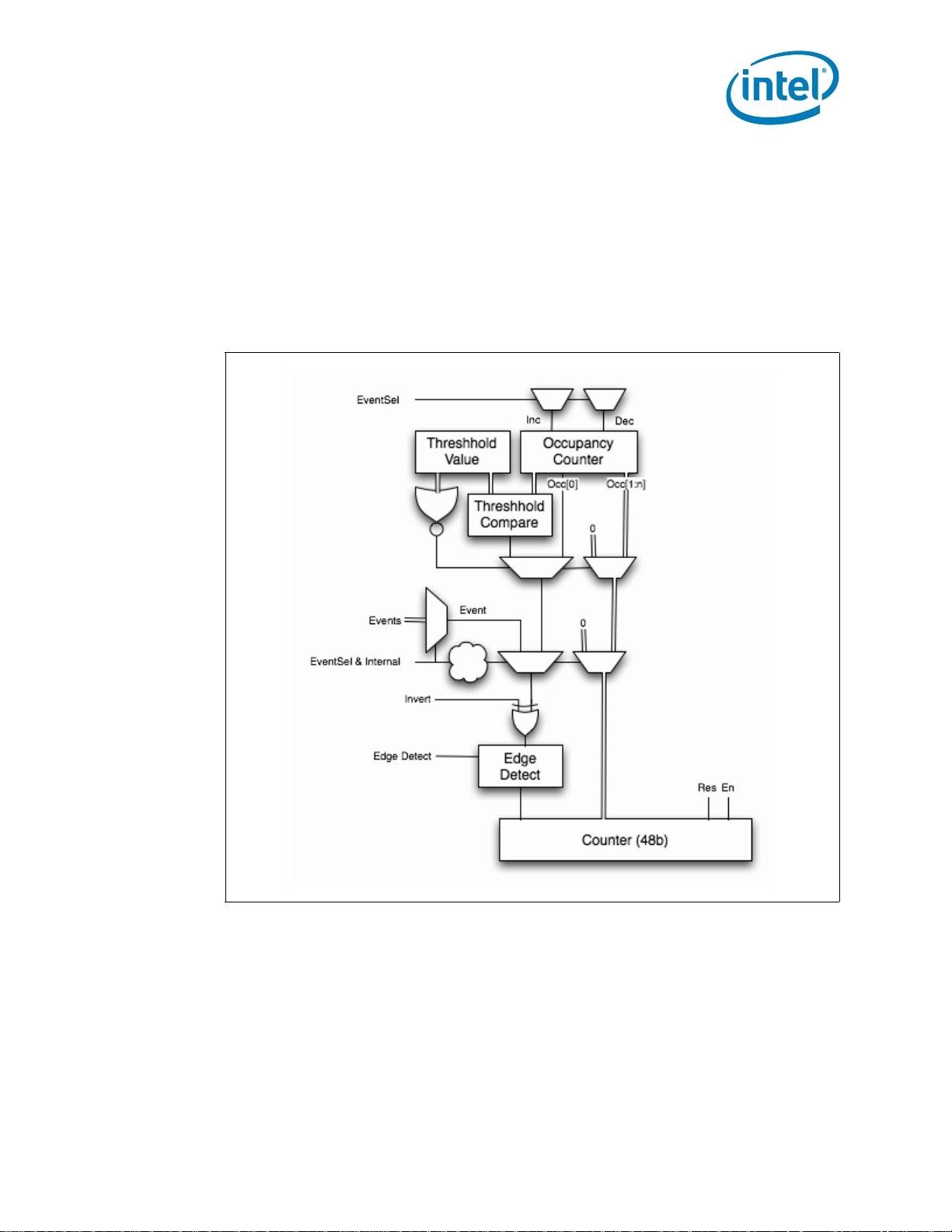

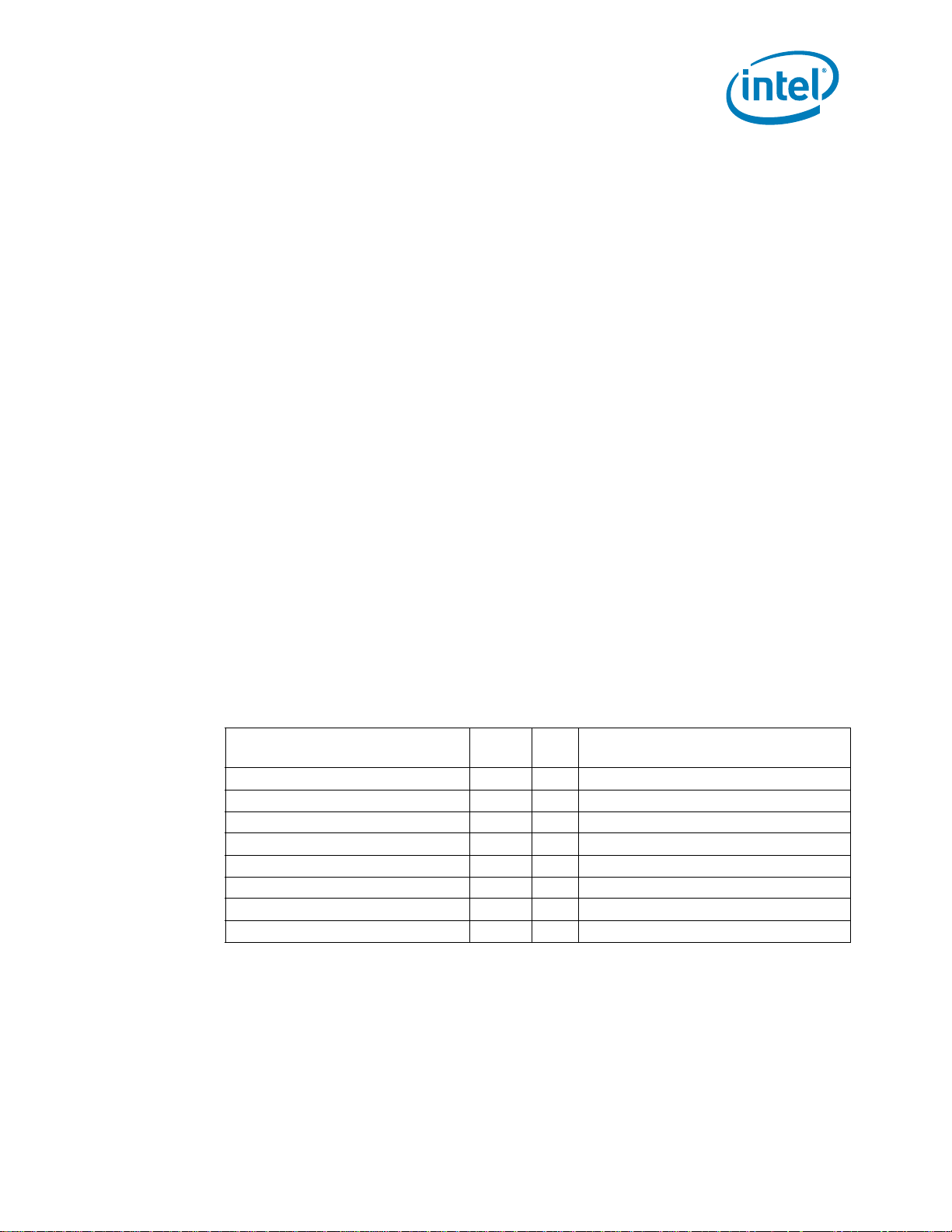

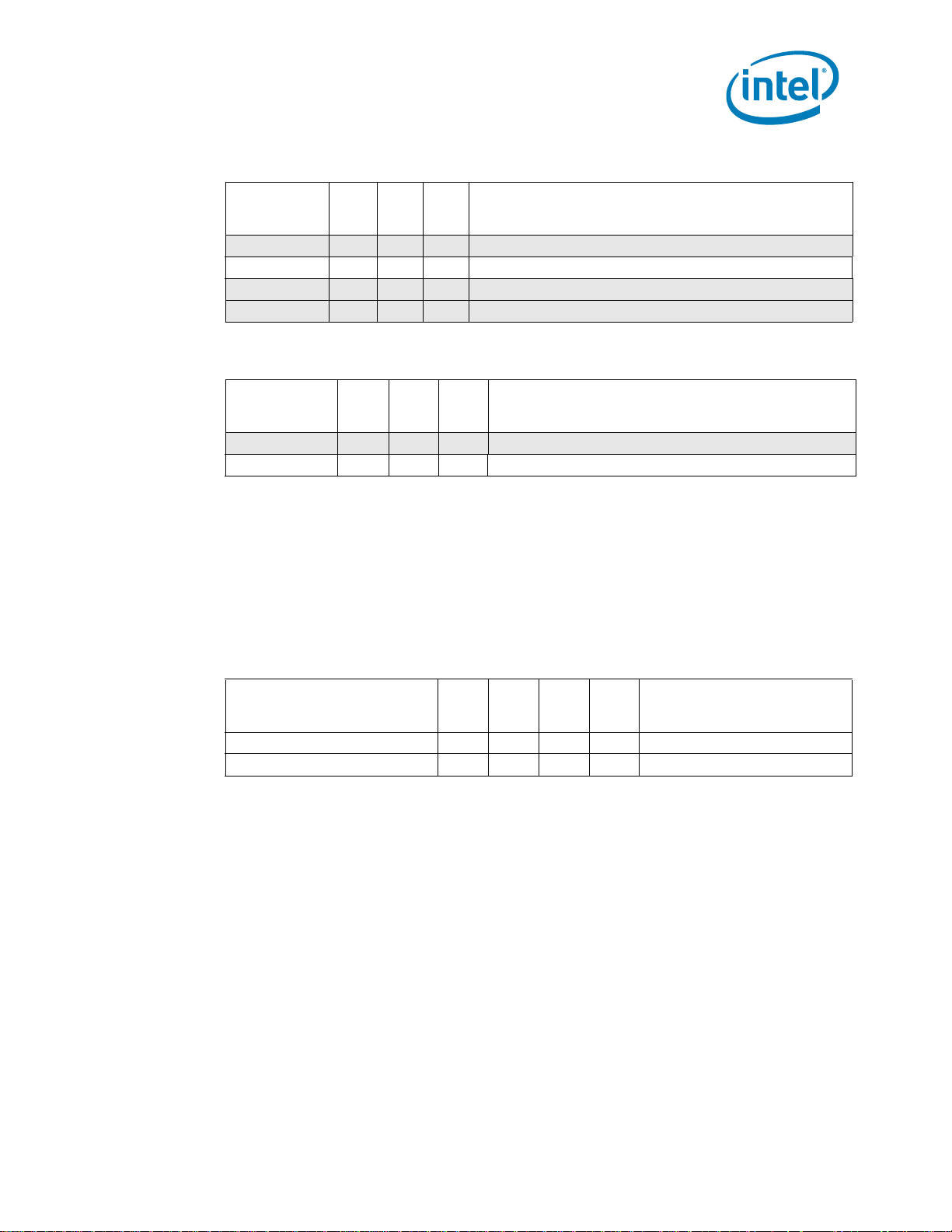

1.4 Uncore PMON - Typical Control/Counter Logic

Following is a diagram of the standard perfmon counter block illustrating how event information is routed and stored within each counter and how its paired control register helps to select and filter the incoming information. Details of how control bits affect event information are presented in each of the box subsections of Chapter 2, with some summary information below.

Note: The PCU uses an adaptation of this block (refer to Section 2.6.3, “PCU Performance Monitors” for more information). Also note that only a subset of the available control bits are presented in the diagram.

Figure 1-2. Perfmon Control/Counter Block Diagram

Selecting What To Monitor: The main task of a configuration register is to select the event to be

monitored by its respective data counter. Setting the .ev_sel and .umask fields performs the event

selection.

Telling HW that the Control Register Is Set: The .en bit must be set to 1 to enable counting. Once counting has been enabled at the box and global levels of the Performance Monitoring Hierarchy (refer to Section 2.1.1, “Setting up a Monitoring Session” for more information), the paired data register will begin to collect events.
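The event selection and enable steps above can be illustrated by packing the three fields into one control word. This is a sketch only: the bit positions used here (`.ev_sel` in bits 7:0, `.umask` in bits 15:8, `.en` in bit 22) are assumptions for illustration and must be checked against the field definition tables of the specific box in Chapter 2. The function name and the event code in the usage note are hypothetical.

```python
# Assumed field positions; verify against the per-box field definition tables.
EV_SEL_SHIFT = 0   # .ev_sel, assumed bits 7:0
UMASK_SHIFT = 8    # .umask, assumed bits 15:8
EN_BIT = 22        # .en, assumed bit 22


def encode_ctl(ev_sel, umask, en=True):
    """Pack event select, unit mask and enable into one control register value."""
    if not 0 <= ev_sel <= 0xFF:
        raise ValueError("ev_sel must fit in 8 bits")
    if not 0 <= umask <= 0xFF:
        raise ValueError("umask must fit in 8 bits")
    value = (ev_sel << EV_SEL_SHIFT) | (umask << UMASK_SHIFT)
    if en:
        value |= 1 << EN_BIT
    return value
```

For example, `hex(encode_ctl(0x36, 0x01))` evaluates to `'0x400136'` under these assumed positions; writing such a value enables the counter locally, while counting still depends on the box-level and global enables described above.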


Additional control bits include:

Applying a Threshold to Incoming Events: .thresh - since most counters can increment by a

value greater than 1, a threshold can be applied to generate an event based on the outcome of the

comparison. If the .thresh is set to a non-zero value, that value is compared against the incoming

count for that event in each cycle. If the incoming count is >= the threshold value, then the event

count captured in the data register will be incremented by 1.

Using the threshold field to generate additional events can be particularly useful when applied to a

queue occupancy count. For example, if a queue is known to contain eight entries, it may be useful to

know how often it contains 6 or more entries (i.e. Almost Full) or when it contains 1 or more entries

(i.e. Not Empty).
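The threshold behavior described above can be modeled in software. The sketch below is a simulation, not hardware access: the function name and the sample occupancy values are hypothetical, and it assumes that a `.thresh` of 0 leaves the raw per-cycle increments untouched.

```python
def count_with_threshold(increments, thresh):
    """Model what a counter accumulates over a sequence of per-cycle increments.

    With thresh == 0 the raw increments are summed; otherwise the counter
    advances by 1 in each cycle whose increment is >= thresh.
    """
    if thresh == 0:
        return sum(increments)
    return sum(1 for inc in increments if inc >= thresh)


# Hypothetical per-cycle occupancy of an eight-entry queue.
occupancy = [0, 2, 6, 8, 7, 0, 1, 6]
almost_full = count_with_threshold(occupancy, 6)  # cycles with 6 or more entries
not_empty = count_with_threshold(occupancy, 1)    # cycles with 1 or more entries
```

Dividing the unthresholded total by `not_empty` gives the average occupancy over the cycles in which the queue held at least one entry.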

Note: The .invert and .edge_det bits follow the threshold comparison in sequence. If a user

wishes to apply these bits to events that only increment by 1 per cycle, .thresh must be

set to 0x1.

Inverting the Threshold Comparison: .invert - Changes the .thresh test condition to ‘<’.

Counting State Transitions Instead of per-Cycle Events: .edge_det - Rather than accumulating

the raw count each cycle (for events that can increment by 1 per cycle), the register can capture

transitions from no event to an event incoming (i.e. the ‘Rising Edge’).
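The ordering of the three filters (threshold comparison first, then `.invert`, then `.edge_det`) can be sketched as a small software model. The function name and input sequence are hypothetical, and the model assumes the comparison result is treated as "no event" before the first cycle.

```python
def count_filtered(increments, thresh, invert=False, edge_det=False):
    """Model threshold, invert and edge detect, applied in that order."""
    if thresh == 0:
        raise ValueError("invert/edge_det require a non-zero thresh")
    count = 0
    previous = False  # assumed initial state: no event
    for inc in increments:
        # .invert turns the '>=' comparison into '<'.
        hit = (inc < thresh) if invert else (inc >= thresh)
        if edge_det:
            # Count only transitions from "no event" to "event".
            if hit and not previous:
                count += 1
        else:
            count += int(hit)
        previous = hit
    return count


# Hypothetical event that increments by at most 1 per cycle.
busy = [0, 1, 1, 0, 0, 1, 0, 1, 1, 1]
active_cycles = count_filtered(busy, thresh=1)
activations = count_filtered(busy, thresh=1, edge_det=True)
idle_cycles = count_filtered(busy, thresh=1, invert=True)
```

Here `active_cycles / activations` gives the average length of a busy period, which is the kind of ratio the derived events later in this chapter rely on.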

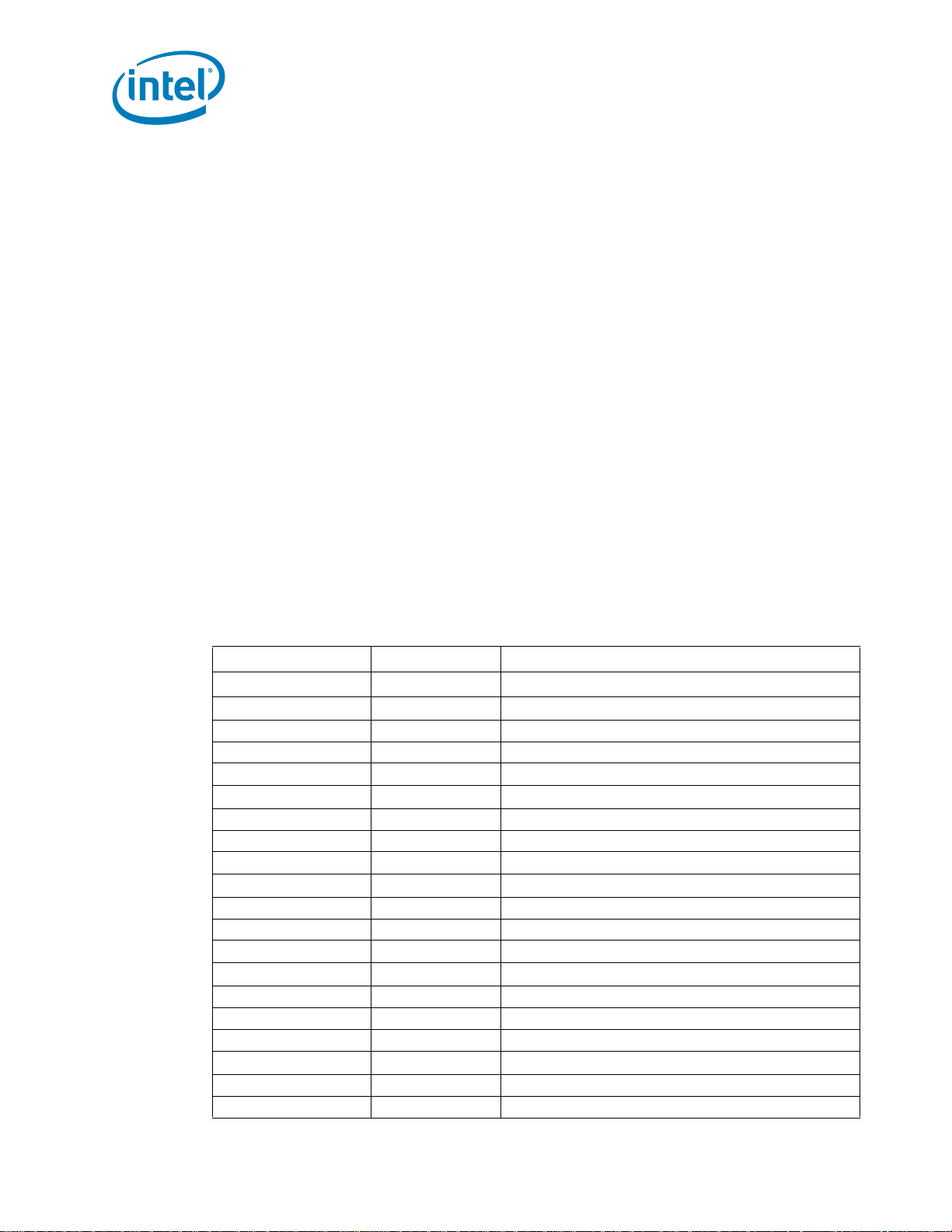

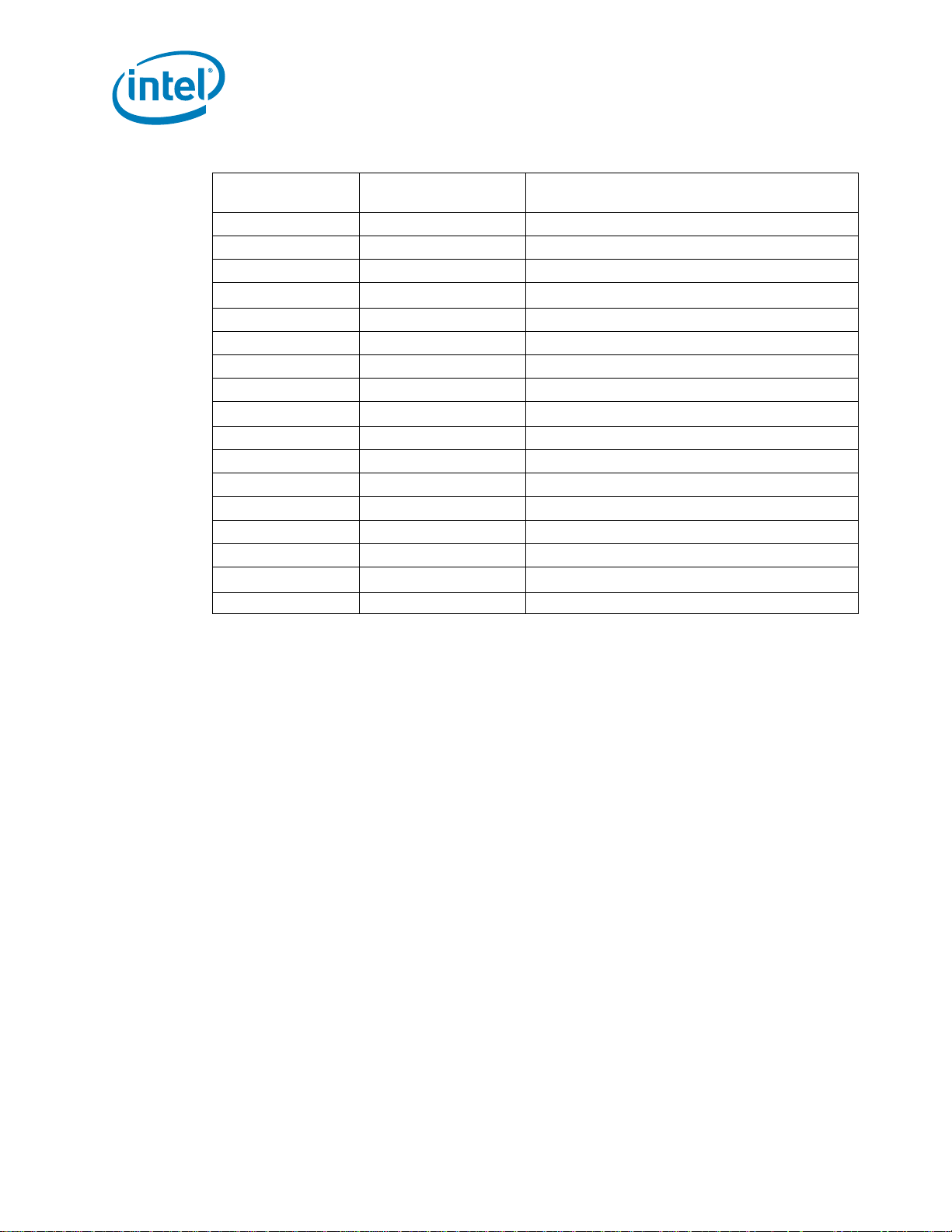

1.5 Uncore PMU Summary Tables

Following is a list of the registers provided in the Uncore for Performance Monitoring. It should be

noted that the PMON interfaces are split between MSR space (U, CBo and PCU) and PCICFG space.

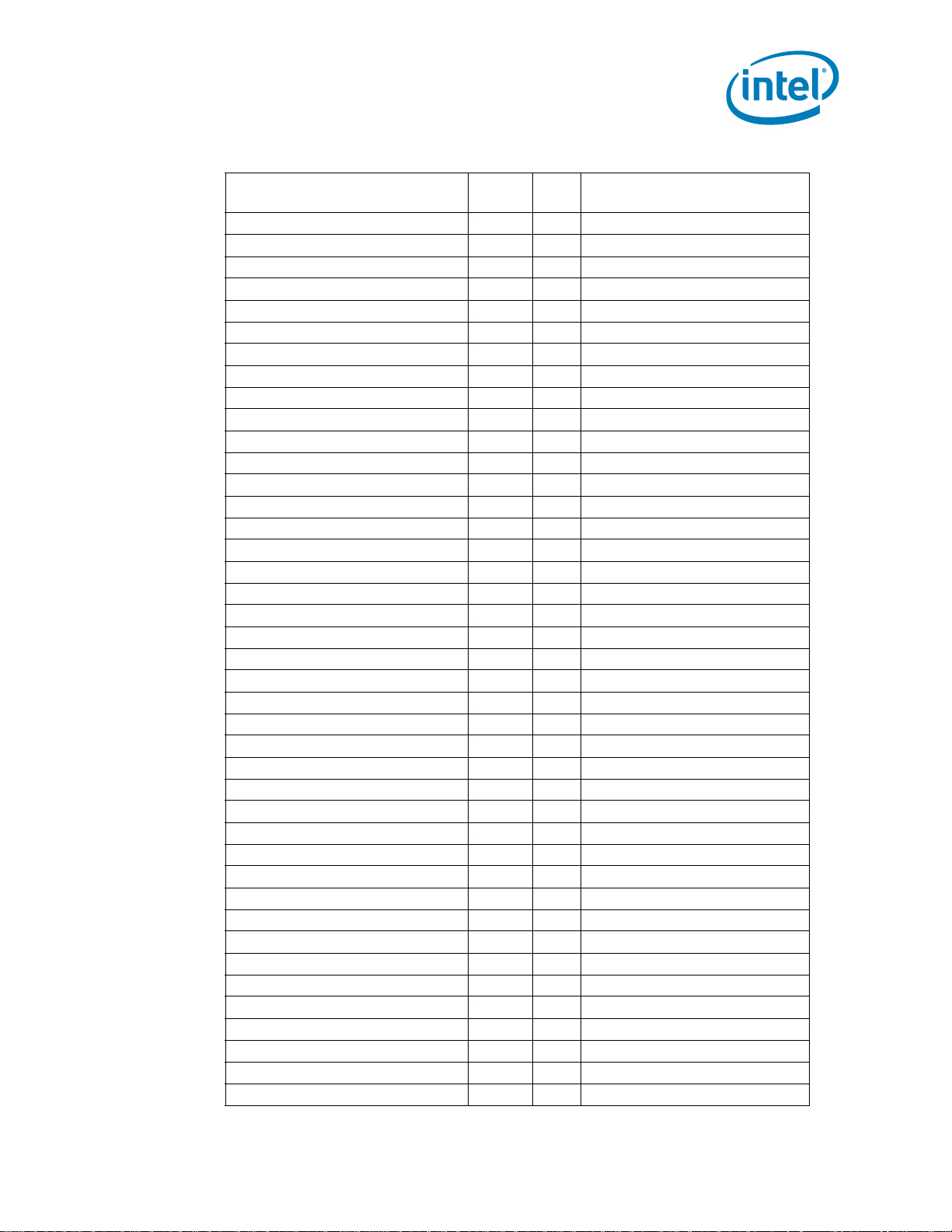

Table 1-2. MSR Space Uncore Performance Monitoring Registers (Sheet 1 of 2)

Box MSR Addresses Description

C-Box Counters

C-Box 7

C-Box 6

C-Box 5

C-Box 4

C-Box 3

0xDF9-0xDF6 Counter Registers

0xDF4 Counter Filters

0xDF3-0xDF0 Counter Config Registers

0xDE4 Box Control

0xDD9-0xDD6 Counter Registers

0xDD4 Counter Filters

0xDD3-0xDD0 Counter Config Registers

0xDC4 Box Control

0xDB9-0xDB6 Counter Registers

0xDB4 Counter Filters

0xDB3-0xDB0 Counter Config Registers

0xDA4 Box Control

0xD99-0xD96 Counter Registers

0xD94 Counter Filters

0xD93-0xD90 Counter Config Registers

0xD84 Box Control

0xD79-0xD76 Counter Registers

0xD74 Counter Filters

0xD73-0xD70 Counter Config Registers

12 Reference Number: 327043-001

Page 13

Introduction

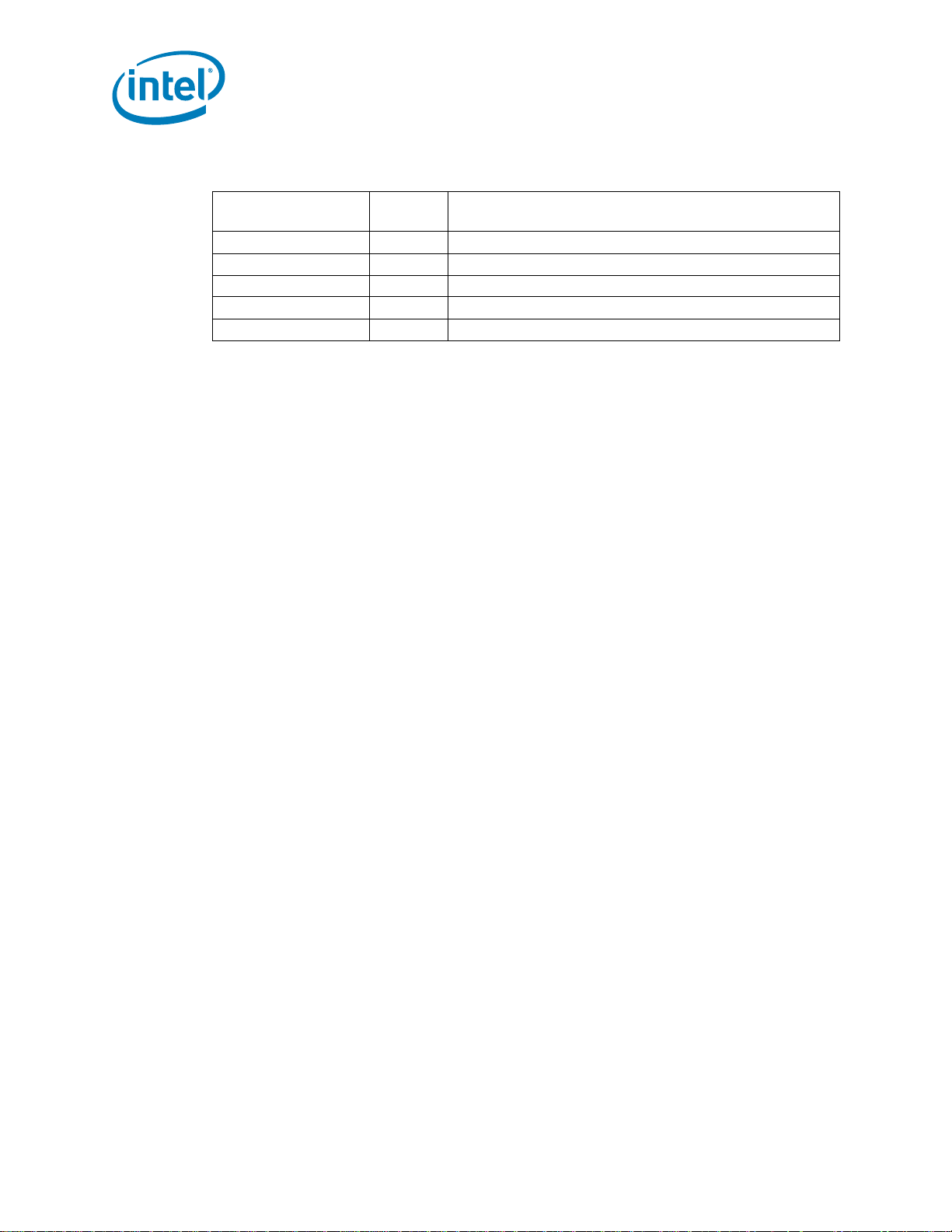

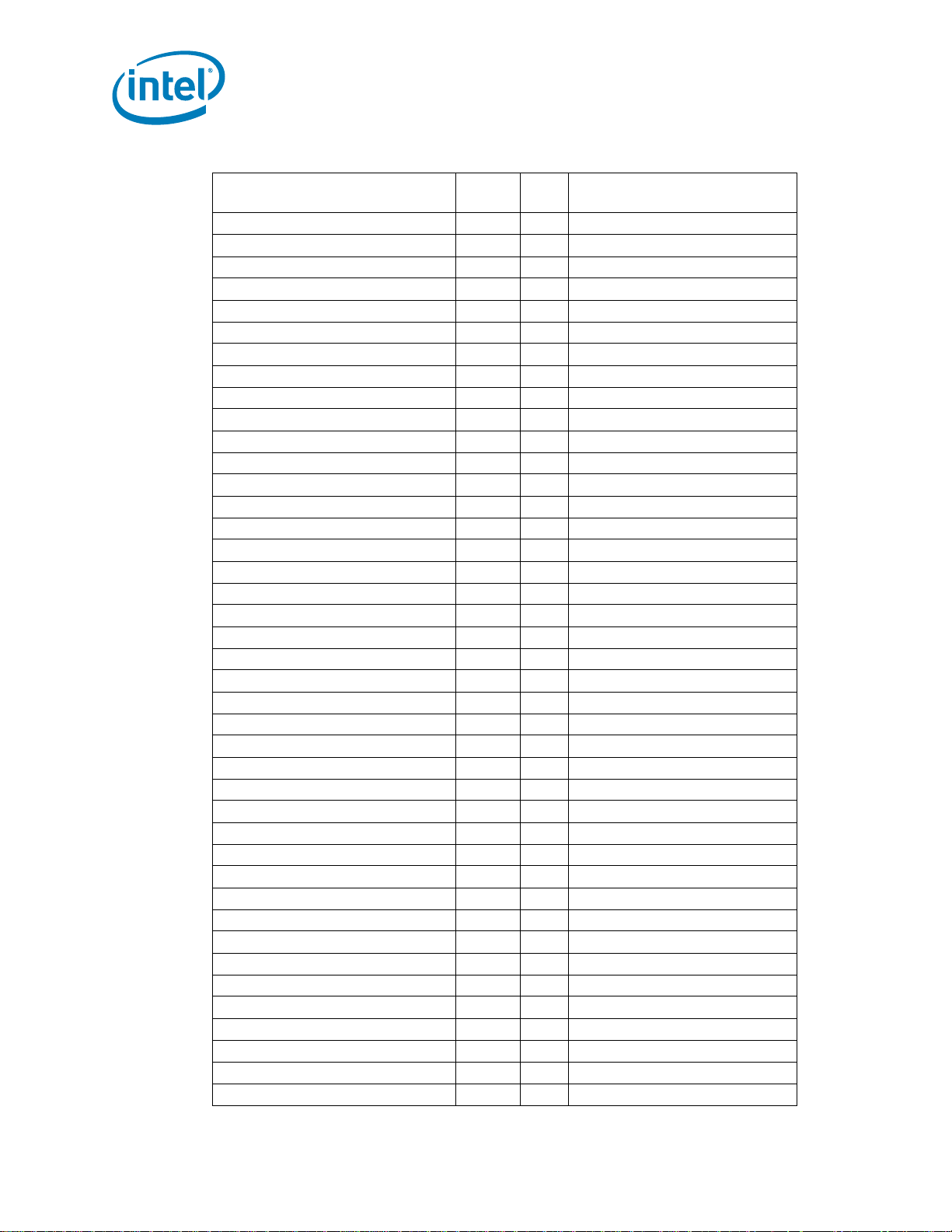

Table 1-2. MSR Space Uncore Per formance Monitoring Registers (Sheet 2 of 2)

Box MSR Addresses Description

0xD64 Box Control

C-Box 2

C-Box 1

C-Box 0

PCU Counters

U-Box Counters

For U-Box

0xD59-0xD56 Counter Registers

0xD54 Counter Filters

0xD53-0xD50 Counter Config Registers

0xD44 Box Control

0xD39-0xD36 Counter Registers

0xD34 Counter Filters

0xD33-0xD30 Counter Config Registers

0xD24 Box Control

0xD19-0xD16 Counter Registers

0xD14 Counter Filters

0xD13-0xD10 Counter Config Registers

0xD04 Box Control

0xC39-0xC36 Counter Registers

0xC24 Box Control

0xC34 Counter Filters

0xC33-0xC30 Counter Config Registers

0x3FC-0x3FD Fixed Counters (Non-PMON)

0xC17-0xC16 Counter Registers

0xC11-0xC10 Counter Config Registers

0xC09-0xC08 Fixed Counter/Config Register
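The C-Box addresses in Table 1-2 are regularly spaced, so software does not need to hard-code all eight blocks. The sketch below derives them from the C-Box 0 addresses and a stride of 0x20, both read off the table. The function and constant names are hypothetical, and the assumption that the lowest address in each range belongs to counter 0 should be confirmed against the CBo register tables in Chapter 2.

```python
CBO_STRIDE = 0x20       # spacing between consecutive C-Box register blocks
CBO0_BOX_CTL = 0xD04    # C-Box 0 box control
CBO0_CTL0 = 0xD10       # C-Box 0 first counter config register
CBO0_FILTER = 0xD14     # C-Box 0 counter filter
CBO0_CTR0 = 0xD16       # C-Box 0 first counter register
NUM_CBOS = 8
COUNTERS_PER_CBO = 4


def cbo_msrs(box):
    """Return the MSR addresses of one C-Box as listed in Table 1-2."""
    if not 0 <= box < NUM_CBOS:
        raise ValueError("C-Box index must be in 0..7")
    base = box * CBO_STRIDE
    return {
        "box_ctl": CBO0_BOX_CTL + base,
        "filter": CBO0_FILTER + base,
        # Assumed ordering: lowest address corresponds to counter 0.
        "ctl": [CBO0_CTL0 + base + i for i in range(COUNTERS_PER_CBO)],
        "ctr": [CBO0_CTR0 + base + i for i in range(COUNTERS_PER_CBO)],
    }
```

For instance, `cbo_msrs(7)` reproduces the C-Box 7 rows of the table: box control 0xDE4, filter 0xDF4, config registers 0xDF0-0xDF3 and counters 0xDF6-0xDF9.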

Table 1-3. PCICFG Space Uncore Performance Monitoring Registers (Sheet 1 of 2)

Box

PCICFG Register

Addresses

R3QPI D19:F5,6 F(5,6) for Link 0,1

F4 Box Control

E0-D8 Counter Config Registers

B4-A0 Counter Registers

R2PCIe D19:F1

F4 Box Control

E4-D8 Counter Config Registers

BC-A0 Counter Registers

iMC D16:F0,1,4,5

F4 Box Control

F0 Counter Config Register (Fixed)

Reference Number: 327043-001 13

F(0,1,4,5) For Channel 0,1,2,3

Description

Page 14

Introduction

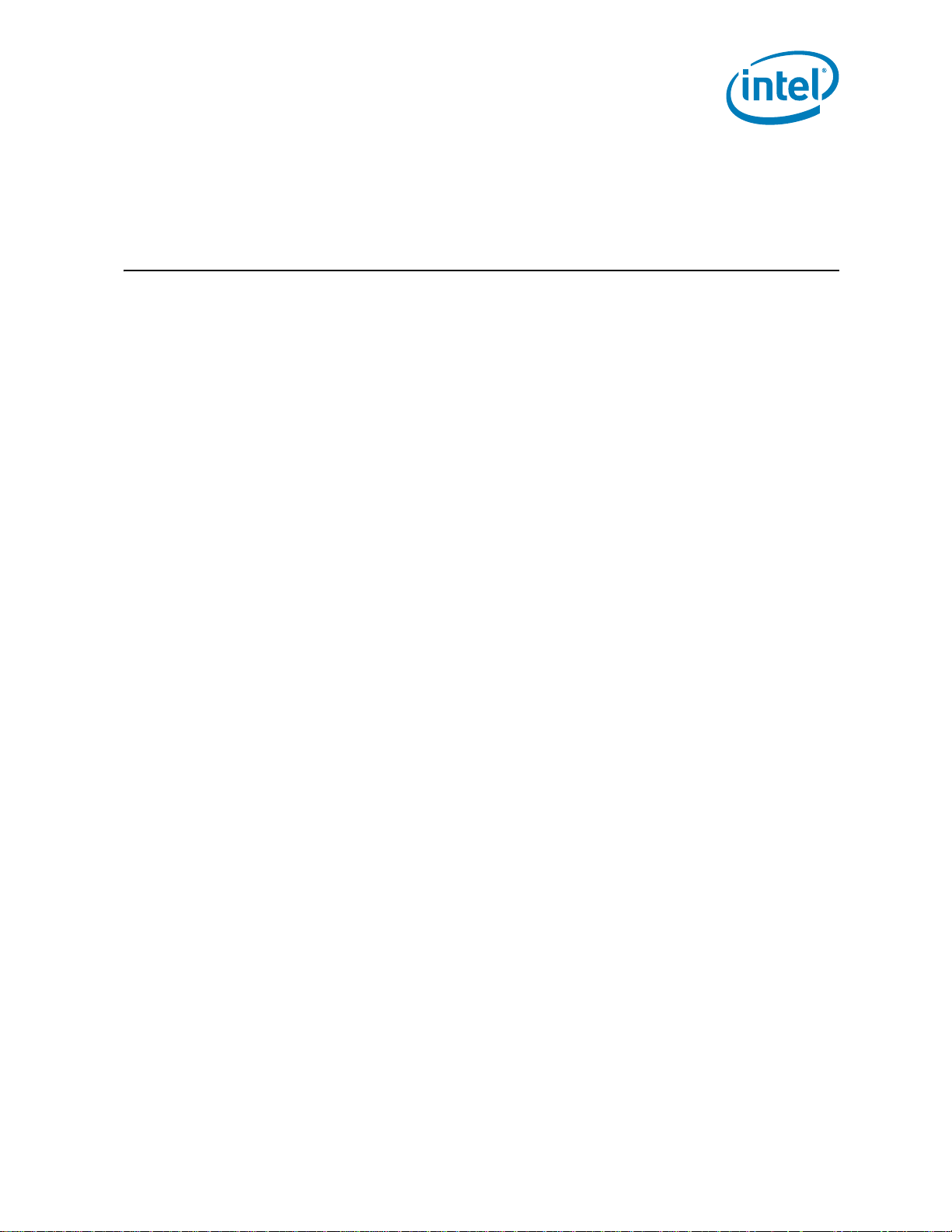

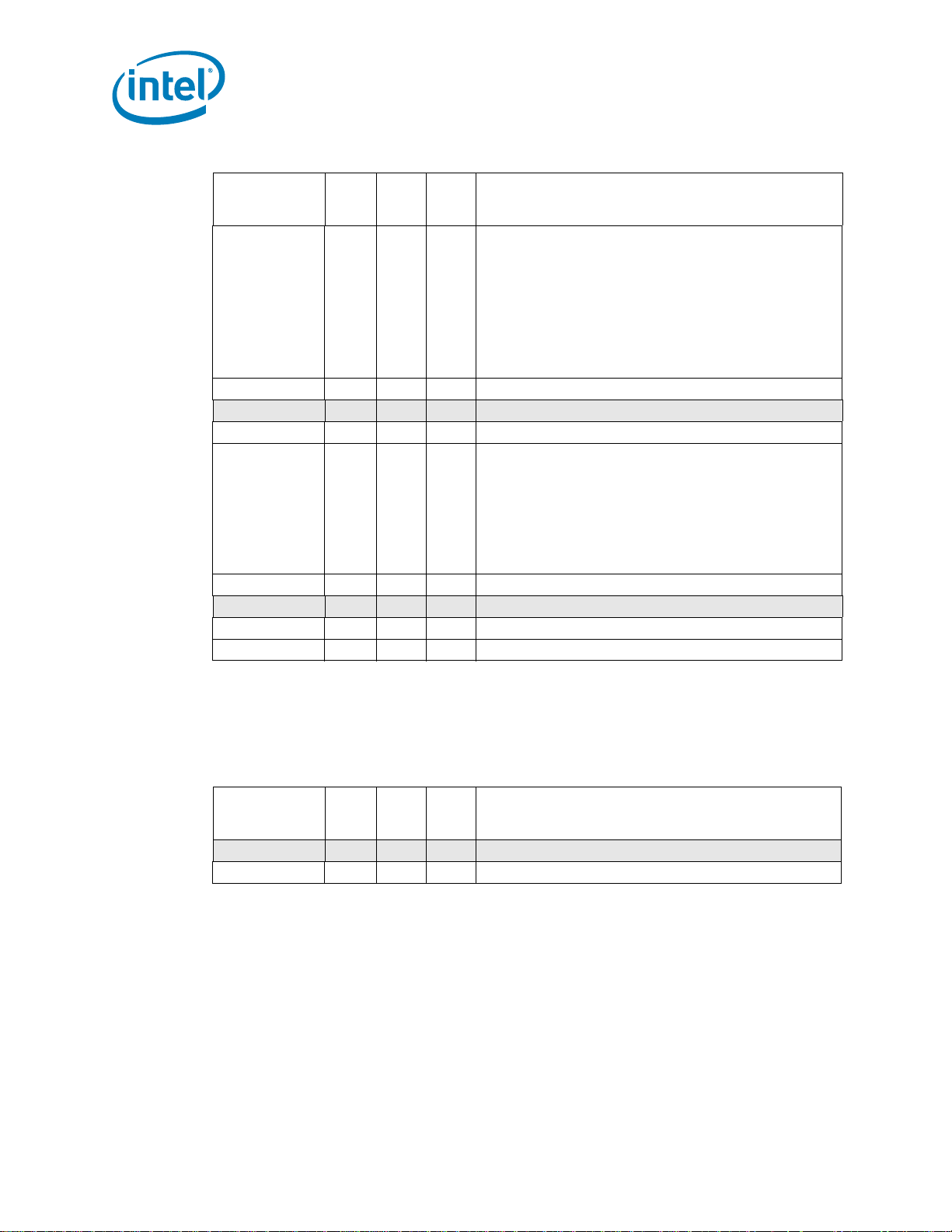

Table 1-3. PCICFG Space Uncore Performance Monitoring Registers (She et 2 of 2)

Box

HA D14:F1

QPI D8,9:F2 D(8,9) for Port 0,1

QPI Mask/Match D8,9:F6 D(8,9) for Port 0,1

QPI Misc D8,9:F0 D(8,9) for Port 0,1

PCICFG Register

Addresses

E4-D8 Counter Config Registers (General)

D4-D0 Counter Register (Fixed)

BC-A0 Counter Registers (General)

F4 Box Control

E4-D8 Counter Config Registers

BC-A0 Counter Registers

48-40 Opcode/Addr Match Filters

F4 Box Control

E4-D8 Counter Config Registers

BC-A0 Counter Registers

23C-238 Mask 0,1

22C-228 Match 0,1

D4 QPI Rate Status

Description
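The device and function assignments in Table 1-3 can be kept in a small lookup so that software addresses a box by instance rather than by raw numbers. This is a sketch under stated assumptions: device and function numbers are taken as decimal exactly as printed, register offsets as hexadecimal, and the names `PCI_LOCATIONS`, `BOX_CTL_OFFSET` and `pmon_location` are hypothetical.

```python
# (device, function) per instance, in the order listed in Table 1-3.
PCI_LOCATIONS = {
    "R3QPI": [(19, 5), (19, 6)],                   # links 0..1
    "R2PCIe": [(19, 1)],
    "iMC": [(16, 0), (16, 1), (16, 4), (16, 5)],   # channels 0..3
    "HA": [(14, 1)],
    "QPI": [(8, 2), (9, 2)],                       # ports 0..1
}

# Every PCICFG box in the table places its box control register at offset F4.
BOX_CTL_OFFSET = 0xF4


def pmon_location(box, instance=0):
    """Return the (device, function) pair holding a box instance's PMON registers."""
    try:
        return PCI_LOCATIONS[box][instance]
    except (KeyError, IndexError):
        raise ValueError(f"unknown box/instance: {box}[{instance}]") from None
```

Note that the iMC channels map to functions 0, 1, 4 and 5, so the function number cannot be computed as a simple offset from the channel index.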

1.6 On Parsing and Using Derived Events

For many of the sections in the chapter covering the Performance Monitoring capabilities of each box, a set of commonly measured metrics or ‘Derived Events’ has been included. For the most part, these derived events are simple mathematical combinations of events found within the box (e.g., [SAMPLE]). However, there are some extensions to the notation used by the metrics.

Following is a breakdown of a Derived Event to illustrate many of the notations used. To calculate “Average Number of Data Read Entries that Miss the LLC when the TOR is not empty”:

(TOR_OCCUPANCY.MISS_OPCODE / COUNTER0_OCCUPANCY{edge_det,thresh=0x1}) with:Cn_MSR_PMON_BOX_FILTER.opc=0x182

Requires programming an extra control register (often for filtering):

• For a single field: with:Register_Name.field=value1

• For multiple fields: with:Register_Name.{field1,field2,...}={value1,value2,...}

• e.g., with:Cn_MSR_PMON_BOX_FILTER.{opc,nid}={0x182,my_node}

Requires reading a fixed data register:

• For the case where the metric requires the information contained in a fixed data register, the mnemonic for the register will be included in the equation. Software will be responsible for configuring the data register and setting it to start counting with the other events used by the metric.


• e.g., POWER_THROTTLE_CYCLES.RANKx / MC_Chy_PCI_PMON_CTR_FIXED

Requires more input to software to determine the specific event/subevent:

• In some cases, there may be multiple events/subevents that cover the same information across multiple like hardware units. Rather than manufacturing a derived event for each combination, the derived event will use a lower case variable in the event name.

• e.g., POWER_CKE_CYCLES.RANKx / MC_Chy_PCI_PMON_CTR_FIXED, where ‘x’ is a variable to cover events POWER_CKE_CYCLES.RANK0 through POWER_CKE_CYCLES.RANK7

Requires setting extra control bits in the register the event has been programmed in:

• event_name[.subevent_name]{ctrl_bit[=value],}

• e.g., COUNTER0_OCCUPANCY{edge_det,thresh=0x1}

NOTE: If there is no [=value] specified, it is assumed that the bit must be set to 1.

Requires gathering of extra information outside the box (often for common terms):

• See following section for a breakdown of common terms found in Derived Events.
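The control-bit notation described in this section can be handled mechanically by a tool that consumes derived-event definitions. The following is a minimal sketch in Python, assuming a term has the shape event_name[.subevent_name]{ctrl_bit[=value],}; the function name `parse_event_term` is hypothetical and not part of any Intel tool.

```python
import re

def parse_event_term(term):
    """Split 'EVENT[.SUBEVENT]{ctrl_bit[=value],...}' into (name, ctrl_bits).

    Hypothetical helper for illustration only.
    """
    match = re.fullmatch(r"([A-Za-z0-9_.]+)(?:\{([^}]*)\})?", term.strip())
    if match is None:
        raise ValueError(f"unrecognized event term: {term!r}")
    name, raw_bits = match.groups()
    ctrl_bits = {}
    for item in (raw_bits or "").split(","):
        item = item.strip()
        if not item:
            continue  # tolerate the trailing comma the notation allows
        key, _, value = item.partition("=")
        # A control bit written without [=value] is assumed to be set to 1.
        ctrl_bits[key.strip()] = int(value, 0) if value else 1
    return name, ctrl_bits

name, bits = parse_event_term("COUNTER0_OCCUPANCY{edge_det,thresh=0x1}")
print(name, bits)
```

The returned dictionary maps each control bit to the value that would be programmed into the event's control register.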

1.6.1 On Common Terms found in Derived Events

To convert a Latency term from a count of clocks to a count of nanoseconds:

• (Latency Metric in {Box}_CLOCKTICKS) * (1000 / UNCORE_FREQUENCY), with UNCORE_FREQUENCY assumed to be expressed in MHz

To convert a Bandwidth term from a count of raw bytes at the operating clock to GB/sec:

• ((Traffic Metric in Bytes) / (SAMPLE_INTERVAL / (TSC_SPEED * 1000000))) / GB_CONVERSION

• e.g., For READ_MEM_BW, an event derived from iMC:CAS_COUNT.RD * 64, which is the amount

of memory bandwidth consumed by read requests, put ‘READ_MEM_BW’ into the bandwidth term

to convert the measurement from raw bytes to GB/sec.

Following are some other terms that may be found within Metrics and how they should be interpreted.

• GB_CONVERSION: 1024^3

• TSC_SPEED: Time Stamp Counter frequency in MHz

• SAMPLE_INTERVAL = TSC end time - TSC start time.
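The two conversions above can be sketched in Python as follows. The function names are hypothetical, and the latency conversion assumes the clock count is multiplied by (1000 / UNCORE_FREQUENCY) with the frequency given in MHz, which is the only reading that yields nanoseconds.

```python
GB_CONVERSION = 1024 ** 3  # bytes per GB, as defined in this section

def clocks_to_ns(latency_clocks, uncore_freq_mhz):
    """Convert a latency in uncore clocks to nanoseconds (frequency in MHz)."""
    return latency_clocks * (1000.0 / uncore_freq_mhz)

def bytes_to_gb_per_sec(traffic_bytes, sample_interval_tsc, tsc_speed_mhz):
    """Convert raw bytes seen over a TSC interval to GB/sec."""
    seconds = sample_interval_tsc / (tsc_speed_mhz * 1_000_000)
    return (traffic_bytes / seconds) / GB_CONVERSION

def read_mem_bw_bytes(cas_count_rd):
    """READ_MEM_BW in raw bytes: iMC CAS_COUNT.RD * 64."""
    return cas_count_rd * 64

# Illustrative numbers only: 200 clocks at a 2000 MHz uncore clock.
print(clocks_to_ns(200, 2000))
```

For example, `bytes_to_gb_per_sec(read_mem_bw_bytes(count), tsc_end - tsc_start, tsc_mhz)` would report read bandwidth for one sample interval.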


2 Intel® Xeon® Processor E5-2600 Product Family Uncore Performance Monitoring

2.1 Uncore Per-Socket Performance Monitoring Control

The uncore PMON does not support interrupt-based sampling. To manage the large number of counter registers distributed across many units and collect event data efficiently, this section describes the hierarchical technique to start/stop/restart event counting that a software agent may need to perform during a monitoring session.

2.1.1 Setting up a Monitoring Session

On HW reset, all the counters are disabled. Enabling is hierarchical, so the following steps, which include programming the event control registers and enabling the counters to begin collecting events, must be taken to set up a monitoring session. Section 2.1.2 covers the steps to stop/re-start counter registers during a monitoring session.

For each box in which events will be measured: Skip (a) and (b) for U-Box monitoring.

a) Enable each box to accept the freeze signal to start/stop/re-start all counter registers in that box

e.g., set Cn_MSR_PMON_BOX_CTL.frz_en to 1

Note: Recommended: set the .frz_en bits during the setup phase for each box a user intends to monitor, and leave them alone for the duration of the monitoring session.

b) Freeze the box’s counters while setting up the monitoring session.

e.g., set Cn_MSR_PMON_BOX_CTL.frz to 1

For each event to be measured within each box:

c) Enable counting for each monitor

e.g., set C0_MSR_PMON_CTL2.en to 1

Note: Recommended: set the .en bit for all counters in each box a user intends to monitor, and leave them alone for the duration of the monitoring session.

d) Select event to monitor if the event control register hasn’t been programmed:


Program the .ev_sel and .umask bits in the control register with the encodings necessary to capture

the requested event along with any signal conditioning bits (.thresh/.edge_det/.invert) used to qualify

the event.

e.g., Set C0_MSR_PMON_CTL2.{ev_sel, umask} to {0x03, 0x1} in order to capture LLC_VICTIMS.M_STATE in CBo 0’s C0_MSR_PMON_CTR2.

Note: It is also important to program any additional filter registers used to further qualify the events (e.g., setting the opcode match field in Cn_MSR_PMON_BOX_FILTER to qualify TOR_INSERTS by a specific opcode).

Back to the box level:

e) Reset counters in each box to ensure no stale values have been acquired from previous sessions.

• For each CBo, set Cn_MSR_PMON_BOX_CTL[1:0] to 0x2.

• For each Intel® QPI Port, set Q_Py_PCI_PMON_BOX_CTL[1:0] to 0x2.

• Set PCU_MSR_PMON_BOX_CTL[1:0] to 0x2.

• For each Link, set R3QPI_PCI_PMON_BOX_CTL[1:0] to 0x2.

• Set R2PCIE_PCI_PMON_BOX_CTL[1:0] to 0x2.

Note: The UBox does not have a Unit Control register and neither the iMC nor the HA have a reset bit in their Unit Control register. The counters in the UBox, the HA, and each populated DRAM channel in the iMC will need to be manually reset by writing a 0 to each data register.

Back to the box level:

f) Commence counting at the box level by unfreezing the counters in each box

e.g., set Cn_MSR_PMON_BOX_CTL.frz to 0

And with that, counting will begin.

Note: The UBox does not have a Unit Control register. Once enabled and programmed with a valid event, its counters will be collecting events. For somewhat better synchronization, a user can keep the U_MSR_PMON_CTL.ev_sel at 0x0 while enabled and write it with a valid value just prior to unfreezing the registers in other boxes.
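Steps (a) through (f) can be sketched for a single CBo counter as an ordered list of MSR writes. This is an illustration under stated assumptions, not a definitive driver: `FakeMsrBus` is a hypothetical in-memory stand-in for a privileged MSR write interface, the bit positions and addresses are those given for CBo 0 in this guide, and keeping .frz set while writing the reset bit in step (e) is an assumption.

```python
# Bit positions from the Cn_MSR_PMON_BOX_CTL and Cn_MSR_PMON_CTL field tables.
FRZ_EN = 1 << 16    # box accepts the freeze signal
FRZ = 1 << 8        # freeze the box's counters
RST_CTRS = 1 << 1   # reset counter registers (BOX_CTL[1:0] = 0x2)
CTL_EN = 1 << 22    # local counter enable

C0_MSR_PMON_BOX_CTL = 0x0D04
C0_MSR_PMON_CTL2 = 0x0D12

def setup_writes(ev_sel, umask):
    """Return the ordered (msr_address, value) writes for steps (a)-(f)."""
    event_ctl = CTL_EN | ((umask & 0xFF) << 8) | (ev_sel & 0xFF)
    return [
        (C0_MSR_PMON_BOX_CTL, FRZ_EN | FRZ),             # (a)+(b) accept freeze, freeze
        (C0_MSR_PMON_CTL2, event_ctl),                   # (c)+(d) enable, select event
        (C0_MSR_PMON_BOX_CTL, FRZ_EN | FRZ | RST_CTRS),  # (e) reset counters
        (C0_MSR_PMON_BOX_CTL, FRZ_EN),                   # (f) unfreeze, counting begins
    ]

class FakeMsrBus:
    """Hypothetical stand-in that only records writes; no hardware access."""
    def __init__(self):
        self.log = []

    def wrmsr(self, address, value):
        self.log.append((address, value))

bus = FakeMsrBus()
for address, value in setup_writes(ev_sel=0x03, umask=0x1):
    bus.wrmsr(address, value)
    print(f"wrmsr 0x{address:04X} <- 0x{value:08X}")
```

The values are built from scratch rather than read-modify-written because several of the box control bits are listed as write-only.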

2.1.2 Reading the Sample Interval

Software can poll the counters whenever it chooses.

a) Polling - before reading, it is recommended that software freeze the counters in each box in which counting is to take place (by setting *_PMON_BOX_CTL.frz_en and .frz to 1). After reading the event counts from the counter registers, the monitoring agent can choose to reset the event counts to avoid event-count wrap-around, or resume the counter registers without resetting their values. The latter choice will require the monitoring agent to check and adjust for potential wrap-around situations.
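When counters are resumed without a reset, the wrap-around adjustment can be done with modular arithmetic. The sketch below assumes a 44-bit counter (the width this guide gives for the UBox and CBo general counters) and at most one wrap between two reads; `counter_delta` is a hypothetical helper name.

```python
COUNTER_BITS = 44  # assumed width; other boxes may differ

def counter_delta(previous, current, bits=COUNTER_BITS):
    """Events counted between two reads, tolerating a single wrap."""
    return (current - previous) % (1 << bits)

# No wrap between reads.
print(counter_delta(10, 25))
# Counter wrapped past bit 43 between reads.
print(counter_delta((1 << 44) - 5, 3))
```

If the polling interval is long enough for more than one wrap, this adjustment undercounts, so the interval should be chosen with the maximum per-cycle increment in mind.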


2.2 UBox Performance Monitoring

2.2.1 Overview of the UBox

The UBox serves as the system configuration controller for the Intel Xeon Processor E5-2600 family

uncore.

In this capacity, the UBox acts as the central unit for a variety of functions:

• The master for reading and writing physically distributed registers across the uncore using the

Message Channel.

• The UBox is the intermediary for interrupt traffic, receiving interrupts from the system and dispatching interrupts to the appropriate core.

• The UBox serves as the system lock master used when quiescing the platform (e.g., Intel® QPI

bus lock).

2.2.2 UBox Performance Monitoring Overview

The UBox supports event monitoring through two programmable 44-bit wide counters

(U_MSR_PMON_CTR{1:0}), and a 48-bit fixed counter which increments each u-clock. Each of these

counters can be programmed (U_MSR_PMON_CTL{1:0}) to monitor any UBox event.

For information on how to set up a monitoring session, refer to Section 2.1, “Uncore Per-Socket Performance Monitoring Control”.

2.2.3 UBox Performance Monitors

Table 2-1. UBox Performance Monitoring MSRs

| MSR Name | MSR Address | Size (bits) | Description |
| --- | --- | --- | --- |
| U_MSR_PMON_CTR1 | 0x0C17 | 64 | U-Box PMON Counter 1 |
| U_MSR_PMON_CTR0 | 0x0C16 | 64 | U-Box PMON Counter 0 |
| U_MSR_PMON_CTL1 | 0x0C11 | 64 | U-Box PMON Control for Counter 1 |
| U_MSR_PMON_CTL0 | 0x0C10 | 32 | U-Box PMON Control for Counter 0 |
| U_MSR_PMON_UCLK_FIXED_CTR | 0x0C09 | 64 | U-Box PMON UCLK Fixed Counter |
| U_MSR_PMON_UCLK_FIXED_CTL | 0x0C08 | 32 | U-Box PMON UCLK Fixed Counter Control |

2.2.3.1 UBox Box Level PMON State

The following registers represent the state governing all box-level PMUs in the UBox.


2.2.3.2 UBox PMON state - Counter/Control Pairs

The following table defines the layout of the UBox performance monitor control registers. The main

task of these configuration registers is to select the event to be monitored by their respective data

counter (.ev_sel, .umask). Additional control bits are provided to shape the incoming events (e.g.

.invert, .edge_det, .thresh) as well as provide additional functionality for monitoring software (.rst).

Table 2-2. U_MSR_PMON_CTL{1-0} Register – Field Definitions

| Field | Bits | Attr | HW Reset Val | Description |
| --- | --- | --- | --- | --- |
| rsv | 31:29 | RV | 0 | Reserved (?) |
| thresh | 28:24 | RW | 0 | Threshold used in counter comparison. |
| invert | 23 | RW | 0 | Invert comparison against Threshold. 0 - comparison will be ‘is event increment >= threshold?’. 1 - comparison is inverted - ‘is event increment < threshold?’. NOTE: .invert is in series following .thresh. Due to this, the .thresh field must be set to a non-0 value. For events that increment by no more than 1 per cycle, set .thresh to 0x1. Also, if .edge_det is set to 1, the counter will increment when a 1 to 0 transition (i.e. falling edge) is detected. |
| en | 22 | RW | 0 | Local Counter Enable. |
| rsv | 21:20 | RV | 0 | Reserved. SW must write to 0 for proper operation. |
| rsv | 19 | RV | 0 | Reserved (?) |
| edge_det | 18 | RW | 0 | When set to 1, rather than measuring the event in each cycle it is active, the corresponding counter will increment when a 0 to 1 transition (i.e. rising edge) is detected. When 0, the counter will increment in each cycle that the event is asserted. NOTE: .edge_det is in series following .thresh. Due to this, the .thresh field must be set to a non-0 value. For events that increment by no more than 1 per cycle, set .thresh to 0x1. |
| rst | 17 | WO | 0 | When set to 1, the corresponding counter will be cleared to 0. |
| umask | 15:8 | RW | 0 | Select subevents to be counted within the selected event. |
| ev_sel | 7:0 | RW | 0 | Select event to be counted. |
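The field layout of U_MSR_PMON_CTL{1-0} can be packed into a register value as in the sketch below. The helper name `encode_ubox_ctl` is hypothetical; the bit positions come from the field definitions above, and the check on .thresh reflects the notes that .invert and .edge_det require a non-zero threshold.

```python
def encode_ubox_ctl(ev_sel, umask=0, en=True, edge_det=False,
                    invert=False, thresh=0):
    """Pack U_MSR_PMON_CTL fields into a 32-bit value (illustrative only)."""
    if not 0 <= thresh < (1 << 5):
        raise ValueError("thresh is a 5-bit field (bits 28:24)")
    if (edge_det or invert) and thresh == 0:
        raise ValueError(".thresh must be non-zero with .edge_det or .invert")
    value = (ev_sel & 0xFF) | ((umask & 0xFF) << 8)  # ev_sel 7:0, umask 15:8
    value |= int(edge_det) << 18
    value |= int(en) << 22
    value |= int(invert) << 23
    value |= thresh << 24
    return value

# Event code 0x44 with the counter enabled and no umask.
print(hex(encode_ubox_ctl(ev_sel=0x44)))
```

Reserved bits are always written as 0 by construction, which matches the requirement stated for bits 21:20.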

The UBox performance monitor data registers are 44 bits wide. Should a counter overflow (a carry out from bit 43), the counter will wrap and continue to collect events.

If accessible, software can continuously read the data registers without disabling event collection.

Table 2-3. U_MSR_PMON_CTR{1-0} Register – Field Definitions

| Field | Bits | Attr | HW Reset Val | Description |
| --- | --- | --- | --- | --- |
| rsv | 63:44 | RV | 0 | Reserved (?) |
| event_count | 43:0 | RW-V | 0 | 44-bit performance event counter |

The Global UBox PMON registers also include a fixed counter that increments at UCLK for each cycle it

is enabled.


Table 2-4. U_MSR_PMON_FIXED_CTL Register – Field Definitions

| Field | Bits | Attr | HW Reset Val | Description |
| --- | --- | --- | --- | --- |
| rsv | 31:23 | RV | 0 | Reserved (?) |
| en | 22 | RW | 0 | Enable counter when global enable is set. |
| rsv | 21:20 | RV | 0 | Reserved. SW must write to 0 for proper operation. |
| rsv | 19:0 | RV | 0 | Reserved (?) |

Table 2-5. U_MSR_PMON_FIXED_CTR Register – Field Definitions

| Field | Bits | Attr | HW Reset Val | Description |
| --- | --- | --- | --- | --- |
| rsv | 63:44 | RV | 0 | Reserved (?) |
| event_count | 43:0 | RW-V | 0 | 48-bit performance event counter |

2.2.4 UBox Performance Monitoring Events

The set of events that can be monitored in the UBox are summarized in Section 2.2.

2.2.5 UBOX Box Events Ordered By Code

The following table summarizes the directly measured UBOX Box events.

Table 2-6. Performance Monitor Events for UBOX

| Symbol Name | Event Code | Extra Select Bit | Ctrs | Max Inc/Cyc | Description |
| --- | --- | --- | --- | --- | --- |
| EVENT_MSG | 0x42 | 0 | 0-1 | 1 | VLW Received |
| LOCK_CYCLES | 0x44 | 0 | 0-1 | 1 | IDI Lock/SplitLock Cycles |

2.2.6 UBOX Box Performance Monitor Event List

This section enumerates the uncore performance monitoring events for the UBOX Box.

EVENT_MSG

• Title: VLW Received

• Category: EVENT_MSG Events

• Event Code: 0x42

• Max. Inc/Cyc: 1, Register Restrictions: 0-1

• Definition: Virtual Logical Wire (legacy) messages were received from Uncore. Specify the thread to filter on using NCUPMONCTRLGLCTR.ThreadID.


Table 2-7. Unit Masks for EVENT_MSG

| Extension | umask [15:8] | Description |
| --- | --- | --- |
| VLW_RCVD | bxxxxxxx1 | |
| MSI_RCVD | bxxxxxx1x | |
| IPI_RCVD | bxxxxx1xx | |
| DOORBELL_RCVD | bxxxx1xxx | |
| INT_PRIO | bxxx1xxxx | |
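Each EVENT_MSG extension in Table 2-7 corresponds to one bit of the umask field. The sketch below turns the listed bit patterns into constants. That several extensions may be ORed together to count them in one counter is an assumption drawn from the "don't care" positions in the patterns, and `event_msg_umask` is a hypothetical helper name.

```python
# One bit per extension, read from the bit patterns in Table 2-7.
EVENT_MSG_UMASK = {
    "VLW_RCVD": 1 << 0,
    "MSI_RCVD": 1 << 1,
    "IPI_RCVD": 1 << 2,
    "DOORBELL_RCVD": 1 << 3,
    "INT_PRIO": 1 << 4,
}

def event_msg_umask(*extensions):
    """OR together the umask bits of the named extensions (assumed valid)."""
    umask = 0
    for name in extensions:
        umask |= EVENT_MSG_UMASK[name]
    return umask

print(hex(event_msg_umask("MSI_RCVD", "IPI_RCVD")))
```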

LOCK_CYCLES

• Title: IDI Lock/SplitLock Cycles

• Category: LOCK Events

• Event Code: 0x44

• Max. Inc/Cyc: 1, Register Restrictions: 0-1

• Definition: Number of times an IDI Lock/SplitLock sequence was started

2.3 Caching Agent (CBo) Performance Monitoring

2.3.1 Overview of the CBo

The LLC coherence engine (CBo) manages the interface between the core and the last level cache

(LLC). All core transactions that access the LLC are directed from the core to a CBo via the ring

interconnect. The CBo is responsible for managing data delivery from the LLC to the requesting core.

It is also responsible for maintaining coherence between the cores within the socket that share the

LLC; generating snoops and collecting snoop responses from the local cores when the MESIF protocol

requires it.

So, if the CBo fielding the core request indicates that a core within the socket owns the line (for a

coherent read), the request is snooped to that local core. That same CBo will then snoop all peers

which might have the address cached (other cores, remote sockets, etc) and send the request to the

appropriate Home Agent for conflict checking, memory requests and writebacks.

In the process of maintaining cache coherency within the socket, the CBo is the gate keeper for all Intel® QuickPath Interconnect (Intel® QPI) messages that originate in the core and is responsible for ensuring that all Intel® QPI messages that pass through the socket’s LLC remain coherent.

The CBo manages local conflicts by ensuring that only one request is issued to the system for a

specific cacheline.

The uncore contains up to eight instances of the CBo, each assigned to manage a distinct 2.5MB slice of the processor’s total LLC capacity. Each slice can be up to 20-way set associative. For processors with fewer than eight 2.5MB LLC slices, the CBos of the missing slices will still be active and track ring traffic caused by their co-located core even if they have no LLC-related traffic to track (i.e., hits/misses/snoops).

Every physical memory address in the system is uniquely associated with a single CBo instance via a proprietary hashing algorithm that is designed to keep the distribution of traffic across the CBo instances relatively uniform for a wide range of possible address patterns. This enables the individual CBo instances to operate independently, each managing its slice of the physical address space without any CBo in a given socket ever needing to communicate with the other CBos in that same socket.


2.3.2 CBo Performance Monitoring Overview

Each of the CBos in the uncore supports event monitoring through four 44-bit wide counters (Cn_MSR_PMON_CTR{3:0}). Event programming in the CBo is restricted such that each event can only be measured in certain counters within the CBo. For example, queue occupancy events can only be captured in counter 0; no other counter may be used to capture them.

• Counter 0: Queue-occupancy-enabled counter that tracks all events

• Counter 1: Basic counter that tracks all but queue occupancy events

• Counter 2: Basic counter that tracks ring events and the occupancy companion event

(COUNTER0_EVENT).

• Counter 3: Basic counter that tracks ring events and the occupancy companion event

(COUNTER0_EVENT).

CBo counter 0 can increment by a maximum of 20 per cycle; counters 1-3 can increment by 1 per

cycle.

Some uncore performance events that monitor transaction activities require additional details that must be programmed in a filter register. Each CBo provides one filter register and allows only one such event to be programmed at a given time; see Section 2.3.3.3.

For information on how to set up a monitoring session, refer to Section 2.1, “Uncore Per-Socket Performance Monitoring Control”.

2.3.2.1 Special Note on CBo Occupancy Events

Although only counter 0 supports occupancy events, it is possible to program counters 1-3 to monitor the same occupancy event by selecting the “OCCUPANCY_COUNTER0” event code on counters 1-3.

This allows:

• Thresholding on all four counters.

While one can monitor no more than one queue at a time, it is possible to setup different queue

occupancy thresholds on each of the four counters. For example, if one wanted to monitor the

IRQ, one could setup thresholds of 1, 7, 14, and 18 to get a picture of the time spent at different

occupancies in the IRQ.

• Average Latency and Average Occupancy

It can be useful to monitor the average latency of items in a queue as well as the average number of items in the queue. One could program counter 0 to accumulate the occupancy, counter 1 with the queue’s allocations event, and counter 2 with the OCCUPANCY_COUNTER0 event and a threshold of 1. Latency could then be calculated by counter 0 / counter 1, and occupancy by counter 0 / counter 2.
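The latency and occupancy calculation described above reduces to two divisions over the three counter readings. A minimal sketch, with hypothetical argument names and made-up sample values:

```python
def queue_metrics(occupancy_sum, allocations, cycles_not_empty):
    """Return (average latency in cycles, average occupancy while non-empty).

    occupancy_sum    -- counter 0: accumulated queue occupancy
    allocations      -- counter 1: the queue's allocations event
    cycles_not_empty -- counter 2: OCCUPANCY_COUNTER0 with a threshold of 1
    """
    avg_latency = occupancy_sum / allocations if allocations else 0.0
    avg_occupancy = occupancy_sum / cycles_not_empty if cycles_not_empty else 0.0
    return avg_latency, avg_occupancy

# Illustrative values, not measurements.
latency, occupancy = queue_metrics(1200, 100, 400)
print(latency, occupancy)
```

Returning 0.0 when a divisor is zero is a design choice for idle intervals; a caller may prefer to treat that case as "no data".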


2.3.3 CBo Performance Monitors

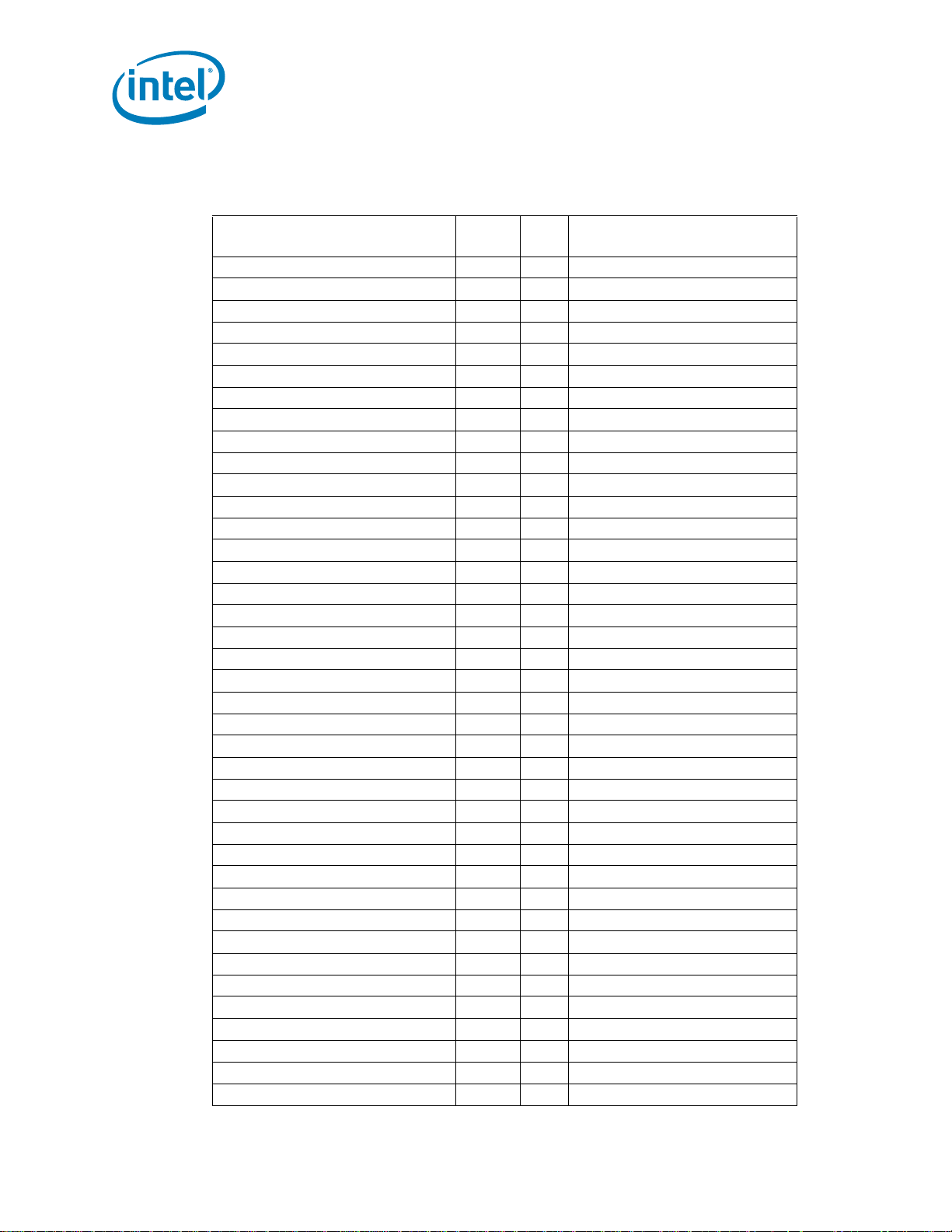

Table 2-8. CBo Performance Monitoring MSRs (Sheet 1 of 4)

MSR Name

CBo 0 PMON Registers

Generic Counters

C0_MSR_PMON_CTR3 0x0D19 64 CBo 0 PMON Counter 3

C0_MSR_PMON_CTR2 0x0D18 64 CBo 0 PMON Counter 2

C0_MSR_PMON_CTR1 0x0D17 64 CBo 0 PMON Counter 1

C0_MSR_PMON_CTR0 0x0D16 64 CBo 0 PMON Counter 0

Box-Level Filter

C0_MSR_PMON_BOX_FILTER 0x0D14 32 CBo 0 PMON Filter

Generic Counter Control

C0_MSR_PMON_CTL3 0x0D13 32 CBo 0 PMON Control for Counter 3

C0_MSR_PMON_CTL2 0x0D12 32 CBo 0 PMON Control for Counter 2

C0_MSR_PMON_CTL1 0x0D11 32 CBo 0 PMON Control for Counter 1

C0_MSR_PMON_CTL0 0x0D10 32 CBo 0 PMON Control for Counter 0

Box-Level Control/Status

C0_MSR_PMON_BOX_CTL 0x0D04 32 CBo 0 PMON Box-Wide Control

CBo 1 PMON Registers

Generic Counters

C1_MSR_PMON_CTR3 0x0D39 64 CBo 1 PMON Counter 3

C1_MSR_PMON_CTR2 0x0D38 64 CBo 1 PMON Counter 2

C1_MSR_PMON_CTR1 0x0D37 64 CBo 1 PMON Counter 1

C1_MSR_PMON_CTR0 0x0D36 64 CBo 1 PMON Counter 0

Box-Level Filter

C1_MSR_PMON_BOX_FILTER 0x0D34 32 CBo 1 PMON Filter

Generic Counter Control

C1_MSR_PMON_CTL3 0x0D33 32 CBo 1 PMON Control for Counter 3

C1_MSR_PMON_CTL2 0x0D32 32 CBo 1 PMON Control for Counter 2

C1_MSR_PMON_CTL1 0x0D31 32 CBo 1 PMON Control for Counter 1

C1_MSR_PMON_CTL0 0x0D30 32 CBo 1 PMON Control for Counter 0

Box-Level Control/Status

C1_MSR_PMON_BOX_CTL 0x0D24 32 CBo 1 PMON Box-Wide Control

MSR

Address

Size

(bits)

Description

CBo 2 PMON Registers

Generic Counters

C2_MSR_PMON_CTR3 0x0D59 64 CBo 2 PMON Counter 3

C2_MSR_PMON_CTR2 0x0D58 64 CBo 2 PMON Counter 2

C2_MSR_PMON_CTR1 0x0D57 64 CBo 2 PMON Counter 1

C2_MSR_PMON_CTR0 0x0D56 64 CBo 2 PMON Counter 0

Box-Level Filter

24 Reference Number: 327043-001

Page 25

Intel® Xeon® Processor E5-2600 Product Family Uncore Performance Monitoring

Table 2-8. CBo Performance Moni toring MSRs (Sheet 2 of 4)

MSR Name

C2_MSR_PMON_BOX_FILTER 0x0D54 32 CBo 2 PMON Filter

Generic Counter Control

C2_MSR_PMON_CTL3 0x0D53 32 CBo 2 PMON Control for Counter 3

C2_MSR_PMON_CTL2 0x0D52 32 CBo 2 PMON Control for Counter 2

C2_MSR_PMON_CTL1 0x0D51 32 CBo 2 PMON Control for Counter 1

C2_MSR_PMON_CTL0 0x0D50 32 CBo 2 PMON Control for Counter 0

Box-Level Control/Status

C2_MSR_PMON_BOX_CTL 0x0D44 32 CBo 2 PMON Box-Wide Control

CBo 3 PMON Registers

Generic Counters

C3_MSR_PMON_CTR3 0x0D79 64 CBo 3 PMON Counter 3

C3_MSR_PMON_CTR2 0x0D78 64 CBo 3 PMON Counter 2

C3_MSR_PMON_CTR1 0x0D77 64 CBo 3 PMON Counter 1

C3_MSR_PMON_CTR0 0x0D76 64 CBo 3 PMON Counter 0

Box-Level Filter

C3_MSR_PMON_BOX_FILTER 0x0D74 32 CBo 3 PMON Filter

Generic Counter Control

C3_MSR_PMON_CTL3 0x0D73 32 CBo 3 PMON Control for Counter 3

C3_MSR_PMON_CTL2 0x0D72 32 CBo 3 PMON Control for Counter 2

C3_MSR_PMON_CTL1 0x0D71 32 CBo 3 PMON Control for Counter 1

C3_MSR_PMON_CTL0 0x0D70 32 CBo 3 PMON Control for Counter 0

Box-Level Control/Status

C3_MSR_PMON_BOX_CTL 0x0D64 32 CBo 3 PMON Box-Wide Control

MSR

Address

Size

(bits)

Description

CBo 4 PMON Registers

Generic Counters

C4_MSR_PMON_CTR3 0x0D99 64 CBo 4 PMON Counter 3

C4_MSR_PMON_CTR2 0x0D98 64 CBo 4 PMON Counter 2

C4_MSR_PMON_CTR1 0x0D97 64 CBo 4 PMON Counter 1

C4_MSR_PMON_CTR0 0x0D96 64 CBo 4 PMON Counter 0

Box-Level Filter

C4_MSR_PMON_BOX_FILTER 0x0D94 32 CBo 4 PMON Filter

Generic Counter Control

C4_MSR_PMON_CTL3 0x0D93 32 CBo 4 PMON Control for Counter 3

C4_MSR_PMON_CTL2 0x0D92 32 CBo 4 PMON Control for Counter 2

C4_MSR_PMON_CTL1 0x0D91 32 CBo 4 PMON Control for Counter 1

C4_MSR_PMON_CTL0 0x0D90 32 CBo 4 PMON Control for Counter 0

Box-Level Control/Status

C4_MSR_PMON_BOX_CTL 0x0D84 32 CBo 4 PMON Box-Wide Control

Reference Number: 327043-001 25

Page 26

Intel® Xeon® Processor E5-2600 Product Family Uncore Performance Monitoring

Table 2-8. CBo Performance Monitoring MSRs (Sheet 3 of 4)

MSR Name

CBo 5 PMON Registers

Generic Counters

C5_MSR_PMON_CTR3 0x0DB9 64 CBo 5 PMON Counter 3

C5_MSR_PMON_CTR2 0x0DB8 64 CBo 5 PMON Counter 2

C5_MSR_PMON_CTR1 0x0DB7 64 CBo 5 PMON Counter 1

C5_MSR_PMON_CTR0 0x0DB6 64 CBo 5 PMON Counter 0

Box-Level Filter

C5_MSR_PMON_BOX_FILTER 0x0DB4 32 CBo 5 PMON Filter

Generic Counter Control

C5_MSR_PMON_CTL3 0x0DB3 32 CBo 5 PMON Control for Counter 3

C5_MSR_PMON_CTL2 0x0DB2 32 CBo 5 PMON Control for Counter 2

C5_MSR_PMON_CTL1 0x0DB1 32 CBo 5 PMON Control for Counter 1

C5_MSR_PMON_CTL0 0x0DB0 32 CBo 5 PMON Control for Counter 0

Box-Level Control/Status

C5_MSR_PMON_BOX_CTL 0x0DA4 32 CBo 5 PMON Box-Wide Control

CBo 6 PMON Registers

Generic Counters

C6_MSR_PMON_CTR3 0x0DD9 64 CBo 6 PMON Counter 3

C6_MSR_PMON_CTR2 0x0DD8 64 CBo 6 PMON Counter 2

C6_MSR_PMON_CTR1 0x0DD7 64 CBo 6 PMON Counter 1

C6_MSR_PMON_CTR0 0x0DD6 64 CBo 6 PMON Counter 0

Box-Level Filter

C6_MSR_PMON_BOX_FILTER 0x0DD4 32 CBo 6 PMON Filter

Generic Counter Control

C6_MSR_PMON_CTL3 0x0DD3 32 CBo 6 PMON Control for Counter 3

C6_MSR_PMON_CTL2 0x0DD2 32 CBo 6 PMON Control for Counter 2

C6_MSR_PMON_CTL1 0x0DD1 32 CBo 6 PMON Control for Counter 1

C6_MSR_PMON_CTL0 0x0DD0 32 CBo 6 PMON Control for Counter 0

Box-Level Control/Status

C6_MSR_PMON_BOX_CTL 0x0DC4 32 CBo 6 PMON Box-Wide Control

MSR

Address

Size

(bits)

Description

CBo 7 PMON Registers

Generic Counters

C7_MSR_PMON_CTR3 0x0DF9 64 CBo 7 PMON Counter 3

C7_MSR_PMON_CTR2 0x0DF8 64 CBo 7 PMON Counter 2

C7_MSR_PMON_CTR1 0x0DF7 64 CBo 7 PMON Counter 1

C7_MSR_PMON_CTR0 0x0DF6 64 CBo 7 PMON Counter 0

Box-Level Filter

C7_MSR_PMON_BOX_FILTER 0x0DF4 32 CBo 7 PMON Filter

Generic Counter Control

26 Reference Number: 327043-001

Page 27

Intel® Xeon® Processor E5-2600 Product Family Uncore Performance Monitoring

Table 2-8. CBo Performance Moni toring MSRs (Sheet 4 of 4)

MSR Name

C7_MSR_PMON_CTL3 0x0DF3 32 CBo 7 PMON Control for Counter 3

C7_MSR_PMON_CTL2 0x0DF2 32 CBo 7 PMON Control for Counter 2

C7_MSR_PMON_CTL1 0x0DF1 32 CBo 7 PMON Control for Counter 1

C7_MSR_PMON_CTL0 0x0DF0 32 CBo 7 PMON Control for Counter 0

Box-Level Control/Status

C7_MSR_PMON_BOX_CTL 0x0DE4 32 CBo 7 PMON Box-Wide Control

MSR

Address

Size

(bits)

Description
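The addresses in Table 2-8 follow a regular pattern: each CBo's registers sit at a fixed offset from 0x0D00 plus 0x20 per CBo instance. The sketch below computes an address from that pattern; the base, stride, and offsets are read off the table, while the helper name `cbo_msr` is hypothetical.

```python
CBO_MSR_BASE = 0x0D00
CBO_MSR_STRIDE = 0x20  # address distance between consecutive CBo instances

CBO_OFFSETS = {
    "BOX_CTL": 0x04,
    "CTL0": 0x10, "CTL1": 0x11, "CTL2": 0x12, "CTL3": 0x13,
    "BOX_FILTER": 0x14,
    "CTR0": 0x16, "CTR1": 0x17, "CTR2": 0x18, "CTR3": 0x19,
}

def cbo_msr(cbo, register):
    """MSR address of a Cn_MSR_PMON_* register for CBo instance 0-7."""
    if not 0 <= cbo <= 7:
        raise ValueError("this guide lists CBo instances 0 through 7")
    return CBO_MSR_BASE + cbo * CBO_MSR_STRIDE + CBO_OFFSETS[register]

print(hex(cbo_msr(5, "BOX_FILTER")))
```

A loop over `range(8)` with this helper can program the same event on every CBo without hard-coding eighty addresses.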

2.3.3.1 CBo Box Level PMON State

The following registers represent the state governing all box-level PMUs in the CBo.

In the case of the CBo, the Cn_MSR_PMON_BOX_CTL register governs what happens when a freeze

signal is received (.frz_en). It also provides the ability to manually freeze the counters in the box

(.frz) and reset the generic state (.rst_ctrs and .rst_ctrl).

Table 2-9. Cn_MSR_PMON_BOX_CTL Register – Field Definitions

| Field | Bits | Attr | HW Reset Val | Description |
| --- | --- | --- | --- | --- |
| rsv | 31:18 | RV | 0 | Reserved (?) |
| rsv | 17 | RV | 0 | Reserved; SW must write to 0 else behavior is undefined. |
| frz_en | 16 | WO | 0 | Freeze Enable. If set to 1 and a freeze signal is received, the counters will be stopped or ‘frozen’, else the freeze signal will be ignored. |
| rsv | 15:9 | RV | 0 | Reserved (?) |
| frz | 8 | WO | 0 | Freeze. If set to 1 and the .frz_en is 1, the counters in this box will be frozen. |
| rsv | 7:2 | RV | 0 | Reserved (?) |
| rst_ctrs | 1 | WO | 0 | Reset Counters. When set to 1, the Counter Registers will be reset to 0. |
| rst_ctrl | 0 | WO | 0 | Reset Control. When set to 1, the Counter Control Registers will be reset to 0. |

2.3.3.2 CBo PMON state - Counter/Control Pairs

The following table defines the layout of the CBo performance monitor control registers. The main

task of these configuration registers is to select the event to be monitored by their respective data

counter (.ev_sel, .umask). Additional control bits are provided to shape the incoming events (e.g.

.invert, .edge_det, .thresh) as well as provide additional functionality for monitoring software (.rst).

Table 2-10. Cn_MSR_PMON_CTL{3-0} Register – Field Definitions

| Field | Bits | Attr | HW Reset Val | Description |
| --- | --- | --- | --- | --- |
| thresh | 31:24 | RW-V | 0 | Threshold used in counter comparison. |
| invert | 23 | RW-V | 0 | Invert comparison against Threshold. 0 - comparison will be ‘is event increment >= threshold?’. 1 - comparison is inverted - ‘is event increment < threshold?’. NOTE: .invert is in series following .thresh. Due to this, the .thresh field must be set to a non-0 value. For events that increment by no more than 1 per cycle, set .thresh to 0x1. Also, if .edge_det is set to 1, the counter will increment when a 1 to 0 transition (i.e. falling edge) is detected. |
| en | 22 | RW-V | 0 | Local Counter Enable. |
| rsv | 21:20 | RV | 0 | Reserved; SW must write to 0 else behavior is undefined. |
| tid_en | 19 | RW-V | 0 | TID Filter Enable |
| edge_det | 18 | RW-V | 0 | When set to 1, rather than measuring the event in each cycle it is active, the corresponding counter will increment when a 0 to 1 transition (i.e. rising edge) is detected. When 0, the counter will increment in each cycle that the event is asserted. NOTE: .edge_det is in series following .thresh. Due to this, the .thresh field must be set to a non-0 value. For events that increment by no more than 1 per cycle, set .thresh to 0x1. |
| rst | 17 | WO | 0 | When set to 1, the corresponding counter will be cleared to 0. |
| rsv | 16 | RV | 0 | Reserved. SW must write to 0 else behavior is undefined. |
| umask | 15:8 | RW-V | 0 | Select subevents to be counted within the selected event. |
| ev_sel | 7:0 | RW-V | 0 | Select event to be counted. |
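The interaction of .thresh, .invert, and .edge_det can be illustrated with a small software model that walks a list of per-cycle event increments. This is a sketch of the behavior as described in the field definitions, not a hardware-accurate model. Two points are assumptions: with .thresh at 0 the counter simply accumulates the raw increments, and the comparator output is treated as 0 before the first cycle.

```python
def simulate_counter(increments, thresh=0, invert=False, edge_det=False):
    """Model one counter over a sequence of per-cycle event increments."""
    if thresh == 0:
        if invert or edge_det:
            raise ValueError(".thresh must be non-zero with .invert/.edge_det")
        return sum(increments)  # assumption: raw accumulation
    count = 0
    previous = False  # assumption: comparator output starts at 0
    for inc in increments:
        active = inc >= thresh        # 'is event increment >= threshold?'
        if invert:
            active = not active       # 'is event increment < threshold?'
        if edge_det:
            count += int(active and not previous)  # count 0 -> 1 transitions
        else:
            count += int(active)      # count every cycle the condition holds
        previous = active
    return count

occupancy = [0, 3, 8, 8, 2, 0, 9]  # made-up per-cycle queue occupancy
print(simulate_counter(occupancy, thresh=1))
print(simulate_counter(occupancy, thresh=1, edge_det=True))
```

Because .invert is applied before edge detection in this model, a rising edge of the inverted comparison is a falling edge of the original one, which matches the note in the .invert description.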

The CBo performance monitor data registers are 44 bits wide. Should a counter overflow (a carry out from bit 43), the counter will wrap and continue to collect events. If accessible, software can continuously read the data registers without disabling event collection.

Table 2-11. Cn_MSR_PMON_CTR{3-0} Register – Field Definitions

| Field | Bits | Attr | HW Reset Val | Description |
| --- | --- | --- | --- | --- |
| rsv | 63:44 | RV | 0 | Reserved (?) |
| event_count | 43:0 | RW-V | 0 | 44-bit performance event counter |

2.3.3.3 CBo Filter Register (Cn_MSR_PMON_BOX_FILTER)

In addition to generic event counting, each CBo provides a MATCH register that allows a user to filter

various traffic as it applies to specific events (see Event Section for more information). LLC_LOOKUP

may be filtered by the cacheline state, QPI_CREDITS may be filtered by link while TOR_INSERTS and

TOR_OCCUPANCY may be filtered by the opcode of the queued request as well as the corresponding

NodeID.

Any of the CBo events may be filtered by Thread/Core-ID. To do so, the control register’s .tid_en bit

must be set to 1 and the tid field in the FILTER register filled out.


Note: Not all transactions can be associated with a specific thread. For example, when a

snoop triggers a WB, it does not have an associated thread. Transactions that are

associated with PCIe will come from “0x1E” (b11110).

Note: Only one of these filtering criteria may be applied at a time.

Table 2-12. Cn_MSR_PMON_BOX_FILTER Register – Field Definitions

| Field | Bits | Attr | HW Reset Val | Description |
| --- | --- | --- | --- | --- |
| opc (7b IDI Opcode? w/top 2b 0x3) | 31:23 | RW | 0 | Match on Opcode (see Table 2-13, “Opcode Match by IDI Packet Type for Cn_MSR_PMON_BOX_FILTER.opc”). NOTE: Only tracks opcodes that come from the IRQ. It is not possible to track snoops (from IPQ) or other transactions from the ISMQ. |
| state | 22:18 | RW | 0 | Select state to monitor for LLC_LOOKUP event. Setting multiple bits in this field will allow a user to track multiple states. b1xxxx - ‘F’ state. bx1xxx - ‘M’ state. bxx1xx - ‘E’ state. bxxx1x - ‘S’ state. bxxxx1 - ‘I’ state. |
| nid | 17:10 | 0 | 0 | Match on Target NodeID. NID is a mask filter with each bit representing a different Node in the system. 0x01 would filter on NID 0, 0x2 would filter on NID 1, etc. |
| rsv | 9:8 | RV | 0 | Reserved (?) |
| rsv | 7:5 | RV | 0 | Reserved (?) |
| tid | 4:0 | 0 | 0 | [3:1] Core-ID. [0] Thread 1/0. When .tid_en is 0, the specified counter will count ALL events. Thread-ID 0xF is reserved for non-associated requests such as: LLC victims, PMSeq, External Snoops. |
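The Cn_MSR_PMON_BOX_FILTER layout can be packed as in the following sketch. The helper name `encode_cbo_filter` and its parameters are hypothetical; the bit positions come from the field definitions above. The function only packs bits and does not check the guide's restrictions on which filtering criteria may be applied together.

```python
# State bits within the 5-bit state field (bits 22:18).
STATE_BITS = {"F": 0x10, "M": 0x08, "E": 0x04, "S": 0x02, "I": 0x01}

def encode_cbo_filter(opc=0, states="", nid_mask=0, core_id=0, thread=0):
    """Pack opc (31:23), state (22:18), nid (17:10) and tid (4:0)."""
    state = 0
    for letter in states:
        state |= STATE_BITS[letter]
    tid = ((core_id & 0x7) << 1) | (thread & 0x1)  # [3:1] Core-ID, [0] Thread
    return (((opc & 0x1FF) << 23) | (state << 18)
            | ((nid_mask & 0xFF) << 10) | tid)

# Opcode 0x182 (DRd, Demand Data Read) on NID 0 (nid mask bit 0).
print(hex(encode_cbo_filter(opc=0x182, nid_mask=0x01)))
```

The tid field only takes effect when the corresponding control register's .tid_en bit is set to 1.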

Refer to Table 2-144, “Opcodes (Alphabetical Listing)” for definitions of the opcodes found in the

following table.

Table 2-13. Opcode Match by IDI Packet Type for Cn_MSR_PMON_BOX_FILTER.opc

| opc Value | Opcode | Defn |
| --- | --- | --- |
| 0x180 | RFO | Demand Data RFO |
| 0x181 | CRd | Demand Code Read |
| 0x182 | DRd | Demand Data Read |
| 0x187 | PRd | Partial Reads (UC) |
| 0x18C | WCiLF | Streaming Store - Full |
| 0x18D | WCiL | Streaming Store - Partial |
| 0x190 | PrefRFO | Prefetch RFO into LLC but don’t pass to L2. Includes Hints |
| 0x191 | PrefCode | Prefetch Code into LLC but don’t pass to L2. Includes Hints |
| 0x192 | PrefData | Prefetch Data into LLC but don’t pass to L2. Includes Hints |
| 0x194 | PCIWiLF | PCIe Write (non-allocating) |
| 0x195 | PCIPRd | PCIe UC Read |
| 0x19C | PCIItoM | PCIe Write (allocating) |
| 0x19E | PCIRdCur | PCIe read current |
| 0x1C4 | WbMtoI | Request writeback Modified invalidate line |
| 0x1C5 | WbMtoE | Request writeback Modified set to Exclusive |
| 0x1C8 | ItoM | Request Invalidate Line |
| 0x1E4 | PCINSRd | PCIe Non-Snoop Read |
| 0x1E5 | PCINSWr | PCIe Non-Snoop Write (partial) |
| 0x1E6 | PCINSWrF | PCIe Non-Snoop Write (full) |

2.3.4 CBo Performance Monitoring Events

2.3.4.1 An Overview:

The performance monitoring events within the CBo include all events internal to the LLC as well as events which track ring related activity at the CBo/Core ring stops.

CBo performance monitoring events can be used to track LLC access rates, LLC hit/miss rates, LLC eviction and fill rates, and to detect evidence of back pressure on the LLC pipelines. In addition, the CBo has performance monitoring events for tracking MESI state transitions that occur as a result of data sharing across sockets in a multi-socket system. Finally, there are events in the CBo for tracking ring traffic at the CBo/Core sink inject points.

Every event in the CBo is from the point of view of the LLC and is not associated with any specific core, since all cores in the socket send their LLC transactions to all CBos in the socket. However, the PMON logic in the CBo provides a thread-id field in the Cn_MSR_PMON_BOX_FILTER register which can be applied to the CBo events to obtain the interactions between specific cores and threads.

There are separate sets of counters for each CBo instance. For any event, to get an aggregate count of that event for the entire LLC, the counts across the CBo instances must be added together. The counts can be averaged across the CBo instances to get a view of the typical count of an event from the perspective of the individual CBos. Individual per-CBo deviations from the average can be used to identify hot-spotting across the CBos or other evidence of non-uniformity in LLC behavior across the CBos. Such hot-spotting should be rare, though repetitive polling on a fixed physical address is one obvious example of a case where an analysis of the deviations across the CBos would indicate hot-spotting.
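The aggregation described above (sum across CBos for an LLC-wide count, average for a typical per-CBo view, deviations to spot non-uniformity) might look like the following sketch. The function names, the sample counts and the 1.5x threshold are all illustrative assumptions; the guide does not define a specific hot-spot criterion.

```python
def summarize_cbo_counts(per_cbo_counts):
    """Return (total, average, deviations) for one event across CBo instances."""
    total = sum(per_cbo_counts)                # aggregate count for the entire LLC
    average = total / len(per_cbo_counts)      # typical per-CBo count
    deviations = [count - average for count in per_cbo_counts]
    return total, average, deviations


def hot_spots(per_cbo_counts, ratio=1.5):
    """Return indices of CBos whose count exceeds ratio * average.

    The ratio is an arbitrary illustrative threshold, not a documented value.
    """
    _, average, _ = summarize_cbo_counts(per_cbo_counts)
    return [i for i, count in enumerate(per_cbo_counts)
            if average > 0 and count > ratio * average]


# Hypothetical counts of one event read from eight CBo instances.
counts = [1000, 1040, 980, 4000, 1010, 990, 1020, 960]
total, average, deviations = summarize_cbo_counts(counts)
print(total)              # 11000
print(average)            # 1375.0
print(hot_spots(counts))  # [3]
```

In this made-up sample, CBo 3 stands well above the average, which is the kind of deviation the text associates with repeated accesses to a fixed physical address.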

2.3.4.2 Acronyms frequently used in CBo Events:

The Rings:

AD (Address) Ring - Core Read/Write Requests and Intel QPI Snoops. Carries Intel QPI requests and snoop responses from C to Intel® QPI.

BL (Block or Data) Ring - Data == 2 transfers for 1 cache line


AK (Acknowledge) Ring - Acknowledges Intel® QPI to CBo and CBo to Core. Carries snoop responses from Core to CBo.

IV (Invalidate) Ring - CBo Snoop requests of core caches

Internal CBo Queues:

IRQ - Ingress Request Queue on AD Ring. Associated with requests from core.

IPQ - Ingress Probe Queue on AD Ring. Associated with snoops from Intel® QPI LL.

ISMQ - Ingress Subsequent Messages (response queue). Associated with message responses to ingress requests (e.g. data responses, Intel QPI complete messages, core snoop response messages and GO reset queue).

TOR - Table Of Requests. Tracks pending CBo transactions.

RxR (aka IGR) - “Receive from Ring” referring to Ingress (requests from the Cores) queues.

TxR (aka EGR) - “Transmit to Ring” referring to Egress (requests headed for the Ring) queues.

2.3.4.3 The Queues:

There are several internal occupancy queue counters, each of which is 5 bits wide and dedicated to its queue: IRQ, IPQ, ISMQ, QPI_IGR, IGR, EGR and the TOR.

2.3.5 CBo Events Ordered By Code

The following table summarizes the directly measured CBO Box events.

Table 2-14. Performance Monitor Events for CBO

Symbol Name         Event Code  Ctrs  Max Inc/Cyc  Description
CLOCKTICKS          0x00        0-3   1            Uncore Clocks
TxR_INSERTS         0x02        0-1   1            Egress Allocations
TxR_ADS_USED        0x04        0-1   1
RING_BOUNCES        0x05        0-1   1            Number of LLC responses that bounced on the Ring.
RING_SRC_THRTL      0x07        0-1   1
RxR_OCCUPANCY       0x11        0     20           Ingress Occupancy
RxR_EXT_STARVED     0x12        0-1   1            Ingress Arbiter Blocking Cycles
RxR_INSERTS         0x13        0-1   1            Ingress Allocations
RING_AD_USED        0x1B        2-3   1            AD Ring In Use
RING_AK_USED        0x1C        2-3   1            AK Ring In Use
RING_BL_USED        0x1D        2-3   1            BL Ring in Use
RING_IV_USED        0x1E        2-3   1            IV Ring in Use
COUNTER0_OCCUPANCY  0x1F        1-3   20           Counter 0 Occupancy
ISMQ_DRD_MISS_OCC   0x21        0-1   20
RxR_IPQ_RETRY       0x31        0-1   1            Probe Queue Retries
RxR_IRQ_RETRY       0x32        0-1   1            Ingress Request Queue Rejects
RxR_ISMQ_RETRY      0x33        0-1   1            ISMQ Retries
LLC_LOOKUP          0x34        0-1   1            Cache Lookups
TOR_INSERTS         0x35        0-1   1            TOR Inserts
TOR_OCCUPANCY       0x36        0     20           TOR Occupancy
LLC_VICTIMS         0x37        0-1   1            Lines Victimized
MISC                0x39        0-1   1            Cbo Misc
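The Ctrs column in Table 2-14 restricts which of the four CBo counters can host each event. A small sketch of checking a set of events against those restrictions is shown below. The dictionary transcribes only a few rows of the table, and `assign_counters` is a hypothetical helper that tries counter assignments by brute force; it is not a description of how any real tool schedules events.

```python
from itertools import permutations

# Allowed counter indices per event, transcribed from the Ctrs column
# of Table 2-14 (subset only).
ALLOWED_COUNTERS = {
    "CLOCKTICKS": {0, 1, 2, 3},
    "RxR_OCCUPANCY": {0},
    "RxR_INSERTS": {0, 1},
    "RING_AD_USED": {2, 3},
    "RING_BL_USED": {2, 3},
    "COUNTER0_OCCUPANCY": {1, 2, 3},
    "TOR_INSERTS": {0, 1},
    "TOR_OCCUPANCY": {0},
}


def assign_counters(events):
    """Return {event: counter} honoring the Ctrs restrictions, or None.

    Assumes each event name appears at most once in `events`.
    """
    for candidate in permutations(range(4), len(events)):
        if all(ctr in ALLOWED_COUNTERS[evt]
               for evt, ctr in zip(events, candidate)):
            return dict(zip(events, candidate))
    return None


print(assign_counters(["RxR_OCCUPANCY", "COUNTER0_OCCUPANCY"]))
# {'RxR_OCCUPANCY': 0, 'COUNTER0_OCCUPANCY': 1}
print(assign_counters(["RxR_OCCUPANCY", "TOR_OCCUPANCY"]))
# None (both events are restricted to counter 0)
```

The second call shows why the restrictions matter: two occupancy events that both require counter 0 cannot be collected in the same CBo at the same time.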

2.3.6 CBO Box Common Metrics (Derived Events)

The following table summarizes metrics commonly calculated from CBO Box events.

Table 2-15. Metrics Derived from CBO Events (Sheet 1 of 3)

AVG_INGRESS_DEPTH:
Definition: Average Depth of the Ingress Queue through the sample interval
Equation: RxR_OCCUPANCY.IRQ / SAMPLE_INTERVAL

AVG_INGRESS_LATENCY:
Definition: Average Latency of Requests through the Ingress Queue in Uncore Clocks
Equation: RxR_OCCUPANCY.IRQ / RxR_INSERTS.IRQ

AVG_INGRESS_LATENCY_WHEN_NE:
Definition: Average Latency of Requests through the Ingress Queue in Uncore Clocks when Ingress Queue has at least one entry
Equation: RxR_OCCUPANCY.IRQ / COUNTER0_OCCUPANCY{edge_det,thresh=0x1}

AVG_TOR_DRDS_MISS_WHEN_NE:
Definition: Average Number of Data Read Entries that Miss the LLC when the TOR is not empty.
Equation: (TOR_OCCUPANCY.MISS_OPCODE / COUNTER0_OCCUPANCY{edge_det,thresh=0x1}) with:Cn_MSR_PMON_BOX_FILTER.opc=0x182

AVG_TOR_DRDS_WHEN_NE:
Definition: Average Number of Data Read Entries when the TOR is not empty.
Equation: (TOR_OCCUPANCY.OPCODE / COUNTER0_OCCUPANCY{edge_det,thresh=0x1}) with:Cn_MSR_PMON_BOX_FILTER.opc=0x182

AVG_TOR_DRD_HIT_LATENCY:
Definition: Average Latency of Data Reads through the TOR that hit the LLC
Equation: ((TOR_OCCUPANCY.OPCODE - TOR_OCCUPANCY.MISS_OPCODE) / (TOR_INSERTS.OPCODE - TOR_INSERTS.MISS_OPCODE)) with:Cn_MSR_PMON_BOX_FILTER.opc=0x182

AVG_TOR_DRD_LATENCY:
Definition: Average Latency of Data Read Entries making their way through the TOR
Equation: (TOR_OCCUPANCY.OPCODE / TOR_INSERTS.OPCODE) with:Cn_MSR_PMON_BOX_FILTER.opc=0x182

AVG_TOR_DRD_LOC_MISS_LATENCY:
Definition: Average Latency of Data Reads through the TOR that miss the LLC and were satisfied by Locally HOMed Memory. Only valid at processor level == don't add counts across Cbos. NOTE: Count imperfect. Will be polluted by remote hits where memory's home node is local memory.
Equation: (TOR_OCCUPANCY.MISS_OPCODE / TOR_INSERTS.MISS_OPCODE) with:Cn_MSR_PMON_BOX_FILTER.{opc,nid}={0x182,my_node}

AVG_TOR_DRD_MISS_LATENCY:
Definition: Average Latency of Data Reads through the TOR that miss the LLC
Equation: (TOR_OCCUPANCY.MISS_OPCODE / TOR_INSERTS.MISS_OPCODE) with:Cn_MSR_PMON_BOX_FILTER.opc=0x182
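Several of the equations in this sheet are occupancy-over-inserts ratios, which can be computed from raw counter readings as in the sketch below. The function names and sample values are hypothetical. SAMPLE_INTERVAL is assumed here to be expressed in uncore clocks, and the hit-latency helper assumes hits are obtained by subtracting the MISS_OPCODE counts from the OPCODE counts (both collected with the opc filter set to 0x182, DRd).

```python
def ratio(numerator, denominator):
    """Divide two counter values, returning 0.0 when the denominator is zero."""
    return numerator / denominator if denominator else 0.0


def avg_ingress_depth(rxr_occupancy_irq, sample_interval):
    # AVG_INGRESS_DEPTH = RxR_OCCUPANCY.IRQ / SAMPLE_INTERVAL
    return ratio(rxr_occupancy_irq, sample_interval)


def avg_ingress_latency(rxr_occupancy_irq, rxr_inserts_irq):
    # AVG_INGRESS_LATENCY = RxR_OCCUPANCY.IRQ / RxR_INSERTS.IRQ
    return ratio(rxr_occupancy_irq, rxr_inserts_irq)


def avg_tor_drd_latency(occ_opcode, ins_opcode):
    # AVG_TOR_DRD_LATENCY = TOR_OCCUPANCY.OPCODE / TOR_INSERTS.OPCODE
    return ratio(occ_opcode, ins_opcode)


def avg_tor_drd_miss_latency(occ_miss_opcode, ins_miss_opcode):
    # AVG_TOR_DRD_MISS_LATENCY = TOR_OCCUPANCY.MISS_OPCODE / TOR_INSERTS.MISS_OPCODE
    return ratio(occ_miss_opcode, ins_miss_opcode)


def avg_tor_drd_hit_latency(occ_opcode, occ_miss_opcode,
                            ins_opcode, ins_miss_opcode):
    # Hits are taken as (all DRd entries) minus (DRd entries that missed).
    return ratio(occ_opcode - occ_miss_opcode, ins_opcode - ins_miss_opcode)


# Hypothetical raw counts for one sample interval.
print(avg_ingress_latency(6000, 1500))                    # 4.0
print(avg_tor_drd_latency(50000, 1000))                   # 50.0
print(avg_tor_drd_miss_latency(38000, 200))               # 190.0
print(avg_tor_drd_hit_latency(50000, 38000, 1000, 200))   # 15.0
```

Returning 0.0 for an empty denominator is a convenience choice for idle intervals; a caller may prefer to report such samples as undefined instead.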


Table 2-15. Metrics Derived from CBO Events (Sheet 2 of 3)

AVG_TOR_DRD_REM_MISS_LATENCY:
Definition: Average Latency of Data Reads through the TOR that miss the LLC and were satisfied by a Remote cache or Remote Memory. Only valid at processor level == don't add counts across Cbos.
Equation: (TOR_OCCUPANCY.MISS_OPCODE / TOR_INSERTS.MISS_OPCODE) with:Cn_MSR_PMON_BOX_FILTER.{opc,nid}={0x182,other_nodes}

CYC_INGRESS_BLOCKED:
Definition: Cycles the Ingress Request Queue arbiter was Blocked
Equation: RxR_EXT_STARVED.IRQ / SAMPLE_INTERVAL

CYC_INGRESS_STARVED:
Definition: Cycles the Ingress Request Queue was in Internal Starvation
Equation: RxR_INT_STARVED.IRQ / SAMPLE_INTERVAL

CYC_USED_DNEVEN:
Definition: Cycles Used in the Down direction, Even polarity
Equation: RING_BL_USED.DN_EVEN / SAMPLE_INTERVAL

CYC_USED_DNODD:
Definition: Cycles Used in the Down direction, Odd polarity
Equation: RING_BL_USED.DN_ODD / SAMPLE_INTERVAL

CYC_USED_UPEVEN:
Definition: Cycles Used in the Up direction, Even polarity
Equation: RING_BL_USED.UP_EVEN / SAMPLE_INTERVAL

CYC_USED_UPODD:
Definition: Cycles Used in the Up direction, Odd polarity
Equation: RING_BL_USED.UP_ODD / SAMPLE_INTERVAL

INGRESS_REJ_V_INS:
Definition: Ratio of Ingress Request Entries that were rejected vs. inserted
Equation: RxR_INSERTS.IRQ_REJECTED / RxR_INSERTS.IRQ

LLC_DRD_MISS_PCT:
Definition: LLC Data Read miss ratio
Equation: LLC_LOOKUP.DATA_READ (Cn_MSR_PMON_BOX_FILTER.state=0x1) / LLC_LOOKUP.DATA_READ (Cn_MSR_PMON_BOX_FILTER.state=0x1F)

LLC_DRD_RFO_MISS_TO_LOC_MEM:
Definition: LLC Data Read and RFO misses satisfied by locally HOMed memory. Only valid at processor level == don't add counts across Cbos. NOTE: Count imperfect. Will be polluted by remote hits where memory's home node is local memory.
Equation: (TOR_INSERTS.NID_MISS_OPCODE with:Cn_MSR_PMON_BOX_FILTER.{opc,nid}={0x182,my_node} + TOR_INSERTS.NID_MISS_OPCODE with:Cn_MSR_PMON_BOX_FILTER.{opc,nid}={0x180,my_node}) / (TOR_INSERTS.NID_MISS_OPCODE with:Cn_MSR_PMON_BOX_FILTER.{opc,nid}={0x182,0xF} + TOR_INSERTS.NID_MISS_OPCODE with:Cn_MSR_PMON_BOX_FILTER.{opc,nid}={0x180,0xF})

LLC_DRD_RFO_MISS_TO_REM_MEM:
Definition: LLC Data Read and RFO misses satisfied by a remote cache or remote memory. Only valid at processor level == don't add counts across Cbos.
Equation: (TOR_INSERTS.NID_MISS_OPCODE with:Cn_MSR_PMON_BOX_FILTER.{opc,nid}={0x182,other_nodes} + TOR_INSERTS.NID_MISS_OPCODE with:Cn_MSR_PMON_BOX_FILTER.{opc,nid}={0x180,other_nodes}) / (TOR_INSERTS.NID_MISS_OPCODE with:Cn_MSR_PMON_BOX_FILTER.{opc,nid}={0x182,0xF} + TOR_INSERTS.NID_MISS_OPCODE with:Cn_MSR_PMON_BOX_FILTER.{opc,nid}={0x180,0xF})

LLC_MPI:
Definition: LLC Misses Per Instruction (code, read, RFO and prefetches)

LLC_PCIE_DATA_BYTES:
Definition: LLC Miss Data from PCIe in Number of Bytes

LLC_RFO_MISS_PCT:
Definition: LLC RFO Miss Ratio

MEM_WB_BYTES:
Definition: Data written back to memory in Number of Bytes

Equation

LLC_LOOKUP.ANY (Cn_MSR_PMON_BOX_FILTER.state=0x1) /