Thecus i8500

iSCSI RAID SYSTEM

User Manual

Preface

About this manual

This manual introduces the i8500 and explains how to operate the disk array
system. The information in this manual has been reviewed for accuracy, but it
does not constitute a product warranty, because environments, operating
systems, and settings vary. Information and specifications are subject to
change without notice. For updates, please visit www.thecus.com or ask your
Thecus contact.

Copyright © 2009, Thecus Technology, Corp. All rights reserved.

Thank you for using Thecus Technology, Corp. products. If you have any
questions, please e-mail “sales@thecus.com”. We will answer as soon as
possible.

The recommended RAM for the i8500 is DDR-333/400, 512MB or more (factory
default: DDR-400 1GB). Please refer to the certification list in Appendix A.

Table of Contents

Chapter 1 RAID introduction..............................................5

1.1 Features........................................................................... 5

1.2 Terminology ..................................................................... 6

1.3 RAID levels ...................................................................... 8

1.4 Volume relationship diagram ........................................... 9

Chapter 2 Getting started.............................................10

2.1 Before starting................................................................ 10

2.2 iSCSI introduction .......................................................... 10

2.3 Management methods ................................................... 12

2.3.1 Web GUI hierarchy...............................................................................12

2.3.2 Remote control – secure shell .............................................................. 13

2.4 Enclosure ....................................................................... 13

2.4.1 LCM...................................................................................................... 13

2.4.2 System buzzer......................................................................................16

2.4.3 LED ......................................................................................................16

Chapter 3 Web GUI guideline.......................................17

3.1 Web GUI hierarchy ........................................................ 17

3.2 Login .............................................................................. 18

3.3 Volume creation wizard.................................................. 19

3.4 System configuration ..................................................... 20

3.4.1 System setting......................................................................................20

3.4.2 IP address ............................................................................................21

3.4.3 Login setting ......................................................................................... 22

3.4.4 Mail setting ...........................................................................................22

3.4.5 Notification setting ................................................................................ 23

3.5 iSCSI configuration ........................................................ 25

3.5.1 Entity property ......................................................................................25

3.5.2 NIC ....................................................................................................... 26

3.5.3 Node.....................................................................................................27

3.5.4 Session.................................................................................................28

3.5.5 CHAP account......................................................................................28

3.6 Volume configuration ..................................................... 29

3.6.1 Volume create wizard...........................................................................29

3.6.2 Physical disk......................................................................................... 32

3.6.3 RAID group........................................................................................... 35

3.6.4 Virtual disk............................................................................................38

3.6.5 Snapshot ..............................................................................................42

3.6.6 Logical unit ...........................................................................................44

3.6.7 Example ...............................................................................................45

3.7 Enclosure management ................................................. 50

3.7.1 SES configuration................................................................................. 50

3.7.2 Hardware monitor.................................................................................51

3.7.3 Hard drive S.M.A.R.T. support .............................................................52

3.7.4 UPS......................................................................................................53

3.8 System maintenance ..................................................... 54

3.8.1 System information............................................................................... 54

3.8.2 Upgrade................................................................................................54

3.8.3 Reset to factory default.........................................................................55

3.8.4 Import and export ................................................................................. 56

3.8.5 Event log ..............................................................................................56

3.8.6 Reboot and shutdown...........................................................................57

3.9 Logout ............................................................................ 57

Chapter 4 Advanced operation....................................58

4.1 Rebuild........................................................................... 58

4.2 RG migration.................................................................. 60

4.3 VD Extension ................................................................. 62

4.4 Snapshot / Rollback ....................................................... 62

4.4.1 Create snapshot volume.......................................................................63

4.4.2 Auto snapshot ......................................................................................65

4.4.3 Rollback................................................................................................66

4.5 Disk roaming .................................................................. 67

4.6 Support Microsoft MPIO and MC/S................................ 67

Appendix............................................................................69

A. Certification list............................................................... 69

B. Event notifications............................................................. 71

C. Known issues................................................................. 76

D. Microsoft iSCSI Initiator ................................................. 77

E. Installation steps for large volume (TB).......................... 79

F. MPIO and MC/S setup instructions................................ 82

G. BBM (Battery Backup Module) inspect steps................. 84

G. BBM(Battery Backup Module) inspect steps -(Optional) .. 85

H. M/B Diagram .................................................................. 87

Chapter 1 RAID introduction

1.1 Features

i8500 controller features:

• Front-end 2 GbE NIC ports.

• iSCSI jumbo frame support.

• RAID 6, 60 ready.

• SATA II drives backward-compatible.

• One logical volume can be shared by as many as 8 hosts and 32

connections per system.

• Host access control.

• Configurable N-way mirror for high data protection.

• On-line volume migration with no system down-time.

• HDD S.M.A.R.T. enabled for SATA drives.

With proper configuration, the i8500 can provide non-stop service with a high
degree of fault tolerance through its RAID technology and advanced array
management features. The controller features differ slightly between the
backplane solution and the cable solution. For more details, please contact
your sales representative or e-mail “sales@thecus.com”.

The i8500 connects to the host system through an iSCSI interface and can be
configured to any of the supported RAID levels. It provides reliable data
protection for servers, including RAID 6. RAID 6 tolerates two simultaneous
HDD failures without affecting the existing data, which can be recovered from
the remaining data and parity drives.

A snapshot is a fully usable, point-in-time copy of a defined collection of
data: an image of the data as it appeared at that moment. Snapshots provide
consistent, instant copies of data volumes without any system downtime. The
system can keep up to 32 snapshots for all data volumes. The rollback feature
restores the data of a previous snapshot while the volume remains available
for further access; reads and writes continue as usual, with no impact on end
users. Snapshots are taken on the target side by the i8500 itself, so they
consume no host CPU time and the server stays dedicated to its applications.
Snapshot copies can be taken manually or on a schedule, every hour or every
day, depending on how often the data is modified.

i8500 is the most cost-effective disk array controller with completely integrated

high-performance and data-protection capabilities which meet or exceed the

highest industry standards, and the best data solution for small/medium

business (SMB) users.

Caution

Snapshot / rollback features need 512MB RAM or more.

Please refer to RAM certification list in Appendix A for more

detail.

1.2 Terminology

The document uses the following terms:

RAID: Redundant Array of Independent Disks. Different RAID levels offer
different degrees of data protection, data availability, and performance to
the host environment.

PD: Physical Disk. A member disk of one specific RAID group.

RG: RAID Group. A collection of removable media. One RG consists of a set of
VDs and owns one RAID level attribute.

VD: Virtual Disk. Each RG can be divided into several VDs. The VDs from one RG
have the same RAID level, but may have different volume capacity.

CV: Cache Volume. The controller uses onboard memory as cache. All RAM (except
for the part occupied by the controller) can be used as cache.

LUN: Logical Unit Number. A unique identifier used to differentiate among
separate devices (each one is a logical unit).

GUI: Graphic User Interface.

RAID width, RAID copy, RAID row (RAID cell in one row): RAID width, copy and
row are used to describe one RG. E.g.:
1. One 4-disk RAID 0 volume: RAID width=4; RAID copy=1; RAID row=1.
2. One 3-way mirroring volume: RAID width=1; RAID copy=3; RAID row=1.
3. One RAID 10 volume over three 4-disk RAID 1 volumes: RAID width=1; RAID
   copy=4; RAID row=3.

WT: Write-Through cache-write policy. A caching technique in which the
completion of a write request is not signaled until the data is safely stored
on non-volatile media. Data is kept synchronized between the data cache and
the accessed physical disks.

WB: Write-Back cache-write policy. A caching technique in which the completion
of a write request is signaled as soon as the data is in cache, and the actual
write to non-volatile media occurs later. It speeds up system write
performance, at the risk that data may be inconsistent between the data cache
and the physical disks for a short time.

RO: Read-Only. Sets the volume to be read-only.

DS: Dedicated Spare disks. The spare disks are used by one specific RG only.
Other RGs cannot use these dedicated spare disks for rebuilding.

GS: Global Spare disks. GS disks are shared for rebuilding. An RG that needs
a spare disk for rebuilding takes one from the common spare disk pool.

DC: Dedicated Cache.

GC: Global Cache.

DG: DeGraded mode. Not all of the array’s member disks are functioning, but
the array is able to respond to application read and write requests to its
virtual disks.

SCSI: Small Computer Systems Interface.

iSCSI: Internet Small Computer Systems Interface.

SATA: Serial Advanced Technology Attachment.

FC: Fibre Channel.

S.M.A.R.T.: Self-Monitoring Analysis and Reporting Technology.

WWN: World Wide Name.

HBA: Host Bus Adapter.

SAF-TE: SCSI Accessed Fault-Tolerant Enclosures.

SES: SCSI Enclosure Services.

NIC: Network Interface Card.

LACP: Link Aggregation Control Protocol.

MPIO: Multi-Path Input/Output.

MC/S: Multiple Connections per Session.

MTU: Maximum Transmission Unit.

CHAP: Challenge Handshake Authentication Protocol. An optional security
mechanism to control access to an iSCSI storage system over the iSCSI data
ports.

iSNS: Internet Storage Name Service.
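The RAID width, copy and row terms can be illustrated with a short Python sketch. It assumes, as one reading of the three examples in the terminology list, that the number of member disks in an RG is width × copy × row; the class name `RaidShape` and the method `member_disks` are hypothetical and not part of any i8500 interface.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RaidShape:
    """Shape of one RG in the manual's width/copy/row terms (illustrative)."""
    width: int  # RAID width
    copy: int   # RAID copy (number of mirrored copies)
    row: int    # RAID row

    def member_disks(self) -> int:
        # Assumption: every position in the width x copy x row grid is one disk.
        return self.width * self.copy * self.row


# The three examples from the terminology list.
raid0_4disk = RaidShape(width=4, copy=1, row=1)
mirror_3way = RaidShape(width=1, copy=3, row=1)
raid10_3x4 = RaidShape(width=1, copy=4, row=3)

for name, shape in [("4-disk RAID 0", raid0_4disk),
                    ("3-way mirror", mirror_3way),
                    ("RAID 10 over three 4-disk RAID 1", raid10_3x4)]:
    print(f"{name}: {shape.member_disks()} member disks")
```

Under this assumption the three examples come out at 4, 3 and 12 disks, which matches the disk counts implied by their descriptions.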

1.3 RAID levels

RAID 0: Disk striping. RAID 0 needs at least one hard drive.

RAID 1: Disk mirroring over two disks. RAID 1 needs at least two hard drives.

N-way mirror: Extension to RAID 1 level. It has N copies of the disk.

RAID 3: Striping with parity on the dedicated disk. RAID 3 needs at least
three hard drives.

RAID 5: Striping with interspersed parity over the member disks. RAID 5 needs
at least three hard drives.

RAID 6: 2-dimensional parity protection over the member disks. RAID 6 needs at
least four hard drives.

RAID 0+1: Mirroring of the member RAID 0 volumes. RAID 0+1 needs at least four
hard drives.

RAID 10: Striping over the member RAID 1 volumes. RAID 10 needs at least four
hard drives.

RAID 30: Striping over the member RAID 3 volumes. RAID 30 needs at least six
hard drives.

RAID 50: Striping over the member RAID 5 volumes. RAID 50 needs at least six
hard drives.

RAID 60: Striping over the member RAID 6 volumes. RAID 60 needs at least eight
hard drives.

JBOD: The abbreviation of “Just a Bunch Of Disks”. JBOD needs at least one
hard drive.
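The minimum drive counts above lend themselves to a simple lookup. The following Python sketch is only an illustration of how a configuration plan could be checked against them; the table `MIN_DISKS` and the function `levels_available` are hypothetical names, and the N-way mirror is left out because the manual gives no minimum for it.

```python
from typing import List

# Minimum member disks per RAID level, taken from section 1.3.
# RAID 5 uses three, the usual minimum for single-parity striping.
MIN_DISKS = {
    "RAID 0": 1, "RAID 1": 2, "RAID 3": 3, "RAID 5": 3, "RAID 6": 4,
    "RAID 0+1": 4, "RAID 10": 4, "RAID 30": 6, "RAID 50": 6, "RAID 60": 8,
    "JBOD": 1,
}


def levels_available(free_disks: int) -> List[str]:
    """Return the RAID levels whose minimum disk count is satisfied."""
    return [level for level, need in MIN_DISKS.items() if free_disks >= need]


print(levels_available(3))
```

A check like this only covers the minimum count; it says nothing about capacity or about any further restrictions the firmware may apply.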

1.4 Volume relationship diagram

This is the volume structure of the i8500. It describes the relationship of
the RAID components. One RG (RAID group) consists of a set of VDs (virtual
disks) and owns one RAID level attribute. Each RG can be divided into several
VDs. The VDs in one RG share the same RAID level, but may have different
volume capacities. Each VD is associated with one specific CV (cache volume)
to execute the data transaction. Each CV can have a different cache memory
size, as set by the user. A LUN (logical unit number) is the unique identifier
that users access through SCSI commands.

Figure 1.4.1 (diagram: LUNs attached to VD 1, VD 2 and a snapshot VD; the VDs
belong to an RG built from PD 1, PD 2, PD 3 and a DS; the global CV and
dedicated CV reside in RAM)

Chapter 2 Getting started

2.1 Before starting

Before starting, prepare the following items.

1. Check the “Certification list” in Appendix A to confirm that the hardware
   configuration is fully supported.
2. Read the latest release notes before upgrading. Release notes accompany
   each firmware release.
3. A server with a NIC or iSCSI HBA.
4. CAT 5e or CAT 6 network cables for the management port and iSCSI data
   ports. CAT 6 cables are recommended for best performance.
5. A storage system configuration plan.
6. Network information for the management and iSCSI data ports. When using
   static IP, prepare the static IP addresses, subnet mask, and default
   gateway.
7. Gigabit LAN switches (recommended), or Gigabit LAN switches with VLAN/LACP
   (optional).
8. CHAP security information, including CHAP username and secret (optional).
9. Set up the hardware connections before powering on the servers and the
   i8500. Connect the console cable, management port cable, and iSCSI data
   port cables in advance.

2.2 iSCSI introduction

iSCSI (Internet SCSI) is a protocol that encapsulates SCSI (Small Computer
System Interface) commands and data in TCP/IP packets, linking storage devices
with servers over common IP infrastructure. iSCSI provides high-performance
SANs over standard IP networks such as a LAN, a WAN, or the Internet.

IP SANs are true SANs (Storage Area Networks) that allow a few servers to
attach to a practically unlimited number of storage volumes by using iSCSI
over TCP/IP networks. IP SANs can scale storage capacity with any type and
brand of storage system. In addition, they can run over any type of network
(Ethernet, Fast Ethernet, Gigabit Ethernet) and any combination of operating
systems (Microsoft Windows, Linux, Solaris, etc.) within the SAN. IP SANs also
include mechanisms for security, data replication, multi-path and high
availability.

A storage protocol such as iSCSI has two ends in the connection: the initiator
and the target. In iSCSI, they are called the iSCSI initiator and the iSCSI
target. The iSCSI initiator requests or initiates all iSCSI communication,
including SCSI operations such as read and write. An initiator is usually
located on the host/server side (either an iSCSI HBA or an iSCSI software
initiator).

The target is the storage device itself, or an appliance that controls and
serves volumes or virtual volumes. The target performs SCSI commands or
bridges them to an attached storage device.

Figure 2.2.1 (diagram: Host 1, an initiator with a NIC, and Host 2, an
initiator with an iSCSI HBA, connect through the IP SAN to iSCSI device 1 and
iSCSI device 2, both targets)

The host side needs an iSCSI initiator, a driver that handles SCSI traffic
over iSCSI. The initiator can be software or hardware (HBA). Please refer to
the certification list of iSCSI HBAs in Appendix A. OS-native initiators and
other software initiators use the standard TCP/IP stack and Ethernet hardware,
while iSCSI HBAs use their own on-board iSCSI and TCP/IP stacks.

Each hardware iSCSI HBA provides its own initiator tool; please refer to the
vendor’s HBA user manual. Microsoft, Linux and Mac provide iSCSI initiator
drivers. The available links are listed below:

1. Link to download the Microsoft iSCSI software initiator:

http://www.microsoft.com/downloads/details.aspx?FamilyID=12cb3c1a-15d6-4585-b385-befd1319f825&DisplayLang=en

Please refer to Appendix D for Microsoft iSCSI initiator installation

procedure.

2. A Linux iSCSI initiator is also available. Different kernels use different
   iSCSI drivers. Please check Appendix A for the iSCSI initiator
   certification list. For the latest Linux iSCSI initiator, visit the
   Open-iSCSI project for the most up-to-date information. The Linux-iSCSI
   (sfnet) and Open-iSCSI projects merged on April 11, 2005.

Open-iSCSI website: http://www.open-iscsi.org/

Open-iSCSI README: http://www.open-iscsi.org/docs/README

Features: http://www.open-iscsi.org/cgi-bin/wiki.pl/Roadmap

Supported kernels:

http://www.open-iscsi.org/cgi-bin/wiki.pl/Supported_Kernels

Google groups:

http://groups.google.com/group/open-iscsi/threads?gvc=2

http://groups.google.com/group/open-iscsi/topics

Open-iSCSI Wiki: http://www.open-iscsi.org/cgi-bin/wiki.pl

3. ATTO iSCSI initiator is available for Mac.

Website: http://www.attotech.com/xtend.html
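For Linux hosts using Open-iSCSI, discovery and login are normally done with the `iscsiadm` command. The Python sketch below only assembles the two command lines so they can be reviewed before being run; the portal address and target IQN are placeholders invented for illustration, not i8500 defaults, and the helper names are hypothetical. Port 3260 is the standard iSCSI target port.

```python
import shlex
from typing import List

ISCSI_PORT = 3260  # standard iSCSI target port


def discovery_cmd(portal_ip: str, port: int = ISCSI_PORT) -> List[str]:
    """Build a SendTargets discovery command for one iSCSI data port."""
    return ["iscsiadm", "-m", "discovery", "-t", "sendtargets",
            "-p", f"{portal_ip}:{port}"]


def login_cmd(target_iqn: str, portal_ip: str,
              port: int = ISCSI_PORT) -> List[str]:
    """Build a login command for a target found by discovery."""
    return ["iscsiadm", "-m", "node", "-T", target_iqn,
            "-p", f"{portal_ip}:{port}", "--login"]


# Placeholder values: use the IP of an iSCSI data port (not the management
# port) and the target name reported by discovery.
portal = "10.0.0.5"
iqn = "iqn.2009-01.com.example:i8500.dev0"

for cmd in (discovery_cmd(portal), login_cmd(iqn, portal)):
    print(" ".join(shlex.quote(part) for part in cmd))
```

The printed lines can be run as root on the host, or passed to `subprocess.run` once the values have been replaced with real ones.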

2.3 Management methods

There are two methods to manage the i8500, described below:

2.3.1 Web GUI hierarchy

The i8500 provides a graphic user interface (GUI) to manage the system. Be
sure to connect the LAN cable. By default, the management port uses a static
IP address, which is displayed on the LCM. Check the LCM for the IP address
first, then open a browser and enter that address.

For example, if the LCM shows:

192.168.1.100
i8500

enter:

http://192.168.1.100

The first time you click any function, a dialog pops up to authenticate the
current user.

Login name: admin

Default password: admin

Alternatively, log in with the read-only account, which can view the
configuration but cannot change settings.

Login name: user

Default password: 1234

2.3.2 Remote control – secure shell

SSH (secure shell) is required for remote login to the controller. SSH client
software is available at the following web sites:

SSHWinClient WWW: http://www.ssh.com/

Putty WWW: http://www.chiark.greenend.org.uk/

Host name: 192.168.1.100 (Please check your DHCP address for this field.)

Login name: admin

Default password: 1234

Tips

i8500 controllers support only SSH for remote control. To use SSH, the IP
address and password are required for login.
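On a host with an OpenSSH client, the login parameters above translate into a single command. The Python sketch below assembles it; the function name `ssh_command` is hypothetical, `-p` is the standard OpenSSH port option, and port 22 is the SSH default, which is an assumption here because the SSH port can be changed in the web GUI.

```python
from typing import List


def ssh_command(host: str, user: str = "admin", port: int = 22) -> List[str]:
    """Build an OpenSSH client command for the controller's management IP."""
    return ["ssh", "-p", str(port), f"{user}@{host}"]


# Example address from this section; replace it with the IP shown on the LCM.
print(" ".join(ssh_command("192.168.1.100")))
```

The client then prompts for the password interactively, so it does not need to appear on the command line.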

2.4 Enclosure

2.4.1 LCM

Four buttons control the LCM (LCD Control Module): ▲ (up), ▼ (down), ESC
(Escape), and ENT (Enter).

After the system boots up, the following screen shows the management port IP
and model name:

192.168.1.100
i8500 ←

Press “ENT” to enter the LCM functions “System Info.”, “Alarm Mute”,
“Reset/Shutdown”, “Quick Install”, “Volume Wizard”, “View IP Setting”,
“Change IP Config” and “Reset to Default”; rotate through them by pressing
▲ (up) and ▼ (down).

When a WARNING or ERROR event occurs (the LCM default filter), the LCM shows
the event log to give users more detail from the front panel.

The following table describes each function.

System Info.: Display system information.

Alarm Mute: Mute the alarm when an error occurs.

Reset/Shutdown: Reset or shut down the controller.

Quick Install: Quick steps to create a volume. Please refer to the next
chapter for the operation in the web UI.

Volume Wizard: Smart steps to create a volume. Please refer to the next
chapter for the operation in the web UI.

View IP Setting: Display the current IP address, subnet mask, and gateway.

Change IP Config: Set the IP address, subnet mask, and gateway. There are 2
options: DHCP (get the IP address from a DHCP server) or static IP.

Reset to Default: Reset the password to the default (admin) and the IP
address to the default static setting:
Default IP address: 192.168.1.100
Default subnet mask: 255.255.255.0
Default gateway: 192.168.1.1
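When planning a static IP configuration, it helps to confirm that the gateway lies on the same subnet as the management IP. The following Python sketch does this with the standard `ipaddress` module; the helper names are hypothetical. It also strips the zero padding that the LCM uses when it displays addresses such as 192.168.001.100, since recent Python versions reject octets with leading zeros.

```python
import ipaddress


def normalize_lcm_ip(text: str) -> str:
    """Drop the zero padding shown on the LCM, e.g. 192.168.001.100."""
    return ".".join(str(int(octet)) for octet in text.split("."))


def gateway_on_subnet(ip: str, netmask: str, gateway: str) -> bool:
    """True when the gateway lies inside the subnet defined by ip/netmask."""
    network = ipaddress.ip_network(f"{ip}/{netmask}", strict=False)
    return ipaddress.ip_address(gateway) in network


# Default values as displayed on the LCM.
ip = normalize_lcm_ip("192.168.001.100")
gw = normalize_lcm_ip("192.168.001.001")
print(ip, gw, gateway_on_subnet(ip, "255.255.255.0", gw))
```

With the factory defaults the check passes; a gateway such as 192.168.2.1 would fail it under the same subnet mask.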

The following is the LCM menu hierarchy.

Main menu (scroll with ▲▼)
  [System Info.]
    [Firmware Version x.x.x]
    [RAM Size xxx MB]
  [Alarm Mute]
    [▲Yes No▼]
  [Reset/Shutdown]
    [Reset]
      [▲Yes No▼]
    [Shutdown]
      [▲Yes No▼]
  [Quick Install]
    RAID 0 / RAID 1 / RAID 3 / RAID 5 / RAID 6 / RAID 0+1, xxx GB
      [Apply The Config]
        [▲Yes No▼]
  [Volume Wizard]
    [Local] RAID 0 / RAID 1 / RAID 3 / RAID 5 / RAID 6 / RAID 0+1
    [JBOD x] ▲▼ RAID 0 / RAID 1 / RAID 3 / RAID 5 / RAID 6 / RAID 0+1
      [Use default algorithm]
        [Volume Size] xxx GB
          Adjust Volume Size
            [Apply The Config]
              [▲Yes No▼]
      [new x disk] ▲▼ xxx GB
        [Apply The Config]
          [▲Yes No▼]
  [View IP Setting]
    [IP Config] [Static IP]
    [IP Address] [192.168.001.100]
    [IP Subnet Mask] [255.255.255.0]
    [IP Gateway] [192.168.001.001]
  [Change IP Config]
    [DHCP]
      [▲Yes No▼]
    [Static IP]
      [IP Address]: Adjust IP address
      [IP Subnet Mask]: Adjust Submask IP
      [IP Gateway]: Adjust Gateway IP
      [Apply IP Setting]
        [▲Yes No▼]
  [Reset to Default]
    [▲Yes No▼]

Caution

Before powering off, execute “Shutdown” to flush the data from cache to the
physical disks.

2.4.2 System buzzer

The system buzzer features are listed below:

1. The system buzzer sounds for 1 second when the system boots up
   successfully.
2. The system buzzer sounds continuously when an error occurs. The alarm
   stops after the error is resolved or the alarm is muted.
3. The alarm is muted automatically when the error is resolved. E.g., when a
   RAID 5 is degraded, the alarm rings immediately; the user then replaces or
   adds one physical disk for rebuilding. When the rebuild is done, the alarm
   is muted automatically.

2.4.3 LED

The LED features are listed below:

1. Marquee / Disk Status / Disk Rebuilding LED: These three functions share
   the same LEDs, which indicate different things at different stages.
   I. Marquee LEDs: After power-on, the Marquee LEDs stay on until the system
      has booted successfully.
   II. Disk status LEDs: The LEDs reflect the disk status for each tray (on
       or off only).
   III. Disk rebuilding LEDs: The LEDs blink while the disks are rebuilding.
2. Disk Access LED: Hardware-activated LED that lights when disks are
   accessed (I/O).
3. Disk Power LED: Hardware-activated LED that lights when the disks are
   plugged in and powered on.
4. System status LED: Reflects the system status; it turns on when an error
   occurs or the RAID malfunctions.
5. Management LAN port LED: The GREEN LED indicates LAN transmit/receive
   activity. The ORANGE LED indicates a 10/100 LAN port link.
6. BUSY LED: Hardware-activated LED that lights when the front-end channel is
   busy.
7. POWER LED: Hardware-activated LED that lights when the system is powered
   on.

Chapter 3 Web GUI guideline

3.1 Web GUI hierarchy

The table below shows the hierarchy of the web GUI.

Quick installation → Step 1 / Step 2 / Confirm

System configuration
  System setting → System name / Date and time
  IP address → MAC address / Address / DNS / port
  Login setting → Login configuration / Admin password / User password
  Mail setting → Mail
  Notification setting → SNMP / Messenger / System log server / Event log
    filter

iSCSI configuration
  Entity property → Entity name / iSNS IP
  NIC → IP settings for iSCSI ports / Become default gateway / Enable jumbo
    frame
  Node → Create
  Session → Session information / Delete
  CHAP account → Create / Delete

Volume configuration
  Volume create wizard → Step 1 / Step 2 / Step 3 / Step 4 / Confirm
  Physical disk → Set Free disk / Set Global spare / Set Dedicated spare /
    More information
  RAID group → Create / Migrate / Activate / Deactivate / Scrub / Delete /
    Set disk property / More information
  Virtual disk → Create / Extend / Scrub / Delete / Set property / Attach
    LUN / Detach LUN / List LUN / Set snapshot space / Cleanup snapshot /
    Take snapshot / Auto snapshot / List snapshot / More information
  Snapshot → Cleanup snapshot / Auto snapshot / Take snapshot / Export /
    Rollback / Delete
  Logical unit → Attach / Detach

Enclosure management
  SES configuration → Enable / Disable
  Hardware monitor → Auto shutdown
  S.M.A.R.T. → S.M.A.R.T. information
  UPS → UPS Type / Shutdown battery level / Shutdown delay / Shutdown UPS

Maintenance
  System information → System information
  Upgrade → Browse the firmware to upgrade / Export configuration
  Reset to default → Sure to reset to factory default?
  Import and export → Import/Export / Import file
  Event log → Download / Mute / Clear
  Reboot and shutdown → Reboot / Shutdown

Logout → Sure to logout?

3.2 Login

The i8500 provides a graphic user interface (GUI) to operate the system. Be
sure to connect the LAN cable. The default IP address is 192.168.1.100; open
a browser and enter:

http://192.168.1.100 (Please check the IP address on the LCM first.)

The first time you click any function, a dialog pops up for authentication.

Login name: admin

Default password: admin

After login, choose a function from the list on the left side of the window
to configure the system.

Backplane solutions have six indicators at the top-right corner; cabling
solutions have three.

Figure 3.2.1

Figure 3.2.2

1. RAID light: Green means RAID works well. Red represents RAID

failure.

2. Temperature light: Green means normal temperature. Red

represents abnormal temperature.

3. Voltage light: Green means normal voltage. Red represents

abnormal voltage.

4. UPS light: Green means UPS works well. Red represents UPS

failure.

3.3 Volume creation wizard

The “Volume creation wizard” is an easy way to create a volume. It uses all
physical disks to create an RG, and the system calculates the maximum space
available at RAID levels 0/1/3/5/6/0+1. The wizard assigns all of the RG
space to one VD, leaving no space for snapshots or spares. If snapshots are
needed, create the volumes manually and refer to section 4.4 for more detail.
If some physical disks are already used in other RGs, the “Volume creation
wizard” cannot run, because the operation is valid only when all physical
disks in the system are free.
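The manual does not say how the wizard computes the maximum space for each RAID level. As a rough guide only, the Python sketch below applies the textbook capacity formulas, assuming equal-sized disks and ignoring any space the firmware reserves; the function name `usable_capacity_gb` is hypothetical, and RAID 1 is treated as a mirror across all disks, which is an assumption.

```python
def usable_capacity_gb(level: str, disks: int, disk_gb: float) -> float:
    """Textbook usable capacity for equal-sized disks (not firmware-exact)."""
    if level == "RAID 0":
        return disks * disk_gb            # pure striping, no redundancy
    if level == "RAID 1":
        return disk_gb                    # assumption: all disks mirror one
    if level in ("RAID 3", "RAID 5"):
        return (disks - 1) * disk_gb      # one disk's worth of parity
    if level == "RAID 6":
        return (disks - 2) * disk_gb      # two disks' worth of parity
    if level == "RAID 0+1":
        return (disks // 2) * disk_gb     # half the disks hold the mirror
    raise ValueError(f"unsupported level: {level}")


# Hypothetical example: eight 500 GB disks.
for level in ("RAID 0", "RAID 1", "RAID 3", "RAID 5", "RAID 6", "RAID 0+1"):
    print(level, usable_capacity_gb(level, 8, 500.0), "GB")
```

Actual sizes reported by the wizard may differ because of formatting overhead and the way disk vendors count gigabytes.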

Step 1: Click “Volume creation wizard”, then choose the RAID level. After

choosing the RAID level, then click “ ”. It will link to another

page.

Figure 3.3.1

Step 2: Confirm page. Click “ ” if all setups are correct. Then

a VD will be created.

Done. You can start to use the system now.

Figure 3.3.2

(Figure 3.3.2: A RAID 0 Virtual disk with the VD name “QUICK53360”, named by system

itself, with the total available volume size 1191GB.)

3.4 System configuration

“System configuration” is designed for setting up the “System setting”, “IP

address”, “Login setting”, “Mail setting”, and “Notification setting”.

3.4.1 System setting

“System setting” sets the system name and date. The default “System name”
is composed of the model name and serial number of the system.

Figure 3.4.1

Figure 3.4.1.1

Check “Change date and time” to set the current date, time, and time zone
before use, or synchronize the time from an NTP (Network Time Protocol)
server.

3.4.2 IP address

“IP address” changes the IP address used for remote administration. There
are 2 options: DHCP (get the IP address from a DHCP server) or static IP. The
default setting is static IP (192.168.1.100). The HTTP and SSH port numbers
can be changed when the default port numbers are not allowed on the
host/server.

Figure 3.4.2.1

3.4.3 Login setting

“Login setting” sets single-admin mode, the auto logout time, and the
admin/user passwords. Single-admin mode prevents multiple users from
accessing the same controller at the same time.

1. Auto logout: The options are (1) Disable; (2) 5 minutes; (3) 30 minutes;
   (4) 1 hour. The system logs out automatically when the user is inactive
   for the selected period.
2. Login lock: Disable/Enable. When the login lock is enabled, the system
   allows only one user to log in or modify system settings.

Check “Change admin password” or “Change user password” to change the admin
or user password. The maximum password length is 12 characters.

3.4.4 Mail setting

“Mail setting” accepts up to 3 mail addresses for receiving event
notifications. Some mail servers check the “Mail-from address” and require
authentication as an anti-spam measure. Fill in the necessary fields and
click “Send test mail” to test whether e-mail works. Users can also select
which event log levels are sent by mail. The default setting enables only
ERROR and WARNING event logs.

Figure 3.4.3.1

Figure 3.4.4.1

3.4.5 Notification setting

“Notification setting” sets up SNMP traps for alerting via SNMP, pop-up
messages via Windows Messenger (not MSN), alerts via the system log server
protocol, and the event log filter.

“SNMP” allows up to 3 SNMP trap addresses. The default community setting is
“public”. Users can choose the event log levels; the default setting enables
only the INFO event log in SNMP. There are many SNMP tools. The following web
sites are for reference:

SNMPc: http://www.snmpc.com/

Net-SNMP:

http://net-snmp.sourceforge.net/

To use “Messenger”, the user must enable the “Messenger” service in Windows
(Start → Control Panel → Administrative Tools → Services → Messenger); event
logs can then be received. Up to 3 messenger addresses are allowed. Users can
choose the event log levels; the default setting enables the WARNING and
ERROR event logs.

Figure 3.4.5.1


With “System log server”, user can choose the facility and the event log level.
The default port of syslog is 514. By default, INFO, WARNING and ERROR event
logs are enabled.

There are some syslog server tools. The following web sites are for your
reference:

WinSyslog: http://www.winsyslog.com/

Kiwi Syslog Daemon: http://www.kiwisyslog.com/

Most UNIX systems have a built-in syslog daemon.
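For readers who want to check what arrives at the syslog server, the following Python sketch shows how a receiver could split the priority prefix of a syslog line into facility and severity. It relies only on the conventional syslog encoding (priority = facility * 8 + severity). The sample message text is hypothetical, and how the i8500 maps its INFO, WARNING and ERROR levels to syslog severities is not stated in this manual.

```python
from typing import Tuple

# Conventional syslog severity names, index = severity number.
SEVERITIES = ["emerg", "alert", "crit", "err", "warning", "notice", "info", "debug"]


def decode_pri(message: str) -> Tuple[int, int, str]:
    """Split a '<PRI>text' syslog line into (facility, severity, text)."""
    if not message.startswith("<"):
        raise ValueError("missing <PRI> prefix")
    end = message.index(">")
    pri = int(message[1:end])
    # priority = facility * 8 + severity
    facility, severity = divmod(pri, 8)
    return facility, severity, message[end + 1:]


# Hypothetical sample line; facility 16 is conventionally "local0".
facility, severity, text = decode_pri("<134>sample event text")
print(facility, SEVERITIES[severity], text)
```

A receiver can use the decoded facility to route messages from the storage system into a separate log file.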

“Event log filter” setting can enable event level on “Pop up events” and “LCM”.

3.5 iSCSI configuration

“iSCSI configuration” is designed for setting up the “Entity Property”, “NIC”,

“Node”, “Session”, and “CHAP account”.

Figure 3.5.1

3.5.1 Entity property

“Entity property” shows the entity name of the controller and sets the “iSNS
IP” for iSNS (Internet Storage Name Service). The iSNS protocol allows automated
discovery, management and configuration of iSCSI devices on a TCP/IP network.
To use iSNS, an iSNS server must be installed in the SAN. Add the iSNS server IP
address to the iSNS server list so that the iSCSI initiator service can send
queries. The entity name cannot be changed.

Figure 3.5.1.1

3.5.2 NIC

“NIC” can change IP addresses of iSCSI data ports.

Figure 3.5.2.1

(Figure 3.5.2.1: there are 2 iSCSI data ports.)

IP settings:

User can change the IP address by moving the mouse to the gray button of the LAN
port and clicking “IP settings for iSCSI ports”. There are 2 selections: DHCP
(get the IP address from a DHCP server) or static IP.

Figure 3.5.2.2

Default gateway:

The default gateway can be changed by moving the mouse to the gray button of the
LAN port and clicking “Become default gateway”. There is only one default
gateway.

MTU / Jumbo frame:

Jumbo frames, which use a larger MTU (Maximum Transmission Unit) size, can be
enabled by moving the mouse to the gray button of the LAN port and clicking
“Enable jumbo frame”.

Caution

Jumbo frames must also be enabled on the switching hub and on the HBA of the
host. Otherwise, the LAN connection cannot work properly.

3.5.3 Node

“Node” shows the target name used by the iSCSI initiator. The node name of
i8500 exists by default and cannot be changed.

Figure 3.5.3.1

CHAP:

CHAP is the abbreviation of Challenge-Handshake Authentication Protocol. CHAP
is a strong authentication method originally used on point-to-point links for
user login. The authenticating side sends the client a random challenge, and the
client answers with a one-way hash computed from the challenge and the shared
secret. The secret itself is therefore never transmitted in clear text.
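The challenge and response exchange can be sketched in Python as follows. This is a minimal illustration of the hash defined for CHAP in RFC 1994 (MD5 over the identifier octet, the secret and the challenge), not the controller's own code. The secret value and function names are hypothetical.

```python
import hashlib
import hmac
import os


def chap_response(identifier: int, secret: bytes, challenge: bytes) -> bytes:
    """Compute the CHAP response: MD5(identifier octet + secret + challenge)."""
    return hashlib.md5(bytes([identifier]) + secret + challenge).digest()


def verify(identifier: int, secret: bytes, challenge: bytes, response: bytes) -> bool:
    """Authenticator side: recompute the hash and compare in constant time."""
    expected = chap_response(identifier, secret, challenge)
    return hmac.compare_digest(expected, response)


# Hypothetical 12-character secret; only the hash crosses the network.
secret = b"secret123456"
challenge = os.urandom(16)          # sent by the authenticating side
response = chap_response(1, secret, challenge)   # computed by the client
print(verify(1, secret, challenge, response))
```

Because a fresh random challenge is used for each login, a captured response cannot simply be replayed later.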

To use CHAP authentication, please follow the procedures.

1. Click “ ”.

2. Select “CHAP”.

Figure 3.5.3.2

3. Click “ ”.

Figure 3.5.3.3


4. Go to the “/ iSCSI configuration / CHAP account” page to create a CHAP
account. Please refer to next section for more detail.

5. In the “Authenticate” page, select “None” to disable CHAP.

Tips

After setting CHAP, the initiator on the host/server must be set to the
same CHAP account. Otherwise, user cannot log in.

3.5.4 Session

“Session” can display iSCSI session and connection information, including the

following items:

1. Host (Initiator Name)

2. Error Recovery Level

3. Error Recovery Count

4. Detail of Authentication status and Source IP: port number.

Figure 3.5.4.1

(Figure 3.5.4.1: iSCSI Session.)

Move the mouse to the gray button next to the session number and click “List
connection”. It will list all connection(s) of the session.

Figure 3.5.4.2

(Figure 3.5.4.2: iSCSI Connection.)

3.5.5 CHAP account

“CHAP account” manages the CHAP accounts used for authentication.

To set up a CHAP account, please follow the procedures.

1. Click “ ”.

2. Enter “User” and “Secret”, then enter the secret again in “Confirm”.

Figure 3.5.5.3

3. Click “ ”.

Figure 3.5.5.1

(Figure 3.5.5.4: create a CHAP account named “chap1”.)

4. Click “Delete” to delete CHAP account.

3.6 Volume configuration

“Volume configuration” is designed for setting up the volume configuration

which includes “Volume create wizard”, “Physical disk”, “RAID group”,

“Virtual disk”, “Snapshot”, and “Logical unit”.

Figure 3.6.1

3.6.1 Volume create wizard

“Volume create wizard” uses a smarter policy. When HDDs are inserted in the
system, the wizard lists all possible capacities for the different RAID levels,
and it uses all available HDDs for the RAID level that user chooses. When the
system has HDDs of different sizes, e.g., 8*200G and 8*80G, it lists all
possible combinations for the different RAID levels and sizes. After choosing a
RAID level, user may find that some HDDs are still available (free status). This
is the result of the smarter policy designed by Thecus. It gives user:

1. The biggest capacity of the RAID level to choose from, and

2. The fewest disks for the RAID level / volume size.

E.g., user chooses RAID 5 and the controller has 6*200G + 2*80G HDDs inserted.
If all 8 HDDs are used for one RAID 5, the maximum volume size is 560G (80G*7),
because every member disk contributes only as much capacity as the smallest
disk. The wizard instead checks for the most efficient way of using the HDDs and
uses only the 200G HDDs (volume size is 200G*5=1000G), so the volume is bigger
and the HDD capacity is fully used.
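The arithmetic of this example can be reproduced with a short Python sketch. It assumes the usual RAID 5 rule that usable capacity is (number of disks - 1) times the smallest member disk, and it simply tries the k largest disks for every k. The function names are hypothetical, and this is only an illustration of the comparison, not the actual policy implemented by the wizard.

```python
def raid5_capacity(disk_sizes_gb):
    """Usable RAID 5 capacity: (n - 1) * smallest member disk."""
    if len(disk_sizes_gb) < 3:
        raise ValueError("RAID 5 needs at least 3 disks")
    return (len(disk_sizes_gb) - 1) * min(disk_sizes_gb)


def best_raid5_set(disk_sizes_gb):
    """Try the k largest disks for every k >= 3 and keep the biggest capacity.

    Ties go to the smaller disk count, because a later candidate replaces
    the current best only when its capacity is strictly larger.
    """
    ordered = sorted(disk_sizes_gb, reverse=True)
    best = None
    for k in range(3, len(ordered) + 1):
        members = ordered[:k]
        capacity = raid5_capacity(members)
        if best is None or capacity > best[0]:
            best = (capacity, members)
    return best


disks = [200] * 6 + [80] * 2
print(raid5_capacity(disks))   # all 8 disks in one RAID 5
print(best_raid5_set(disks))   # the most efficient subset
```

With the disks from the example, using all 8 disks yields 560G, while the six 200G disks alone yield 1000G and leave the two 80G disks free.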

Step 1: Select “Volume create wizard” and then choose the RAID level. After
the RAID level is chosen, click “ ”. It will link to the next page.

Step 2: Please select the combination of the RG capacity, or “Use default
algorithm” for maximum RG capacity. After the RG size is chosen, click “ ”.

Figure 3.6.1.1


Figure 3.6.1.2

Step 3: Decide the VD size. User can enter a number less than or equal to the
default number. Then click “ ”.

Figure 3.6.1.3

Step 4: Confirm page. Click “ ” if all settings are correct. Then
a VD will be created.

Done. You can start to use the system now.

Figure 3.6.1.4

(Figure 3.6.1.4: A RAID 0 Virtual disk with the VD name “QUICK13573”, named by system

itself, with the total available volume size 1862GB.)


3.6.2 Physical disk

“Physical disk” shows the status of the hard drives in the system. The
following are operational tips:

1. Move the mouse to the gray button next to the slot number to show the
functions which can be executed.

2. Active functions can be selected; inactive functions are shown in gray.

For example, to set PD slot number 11 as a dedicated spare disk:

Step 1: Move the mouse to the gray button of PD 11 and select “Set Dedicated
spare”. It will link to the next page.

Figure 3.6.2.1

Step 2: If there are RGs to which a dedicated spare disk can be assigned, select
the one the disk will be added to, then click “ ”.

Figure 3.6.2.2

Done. View “Physical disk” page.

Figure 3.6.2.3

(Figure 3.6.2.3: Physical disks of slot 1,2,3 are created for a RG named “RG-R5”. Slot 4 is

set as dedicated spare disk of RG named “RG-R5”. The others are free disks.)

• PD column description:

Slot: The position of hard drives. The button next to the number of slot shows
the functions which can be executed.

Size (GB): Capacity of hard drive.

RG Name: Related RAID group name.

Status: The status of hard drive.
  “Online” → the hard drive is online.
  “Rebuilding” → the hard drive is being rebuilt.
  “Transition” → the hard drive is being migrated or is replaced by another
  disk when rebuilding occurs.
  “Missing” → the hard drive has already joined a RG but is not plugged into
  the disk tray of the current system.

Health: The health of hard drive.
  “Good” → the hard drive is good.
  “Failed” → the hard drive has failed.
  “Error Alert” → S.M.A.R.T. error alert.
  “Read Errors” → the hard drive has unrecoverable read errors.

Usage:
  “RD” → RAID Disk. This hard drive has been set to RAID.
  “FR” → FRee disk. This hard drive is free for use.
  “DS” → Dedicated Spare. This hard drive has been set to the dedicated spare
  of the RG.
  “GS” → Global Spare. This hard drive has been set to a global spare of all
  RGs.
  “RS” → ReServe. The hard drive contains the RG information but cannot be
  used. It may be caused by an uncompleted RG set, or hot-plug of this disk in
  the running time. In order to protect the data in the disk, the status
  changes to reserve. It can be reused after setting it to “FR” manually.

Vendor: Hard drive vendor.

Serial: Hard drive serial number.

Type: Hard drive type.
  “SATA” → SATA disk.
  “SATA2” → SATA II disk.

Write cache: Hard drive write cache is enabled or disabled.

Standby: HDD auto spindown to save power. The default value is disabled.

• PD operations description:

Set Free disk: Make the selected hard drive free for use.

Set Global spare: Set the selected hard drive to global spare of all RGs.

Set Dedicated spares: Set hard drive to dedicated spare of selected RGs.

Set property: Change the status of write cache and standby.
  Write cache options:
  “Enabled” → Enable disk write cache.
  “Disabled” → Disable disk write cache.
  Standby options:
  “Disabled” → Disable spindown.
  “30 sec / 1 min / 5 min / 30 min” → Enable hard drive auto spindown to save
  power after the period of time.

More information: Show hard drive detail information.

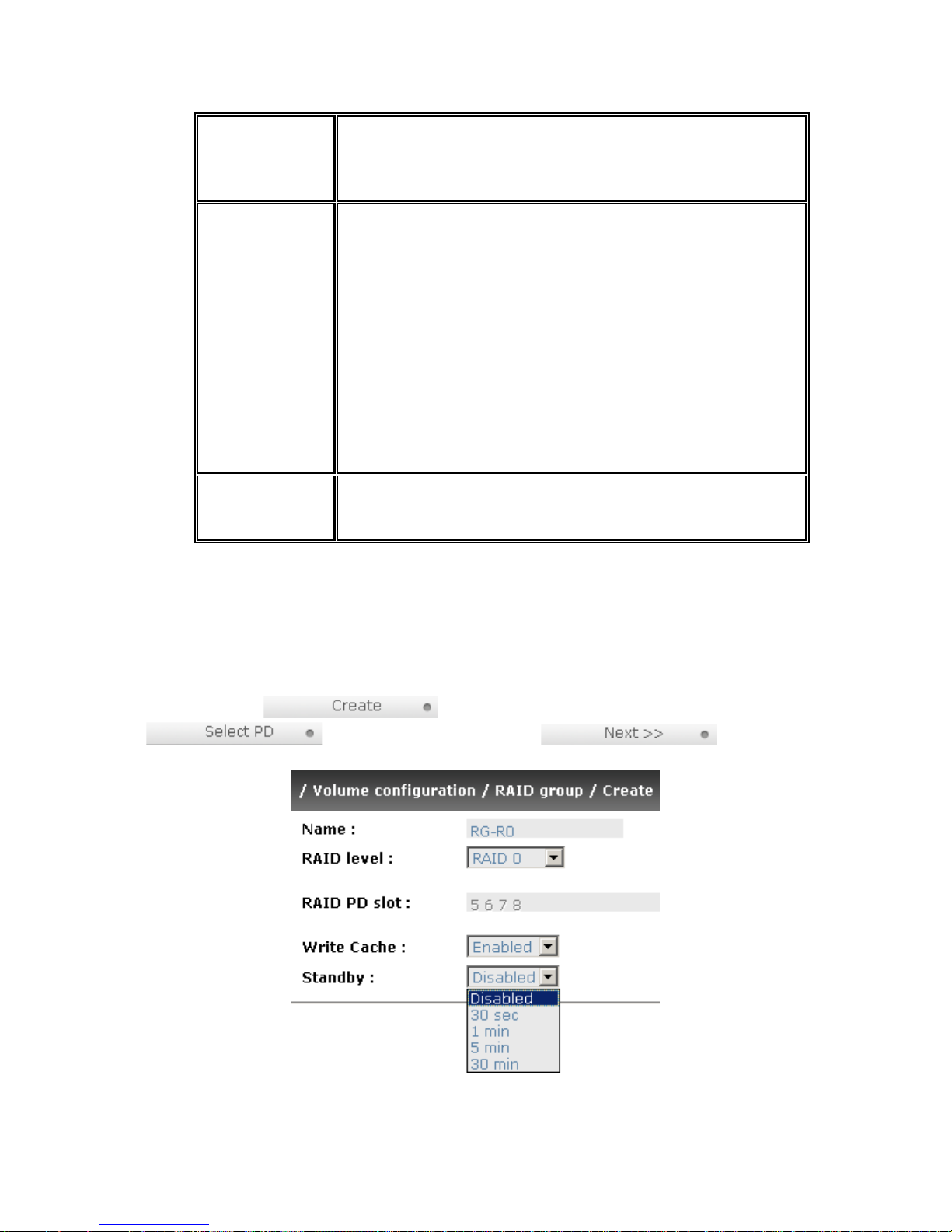

3.6.3 RAID group

“RAID group” shows the status of each RAID group. The following is an
example of creating a RG.

Step 1: Click “ ”, enter “Name”, choose “RAID level”, and click “ ” to select
PD. Then click “ ”.

Figure 3.6.3.1


Step 2: Confirm page. Click “ ” if all setups are correct.

Figure 3.6.3.2

(Figure 3.6.3.2: There is a RAID 0 with 4 physical disks, named “RG-R0”, total size is

135GB. Another is a RAID 5 with 3 physical disks, named “RG-R5”.)

Done. View “RAID group” page.

• RG column description:

No.: Number of RAID group. The button next to the No. shows the functions which
can be executed.

Name: RAID group name.

Total(GB): Total capacity of this RAID group.

Free(GB): Free capacity of this RAID group.

#PD: The number of physical disks in RAID group.

#VD: The number of virtual disks in RAID group.

Status: The status of RAID group.
  “Online” → the RAID group is online.
  “Offline” → the RAID group is offline.
  “Rebuild” → the RAID group is being rebuilt.
  “Migrate” → the RAID group is being migrated.
  “Scrub” → the RAID group is being scrubbed.

Health: The health of RAID group.
  “Good” → the RAID group is good.
  “Failed” → the RAID group has failed.
  “Degraded” → the RAID group is not complete. The reason could be a missing
  disk or a disk failure.

RAID: The RAID level of the RAID group.

Enclosure: Whether the RG is located on the local or a JBOD enclosure.

• RG operations description:

Create: Create a RAID group.

Migrate: Migrate a RAID group. Please refer to next chapter for more detail.

Activate: Activate a RAID group; it can be executed when RG status is offline.
This is for online roaming purpose.

Deactivate: Deactivate a RAID group; it can be executed when RG status is
online. This is for online roaming purpose.

Scrub: Scrub a RAID group. It’s a parity regeneration. It supports RAID 3 / 5 /
6 / 30 / 50 / 60 only.

Delete: Delete a RAID group.

Set disk property: Change the disk status of write cache and standby.
  Write cache options:
  “Enabled” → Enable disk write cache.
  “Disabled” → Disable disk write cache.
  Standby options:
  “Disabled” → Disable spindown.
  “30 sec / 1 min / 5 min / 30 min” → Enable hard drive auto spindown to save
  power after the period of time.

More information: Show RAID group detail information.

3.6.4 Virtual disk

“Virtual disk” shows the status of each Virtual disk. The following is an
example of creating a VD.

Step 1: Click “ ”, enter “Name”, choose “RG name”, “Stripe height (KB)”,
“Block size (B)”, “Read/Write” mode, “Priority” and “Bg rate”, and change
“Capacity (GB)” if necessary. Then click “ ”.

Step 2: Confirm page. Click “ ” if all settings are correct.

Figure 3.6.4.1


Figure 3.6.4.2

(Figure 3.6.4.2: Create a VD named “VD-01”, related to “RG-R0”, size is 30GB. The other

VD is named “VD-02”, initializing to 12%.)

Done. View “Virtual disk” page.

• VD column description:

No.: Number of this Virtual disk. The button next to the VD No. shows the
functions which can be executed.

Name: Virtual disk name.

Size(GB): Total capacity of the Virtual disk.

Right:
  “WT” → Write Through.
  “WB” → Write Back.
  “RO” → Read Only.

Priority:
  “HI” → HIgh priority.
  “MD” → MiD priority.
  “LO” → LOw priority.

Bg rate: Background task priority.
  “4 / 3 / 2 / 1 / 0” → Default value is 4. The higher the background priority
  number of a VD, the more background I/O will be scheduled to execute.

Status: The status of Virtual disk.
  “Online” → the Virtual disk is online.
  “Offline” → the Virtual disk is offline.
  “Initiating” → the Virtual disk is being initialized.
  “Rebuild” → the Virtual disk is being rebuilt.
  “Migrate” → the Virtual disk is being migrated.
  “Rollback” → the Virtual disk is being rolled back.
  “Scrub” → the Virtual disk is being scrubbed.

Health: The health of Virtual disk.
  “Optimal” → the Virtual disk is operating and has experienced no failures of
  the disks that comprise the RG.
  “Degraded” → at least one disk which comprises space of the Virtual disk has
  been marked as failed or has been unplugged.
  “Missing” → the Virtual disk has been marked as missing by the system.
  “Failed” → the Virtual disk has experienced enough failures of the disks
  that comprise the VD for unrecoverable data loss to occur.
  “Part optimal” → the Virtual disk has experienced disk failures.

R %: Ratio of initializing or rebuilding.

RAID: The RAID level that the Virtual disk is using.

#LUN: Number of LUN(s) that the Virtual disk is attached to.

Snapshot (MB): The Virtual disk size that is used for snapshot. The number means
“Used snapshot space” / “Total snapshot space”. The unit is in megabytes (MB).

#Snapshot: Number of snapshot(s) that have been taken of the Virtual disk.

RG name: The name of the RG that the Virtual disk is related to.

Readahead: This feature loads data into the disk's buffer in advance for
further use. Default is "Enabled".

• VD operations description:

Extend: Extend a Virtual disk capacity.

Scrub: Scrub a Virtual disk. It’s a parity regeneration. It supports RAID 3 / 5
/ 6 / 30 / 50 / 60 only.

Delete: Delete a Virtual disk.

Set property: Change the VD name, right, priority and bg rate.
  Right options:
  “WT” → Write Through.
  “WB” → Write Back.
  “RO” → Read Only.
  Priority options:
  “HI” → HIgh priority.
  “MD” → MiD priority.
  “LO” → LOw priority.
  Bg rate options:
  “4 / 3 / 2 / 1 / 0” → Default value is 4. The higher the background priority
  number of a VD, the more background I/O will be scheduled to execute.

Attach LUN: Attach to a LUN.

Detach LUN: Detach from a LUN.

List LUN: List attached LUN(s).

Set snapshot space: Set snapshot space for executing snapshot. Please refer to
next chapter for more detail.

Cleanup snapshot: Clean all snapshot VDs related to the Virtual disk and release
snapshot space.

Take snapshot: Take a snapshot on the Virtual disk.

Auto snapshot: Set auto snapshot on the Virtual disk.

List snapshot: List all snapshot VDs related to the Virtual disk.

More information: Show Virtual disk detail information.

3.6.5 Snapshot

“Snapshot” shows the status of snapshots. Please refer to next chapter for
more detail about the snapshot concept. The following is an example of taking a
snapshot.

Step 1: Create snapshot space. In “/ Volume configuration / Virtual disk”,
move the mouse to the gray button next to the VD number and click “Set snapshot
space”.

Step 2: Set snapshot space. Then click “ ”. The snapshot space is created.

Figure 3.6.5.1

Figure 3.6.5.2

(Figure 3.6.5.2: “VD-01” snapshot space has been created, snapshot space is 15360MB,

and used 263MB for saving snapshot index.)


Step 3: Take a snapshot. In “/ Volume configuration / Snapshot”, click “ ”.
It will link to the next page. Enter a snapshot name.

Figure 3.6.5.3

Step 4: Export the snapshot VD. Move the mouse to the gray button next to the
snapshot VD number and click “Export”. Enter a capacity for the snapshot VD. If
the size is zero, the exported snapshot VD is read only. Otherwise, the exported
snapshot VD can be read and written, and the size is the maximum capacity for
read/write.

Figure 3.6.5.4

(Figure 3.6.5.5: This is the list of “VD-01”. There are two snapshots in “VD-01”. Snapshot

VD “SnapVD-01” is exported to read only, “SnapVD-02” is exported to read/write.)

Step 5: Attach a LUN for snapshot VD. Please refer to the next section for

attaching a LUN.

Done. Snapshot VD can be used.

Figure 3.6.5.5


• Snapshot column description:

No.: Number of this snapshot VD. The button next to the snapshot VD No. shows
the functions which can be executed.

Name: Snapshot VD name.

Used (MB): The amount of snapshot space that has been used.

Exported: Whether the snapshot VD is exported or not.

Right:
  “RW” → Read / Write. The snapshot VD can be read and written.
  “RO” → Read Only. The snapshot VD can be read only.

#LUN: Number of LUN(s) that the snapshot VD is attached to.

Created time: Snapshot VD created time.

• Snapshot operations description:

Export / Unexport: Export / unexport the snapshot VD.

Rollback: Rollback the snapshot VD to the original.

Delete: Delete the snapshot VD.

Attach: Attach to a LUN.

Detach: Detach from a LUN.

List LUN: List attached LUN(s).

3.6.6 Logical unit

“Logical unit” shows the logical unit numbers attached to each VD.

User can attach a LUN by clicking “ ”. “Host” must be an iSCSI node name for
access control, or the wildcard “*”, which means every host can access the
volume. Choose the LUN number and permission, then click “ ”.

Figure 3.6.6.1

• LUN operations description:

Attach: Attach a logical unit number to a Virtual disk.

Detach: Detach a logical unit number from a Virtual disk.

The matching rules of access control are inspected in sequence from top to
bottom. For example, there are 2 rules for the same VD: one is “*”, LUN 0; the
other is “iqn.host1”, LUN 1. Another host, “iqn.host2”, can log in successfully
because it matches rule 1.

Access is denied when there is no matching rule.
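The top-to-bottom matching can be pictured with the following Python sketch. It assumes that a rule matches either on the wildcard “*” or on an exact node name and that the first matching rule wins; the function name and the rule representation are hypothetical, and the controller's real matching logic may differ in detail.

```python
from typing import List, Optional, Tuple

Rule = Tuple[str, int]  # (host pattern, LUN number)


def match_lun(rules: List[Rule], initiator: str) -> Optional[int]:
    """Return the LUN of the first rule that matches, or None if access is denied."""
    for host, lun in rules:
        if host == "*" or host == initiator:
            return lun
    return None


# The two rules from the example above, in table order.
rules = [("*", 0), ("iqn.host1", 1)]
print(match_lun(rules, "iqn.host2"))                 # matches rule 1, the wildcard
print(match_lun([("iqn.host1", 1)], "iqn.host2"))    # no matching rule
```

Under these assumptions “iqn.host1” also matches the wildcard rule first, so the order of the rules matters when a wildcard is combined with named hosts.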

3.6.7 Example

The following is an example of creating volumes. Example 1 creates two VDs and
sets a global spare disk.

• Example 1

Example 1 creates two VDs in one RG; each VD uses the global cache volume. The
global cache volume is created automatically after the system boots up, so no
action is needed to set CV. Then a global spare disk is set. Finally, all of
them are deleted.

Step 1: Create RG (RAID group).

To create the RAID group, please follow the procedures:

Figure 3.6.7.1

1. Select “/ Volume configuration / RAID group”.

2. Click “ ”.

3. Input a RG Name, choose a RAID level from the list, click “ ” to choose the
RAID PD slot(s), then click “ ”.

4. Check the outcome. Click “ ” if all settings are correct.

5. Done. A RG has been created.

Figure 3.6.7.2

(Figure 3.6.7.2: Creating a RAID 5 with 3 physical disks, named “RG-R5”. The total size is

931GB. Because there is no related VD, free size still remains 931GB.)

Step 2: Create VD (Virtual disk).

To create a data user volume, please follow the procedures.

Figure 3.6.7.3

1. Select “/ Volume configuration / Virtual disk”.

2. Click “ ”.

3. Input a VD name, choose a RG Name and enter a size for the VD; decide the
stripe height, block size, read/write mode and priority, and finally click “ ”.

4. Done. A VD has been created.

5. Repeat the steps to create another VD.

Figure 3.6.7.4

(Figure 3.6.7.4: Create VDs named “VD-R5-1” and “VD-R5-2”, both related to
“RG-R5”. The size of “VD-R5-1” is 50GB, and the size of “VD-R5-2” is 64GB.
“VD-R5-1” is initializing, at about 86%. There is no LUN attached.)

Step 3: Attach LUN to VD.

There are 2 methods to attach a LUN to a VD.

1. In “/ Volume configuration / Virtual disk”, move the mouse to the gray
button next to the VD number and click “Attach LUN”.

2. In “/ Volume configuration / Logical unit”, click “ ”.

The procedures are as follows:

1. Select a VD.

2. Input the “Host” name, which is an iSCSI node name for access control, or
fill in the wildcard “*”, which means every host can access this volume. Choose
the LUN and permission, then click “ ”.

3. Done.

Tips

The matching rules of access control are applied in sequence from top to
bottom.

Step 4: Set global spare disk.

To set global spare disks, please follow the procedures.

1. Select “/ Volume configuration / Physical disk”.

2. Move the mouse to the gray button next to the PD slot and click “Set Global
spare”.

3. The “GS” icon is shown in the “Usage” column.

(Figure 3.5.8.7: Slot 4 is set as global spare disk.)

Step 5: Done. They can be used as disks.

To delete the VDs and the RG, please follow the steps listed below.

Step 6: Detach LUN from VD.

Figure 3.6.7.5

In “/ Volume configuration / Logical unit”,

1. Move the mouse to the gray button next to the LUN and click “Detach”. A
confirmation page will pop up.

2. Choose “OK”.

3. Done.

Step 7: Delete VD (Virtual disk).

To delete the Virtual disk, please follow the procedures:

1. Select “/ Volume configuration / Virtual disk”.

2. Move the mouse to the gray button next to the VD number and click “Delete”.
A confirmation page will pop up; click “OK”.

3. Done. The VDs are deleted.

Tips

When deleting a VD, the attached LUN(s) related to this VD will be detached
automatically.

Step 8: Delete RG (RAID group).

To delete the RAID group, please follow the procedures:

1. Select “/ Volume configuration / RAID group”.

2. Select a RG that has no related VD; otherwise, the VD(s) on this RG must be
deleted first.

3. Move the mouse to the gray button next to the RG number and click “Delete”.

4. A confirmation page will pop up; click “OK”.

5. Done. The RG has been deleted.

Tips

Deleting a RG succeeds only when all of the related VD(s) in this RG have been
deleted. Otherwise, an error occurs when deleting this RG.

Step 9: Free global spare disk.

To free global spare disks, please follow the procedures.

1. Select “/ Volume configuration / Physical disk”.

2. Move the mouse to the gray button next to the PD slot and click “Set Free
disk”.

Step 10: Done, all volumes have been deleted.

3.7 Enclosure management

“Enclosure management” allows managing enclosure information including

“SES configuration”, “Hardware monitor”, “S.M.A.R.T.” and “UPS”. For the

enclosure management, there are many sensors for different purposes, such as

temperature sensors, voltage sensors, hard disks, fan sensors, power sensors,

and LED status. Due to the different hardware characteristics among these

sensors, they have different polling intervals. Below are the details of polling time

intervals:

1. Temperature sensors: 1 minute.

2. Voltage sensors: 1 minute.

3. Hard disk sensors: 10 minutes.

4. Fan sensors: 10 seconds. When there are 3 consecutive errors, the
controller sends an ERROR event log.

5. Power sensors: 10 seconds. When there are 3 consecutive errors, the
controller sends an ERROR event log.

6. LED status: 10 seconds.
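The "3 consecutive errors" rule for fan and power sensors can be sketched in Python as follows. The interval table repeats the values listed above; the class name is hypothetical, and the sketch assumes that a single good reading resets the count and that the event is raised once, at the moment the third consecutive error is seen. The manual does not state how the controller behaves on further errors.

```python
# Polling intervals from the list above, in seconds.
POLL_INTERVAL_S = {
    "temperature": 60,
    "voltage": 60,
    "hard_disk": 600,
    "fan": 10,
    "power": 10,
    "led": 10,
}


class ConsecutiveErrorMonitor:
    """Counts consecutive failed polls of one sensor."""

    def __init__(self, threshold: int = 3):
        self.threshold = threshold
        self.streak = 0

    def poll(self, ok: bool) -> bool:
        """Record one poll result; return True when an ERROR event is due."""
        if ok:
            self.streak = 0      # assumption: one good reading resets the count
            return False
        self.streak += 1
        return self.streak == self.threshold


fan = ConsecutiveErrorMonitor()
readings = [False, False, True, False, False, False]
print([fan.poll(r) for r in readings])
```

With a 10 second interval, three consecutive errors mean that a fan or power fault is reported roughly 30 seconds after it first appears.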

3.7.1 SES configuration

SES represents SCSI Enclosure Services, one of the enclosure management

standards. “SES configuration” can enable or disable the management of SES.

The SES client software is available at the following web site:

SANtools:

http://www.santools.com/

Figure 3.7.1


3.7.2 Hardware monitor

“Hardware monitor” can view the information of current voltage and

temperature.

Figure 3.7.2.1

If “Auto shutdown” is checked, the system shuts down automatically when the
voltage or temperature is out of the normal range. For better data protection,
please check “Auto Shutdown”.

To avoid a single short period of high temperature triggering auto shutdown,
the controller uses multiple conditions for auto shutdown. The details of when
auto shutdown is triggered are as follows:

1. There are 3 sensors on the controller for temperature checking: one on the
core processor and two on the controller board. The controller checks each
sensor every 30 seconds. When one of these sensors stays above its high
temperature limit for 3 continuous minutes, auto shutdown is triggered
immediately.

2. The core processor temperature limit is 85℃. The PCI-X bridge temperature
limit is 80℃. The daughter board temperature limit is 80℃.

3. If the high temperature situation does not last for 3 minutes, the
controller does not perform auto shutdown.
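The condition above can be expressed as a small Python sketch. The limits and the 3 minute hold time come from the list above; the sensor keys, the function name and the sample format are hypothetical, and "over the limit" is assumed to mean strictly greater than the limit. This is an illustration of the rule, not the controller firmware.

```python
# Temperature limits in degrees Celsius, from the list above.
LIMITS_C = {"core_processor": 85, "pci_x_bridge": 80, "daughter_board": 80}
HOLD_SECONDS = 180  # 3 continuous minutes


def should_shutdown(samples, limits=LIMITS_C, hold=HOLD_SECONDS):
    """samples: list of (time_in_seconds, {sensor: temperature}) in time order.

    Returns True as soon as any one sensor has stayed above its limit
    for at least `hold` seconds without a normal reading in between.
    """
    over_since = {}
    for t, readings in samples:
        for sensor, temp in readings.items():
            if temp > limits[sensor]:
                start = over_since.setdefault(sensor, t)
                if t - start >= hold:
                    return True
            else:
                over_since.pop(sensor, None)   # back to normal: restart timing
    return False


# One reading every 30 seconds, as described above.
sustained = [(t, {"core_processor": 90}) for t in range(0, 181, 30)]
brief = [(t, {"core_processor": 90}) for t in range(0, 151, 30)]
brief.append((180, {"core_processor": 70}))
print(should_shutdown(sustained), should_shutdown(brief))
```

The second series cools down before the 3 minutes are over, so it does not trigger a shutdown.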

3.7.3 Hard drive S.M.A.R.T. support

S.M.A.R.T. (Self-Monitoring Analysis and Reporting Technology) is a diagnostic
tool for hard drives that delivers warning of drive failures in advance.
S.M.A.R.T. gives users a chance to take action before a possible drive failure.

S.M.A.R.T. continuously measures many attributes of the hard drive and detects
properties that are close to going out of tolerance. The advance notice of a
possible hard drive failure allows users to back up or replace the hard drive.
This is much better than having a hard drive crash while it is writing data or
while a failed hard drive is being rebuilt.

“S.M.A.R.T.” displays S.M.A.R.T. information of the hard drives. The number is
the current value; the number in parentheses is the threshold value. Threshold
values differ between hard drive vendors; please refer to the vendors’
specifications for details.

Figure 3.7.3.1


3.7.4 UPS

“UPS” can set up UPS (Uninterruptible Power Supply).

Figure 3.7.4.1

Currently, the system only supports and communicates with the Smart-UPS series
of APC (American Power Conversion Corp.). Please review the details on the
website: http://www.apc.com/.

First, connect the system and the APC UPS via RS-232 for communication. Then
set up the shutdown values used when power fails. UPS units from other vendors
work as well, but they do not have this communication feature.

UPS Type: Select UPS Type. Choose Smart-UPS for APC, None for other vendors or
no UPS.

Shutdown Battery Level (%): When the battery level falls below the set level,
the system will shut down. Setting the level to “0” will disable UPS.

Shutdown Delay (s): If a power failure occurs and power does not return within
the set time, the system will shut down. Setting the delay to “0” will disable
the function.

Shutdown UPS: Select ON; when power is gone, the UPS will shut down by itself
after the system has shut down successfully. After power comes back, the UPS
will start working and notify the system to boot up. OFF will not.

Status: The status of UPS.
  “Detecting…”
  “Running”
  “Unable to detect UPS”
  “Communication lost”
  “UPS reboot in progress”
  “UPS shutdown in progress”
  “Batteries failed. Please change them NOW!”

Battery Level (%): Current percentage of battery level.

3.8 System maintenance

“Maintenance” allows the operation of system functions which include “System

information” to show the system version, “Upgrade” to the latest firmware,

“Reset to factory default” to reset all controller configuration values to factory

settings, “Import and export” to import and export all controller configuration,

“Event log” to view system event log to record critical events, and “Reboot and

shutdown” to either reboot or shutdown the system.

Figure 3.8.1

3.8.1 System information

“System information” can display system information (including firmware

version), CPU type, installed system memory, and controller serial number.

3.8.2 Upgrade

“Upgrade” upgrades the firmware. Please prepare the new firmware file named
“xxxx.bin” on the local hard drive, then click “ ” to select the file. Click
“ ”, and a message pops up: “Upgrade system now? If you want to downgrade to
the previous FW later (not recommend), please export your system configuration
in advance”. Click “Cancel” to export the system configuration in advance, then
click “OK” to start upgrading the firmware.

Figure 3.8.2.1

Figure 3.8.2.2

During the upgrade, a progress bar is displayed. After the upgrade finishes,
the system must be rebooted manually for the new firmware to take effect.

Tips

Please visit www.thecus.com for the latest firmware.

3.8.3 Reset to factory default

“Reset to factory default” allows user to reset controller to factory default

setting.

Figure 3.8.3.1

After resetting to default values, the password is “admin” and the IP address
is set to the default static IP.

Default IP address: 192.168.1.100

Default subnet mask: 255.255.255.0

Default gateway: 192.168.1.1
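After a factory reset, a management host usually has to sit in the same subnet as the default address in order to reach the Web GUI directly. As a quick check, the following Python sketch uses the standard `ipaddress` module with the default values listed above. The function name and the second sample address are hypothetical.

```python
import ipaddress


def same_subnet(host_ip: str, netmask: str, other_ip: str) -> bool:
    """Return True if other_ip lies in the subnet defined by host_ip/netmask."""
    network = ipaddress.ip_interface(f"{host_ip}/{netmask}").network
    return ipaddress.ip_address(other_ip) in network


# Factory default address and subnet mask from the list above.
print(same_subnet("192.168.1.100", "255.255.255.0", "192.168.1.1"))    # default gateway
print(same_subnet("192.168.1.100", "255.255.255.0", "192.168.2.50"))   # a host in another subnet
```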


3.8.4 Import and export

“Import and export” allows user to save the system configuration values to a
file (export) and to apply a saved configuration (import). The volume
configuration is included in export but is not applied by import, which avoids
conflicts and data deletion between two controllers. For example, a controller
may already hold valuable data on its disks, and the user may forget this when
importing. Import restores the original system configuration; if the volume
settings were imported as well, the user’s current data would be overwritten.

Figure 3.8.4.1

1. Import: Import all system configurations excluding volume

configuration.

2. Export: Export all configurations to a file.

Caution

“Import” will import all system configurations excluding

volume configuration; the current configurations will be

replaced.

3.8.5 Event log

“Event log” shows the event messages. Check the INFO, WARNING, and ERROR
checkboxes to choose which levels of event log are displayed. Clicking the “ ”
button saves the whole event log as a text file with the file name
“log-ModelName-SerialNumber-Date-Time.txt”. Clicking the “ ” button clears the
event log. Clicking the “ ” button stops the alarm if the system is alerting.

Figure 3.8.5.1

The event log is displayed in reverse order, which means the latest event log
is on the first page. The event logs are actually saved on the first four hard
drives; each hard drive has one copy of the event log. For one controller, there
are four copies of the event logs, so that users can check the event log at any
time even when there are failed disk(s).

Tips

Please plug in at least one of the first four hard drives so that event logs
can be saved and displayed at the next system boot. Otherwise, the event logs
will be lost.

3.8.6 Reboot and shutdown

“Reboot and shutdown” displays “Reboot” and “Shutdown” buttons. Before

power off, it’s better to execute “Shutdown” to flush the data from cache to

physical disks. The step is necessary for data protection.

3.9 Logout

For security reasons, “Logout” allows users to log out when no one is operating
the system. To log in to the system again, please enter the username and
password.

Figure 3.8.6.1


Chapter 4 Advanced operation

4.1 Rebuild

If one physical disk of a RG which is set to a protected RAID level (e.g.: RAID
3, RAID 5, or RAID 6) has FAILED or has been unplugged/removed, the status of
the RG changes to degraded mode, and the system searches for a spare disk to
rebuild the degraded RG into a complete one. It looks for a dedicated spare
disk as the rebuild disk first, then for a global spare disk.
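The search order can be sketched in Python as follows. The usage codes “DS” and “GS” are the ones shown in the “Usage” column of the “Physical disk” page; the `Disk` class, its field names and the function name are hypothetical, and the sketch only illustrates the order of preference described above.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Disk:
    slot: int
    usage: str          # "DS", "GS", "FR", "RD" or "RS"
    rg_name: str = ""   # for a dedicated spare: the RG it is assigned to


def pick_rebuild_disk(disks: List[Disk], degraded_rg: str) -> Optional[Disk]:
    """Prefer a dedicated spare of the degraded RG, then any global spare."""
    for disk in disks:
        if disk.usage == "DS" and disk.rg_name == degraded_rg:
            return disk
    for disk in disks:
        if disk.usage == "GS":
            return disk
    return None   # no spare available: the RG stays degraded


disks = [Disk(4, "GS"), Disk(5, "DS", "RG-R5"), Disk(6, "FR")]
print(pick_rebuild_disk(disks, "RG-R5"))
```

In this sample the dedicated spare in slot 5 is chosen even though the global spare in slot 4 comes first in the list.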

i8500 support Auto-Rebuild. The following is the scenario:

Take RAID 6 for example:

1. When there is no global spare disk or dedicated spare disk in the

system, the controller stays in degraded mode and waits until (A) a

disk is assigned as a spare disk, or (B) the failed disk is removed and

replaced with a new clean disk; then Auto-Rebuild starts. The new

disk automatically becomes a spare disk for the original RG.

If the newly added disk is not clean (it carries other RG information), it is

marked as RS (reserved) and the system does not start Auto-Rebuild.

If the disk does not belong to any existing RG, it is an FR (Free)

disk and the system starts Auto-Rebuild.

If the user only removes the failed disk and plugs the same failed disk into

the same slot again, Auto-Rebuild starts. However, rebuilding onto

the same failed disk may put customer data at risk if the disk is

unstable. Thecus recommends not rebuilding onto a failed

disk, for better data protection.

2. When there are enough global spare disks or dedicated spare disks

for the degraded array, the controller starts Auto-Rebuild immediately.

In RAID 6, if another disk fails during rebuilding, the

controller starts the above Auto-Rebuild process as well. The

Auto-Rebuild feature only works when the status of the RG is “Online”. It does

not work when the status is “Offline”. Thus, it does not conflict with “Roaming”.

3. In degraded mode, the status of the RG is “Degraded”. During rebuilding,

the status of the RG/VD is “Rebuild”, and the “R%” column of the VD

displays the progress as a percentage. After rebuilding completes, the status

becomes “Online” and the RG is complete again.
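
The spare search order and the RS/FR marking described above can be sketched in Python. This is only an illustration under stated assumptions, not the controller firmware: the `Disk` class, the role strings, and both function names are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Disk:
    slot: int
    role: str                           # "dedicated_spare" or "global_spare" (hypothetical labels)
    dedicated_to: Optional[str] = None  # RG name, set only for a dedicated spare


def pick_rebuild_disk(rg_name: str, rg_offline: bool, disks: List[Disk]) -> Optional[Disk]:
    """Return the spare a rebuild would use, or None if the RG keeps waiting."""
    if rg_offline:
        # Auto-Rebuild does not run for an RG whose status is "Offline".
        return None
    # A dedicated spare of this RG is considered first...
    for disk in disks:
        if disk.role == "dedicated_spare" and disk.dedicated_to == rg_name:
            return disk
    # ...then any global spare.
    for disk in disks:
        if disk.role == "global_spare":
            return disk
    return None


def classify_new_disk(has_other_rg_info: bool) -> str:
    """A disk carrying other RG information is RS (reserved); a clean one is FR (free)."""
    return "RS" if has_other_rg_info else "FR"
```

With one global spare in slot 1 and one spare dedicated to a hypothetical "RG-A" in slot 2, `pick_rebuild_disk("RG-A", False, disks)` picks slot 2, while another degraded RG would fall back to the global spare in slot 1.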


Tips

“Set dedicated spare” is not available if there is no RG, or if the

only RGs are RAID 0 or JBOD, because a dedicated

spare disk cannot be assigned to RAID 0 or JBOD.

Rebuild is sometimes called recover; the two terms have the same meaning. The following

table shows the relationship between RAID levels and rebuild.

RAID 0          Disk striping. No data protection. The RG fails if any hard
                drive fails or is unplugged.

RAID 1          Disk mirroring over 2 disks. RAID 1 allows one hard drive to
                fail or be unplugged. One new hard drive must be inserted into
                the system and the rebuild completed.

N-way mirror    Extension of RAID 1. It has N copies of the disk. N-way mirror
                allows N-1 hard drives to fail or be unplugged.

RAID 3          Striping with parity on a dedicated disk. RAID 3 allows one
                hard drive to fail or be unplugged.

RAID 5          Striping with interspersed parity over the member disks.
                RAID 5 allows one hard drive to fail or be unplugged.

RAID 6          2-dimensional parity protection over the member disks. RAID 6
                allows two hard drives to fail or be unplugged. If two hard
                drives must be rebuilt at the same time, the first is rebuilt,
                then the other, in sequence.

RAID 0+1        Mirroring of RAID 0 volumes. RAID 0+1 allows two hard drives
                to fail or be unplugged, but only in the same array.

RAID 10         Striping over the member RAID 1 volumes. RAID 10 allows two
                hard drives to fail or be unplugged, but in different arrays.

RAID 30         Striping over the member RAID 3 volumes. RAID 30 allows two
                hard drives to fail or be unplugged, but in different arrays.

RAID 50         Striping over the member RAID 5 volumes. RAID 50 allows two
                hard drives to fail or be unplugged, but in different arrays.

RAID 60         Striping over the member RAID 6 volumes. RAID 60 allows four
                hard drives to fail or be unplugged, two in each of two
                different arrays.

JBOD            The abbreviation of “Just a Bunch Of Disks”. No data
                protection. The RG fails if any hard drive fails or is
                unplugged.
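
The failure limits in the table above can be expressed as a small lookup. The following Python sketch is illustrative only; the dictionary and function names are hypothetical. For RAID 10 it assumes each member RAID 1 volume is a 2-disk mirror, as the RAID 1 row describes.

```python
from typing import List

# Failures tolerated inside a single array, taken from the table above.
TOLERANCE = {"RAID 0": 0, "RAID 1": 1, "RAID 3": 1, "RAID 5": 1, "RAID 6": 2, "JBOD": 0}

# Striped levels and the level of their member arrays.
BASE_LEVEL = {"RAID 10": "RAID 1", "RAID 30": "RAID 3", "RAID 50": "RAID 5", "RAID 60": "RAID 6"}


def n_way_mirror_tolerance(copies: int) -> int:
    """An N-way mirror keeps N copies, so it tolerates N-1 failed drives."""
    return copies - 1


def striped_rg_survives(level: str, failures_per_array: List[int]) -> bool:
    """A striped RG survives only if every member array stays within its own limit."""
    limit = TOLERANCE[BASE_LEVEL[level]]
    return all(failed <= limit for failed in failures_per_array)
```

For example, `striped_rg_survives("RAID 10", [1, 1])` holds because the two failures fall in different arrays, while `striped_rg_survives("RAID 10", [2, 0])` does not, because both failures hit the same mirror.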

4.2 RG migration

To migrate the RAID level, follow the procedure below.

1. Select “/ Volume configuration / RAID group”.

2. Move the mouse to the gray button next to the RG number; click

“Migrate”.

3. Change the RAID level by clicking the down arrow and selecting “RAID 5”. A

pop-up will indicate that there are not enough HDDs to support the

new RAID level setting; click “ ” to add hard

drives, then click “ ” to go back to the setup page.

When migrating to a lower RAID level, for example when the original RAID

level is RAID 6 and the user wants to migrate to RAID 0, the system

evaluates whether the operation is safe and displays the message

“Sure to migrate to a lower protection array?” as a

warning.

4. Double-check the RAID level and RAID PD slot settings. If there is no

problem, click “ ”.

5. Finally, a confirmation page shows the details of the RAID information. If

there is no problem, click “ ” to start migration.

The system also pops up the message “Warning: power lost during

migration may cause damage of data!” as a warning. If

the power is cut off abnormally during migration, the data is at high risk.

6. Migration starts; the “Status” of the RG shows

“Migrating”. In “/ Volume configuration / Virtual disk”, the VD shows

“Migrating” in “Status” and the completed percentage of migration in

“R%”.

Figure 4.2.1


Figure 4.2.2

(Figure 4.2.2: A RAID 0 with 4 physical disks migrates to RAID 5 with 5 physical disks.)

Figure 4.2.3

(Figure 4.2.3: A RAID 0 migrates to RAID 5, the complete percentage is 14%.)

To migrate, the total size of the new RG must be larger than or equal to that of the

original RG. Expanding to the same RAID level with the same hard disks as the

original RG is not allowed.
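
The size rule can be checked with the usual capacity arithmetic for striped and parity RAID levels. The Python sketch below is an illustration, not the system's own calculation: it assumes all member disks have the same size, the function names are hypothetical, and the 500 GB disk size in the usage note is made up.

```python
# Disks' worth of capacity consumed by parity at each level.
PARITY_DISKS = {"RAID 0": 0, "RAID 3": 1, "RAID 5": 1, "RAID 6": 2}


def usable_gb(level: str, disk_count: int, disk_gb: int) -> int:
    """Usable capacity of an RG built from equal-sized disks."""
    return (disk_count - PARITY_DISKS[level]) * disk_gb


def migration_size_ok(old_level: str, old_count: int,
                      new_level: str, new_count: int, disk_gb: int) -> bool:
    """The new RG must be at least as large as the original RG."""
    return usable_gb(new_level, new_count, disk_gb) >= usable_gb(old_level, old_count, disk_gb)
```

For the case in Figure 4.2.2, `migration_size_ok("RAID 0", 4, "RAID 5", 5, 500)` holds, because both RGs offer four disks of usable space. Migrating the same RAID 0 to RAID 5 on only four disks would leave three disks of usable space, so the check fails and more hard drives are needed.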

The following operations are not allowed while an RG is being migrated; the system

rejects them:

1. Add dedicated spare.

2. Remove a dedicated spare.

3. Create a new VD.

4. Delete a VD.

5. Extend a VD.

6. Scrub a VD.

7. Perform yet another migration operation.

8. Scrub entire RG.

9. Take a new snapshot.

10. Delete an existing snapshot.

11. Export a snapshot.

12. Rollback to a snapshot.

Caution

RG Migration cannot be executed during rebuild or VD

extension.


4.3 VD Extension

To extend the VD size, follow the procedure below.

1. Select “/ Volume configuration / Virtual disk”.

2. Move the mouse to the gray button next to the VD number; click

“Extend”.

3. Change the size. The new size must be larger than the original. Then

click “ ” to start extension.

Figure 4.3.1

4. Extension starts. If the VD needs initialization, it displays “Initiating”

in “Status” and the completed percentage of initialization in “R%”.

Figure 4.3.2

(Figure 4.3.2: Extend VD-R5 from 20GB to 40GB.)

Tips

The extended VD size must be larger than the original size.

Caution

VD Extension cannot be executed during rebuild or migration.
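
The two conditions for VD extension (a larger size, and no rebuild or migration in progress) can be combined into one check. This Python sketch is illustrative only; the function name and parameters are hypothetical and do not correspond to any i8500 interface.

```python
def can_extend_vd(current_gb: int, requested_gb: int,
                  rebuilding: bool, migrating: bool) -> bool:
    """Return True if a VD extension request would be accepted."""
    if rebuilding or migrating:
        # Extension cannot be executed during rebuild or migration.
        return False
    # The new size must be strictly larger than the original.
    return requested_gb > current_gb
```

For the case in Figure 4.3.2, `can_extend_vd(20, 40, False, False)` holds; requesting the same 20 GB again, or extending while a rebuild is running, is refused.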

4.4 Snapshot / Rollback

Snapshot captures the instant state of data in the target volume in a logical

sense. The underlying logic is Copy-on-Write -- moving out the data which would