Page 1

SAS®Analytics Accelerator 1.3

for Teradata

Guide

SAS®Documentation

Page 2

The correct bibliographic citation for this manual is as follows: SAS Institute Inc. 2011. SAS®Analytics Accelerator 1.3 for

Teradata: Guide. Cary, NC: SAS Institute Inc.

SAS®Analytics Accelerator 1.3 for Teradata: Guide

Copyright © 2011, SAS Institute Inc., Cary, NC, USA

All rights reserved. Produced in the United States of America.

For a hard-copy book: No part of this publication may be reproduced, stored in a retrieval system, or transmitted, in any form or

by any means, electronic, mechanical, photocopying, or otherwise, without the prior written permission of the publisher, SAS

Institute Inc.

For a Web download or e-book: Your use of this publication shall be governed by the terms established by the vendor at the time

you acquire this publication.

U.S. Government Restricted Rights Notice: Use, duplication, or disclosure of this software and related documentation by the

U.S. government is subject to the Agreement with SAS Institute and the restrictions set forth in FAR 52.227-19, Commercial

Computer Software-Restricted Rights (June 1987).

SAS Institute Inc., SAS Campus Drive, Cary, North Carolina 27513.

1st electronic book, February 2011

SAS®Publishing provides a complete selection of books and electronic products to help customers use SAS software to its fullest

potential. For more information about our e-books, e-learning products, CDs, and hard-copy books, visit the SAS Publishing Web

site at support.sas.com/publishing or call 1-800-727-3228.

SAS®and all other SAS Institute Inc. product or service names are registered trademarks or trademarks of SAS Institute Inc. in

the USA and other countries. ® indicates USA registration.

Other brand and product names are registered trademarks or trademarks of their respective companies.

Page 3

Contents

Chapter 1. SAS Analytics Accelerator 1.3 for Teradata: Guide . . . . . . . . . . . . . 1

Subject Index 71

Syntax Index 73

Page 4

iv

Page 5

Chapter 1

SAS Analytics Accelerator 1.3 for Teradata: Guide

Contents

What’s New in SAS Analytics Accelerator 1.3 for Teradata . . . . . . . . . . . . . . . . . . 3

Overview . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 3

Overview of In-Database Computing . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 3

Teradata and the SAS System . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 4

SQL Generation . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 5

SAS In-Database Functions . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 5

SAS In-Database Procedures . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 6

Base SAS In-Database Procedures . . . . . . . . . . . . . . . . . . . . . . . . . . . . 6

SAS/STAT In-Database Procedures . . . . . . . . . . . . . . . . . . . . . . . . . . . 6

SAS/ETS In-Database Procedures . . . . . . . . . . . . . . . . . . . . . . . . . . . . 9

SAS Enterprise Miner In-Database Procedures . . . . . . . . . . . . . . . . . . . . . 10

Limitations of In-Database Procedures . . . . . . . . . . . . . . . . . . . . . . . . . 10

Performance and Numerical Accuracy Issues with In-Database Computing . . . . . . . . . 11

Enabling and Controlling In-Database Computing . . . . . . . . . . . . . . . . . . . . . . . 11

LIBNAME Statement Options for In-Database Computing . . . . . . . . . . . . . . . . . . 14

Using SAS/ACCESS Software with Teradata: Some Cautionary Notes . . . . . . . . . . . . 19

Indeterminate Row Order in a DBMS Table . . . . . . . . . . . . . . . . . . . . . . . 28

SAS/ACCESS Data Set Options for Teradata . . . . . . . . . . . . . . . . . . . . . . . . . 31

Base SAS Data Set Options . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 35

Deploying and Using SAS Formats in Teradata . . . . . . . . . . . . . . . . . . . . . . . . 37

Using SAS Formats . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 37

How It Works . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 39

Formats That SAS Supplies in the Teradata EDW . . . . . . . . . . . . . . . . . . . . 41

User-Defined Formats in the Teradata EDW . . . . . . . . . . . . . . . . . . . . . . . 41

Overview of the Publishing Process . . . . . . . . . . . . . . . . . . . . . . . . . . . 42

Using SAS Formats . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 42

BY Groups and In-Database Computing . . . . . . . . . . . . . . . . . . . . . . . . . . . . 43

Support for Teradata Data Types . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 47

Variables in the DATA= Data Set . . . . . . . . . . . . . . . . . . . . . . . . . . . . 47

Numeric Computations . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 48

BY Processing . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 49

Conditions That Prevent In-Database Processing . . . . . . . . . . . . . . . . . . . . . . . 49

Page 6

2 F Chapter 1: SAS Analytics Accelerator 1.3 for Teradata: Guide

Options . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 50

LIBNAME Properties . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 52

Data Set and Variable Properties . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 53

BY Processing . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 53

Compatibility of the DATA= and OUT= Data Sets . . . . . . . . . . . . . . . . . . . 54

Other Conditions . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 55

In-Database Computing for the DATA= Data Set by the CANCORR, FACTOR, PRINCOMP,

REG, and VARCLUS Procedures . . . . . . . . . . . . . . . . . . . . . . . . . . . . 55

ODS Tables for the CANCORR, FACTOR, PRINCOMP, REG, and VARCLUS Procedures . 56

In-Database Computing for the OUT= Data Set by the CANCORR, FACTOR, PRINCOMP,

and SCORE Procedures . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 56

SAS/STAT Procedure Options, Statements, and Features Affected by In-Database Computing 57

CANCORR Procedure Options, Statements, and Features Affected by In-Database

Computing . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 57

FACTOR Procedure Options, Statements, and Features Affected by In-Database Com-

puting . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 58

PRINCOMP Procedure Options, Statements, and Features Affected by In-Database

Computing . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 58

REG Procedure Options, Statements, and Features Affected by In-Database Computing 59

SCORE Procedure Options, Statements, and Features Affected by In-Database Com-

puting . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 61

VARCLUS Procedure Options, Statements, and Features Affected by In-Database

Computing . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 61

SAS/ETS Procedure Options, Statements, and Features Affected by In-Database Computing 62

TIMESERIES Procedure Options, Statements, and Features Affected by In-Database

Computing . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 62

Base SAS Procedure Options, Statements, and Features Affected by In-Database Computing 62

CORR Procedure Options, Statements, and Features Affected by In-Database Computing 62

FREQ Procedure Options, Statements, and Features Affected by In-Database Computing 63

SAS Enterprise Miner Procedure Options, Statements, and Features Affected by In-Database

Computing . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 64

DMDB Procedure Options, Statements, and Features Affected by In-Database Com-

puting . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 64

DMINE Procedure Options, Statements, and Features Affected by In-Database Com-

puting . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 65

DMREG Procedure Options, Statements, and Features Affected by In-Database Com-

puting . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 65

Miscellaneous Details . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 66

Example . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 66

Page 7

What’s New in SAS Analytics Accelerator 1.3 for Teradata F 3

What’s New in SAS Analytics Accelerator 1.3 for Teradata

Overview

This release contains the first implementation of in-database functionality for SAS®Enterprise Miner™.

SAS Analytics Accelerator 1.3 for Teradata supports three SAS Enterprise Miner procedures: DMDB,

DMINE, and DMREG.

Overview of In-Database Computing

SAS applications are often built to work with large volumes of data in environments that demand rigorous IT

security and management. When the data are stored in an external database, such as a Teradata database, the

transfer of large data sets to the computers that run the SAS System can cause a performance bottleneck and

possible unwanted security and resource management consequences for local data storage. SAS Analytics

Accelerator 1.3 for Teradata addresses these challenges by moving computational tasks closer to the data

and by improving the integration between the SAS System and the database management system (DBMS).

At present, there are in-database versions of six SAS/STAT procedures, one SAS/ETS procedure, and three

SAS Enterprise Miner procedures. The SAS/STAT in-database procedures include CANCORR, FACTOR,

PRINCOMP, REG, SCORE, and VARCLUS; the SAS/ETS procedure is TIMESERIES; and the SAS Enterprise Miner procedures are DMDB, DMINE, and DMREG.

There are also five Base SAS in-database procedures (CORR, FREQ, MEANS, RANK, and SUMMARY),

but Base SAS in-database procedures, with the exception of the CORR procedure, do not require the installation of SAS Analytics Accelerator 1.3 for Teradata. Table 1.1 lists the software licenses that are required

to take advantage of in-database computing.

Page 8

4 F Chapter 1: SAS Analytics Accelerator 1.3 for Teradata: Guide

Table 1.1 Software Licensing Requirements for In-Database Computing

Procedures Licenses Required

SAS/STAT procedures Base SAS 9.2M3

(CANCORR, FACTOR, PRINCOMP, SAS/ACCESS 9.2 (SAS/ACCESS Interface to Teradata)

REG, VARCLUS, and SCORE) SAS/STAT 9.22

SAS Analytics Accelerator 1.3 for Teradata

SAS/ETS procedure Base SAS 9.2M3

(TIMESERIES) SAS/ACCESS 9.2 (SAS/ACCESS Interface to Teradata)

SAS/ETS 9.22

SAS Analytics Accelerator 1.3 for Teradata

SAS Enterprise Miner procedures

(DMDB, DMINE, and DMREG) Base SAS 9.2M3

SAS/ACCESS 9.2 (SAS/ACCESS Interface to Teradata)

SAS Enterprise Miner 6.2

SAS Analytics Accelerator 1.3 for Teradata

Base SAS statistical procedures Base SAS 9.2M3

(FREQ, MEANS, RANK, and SUMMARY) SAS/ACCESS 9.2 (SAS/ACCESS Interface to Teradata)

BASE SAS statistical procedures Base SAS 9.2M3

(CORR) SAS/ACCESS 9.2 (SAS/ACCESS Interface to Teradata)

SAS Analytics Accelerator 1.3 for Teradata

All SAS Analytics Accelerator 1.3 for Teradata in-database functions require Teradata 13.00.00.15 or higher.

Installation instructions for SAS Analytics Accelerator 1.3 for Teradata are published in the section “Configuring SAS Analytics Accelerator for Teradata” in the Configuration Guide for SAS 9.2 Foundation for

UNIX Environments and the Configuration Guide for SAS 9.2 Foundation for Microsoft Windows.

In SAS 9.2M3, SAS/STAT 9.22, and SAS/ETS 9.22 in-database computing is available only for the Teradata

DBMS. Future releases might provide in-database computing for other DBMSs.

Teradata and the SAS System

In a conventional environment where in-database computing is not possible, a large amount of data is stored

in the Teradata database. When a SAS procedure executes, it must read the data through the SAS/ACCESS

engine. This movement of data from the database to the SAS workspace server causes a performance

penalty. In an environment where in-database computing is possible, such as that provided by SAS Analytics

Accelerator 1.3 for Teradata, when a comparable Base SAS, SAS/STAT, SAS/ETS, or SAS Enterprise Miner

in-database procedure executes, some of the procedure’s computations are performed within Teradata, and

only the results of those computations, rather than the raw data, are passed to the SAS workspace server.

The benefit is a reduction in data movement between the database and the SAS workspace server. The cost

Page 9

SQL Generation F 5

of this reduction in data movement is the difference in processing time due to using Teradata versus the SAS

System to process the data. The trade-off in processing time versus data movement time depends on several

dynamic factors, including client and server workloads, network speed and workload, and data size. The net

benefit from using in-database procedures varies based on the particular environment. In general, the net

benefit increases as the number of rows in a database table increases.

SQL Generation

When performing in-database modeling, a SAS/STAT, SAS/ETS, or SAS Enterprise Miner procedure dynamically generates SQL code, which is based on the procedure options and statements. It then submits the

SQL code directly to the database. The code can be standard SQL that can be interpreted by any database,

or it can be tuned specifically for Teradata. The choice for the type of SQL code is determined by the complexity of the required analysis. The code can include SAS formats that are executed in Teradata. The query

returns result sets that are used by the in-database procedure to complete the analysis before supplying the

results to one of the following: the SAS output listing, the ODS listing, ODS Graphics, or output tables.

The end result is usually the same as when you use Base SAS tables rather than Teradata tables. However,

with in-database computing the relational database software is responsible for optimizing and executing the

query. When the table to be analyzed is very large, the in-database computing approach can result in significantly lower total elapsed processing time and in reduced data movement between the SAS System and the

Teradata database.

SAS In-Database Functions

When a SAS/STAT, SAS/ETS, or SAS Enterprise Miner procedure dynamically creates and submits SQL

code, this code includes references to new user-defined functions (UDFs), developed by SAS, which are

installed on the Teradata system. These UDFs are the key to performing advanced statistical computations efficiently in-database. For example, SAS has developed a Teradata UDF called SAS_TACORR for

computing uncorrected sum of squares and crossproducts (SSCP) matrices (also known as X’X matrices)

and related statistics in-database. The SAS/STAT procedures CANCORR, FACTOR, PRICOMP, REG,

and VARCLUS have been modified to take advantage of the SAS_TACORR UDF, which enables them

to perform advanced statistical computations on a DBMS table without downloading the entire table with

SAS/ACCESS software.

To better understand how the SAS_TACORR UDF enhances computing efficiency, consider the following.

The SSCP matrix is a condensed representation of the relationships between variables. The size of the

SSCP matrix is determined by the number of variables that are used by the procedure and does not depend

on the number of rows. For example, a data set that contains 100 numeric columns and 5 million rows of

data produces an SSCP matrix that contains, nominally, 100x100 cells. However, a SAS/STAT in-database

procedure needs only the lower triangle of 100x50 cells. Thus, the data that is transferred from Teradata to

SAS is reduced from 500,000,000 raw data cells to 5,000 SSCP data cells, which is a reduction of 99.999

percent. As the example illustrates, the benefit of computing the SSCP matrix in-database versus passing

the raw data to the SAS System increases greatly as the number of rows increases. Large data applications

benefit the most from in-database processing.

Page 10

6 F Chapter 1: SAS Analytics Accelerator 1.3 for Teradata: Guide

The SAS/STAT in-database procedure constructs SQL code that uses the SAS in-database function to create the SSCP matrix. This function is not intended for general-purpose SQL queries, but it is useful for

SAS/STAT in-database procedures. The elements of the SSCP are created and transferred to the SAS System. After the crossproducts have been transferred to the SAS System, the database is available to continue

processing queries while the SAS/STAT procedure continues the analysis.

SAS In-Database Procedures

Several SAS/STAT, SAS/ETS, SAS Enterprise Miner, and Base SAS procedures have been modified to

move critical data-intensive operations into the database. Such operations include basic summarization and

exploratory data analysis. These SAS procedures are commonly found in many SAS programs and represent

a large opportunity to improve efficiency when working with relational databases. Current changes are

optimized for the Teradata relational database. The modifications to the SAS/STAT, SAS/ETS, and SAS

Enterprise Miner in-database procedures, as well as the Base SAS CORR procedure, require the installation

of the SAS Analytics Accelerator 1.3 for Teradata and are documented here.

Base SAS In-Database Procedures

Base SAS currently offers in-database implementations of the CORR, FREQ, MEANS, RANK, and SUMMARY procedures. The Base SAS in-database procedures work differently from the SAS/STAT, SAS/ETS,

and SAS Enterprise Miner in-database procedures and do not require the installation of the SAS Analytics

Accelerator for Teradata (with the exception of the CORR procedure). The Base SAS in-database procedures are included in the Base SAS software and are documented in Base SAS Procedures Guide. However,

some notes about the options that affect the Base SAS in-database procedures are included in this document

for convenience.

SAS/STAT In-Database Procedures

In-database versions of the CANCORR, FACTOR, PRINCOMP, REG, SCORE, and VARCLUS procedures

are available in SAS/STAT 9.22. Each procedure generates SQL code which, except for the SCORE procedure, calls the SAS in-database function for SSCP creation. These procedures are commonly used in

exploratory data analysis and regression model building. The procedure syntax and output for in-database

computing is identical to the syntax and output for conventional SAS processing in most cases. In those

cases, existing procedure steps in SAS programs are able to run in-database without modification.

However, some issues with in-database processing can cause the output to differ because of numerical precision issues and BY processing issues. These issues are discussed in the section “Conditions That Prevent

In-Database Processing.” Together with the in-database Base SAS procedures, the SAS/STAT procedures

can be used for basic tasks that are often executed at the beginning of larger analytical tasks. Use of these

techniques can greatly reduce the total data transfer between relational databases and the SAS System.

Page 11

SAS/STAT In-Database Procedures F 7

The CANCORR Procedure

The CANCORR procedure performs canonical correlation, partial canonical correlation, and canonical redundancy analysis.

Canonical correlation is a generalization of multiple correlation for analyzing the relationship between two

sets of variables. In multiple correlation, you examine the relationship between a linear combination of a

set of explanatory variables, X, and a single response variable, Y. In canonical correlation, you examine

the relationship between linear combinations of the set of X variables and linear combinations of a set of

Y variables. These linear combinations are called canonical variables or canonical variates. Either set of

variables can be considered explanatory or response variables, since the statistical model is symmetric in

the two sets of variables. Simple and multiple correlation are special cases of canonical correlation in which

one or both sets contain a single variable.

The SSCP matrix is computed in-database and is transferred to the SAS System for further processing. The

options in the CANCORR procedure that are affected by in-database processing are detailed in the section

“CANCORR Procedure Options Affected by In-Database Computing.”

The FACTOR Procedure

The FACTOR procedure performs a variety of common factor and component analyses and rotations. The

methods for factor extraction are principal component analysis, principal factor analysis, iterated principal

factor analysis, unweighted least squares factor analysis, maximum likelihood (canonical) factor analysis,

alpha factor analysis, image component analysis, and Harris component analysis. A variety of methods for

prior communality estimation are also available.

The SSCP matrix is computed in-database and is transferred to the SAS System for further processing. The

options in the FACTOR procedure that are affected by in-database processing are detailed in the section

“FACTOR Procedure Options Affected by In-Database Computing.”

The PRINCOMP Procedure

The PRINCOMP procedure computes a principal component transformation of the SSCP matrix into orthogonal components. The first component accounts for the maximum amount of variation; the second

accounts for the next largest amount of variation; and so on. This procedure is typically used in exploratory

data analysis and visualization. Scatter plots that are generated from the principal component dimensions

often reveal interesting relationships among the data points.

Principal component analysis (PCA) reduces the dimensionality of data for predictive modeling by replacing

the variables in the original data with fewer principal component terms in the final model. The SSCP matrix

is computed in-database and is transferred to the SAS System for further processing. The options in the

PRINCOMP procedure that are affected by in-database processing are detailed in the section “PRINCOMP

Procedure Options Affected by In-Database Computing.”

Page 12

8 F Chapter 1: SAS Analytics Accelerator 1.3 for Teradata: Guide

The REG Procedure

The REG procedure computes a model in which a dependent variable is modeled as a linear equation of

multiple independent variables by a least squares function. PROC REG has numerous model-fitting options,

which include many model selection variations and hypothesis tests. Regression analysis is often used to

identify a subset of independent variables that have unique and significant relationships with the dependent

variable. In this case, PROC REG is used for its exploratory analysis, in addition to its extensive model

fitting and reporting functions. You might want to use the REG procedure to compute candidate models in

the database. A full SSCP matrix is computed in Teradata and is transferred to the SAS System, in which

the model fitting occurs.

PROC REG includes an option that uses a CORR or SSCP matrix as input and creates a CORR or SSCP matrix as output. For both in-database processing and matrix input, any option that requires access to individual

rows of data is disabled. The disabled options include the general class of residual analysis, plot options,

and confidence intervals. Complete details of which options and statements are affected by in-database

processing are provided in the section “REG Procedure Options Affected by In-Database Computing.”

The SCORE Procedure

Many statistical procedures create output data sets (using the OUTEST= or OUTSTAT= options) that contain coefficients. The SCORE procedure can apply the coefficients in those data sets to a raw data set and

compute new variables that are generically called scores. Each new score variable is formed as a linear

combination of the raw data and the scoring coefficients. That is, for each observation in the raw data set,

PROC SCORE multiplies the value of a variable in the raw data set by the matching scoring coefficient

from the data set of scoring coefficients. This multiplication process is repeated for each variable in the

VAR statement. The resulting products are then summed to produce the value of the new score variable. In

other words, PROC SCORE performs a matrix multiplication on the two data sets.

For example, you can use the SCORE procedure to produce output in the form of a table in Teradata that

contains predicted values or residuals from a model that is estimated using the REG procedure in-database.

The SCORE procedure dynamically generates SQL code for the given model. PROC SCORE then submits

the SQL code to the database, which produces a Teradata table without having to extract any rows of data into

the SAS System. Details of which options in the SCORE procedure are affected by in-database processing

are provided in the section “SCORE Procedure Options Affected by In-Database Computing.”

PROC SCORE cannot be used for scoring nonlinear models.

The following SAS procedures produce output data sets that contain scoring coefficients that can be used

by PROC SCORE:

ACECLUS procedure

CALIS procedure

CANCORR procedure

CANDISC procedure

DISCRIM procedure

Page 13

SAS/ETS In-Database Procedures F 9

FACTOR procedure

PRINCOMP procedure

TCALIS procedure

VARCLUS procedure

ORTHOREG procedure

QUANTREG procedure

REG procedure

ROBUSTREG procedure

You can also use a DATA step or the IML procedure to output any coefficients you want in a data set to use

with PROC SCORE.

The VARCLUS Procedure

The VARCLUS procedure groups variables into clusters that are based on their correlations. The full SSCP

matrix is created, and then clusters are chosen to maximize the variance that is associated with the first

principal component within the cluster. An iterative process assigns variables to clusters to maximize the

sum across clusters of the variance of the original variables, which is explained by the centroid cluster

measure.

Similar to principle components, variable clustering is another technique that is used for exploratory data

analysis and for variable reduction, which reduces the number of terms that are used in successive analyses.

The SSCP matrix is computed in-database and is then transferred to the SAS System for further processing.

Details of which options in the VARCLUS procedure are affected by in-database processing are provided in

the section “VARCLUS Procedure Options Affected by In-Database Computing.”

SAS/ETS In-Database Procedures

The TIMESERIES procedure analyzes time-stamped transactional data with respect to time and accumulates

the data into a time series format. The procedure can perform trend and seasonal analysis on the transactions.

After the transactional data are accumulated, time domain analysis and frequency domain analysis can be

performed on the accumulated time series.

Details of which options in the TIMESERIES procedure are affected by in-database processing are provided

in the section “TIMESERIES Procedure Options Affected by In-Database Computing.”

Page 14

10 F Chapter 1: SAS Analytics Accelerator 1.3 for Teradata: Guide

SAS Enterprise Miner In-Database Procedures

The DMDB Procedure

SAS Enterprise Miner architecture is based on the creation of a data mining database (DMDB) that is a

snapshot of the original data. PROC DMDB creates this DMDB from the input data source. It also compiles

and computes metadata information about the input data based on variable roles and stores it in a metadata

catalog. The DMDB and the associated metadata catalog facilitate subsequent data mining activities. They

are both designed for processing and storage efficiencies.

The DMINE Procedure

Many data mining databases have hundreds of potential model inputs. The DMINE procedure enables you

to quickly identify the input variables that are useful for predicting the target variables based on a linear

models framework. The procedure facilitates ordinary least squares or logistic regression methods.

The DMREG Procedure

The DMREG procedure enables you to fit both linear and logistic regression models. Linear regression

attempts to predict the value of a continuous target as a linear function of one or more independent inputs.

Logistic regression attempts to predict the probability that a categorical (binary, ordinal, or nominal) target

will acquire the event of interest as a function of one or more independent inputs. The procedure supports

forward, backward, and stepwise selection methods. It also enables you to score data sets or generate SAS

DATA step code to score a data set.

Limitations of In-Database Procedures

Some features of SAS procedures do not execute in-database. The ability to perform in-database processing

depends on the specific type of analysis that is provided by the statements and options of a procedure. These

limitations are considered reasonable for the use case of accessing and exploring data. After a subset of data

has been selected and a model form has been established, a data sample can be defined by using PROC SQL.

This subset of data can then be accessed directly by the SAS procedure to execute an analysis that includes

functions that do not execute in-database. Use of the SAS in-database procedures reduces the amount of

data that is transferred and makes use of the resources of the Teradata system.

Page 15

Performance and Numerical Accuracy Issues with In-Database Computing F 11

Performance and Numerical Accuracy Issues with In-Database Computing

Consider the following issues when performing in-database computations:

In-database computation can be either faster or slower than computation that uses SAS/ACCESS

software, depending on client performance, network performance, server performance, and the type

of computations involved.

In-database computation tends to be slow when the number of variables in the analysis is large or

when the number of BY groups is large.

Additional overhead is involved with in-database computation, and therefore more memory is required

on the client for in-database computation compared to out-of-database computation.

The SSCP matrix is computed with less accuracy in-database when the variables have small coeffi-

cients of variation.

Enabling and Controlling In-Database Computing

You control in-database computing with options in the LIBNAME statement and the OPTIONS statement.

SQLGENERATION=NONE | DBMS | ALL

specifies the type of in-database computing to be performed. The SQLGENERATION= option can

be specified as either a LIBNAME statement option or as a system option in an OPTIONS statement.

The value used for the SQLGENERATION= option depends on whether the option is specified in the

LIBNAME statement, an OPTIONS statement, or both as follows:

If the SQLGENERATION= option is specified in the LIBNAME statement for a data set, then

with respect to that data set, any value of the SQLGENERATION= option that is specified in

any OPTIONS statement is ignored.

If the SQLGENERATION= option is not specified in a LIBNAME statement for a data set, then

with respect to that data set, the value of the SQLGENERATION= option that is specified in the

most recent OPTIONS statement is used.

If the SQLGENERATION= option is not specified in either the LIBNAME statement for a data

set or in an OPTIONS statement, then by default with respect to that data set, SQLGENERATION=DBMS.

You can specify different values of the SQLGENERATION= option for the DATA= and OUT= data

sets by using different LIBNAME statements for the two data sets.

Page 16

12 F Chapter 1: SAS Analytics Accelerator 1.3 for Teradata: Guide

The values of the SQLGENERATION option are interpreted as follows:

NONE specifies that no in-database computation be performed. In this case, a SAS/STAT

procedure uses SAS/ACCESS to copy the raw data from Teradata to the SAS Work

directory, and the procedure performs all computations within the SAS System.

DBMS specifies that in-database computation be performed for data sets that are DBMS

tables if no system options, LIBNAME options, data set attributes, or procedure

options are incompatible with in-database computation. DBMS is the default

value, and it automatically enables in-database processing.

When the value is DBMS, the procedure uses in-database processing (when possible) and uses conventional SAS processing when the specific procedure statements

and options do not support in-database processing. For example, a REG procedure

statement that contains a residual option computes the SSCP matrix in-database,

but transfers the detail data to the SAS System to create residual analysis and output.

If the procedure attempts to perform a computation in-database but the SQL command fails, the procedure tries to perform that computation out-of-database.

ALL specifies that in-database computation be performed whenever possible.

In-database computation is not applicable to data sets that contain summary statistics, such as OUTEST= and OUTSTAT= data sets, or DATA= data sets for which the TYPE= attribute is CORR, EST,

SSCP, FACTOR, and so on. (See the “Special SAS Data Sets” chapter in SAS/STAT User’s Guide for

the complete list of TYPE= attributes.) Because the data in these tables are already summaries, no

additional summarization can be performed in-database. The SQLGENERATION= option is ignored

with respect to those data sets for which in-database computation is not applicable.

The following system options, which are specified in an OPTIONS statement, also control in-database

computing for SAS/STAT, SAS/ETS, and SAS Enterprise Miner procedures:

MSGLEVEL=N | I

specifies the level of messages to be printed to the log. N is the default value.

N specifies that the following messages be printed in the log:

I specifies that everything printed by MSGLEVEL=N be printed plus the following

a confirmatory note that states that SQL is used for in-database computations,

when that is the case

error messages if anything goes wrong with the SQL commands submitted for

in-database computations

note that states whether SQL is used, when there are SQL error messages

notes:

note that explains why SQL is not used for in-database computations, if that

is the case (however, no note is printed when OPTIONS SQLGENERATION=NONE is specified)

note that explains that the TYPE= attribute is not stored in DBMS tables when

you try to create a special SAS data set (that is, a data set that has a non-blank

value of the TYPE= attribute) as a DBMS table

Page 17

SQL_IP_TRACE=NOTE | SOURCE | ALL

specifies the level of information to be included in the trace output. You can specify more than one

value by enclosing the values in parentheses.

NOTE specifies that the trace output include the following information:

all original messages from the DBMS (ordinarily, the SAS System intercepts

certain confusing messages from the DBMS and replaces them with messages

that can be more easily understood by SAS customers)

note that indicates whether SQL is used for in-database computations, even

when SQLGENERATION=NONE is specified. By default, a note is printed

when SQL is used, but no note is printed when SQL is not used. If you specify

SQL_IP_TRACE=NOTE, a note is always printed.

notes that state the name of the data set engine, the name of the DBMS

database, and so on

notes that explain what kinds of SAS passwords apply to the data set, because

the drivers used for in-database computation do not support SAS passwords

note that indicates that no system options or data set attributes prevent using

SQL for in-database computation, when that is the case (this note does not

rule out the possibility that some procedure options might prevent in-database

computation)

Enabling and Controlling In-Database Computing F 13

SOURCE specifies that trace output include the major SQL commands that are submitted

for in-database computation, but not minor SQL commands such as those used to

obtain metadata.

ALL specifies that trace output include the output for NOTE and SOURCE, plus all

SQL commands that are submitted (including those used to obtain metadata and to

verify that UDFs are published), plus additional details.

SQLMAPPUTTO=NONE | SAS_PUT

is not applicable to the SAS/STAT, SAS/ETS, or SAS Enterprise Miner procedures but is included

here for completeness.

The Base SAS procedures use implicit pass-through to send SQL commands to Teradata. With implicit pass-through, the SQL commands are written in the SAS dialect of SQL and then the SAS

System translates those commands to the Teradata dialect of SQL. The SAS dialect of SQL uses the

PUT function to format variables. The Teradata dialect of SQL uses the SAS_PUT UDF to format

variables. The SQLMAPPUTTO=SAS_PUT option specifies that the PUT function in SAS SQL be

translated (mapped) to the SAS_PUT function in Teradata SQL.

The SAS/STAT, SAS/ETS, and SAS Enterprise Miner procedures write SQL commands directly in the

Teradata dialect of SQL instead of using implicit pass-through. Therefore, this option has no effect

on the SAS/STAT, SAS/ETS, and SAS Enterprise Miner procedures. See the section “SQLMAPPUTTO= System Option” in SAS/ACCESS for Relational Databases: Reference.

FMTERR | NOFMTERR

specifies whether to refrain from using unpublished formats.

If a procedure needs to format a BY variable but that format has not been published (that is, not

installed on the Teradata server in SYSLIB or the current database), the procedure does not perform

Page 18

14 F Chapter 1: SAS Analytics Accelerator 1.3 for Teradata: Guide

in-database computation unless you specify NOFMTERR. NOFMTERR tells the procedure to refrain

from using unpublished formats and to use the unformatted values of the BY variables instead. If you

specify NOFMTERR and a Teradata error occurs when the procedure tests a format, the procedure

uses the unformatted values of the BY variables to define BY groups.

For each BY variable that has a format that cannot be used, a message is printed to that effect. That

message is an error if FMTERR is specified or a note if NOFMTERR is specified. In addition, a note

states whether SQL is used.

For information about publishing formats, see the section “Deploying and Using SAS Formats in

Teradata” on page 37 in this document or the section “Deploying and Using SAS Formats in Teradata”

in SAS/ACCESS 9.2 for Relational Databases: Reference.

DBFMTIGNORE | NODBFMTIGNORE

specifies which type of formats be used for DBMS columns.

Ordinarily, SAS formats control how data values are displayed rather than how data values are stored.

However, SAS/ACCESS software works differently from other SAS products with respect to formats.

NODBFMTIGNORE (the default) causes SAS/ACCESS software, when it creates a DBMS table, to

use the SAS formats assigned to SAS variables to decide which DBMS data types to assign to the

corresponding DBMS columns. For example, the following SAS statements create a DBMS table

named pi_table which contains a column named pi with a DBMS data type of decimal (3,2) with a

total of 3 digits with 2 decimal places:

data dbms.pi_table;

pi = 22/7;

format pi 3.2;

run;

The value that SAS/ACCESS software inserts in the column pi is 3.14 rather than 3.142857142 . ..

(that is, the actual numeric value of the column is truncated to the two decimal places that are specified

in the SAS format). Computations performed with the DBMS column pi suffer from loss of precision

and possibly numeric overflow.

If you specify OPTIONS DBFMTIGNORE, SAS/ACCESS software creates numeric DBMS columns

with the data type DOUBLE PRECISION. This data type is the same as the default data type that the

SAS System uses for numeric variables in ordinary SAS data sets.

The DBFMTIGNORE option does not apply to datetime or character formats.

See the section “Using SAS/ACCESS Software with Teradata: Some Cautionary Notes” for more details.

LIBNAME Statement Options for In-Database Computing

To perform in-database computations, the SAS/STAT, SAS/ETS, and SAS Enterprise Miner procedures

connect to the DBMS by using the credentials (username, password, and so on) in the LIBNAME statement for the DATA= data set. This connection is separate from any connection made by SAS/ACCESS

Page 19

LIBNAME Statement Options for In-Database Computing F 15

software. Therefore, some SAS/ACCESS options such as the CONNECTION= option do not work with the

SAS/STAT, SAS/ETS, and SAS Enterprise Miner in-database procedures. Also, LIBNAME concatenation

cannot be used for in-database computation because the procedure cannot determine which credentials to

use for the connection.

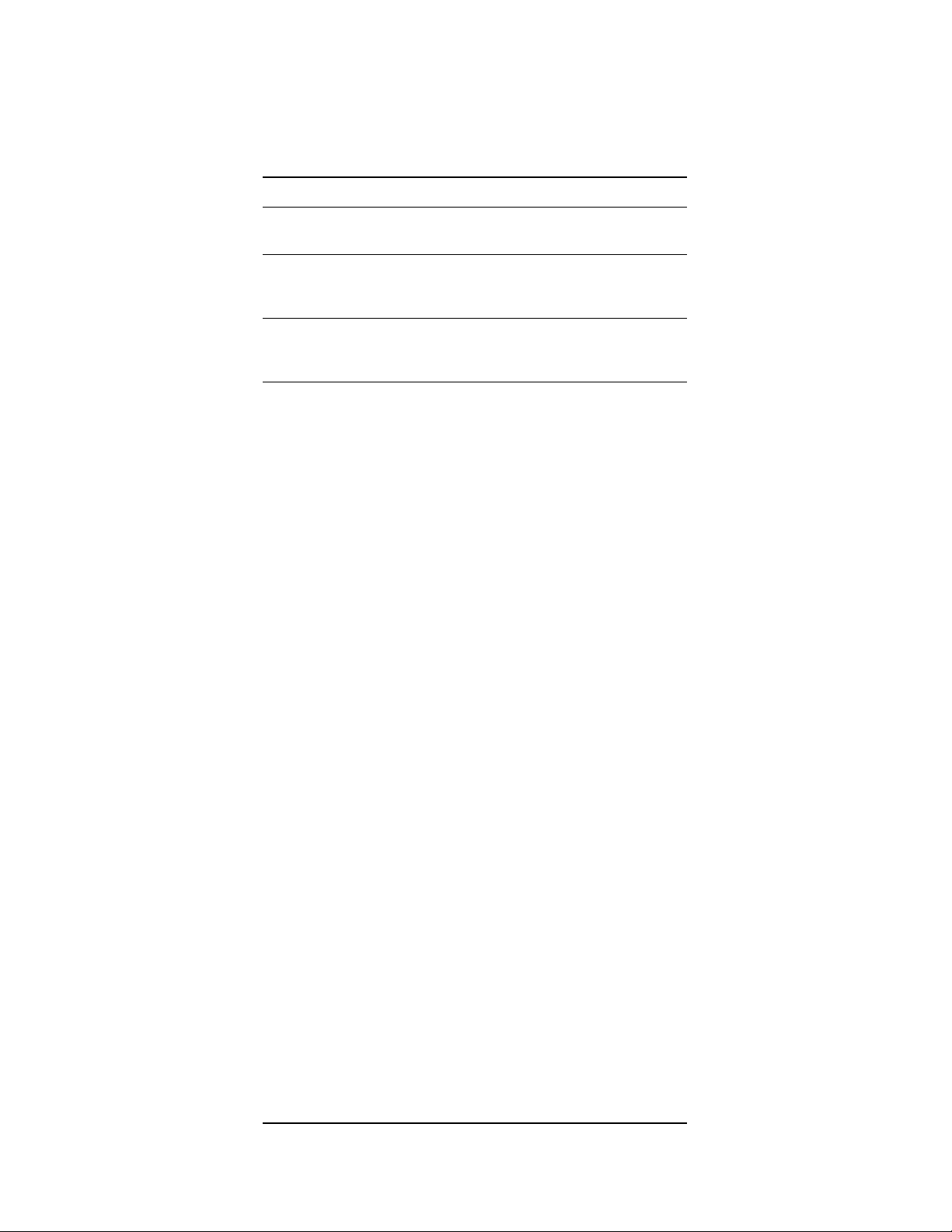

Although the DATABASE= and SCHEMA= options are aliases as data set options, they are not aliases in

a LIBNAME statement. Table 1.2 describes the effects of the DATABASE= and SCHEMA= options in a

LIBNAME statement.

Table 1.2 DATABASE= and SCHEMA= Options for Teradata

Options

specified in

LIBNAME

statement

Neither

DATABASE=

nor SCHEMA=

DATABASE=

but not

SCHEMA=

SCHEMA= but

not

DATABASE=

Both

DATABASE=

and SCHEMA=

Option that

specifies name

of database

where the SAS

System

Option that

specifies name of

database where

OUT= table is

stored**

Option that specifies

name of database where

Teradata looks for

UDFs***

searches for

DATA= table*

USER= USER= USER=

DATABASE= DATABASE= DATABASE=

SCHEMA= SCHEMA= USER=

SCHEMA= SCHEMA= DATABASE=

* The DBMS

user for the

DATA= table

must have the

SELECT

privilege for the

named

database.

** The DBMS user

for the DATA=

table must have the

CREATE TABLE,

SELECT, and

INSERT privileges

for the named

database.

*** If Teradata does not

find the UDF in the

named database, then

Teradata looks in

SYSLIB. The DBMS

user for the DATA= table

must have the EXECUTE

FUNCTION privilege for

the database where the

UDF is found.

Table 1.3 describes whether SAS/ACCESS LIBNAME options for Teradata work correctly with the DATA=

Page 20

16 F Chapter 1: SAS Analytics Accelerator 1.3 for Teradata: Guide

and OUT= options for the SAS/STAT and SAS/ETS in-database procedures. The cell entries in the DATA=

and OUT= columns have the following values:

Yes—indicates that the option works for in-database computing

No—indicates that the option does not work for in-database computing

N/A (not applicable)—indicates that the option does not apply to in-database computing

Some cell entries in the DATA= and OUT= columns have superscripts that are intended to convey additional

information. The meanings of the superscripts are as follows:

A superscript of 1 (Yes1or No1) indicates an option that is recommended for statistical applications

and correctly affects in-database processing as expected.

A superscript of 2 (Yes2or No2) indicates an option that correctly affects in-database processing as

expected.

A superscript of 3 (Yes3or No3) indicates an option that causes the procedure to use SAS/ACCESS

for out-of-database computing.

A superscript of 4 (Yes4or No4) indicates an option that neither works for in-database computing nor

prevents in-database computing, but is unlikely to cause a serious error. See the Comment column for

more information.

Table 1.3 SAS/ACCESS LIBNAME Options for Teradata

LIBNAME option DATA= OUT= Comments

ACCESS=READONLY Yes

2

Yes

2

For an OUT= data set, you

correctly receive a

SAS/ACCESS error message.

BULKLOAD= N/A N/A For out-of-database I/O.

CAST= N/A N/A For out-of-database I/O.

CAST_OVERHEAD_MAXPERCENT= N/A N/A For out-of-database I/O.

CONNECTION= No

4

No

4

In-database SAS/STAT and

SAS/ETS procedures use

separate connections from

SAS/ACCESS software and

therefore work as if

CONNECTION=UNIQUE is

specified.

Page 21

LIBNAME Statement Options for In-Database Computing F 17

LIBNAME option DATA= OUT= Comments

CONNECTION_GROUP= No

3

No

3

In-database SAS/STAT and

SAS/ETS procedures use

separate connections from

SAS/ACCESS software.

DBCOMMIT= N/A N/A For out-of-database I/O.

DBCONINIT= No

3

No

3

In-database SAS/STAT and

SAS/ETS procedures use

separate connections from

SAS/ACCESS software.

DBCONTERM= No

3

No

3

In-database SAS/STAT and

SAS/ETS procedures use

separate connections from

SAS/ACCESS software.

DBCREATE_TABLE_OPTS= N/A Yes

2

DBINDEX= N/A N/A For out-of-database I/O.

DBLIBINIT= N/A N/A Executed when LIBNAME

connection is made.

DBLIBTERM= N/A N/A Executed when LIBNAME is

disconnected.

DBMSTEMP=YES No

3

No

3

In-database SAS/STAT and

SAS/ETS procedures use

separate connections from

SAS/ACCESS software.

Temporary tables can be

accessed only within the

connection in which they are

created, so DBMSTEMP=YES

causes Teradata errors that say

the table does not exist. The

default value NO works.

DBPROMPT= N/A N/A Executed when LIBNAME

connection is made.

DBSASLABEL= Yes

1

N/A DBSASLABEL=NONE is

recommended for correct

procedure output.

Page 22

18 F Chapter 1: SAS Analytics Accelerator 1.3 for Teradata: Guide

LIBNAME option DATA= OUT= Comments

DBSLICEPARM= N/A N/A For out-of-database I/O.

DEFER=NO No

4

No

4

In-database SAS/STAT and

SAS/ETS procedures use

separate connections from

SAS/ACCESS software and

therefore work as if

DEFER=YES is specified.

DIRECT_EXE=DELETE N/A N/A

DIRECT_SQL= N/A N/A PROC SQL only.

ERRLIMIT= N/A N/A For out-of-database I/O.

LOGDB= N/A N/A For out-of-database I/O.

MODE= N/A N/A Applies to explicit pass-through

in PROC SQL.

MULTISTMT= N/A N/A For out-of-database I/O.

MULTI_DATASRC_OPT= N/A N/A For out-of-database I/O with

PROC SQL.

PASSWORD= Yes

2

Yes

2

PREFETCH= N/A N/A For out-of-database I/O.

PRESERVE_COL_NAMES=NO No

PRESERVE_NAMES=NO No

PRESERVE_TAB_NAMES=NO No

READ_ISOLATION_LEVEL= No

READ_LOCK_TYPE= No

READ_MODE_WAIT= No

3

3

3

4

4

4

No

No

No

No

No

No

3

3

3

4

4

4

The default value YES works.

The default value YES works.

The default value YES works.

The option is ignored.

The option is ignored.

The option is ignored.

REREAD_EXPOSURE= N/A N/A

SERVER= Yes

2

Yes

2

Same as TDPID=.

SPOOL= N/A N/A For out-of-database I/O.

Page 23

Using SAS/ACCESS Software with Teradata: Some Cautionary Notes F 19

LIBNAME option DATA= OUT= Comments

SQL_FUNCTIONS= N/A N/A For PROC SQL.

SQL_FUNCTIONS_COPY= N/A N/A For PROC SQL.

SQLGENERATION= Yes

2

Yes

2

If SQLGENERATION= is

specified in the LIBNAME

statement, any value specified in

an OPTIONS statement is

ignored.

TDPID= Yes

UPDATE_ISOLATION_LEVEL= No

UPDATE_LOCK_TYPE= No

UPDATE_MODE_WAIT= No

USER= Yes

2

4

4

4

2

Yes

No

No

No

Yes

2

4

4

4

2

Same as SERVER=.

The option is ignored.

The option is ignored.

The option is ignored.

UTILCONN_TRANSIENT= N/A N/A In-database SAS/STAT and

SAS/ETS procedures use

separate connections from

SAS/ACCESS software.

Using SAS/ACCESS Software with Teradata: Some Cautionary Notes

See the chapter “SAS/ACCESS Interface to Teradata” in SAS/ACCESS for Relational Databases: Reference

for the complete documentation regarding the use of SAS/ACCESS software with Teradata.

When a SAS/STAT or SAS/ETS procedure performs in-database computation, SAS/ACCESS soft-

ware is not used for downloading the rows of the DATA= data set from the DBMS or for uploading

the rows of the OUT= data set to the DBMS. Rather, SAS/ACCESS software is used as follows:

– When the SAS System parses the statements of a SAS/STAT or SAS/ETS procedure,

SAS/ACCESS software looks up the variable names in the DATA= data set. Or, when you do

not specify an explicit variable list, SAS/ACCESS software obtains the names of the variables

from the DBMS.

– SAS/ACCESS software tells the procedure which SAS data types and SAS formats are assigned

to the variables in both the DATA= and OUT= data sets when those data sets are DBMS tables.

Page 24

20 F Chapter 1: SAS Analytics Accelerator 1.3 for Teradata: Guide

– When a SAS/STAT or SAS/ETS procedure attempts to create an OUT= data set, SAS/ACCESS

software generates the CREATE TABLE command and sends it to the DBMS.

Because SAS/ACCESS software is used for these purposes, numerous SAS/ACCESS options affect

in-database computation as described in this and subsequent sections. In addition, SAS/ACCESS

options are important for using the DATA step or out-of-database procedures to create DBMS tables,

and these options can have important consequences for subsequent analyses that are performed using

in-database procedures.

In statistical applications, you should exercise extreme caution with the BULKLOAD=YES option

in the LIBNAME statement. The BULKLOAD=YES option omits duplicate rows. It is not unusual

for statistical data sets to have duplicate rows, and omitting them produces incorrect answers. This

behavior affects the DATA step and procedures that do not perform in-database computation. This

behavior does not affect OUT= data sets that are created in-database by the PRINCOMP or SCORE

procedures.

You should usually specify the following SAS/ACCESS options regardless of whether you are per-

forming in-database computations:

– Either specify OPTIONS NOLABEL or specify the DBSASLABEL=NONE option in a LIB-

NAME statement to prevent SAS/ACCESS software from assigning default labels and thus

adding spurious variable labels to procedure listings, ODS tables, and output SAS data sets.

(Teradata tables do not store SAS variable labels. Variable labels must be specified in the PROC

step using a LABEL or ATTRIB statement. However, when SAS/ACCESS software creates a

SAS data set from a DBMS table, it assigns the variable names as labels by default.)

– Specify DBFMTIGNORE in an OPTIONS statement to prevent SAS/ACCESS software from

assigning data types to output variables based on their SAS formats. Without the DBFMTIGNORE option, output data sets in the DBMS (including ODS tables) might suffer from loss of

precision or numeric overflow, causing loss of data. See the subsection “LIBNAME Statement

Data Conversions” in the section “Data Types for Teradata” in SAS/ACCESS 9.2 for Relational

Databases: Reference.

When SAS/ACCESS software reads a Teradata table or creates an ordinary SAS data set from a

Teradata table, it assigns formats to the SAS variables based on the Teradata data types. These formats

are often inappropriate and can interfere with the proper operation of SAS/STAT, SAS/ETS, and Base

SAS procedures such as PRINT, PRINCOMP, and TRANSREG, especially for character variables.

When using such a data set, you might want to specify a FORMAT statement to remove the formats,

such as the following:

FORMAT _CHARACTER_;

If you use a RENAME= data set option, SAS/ACCESS software assigns the old variable names as

variable labels.

Most SAS data set options are not stored in Teradata tables. In particular, the TYPE= data set option is

not stored in Teradata tables. For example, if you run the CORR procedure to create a TYPE=CORR

data set as a Teradata table, the data set TYPE= is lost and you must specify the TYPE= data set

option every time you use that table.

Page 25

Using SAS/ACCESS Software with Teradata: Some Cautionary Notes F 21

Teradata has nulls instead of missing values. SAS software has 28 kinds of numeric missing values,

but SAS/ACCESS software converts all of them to null when it creates a Teradata table from SAS

input. SAS character missing values are the same as blank strings, but Teradata nulls are different from

blank strings. When SAS/ACCESS software creates a Teradata table from SAS input, blank strings

are converted to nulls. Conversely, when a Teradata table is converted to a SAS data set, numeric

nulls are converted to ordinary SAS numeric missing values, and character nulls are converted to

blank strings.

Comparisons with Teradata nulls work differently from comparisons with SAS missing values. Tera-

data uses three-valued logic, so a comparison with a null produces an “unknown” logical value. In the

SAS System, numeric missing values are regarded as less than any nonmissing value, and character

missing values are (equal to) blank strings. Comparisons between two SAS missing values that the

SAS System considers equal might yield either a null or a true value on Teradata, depending on how

the SAS System translates the syntax.

When the SAS System copies a column from a DBMS table to a new DBMS table, the new DBMS

data type can differ from that of the original table. This is true for both in-database and out-of-database

processing and is a consequence of the manner in which SAS/ACCESS software handles DBMS data

types and SAS formats. See the subsection “LIBNAME Statement Data Conversions” in the section

“Data Types for Teradata” in SAS/ACCESS 9.2 for Relational Databases: Reference. The change in

data types happens in a DATA step with a SET or MERGE statement and in procedure statements that

copy variables from the DATA= data set to the OUT= data set.

You can exercise limited control over data types in output tables by using FORMAT or ATTRIB

statements to assign formats. For out-of-database processing, you can use the DBTYPE= data set

option to specify data types for output tables. However, the DBTYPE= data set option is not supported

for in-database computation by the SAS/STAT and SAS/ETS procedures.

SAS/ACCESS software does not change the following data types:

– BYTE

– CHAR

– DATE

– FLOAT (also known as REAL or DOUBLE PRECISION)

– TIME

– TIMESTAMP

Page 26

22 F Chapter 1: SAS Analytics Accelerator 1.3 for Teradata: Guide

Table 1.4 shows how data types are changed when the SAS System copies a Teradata column.

Table 1.4 Data Type Changes

Original Data Type New Data Type

Byte Data Types

VarByte(n) Byte(n)

Character Data Types

VarChar(n) Char(n)

Numeric Data Types

BYTEINT SMALLINT

SMALLINT INTEGER

INTEGER DECIMAL(11)

DECIMAL INTEGER

DECIMAL(1) BYTEINT

DECIMAL(1,0) BYTEINT

DECIMAL(1,1) DECIMAL(2,1)

DECIMAL(2) SMALLINT

DECIMAL(2,0) SMALLINT

DECIMAL(2,1) DECIMAL(3,1)

DECIMAL(2,2) DECIMAL(3,2)

DECIMAL(3) SMALLINT

DECIMAL(3,0) SMALLINT

DECIMAL(3,1) DECIMAL(4,1)

DECIMAL(3,2) DECIMAL(4,2)

DECIMAL(3,3) DECIMAL(4,3)

DECIMAL(4) INTEGER

DECIMAL(4,0) INTEGER

DECIMAL(4,1) DECIMAL(5,1)

DECIMAL(4,2) DECIMAL(5,2)

DECIMAL(4,3) DECIMAL(5,3)

DECIMAL(4,4) DECIMAL(5,4)

DECIMAL(5) INTEGER

DECIMAL(5,0) INTEGER

DECIMAL(5,1) DECIMAL(6,1)

DECIMAL(5,2) DECIMAL(6,2)

DECIMAL(5,3) DECIMAL(6,3)

DECIMAL(5,4) DECIMAL(6,4)

DECIMAL(5,5) DECIMAL(6,5)

DECIMAL(6) INTEGER

DECIMAL(6,0) INTEGER

DECIMAL(6,1) DECIMAL(7,1)

DECIMAL(6,2) DECIMAL(7,2)

DECIMAL(6,3) DECIMAL(7,3)

DECIMAL(6,4) DECIMAL(7,4)

Page 27

Using SAS/ACCESS Software with Teradata: Some Cautionary Notes F 23

Original Data Type New Data Type

DECIMAL(6,5) DECIMAL(7,5)

DECIMAL(6,6) DECIMAL(7,6)

DECIMAL(7) INTEGER

DECIMAL(7,0) INTEGER

DECIMAL(7,1) DECIMAL(8,1)

DECIMAL(7,2) DECIMAL(8,2)

DECIMAL(7,3) DECIMAL(8,3)

DECIMAL(7,4) DECIMAL(8,4)

DECIMAL(7,5) DECIMAL(8,5)

DECIMAL(7,6) DECIMAL(8,6)

DECIMAL(7,7) DECIMAL(8,7)

DECIMAL(8) INTEGER

DECIMAL(8,0) INTEGER

DECIMAL(8,1) DECIMAL(9,1)

DECIMAL(8,2) DECIMAL(9,2)

DECIMAL(8,3) DECIMAL(9,3)

DECIMAL(8,4) DECIMAL(9,4)

DECIMAL(8,5) DECIMAL(9,5)

DECIMAL(8,6) DECIMAL(9,6)

DECIMAL(8,7) DECIMAL(9,7)

DECIMAL(8,8) DECIMAL(9,8)

DECIMAL(9) DECIMAL(10)

DECIMAL(9,0) DECIMAL(10)

DECIMAL(9,1) DECIMAL(10,1)

DECIMAL(9,2) DECIMAL(10,2)

DECIMAL(9,3) DECIMAL(10,3)

DECIMAL(9,4) DECIMAL(10,4)

DECIMAL(9,5) DECIMAL(10,5)

DECIMAL(9,6) DECIMAL(10,6)

DECIMAL(9,7) DECIMAL(10,7)

DECIMAL(9,8) DECIMAL(10,8)

DECIMAL(9,9) DECIMAL(10,9)

DECIMAL(10) DECIMAL(11)

DECIMAL(10,0) DECIMAL(11)

DECIMAL(10,1) DECIMAL(11,1)

DECIMAL(10,2) DECIMAL(11,2)

DECIMAL(10,3) DECIMAL(11,3)

DECIMAL(10,4) DECIMAL(11,4)

DECIMAL(10,5) DECIMAL(11,5)

DECIMAL(10,6) DECIMAL(11,6)

DECIMAL(10,7) DECIMAL(11,7)

DECIMAL(10,8) DECIMAL(11,8)

DECIMAL(10,9) DECIMAL(11,9)

DECIMAL(10,10) DECIMAL(11,10)

DECIMAL(11) DECIMAL(12)

DECIMAL(11,0) DECIMAL(12)

Page 28

24 F Chapter 1: SAS Analytics Accelerator 1.3 for Teradata: Guide

Original Data Type New Data Type

DECIMAL(11,1) DECIMAL(12,1)

DECIMAL(11,2) DECIMAL(12,2)

DECIMAL(11,3) DECIMAL(12,3)

DECIMAL(11,4) DECIMAL(12,4)

DECIMAL(11,5) DECIMAL(12,5)

DECIMAL(11,6) DECIMAL(12,6)

DECIMAL(11,7) DECIMAL(12,7)

DECIMAL(11,8) DECIMAL(12,8)

DECIMAL(11,9) DECIMAL(12,9)

DECIMAL(11,10) DECIMAL(12,10)

DECIMAL(11,11) DECIMAL(12,11)

DECIMAL(12) DECIMAL(13)

DECIMAL(12,0) DECIMAL(13)

DECIMAL(12,1) DECIMAL(13,1)

DECIMAL(12,2) DECIMAL(13,2)

DECIMAL(12,3) DECIMAL(13,3)

DECIMAL(12,4) DECIMAL(13,4)

DECIMAL(12,5) DECIMAL(13,5)

DECIMAL(12,6) DECIMAL(13,6)

DECIMAL(12,7) DECIMAL(13,7)

DECIMAL(12,8) DECIMAL(13,8)

DECIMAL(12,9) DECIMAL(13,9)

DECIMAL(12,10) DECIMAL(13,10)

DECIMAL(12,11) DECIMAL(13,11)

DECIMAL(12,12) DECIMAL(13,12)

DECIMAL(13) DECIMAL(14)

DECIMAL(13,0) DECIMAL(14)

DECIMAL(13,1) DECIMAL(14,1)

DECIMAL(13,2) DECIMAL(14,2)

DECIMAL(13,3) DECIMAL(14,3)

DECIMAL(13,4) DECIMAL(14,4)

DECIMAL(13,5) DECIMAL(14,5)

DECIMAL(13,6) DECIMAL(14,6)

DECIMAL(13,7) DECIMAL(14,7)

DECIMAL(13,8) DECIMAL(14,8)

DECIMAL(13,9) DECIMAL(14,9)

DECIMAL(13,10) DECIMAL(14,10)

DECIMAL(13,11) DECIMAL(14,11)

DECIMAL(13,12) DECIMAL(14,12)

DECIMAL(13,13) DECIMAL(14,13)

DECIMAL(14) DECIMAL(15)

DECIMAL(14,0) DECIMAL(15)

DECIMAL(14,1) DECIMAL(15,1)

DECIMAL(14,2) DECIMAL(15,2)

DECIMAL(14,3) DECIMAL(15,3)

DECIMAL(14,4) DECIMAL(15,4)

Page 29

Using SAS/ACCESS Software with Teradata: Some Cautionary Notes F 25

Original Data Type New Data Type

DECIMAL(14,5) DECIMAL(15,5)

DECIMAL(14,6) DECIMAL(15,6)

DECIMAL(14,7) DECIMAL(15,7)

DECIMAL(14,8) DECIMAL(15,8)

DECIMAL(14,9) DECIMAL(15,9)

DECIMAL(14,10) DECIMAL(15,10)

DECIMAL(14,11) DECIMAL(15,11)

DECIMAL(14,12) DECIMAL(15,12)

DECIMAL(14,13) DECIMAL(15,13)

DECIMAL(14,14) DECIMAL(15,14)

DECIMAL(15) DECIMAL(16)

DECIMAL(15,0) DECIMAL(16)

DECIMAL(15,1) DECIMAL(16,1)

DECIMAL(15,2) DECIMAL(16,2)

DECIMAL(15,3) DECIMAL(16,3)

DECIMAL(15,4) DECIMAL(16,4)

DECIMAL(15,5) DECIMAL(16,5)

DECIMAL(15,6) DECIMAL(16,6)

DECIMAL(15,7) DECIMAL(16,7)

DECIMAL(15,8) DECIMAL(16,8)

DECIMAL(15,9) DECIMAL(16,9)

DECIMAL(15,10) DECIMAL(16,10)

DECIMAL(15,11) DECIMAL(16,11)

DECIMAL(15,12) DECIMAL(16,12)

DECIMAL(15,13) DECIMAL(16,13)

DECIMAL(15,14) DECIMAL(16,14)

DECIMAL(15,15) DECIMAL(16,15)

DECIMAL(16) DECIMAL(17)

DECIMAL(16,0) DECIMAL(17)

DECIMAL(16,1) DECIMAL(17,1)

DECIMAL(16,2) DECIMAL(17,2)

DECIMAL(16,3) DECIMAL(17,3)

DECIMAL(16,4) DECIMAL(17,4)

DECIMAL(16,5) DECIMAL(17,5)

DECIMAL(16,6) DECIMAL(17,6)

DECIMAL(16,7) DECIMAL(17,7)

DECIMAL(16,8) DECIMAL(17,8)

DECIMAL(16,9) DECIMAL(17,9)

DECIMAL(16,10) DECIMAL(17,10)

DECIMAL(16,11) DECIMAL(17,11)

DECIMAL(16,12) DECIMAL(17,12)

DECIMAL(16,13) DECIMAL(17,13)

DECIMAL(16,14) DECIMAL(17,14)

DECIMAL(16,15) DECIMAL(17,15)

DECIMAL(16,16) DECIMAL(17,16)

DECIMAL(17) DECIMAL(18)

Page 30

26 F Chapter 1: SAS Analytics Accelerator 1.3 for Teradata: Guide

Original Data Type New Data Type

DECIMAL(17,0) DECIMAL(18)

DECIMAL(17,1) DECIMAL(18,1)

DECIMAL(17,2) DECIMAL(18,2)

DECIMAL(17,3) DECIMAL(18,3)

DECIMAL(17,4) DECIMAL(18,4)

DECIMAL(17,5) DECIMAL(18,5)

DECIMAL(17,6) DECIMAL(18,6)

DECIMAL(17,7) DECIMAL(18,7)

DECIMAL(17,8) DECIMAL(18,8)

DECIMAL(17,9) DECIMAL(18,9)

DECIMAL(17,10) DECIMAL(18,10)

DECIMAL(17,11) DECIMAL(18,11)

DECIMAL(17,12) DECIMAL(18,12)

DECIMAL(17,13) DECIMAL(18,13)

DECIMAL(17,14) DECIMAL(18,14)

DECIMAL(17,15) DECIMAL(18,15)

DECIMAL(17,16) DECIMAL(18,16)

DECIMAL(17,17) DECIMAL(18,17)

DECIMAL(n) for n > 17 FLOAT

Time Data Types

TIME with TIMEZONE CHAR(21)

TIME(0) with TIMEZONE CHAR(14)

TIME(1) with TIMEZONE CHAR(16)

TIME(2) with TIMEZONE CHAR(17)

TIME(3) with TIMEZONE CHAR(18)

TIME(4) with TIMEZONE CHAR(19)

TIME(5) with TIMEZONE CHAR(20)

TIME(6) with TIMEZONE CHAR(21)

Timestamp Data Types

TIMESTAMP with TIMEZONE CHAR(32)

TIMESTAMP(0) with TIMEZONE CHAR(25)

TIMESTAMP(1) with TIMEZONE CHAR(27)

TIMESTAMP(2) with TIMEZONE CHAR(28)

TIMESTAMP(3) with TIMEZONE CHAR(29)

TIMESTAMP(4) with TIMEZONE CHAR(30)

TIMESTAMP(5) with TIMEZONE CHAR(31)

TIMESTAMP(6) with TIMEZONE CHAR(32)

Interval Data Types

INTERVAL YEAR CHAR(3)

INTERVAL YEAR(1) CHAR(2)

INTERVAL YEAR(2) CHAR(3)

INTERVAL YEAR(3) CHAR(4)

INTERVAL YEAR(4) CHAR(5)

Page 31

Using SAS/ACCESS Software with Teradata: Some Cautionary Notes F 27

Original Data Type New Data Type

INTERVAL YEAR to MONTH CHAR(6)

INTERVAL YEAR(1) to MONTH CHAR(5)

INTERVAL YEAR(2) to MONTH CHAR(6)

INTERVAL YEAR(3) to MONTH CHAR(7)

INTERVAL YEAR(4) to MONTH CHAR(8)

INTERVAL MONTH CHAR(3)

INTERVAL MONTH(1) CHAR(2)

INTERVAL MONTH(2) CHAR(3)

INTERVAL MONTH(3) CHAR(4)

INTERVAL MONTH(4) CHAR(5)

INTERVAL DAY CHAR(3)

INTERVAL DAY(1) CHAR(2)

INTERVAL DAY(2) CHAR(3)

INTERVAL DAY(3) CHAR(4)

INTERVAL DAY(4) CHAR(5)

INTERVAL DAY to HOUR CHAR(6)

INTERVAL DAY(1) to HOUR CHAR(5)

INTERVAL DAY(2) to HOUR CHAR(6)

INTERVAL DAY(3) to HOUR CHAR(7)

INTERVAL DAY(4) to HOUR CHAR(8)

INTERVAL DAY to MINUTE CHAR(9)

INTERVAL DAY(1) to MINUTE CHAR(8)

INTERVAL DAY(2) to MINUTE CHAR(9)

INTERVAL DAY(3) to MINUTE CHAR(10)

INTERVAL DAY(4) to MINUTE CHAR(11)

INTERVAL DAY to SECOND CHAR(19)

INTERVAL DAY(1) to SECOND CHAR(18)

INTERVAL DAY(2) to SECOND CHAR(19)

INTERVAL DAY(3) to SECOND CHAR(20)

INTERVAL DAY(4) to SECOND CHAR(21)

INTERVAL HOUR CHAR(3)

INTERVAL HOUR(1) CHAR(2)

INTERVAL HOUR(2) CHAR(3)

INTERVAL HOUR(3) CHAR(4)

INTERVAL HOUR(4) CHAR(5)

INTERVAL HOUR to MINUTE CHAR(6)

INTERVAL HOUR(1) to MINUTE CHAR(5)

INTERVAL HOUR(2) to MINUTE CHAR(6)

INTERVAL HOUR(3) to MINUTE CHAR(7)

INTERVAL HOUR(4) to MINUTE CHAR(8)

INTERVAL HOUR to SECOND CHAR(16)

INTERVAL HOUR(1) to SECOND CHAR(15)

INTERVAL HOUR(2) to SECOND CHAR(16)

INTERVAL HOUR(3) to SECOND CHAR(17)

INTERVAL HOUR(4) to SECOND CHAR(18)

INTERVAL MINUTE CHAR(3)

Page 32

28 F Chapter 1: SAS Analytics Accelerator 1.3 for Teradata: Guide

Original Data Type New Data Type

INTERVAL MINUTE(1) CHAR(2)

INTERVAL MINUTE(2) CHAR(3)

INTERVAL MINUTE(3) CHAR(4)

INTERVAL MINUTE(4) CHAR(5)

INTERVAL MINUTE to SECOND CHAR(13)

INTERVAL MINUTE(1) to SECOND CHAR(12)

INTERVAL MINUTE(2) to SECOND CHAR(13)

INTERVAL MINUTE(3) to SECOND CHAR(14)

INTERVAL MINUTE(4) to SECOND CHAR(15)

INTERVAL SECOND CHAR(10)

INTERVAL SECOND(1) CHAR(9)

INTERVAL SECOND(2) CHAR(10)

INTERVAL SECOND(3) CHAR(11)

INTERVAL SECOND(4) CHAR(12)

Indeterminate Row Order in a DBMS Table

In an ordinary SAS data set, the observations are ordered in a consistent way. For example, if you run

the PRINT procedure several times, the observations are always printed in the same order. But in a DBMS

table, the order of the rows is not defined. If you run PROC PRINT several times on a DBMS table, the rows

might be printed in a different order every time. If you run a statistical analysis that depends on row order

in a DBMS table (such as the Durbin-Watson statistic in the REG procedure or tables with ORDER=DATA

in the FREQ procedure), the results might differ every time you run the analysis.

To get a consistent row order for a DBMS table, you can use the DBCONDITION= data set option to specify

an ORDER BY clause with one or more variables that defines a unique order for the rows. However, the

DBCONDITION= option works only for out-of-database processing. The DBCONDITION= option does

not work with in-database processing.

Following is an example that uses the DBCONDITION= option for out-of-database processing to control

the row order in PROC PRINT:

options ls=72 options nodate nostimer nonumber;

%let server =sl91204;

%let user =sas;

%let password=sas;

libname tera teradata server=&server user=&user password=&password;

The SAS System generates the following note:

NOTE: Libref TERA was successfully assigned as follows:

Engine: TERADATA

Physical Name: sl91204

Page 33

Indeterminate Row Order in a DBMS Table F 29

proc delete data=tera.test;

run;

The SAS System generates the following warning:

WARNING: File TERA.test.DATA does not exist.

data tera.test;

do i=1 to 3;

do j=1 to 4;

x=rannor(12345);

output;

end;

end;

run;

The SAS System generates the following note:

NOTE: The data set TERA.test has 12 observations and 3 variables.

title "Indeterminate Row Order";

proc print data=tera.test;

run;

The SAS System generates the following output:

Indeterminate Row Order

Obs i j x

1 1 1 -0.04298

2 3 1 0.57221

3 1 2 -0.09999

4 3 2 0.17571

5 1 3 -0.24349

6 3 3 -1.44361

7 1 4 -0.22226

8 3 4 0.44887

9 2 1 0.07353

10 2 2 0.49937

11 2 3 -1.52119

12 2 4 0.79180

NOTE: There were 12 observations read from the data set TERA.test.

title "Order Rows by I and J";

proc print data=tera.test(dbcondition="order by i, j");

run;

Page 34

30 F Chapter 1: SAS Analytics Accelerator 1.3 for Teradata: Guide

The SAS System generates the following output:

Order Rows by I and J

Obs i j x

1 1 1 -0.04298

2 1 2 -0.09999

3 1 3 -0.24349

4 1 4 -0.22226

5 2 1 0.07353

6 2 2 0.49937

7 2 3 -1.52119

8 2 4 0.79180

9 3 1 0.57221

10 3 2 0.17571

11 3 3 -1.44361

12 3 4 0.44887

NOTE: There were 12 observations read from the data set TERA.test.

title "Order Rows by X";

proc print data=tera.test(dbcondition="order by x");

run;

The SAS System generates the following output:

Order Rows by X

Obs i j x

1 2 3 -1.52119

2 3 3 -1.44361

3 1 3 -0.24349

4 1 4 -0.22226

5 1 2 -0.09999

6 1 1 -0.04298

7 2 1 0.07353

8 3 2 0.17571

9 3 4 0.44887

10 2 2 0.49937

11 3 1 0.57221

12 2 4 0.79180

NOTE: There were 12 observations read from the data set TERA.test.

proc delete data=tera.test;

run;

The SAS System generates the following note:

NOTE: Deleting TERA.test (memtype=DATA).

Page 35

SAS/ACCESS Data Set Options for Teradata F 31

SAS/ACCESS Data Set Options for Teradata

Table 1.5 describes whether SAS/ACCESS data set options for Teradata work correctly with the DATA=

and OUT= SAS/STAT and SAS/ETS procedure options. The cell entries in the DATA= and OUT= columns

have the following values:

Yes—indicates that the option works for in-database computing

No—indicates that the option does not work for in-database computing

N/A (not applicable)—indicates that the option does not apply to in-database computing

Sometimes—see Comment column for details

Some cell entries in the DATA= and OUT= columns have superscripts that are intended to convey additional

information. The meanings of the superscripts are as follows:

A superscript of 1 (Yes1or No1) indicates an option that correctly affects in-database processing as

expected.

A superscript of 2 (Yes2or No2) indicates an option that works differently for in-database computing

compared to out-of-database computing.

A superscript of 3 (Yes3, No3, N/A3, or Sometimes3) indicates an option that causes the procedure to

use SAS/ACCESS for out-of-database computing.

A superscript of 4 (Yes4or No4) indicates an option that neither works for in-database computing nor

prevents in-database computing, but is unlikely to cause a serious error. See the Comment column for

more information.

A superscript of 5 (Yes5or No5) indicates an option that might cause incorrect answers or Teradata

syntax errors.

NOTE : As a data set option, DATABASE= is an alias for SCHEMA=, but as LIBNAME options,

DATABASE= and SCHEMA= are distinct options. The data set options DATABASE= and SCHEMA=

work the same way as the LIBNAME option SCHEMA=.

If you specify the same option as both a data set option and as a LIBNAME option, the value of the data set

option is used for that data set.

Table 1.5 SAS/ACCESS Data Set Options for Teradata

Data Set Option DATA= OUT= Comments

BL_CONTROL= N/A N/A For out-of-database I/O.

BL_DATAFILE= N/A N/A For out-of-database I/O.

Page 36

32 F Chapter 1: SAS Analytics Accelerator 1.3 for Teradata: Guide

Data Set Option DATA= OUT= Comments

BL_LOG= N/A N/A For out-of-database I/O.

BUFFERS= N/A N/A For out-of-database I/O.

BULKLOAD= N/A N/A For out-of-database I/O.

CAST= N/A N/A For out-of-database I/O.

CAST_OVERHEAD_MAXPERCENT= N/A N/A For out-of-database I/O.

DATABASE= No

5

No

5

DATABASE= is an alias for

SCHEMA= as a data set option.

DATABASE= as a data set

option does not work like

DATABASE= as a LIBNAME

option.

DBCOMMIT= N/A N/A For out-of-database I/O.

DBCONDITION= No

3

DBCREATE_TABLE_OPTS= N/A Yes

No

3

1

DBFORCE= N/A N/A In-database computation works

like DBFORCE=YES,

regardless of what value is

specified for DBFORCE=.

SAS/ACCESS software defaults

to DBFORCE=NO. However,

DBFORCE= would have an

effect on in-database

computation only if DBTYPE=

specified a Teradata data type

that resulted in truncation,

whereas specifying DBTYPE=

prevents in-database

computation.

DBINDEX= N/A N/A For out-of-database I/O.

DBKEY= N/A N/A For out-of-database I/O.

Page 37

SAS/ACCESS Data Set Options for Teradata F 33

Data Set Option DATA= OUT= Comments

DBLABEL= N/A N/A DBLABEL= applies only when

the DATA= data set is a SAS

data set with variable labels and

the OUT= data set is a DBMS

table. In-database computation

cannot be performed when the

DATA= data set is not a DBMS

table.

DBMASTER= N/A N/A

DBNULL= N/A

DBSASLABEL= Yes

3

1

3

No

N/A DBSASLABEL=NONE is

recommended for correct

procedure output, but it is

normally easier to specify this

in the LIBNAME statement.

DBSLICE= N/A N/A For out-of-database I/O.

DBSLICEPARM= N/A N/A For out-of-database I/O.

DBTYPE= N/A

3

Sometimes3DBTYPE= prevents in-database

computation because some

Teradata types such as DATE

and TIMESTAMP have not

been implemented.

ERRLIMIT= N/A N/A For out-of-database I/O.

MBUFSIZE= N/A N/A For out-of-database I/O.

ML_CHECKPOINT= N/A N/A For out-of-database I/O.

ML_ERROR1= N/A N/A For out-of-database I/O.

ML_ERROR2= N/A N/A For out-of-database I/O.

ML_LOG= N/A N/A For out-of-database I/O.

ML_RESTART= N/A N/A For out-of-database I/O.

ML_WORK= N/A N/A For out-of-database I/O.

MULTILOAD= N/A N/A For out-of-database I/O.

Page 38

34 F Chapter 1: SAS Analytics Accelerator 1.3 for Teradata: Guide

Data Set Option DATA= OUT= Comments

MULTISTMT= N/A N/A For out-of-database I/O.

NULLCHAR= N/A

NULLCHARVAL= N/A

PRESERVE_COL_NAMES=NO No

READ_ISOLATION_LEVEL= No

READ_LOCK_TYPE= No

READ_MODE_WAIT= No

SCHEMA= Yes

3

3

3

4

4

4

1

No

No

No

No

No

No

Yes

SET= N/A Yes

3

3

3

4

4

4

1

2

The default value YES works.

The option is ignored.

The option is ignored.

The option is ignored.

If the DATA= table contains

duplicate rows, the procedure

attempts to insert duplicate rows

into the OUT= table, and the

results for in-database and

out-of-database processing

differ. With in-database

processing, duplicate rows are

discarded and do not cause

errors. With out-of-database

processing, duplicate rows

cause errors and a ROLLBACK

is issued. With

MODE=TERADATA, the

ROLLBACK produces an

empty or incomplete table. With

MODE=ANSI, the

ROLLBACK produces an

empty table.

SLEEP= N/A N/A For out-of-database I/O.

TENACITY= N/A N/A For out-of-database I/O.

UPDATE_ISOLATION_LEVEL= No

UPDATE_LOCK_TYPE= No

UPDATE_MODE_WAIT= No

4

4

4

No

No

No

4

4

4

The option is ignored.

The option is ignored.

The option is ignored.

Page 39

Base SAS Data Set Options F 35

Base SAS Data Set Options

Table 1.6 describes whether the Base SAS data set options work with the DATA= and OUT= SAS/STAT and

SAS/ETS procedure options for in-database computing. The cell entries in the DATA= and OUT= columns

have the following values:

Yes—indicates that the option works for in-database computing

No—indicates that the option does not work for in-database computing

N/A (not applicable)—indicates that the option does not apply to in-database computing