Avid® Interplay™ Engine

Failover Guide

Legal Notices

Product specifications are subject to change without notice and do not represent a commitment on the part of Avid Technology,

Inc.

The software described in this document is furnished under a license agreement. You can obtain a copy of that license by

visiting Avid's Web site at www.avid.com. The terms of that license are also available in the product in the same directory as

the software. The software may not be reverse assembled and may be used or copied only in accordance with the terms of the

license agreement. It is against the law to copy the software on any medium except as specifically allowed in the license

agreement.

Avid products or portions thereof are protected by one or more of the following United States Patents: 4,970,663; 5,267,351;

5,309,528; 5,355,450; 5,396,594; 5,440,348; 5,467,288; 5,513,375; 5,528,310; 5,557,423; 5,577,190; 5,584,006; 5,627,765;

5,640,601; 5,644,364; 5,654,737; 5,715,018; 5,719,570; 5,724,605; 5,726,717; 5,729,673; 5,745,637; 5,752,029; 5,754,851;

5,799,150; 5,812,216; 5,828,678; 5,842,014; 5,852,435; 5,999,406; 6,038,573; 6,061,758; 6,141,007; 6,211,869; 6,532,043;

6,546,190; 6,596,031; 6,636,869; 6,747,705; 6,763,523; 6,766,357; 6,813,622; 6,847,373; 7,081,900; RE40,107; D352,278;

D372,478; D373,778; D392,267; D392,268; D392,269; D395,291; D396,853; D398,912. Other patents are pending.

This document is protected under copyright law. An authorized licensee of Avid Interplay may reproduce this publication for the

licensee’s own use in learning how to use the software. This document may not be reproduced or distributed, in whole or in

part, for commercial purposes, such as selling copies of this document or providing support or educational services to others.

This document is supplied as a guide for Interplay Framework. Reasonable care has been taken in preparing the information it

contains. However, this document may contain omissions, technical inaccuracies, or typographical errors. Avid Technology,

Inc. does not accept responsibility of any kind for customers’ losses due to the use of this document. Product specifications

are subject to change without notice.

Copyright © 2008 Avid Technology, Inc. and its licensors. All rights reserved.

The following disclaimer is required by Sam Leffler and Silicon Graphics, Inc. for the use of their TIFF library:

Copyright © 1988–1997 Sam Leffler

Copyright © 1991–1997 Silicon Graphics, Inc.

Permission to use, copy, modify, distribute, and sell this software [i.e., the TIFF library] and its documentation for any purpose

is hereby granted without fee, provided that (i) the above copyright notices and this permission notice appear in all copies of

the software and related documentation, and (ii) the names of Sam Leffler and Silicon Graphics may not be used in any

advertising or publicity relating to the software without the specific, prior written permission of Sam Leffler and Silicon

Graphics.

THE SOFTWARE IS PROVIDED “AS-IS” AND WITHOUT WARRANTY OF ANY KIND, EXPRESS, IMPLIED OR

OTHERWISE, INCLUDING WITHOUT LIMITATION, ANY WARRANTY OF MERCHANTABILITY OR FITNESS FOR A

PARTICULAR PURPOSE.

IN NO EVENT SHALL SAM LEFFLER OR SILICON GRAPHICS BE LIABLE FOR ANY SPECIAL, INCIDENTAL, INDIRECT

OR CONSEQUENTIAL DAMAGES OF ANY KIND, OR ANY DAMAGES WHATSOEVER RESULTING FROM LOSS OF USE,

DATA OR PROFITS, WHETHER OR NOT ADVISED OF THE POSSIBILITY OF DAMAGE, AND ON ANY THEORY OF

LIABILITY, ARISING OUT OF OR IN CONNECTION WITH THE USE OR PERFORMANCE OF THIS SOFTWARE.

The following disclaimer is required by the Independent JPEG Group:

This software is based in part on the work of the Independent JPEG Group.

This Software may contain components licensed under the following conditions:

Copyright (c) 1989 The Regents of the University of California. All rights reserved.

Redistribution and use in source and binary forms are permitted provided that the above copyright notice and this paragraph

are duplicated in all such forms and that any documentation, advertising materials, and other materials related to such

distribution and use acknowledge that the software was developed by the University of California, Berkeley. The name of the

University may not be used to endorse or promote products derived from this software without specific prior written

permission. THIS SOFTWARE IS PROVIDED ``AS IS'' AND WITHOUT ANY EXPRESS OR IMPLIED WARRANTIES,

INCLUDING, WITHOUT LIMITATION, THE IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR A

PARTICULAR PURPOSE.

Copyright (C) 1989, 1991 by Jef Poskanzer.

Permission to use, copy, modify, and distribute this software and its documentation for any purpose and without fee is hereby

granted, provided that the above copyright notice appear in all copies and that both that copyright notice and this permission

notice appear in supporting documentation. This software is provided "as is" without express or implied warranty.

Copyright 1995, Trinity College Computing Center. Written by David Chappell.

Permission to use, copy, modify, and distribute this software and its documentation for any purpose and without fee is hereby

granted, provided that the above copyright notice appear in all copies and that both that copyright notice and this permission

notice appear in supporting documentation. This software is provided "as is" without express or implied warranty.

Copyright 1996 Daniel Dardailler.

Permission to use, copy, modify, distribute, and sell this software for any purpose is hereby granted without fee, provided that

the above copyright notice appear in all copies and that both that copyright notice and this permission notice appear in

supporting documentation, and that the name of Daniel Dardailler not be used in advertising or publicity pertaining to

distribution of the software without specific, written prior permission. Daniel Dardailler makes no representations about the

suitability of this software for any purpose. It is provided "as is" without express or implied warranty.

Modifications Copyright 1999 Matt Koss, under the same license as above.

Copyright (c) 1991 by AT&T.

Permission to use, copy, modify, and distribute this software for any purpose without fee is hereby granted, provided that this

entire notice is included in all copies of any software which is or includes a copy or modification of this software and in all

copies of the supporting documentation for such software.

THIS SOFTWARE IS BEING PROVIDED "AS IS", WITHOUT ANY EXPRESS OR IMPLIED WARRANTY. IN PARTICULAR,

NEITHER THE AUTHOR NOR AT&T MAKES ANY REPRESENTATION OR WARRANTY OF ANY KIND CONCERNING THE

MERCHANTABILITY OF THIS SOFTWARE OR ITS FITNESS FOR ANY PARTICULAR PURPOSE.

This product includes software developed by the University of California, Berkeley and its contributors.

The following disclaimer is required by Nexidia Inc.:

© 2006 Nexidia. All rights reserved.

Manufactured under license from the Georgia Tech Research Corporation, U.S.A. Patent Pending.

The following disclaimer is required by Paradigm Matrix:

Portions of this software licensed from Paradigm Matrix.

The following disclaimer is required by Ray Sauers Associates, Inc.:

“Install-It” is licensed from Ray Sauers Associates, Inc. End-User is prohibited from taking any action to derive a source code

equivalent of “Install-It,” including by reverse assembly or reverse compilation, Ray Sauers Associates, Inc. shall in no event be

liable for any damages resulting from reseller’s failure to perform reseller’s obligation; or any damages arising from use or

operation of reseller’s products or the software; or any other damages, including but not limited to, incidental, direct, indirect,

special or consequential Damages including lost profits, or damages resulting from loss of use or inability to use reseller’s

products or the software for any reason including copyright or patent infringement, or lost data, even if Ray Sauers Associates

has been advised, knew or should have known of the possibility of such damages.

The following disclaimer is required by Videomedia, Inc.:

“Videomedia, Inc. makes no warranties whatsoever, either express or implied, regarding this product, including warranties with

respect to its merchantability or its fitness for any particular purpose.”

“This software contains V-LAN ver. 3.0 Command Protocols which communicate with V-LAN ver. 3.0 products developed by

Videomedia, Inc. and V-LAN ver. 3.0 compatible products developed by third parties under license from Videomedia, Inc. Use

of this software will allow “frame accurate” editing control of applicable videotape recorder decks, videodisc recorders/players

and the like.”

The following disclaimer is required by Altura Software, Inc. for the use of its Mac2Win software and Sample

Source Code:

©1993–1998 Altura Software, Inc.

The following disclaimer is required by 3Prong.com Inc.:

Certain waveform and vector monitoring capabilities are provided under a license from 3Prong.com Inc.

The following disclaimer is required by Interplay Entertainment Corp.:

The “Interplay” name is used with the permission of Interplay Entertainment Corp., which bears no responsibility for Avid

products.

This product includes portions of the Alloy Look & Feel software from Incors GmbH.

This product includes software developed by the Apache Software Foundation (http://www.apache.org/).

© DevelopMentor

This product may include the JCifs library, for which the following notice applies:

JCifs © Copyright 2004, The JCIFS Project, is licensed under LGPL (http://jcifs.samba.org/). See the LGPL.txt file in the Third

Party Software directory on the installation CD.

Avid Interplay contains components licensed from LavanTech. These components may only be used as part of and in

connection with Avid Interplay.

Attn. Government User(s). Restricted Rights Legend

U.S. GOVERNMENT RESTRICTED RIGHTS. This Software and its documentation are “commercial computer software” or

“commercial computer software documentation.” In the event that such Software or documentation is acquired by or on behalf

of a unit or agency of the U.S. Government, all rights with respect to this Software and documentation are subject to the terms

of the License Agreement, pursuant to FAR §12.212(a) and/or DFARS §227.7202-1(a), as applicable.

Trademarks

888 I/O, Adrenaline, AirPlay, AirSPACE, AirSPACE HD, AirSpeed, ALEX, Alexis, AniMatte, AudioSuite, AudioVision, AutoSync,

Avid, Avid Advanced Response, Avid DNA, Avid DNxcel, Avid DNxHD, AVIDdrive, AVIDdrive Towers, Avid DS Assist Station,

Avid ISIS, Avid Learning Excellerator, Avid Liquid, Avid Mojo, AvidNet, AvidNetwork, Avid Remote Response, AVIDstripe,

Avid Unity, Avid Unity ISIS, Avid Xpress, AVoption, AVX, CamCutter, CaptureManager, ChromaCurve, ChromaWheel,

Conectiv, CountDown, DAE, Dazzle, Deko, DekoCast, D-Fi, D-fx, DigiDelivery, Digidesign, Digidesign Audio Engine,

Digidesign Intelligent Noise Reduction, DigiDrive, Digital Nonlinear Accelerator, DigiTranslator, DINR, DNxchange, do more,

D-Verb, Equinox, ExpertRender, Face Robot, FACE ROBOT, FastBreak, Fast Track, FieldPak, Film Composer, FilmScribe,

FluidMotion, FXDeko, HIIP, HyperSPACE, HyperSPACE HDCAM, IllusionFX, Image Independence, iNEWS,

iNEWS ControlAir, Instinct, Interplay, Intraframe, iS9, iS18, iS23, iS36, ISIS, IsoSync, LaunchPad, Lightning, Lo-Fi,

Magic Mask, make manage move | media, Marquee, Matador, Maxim, MCXpress, Media Browse, Media Composer,

MediaDock, MediaDock Shuttle, Media Fusion, Media Illusion, MediaLog, Media Reader, Media Recorder, MEDIArray,

MediaShare, MediaStream, Meridien, MetaFuze, MetaSync, MicroTrack, MissionControl, MovieBox, NaturalMatch, Nearchive,

NetReview, NewsCutter, Nitris, OMF, OMF Interchange, OMM, Open Media Framework, Open Media Management, PCTV,

Pinnacle MediaSuite, Pinnacle Studio, Pinnacle Systems, ProEncode, Pro Tools, QuietDrive, Recti-Fi, RetroLoop, rS9, rS18,

SalesView, Sci-Fi, ScriptSync, SecureProductionEnvironment, Show Center, Softimage, Sound Designer II, SPACE,

SPACEShift, SpectraGraph, SpectraMatte, Sputnik, SteadyGlide, SubCap, Symphony, SynchroScience, TARGA, Thunder,

Thunder Station, TimeLiner, Torq, Trilligent, UnityRAID, Vari-Fi, Velvet, Video RAID, Video Slave Driver, VideoSPACE, Xdeck,

and X-Session are either registered trademarks or trademarks of Avid Technology, Inc. in the United States and/or other

countries.

Adobe and Photoshop are either registered trademarks or trademarks of Adobe Systems Incorporated in the United States

and/or other countries. Apple and Macintosh are trademarks of Apple Computer, Inc., registered in the U.S. and other

countries. Windows is either a registered trademark or trademark of Microsoft Corporation in the United States and/or other

countries. All other trademarks contained herein are the property of their respective owners.

GOT FOOTAGE?

Editors — Filmmakers — Special Effects Artists — Game Developers — Animators — Educators — Broadcasters — Content

creators of every genre — Just finished an incredible project and want to share it with the world?

Send us your reels and we may use your footage in our show reel or demo!*

For a copy of our release and Avid’s mailing address, go to www.avid.com/footage.

*Note: Avid cannot guarantee the use of materials submitted.

Avid Interplay Engine Failover Guide • 0130-07643-02 Rev C • June 2008 • This document is distributed by

Avid in online (electronic) form only, and is not available for purchase in printed form.

Contents

Using This Guide . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 9

Symbols and Conventions . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 9

If You Need Help. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 10

Viewing User Documentation on the Interplay Portal . . . . . . . . . . . . . . . . . . . . . . . . 11

Accessing the Online Library . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 11

How to Order Documentation . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 11

Avid Training Services . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 12

Chapter 1 Automatic Server Failover Introduction. . . . . . . . . . . . . . . . . . . . . . . . . . 13

Server Failover Overview . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 13

How Server Failover Works . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 15

Server Failover Requirements . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 16

Installing the Failover Hardware Components . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 17

SR2400 Slot Locations. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 17

SR2500 Slot Locations. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 18

Making Failover Cluster Connections in an Avid Unity ISIS Environment . . . . . 19

Failover Cluster Connections in an Avid Unity ISIS Environment (SR2400) . . . 20

Failover Cluster Connections in an Avid Unity ISIS Environment (SR2500) . . . 21

Making Failover Cluster Connections in an Avid Unity MediaNetwork Environment . . . . . . . . . 22

Failover Cluster Connections in an Avid Unity MediaNetwork Environment (SR2400) . . . . . . . . . 23

Failover Cluster Connections in an Avid Unity MediaNetwork Environment (SR2500) . . . . . . . . . 24

Clustering Terminology . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 26

Chapter 2 Automatic Server Failover Installation . . . . . . . . . . . . . . . . . . . . . . . . . . 27

Server Failover Installation Overview . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 28

Before You Begin the Server Failover Installation . . . . . . . . . . . . . . . . . . . . . . . . . . 28

List of IP Addresses and Network Names . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 30

Preparing the Server for the Cluster Service . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 31

Setting the QLogic HBA Link Speed . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 32

Renaming the Local Area Network Interface on Each Node. . . . . . . . . . . . . . . 33

Configuring the Private Network Adapter on Each Node . . . . . . . . . . . . . . . . . 35

Configuring the Binding Order Networks on Each Node . . . . . . . . . . . . . . . . . . 38

Configuring the Public Network Adapter on Each Node . . . . . . . . . . . . . . . . . . 40

Joining Both Servers to the Active Directory Domain . . . . . . . . . . . . . . . . . . . . 40

Configuring the Cluster Shared-Storage RAID Disks on Each Node . . . . . . . . 40

Configuring the Cluster Service . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 41

Configuring the Cluster Service on the First Node . . . . . . . . . . . . . . . . . . . . . . 42

Validating the Cluster Service on the First Node. . . . . . . . . . . . . . . . . . . . . . . . 47

Configuring the Cluster Service on the Second Node. . . . . . . . . . . . . . . . . . . . 47

Configuring Rules for the Cluster Networks . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 49

Prioritizing the Heartbeat Adapter . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 51

After Setting Up the Cluster . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 52

Verifying the Quorum Disk. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 53

Setting the Startup Times on Each Node . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 53

Testing the Cluster Installation . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 55

Installing the Distributed Transaction Coordinator . . . . . . . . . . . . . . . . . . . . . . . . . . 56

Creating a Resource Group for the Distributed Transaction Coordinator . . . . . 57

Assigning an IP Address to the MSDTC Group. . . . . . . . . . . . . . . . . . . . . . . . . 58

Assigning a Network Name to the MSDTC Group . . . . . . . . . . . . . . . . . . . . . . 59

Creating a Physical Resource for the MSDTC Group . . . . . . . . . . . . . . . . . . . . 60

Assigning Distributed Transaction Coordinator Resource to the MSDTC Group 60

Bringing the MSDTC Online . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 61

Installing the Interplay Engine . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 62

Disabling Any Web Servers . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 62

Installing the Interplay Engine on the First Node . . . . . . . . . . . . . . . . . . . . . . . . . . . 62

Preparation for Installing on the First Node. . . . . . . . . . . . . . . . . . . . . . . . . . . . 63

Starting the Installation and Accepting the License Agreement . . . . . . . . . . . . 63

Installing the Interplay Engine Using Custom Mode . . . . . . . . . . . . . . . . . . . . . 64

Specifying Cluster Mode During a Custom Installation . . . . . . . . . . . . . . . 65

Specifying the Interplay Engine Details . . . . . . . . . . . . . . . . . . . . . . . . . . . 66

Specifying the Interplay Engine Name . . . . . . . . . . . . . . . . . . . . . . . . . . . . 68

Specifying the Destination Location . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 69

Specifying the Default Database Folder . . . . . . . . . . . . . . . . . . . . . . . . . . . 69

Specifying the Share Name. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 70

Specifying the Configuration Server . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 71

Specifying the Server User . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 73

Specifying the Server Cache . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 74

Enabling Email Notifications . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 75

Installing the Interplay Engine for a Custom Installation on the First Node . 76

Bringing the Disk Resource Online . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 78

Installing the Interplay Engine on the Second Node . . . . . . . . . . . . . . . . . . . . . . . . . 81

Bringing the Interplay Engine Online. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 82

Testing the Complete Installation . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 82

Updating a Clustered Installation (Rolling Upgrade) . . . . . . . . . . . . . . . . . . . . . . . . . 84

Updating the Workgroup.xml File for a Split Database . . . . . . . . . . . . . . . . . . . 85

Uninstalling the Interplay Engine on a Clustered System . . . . . . . . . . . . . . . . . . . . . 86

Chapter 3 Automatic Server Failover Tips and Rules . . . . . . . . . . . . . . . . . . . . . . . 89

Index . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 93

Using This Guide

Congratulations on the purchase of your Avid® Interplay™, a powerful system for managing

media in a shared storage environment.

This guide is intended for all Avid Interplay administrators who are responsible for

installing, configuring, and maintaining an Avid Interplay Engine with the Automatic Server

Failover module integrated.

n

The documentation describes the features and hardware of all models. Therefore, your system might not contain certain features and hardware that are covered in the documentation.

Symbols and Conventions

Avid documentation uses the following symbols and conventions:

Symbol or Convention    Meaning or Action

n    A note provides important related information, reminders, recommendations, and strong suggestions.

c    A caution means that a specific action you take could cause harm to your computer or cause you to lose data.

w    A warning describes an action that could cause you physical harm. Follow the guidelines in this document or on the unit itself when handling electrical equipment.

>    This symbol indicates menu commands (and subcommands) in the order you select them. For example, File > Import means to open the File menu and then select the Import command.

(arrow symbol)    This symbol indicates a single-step procedure. Multiple arrows in a list indicate that you perform one of the actions listed.

(Windows), (Windows only), (Macintosh), or (Macintosh only)    This text indicates that the information applies only to the specified operating system, either Windows or Macintosh OS X.

Bold font    Bold font is primarily used in task instructions to identify user interface items and keyboard sequences.

Italic font    Italic font is used to emphasize certain words and to indicate variables.

Courier Bold font    Courier Bold font identifies text that you type.

Ctrl+key or mouse action    Press and hold the first key while you press the last key or perform the mouse action. For example, Command+Option+C or Ctrl+drag.

If You Need Help

If you are having trouble using your Avid product:

1. Retry the action, carefully following the instructions given for that task in this guide. It is especially important to check each step of your workflow.

2. Check the latest information that might have become available after the documentation was published:

- If the latest information for your Avid product is provided as printed release notes, they ship with your application and are also available online.

- If the latest information for your Avid product is provided as a ReadMe file, it is supplied on your Avid installation CD or DVD as a PDF document (README_product.pdf) and is also available online.

You should always check online for the most up-to-date release notes or ReadMe because the online version is updated whenever new information becomes available. To view these online versions, select ReadMe from the Help menu, or visit the Knowledge Base at www.avid.com/readme.

3. Check the documentation that came with your Avid application or your hardware for

maintenance or hardware-related issues.

4. Visit the online Knowledge Base at www.avid.com/onlinesupport. Online services are

available 24 hours per day, 7 days per week. Search this online Knowledge Base to find

answers, to view error messages, to access troubleshooting tips, to download updates,

and to read or join online message-board discussions.

Viewing User Documentation on the Interplay Portal

You can quickly access the Interplay user documentation from any system in the Interplay environment. Type the following line in your Web browser:

http://Interplay_Engine_name

where Interplay_Engine_name is the name of the computer running the Interplay Engine software. For example, the following line opens the portal Web page on a system named DocWG:

http://DocWG

• Click the “Avid Interplay User Documentation” link to access the User Information Center page. On this page, select the Avid Interplay Framework User’s Guide from the list of user’s guides.

Accessing the Online Library

The Avid Interplay Online Library DVD contains all the Avid Interplay product documentation in PDF format. The Online Library includes a Master Glossary of all specialized terminology used in the documentation for Avid products.

Most Avid online libraries also include multimedia content such as feature presentations.

This multimedia content is an excellent first resource for learning how to use your

application or for helping you understand a particular feature or workflow.

n

You need Adobe® Reader® to view the documentation online. You can download the latest version from the Adobe web site.

To access the online library from the Online Library DVD:

1. Insert the Online Library DVD into the drive.

2. Double-click the Mainmenu file.

How to Order Documentation

To order additional copies of this documentation from within the United States, call Avid

Sales at 800-949-AVID (800-949-2843). If you are placing an order from outside the United

States, contact your local Avid representative.

Avid Training Services

Avid makes lifelong learning, career advancement, and personal development easy and

convenient. Avid understands that the knowledge you need to differentiate yourself is always

changing, and Avid continually updates course content and offers new training delivery

methods that accommodate your pressured and competitive work environment.

To learn about Avid's new online learning environment, Avid Learning Excellerator™ (ALEX), visit http://learn.avid.com.

For information on courses/schedules, training centers, certifications, courseware, and books, please visit www.avid.com/training or call Avid Sales at 800-949-AVID (800-949-2843).

1 Automatic Server Failover Introduction

This chapter covers the following topics:

• Server Failover Overview

• How Server Failover Works

• Installing the Failover Hardware Components

• Clustering Terminology

Server Failover Overview

The automatic server failover mechanism in Avid Interplay keeps the Interplay Engine accessible to clients in the event of failures or during maintenance, with minimal impact on availability. A failover server is activated in the event of application, operating system, or hardware failures. The server can be configured to notify the administrator about such failures by email.

c

Additional monitoring of the hardware and software components of a high-availability

solution is always required. Avid delivers Interplay preconfigured, but additional

attention on the customer side is required to prevent outage (for example, when a

private network fails, RAID disk fails, or a power supply loses power). In a mission

critical environment, monitoring tools and tasks are needed to be sure there are no

silent outages. If another (unmonitored) component fails, only an event is generated,

and while this does not interrupt availability, it might go unnoticed and lead to

problems. Additional software reporting such issues to the IT administration lowers

downtime risk.

The failover cluster is a system made up of two server nodes and a shared-storage device connected over Fibre Channel. Because both nodes share access to the storage device, they must be deployed in the same location. The cluster uses the concept of virtual servers to specify groups of resources that fail over together.

The following diagram illustrates the failover cluster architecture for an Avid Unity

MediaNetwork environment. (This diagram and the next diagram do not show the complete

Interplay environment. For more detailed illustrations, see Avid Interplay Best Practices.)

[Figure: Two-node cluster in an Avid Unity MediaNetwork environment. Labeled components: two Interplay Engine cluster nodes, Infortrend cluster shared-storage RAID array, Fibre switch, network switch, private network for heartbeat, intranet, Interplay clients. Legend: Fibre connection; 1 GB Ethernet connection.]

The following diagram illustrates the failover cluster architecture for an Avid Unity ISIS environment. In this environment, each cluster node is “dual-connected” to the network switch: one network interface is connected to the VLAN 10 subnet and the other is connected to the VLAN 20 subnet.

[Figure: Two-node cluster in an Avid Unity ISIS environment. Labeled components: two Interplay Engine cluster nodes, Infortrend cluster shared-storage RAID array, Avid network switch (VLAN 10 and VLAN 20), private network for heartbeat, intranet, Interplay clients. Legend: Fibre connection; 1 GB Ethernet connection.]

n

If you are already using clusters, the Avid Interplay Engine will not interfere with your current setup.

Page 15

When the Microsoft® Windows® cluster service is running on the machines and the server is deployed in cluster mode, the Interplay Engine and its accompanying services are exposed to users as a virtual server. To clients, however, connecting to the clustered virtual Interplay Engine appears to be the same process as connecting to a single, physical machine. The user or client application does not know which node is actually hosting the virtual server.

When the server is online, the resource monitor regularly checks its availability and automatically restarts the server or initiates a failover to the other node if a failure is detected. The exact behavior can be configured using the Windows Cluster Administrator console. Given that clients connect to the virtual network name and IP address, which are also taken over by the failover node, this minimizes the impact on the availability of the server.

The following diagram illustrates the components of a cluster group, including sample IP addresses. For a list of required IP addresses and node names, see “List of IP Addresses and Network Names” on page 30.

[Figure: Cluster Group. Failover Cluster: 11.22.33.200. Interplay Server (virtual): 11.22.33.201. MSDTC: 11.22.33.202. Node #1: Intranet 11.22.33.44, Private 10.10.10.10. Node #2: Intranet 11.22.33.45, Private 10.10.10.11. Disk #1: Quorum, 4GB. Disk #2: MSDTC, 5GB. Disk #3: Database, 925GB +. Connections: FibreChannel; Intranet; Private Network.]
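The restart-or-failover behavior described above can be pictured with a small simulation. This is only a sketch: the `VirtualServer` class, the `handle_failed_check` function, and the `restart_threshold` parameter are hypothetical names chosen for illustration and are not part of the Avid or Microsoft software. The node names and the virtual IP address are the sample values used elsewhere in this guide.

```python
from dataclasses import dataclass


@dataclass
class VirtualServer:
    """Hypothetical model of a clustered virtual server (illustration only)."""
    network_name: str
    ip_address: str
    owner_node: str
    restarts_on_owner: int = 0


def handle_failed_check(server, nodes, restart_threshold=3):
    """React to one failed availability check: restart locally or fail over."""
    if server.restarts_on_owner < restart_threshold:
        # Assumed policy: try restarting on the current node first.
        server.restarts_on_owner += 1
        return "restart"
    # Too many restarts on this node: the other node takes over the server.
    other = [node for node in nodes if node != server.owner_node][0]
    server.owner_node = other
    server.restarts_on_owner = 0
    return "failover"


nodes = ["SECLUSTER1", "SECLUSTER2"]
engine = VirtualServer("SEENGINE", "11.22.33.201", owner_node="SECLUSTER1")
actions = [handle_failed_check(engine, nodes, restart_threshold=2) for _ in range(3)]
print(actions, engine.owner_node, engine.ip_address)
```

In this sketch the network name and IP address stay the same after the failover; only the owning node changes, which is why clients do not need to know which node hosts the virtual server.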

Page 16

1 Automatic Server Failover Introduction

Server Failover Requirements

You should make sure the server failover system meets the following requirements.

Hardware

A dual-server failover cluster-capable system with an Infortrend® cluster shared-storage

RAID disk set is needed. The automatic server failover system was developed on and tested

with the following:

• Intel Server Chassis SR2500 Packaged Cluster, which is the recommended hardware:

http://www.intel.com/design/servers/chassis/sr2500/

• Intel Server Chassis SR2400 Packaged Cluster:

http://www.intel.com/design/servers/chassis/sr2400/

The servers in a cluster are connected using one or more cluster shared-storage buses and

one or more physically independent networks acting as a heartbeat.

Server Software

Two licenses of Windows Server 2003 Enterprise Edition or Windows Server 2003

Datacenter Edition are needed.


Space Requirements

The default disk configuration for the cluster shared RAID array is as follows:

• Quorum disk - 4GB

• MSDTC disk - 5GB

• Database disk - 925GB or larger

Antivirus Software

You can run antivirus software on a cluster, if the antivirus software is cluster-aware. For

information about cluster-aware versions of your antivirus software, contact the antivirus

vendor. If you are running antivirus software on a cluster, make sure you exclude these

locations from the virus scanning: Q:\ (Quorum disk), C:\Windows\Cluster, and

S:\Workgroup_Databases (database).
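As an illustration of the exclusion list above, the following sketch checks whether a given path falls under one of the three excluded locations. It is not a feature of any antivirus product; the function name `is_excluded` and the sample file paths are hypothetical.

```python
from pathlib import PureWindowsPath

# Locations that the guide says to exclude from virus scanning.
EXCLUDED_LOCATIONS = [
    PureWindowsPath("Q:/"),                      # Quorum disk
    PureWindowsPath("C:/Windows/Cluster"),       # cluster directory
    PureWindowsPath("S:/Workgroup_Databases"),   # database
]


def is_excluded(path):
    """Return True if the path is an excluded location or lies inside one."""
    candidate = PureWindowsPath(path)
    for location in EXCLUDED_LOCATIONS:
        if candidate == location or location in candidate.parents:
            return True
    return False


# Hypothetical sample paths, used only to exercise the check.
print(is_excluded(r"S:\Workgroup_Databases\example\file.dat"))
print(is_excluded(r"C:\Windows\System32\drivers"))
```

`PureWindowsPath` compares paths case-insensitively, which matches how Windows treats drive letters and folder names.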

Page 17


Functions You Need To Know

Before you set up a cluster in an Avid Interplay environment, you should be familiar with the

following functions:

• Microsoft Windows Active Directory domains and domain users

• Microsoft Windows clustering (the current version, because it differs from prior versions)

• Disk configuration (format, partition, naming)

• Network configuration

Installing the Failover Hardware Components

A failover cluster system includes the following components:

• Two Interplay Engine nodes or two Interplay Archive nodes (two SR2400 or two SR2500 servers)

• One Infortrend cluster shared-storage RAID array

The following topics provide information about installing the failover hardware

components:

• “SR2400 Slot Locations” on page 17

• “SR2500 Slot Locations” on page 18

• “Making Failover Cluster Connections in an Avid Unity ISIS Environment” on page 19

• “Failover Cluster Connections in an Avid Unity ISIS Environment (SR2400)” on page 20

• “Failover Cluster Connections in an Avid Unity ISIS Environment (SR2500)” on page 21

• “Making Failover Cluster Connections in an Avid Unity MediaNetwork Environment” on page 22

• “Failover Cluster Connections in an Avid Unity MediaNetwork Environment (SR2400)” on page 23

• “Failover Cluster Connections in an Avid Unity MediaNetwork Environment (SR2500)” on page 24

Page 18

1 Automatic Server Failover Introduction

PCI slots

Serial A to F/C switch if needed

SCSI B

Video

USB

1

2

SR2400 Back Panel

Mouse

Keyboard

RJ 45 to

serial B

Power supply

Slot 2

Slot 1

Slot 3

Small form factor slots not used

1 GB

Ethernet

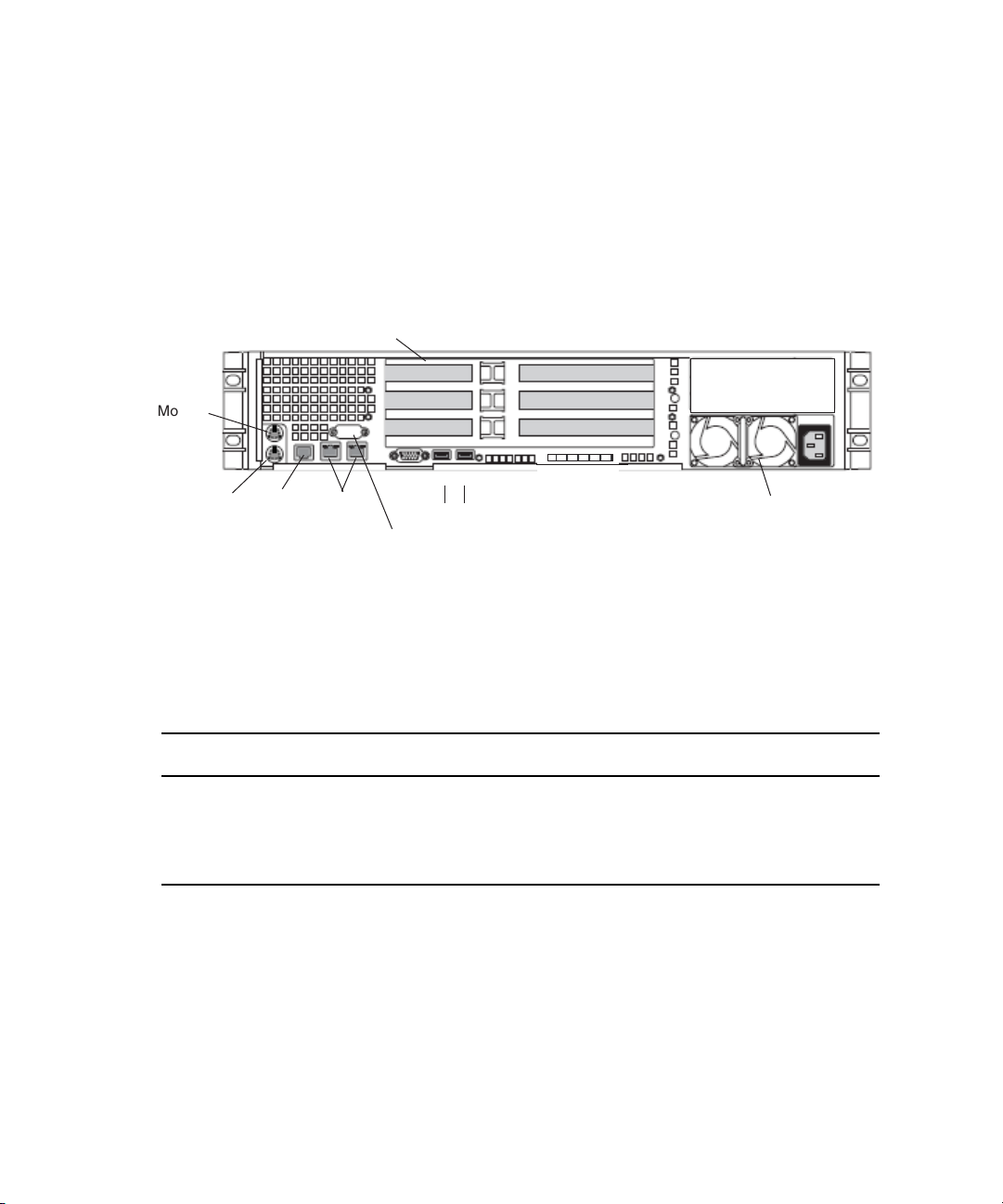

SR2400 Slot Locations

The SR2400 is supported as a server for the Interplay applications. This section describes

the slot locations that are specific to the Interplay components in a cluster configuration.

Use the following figure and table as guides to configuring your SR2400 systems in an

Interplay cluster environment.

On the SR2400, all boards must be installed starting in the top slot, and the second board

n

must be in the middle slot. The second board cannot be in the bottom slot with the middle

slot left open.

SR2400 Back Panel Configuration for Avid Unity Environment

Slot Avid Unity ISIS Avid Unity MediaNetwork

3 Intel Pro 1000MT ATTO

2 QLogic

1 Empty Intel Pro 1000MT

18

®

Card QLogic Card

a

a. Unity MediaNetwork environment: the Pro 1000MT card is shipped in slot 3 (top). You must move the

card to slot 1 (bottom) and install the ATTO card in slot 3 (top). The Pro 1000MT is not used in an Unity

MediaNetwork environment.

Page 19

SR2500 Slot Locations

The SR2500 is supported as a server for the Interplay applications. This section describes the slot locations that are specific to the Interplay components in a cluster configuration.

Use the following figure and table as guides to configuring your SR2500 systems in an Interplay cluster environment.

[Figure: SR2500 Back Panel. Labels: PCI-X slots (Slot 3, Slot 2, Slot 1); PCIe slots, small form factor (Slot 2, Slot 1); 1 GB Ethernet; Serial A to F/C switch if needed; RJ 45 to serial B; Video; USB; Mouse; Keyboard; Power supplies (primary power supply on bottom).]

Note: It's important to match the slot locations in the following table because they match the order that the drivers are loaded on the SR2500 Recovery DVDs.

SR2500 Back Panel Configuration for Avid Unity Environment

Slot Type   Slot   Avid Unity ISIS      Avid Unity MediaNetwork

PCI-X       3      Empty                ATTO

            2      Empty                Empty

            1      QLogic Card (a)      QLogic Card (a)

PCIe        NA     NA                   NA

            2      Intel Pro 1000PT     Intel Pro 1000PT

            1      Empty                Empty

a. The SR2500 server might ship with the QLogic card in PCI-X slot 2 (middle). You must move the QLogic card to PCI-X slot 1 (bottom), because this configuration matches the order that the drivers are loaded on the SR2500 Recovery DVDs.

Page 20


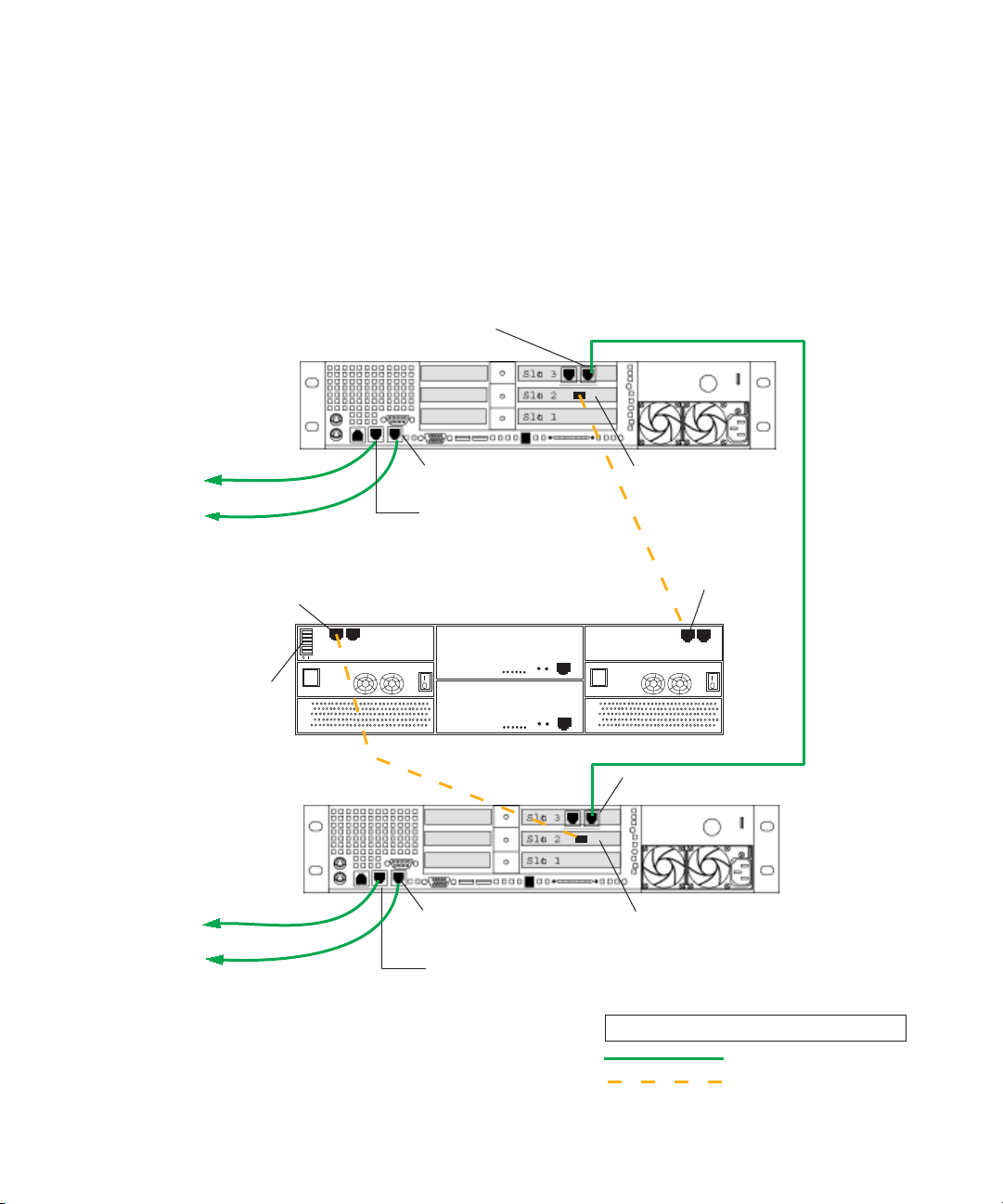

Making Failover Cluster Connections in an Avid Unity ISIS Environment

Make the following cable connections to add a failover cluster to an Avid Unity ISIS

environment:

• First cluster node:

- Left on-board network interface connector to ISIS left subnet (VLAN 10)

- Right on-board network interface connector to ISIS right subnet (VLAN 20)

- QLogic card connector to RAID array, Fibre Channel 1 left connector

• Second cluster node:

- Left on-board network interface connector to ISIS left subnet (VLAN 10)

- Right on-board network interface connector to ISIS right subnet (VLAN 20)

- QLogic card connector to RAID array, Fibre Channel 0 left connector

• Right connector on PCI adapter network interface in the first cluster node to right

connector on PCI adapter network interface in second cluster node (private network for

heartbeat)

• All switches on the cluster shared-storage RAID array are in the default “enable”

position (left)

For more details, see the illustrations in:

• “Failover Cluster Connections in an Avid Unity ISIS Environment (SR2400)” on page 20.

• “Failover Cluster Connections in an Avid Unity ISIS Environment (SR2500)” on page 21.
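The ISIS cabling list above can also be written down as data and checked before you start. The following is a minimal sketch, assuming hypothetical port labels and a hypothetical `check_cabling` function; it only encodes the connections listed in this section and does not query any hardware.

```python
# Cable plan for an Avid Unity ISIS environment, transcribed from the list above.
# The dictionary keys are illustrative labels, not names used by any tool.
ISIS_CABLING = {
    "node1": {
        "left on-board NIC": "ISIS left subnet (VLAN 10)",
        "right on-board NIC": "ISIS right subnet (VLAN 20)",
        "QLogic card": "RAID array, Fibre Channel 1 left connector",
        "PCI adapter NIC right connector": "private network (heartbeat)",
    },
    "node2": {
        "left on-board NIC": "ISIS left subnet (VLAN 10)",
        "right on-board NIC": "ISIS right subnet (VLAN 20)",
        "QLogic card": "RAID array, Fibre Channel 0 left connector",
        "PCI adapter NIC right connector": "private network (heartbeat)",
    },
}


def check_cabling(cabling):
    """Return a list of problems found in a two-node cable plan."""
    problems = []
    first, second = cabling["node1"], cabling["node2"]
    # Each node connects to its own Fibre Channel connector on the RAID array.
    if first["QLogic card"] == second["QLogic card"]:
        problems.append("both nodes use the same RAID Fibre Channel connector")
    # The network connections are expected to be identical on both nodes.
    for port in ("left on-board NIC", "right on-board NIC",
                 "PCI adapter NIC right connector"):
        if first[port] != second[port]:
            problems.append(f"{port} is cabled differently on the two nodes")
    return problems


print(check_cabling(ISIS_CABLING))
```

An empty list means the plan matches the connections described in this section.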

Page 21

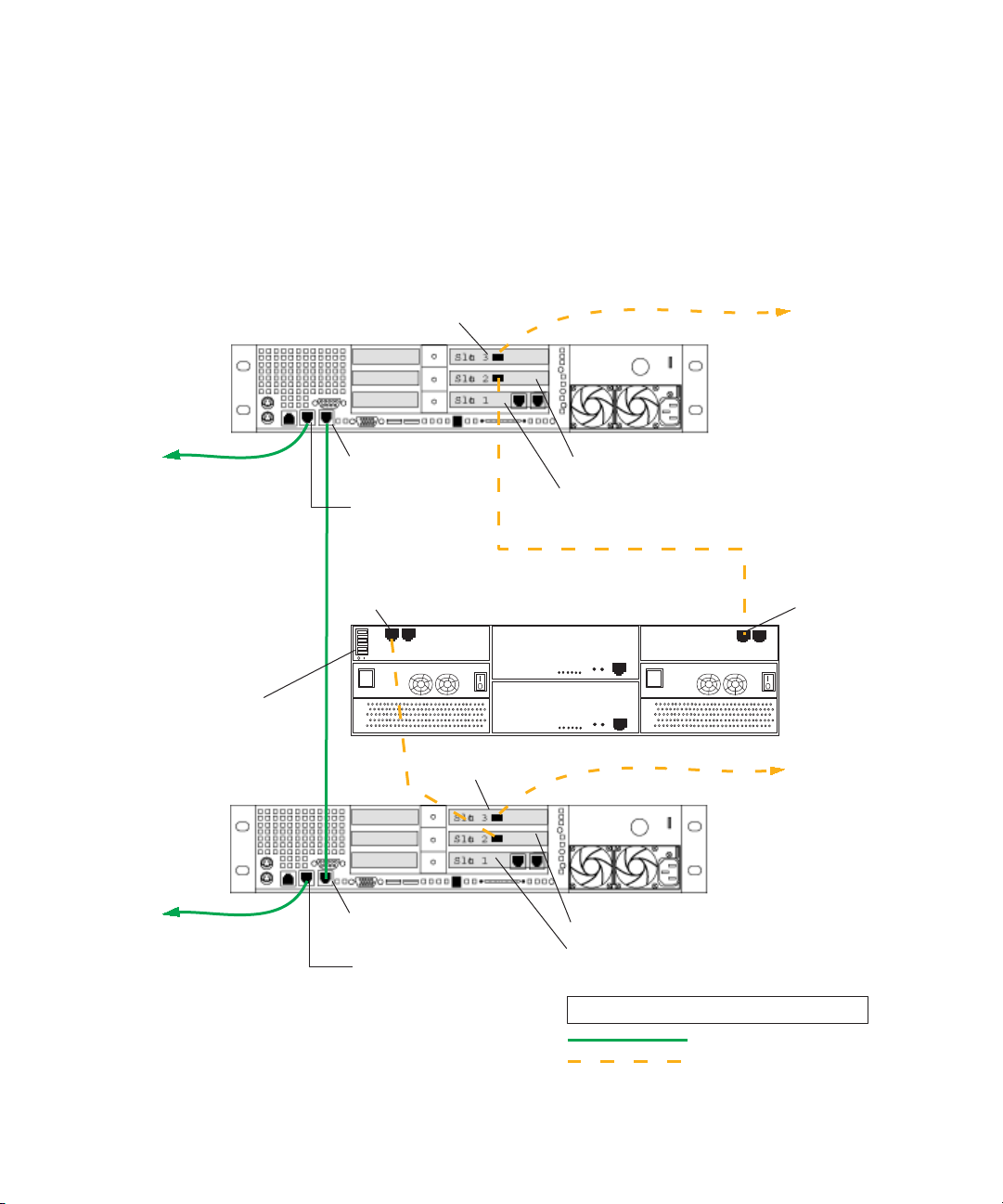

Failover Cluster Connections in an Avid Unity ISIS Environment (SR2400)

The following illustration shows the required cable connections when adding a failover cluster in an Avid Unity ISIS environment (SR2400 servers). For a description of the connections, see “Making Failover Cluster Connections in an Avid Unity ISIS Environment” on page 19.

[Figure: Two SR2400 back panels (Interplay Engine cluster nodes) and the cluster shared-storage RAID array. Labels: private network for heartbeat between the PCI adapter network interface right connectors; left on-board network interface to ISIS left subnet; right on-board network interface to ISIS right subnet; QLogic cards to the RAID array Fibre Channel 1 left connector and Fibre Channel 0 left connector (FC CH1, FC CH0); all switches set to default “enabled” (left). Legend: Fibre connection; 1GB Ethernet connection.]

Page 22

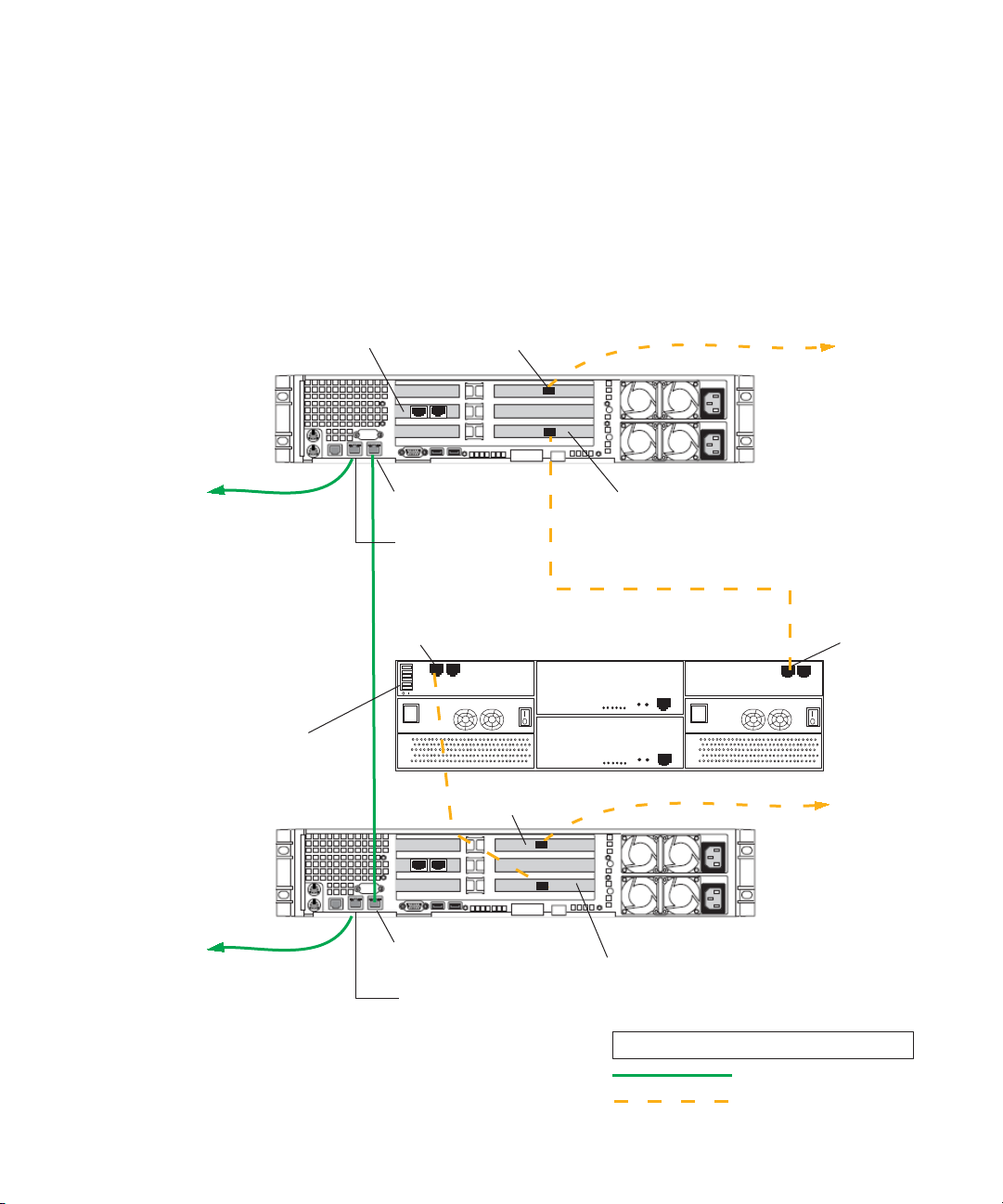

Failover Cluster Connections in an Avid Unity ISIS Environment (SR2500)

The following illustration shows the required cable connections when adding a failover cluster in an Avid Unity ISIS environment (SR2500 servers). For a description of the connections, see “Making Failover Cluster Connections in an Avid Unity ISIS Environment” on page 19.

[Figure: Two SR2500 back panels (Interplay Engine cluster nodes; Slot 3, Slot 2, Slot 1) and the cluster shared-storage RAID array. Labels: private network for heartbeat between the PCI adapter network interface right connectors; left on-board network interface to ISIS left subnet; right on-board network interface to ISIS right subnet; QLogic cards to the RAID array Fibre Channel 1 left connector and Fibre Channel 0 left connector (FC CH1, FC CH0); all switches set to default “enabled” (left). Legend: Fibre connection; 1GB Ethernet connection.]

Page 23


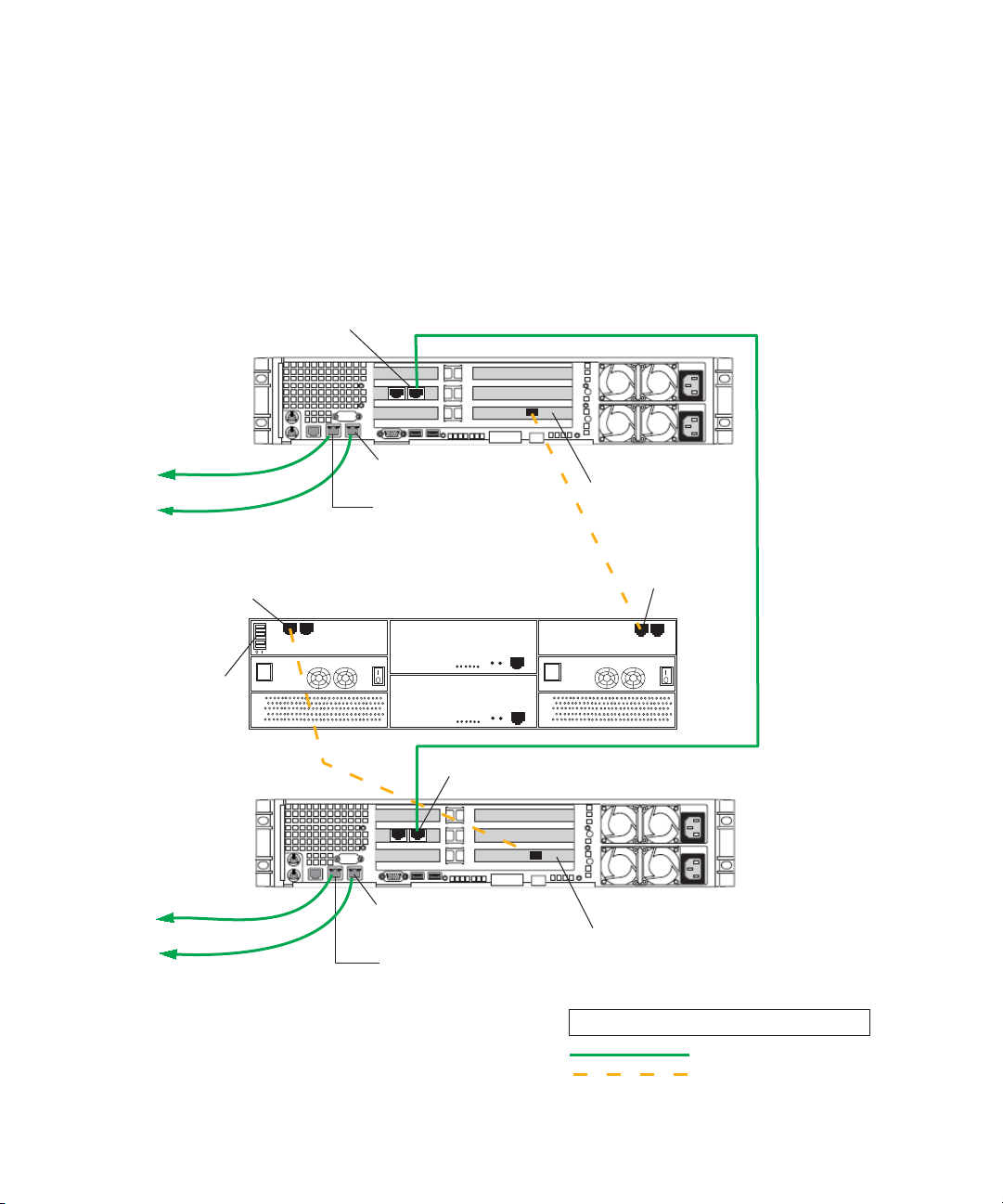

Making Failover Cluster Connections in an Avid Unity MediaNetwork Environment

The Interplay Engine Cluster nodes (SR2400 servers) ship with an Intel Pro 1000 MT card

in slot 3 (top). You need to move this card to slot 1 (bottom). Then add an ATTO host bus

adapter in slot 3 (top).

Make the following cable connections to add a failover cluster to an Avid Unity MediaNetwork environment:

• First cluster node:

- Left on-board network interface connector to Ethernet public network

- QLogic card connector to RAID array, Fibre Channel 1 left connector

- ATTO card connector to Unity MediaNetwork FC switch

• Second cluster node:

- Left on-board network interface connector to Ethernet public network

- QLogic card connector to RAID array, Fibre Channel 0 left connector

- ATTO card connector to Unity MediaNetwork FC switch

• Right on-board network interface connector on the first cluster node to right on-board network interface connector on the second cluster node (private network for heartbeat)

• All switches on the cluster shared-storage RAID array are in the default “enable” position (left)

For more details, see the illustrations in:

• “Failover Cluster Connections in an Avid Unity MediaNetwork Environment (SR2400)” on page 23.

• “Failover Cluster Connections in an Avid Unity MediaNetwork Environment (SR2500)” on page 24.

Page 24


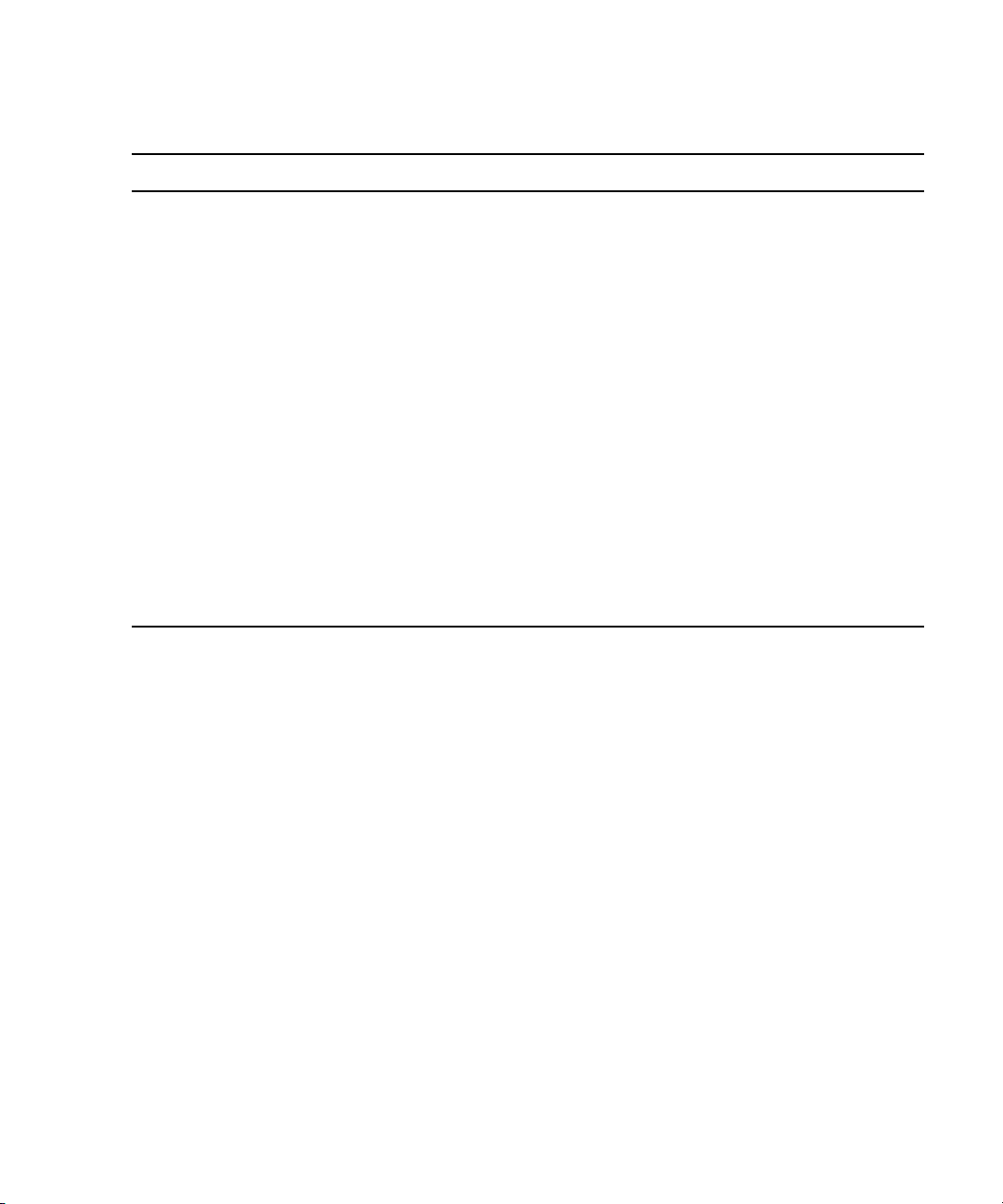

Failover Cluster Connections in an Avid Unity MediaNetwork Environment (SR2400)

The following illustration shows the required cable connections when adding a failover cluster in an Avid Unity MediaNetwork environment (SR2400 servers). For a description of the connections, see “Making Failover Cluster Connections in an Avid Unity MediaNetwork Environment” on page 22.

[Figure: Two SR2400 back panels (Interplay Engine cluster nodes) and the cluster shared-storage RAID array. Labels: private network for heartbeat between the right on-board network interfaces; left on-board network interface to Ethernet public network; ATTO cards to MediaNetwork FC switch; QLogic cards to the RAID array Fibre Channel 1 left connector and Fibre Channel 0 left connector (FC CH1, FC CH0); PCI adapter network interface, not used; all switches set to default “enabled” (left). Legend: Fibre connection; 1GB Ethernet connection.]

Page 25

Failover Cluster Connections in an Avid Unity MediaNetwork Environment (SR2500)

The following illustration shows the required cable connections when adding a failover cluster in an Avid Unity MediaNetwork environment (SR2500 servers). For a description of the connections, see “Making Failover Cluster Connections in an Avid Unity MediaNetwork Environment” on page 22.

[Figure: Two SR2500 back panels (Interplay Engine cluster nodes; Slot 3, Slot 2, Slot 1) and the cluster shared-storage RAID array. Labels: private network for heartbeat between the right on-board network interfaces; left on-board network interface to Ethernet public network; ATTO cards to MediaNetwork FC switch; QLogic cards to the RAID array Fibre Channel 1 left connector and Fibre Channel 0 left connector (FC CH1, FC CH0); PCI adapter network interface, not used; all switches set to default “enabled” (left). Legend: Fibre connection; 1GB Ethernet connection.]

Page 26


Clustering Terminology

Clustering is not always straightforward, so it is important that you become familiar with the terminology of server clusters before you start. A good source of information is the Microsoft Technology Center for Clustering Services at:

http://www.microsoft.com/windowsserver2003/technologies/clustering/default.mspx

Detailed architecture documentation can be found here:

http://www.microsoft.com/windowsserver2003/techinfo/overview/servercluster.mspx

Here is a brief summary of the major concepts and terms:

• Nodes: Individual computers in a cluster configuration.

• Cluster service: The group of components on each node that perform a cluster-specific

activity.

• Resource: Cluster components (hardware and software) that are managed by the cluster

service. Resources are physical hardware devices such as disk drives, and logical items

such as IP addresses and applications.

• Online resource: A resource that is available and is providing its service.

• Quorum resource: A special common cluster resource. This resource plays a critical role

in cluster operations.


• Resource group: A collection of resources that are managed by the cluster service as a

single, logical unit.

Page 27

2 Automatic Server Failover Installation

This chapter describes the processes for configuring the automatic server failover. It is crucial that you follow the instructions given in this chapter completely; otherwise, the automatic server failover will not work.

This chapter covers the following topics:

• Server Failover Installation Overview

• Before You Begin the Server Failover Installation

• Preparing the Server for the Cluster Service

• Configuring the Cluster Service

• Configuring Rules for the Cluster Networks

• After Setting Up the Cluster

• Installing the Distributed Transaction Coordinator

• Installing the Interplay Engine

• Disabling Any Web Servers

• Installing the Interplay Engine on the First Node

• Installing the Interplay Engine on the Second Node

• Bringing the Interplay Engine Online

• Testing the Complete Installation

• Updating a Clustered Installation (Rolling Upgrade)

• Uninstalling the Interplay Engine on a Clustered System

Page 28


Server Failover Installation Overview

Installation and configuration of the automatic server failover consists of the following

major tasks:

• Make sure that the network is correctly set up and that you have reserved IP host names and static IP addresses (see “Before You Begin the Server Failover Installation” on page 28).

• Prepare the servers for the cluster service (see “Preparing the Server for the Cluster Service” on page 31). This includes configuring the nodes for the network and formatting the drives.

• Configure the cluster service (see “Configuring the Cluster Service” on page 41, “Configuring Rules for the Cluster Networks” on page 49, and “After Setting Up the Cluster” on page 52).

• Install the Distributed Transaction Coordinator (MSDTC group) (see “Installing the Distributed Transaction Coordinator” on page 56).

• Install the Interplay Engine on both nodes (see “Installing the Interplay Engine” on page 62).

• Test the complete installation (see “Testing the Complete Installation” on page 82).

Note: Do not install any other software on the cluster machines except the Interplay Engine. For example, Media Indexer software needs to be installed on a different server. For complete installation instructions, see the Avid Interplay Software Installation and Configuration Guide.

For more details about server clusters, see the Microsoft document “Guide to Creating and Configuring a Server Cluster under Windows Server 2003,” available at:

http://www.microsoft.com/technet/prodtechnol/windowsserver2003/technologies/clustering/confclus.mspx

Before You Begin the Server Failover Installation

Before you begin the installation process, you need to do the following:

• Make sure that the facility has a network that is qualified to run Active Directory and

DNS services.

• Determine the subnet mask, the gateway, DNS, and WINS server addresses on the

network.

• Install and set up an Avid Unity client on both servers. See the Avid Unity

MediaNetwork File Manager Setup Guide or the Avid Unity ISIS System Setup Guide.

Page 29

• Create or select two domain user accounts:

- Cluster Service Account (Server Execution User): Create or select an account

(sometimes called the cluster user account) that is used to start the cluster service

and is also used by the Interplay Engine service. This account must be a domain

user and it must be a unique name that will not be used for any other purpose. The

procedures in this document use sqauser as an example of a Cluster Service

Account. This account is automatically added to the Local Administrators group on

each node by the Interplay Engine software during the installation process.

The Server Execution User is critical to the operation of the Interplay Engine. If

necessary, you can change the name of the Server Execution User after the

installation. For more information, see “Troubleshooting the Server Execution User

Account” and “Re-creating the Server Execution User” in the Avid Interplay Engine

and Avid Interplay Archive Engine Administration Guide and the Interplay ReadMe.

For information on creating a cluster user account, see the Microsoft document “Guide to Creating and Configuring a Server Cluster under Windows Server 2003”:

http://www.microsoft.com/technet/prodtechnol/windowsserver2003/technologies/clustering/confclus.mspx

- Cluster Installation Account: Create or select a user account to use during the

installation process. This user account must be a domain user account with

privileges to add servers to the domain. This user account is required only during

the installation of the cluster.

Note: Do not use the same username and password for the Cluster Service Account and the Cluster Installation Account. These accounts have different functions and require different privileges.

• Create an Avid Unity user account with read and write privileges. This account is not needed for the installation of Interplay Engine, but is required for the operation of Interplay Engine. The user name and password must match the user name and password of the Cluster Service Account.

• Make sure the network includes an Active Directory domain before you install or configure the cluster.

• Reserve static IP addresses for all network interfaces and host names. See “List of IP Addresses and Network Names” on page 30.

Page 30


List of IP Addresses and Network Names

You need to reserve IP host names and static IP addresses on the in-network DNS server before you begin the installation process. An Avid Unity ISIS environment needs 8 IP addresses and an Avid Unity MediaNetwork needs 5 IP addresses. The following table provides a list of example names that you can use when configuring the cluster. The procedures in this chapter use these example names.

Note: Make sure that these IP addresses are outside of the range that is available to DHCP so they cannot automatically be assigned to other machines.

Note: If your Active Directory domain or DNS includes more than one cluster, to avoid conflicts, you need to make sure the cluster names, MSDTC names, and IP addresses are different for each cluster.

Note: All names must be valid and unique network host names.

Required IP Addresses and Node Names

Node or Service: First Cluster Node
Item Required:
• 1 Host Name
• 2 ISIS IP addresses - public (one for left and one for right), or 1 MediaNetwork IP address - public
• 1 IP address - private (Heartbeat)
Example Name: SECLUSTER1
Where Used: See “Configuring the Cluster Service on the First Node” on page 42 and “Creating a Resource Group for the Distributed Transaction Coordinator” on page 57.

Node or Service: Second Cluster Node
Item Required:
• 1 Host Name
• 2 ISIS IP addresses - public (one for left and one for right), or 1 MediaNetwork IP address - public
• 1 IP address - private (Heartbeat)
Example Name: SECLUSTER2
Where Used: See “Configuring the Cluster Service on the Second Node” on page 47 and “Creating a Resource Group for the Distributed Transaction Coordinator” on page 57.

Page 31

Required IP Addresses and Node Names (Continued)

Node or Service: Cluster service
Item Required:
• 1 Network Name (virtual host name)
• 1 ISIS IP address, or 1 MediaNetwork IP address
Example Name: SECLUSTER
Where Used: See “Configuring the Cluster Service on the First Node” on page 42.

Node or Service: MSDTC service (Distributed Transaction Coordinator)
Item Required:
• 1 Network Name (virtual host name)
• 1 ISIS IP address, or 1 MediaNetwork IP address
Example Name: CLUSTERMSDTC
Where Used: See “Assigning a Network Name to the MSDTC Group” on page 59.

Node or Service: Interplay Engine service
Item Required:
• 1 Network Name (virtual host name)
• 2 ISIS IP addresses - public (one for left and one for right), or 1 MediaNetwork IP address - public
Example Name: SEENGINE
Where Used: See “Specifying the Interplay Engine Details” on page 66 and “Specifying the Interplay Engine Name” on page 68.
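The address requirements in the table can be tallied with a short script. The sketch below rests on two assumptions that are not stated in this guide: that the totals of 8 (ISIS) and 5 (MediaNetwork) count only the public addresses and leave out the two private heartbeat addresses, and that the DHCP range shown is a made-up example. The function names are hypothetical; the sample addresses are the ones used in the cluster group diagram.

```python
import ipaddress

# Public IP addresses required per node or service, taken from the table.
PUBLIC_IPS_REQUIRED = {
    "ISIS": {
        "first cluster node": 2,
        "second cluster node": 2,
        "cluster service": 1,
        "MSDTC service": 1,
        "Interplay Engine service": 2,
    },
    "MediaNetwork": {
        "first cluster node": 1,
        "second cluster node": 1,
        "cluster service": 1,
        "MSDTC service": 1,
        "Interplay Engine service": 1,
    },
}


def public_ip_count(environment):
    """Total number of public IP addresses to reserve for an environment."""
    return sum(PUBLIC_IPS_REQUIRED[environment].values())


def addresses_inside_dhcp_range(addresses, dhcp_first, dhcp_last):
    """Return the reserved addresses that fall inside the DHCP range."""
    first = ipaddress.ip_address(dhcp_first)
    last = ipaddress.ip_address(dhcp_last)
    return [a for a in addresses if first <= ipaddress.ip_address(a) <= last]


print(public_ip_count("ISIS"), public_ip_count("MediaNetwork"))

# Sample addresses from the cluster group diagram; the DHCP range is hypothetical.
reserved = ["11.22.33.44", "11.22.33.45", "11.22.33.200",
            "11.22.33.201", "11.22.33.202"]
print(addresses_inside_dhcp_range(reserved, "11.22.33.100", "11.22.33.150"))
```

An empty list from the second check means none of the reserved addresses can be handed out by DHCP under the assumed range.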

Preparing the Server for the Cluster Service

Before you configure the cluster service, you need to complete the tasks in the following

procedures:

• “Setting the QLogic HBA Link Speed” on page 32

• “Renaming the Local Area Network Interface on Each Node” on page 33

• “Configuring the Binding Order Networks on Each Node” on page 38

• “Configuring the Private Network Adapter on Each Node” on page 35

• “Configuring the Public Network Adapter on Each Node” on page 40

• “Joining Both Servers to the Active Directory Domain” on page 40

• “Configuring the Cluster Shared-Storage RAID Disks on Each Node” on page 40

Page 32

Setting the QLogic HBA Link Speed

To avoid possible problems with the Infortrend RAID array, Avid recommends that you

change the QLogic HBA link speed (data rate) from the default setting to 2 Gbps. You need

to specify this setting on both the SR2400 server and the SR2500 server. Change the setting

by using the SAN Surfer utility on both nodes.

To set the QLogic HBA link speed:

1. On the first node, click Start, and select Programs > QLogic Management Suite > San Surfer.

The San Surfer FC HBA Manager dialog box opens.

2. In the left pane, select Port 1.

3. Click the Settings tab.

4. In the HBA Port Settings section, click the arrow pointer for the Data Rate list and change the default setting from Auto to 2 Gbps.

Page 33

5. Click Save.

6. When prompted for a password, enter config and click OK.

7. On the other node, repeat steps 1 through 6.

8. Verify that the SAN Surfer data rate is set to 2 Gbps on both nodes.

Renaming the Local Area Network Interface on Each Node

You need to rename the LAN interface on each node to appropriately identify each network.

Although you can use any name for the network connections, Avid suggests that you use the

naming conventions provided in the table in the following procedure.

Make sure you use the same name on both nodes. The names and network connections on

both nodes must match.

To rename the local area network connections:

1. Open the Network Connections window.

a. Click Start and select Control Panel.

b. Right-click Network Connections, and select Open.

The Network Connections window opens.

2. Right-click one of the listed network connections and select Rename.

You need to match the numbered connection with the appropriate device. For example,

you can start by determining which connection refers to the left on-board network

interface and select that connection.

Page 34

Caution: Both nodes must use identical network interface names. Although you can use any name for the network connections, Avid suggests that you use the naming conventions provided in the following table.

3. Depending on your Avid Unity network and the device you selected, type a new name for the network connection and press Enter.

Use the following illustration and table for reference. The illustration uses an SR2400 in an Avid Unity ISIS environment as an example.

[Figure: SR2400 back view in an Avid Unity ISIS environment. Labels: Right on-board network interface; Left on-board network interface; Right PCI adapter network interface; Left PCI adapter network interface.]

Naming Network Connections

Network Interface: Left on-board network interface
Avid Unity ISIS New Name: Left-subnet number
Avid Unity MediaNetwork New Name: Public
Comment: ISIS - Public network. Use the subnet number of the interface. The examples in this document use Left-74. MediaNetwork - Public network.

Network Interface: Right on-board network interface
Avid Unity ISIS New Name: Right-subnet number
Avid Unity MediaNetwork New Name: Private
Comment: ISIS - Public network. Use the subnet number of the interface. The examples in this document use Right-75. MediaNetwork - Private network used for heartbeat between the two servers in the cluster.

Network Interface: Left PCI adapter network interface
Avid Unity ISIS New Name: Not used
Avid Unity MediaNetwork New Name: Not used
Comment: Disabled

Network Interface: Right PCI adapter network interface
Avid Unity ISIS New Name: Private
Avid Unity MediaNetwork New Name: Not used
Comment: ISIS - Private network used for heartbeat between the two servers in the cluster. MediaNetwork - Disabled.

Page 35

4. Repeat steps 2 and 3 for each network connection.

The following Network Connections window shows the new names used in an Avid

Unity ISIS environment.

5. Close the Network Connections window.
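Because the connection names must match on both nodes, it can help to write the names down per node and compare them. The following is a minimal sketch under that assumption; the function `name_mismatches` and the interface labels are hypothetical, and the names `Left-74`, `Right-75`, and `Private` are the ISIS examples used in this document.

```python
def name_mismatches(node_a, node_b):
    """Return the interfaces whose connection names differ between two nodes."""
    interfaces = sorted(set(node_a) | set(node_b))
    return [i for i in interfaces if node_a.get(i) != node_b.get(i)]


# Names recorded by hand from the Network Connections window on each node.
node1 = {
    "left on-board": "Left-74",
    "right on-board": "Right-75",
    "right PCI adapter": "Private",
}
node2 = {
    "left on-board": "Left-74",
    "right on-board": "Right-75",
    "right PCI adapter": "Private",
}

print(name_mismatches(node1, node2))
```

An empty list means the two nodes use identical names for every interface that was recorded.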

Configuring the Private Network Adapter on Each Node

To configure the private network adapter for the heartbeat connection:

1. Open the Network Connections window.

2. Right-click the Private network connection and select Properties.

The Private Properties dialog box opens.

3. On the General tab, click the Internet Protocol (TCP/IP) check box. Make sure all other

components are unchecked.

35

Page 36

2 Automatic Server Failover Installation

Select this check box.

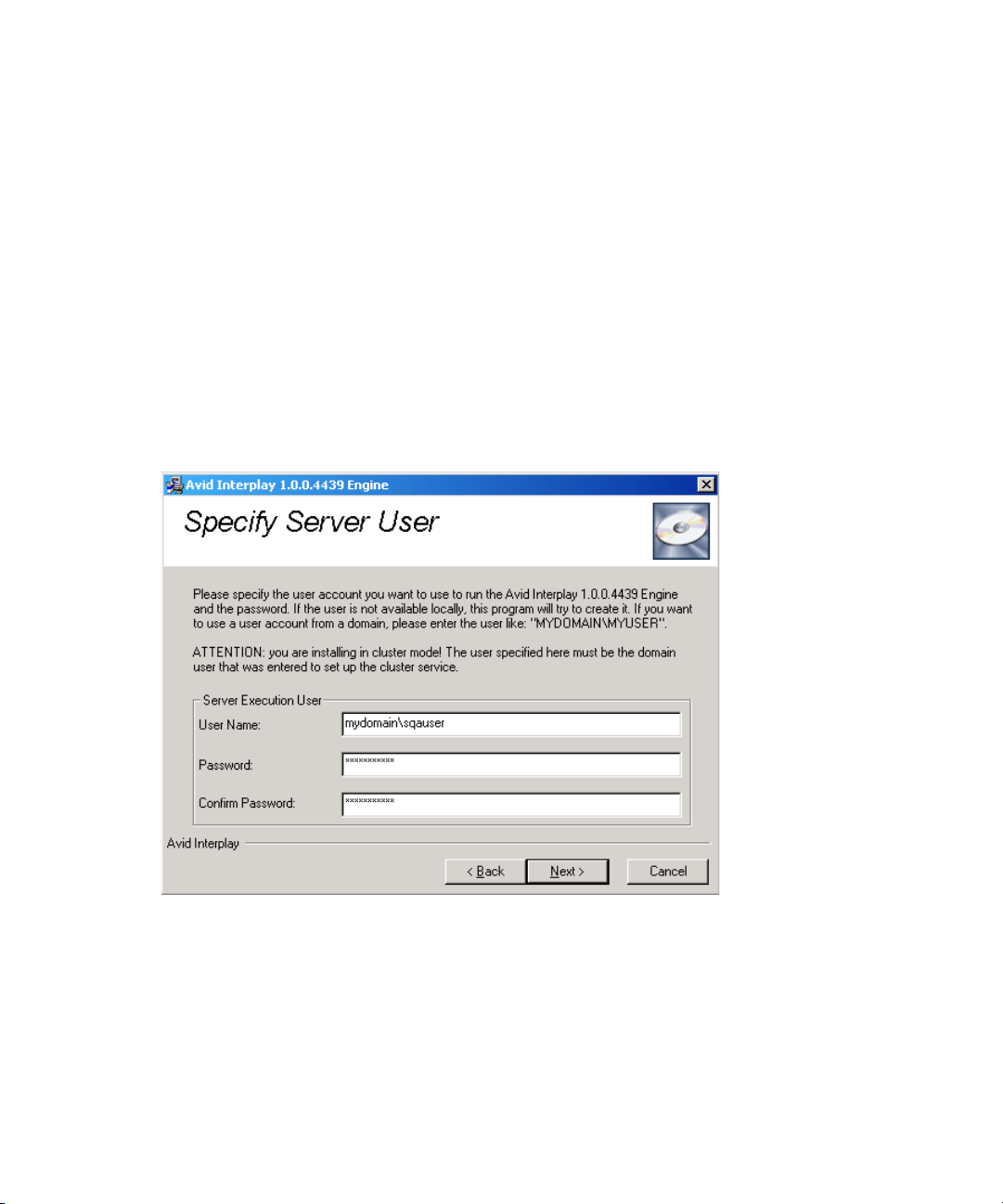

All others are unchecked.

36

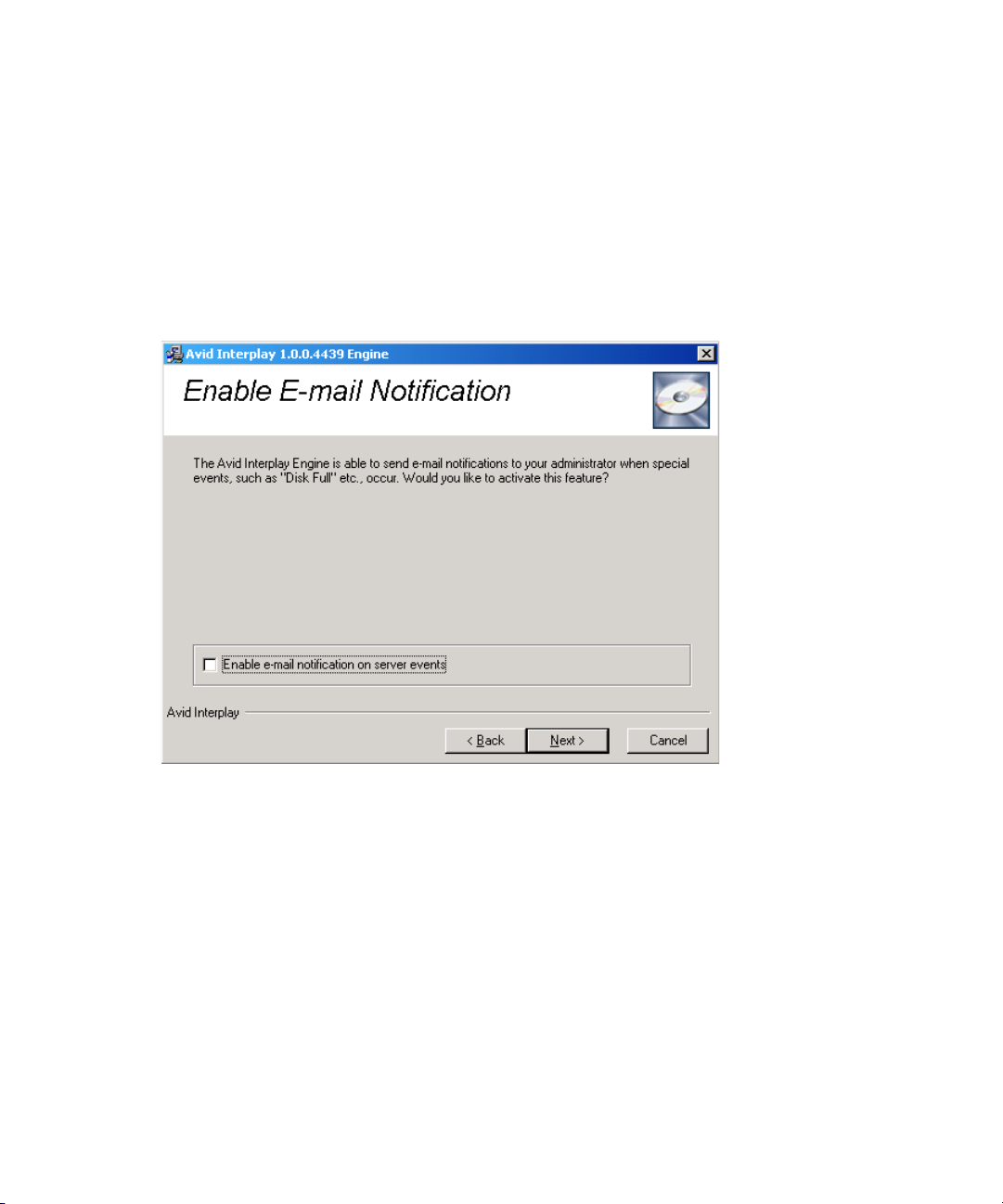

4. Select Internet Protocol (TCP/IP) and click Properties.

The Internet Protocol (TCP/IP) Properties dialog box opens.

Page 37

5. On the General tab of the Internet Protocol (TCP/IP) Properties dialog box:

a. Select “Use the following IP address.”

b. IP address: type the IP address for the Private network connection for the node you are configuring. See “List of IP Addresses and Network Names” on page 30.

Note: When performing this procedure on the second node in the cluster, make sure you use the static private IP address for that node. In this example, use 192.168.100.2.

c. Subnet mask: type the subnet mask address.

Note: Make sure you use a completely different IP address scheme from the one used for the public network.

d. Make sure the “Default gateway” and “Use the Following DNS server addresses” text boxes are empty.

[Figure: Internet Protocol (TCP/IP) Properties dialog box. Callout: Type the private IP address for the node you are configuring.]

6. Click Advanced.

The Advanced TCP/IP Settings dialog box opens.

Page 38


7. On the DNS tab, make sure no values are defined and that “Register this connection’s addresses in DNS” and “Use this connection’s DNS suffix in DNS registration” are not selected.

8. On the WINS tab, do the following:

• Make sure no values are defined in the WINS addresses area.

• Uncheck “Enable LMHOSTS Lookup.”

• Select “Disable NetBIOS over TCP/IP.”

9. Click OK.

A message might be displayed stating “This connection has an empty primary WINS address. Do you want to continue?” Click Yes.

10. Repeat this procedure on the other node in the cluster, using the static private IP address for that node.
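The requirement that the private network use a different address scheme from the public network can be checked with Python's standard `ipaddress` module. This is a sketch only: the function name `networks_overlap` is hypothetical, and the /24 subnet masks are assumptions, since this guide does not specify the masks. The addresses are the sample values used in this document.

```python
import ipaddress


def networks_overlap(private_cidr, public_cidr):
    """Return True if the private and public subnets share any addresses."""
    # strict=False lets us pass a host address with a prefix length,
    # for example 192.168.100.2/24, and derive its network.
    private = ipaddress.ip_network(private_cidr, strict=False)
    public = ipaddress.ip_network(public_cidr, strict=False)
    return private.overlaps(public)


# Private heartbeat address from the example above; public sample address
# from the cluster group diagram. Both /24 masks are assumed.
print(networks_overlap("192.168.100.2/24", "11.22.33.45/24"))
```

A result of `False` indicates that the two subnets are distinct under the assumed masks.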

Page 39


Configuring the Binding Order Networks on Each Node

Repeat this procedure on each node and make sure the configuration matches on both nodes.

To configure the binding order networks:

1. On one node, open the Network Connections window.

2. Select Advanced > Advanced Settings.

3. In the Connections area, use the arrow controls to position the network connections in the following order:

- For an Avid Unity ISIS environment, use the following order, as shown in the illustration:

  - Right

  - Left

  - Private

  - Local Area Connection 4

Page 40

- For an Avid Unity MediaNetwork environment, use the following order:

  - Public

  - Private

4. Click OK.

5. Repeat this procedure on the other node and make sure the configuration matches on both nodes.

Configuring the Public Network Adapter on Each Node

Make sure you configure the IP address network interfaces for the Public Network Adapter as you normally would. For examples of public network settings, see “List of IP Addresses and Network Names” on page 30.

Joining Both Servers to the Active Directory Domain

After configuring the network information, join the two servers to the Active Directory domain. You can then use your domain credentials for the Cluster Installation Account (see “Before You Begin the Server Failover Installation” on page 28).

Configuring the Cluster Shared-Storage RAID Disks on Each Node

Both nodes must have the same configuration for the cluster shared-storage RAID disk.

When you configure the disks on the second node, make sure the disks match the disk

configuration you set up on the first node.

Note: Before you create the partitions on the cluster nodes, make sure the cluster shared-storage RAID disks were pre-configured (mirror, stripe, etc.) by the vendor. Make sure the disks are Basic and not Dynamic.

To configure the disks on each node:

1. Shut down the server node you are not configuring at this time.

2. Open the Disk Management tool.

3. Initialize the disks, if not already initialized, by right-clicking the disk and selecting

Initialize Disk.

4. Use Quick Format to configure the disks as partitions, using the following names and

drive letters:

- Quorum (Q:) 4GB

- MSDTC (R:) 5GB

- Database (S:) 925GB

The following illustration shows the required names and drive letters.

Page 41

[Figure: disk configuration. Callout: Configure disks as shown.]

5. Verify you can access the disk and that it is working by creating a file and deleting it.

6. Shut down the first node and start the second node.

7. On the second node, assign drive letters and names. You do not need to format the disks.

a. Open the Disk Management tool. Right-click the partition, select Change Drive

Letter, and enter the appropriate letter. Repeat these actions for the other partitions.

b. Open My Computer. Select a drive, right-click, select Rename, and enter the

appropriate name. Repeat these actions for the other drives.
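The checks in the procedure above (matching names and drive letters on both nodes, and creating then deleting a file in step 5) could be scripted roughly as follows. This is a minimal sketch, not part of the Avid installation: the function names are hypothetical, and the observed drive-letter map is assumed to be typed in by hand from what Disk Management shows on each node.

```python
import os
import tempfile

# Expected layout, taken from the partition list in step 4.
EXPECTED_VOLUMES = {"Q:": "Quorum", "R:": "MSDTC", "S:": "Database"}


def find_layout_mismatches(actual, expected=EXPECTED_VOLUMES):
    """Return readable differences between two {drive letter: name} maps.

    `actual` is an assumption of this sketch: a map entered by hand from
    what Disk Management shows on the node being checked.
    """
    problems = []
    for letter, name in expected.items():
        found = actual.get(letter)
        if found is None:
            problems.append(f"{letter} is missing (expected {name})")
        elif found != name:
            problems.append(f"{letter} is named {found!r}, expected {name!r}")
    return problems


def can_write_and_delete(directory):
    """Create a small file in `directory`, then delete it (the check in step 5)."""
    try:
        fd, path = tempfile.mkstemp(prefix="cluster_disk_check_", dir=directory)
        with os.fdopen(fd, "w") as handle:
            handle.write("test")
        os.remove(path)
    except OSError:
        # Covers a missing drive, a read-only volume, or a permissions problem.
        return False
    return not os.path.exists(path)
```

On a node, `can_write_and_delete("Q:\\")` would exercise the Quorum partition, and running `find_layout_mismatches` with the values observed on the second node shows whether its names and letters match the first node.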

Configuring the Cluster Service

Take the following steps to configure the cluster service:

1. Turn off the second node.

2. Configure the first node using the New Server Cluster Wizard. See “Configuring the Cluster Service on the First Node” on page 42.

Page 42

3. Validate the cluster service installation on the first node. See “Validating the Cluster

Service on the First Node” on page 47.

4. Turn on the second node. Leave the first node turned on.

5. Configure the second node using the Add Cluster Computers Wizard. See “Configuring the Cluster Service on the Second Node” on page 47.

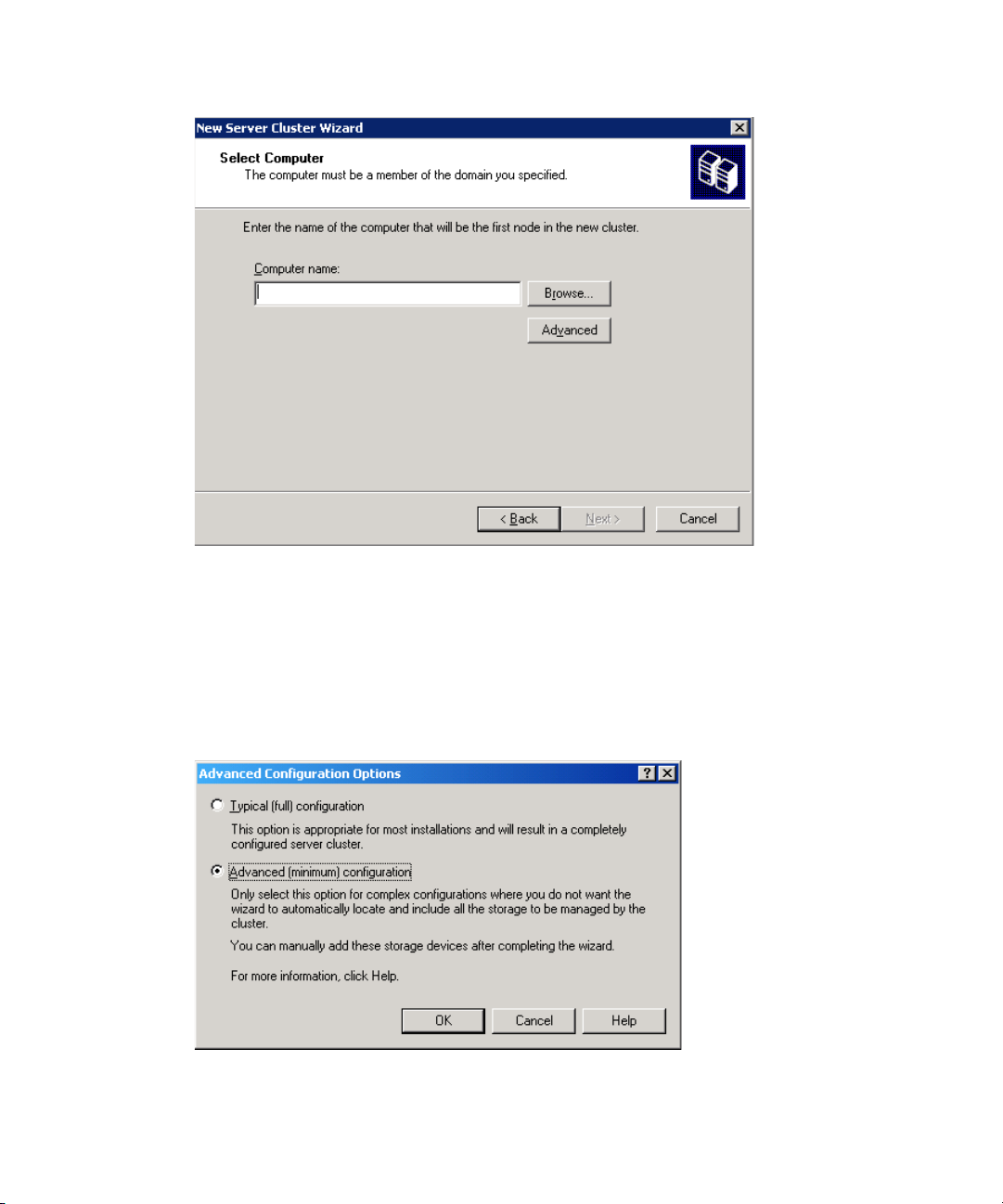

Configuring the Cluster Service on the First Node

To configure the cluster service on the first node:

1. Turn off the server for the node you are not configuring at this time.

2. Make sure all storage devices are turned on.

3. Click Start and select All Programs > Administrative Tools > Cluster Administrator.

The Open Connection to Cluster dialog box opens.


4. Select “Create new cluster” from the Action menu.

5. Make sure you have the prerequisites to configure the cluster, as shown in the New

Server Cluster Wizard Welcome window.

6. Click Next.

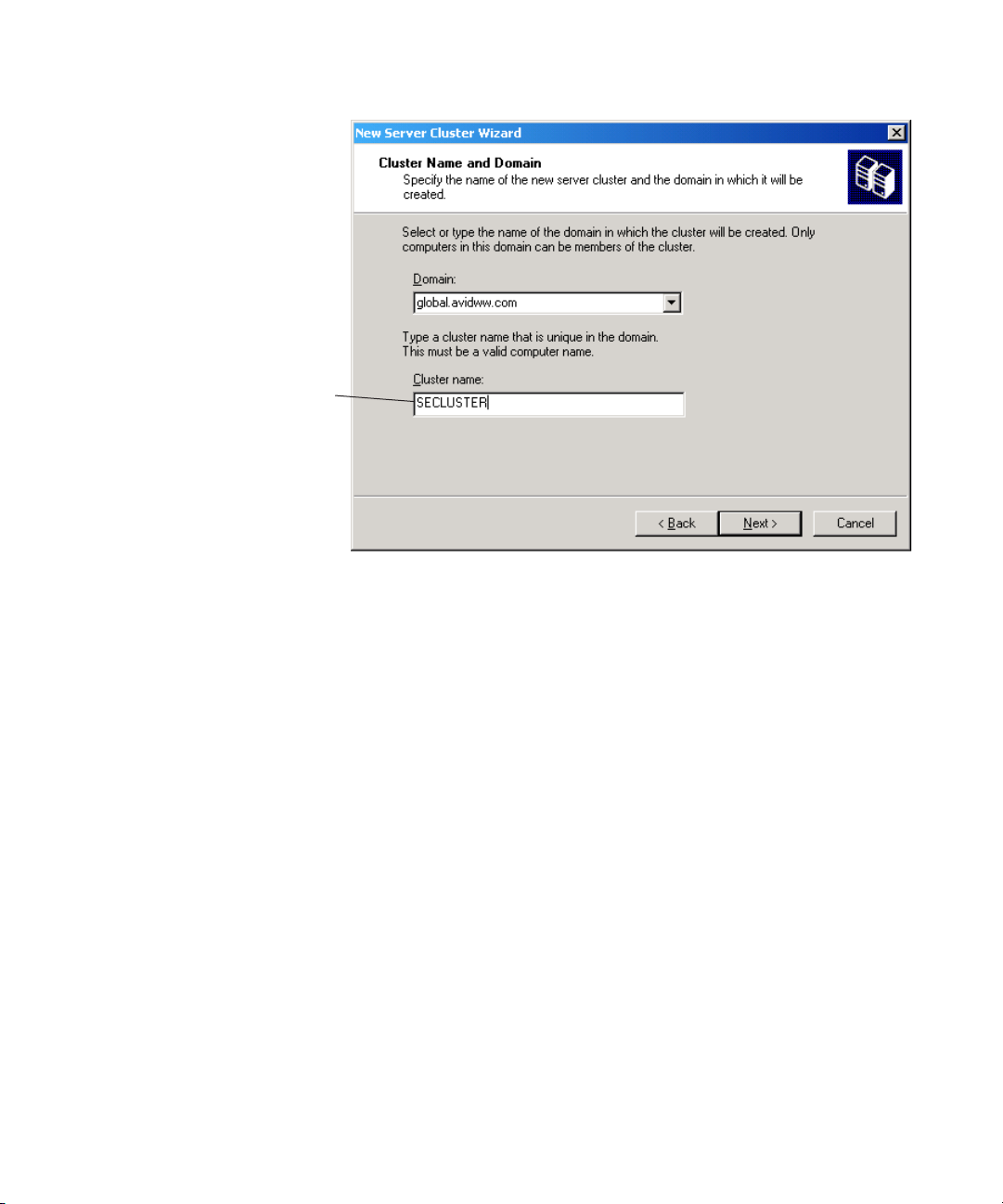

7. In the Cluster Name and Domain dialog box, do the following:

- Domain: select the name of your Active Directory domain

- Cluster name: type the Cluster service name, for example SECLUSTER. See “List of IP Addresses and Network Names” on page 30.

Page 43

8. Click Next.


The Select Computer dialog box opens.

Note: You might be prompted for an account. If so, use a domain user account, such as the Cluster Installation Account referred to in “Before You Begin the Server Failover Installation” on page 28. Do not use the Cluster Service Account (Server Execution User).

Page 44

9. In the Select Computer dialog box, in the Computer name text box, type the Cluster

node host name of the first node.


For example, use SECLUSTER1. See “List of IP Addresses and Network Names” on

page 30.

10. Click Advanced.

The Advanced Configuration Options dialog box opens.

11. Select Advanced (minimum) configuration, and click OK.

12. Click Next.

Page 45


The setup process analyzes the node for hardware or software issues that might cause problems during installation. A warning icon displays next to “Checking Cluster feasibility.” In this case, the warnings do not indicate a problem.

13. Click Next after the analysis is complete and the Task Complete bar is green.

14. In the IP Address dialog box, type the Cluster Service ISIS IP address for the left side in

the IP Address text box. See

“List of IP Addresses and Network Names” on page 30.

15. Click Next.

16. In the Cluster Service Account dialog box, type the cluster user name and password, and

select the domain.

This is the Cluster Service Account (Server Execution User) used to start the cluster

service. It is also used by the Interplay Engine. It must be a unique name that will not be

used for any other purpose. See

“Before You Begin the Server Failover Installation” on

page 28. Check that the account is part of the domain, and that the name and password

are correct, by logging into the domain.

17. Click Next.

The Proposed Cluster Configuration dialog box opens.

Page 46

18. Click Quorum.

The Cluster Configuration Quorum dialog box opens.

19. Select Disk Q: from the menu, and click OK.

20. Review the summary on the Proposed Cluster Configuration dialog box to verify all the

information for creating the cluster is correct.

21. Click Next. The Creating the Cluster dialog box opens.

22. Review any errors during the cluster creation.

Note: If red errors display, check the Cluster Service ISIS IP address you entered in step 14.

23. Click Next.

Page 47

24. Click Finish.
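Because the note in step 22 points to the IP address from step 14 as a likely cause of errors, it can help to check the address before you enter it. The following is a minimal sketch that uses Python's standard `ipaddress` module. The function name and the sample addresses in the usage note are hypothetical and do not come from this guide; use the values from your own list of IP addresses and network names.

```python
import ipaddress


def address_in_subnet(address, subnet):
    """Return True if `address` is a valid IP address that lies inside `subnet`.

    `subnet` is written in CIDR form, for example "192.168.74.0/24"
    (a hypothetical value used only for illustration).
    """
    try:
        ip = ipaddress.ip_address(address)
        network = ipaddress.ip_network(subnet, strict=True)
    except ValueError:
        # A malformed address or subnet is reported as "not in subnet".
        return False
    return ip in network
```

For example, `address_in_subnet("192.168.74.50", "192.168.74.0/24")` returns `True`, while an address on a different subnet or a mistyped octet such as `300` returns `False`.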


Validating the Cluster Service on the First Node

To validate the first node cluster installation:

1. Click Start and select Programs > Administrative Tools > Cluster Administrator.

2. In the left pane, click Resources to make sure all resources are online.


Configuring the Cluster Service on the Second Node

To configure the cluster service on the second node:

1. Make sure the first node is on and all storage devices are turned on.

2. Turn on the server for the second node.

3. In the first node, click Start and select Programs > Administrative Tools > Cluster

Administrator.

4. Select File > New > Node.

The Add Node Wizard opens.

5. Click Next.

Note: You might be prompted for an account. If so, use a domain user account, such as the Cluster Installation Account referred to in “Before You Begin the Server Failover Installation” on page 28. Do not use the Cluster Service Account (Server Execution User).

6. In the Select Computers dialog box, in the Computer name text box, type the Cluster node host name of the second node and click Add.

For example, use SECLUSTER2. See “List of IP Addresses and Network Names” on page 30.

Page 48

7. Click Advanced.

The Advanced Configuration Options dialog box opens.


8. Select Advanced (minimum) configuration, and click OK.

9. Click Next.

The setup process analyzes the node for hardware or software issues that might cause problems during installation. A warning icon displays next to “Checking Cluster feasibility.” In this case, the warnings do not indicate a problem.

10. Click Next after the analysis is complete and the Task Complete bar is green.

Page 49


11. Type the password for the cluster service account. This account is used to start the

cluster service.

12. Click Next.

13. In the Proposed Cluster Configuration dialog box, review the summary to verify all the

information for creating the cluster is correct.

14. Click Next.

The Adding Nodes to the Cluster dialog box opens.

15. Review any errors during the cluster creation.

A warning icon displays next to “Reanalyzing cluster.” In this case, the warnings do not

indicate a problem.

16. Click Next.

17. Click Finish.

Page 50

[Illustration callout: The Private network (virtual cluster) is used for the Heartbeat.]

Configuring Rules for the Cluster Networks

After the networks are configured on each node and the cluster service is configured, you need to configure the network roles, which determine each network's function within the cluster.

Note: The procedures in this section use Left-74 and Right-75 as examples of the public networks. You should replace the numbers with your subnet numbers.

To configure the rules for the cluster networks:

1. Click Start and select Programs > Administrative Tools > Cluster Administrator.

2. In the left pane, click Cluster Configuration > Networks, and right-click Private and

select Properties.

3. Select “Internal cluster communications only (private network).”


4. Click OK.

5. In the left pane, click Cluster Configuration > Networks, and right-click Left-74 and

select Properties.

6. In the Left-74 Properties dialog box, verify these options:

Page 51

- Name: Left-74

- Enable this network for cluster use

- All communications (mixed network)

7. Click OK.

8. In the left pane, click Cluster Configuration > Networks, and right-click Right-75 and

select Properties.

9. In the Right-75 Properties dialog box, verify these options:

- Name: Right-75

- Enable this network for cluster use

- All communications (mixed network)

10. Click OK.
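The role assignments from the procedure above can be summarized as a small lookup table. This is only an illustrative sketch: the network names Left-74 and Right-75 are the examples used in this section, the function name is hypothetical, and the observed roles are assumed to be copied by hand from each network's Properties dialog box in Cluster Administrator.

```python
INTERNAL_ONLY = "Internal cluster communications only (private network)"
MIXED = "All communications (mixed network)"

# Roles selected or verified in steps 3, 6, and 9.
EXPECTED_ROLES = {
    "Private": INTERNAL_ONLY,
    "Left-74": MIXED,
    "Right-75": MIXED,
}


def role_mismatches(observed, expected=EXPECTED_ROLES):
    """Return the sorted names of networks whose observed role differs from the expected one.

    A network that is absent from `observed` is also reported.
    """
    return sorted(
        name for name, role in expected.items() if observed.get(name) != role
    )
```

An empty list means every network has the role this section calls for. Replace the keys with your own network names before using the table.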

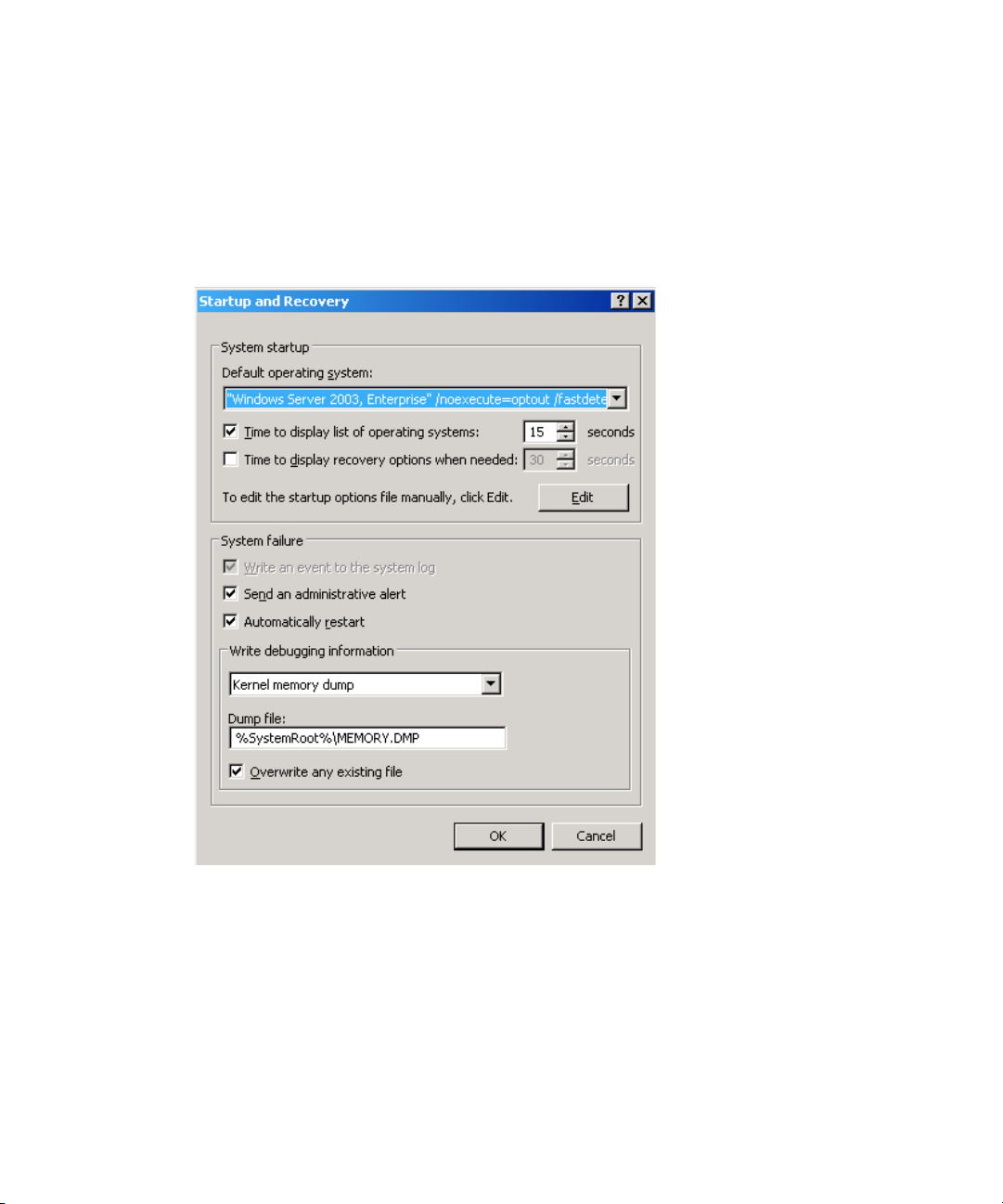

Prioritizing the Heartbeat Adapter

After you configure network roles for how the cluster service uses the network adapter, you

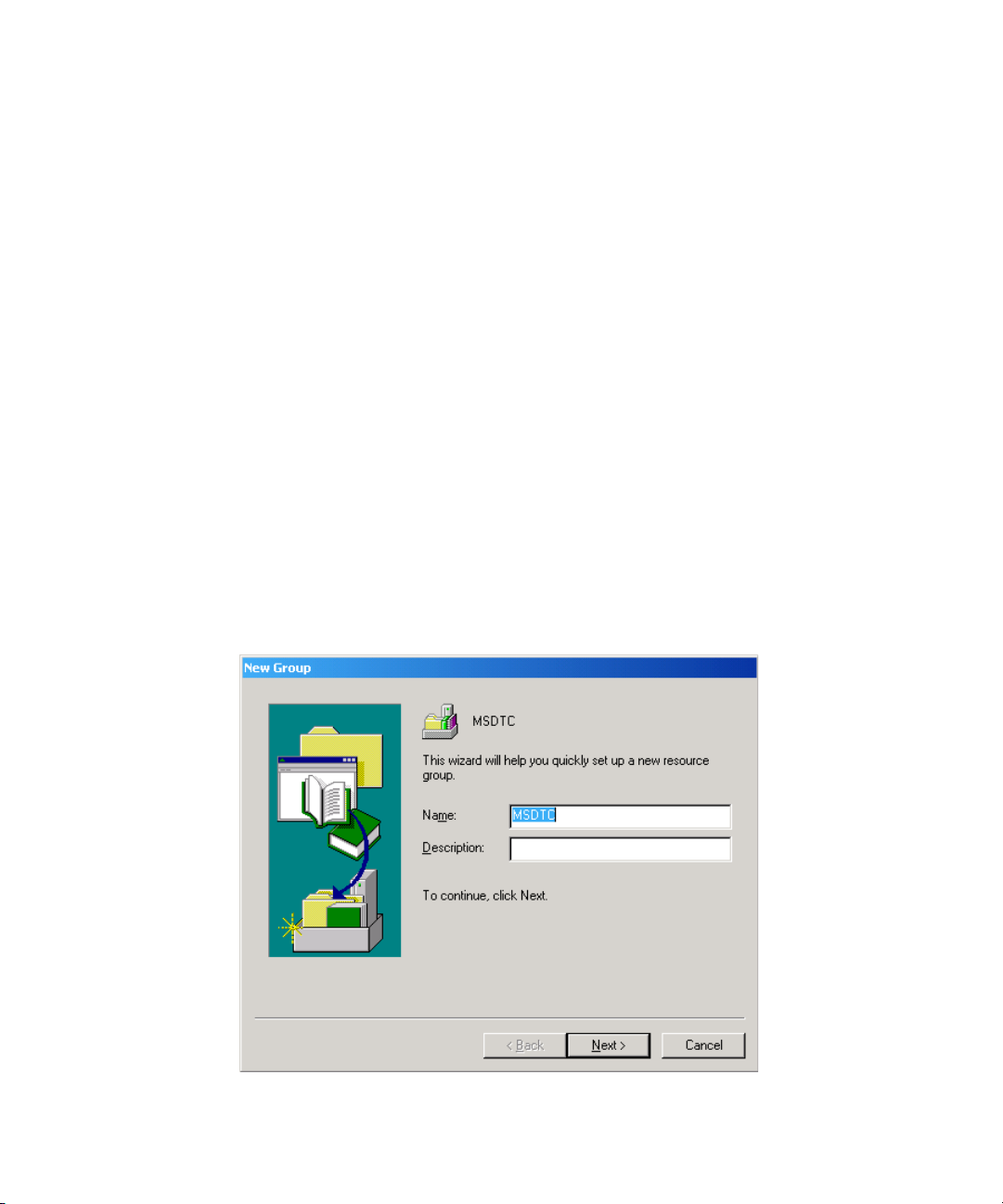

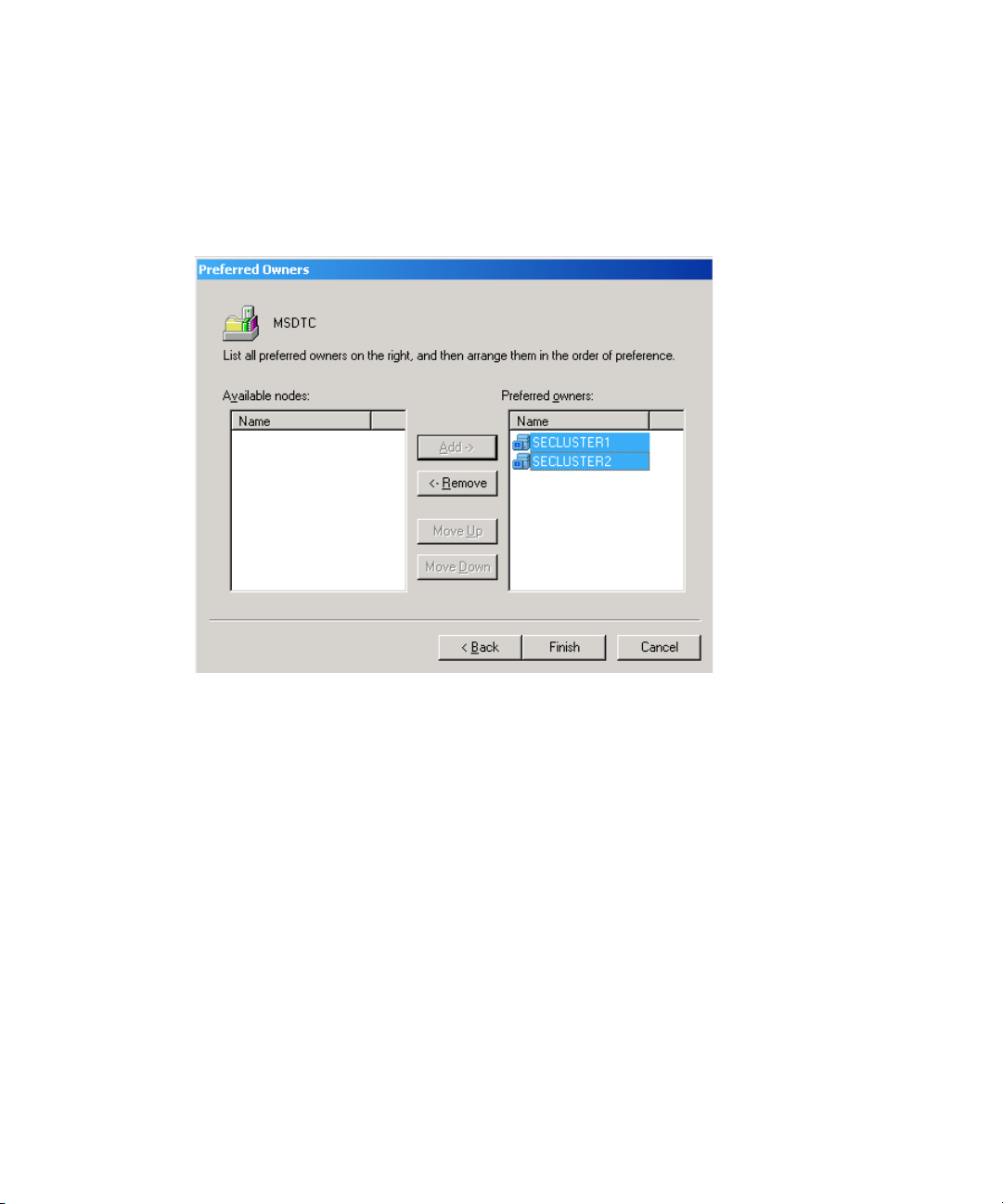

need to prioritize the order in which they are used for intra-cluster communications. The