User Manual

SM2-D1312-TI6455 / VisionCam PS

CMOS DSP Camera

MAN060 05/2013 V1.0

All information provided in this manual is believed to be accurate and reliable. No

responsibility is assumed by Photonfocus AG for its use. Photonfocus AG reserves the right to

make changes to this information without notice.

Reproduction of this manual in whole or in part, by any means, is prohibited without prior

permission having been obtained from Photonfocus AG.


Contents

1 Preface
1.1 About Photonfocus
1.2 Contact
1.3 Sales Offices
1.4 Further information
1.5 Legend

2 How to get started (SM2)
2.1 Introduction
2.2 Get the camera and its accessories
2.2.1 SM2-Camera only
2.2.2 SM2 Starter Kit
2.2.3 Accessories
2.3 Hardware Installation
2.4 Software Installation
2.5 Emulator, Debugger Installation
2.5.1 Code Composer Studio (CCS)
2.5.2 Emulator
2.5.3 Connect camera to JTAG
2.5.4 Framework and examples
2.5.5 Documentation of the code and libraries
2.5.6 microSD card

3 Product Specification
3.1 Introduction
3.2 Hardware Overview
3.3 Feature Overview
3.4 Technical Specification

4 Functionality
4.1 Image Acquisition
4.1.1 Readout Modes
4.1.2 Readout Timing
4.1.3 Exposure Control
4.1.4 Maximum Frame Rate
4.2 Pixel Response
4.2.1 Linear Response
4.2.2 LinLog®
4.3 Reduction of Image Size
4.3.1 Region of Interest (ROI)
4.3.2 ROI configuration
4.3.3 Calculation of the maximum frame rate
4.3.4 Multiple Regions of Interest
4.3.5 Decimation
4.4 Trigger and Strobe
4.4.1 Introduction
4.4.2 Trigger Source
4.4.3 Exposure Time Control
4.4.4 Trigger Delay
4.4.5 Burst Trigger
4.4.6 Software Trigger
4.4.7 Strobe Output
4.5 Data Path Overview
4.6 Image Correction
4.6.1 Overview
4.6.2 Offset Correction (FPN, Hot Pixels)
4.6.3 Gain Correction
4.6.4 Corrected Image
4.7 Digital Gain and Offset
4.8 Grey Level Transformation (LUT)
4.8.1 Gain
4.8.2 Gamma
4.8.3 User-defined Look-up Table
4.8.4 Region LUT and LUT Enable
4.9 Convolver (monochrome models only)
4.9.1 Functionality
4.9.2 Settings
4.9.3 Examples
4.10 Crosshairs (monochrome models only)
4.10.1 Functionality
4.11 Image Information and Status Line
4.11.1 Counters and Average Value
4.11.2 Status Line
4.12 Test Images
4.12.1 Ramp
4.12.2 LFSR
4.12.3 Troubleshooting using the LFSR

5 Hardware Interface
5.1 GigE Connector
5.2 Power Supply Connector
5.3 Trigger Connector
5.4 Status Indicator (SM2 cameras)
5.5 Power and Ground Connection for SM2 Cameras
5.6 Trigger and Strobe Signals for SM2 Cameras
5.6.1 Overview
5.6.2 Opto-isolated Interface (power connector)
5.6.3 RS422 Interface

6 Framework Functionalities
6.1 Web Server
6.1.1 Access to the Web Server
6.1.2 Information about the Camera
6.1.3 Configuration of the Camera
6.1.4 Sensor
6.1.5 Camera
6.1.6 Save Pic
6.1.7 Tools
6.1.8 View Par
6.1.9 App
6.2 FTP Server

7 Mechanical and Optical Considerations
7.1 Mechanical Interface
7.1.1 Cameras with GigE Interface
7.2 Optical Interface
7.2.1 Cleaning the Sensor
7.3 CE compliance

8 Warranty
8.1 Warranty Terms
8.2 Warranty Claim

9 References

A Pinouts
A.1 Power Supply Connector
A.2 RS422 Trigger and Strobe Interface

B Revision History

1 Preface

1.1 About Photonfocus

The Swiss company Photonfocus is one of the leading specialists in the development of CMOS

image sensors and corresponding industrial cameras for machine vision, security & surveillance

and automotive markets.

Photonfocus is dedicated to making the latest generation of CMOS technology commercially

available. Active Pixel Sensor (APS) and global shutter technologies enable high speed and

high dynamic range (120 dB) applications, while avoiding disadvantages like image lag,

blooming and smear.

Photonfocus has proven that the image quality of modern CMOS sensors is now appropriate

for demanding applications. Photonfocus’ product range is complemented by custom design

solutions in the area of camera electronics and CMOS image sensors.

Photonfocus is ISO 9001 certified. All products are produced with the latest techniques in order

to ensure the highest degree of quality.

1.2 Contact

Photonfocus AG, Bahnhofplatz 10, CH-8853 Lachen SZ, Switzerland

Sales Phone: +41 55 451 07 45 Email: sales@photonfocus.com

Support Phone: +41 55 451 01 37 Email: support@photonfocus.com

Table 1.1: Photonfocus Contact

1.3 Sales Offices

Photonfocus products are available through an extensive international distribution network

and through our key account managers. Details of the distributor nearest you and contacts to

our key account managers can be found at www.photonfocus.com.

1.4 Further information

Photonfocus reserves the right to make changes to its products and documentation without notice. Photonfocus products are neither intended nor certified for use in life support systems or in other critical systems. The use of Photonfocus products in such applications is prohibited.

Photonfocus is a trademark and LinLog® is a registered trademark of Photonfocus AG. CameraLink® and GigE Vision® are registered marks of the Automated Imaging Association. Product and company names mentioned herein are trademarks or trade names of their respective companies.


Reproduction of this manual in whole or in part, by any means, is prohibited

without prior permission having been obtained from Photonfocus AG.

Photonfocus cannot be held responsible for any technical or typographical errors.

1.5 Legend

In this documentation the reader’s attention is drawn to the following icons:

Important note

Alerts and additional information

Attention, critical warning

✎

Notification, user guide


2 How to get started (SM2)

2.1 Introduction

The SM2-D1312(IE)-TI6455 Series / VisionCam PS is an intelligent camera especially designed for

machine vision applications. The camera consists of the CMOS camera head and the embedded

vision computer. These two main components are developed by Photonfocus AG (camera head)

and IMAGO Technologies (vision computer). This document is a guideline for programming

and understanding the SM2-D1312(IE)-TI6455 Series / VisionCam PS.

This guide shows you:

• How to get the camera and its accessories

• How to install the required hardware

• How to install the required software

• How to acquire your first images and how to modify camera settings

2.2 Get the camera and its accessories

2.2.1 SM2-Camera only

An order of the SM2 camera contains the camera body only (no lens).

Figure 2.1: SM2 camera


2.2.2 SM2 Starter Kit

An order of the SM2 Starter Kit contains the following items:

• SM2 camera body (no lens)

• SM2-JTAG connector

Figure 2.2: SM2-JTAG adapter and camera

2.2.3 Accessories

SM2-JTAG Connector

The SM2-JTAG connector is needed to connect the emulator to the DSP of the SM2 camera. The

JTAG interface is behind the "JTAG / SD-CARD" plate. This connector can be ordered from

Photonfocus.

Figure 2.3: SM2-JTAG adapter


Power supply

The power supply can be ordered from Photonfocus.

Figure 2.4: SM2 power supply

SM2 Trigger cables

The SM2 trigger cable package contains two cables:

• 12-pin cable for the Hirose power connector.

• 14-pin cable for the MDR-14 connector.

Figure 2.5: SM2 trigger cables


2.3 Hardware Installation

The hardware installation that is required for this guide is described in this section.

The following hardware is required:

• PC with Microsoft Windows OS (XP, Vista, Windows 7)

• A Gigabit Ethernet network interface card (NIC) must be installed in the PC.

• Photonfocus SM2 camera.

• Suitable power supply for the camera (see the camera manual for the specification), which can be ordered from your Photonfocus dealership.

• GigE cable of at least Cat 5E or 6.

Photonfocus SM2 cameras can also be used under Linux.

Do not bend GigE cables too much. Excess stress on the cable results in transmission errors. In robot applications, the stress applied to the GigE cable is especially high due to the fast movement of the robot arm. For such applications, special drag chain capable cables are available.

The following list describes the connection of the camera to the PC:

1. Remove the Photonfocus SM2 camera from its packaging. Please make sure the following

items are included with your camera:

• Power supply connector

• SM2 camera body

• IP address (default: 192.168.1.240)

If any items are missing or damaged, please contact your dealership.

2. Connect the camera to the GigE interface of your PC with a GigE cable of at least Cat 5E or 6.

Figure 2.6: Rear view of the SM2 camera SM2-D1312(IE)-TI6455-80 with power supply and I/O connector,

Ethernet jack (RJ45) and status LED


3. Connect a suitable power supply to the power plug. The pinout of the connector is shown in the camera manual.

Check the correct supply voltage and polarity! Do not exceed the operating

voltage range of the camera.

A suitable power supply can be ordered from your Photonfocus dealership.

4. Connect the power supply to the camera (see Fig. 2.6).


2.4 Software Installation

This section describes the installation of the required software to accomplish the tasks

described in this chapter.

1. Use the JavaApplet (tcpdisplay.jar) to connect to the camera. There are different ways to get the JavaApplet:

• If you already have the JavaApplet and you know the camera IP address, double-click the JavaApplet and enter the camera IP address.

• You can find the JavaApplet in the Photonfocus\SM2 folder if you have installed PFInstaller and selected the SM2 package during installation.

• If you don’t know the camera IP address, use the VIBFinder.exe tool (also located in the Photonfocus\SM2 folder of the PFInstaller installation). Click "Find Devices", select the camera and click "Show Properties". Now you can click "Open TCP Display".

• The JavaApplet (tcpdisplay.jar) is also on the microSD card of the camera. Unplug the microSD card and connect it to a computer, or download the JavaApplet directly over FTP (ftp://192.168.1.240, username, password).

2. The web browser displays the web server main window as shown in Fig. 2.7.

Figure 2.7: Framework (web server) start page
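The FTP download mentioned in step 1 can also be scripted. The following is a minimal sketch using Python's standard ftplib module. It assumes that tcpdisplay.jar is stored in the root directory of the camera's FTP server; the user name and password in the example call are placeholders, since the actual credentials are not given here, and the ftp_factory parameter is a hypothetical hook that only exists to make the function testable without a camera.

```python
from ftplib import FTP

CAMERA_IP = "192.168.1.240"  # default camera IP address from this manual


def download_file(host, user, password, remote_name, local_path,
                  ftp_factory=FTP):
    """Download one file from an FTP server in binary mode.

    ftp_factory is a hypothetical hook for testing; by default the
    standard ftplib.FTP class is used.
    """
    with ftp_factory(host, timeout=10) as ftp:
        ftp.login(user, password)
        with open(local_path, "wb") as out:
            # RETR transfers the remote file; each chunk is written to disk.
            ftp.retrbinary("RETR " + remote_name, out.write)
    return local_path


# Example call (placeholder credentials, assumed remote file location):
# download_file(CAMERA_IP, "username", "password",
#               "tcpdisplay.jar", "tcpdisplay.jar")
```

The example call is left as a comment so that the block can be imported without a camera attached to the network.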


2.5 Emulator, Debugger Installation

This section describes which software and tools are needed to compile your own C/C++ code and download it to the DSP.

2.5.1 Code Composer Studio (CCS)

The Code Composer Studio (CCS) is an integrated development environment (IDE) for Texas

Instruments (TI) embedded processor families. CCS comprises a suite of tools used to develop

and debug embedded applications. It includes compilers for each of TI’s device families, source

code editor, project build environment, debugger, profiler, simulators, real-time operating

system and many other features.

More information about CCS is available at www.ti.com/tool/ccstudio.

The Node Locked Single User license (N01D) costs about 445 US$. There is also a 90-day evaluation version (CCS-FREE) available. The evaluation version has no other limitations. More information about CCS licensing:

http://processors.wiki.ti.com/index.php/Licensing_-_CCS.

2.5.2 Emulator

For debugging, an emulator is needed. Photonfocus recommends the XDS200, which costs about 295 US$.

Pros of the XDS200:

• No additional driver is needed. The driver is part of the CCS installation.

• Only a USB connection is required (no additional power supply), which is ideal for notebook users.

• Low cost (the XDS560 family costs about 1000 US$).

The XDS200 can be ordered directly from the Texas Instruments website. Find more information about the XDS200 here: www.ti.com/tool/xds200.

Figure 2.8: XDS200 emulator


2.5.3 Connect camera to JTAG

Remove the "JTAG / SD-Card" plate before connecting the JTAG adapter. Figure 2.9 shows how to connect the SM2-JTAG connector and the XDS200 emulator.

Figure 2.9: How to connect camera to XDS200 emulator

2.5.4 Framework and examples

The framework and the examples work with CCS. The source code of the framework and the examples is also provided.

2.5.5 Documentation of the code and libraries

This documentation is on the microSD card of the SM2 camera.

2.5.6 microSD card

The microSD card of the SM2 camera contains the following:

• All needed files for CCS development (framework, source code, libraries, examples) in a

zip file

• Camera manual and source code documentation.

• VIBFinder.exe: this Windows tool finds the SM2 camera in the network if the user does not know the camera IP address.

• tcpdisplay.jar: JavaApplet to connect to the camera's built-in web server

• Ethernet.ini: used to change the camera IP address (default address: 192.168.1.240)

Remove the "JTAG / SD-Card" plate before accessing the microSD card.

Figure 2.10: microSD card


3 Product Specification

3.1 Introduction

The SM2-D1312(IE)-TI6455 CMOS camera series is built around the A1312(IE/C) CMOS image sensor from Photonfocus and IMAGO Technologies, which provides a resolution of 1312 x 1082 pixels and a wide range of spectral sensitivity. There are standard monochrome and NIR enhanced monochrome (IE) models. The camera series is aimed at standard applications in industrial image processing. The principal advantages are:

• Resolution of 1312 x 1082 pixels.

• Wide spectral sensitivity from 320 nm to 1030 nm for monochrome models.

• Enhanced near infrared (NIR) sensitivity with the A1312IE CMOS image sensor.

• High quantum efficiency: > 50% for monochrome models and between 25% and 45% for

colour models.

• High pixel fill factor (> 60%).

• Superior signal-to-noise ratio (SNR).

• Low power consumption at high speeds.

• Very high resistance to blooming.

• High dynamic range of up to 120 dB.

• Ideal for high speed applications: Global shutter.

• Image resolution of up to 12 bit.

• On camera shading correction.

• 3x3 Convolver for image pre-processing included on camera.

• Up to 512 regions of interest (MROI).

• 2 look-up tables (12-to-8 bit) on user-defined image regions (Region-LUT).

• Crosshairs overlay on the image (monochrome models only).

• Image information and camera settings inside the image (status line).

• Software provided for setting and storage of camera parameters.

• The camera has a Gigabit Ethernet interface.

• Texas Instruments TMS320C6455 DSP running at 1.2 GHz (9600 MIPS).

• 512 MB onboard DDR RAM.

• 2 GB microSD card (max. 32 GB).

• Advanced I/O capabilities: 3 isolated trigger inputs, 3 differential isolated RS-422 inputs, 3 differential isolated RS-422 outputs and 3 isolated outputs.

• Wide power input range from 12 V (-10 %) to 24 V (+10 %).

The general specification and features of the camera are listed in the following sections.


3.2 Hardware Overview

The three main components in the block diagram are the CMOS camera module, the FPGA and the digital signal processor (DSP). These components are specifically designed to transfer high data rates and to communicate with each other. The high-speed communication protocol used is called Sun-System-Protocol. The CMOS camera module is a D1312(IE) camera module from Photonfocus, with all its features such as 1312 x 1082 pixel resolution, global shutter, shading correction and LinLog® technology. The image processing computer (FPGA / DSP / SDRAM / Flash) is a module based on VisionBox technology from IMAGO Technologies. This module handles the image data, performs the image processing and manages the communication between the components and peripheral devices. The digital signal processor has a fast internal memory of 2048 kB and runs at a maximum frequency of 1200 MHz. Up to 512 MB of external SDRAM store image data and program data.


[Block diagram: CMOS camera, FPGA, C6455 DSP (1200 MHz), RAM (512 MB), Flash (4 MB), Ethernet (1000 Mbit/s), RS422 transceiver (3x in / 3x out), 3x opto in, 3x opto out, 1x RS232, 2x LED, microSD card]

Figure 3.1: Hardware overview

Each SM2-D1312(IE)-TI6455 / VisionCam PS camera features an internal flash memory of 4 MBytes and a µSD card. The internal flash memory is used to store the bootloader, some configuration files and the firmware. The µSD card is used to store image data, the executable program and other user data. Communication with external devices takes place over a 1000 Mbit/s Ethernet connection, which allows high data rates. For other communication purposes, the optocoupled inputs and outputs, the RS422 transceivers and the serial port are available.


3.3 Feature Overview

Characteristics    SM2-D1312(IE)-TI6455
Interface          Gigabit Ethernet, TCP/IP, FTP, µSD card
Camera Control     Web server or programming library
Trigger Modes      Software Trigger / External isolated trigger input / PLC Trigger
Features           Greyscale resolution 12 bit / 10 bit / 8 bit
                   Region of Interest (ROI)
                   Test pattern (LFSR and grey level ramp)
                   Shading Correction (Offset and Gain)
                   3x3 Convolver included on camera
                   High blooming resistance
                   Isolated trigger input and isolated strobe output
                   2 look-up tables (12-to-8 bit) on user-defined image region (Region-LUT)
                   Up to 512 regions of interest (MROI)
                   Image information and camera settings inside the image (status line)
                   Crosshairs overlay on the image

Table 3.1: Feature overview (see Chapter 4 for more information)

Figure 3.2: SM2-D1312(IE)-TI6455 CMOS camera with C-mount lens


3.4 Technical Specification

Technical Parameters                        SM2-D1312(IE)-TI6455
Technology                                  CMOS active pixel (APS)
Scanning system                             Progressive scan
Optical format / diagonal                   1” (13.6 mm diagonal) @ maximum resolution
                                            2/3” (11.6 mm diagonal) @ 1024 x 1024 resolution
Resolution                                  1312 x 1082 pixels
Pixel size                                  8 µm x 8 µm
Active optical area                         10.48 mm x 8.64 mm (maximum)
Random noise                                < 0.3 DN @ 8 bit 1)
Fixed pattern noise (FPN)                   3.4 DN @ 8 bit / correction OFF 1)
Fixed pattern noise (FPN)                   < 1 DN @ 8 bit / correction ON 1)2)
Dark current SM2-D1312-TI6455               0.65 fA / pixel @ 27 °C
Dark current SM2-D1312IE-TI6455             0.79 fA / pixel @ 27 °C
Full well capacity                          ~ 90 ke−
Spectral range SM2-D1312-TI6455             350 nm ... 980 nm (see Fig. 3.3)
Spectral range SM2-D1312IE-TI6455           320 nm ... 1000 nm (see Fig. 3.4)
Responsivity MV1-D1312 and DR1-D1312        295 x 10^3 DN/(J/m^2) @ 670 nm / 8 bit
Responsivity MV1-D1312IE and DR1-D1312IE    305 x 10^3 DN/(J/m^2) @ 870 nm / 8 bit
Quantum Efficiency                          > 50 %
Optical fill factor                         > 60 %
Dynamic range                               60 dB in linear mode, 120 dB with LinLog®
Colour format (colour models)               RGB Bayer Raw Data Pattern
Characteristic curve                        Linear, LinLog®
Shutter mode                                Global shutter
Greyscale resolution                        12 bit / 10 bit / 8 bit

Table 3.2: General specification of the SM2-D1312(IE)-TI6455 camera series (Footnotes: 1) Indicated values are typical values. 2) Indicated values are subject to confirmation.)
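The optical format figures in Table 3.2 follow from the resolution and the pixel size. As a quick cross-check, the sketch below computes the sensor diagonal from these two values. The function name is chosen for illustration only and is not part of any camera software.

```python
import math

PIXEL_PITCH_UM = 8.0  # pixel size from Table 3.2 (8 µm x 8 µm)


def sensor_diagonal_mm(width_px, height_px, pitch_um=PIXEL_PITCH_UM):
    """Return the diagonal of a pixel array in millimetres."""
    width_mm = width_px * pitch_um / 1000.0
    height_mm = height_px * pitch_um / 1000.0
    return math.hypot(width_mm, height_mm)


# Full resolution and the 1024 x 1024 region listed in Table 3.2.
print(round(sensor_diagonal_mm(1312, 1082), 1))  # 13.6
print(round(sensor_diagonal_mm(1024, 1024), 1))  # 11.6
```

Both results agree with the 13.6 mm and 11.6 mm diagonals given for the 1” and 2/3” optical formats.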


Parameter                        SM2-D1312(IE)-TI6455-80    SM2-D1312(IE)-TI6455-160
Exposure Time                    10 µs ... 0.83 s           10 µs ... 0.42 s
Exposure time increment          50 ns                      25 ns
Frame rate 5) (Tint = 10 µs)     54 fps                     108 fps
Pixel clock frequency            40 MHz                     80 MHz
Pixel clock cycle                25 ns                      12.5 ns
Camera taps                      1                          2
Read out mode                    sequential or simultaneous

Table 3.3: Model-specific parameters (Footnotes: 5) Maximum frame rate @ full resolution @ 8 bit).
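The timing values in Table 3.3 are related: the pixel clock cycle is the reciprocal of the pixel clock frequency, and for both models the listed exposure time increment corresponds to two pixel clock cycles. The short sketch below reproduces these numbers from the table. The helper name and the dictionary layout are illustrative assumptions.

```python
def clock_cycle_ns(frequency_mhz):
    """Return the clock period in nanoseconds for a frequency in MHz."""
    return 1000.0 / frequency_mhz


# Pixel clock frequencies and exposure time increments from Table 3.3.
MODELS = {
    "SM2-D1312(IE)-TI6455-80": {"clock_mhz": 40, "increment_ns": 50},
    "SM2-D1312(IE)-TI6455-160": {"clock_mhz": 80, "increment_ns": 25},
}

for name, values in MODELS.items():
    cycle = clock_cycle_ns(values["clock_mhz"])
    cycles_per_increment = values["increment_ns"] / cycle
    print(f"{name}: cycle = {cycle} ns, "
          f"increment = {cycles_per_increment:g} clock cycles")
```

That the increment equals two clock cycles is an observation from the table values, not a statement about how the exposure timer is implemented.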

Parameter                               SM2-D1312(IE)-TI6455-80    SM2-D1312(IE)-TI6455-160
Operating temperature / moisture        0°C ... 50°C / 20 ... 80 %
Storage temperature / moisture          -25°C ... 60°C / 20 ... 95 %
Camera power supply                     +12 V DC (± 10 %)
Trigger signal input range              +12 .. +24 V DC
Strobe signal power supply              +12 .. +24 V DC
Strobe signal sink current (average)    max. 8 mA
Max. power consumption @ 12 V           < TBD W                    < TBD W
Lens mount                              C-Mount (CS-Mount optional)
Dimensions                              60 x 60 x TBD mm³
Mass                                    600 g
Conformity                              CE / RoHS / WEEE

Table 3.4: Physical characteristics and operating ranges of the SM2-D1312(IE)-TI6455 camera series


Fig. 3.3 shows the quantum efficiency and the responsivity of the monochrome A1312 CMOS

sensor, displayed as a function of wavelength. For more information on photometric and

radiometric measurements see the Photonfocus application note AN008 available in the

support area of our website www.photonfocus.com.

[Plot: quantum efficiency (0% to 60%) and responsivity [V/J/m²] (0 to 1200) versus wavelength [nm] (200 to 1100)]

Figure 3.3: Spectral response of the A1312 CMOS monochrome image sensor (standard) in the SM2-D1312-TI6455 camera series


Fig. 3.4 shows the quantum efficiency and the responsivity of the monochrome A1312IE CMOS sensor, displayed as a function of wavelength. The enhanced NIR quantum efficiency can be used for applications in the 900 to 1064 nm region.

[Plot: quantum efficiency (0% to 60%) and responsivity [V/J/m^2] (0 to 1200) versus wavelength [nm] (300 to 1100)]

Figure 3.4: Spectral response of the A1312IE monochrome image sensor (NIR enhanced) in the SM2-D1312IE-TI6455 camera series


4 Functionality

This chapter serves as an overview of the camera configuration modes and explains camera

features. The goal is to describe what can be done with the camera. The setup of the

SM2-D1312(IE)-TI6455 series cameras is explained in later chapters.

4.1 Image Acquisition

4.1.1 Readout Modes

The SM2-D1312(IE)-TI6455 cameras provide two different readout modes:

Sequential readout Frame time is the sum of exposure time and readout time. The exposure of the next image can only start once the readout of the current image has finished.

Simultaneous readout (interleave) The frame time is the longer of the exposure time and the readout time. The exposure of the next image can start during the readout of the current image.

Readout Mode SM2-D1312(IE)-TI6455 Series

Sequential readout available

Simultaneous readout available

Table 4.1: Readout mode of SM2-D1312(IE)-TI6455 Series camera

The following figure illustrates the effect on the frame rate when using either the sequential

readout mode or the simultaneous readout mode (interleave exposure).

[Plot: frame rate (fps) versus exposure time for simultaneous and sequential readout mode. Simultaneous readout mode: fps = 1 / readout time for exposure time < readout time, fps = 1 / exposure time for exposure time > readout time. Sequential readout mode: fps = 1 / (readout time + exposure time). The point exposure time = readout time is marked.]

Figure 4.1: Frame rate in sequential readout mode and simultaneous readout mode

Sequential readout mode For the calculation of the frame rate only a single formula applies: the number of frames per second equals the inverse of the sum of exposure time and readout time.


Simultaneous readout mode (exposure time < readout time) The frame rate is given by the readout time. The number of frames per second equals the inverse of the readout time.

Simultaneous readout mode (exposure time > readout time) The frame rate is given by the exposure time. The number of frames per second equals the inverse of the exposure time.

The simultaneous readout mode allows higher frame rates. However, if the exposure time greatly exceeds the readout time, the effect on the frame rate is negligible.
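The frame rate rules for the two readout modes can be summarised in a few lines of code. The following sketch is an illustration only: the function name is hypothetical, and the 20 ms readout time used in the example is an assumed value, not a specification of the camera.

```python
def frame_rate(exposure_s, readout_s, simultaneous):
    """Return the frame rate in frames per second.

    Sequential readout:   frame time = exposure time + readout time
    Simultaneous readout: frame time = max(exposure time, readout time)
    """
    if exposure_s <= 0 or readout_s <= 0:
        raise ValueError("exposure and readout time must be positive")
    if simultaneous:
        frame_time = max(exposure_s, readout_s)
    else:
        frame_time = exposure_s + readout_s
    return 1.0 / frame_time


READOUT_S = 0.020  # assumed readout time of 20 ms, for illustration only

for exposure_s in (0.001, 0.020, 0.200):
    seq = frame_rate(exposure_s, READOUT_S, simultaneous=False)
    sim = frame_rate(exposure_s, READOUT_S, simultaneous=True)
    print(f"exposure {exposure_s * 1000:6.1f} ms: "
          f"sequential {seq:5.1f} fps, simultaneous {sim:5.1f} fps")
```

With a short exposure the simultaneous mode is limited by the readout time alone, while for an exposure ten times longer than the readout the two modes differ by less than 10 %.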

In simultaneous readout mode, image output faces minor limitations. The overall linear sensor response is partially restricted in the lower grey scale region.

When changing readout mode from sequential to simultaneous readout mode

or vice versa, new settings of the BlackLevelOffset and of the image correction

are required.

Sequential readout

By default the camera continuously delivers images as fast as possible ("Free-running mode") in the sequential readout mode. The exposure of the next image can only start once the readout of the current image has finished.

[Timing diagram: alternating exposure and readout phases.]

Figure 4.2: Timing in free-running sequential readout mode

When the acquisition of an image needs to be synchronised to an external event, an external

trigger can be used (refer to Section 4.4). In this mode, the camera is idle until it gets a signal

to capture an image.

[Timing diagram: exposure, readout, idle and next exposure phases, with the external trigger signal.]

Figure 4.3: Timing in triggered sequential readout mode

Simultaneous readout (interleave exposure)

To achieve highest possible frame rates, the camera must be set to "Free-running mode" with

simultaneous readout. The camera continuously delivers images as fast as possible. Exposure

time of the next image can start during the readout time of the current image.

[Timing diagram: exposure n, exposure n+1, readout n-1, readout n, readout n+1, idle phases, frame time.]

Figure 4.4: Timing in free-running simultaneous readout mode (readout time > exposure time)

[Timing diagram: exposure n-1, exposure n, exposure n+1, readout n-1, readout n, idle phases, frame time.]

Figure 4.5: Timing in free-running simultaneous readout mode (readout time < exposure time)

When the acquisition of an image needs to be synchronised to an external event, an external

trigger can be used (refer to Section 4.4). In this mode, the camera is idle until it gets a signal

to capture an image.

Figure 4.6: Timing in triggered simultaneous readout mode

4.1.2 Readout Timing

Sequential readout timing

By default, the camera is in free running mode and delivers images without any external

control signals. The sensor is operated in sequential readout mode, which means that the

sensor is read out after the exposure time. Then the sensor is reset, a new exposure starts and

the readout of the image information begins again. The data is output on the rising edge of

the pixel clock. The signals FRAME_VALID (FVAL) and LINE_VALID (LVAL) mask valid image

information. The signal SHUTTER indicates the active exposure period of the sensor and is shown

for clarity only.

Simultaneous readout timing

To achieve highest possible frame rates, the camera must be set to "Free-running mode" with

simultaneous readout. The camera continuously delivers images as fast as possible. Exposure

time of the next image can start during the readout time of the current image. The data is

output on the rising edge of the pixel clock. The signals FRAME_VALID (FVAL) and LINE_VALID (LVAL)

mask valid image information. The signal SHUTTER indicates the active integration phase of the

sensor and is shown for clarity only.

[Timing diagram. Signals: PCLK, SHUTTER, FVAL, LVAL, DVAL, DATA. Annotations: exposure time, frame time, CPRE, line pause, first line, last line.]

Figure 4.7: Timing diagram of sequential readout mode

[Timing diagram. Signals: PCLK, SHUTTER, FVAL, LVAL, DVAL, DATA. Annotations: exposure time, frame time, CPRE, line pause, first line, last line.]

Figure 4.8: Timing diagram of simultaneous readout mode (readout time > exposure time)

[Timing diagram. Signals: PCLK, SHUTTER, FVAL, LVAL, DVAL, DATA. Annotations: exposure time, frame time, CPRE, line pause, first line, last line.]

Figure 4.9: Timing diagram of simultaneous readout mode (readout time < exposure time)

Frame time          Frame time is the inverse of the frame rate.
Exposure time       Period during which the pixels are integrating the incoming light.
PCLK                Pixel clock on internal camera interface.
SHUTTER             Internal signal, shown only for clarity. Is ’high’ during the exposure time.
FVAL (Frame Valid)  Is ’high’ while the data of one complete frame are transferred.
LVAL (Line Valid)   Is ’high’ while the data of one line are transferred. Example: To transfer an image with 640x480 pixels, there are 480 LVAL within one FVAL active high period. One LVAL lasts 640 pixel clock cycles.
DVAL (Data Valid)   Is ’high’ while data are valid.
DATA                Transferred pixel values. Example: For a 100x100 pixel image, there are 100 values transferred within one LVAL active high period, or 100*100 values within one FVAL period.
Line pause          Delay before the first line and after every following line when reading out the image data.

Table 4.2: Explanation of control and data signals used in the timing diagram

These terms will be used also in the timing diagrams of Section 4.4.

4.1.3 Exposure Control

The exposure time defines the period during which the image sensor integrates the incoming

light. Refer to Section 3.4 for the allowed exposure time range.

4.1.4 Maximum Frame Rate

The maximum frame rate depends on the exposure time and the size of the image (see Section 4.3).

The maximal frame rate with current camera settings can be read out from the

property FrameRateMax (AcquisitionFrameRateMax in GigE cameras).


4.2 Pixel Response

4.2.1 Linear Response

The camera offers a linear response between input light signal and output grey level. This can be modified by the use of LinLog® as described in the following sections. In addition, a linear digital gain may be applied, as follows. Please see Table 3.2 for more model-dependent information.

Black Level Adjustment

The black level is the average image value at no light intensity. It can be adjusted by the

software. Thus, the overall image gets brighter or darker. Use a histogram to control the

settings of the black level.

In CameraLink® cameras the black level is called "BlackLevelOffset" and in GigE cameras "BlackLevel".

4.2.2 LinLog®

Overview

The LinLog® technology from Photonfocus allows a logarithmic compression of high light intensities inside the pixel. In contrast to the classical non-integrating logarithmic pixel, the LinLog® pixel is an integrating pixel with global shutter and the possibility to control the transition between linear and logarithmic mode.

In situations involving high intrascene contrast, a compression of the upper grey level region can be achieved with the LinLog® technology. At low intensities each pixel shows a linear response. At high intensities the response changes to logarithmic compression (see Fig. 4.10). The transition region between linear and logarithmic response can be smoothly adjusted by software and is continuously differentiable and monotonic.

[Plot: grey value versus light intensity (0 % to 100 %). Labels: linear response, saturation, strong compression (Value1), weak compression (Value2), resulting LinLog response.]

Figure 4.10: Resulting LinLog2 response curve


LinLog® is controlled by up to 4 parameters (Time1, Time2, Value1 and Value2). Value1 and Value2 correspond to the LinLog® voltage that is applied to the sensor. The higher the parameters Value1 and Value2 respectively, the stronger the compression for the high light intensities. Time1 and Time2 are normalised to the exposure time. They can be set to a maximum value of 1000, which corresponds to the exposure time.

Examples in the following sections illustrate the LinLog® feature.
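Because Time1 and Time2 are normalised to the exposure time, it can help to convert a setting into an absolute time when reasoning about a configuration. The sketch below assumes a linear scale from 0 to 1000 with 0 as the lower limit; only the upper limit of 1000 is stated in this manual. The function name `linlog_time_to_seconds` is illustrative and not a camera property.

```python
def linlog_time_to_seconds(time_value: int, exposure_s: float) -> float:
    """Convert a normalised LinLog time setting into seconds (hypothetical helper).

    Assumption: the setting scales linearly, with 1000 corresponding to the
    full exposure time and 0 to the start of the exposure.
    """
    if not 0 <= time_value <= 1000:
        raise ValueError("time_value must be in the range 0..1000")
    if exposure_s <= 0:
        raise ValueError("exposure_s must be positive")
    return exposure_s * time_value / 1000


if __name__ == "__main__":
    # Example: Time1 = 840 with a 10 ms exposure time.
    print(linlog_time_to_seconds(840, 0.010))
```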

LinLog1

In the simplest case the pixels are operated with a constant LinLog® voltage, which defines the knee point of the transition. This procedure has the drawback that the linear response curve changes directly to a logarithmic curve, leading to a poor grey resolution in the logarithmic region (see Fig. 4.12).

[Diagram: LinLog voltage V_LinLog over time t from 0 to t_exp. The voltage is constant at Value1 = Value2; Time1 = Time2 = max. = 1000.]

Figure 4.11: Constant LinLog voltage in the LinLog1 mode

[Plot: Typical LinLog1 Response Curve, Varying Parameter Value1. x-axis: illumination intensity; y-axis: output grey level (8 bit) [DN], 0 to 300. Curves for V1 = 15, 16, 17, 18, 19. Settings: Time1=1000, Time2=1000, Value2=Value1.]

Figure 4.12: Response curve for different LinLog settings in LinLog1 mode


LinLog2

To get more grey resolution in the LinLog® mode, the LinLog2 procedure was developed. In LinLog2 mode a switching between two different logarithmic compressions occurs during the exposure time (see Fig. 4.13). The exposure starts with strong compression with a high LinLog® voltage (Value1). At Time1 the LinLog® voltage is switched to a lower voltage resulting in a weaker compression. This procedure gives a LinLog® response curve with more grey resolution. Fig. 4.14 and Fig. 4.15 show how the response curve is controlled by the three parameters Value1, Value2 and the LinLog® time Time1.

Settings in LinLog2 mode enable a fine tuning of the slope in the logarithmic region.

[Diagram: LinLog voltage V_LinLog over time t from 0 to t_exp. Labels: Value1, Value2, Time1, Time2 = max. = 1000.]

Figure 4.13: Voltage switching in the LinLog2 mode

[Plot: Typical LinLog2 Response Curve, Varying Parameter Time1. x-axis: illumination intensity; y-axis: output grey level (8 bit) [DN], 0 to 300. Curves for T1 = 840, 920, 960, 980, 999. Settings: Time2=1000, Value1=19, Value2=14.]

Figure 4.14: Response curve for different LinLog settings in LinLog2 mode

[Plot: Typical LinLog2 Response Curve, Varying Parameter Time1. x-axis: illumination intensity; y-axis: output grey level (8 bit) [DN], 0 to 200. Curves for T1 = 880, 900, 920, 940, 960, 980, 1000. Settings: Time2=1000, Value1=19, Value2=18.]

Figure 4.15: Response curve for different LinLog settings in LinLog2 mode

LinLog3

To enable more flexibility the LinLog3 mode with 4 parameters was introduced. Fig. 4.16 shows

the timing diagram for the LinLog3 mode and the control parameters.

[Diagram: LinLog voltage V_LinLog over time t up to t_exp. Labels: Value1, Value2, Value3 = constant = 0, Time1, Time2.]

Figure 4.16: Voltage switching in the LinLog3 mode

[Plot, titled "Typical LinLog2 Response Curve, Varying Parameter Time2". x-axis: illumination intensity; y-axis: output grey level (8 bit) [DN], 0 to 300. Curves for T2 = 950, 960, 970, 980, 990. Settings: Time1=850, Value1=19, Value2=18.]

Figure 4.17: Response curve for different LinLog settings in LinLog3 mode


4.3 Reduction of Image Size

With Photonfocus cameras there are several possibilities to focus on the interesting parts of an

image, thus reducing the data rate and increasing the frame rate. The most commonly used

feature is Region of Interest (ROI).

4.3.1 Region of Interest (ROI)

Some applications do not need full image resolution (e.g. 1312 x 1082 pixels). By reducing the image size to a certain region of interest (ROI), the frame rate can be increased. A region of interest can be almost any rectangular window and is specified by its position within the full frame and its width (W) and height (H). Fig. 4.18 and Fig. 4.19 show possible configurations for the region of interest, and Table 4.3 presents numerical examples of how the frame rate can be increased by reducing the ROI.

Both reductions in x- and y-direction result in a higher frame rate.

The minimum width of the region of interest depends on the model of the MV1D1312(I) camera series. For more details please consult Table 4.4 and Table 4.5.

The minimum width must be positioned symmetrically about the vertical center line of the sensor, as shown in Fig. 4.18 and Fig. 4.19. A list of possible settings of the ROI for each camera model is given in Table 4.5.

[Figure: ROI configurations a) and b), dimensioned relative to the vertical center line of the sensor with the labels "≥ 208 pixel" and "≥ 208 pixel + modulo 32 pixel".]

Figure 4.18: Possible configuration of the region of interest with SM2-D1312(IE)-TI6455-80 CMOS camera

✎

It is recommended to re-adjust the settings of the shading correction each time

a new region of interest is selected.

[Figure: ROI configurations a) and b), dimensioned relative to the vertical center line of the sensor with the labels "≥ 272 pixel" and "≥ 272 pixel + modulo 32 pixel".]

Figure 4.19: Possible configuration of the region of interest with SM2-D1312(IE)-TI6455-160 CMOS camera

Any region of interest may NOT be placed outside of the center of the sensor. Examples shown

in Fig. 4.20 illustrate configurations of the ROI that are NOT allowed.

[Figure: two ROI placements, a) and b).]

Figure 4.20: ROI configuration examples that are NOT allowed


ROI Dimension [Standard]        SM2-D1312(IE)-TI6455-80    SM2-D1312(IE)-TI6455-160
1312 x 1082 (full resolution)   54 fps                     108 fps
1248 x 1082                     56 fps                     113 fps
1280 x 1024 (SXGA)              58 fps                     117 fps
1280 x 768 (WXGA)               78 fps                     156 fps
800 x 600 (SVGA)                157 fps                    310 fps
640 x 480 (VGA)                 241 fps                    472 fps
288 x 1                         not allowed ROI setting    not allowed ROI setting
480 x 1                         10593 fps                  not allowed ROI setting
544 x 1                         10498 fps                  11022 fps
544 x 1082                      125 fps                    249 fps
480 x 1082                      141 fps                    not allowed ROI setting
1312 x 544                      107 fps                    214 fps
1248 x 544                      112 fps                    224 fps
1312 x 256                      227 fps                    445 fps
1248 x 256                      238 fps                    466 fps
544 x 544                       248 fps                    485 fps
480 x 480                       314 fps                    not allowed ROI setting
1024 x 1024                     72 fps                     145 fps
1056 x 1056                     68 fps                     136 fps
1312 x 1                        9541 fps                   10460 fps
1248 x 1                        9615 fps                   10504 fps

Table 4.3: Frame rates of different ROI settings (exposure time 10 µs; correction on, and sequential readout

mode).


4.3.2 ROI configuration

In the SM2-D1312(IE)-TI6455 camera series the following two restrictions have to be respected

for the ROI configuration:

• The minimum width (w) of the ROI is camera model dependent: 416 pixels in the SM2-D1312(IE)-TI6455-80 camera and 544 pixels in the SM2-D1312(IE)-TI6455-160 camera.

• The region of interest must overlap a minimum number of pixels centered to the left and to the right of the vertical middle line of the sensor (ovl).

For any camera model of the SM2-D1312(IE)-TI6455 camera series the allowed ranges for the

ROI settings can be deduced by the following formula:

$$x_{\min} = \max(0,\; 656 + \text{ovl} - w)$$

$$x_{\max} = \min(656 - \text{ovl},\; 1312 - w)$$

where "ovl" is the overlap over the middle line and "w" is the width of the region of interest.

Any ROI settings in x-direction exceeding the minimum ROI width must be modulo 32.

Parameter          SM2-D1312(IE)-TI6455-80    SM2-D1312(IE)-TI6455-160
ROI width (w)      416 ... 1312               544 ... 1312
overlap (ovl)      208                        272
width condition    modulo 32                  modulo 32

Table 4.4: Summary of the ROI configuration restrictions for the SM2-D1312(IE)-TI6455 camera series indicating the minimum ROI width (w) and the required number of pixel overlap (ovl) over the sensor middle

line

The settings of the region of interest in x-direction are restricted to modulo 32

(see Table 4.5).

There are no restrictions for the settings of the region of interest in y-direction.
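The ROI restrictions of this section can be checked programmatically before a configuration is written to the camera. The following is a minimal sketch of the two formulas for the allowed x range, using the sensor width of 1312 pixels and the middle line at 656. The function names and the validity check are illustrative; in particular, requiring both the x position and the width to be multiples of 32 is one reading of the modulo 32 rule and is an assumption.

```python
SENSOR_WIDTH = 1312   # full sensor width in pixels
MIDDLE_LINE = 656     # vertical middle line of the sensor


def roi_x_range(width: int, ovl: int) -> tuple[int, int]:
    """Return (x_min, x_max) for a ROI of the given width (hypothetical helper).

    x_min = max(0, 656 + ovl - w)
    x_max = min(656 - ovl, 1312 - w)
    """
    x_min = max(0, MIDDLE_LINE + ovl - width)
    x_max = min(MIDDLE_LINE - ovl, SENSOR_WIDTH - width)
    return x_min, x_max


def is_valid_roi_x(x: int, width: int, ovl: int) -> bool:
    """Check an x position and width against the restrictions (assumed reading)."""
    if width < 2 * ovl or width > SENSOR_WIDTH:
        return False  # below the minimum width or wider than the sensor
    if width % 32 != 0 or x % 32 != 0:
        return False  # assumption: x and width are both multiples of 32
    x_min, x_max = roi_x_range(width, ovl)
    return x_min <= x <= x_max


if __name__ == "__main__":
    # overlap (ovl) is 208 for the -80 model and 272 for the -160 model (Table 4.4)
    for width in (416, 544, 896, 1312):
        print(width, roi_x_range(width, 208))
```

For the -80 model (ovl = 208) a width of 416 yields the single allowed position 448, and a width of 896 yields the range 0 to 416, which matches the corresponding rows of Table 4.5.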

4.3.3 Calculation of the maximum frame rate

The frame rate mainly depends on the exposure time and readout time. The frame rate is the

inverse of the frame time.

$$\text{fps} = \frac{1}{t_{\text{frame}}}$$

Calculation of the frame time (sequential mode)


Width    ROI-X (SM2-D1312(IE)-TI6455-80)    ROI-X (SM2-D1312(IE)-TI6455-160)
288      not available                      not available
320      not available                      not available
352      not available                      not available
384      not available                      not available
416      448                                not available
448      416 ... 448                        not available
480      384 ... 448                        not available
512      352 ... 448                        not available
544      320 ... 448                        384
576      288 ... 448                        352 ... 384
608      256 ... 448                        320 ... 352
640      224 ... 448                        288 ... 384
672      192 ... 448                        256 ... 384
704      160 ... 448                        224 ... 384
736      128 ... 448                        192 ... 384
768      96 ... 448                         160 ... 384
800      64 ... 448                         128 ... 384
832      32 ... 448                         96 ... 384
864      0 ... 448                          64 ... 384
896      0 ... 416                          32 ... 384
...      ...                                ...
1248     0 ... 64                           0 ... 64
1312     0                                  0

Table 4.5: Some possible ROI-X settings (SM2-D1312(IE)-TI6455 series)

$$t_{\text{frame}} \geq t_{\text{exp}} + t_{\text{ro}}$$

Typical values of the readout time $t_{\text{ro}}$ are given in Table 4.6.

Calculation of the frame time (simultaneous mode)

The calculation of the frame time in simultaneous read out mode requires more detailed data

input and is skipped here for the purpose of clarity.

A frame rate calculator for calculating the maximum frame rate is available in

the support area of the Photonfocus website.

An overview of the resulting frame rates at different exposure time settings is given in Table 4.7.


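ROI Dimension    SM2-D1312(IE)-TI6455-80         SM2-D1312(IE)-TI6455-160
1312 x 1082      $t_{\text{ro}}$ = 18.23 ms      $t_{\text{ro}}$ = 9.12 ms
1248 x 1082      $t_{\text{ro}}$ = 17.37 ms      $t_{\text{ro}}$ = 8.68 ms
1024 x 512       $t_{\text{ro}}$ = 6.78 ms       $t_{\text{ro}}$ = 3.39 ms
1056 x 512       $t_{\text{ro}}$ = 6.99 ms       $t_{\text{ro}}$ = 3.49 ms
1024 x 256       $t_{\text{ro}}$ = 3.39 ms       $t_{\text{ro}}$ = 1.70 ms
1056 x 256       $t_{\text{ro}}$ = 3.49 ms       $t_{\text{ro}}$ = 1.75 ms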

Table 4.6: Read out time at different ROI settings for the SM2-D1312(IE)-TI6455 CMOS camera series in

sequential read out mode.

Exposure time    SM2-D1312(IE)-TI6455-80    SM2-D1312(IE)-TI6455-160
10 µs            54 / 54 fps                108 / 108 fps
100 µs           54 / 54 fps                107 / 108 fps
500 µs           53 / 54 fps                103 / 108 fps
1 ms             51 / 54 fps                98 / 108 fps
2 ms             49 / 54 fps                89 / 108 fps
5 ms             42 / 54 fps                70 / 108 fps
10 ms            35 / 54 fps                52 / 99 fps
12 ms            33 / 54 fps                47 / 82 fps

Table 4.7: Frame rates of different exposure times, [sequential readout mode / simultaneous readout mode], resolution 1312 x 1082 pixel, FPN correction on.
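As a rough cross-check of the sequential-mode relation, a readout time from Table 4.6 can be combined with one exposure time from Table 4.7. This is only a worked estimate: because the relation is an inequality, it gives an upper bound on the frame rate rather than the exact value.

```latex
% SM2-D1312(IE)-TI6455-80, 1312 x 1082 pixels, exposure time 10 ms
\[
t_{\text{frame}} \geq t_{\text{exp}} + t_{\text{ro}}
  = 10\,\text{ms} + 18.23\,\text{ms} = 28.23\,\text{ms}
\quad\Rightarrow\quad
\text{fps} \leq \frac{1}{28.23\,\text{ms}} \approx 35.4
\]

% SM2-D1312(IE)-TI6455-160, 1312 x 1082 pixels, exposure time 10 ms
\[
t_{\text{frame}} \geq 10\,\text{ms} + 9.12\,\text{ms} = 19.12\,\text{ms}
\quad\Rightarrow\quad
\text{fps} \leq \frac{1}{19.12\,\text{ms}} \approx 52.3
\]
```

Both bounds are consistent with the 35 fps and 52 fps listed for sequential readout at 10 ms in Table 4.7.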

4.3.4 Multiple Regions of Interest

The SM2-D1312(IE)-TI6455 camera series can handle up to 512 different regions of interest. This

feature can be used to reduce the image data and increase the frame rate. An application

example for using multiple regions of interest (MROI) is a laser triangulation system with

several laser lines. The multiple ROIs are joined together and form a single image, which is

transferred to the frame grabber.

An individual MROI region is defined by its starting value in y-direction and its height. The

starting value in horizontal direction and the width is the same for all MROI regions and is

defined by the ROI settings. The maximum frame rate in MROI mode depends on the number

of rows and columns being read out. Overlapping ROIs are allowed. See Section 4.3.3 for

information on the calculation of the maximum frame rate.

Fig. 4.21 compares ROI and MROI: the setups (visualized on the image sensor area) are displayed in the upper half of the drawing. The lower half shows the dimensions of the resulting image. On the left-hand side an example of ROI is shown and on the right-hand side an example of MROI. It can be readily seen that the resulting image with MROI is smaller than the resulting image with ROI only, and the former will result in an increase in frame rate.

Fig. 4.22 shows another MROI drawing illustrating the effect of MROI on the image content.

Fig. 4.23 shows an example from hyperspectral imaging where the presence of spectral lines at known regions needs to be inspected. By using MROI only a 656x54 region needs to be read out and a frame rate of 4300 fps can be achieved. Without using MROI the resulting frame rate would be 216 fps for a 656x1082 ROI.
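The size of the joined MROI image follows directly from the region definitions: the width comes from the ROI settings and the height is the sum of the region heights. The sketch below illustrates this with the region heights shown in the dimension labels of Fig. 4.23 (20, 26, 2, 2, 2, 1 and 1 pixels). The start rows are made-up values for illustration, the function name is hypothetical, and counting overlapping rows once per region is an assumption.

```python
SENSOR_HEIGHT = 1082  # full sensor height in pixels


def mroi_image_size(width: int, regions: list[tuple[int, int]]) -> tuple[int, int]:
    """Return (width, height) of the joined MROI image (hypothetical helper).

    regions is a list of (y_start, height) pairs. All regions share the
    x position and width of the ROI settings. Assumption: rows of
    overlapping regions are counted once per region.
    """
    total_rows = 0
    for y_start, height in regions:
        if height < 1 or y_start < 0 or y_start + height > SENSOR_HEIGHT:
            raise ValueError(f"region ({y_start}, {height}) is outside the sensor")
        total_rows += height
    return width, total_rows


if __name__ == "__main__":
    # Heights as labelled in Fig. 4.23; the y_start values are illustrative only.
    regions = [(100, 20), (200, 26), (300, 2), (400, 2), (500, 2), (600, 1), (700, 1)]
    print(mroi_image_size(656, regions))
```

The seven heights add up to 54 rows, in line with the 656x54 region quoted above.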


[Figure: two sensor areas from (0, 0) to (1311, 1081), one with a single ROI and one with the regions MROI 0, MROI 1 and MROI 2, each shown together with the resulting image.]

Figure 4.21: Multiple Regions of Interest

Figure 4.22: Multiple Regions of Interest with 5 ROIs

[Figure: sensor area from (0, 0) to (1311, 1081) with MROI regions. Dimension labels: 656 pixel, 20 pixel, 26 pixel, 2 pixel (three times), 1 pixel (twice). Further labels: Chemical Agent A, B, C.]

Figure 4.23: Multiple Regions of Interest in hyperspectral imaging


4.3.5 Decimation

Decimation reduces the number of pixels in y-direction. Decimation can also be used together with ROI or MROI. Decimation in y-direction transfers only every n-th row and directly results in a reduced read-out time and a higher frame rate.

Fig. 4.24 shows decimation on the full image. The rows that will be read out are marked by red lines. Row 0 is read out and then every n-th row.

[Figure: full sensor area from (0, 0) to (1311, 1081) with the rows that are read out marked.]

Figure 4.24: Decimation in full image

Fig. 4.25 shows decimation on a ROI. The row specified by the Window.Y setting is read out first and then every n-th row until the end of the ROI.

[Figure: sensor area from (0, 0) to (1311, 1081) with a ROI in which the rows that are read out are marked.]

Figure 4.25: Decimation and ROI

Fig. 4.26 shows decimation and MROI. For every MROI region m, the first row read out is the row specified by the MROI<m>.Y setting and then every n-th row until the end of MROI region m.
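The row selection described for the full image, a ROI and MROI can be written down compactly. The following is a minimal sketch that lists the sensor rows read out for a decimation factor n; the function names are illustrative and the (y_start, height) tuples merely stand in for the Window.Y and MROI<m>.Y settings and the corresponding heights.

```python
def decimated_rows(y_start: int, height: int, n: int) -> list[int]:
    """Rows read out from one window: the first row, then every n-th row."""
    if n < 1 or height < 1 or y_start < 0:
        raise ValueError("n and height must be >= 1 and y_start >= 0")
    return list(range(y_start, y_start + height, n))


def decimated_mroi_rows(regions: list[tuple[int, int]], n: int) -> list[int]:
    """Rows read out with MROI: decimation restarts at the top of each region."""
    rows: list[int] = []
    for y_start, height in regions:
        rows.extend(decimated_rows(y_start, height, n))
    return rows


if __name__ == "__main__":
    print(len(decimated_rows(0, 1082, 3)))           # full image, decimation 3
    print(decimated_rows(100, 7, 2))                 # ROI starting at row 100
    print(decimated_mroi_rows([(10, 4), (50, 3)], 2))
```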

[Figure: sensor area from (0, 0) to (1311, 1081) with a ROI and the regions MROI 0, MROI 1 and MROI 2.]

Figure 4.26: Decimation and MROI

The image in Fig. 4.27 on the right-hand side shows the result of decimation 3 of the image on

the left-hand side.

Figure 4.27: Image example of decimation 3

An example of a high-speed measurement of the elongation of an injection needle is given in Fig. 4.28. In this application the height information is less important than the width information. Applying decimation 2 on the original image on the left-hand side doubles the resulting frame rate to about 7800 fps.


Figure 4.28: Example of decimation 2 on image of injection needle


4.4 Trigger and Strobe

4.4.1 Introduction

The start of the exposure of the camera’s image sensor is controlled by the trigger. The trigger

can either be generated internally by the camera (free running trigger mode) or by an external

device (external trigger mode).

This section refers to the external trigger mode if not otherwise specified.

In external trigger mode, the trigger can be applied through the CameraLink® interface

(interface trigger) or directly by the power supply connector of the camera (I/O Trigger) (see

Section 4.4.2). The trigger signal can be configured to be active high or active low. When the

frequency of the incoming triggers is higher than the maximal frame rate of the current

camera settings, then some trigger pulses will be missed. A missed trigger counter counts these

events. This counter can be read out by the user.
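The behaviour of the missed trigger counter can be approximated with a simple model. The sketch below assumes that a trigger is accepted only if at least one minimum frame time (the inverse of the maximal frame rate) has passed since the last accepted trigger, and that every other trigger is counted as missed. This is an illustrative model, not the camera's actual implementation, and the function name is hypothetical.

```python
def count_missed_triggers(trigger_times: list[float], min_frame_time: float) -> int:
    """Count triggers that arrive while the camera is still busy (model only).

    trigger_times and min_frame_time must use the same time unit.
    """
    missed = 0
    next_ready = float("-inf")  # earliest time at which a trigger is accepted
    for t in sorted(trigger_times):
        if t < next_ready:
            missed += 1          # camera still busy with the previous frame
        else:
            next_ready = t + min_frame_time
    return missed


if __name__ == "__main__":
    # Triggers every 5 ms while the camera needs 10 ms per frame.
    print(count_missed_triggers([0, 5, 10, 15, 20, 25], 10))
```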

The exposure time in external trigger mode can be defined by the setting of the exposure time

register (camera controlled exposure mode) or by the width of the incoming trigger pulse

(trigger controlled exposure mode) (see Section 4.4.3).

An external trigger pulse starts the exposure of one image. In Burst Trigger Mode however, a

trigger pulse starts the exposure of a user defined number of images (see Section 4.4.5).

The start of the exposure is shortly after the active edge of the incoming trigger. An additional trigger delay can be applied that delays the start of the exposure by a user defined time (see Section 4.4.4). This is often used to start the exposure after the trigger to a flash lighting source.


4.4.2 Trigger Source

The trigger signal can be configured to be active high or active low. One of the following

trigger sources can be used:

Free running The trigger is generated internally by the camera. Exposure starts immediately

after the camera is ready and the maximal possible frame rate is attained, if Constant

Frame Rate mode is disabled. In Constant Frame Rate mode, exposure starts after a

user-specified time (Frame Time) has elapsed from the previous exposure start and

therefore the frame rate is set to a user defined value.

Opt. In 0 In the "Opt. In 0" trigger mode, the trigger signal is applied to the camera by the

power supply connector (via an optocoupler). The input OPTO_IN0 is used.

RS422 In 0 In the "RS422 In 0" trigger mode, the trigger signal is applied directly to the camera by the 14-pole trigger connector. The differential input DIG_IN0 is used (PDIG_IN0 and NDIG_IN0 pins).

RS422 Encoder In the "RS422 Encoder" trigger mode, the trigger signal is applied directly to the camera by the 14-pole trigger connector. TBD.

[Block diagram of the camera trigger inputs. Labels: 12-pole connector, optocoupler, OPTO_IN[0..2], OPTO_IN0, OPTO_IN[1..2]; 14-pole connector, RX RS422, PDIG_IN[0..2], NDIG_IN[0..2], DIG_IN0, DIG_IN[1..2]; shaft encoder, trigger source, DSP, FPGA, sensor.]

Figure 4.29: Trigger inputs of the SM2-D1312(IE)-TI6455 camera series


4.4.3 Exposure Time Control

Depending on the trigger mode, the exposure time can be determined either by the camera or

by the trigger signal itself:

Camera-controlled Exposure time In this trigger mode the exposure time is defined by the

camera. For an active high trigger signal, the camera starts the exposure with a positive

trigger edge and stops it when the preprogrammed exposure time has elapsed. The

exposure time is defined by the software.

Trigger-controlled Exposure time In this trigger mode the exposure time is defined by the

pulse width of the trigger pulse. For an active high trigger signal, the camera starts the

exposure with the positive edge of the trigger signal and stops it with the negative edge.

Trigger-controlled exposure time is not available in simultaneous readout mode.

External Trigger with Camera controlled Exposure Time

In the external trigger mode with camera controlled exposure time the rising edge of the trigger pulse starts the camera state machine, which controls the sensor and optionally an external strobe output. Fig. 4.30 shows the detailed timing diagram for the external trigger mode with camera controlled exposure time.

[Timing diagram. Signals: external trigger pulse input, trigger after isolator, trigger pulse internal camera control, delayed trigger for shutter control, internal shutter control, delayed trigger for strobe control, internal strobe control, external strobe pulse output. Timing parameters: $t_{\text{d-iso-input}}$, $t_{\text{jitter}}$, $t_{\text{trigger-delay}}$, $t_{\text{trigger-offset}}$, $t_{\text{exposure}}$, $t_{\text{strobe-delay}}$, $t_{\text{strobe-offset}}$, $t_{\text{strobe-duration}}$, $t_{\text{d-iso-output}}$.]

Figure 4.30: Timing diagram for the camera controlled exposure time

The rising edge of the trigger signal is detected in the camera control electronics, which are implemented in an FPGA. Before the trigger signal reaches the FPGA it is isolated from the camera environment to allow robust integration of the camera into the vision system. In the signal isolator the trigger signal is delayed by the time $t_{\text{d-iso-input}}$. This signal is clocked into the FPGA, which leads to a jitter of $t_{\text{jitter}}$. The pulse can be delayed by the time $t_{\text{trigger-delay}}$, which can be configured by a user defined value via the camera software. The trigger offset delay $t_{\text{trigger-offset}}$ then results from the synchronous design of the FPGA state machines. The exposure time $t_{\text{exposure}}$ is controlled with an internal exposure time controller.

The trigger pulse from the internal camera control also starts the strobe control state machines. The strobe can be delayed by $t_{\text{strobe-delay}}$ with an internal counter, which can be controlled by the customer via software settings. The strobe offset delay $t_{\text{strobe-offset}}$ then results from the synchronous design of the FPGA state machines. A second counter determines the strobe duration $t_{\text{strobe-duration}}$. For a robust system design the strobe output is also isolated from the camera electronics, which leads to an additional delay of $t_{\text{d-iso-output}}$. Table 4.8 and Table 4.9 give an overview of the minimum and maximum values of the parameters.
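The delays described above can be added up to estimate the latency between the external trigger edge and the start of the exposure. The sketch below assumes that the isolator delay, the jitter, the configured trigger delay and the trigger offset simply add in sequence, which is one reading of the description and of the timing diagram. The default values are the minimum and maximum figures for the SM2-D1312(IE)-TI6455-80 in non burst mode from Table 4.8; the function name is illustrative.

```python
def exposure_start_latency_ns(
    trigger_delay_ns: int,
    d_iso_input_ns: tuple[int, int] = (45, 60),  # t_d-iso-input, min and max
    jitter_ns: tuple[int, int] = (0, 50),        # t_jitter, min and max
    trigger_offset_ns: int = 200,                # t_trigger-offset, non burst mode
) -> tuple[int, int]:
    """Return (min, max) latency from trigger edge to exposure start in ns.

    Assumption: latency = t_d-iso-input + t_jitter + t_trigger-delay
                          + t_trigger-offset.
    """
    if trigger_delay_ns < 0:
        raise ValueError("trigger_delay_ns must not be negative")
    fixed = trigger_delay_ns + trigger_offset_ns
    lowest = d_iso_input_ns[0] + jitter_ns[0] + fixed
    highest = d_iso_input_ns[1] + jitter_ns[1] + fixed
    return lowest, highest


if __name__ == "__main__":
    print(exposure_start_latency_ns(0))        # no additional trigger delay
    print(exposure_start_latency_ns(1_000))    # 1 µs configured trigger delay
```

Under these assumptions the uncertainty of the exposure start is the difference between the two returned values, independent of the configured trigger delay.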

External Trigger with Pulsewidth controlled Exposure Time

In the external trigger mode with pulse width controlled exposure time the rising edge of the trigger pulse starts the camera state machine, which controls the sensor. The falling edge of the trigger pulse stops the image acquisition. Additionally, the optional external strobe output is controlled by the rising edge of the trigger pulse. Fig. 4.31 shows the detailed timing diagram for the external trigger mode with pulse width controlled exposure time.

[Timing diagram. Signals: external trigger pulse input, trigger after isolator, trigger pulse rising edge camera control, delayed trigger rising edge for shutter set, trigger pulse falling edge camera control, delayed trigger falling edge shutter reset, internal shutter control, delayed trigger for strobe control, internal strobe control, external strobe pulse output. Timing parameters: $t_{\text{d-iso-input}}$, $t_{\text{jitter}}$, $t_{\text{trigger-delay}}$, $t_{\text{trigger-offset}}$, $t_{\text{exposure}}$, $t_{\text{strobe-delay}}$, $t_{\text{strobe-offset}}$, $t_{\text{strobe-duration}}$, $t_{\text{d-iso-output}}$.]

Figure 4.31: Timing diagram for the Pulsewidth controlled exposure time

The timing from the rising edge of the trigger pulse up to the start of the exposure and strobe is the same as in the camera controlled exposure time mode (see Section 4.4.3). In this mode, however, the end of the exposure is controlled by the falling edge of the trigger pulse:

The falling edge of the trigger pulse is delayed by the time $t_{\text{d-iso-input}}$, which results from the signal isolator. This signal is clocked into the FPGA, which leads to a jitter of $t_{\text{jitter}}$. The pulse is then delayed by $t_{\text{trigger-delay}}$, the user defined value that can be configured via the camera software. After the trigger offset time $t_{\text{trigger-offset}}$ the exposure is stopped.

4.4.4 Trigger Delay

The trigger delay is a programmable delay in milliseconds between the incoming trigger edge and the start of the exposure. This feature may be required to synchronize an external strobe with the exposure of the camera.

4.4.5 Burst Trigger

The camera includes a burst trigger engine. When enabled, it starts a predefined number of

acquisitions after one single trigger pulse. The time between two acquisitions and the number

of acquisitions can be configured by a user defined value via the camera software. The burst

trigger feature works only in the mode "Camera controlled Exposure Time".

The burst trigger signal can be configured to be active high or active low. When the period of the incoming burst triggers is shorter than the duration of the programmed burst sequence, then some trigger pulses will be missed. A missed burst trigger counter counts these events. This counter can be read out by the user.

The timing diagram of the burst trigger mode is shown in Fig. 4.32. The timing from the "external trigger pulse input" up to the "trigger pulse internal camera control" is equal to the timing shown in Fig. 4.31. After a user configurable burst trigger delay time $t_{\text{burst-trigger-delay}}$, this trigger pulse starts the internal burst engine, which generates n internal triggers for the shutter and the strobe control. A user configurable value defines the time $t_{\text{burst-period-time}}$ between two acquisitions.
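The schedule of the internal triggers generated by the burst engine can be sketched as follows. The model assumes that the first internal trigger is issued after the burst trigger delay and that each further trigger follows one burst period later; the isolator delay, jitter and offset times are ignored here. Times are given in microseconds as integers, and the function name is illustrative.

```python
def burst_trigger_times_us(
    n: int,
    burst_trigger_delay_us: int,
    burst_period_us: int,
    trigger_time_us: int = 0,
) -> list[int]:
    """Return the times of the n internal triggers of one burst (model only).

    Assumption: trigger k (k = 0..n-1) occurs at
    trigger_time + burst_trigger_delay + k * burst_period.
    """
    if n < 1:
        raise ValueError("n must be at least 1")
    if burst_trigger_delay_us < 0 or burst_period_us <= 0:
        raise ValueError("delay must be >= 0 and period must be > 0")
    start = trigger_time_us + burst_trigger_delay_us
    return [start + k * burst_period_us for k in range(n)]


if __name__ == "__main__":
    # Three acquisitions, 100 µs burst trigger delay, 20 ms burst period.
    print(burst_trigger_times_us(3, 100, 20_000))
```

In this model the last internal trigger of a burst occurs (n - 1) burst periods after the first one, which gives a lower bound for the spacing of external burst triggers if none are to be missed.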


[Timing diagram. Signals: external trigger pulse input, trigger after isolator, trigger pulse internal camera control, delayed trigger for burst trigger engine, delayed trigger for shutter control, internal shutter control, delayed trigger for strobe control, internal strobe control, external strobe pulse output. Timing parameters: $t_{\text{d-iso-input}}$, $t_{\text{jitter}}$, $t_{\text{burst-trigger-delay}}$, $t_{\text{burst-period-time}}$, $t_{\text{trigger-delay}}$, $t_{\text{trigger-offset}}$, $t_{\text{exposure}}$, $t_{\text{strobe-delay}}$, $t_{\text{strobe-offset}}$, $t_{\text{strobe-duration}}$, $t_{\text{d-iso-output}}$.]

Figure 4.32: Timing diagram for the burst trigger mode


| Timing Parameter | Minimum (SM2-D1312(IE)-TI6455-80) | Maximum (SM2-D1312(IE)-TI6455-80) |
|---|---|---|
| t_d-iso-input | 45 ns | 60 ns |
| t_jitter | 0 | 50 ns |
| t_trigger-delay | 0 | 0.84 s |
| t_burst-trigger-delay | 0 | 0.84 s |
| t_burst-period-time | depends on camera settings | 0.84 s |
| t_trigger-offset (non burst mode) | 200 ns | 200 ns |
| t_trigger-offset (burst mode) | 250 ns | 250 ns |
| t_exposure | 10 µs | 0.84 s |
| t_strobe-delay | 600 ns | 0.84 s |
| t_strobe-offset (non burst mode) | 200 ns | 200 ns |
| t_strobe-offset (burst mode) | 250 ns | 250 ns |
| t_strobe-duration | 200 ns | 0.84 s |
| t_d-iso-output | 45 ns | 60 ns |
| t_trigger-pulsewidth | 200 ns | n/a |
| Number of bursts n | 1 | 30000 |

Table 4.8: Summary of timing parameters relevant in the external trigger mode using camera (SM2-D1312(IE)-TI6455-80)


| Timing Parameter | Minimum (SM2-D1312(IE)-TI6455-160) | Maximum (SM2-D1312(IE)-TI6455-160) |
|---|---|---|
| t_d-iso-input | 45 ns | 60 ns |
| t_jitter | 0 | 25 ns |
| t_trigger-delay | 0 | 0.42 s |
| t_burst-trigger-delay | 0 | 0.42 s |
| t_burst-period-time | depends on camera settings | 0.42 s |
| t_trigger-offset (non burst mode) | 100 ns | 100 ns |
| t_trigger-offset (burst mode) | 125 ns | 125 ns |
| t_exposure | 10 µs | 0.42 s |
| t_strobe-delay | 0 | 0.42 s |
| t_strobe-offset (non burst mode) | 100 ns | 100 ns |
| t_strobe-offset (burst mode) | 125 ns | 125 ns |
| t_strobe-duration | 200 ns | 0.42 s |
| t_d-iso-output | 45 ns | 60 ns |
| t_trigger-pulsewidth | 200 ns | n/a |
| Number of bursts n | 1 | 30000 |

Table 4.9: Summary of timing parameters relevant in the external trigger mode using camera (SM2-D1312(IE)-TI6455-160)
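The programmable limits listed in Table 4.8 and Table 4.9 can be checked in host software before a configuration is sent to the camera. The following is a minimal sketch: the dictionary keys, the model labels "80" and "160" and the function name are hypothetical, and only the parameters with fixed numeric limits are included.

```python
# Limits (minimum, maximum) taken from Table 4.8 (-80) and Table 4.9 (-160).
# Times are in seconds. Key names are illustrative only.
LIMITS = {
    "80": {
        "trigger_delay_s": (0.0, 0.84),
        "burst_trigger_delay_s": (0.0, 0.84),
        "exposure_s": (10e-6, 0.84),
        "strobe_delay_s": (600e-9, 0.84),
        "strobe_duration_s": (200e-9, 0.84),
        "number_of_bursts": (1, 30000),
    },
    "160": {
        "trigger_delay_s": (0.0, 0.42),
        "burst_trigger_delay_s": (0.0, 0.42),
        "exposure_s": (10e-6, 0.42),
        "strobe_delay_s": (0.0, 0.42),
        "strobe_duration_s": (200e-9, 0.42),
        "number_of_bursts": (1, 30000),
    },
}


def out_of_range(model, settings):
    """Return the names of all settings outside the limits of the model."""
    limits = LIMITS[model]
    return [name for name, value in settings.items()
            if not limits[name][0] <= value <= limits[name][1]]


settings = {"exposure_s": 0.5, "trigger_delay_s": 0.1}
print(out_of_range("80", settings))   # []
print(out_of_range("160", settings))  # ['exposure_s']
```

An exposure time of 0.5 s is within the range of the -80 model but exceeds the 0.42 s maximum of the -160 model.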


4.4.6 Software Trigger

The software trigger emulates an external trigger pulse. It is issued by the camera software through the serial data interface and works with burst mode both enabled and disabled. As soon as it is issued via the camera software, it starts the image acquisition(s), depending on the usage of the burst mode and the burst configuration. The software trigger does not work in FreeRunning mode.

4.4.7 Strobe Output

The strobe unit is implemented in the form of two interfaces:

• RS422 compatible interface

• Opto-isolated interface.

Each interface has 3 output channels OUT[0..2]. Channel OUT0 is dedicated to very fast strobes and is an FPGA output signal. Channels OUT1 and OUT2 are used for the slow strobe outputs and are connected to DSP output signals. Fig. 4.33 shows the different kinds of interfaces in a simplified manner. The pinout of the interface connectors is given in Appendix A. For further hardware details see Section 5. The strobe output can be used both in free-running and in trigger mode. A programmable delay is available to adjust the strobe pulse to your application.

The opto-isolated strobe outputs OPTO_OUT[0..2] need a separate power supply. Please see Appendix A for more information.

[Block diagram. Blocks: Camera, Sensor, FPGA, DSP, Flash, TX RS422, Optocoupler, 14-pole connector, 12-pole connector. Signals: DIG_OUT0, DIG_OUT[1..2], OPTO_OUT0, OPTO_OUT[1..2], PDIG_OUT[0..2], NDIG_OUT[0..2], OPTO_OUT[0..2].]

Figure 4.33: Strobe output of the SM2-D1312(IE)-TI6455


4.5 Data Path Overview

The data path is the path of the image from the output of the image sensor to the output of the camera. The sequence of blocks is shown in Fig. 4.34.

Monochrome cameras: Image Sensor -> FPN Correction -> Digital Offset -> Digital Gain -> Look-up table (LUT) -> 3x3 Convolver -> Crosshairs insertion -> Status line insertion -> Test images insertion -> Apply data resolution -> Image output

Colour cameras: Image Sensor -> FPN Correction -> Digital Offset -> Digital Gain / RGB Fine Gain -> Look-up table (LUT) -> Status line insertion -> Test images insertion -> Apply data resolution -> Image output

Figure 4.34: camera data path


4.6 Image Correction

4.6.1 Overview

The camera possesses image pre-processing features that compensate for non-uniformities caused by the sensor, the lens or the illumination. This method of improving the image quality is generally known as ’Shading Correction’ or ’Flat Field Correction’ and consists of a combination of offset correction, gain correction and pixel interpolation.

Since the correction is performed in hardware, it does not limit the performance of the camera at high frame rates.

The offset correction subtracts a configurable positive or negative value from the live image and thus reduces the fixed pattern noise of the CMOS sensor. In addition, hot pixels can be removed by interpolation. The gain correction can be used to flatten uneven illumination or to compensate for shading effects of a lens. Both offset and gain correction work on a pixel-by-pixel basis, i.e. every pixel is corrected separately. For the correction, a black reference image and a grey reference image are required. The correction values are then determined automatically in the camera.

Do not set any reference images when gain or LUT is enabled! Read the following sections very carefully.

Correction values of both reference images can be saved into the internal flash memory, but this overwrites the factory presets. The reference images delivered by the factory can then no longer be restored.

4.6.2 Offset Correction (FPN, Hot Pixels)

The offset correction is based on a black reference image, which is taken at no illumination

(e.g. lens aperture completely closed). The black reference image contains the fixed-pattern

noise of the sensor, which can be subtracted from the live images in order to minimise the

static noise.

Offset correction algorithm

After configuring the camera with a black reference image, the camera is ready to apply the

offset correction:

1. Determine the average value of the black reference image.

2. Subtract the average value from the black reference image.

3. Mark pixels that have a grey level higher than 1008 DN (@ 12 bit) as hot pixels.

4. Store the result in the camera as the offset correction matrix.

5. During image acquisition, subtract the correction matrix from the acquired image and

interpolate the hot pixels (see Section 4.6.2).
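The steps above can be sketched in a few lines of Python. This is an illustration only, not the hardware implementation. It assumes that the correction matrix is the black reference image minus its average, so that subtracting the matrix in step 5 removes the fixed pattern while keeping the mean level, and that a hot pixel is replaced by the mean of its non-hot left and right neighbours. Function names and the list-of-rows image format are hypothetical.

```python
HOT_PIXEL_THRESHOLD_DN = 1008  # step 3, for 12 bit data


def build_offset_correction(black_ref):
    """Steps 1 to 4: derive the correction matrix and the hot pixel map."""
    pixels = [p for row in black_ref for p in row]
    average = sum(pixels) / len(pixels)                          # step 1
    matrix = [[p - average for p in row] for row in black_ref]   # step 2
    hot = [[p > HOT_PIXEL_THRESHOLD_DN for p in row]
           for row in black_ref]                                 # step 3
    return matrix, hot                                           # step 4


def apply_offset_correction(image, matrix, hot):
    """Step 5: subtract the matrix, then interpolate the hot pixels."""
    corrected = [[p - m for p, m in zip(img_row, m_row)]
                 for img_row, m_row in zip(image, matrix)]
    for y, row in enumerate(corrected):
        for x in range(len(row)):
            if hot[y][x]:
                # Assumed interpolation: mean of non-hot horizontal neighbours.
                neighbours = [row[i] for i in (x - 1, x + 1)
                              if 0 <= i < len(row) and not hot[y][i]]
                if neighbours:
                    row[x] = sum(neighbours) / len(neighbours)
    return corrected


black = [[8, 12, 16], [12, 2004, 12]]       # one hot pixel in the centre
live = [[108, 112, 116], [112, 4095, 112]]  # uniform scene plus fixed pattern
matrix, hot = build_offset_correction(black)
print(apply_offset_correction(live, matrix, hot))
# [[444.0, 444.0, 444.0], [444.0, 444.0, 444.0]]
```

In this example the live image is a uniform scene with the fixed pattern of the black reference added. After correction all pixels have the same value, and the saturated hot pixel is replaced by the value of its neighbours.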


[Figure: black reference image with example pixel values]

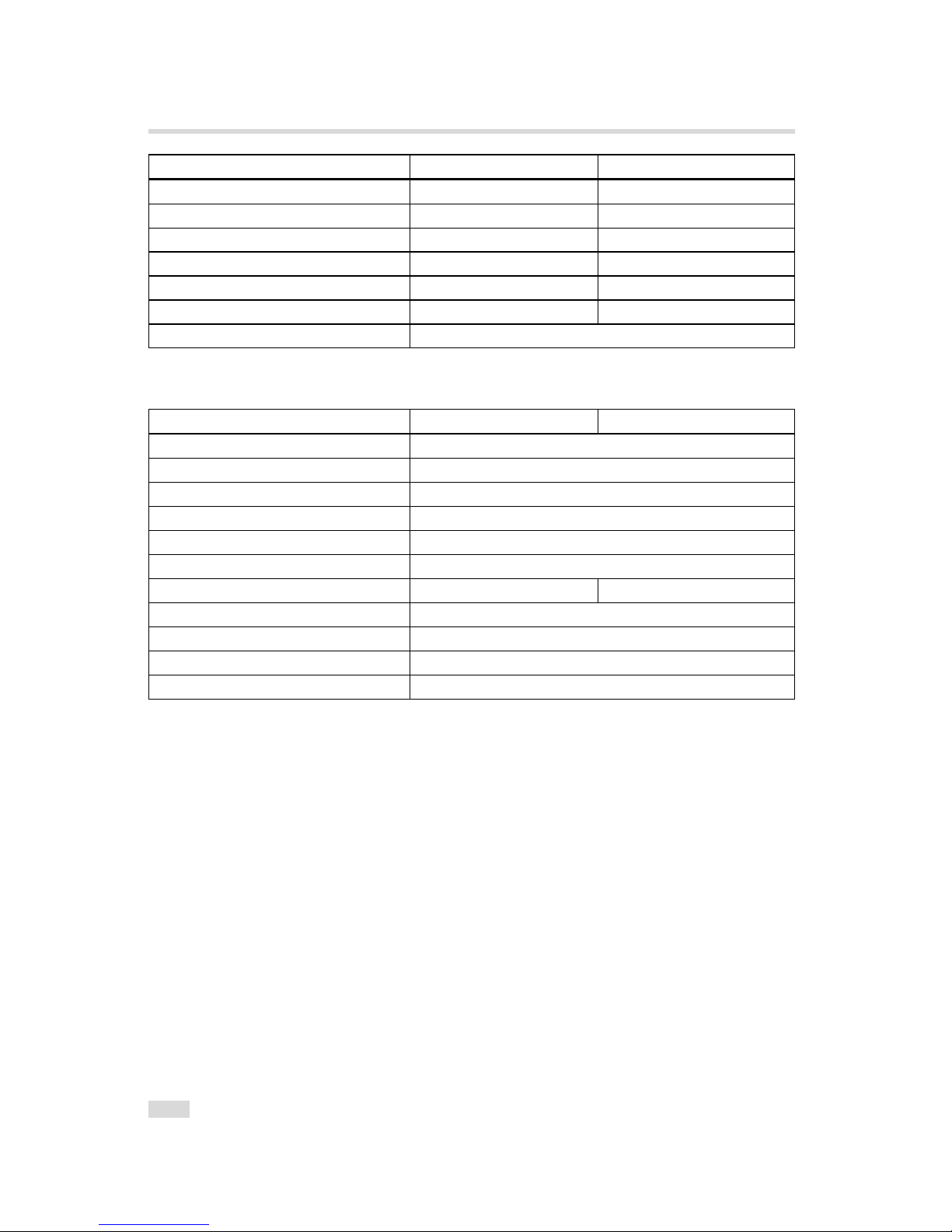

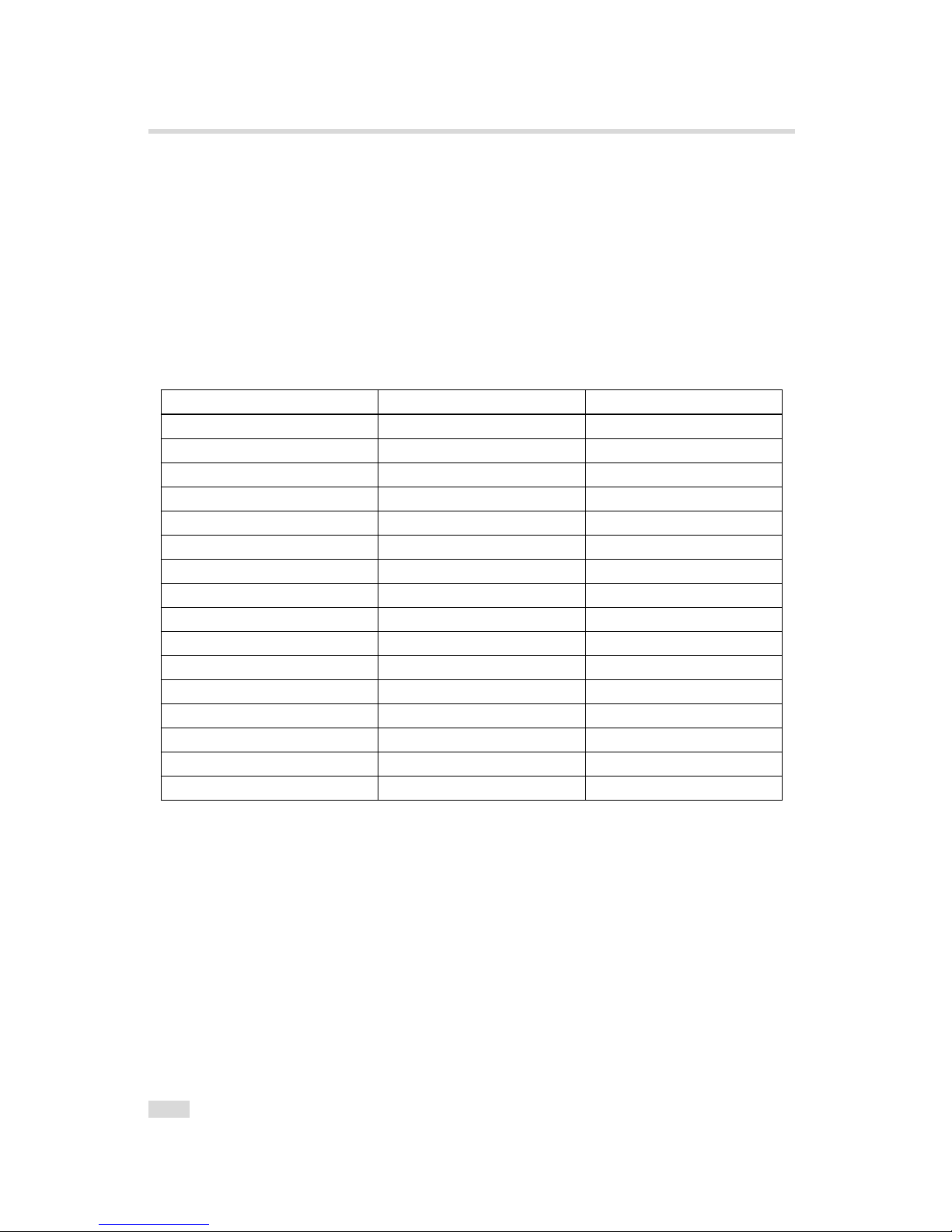

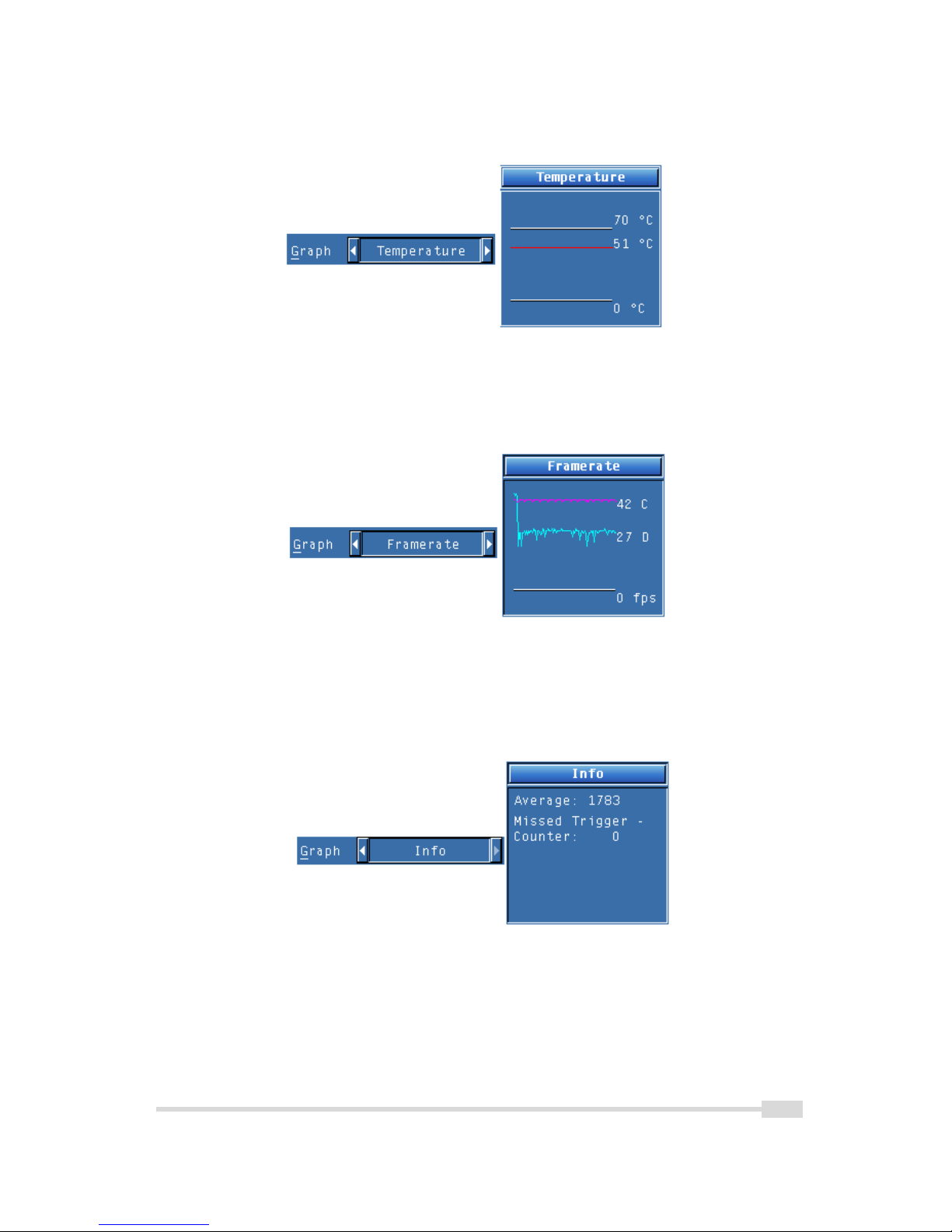

- 1