Overland

Storage

SnapScale

Administrator’s Guide

™

For a Clustered Network Running

™

RAINcloudOS

Version 4.0

December 2013

10400455-001

SnapScale/RAINcloudOS 4.0 Administrator’s Guide

Dec 2013 ©Overland Storage, Inc. All rights reserved.

Overland®, Overland Data®, Overland Storage®, ARCvault®, DynamicRAID®, LibraryPro®, LoaderXpress®, Multi-SitePAC®, NEO®, NEO Series®,

PowerLoader

and XchangeNOW

GuardianOS™, RAINcloud™, RapidRebuild™, SnapDisk™, SnapEDR™, Snap Enterprise Data Replicator™, SnapExpansion™, SnapSAN™,

SnapScale™, SnapServer DX Series™, SnapServer Manager™, and SnapWrite™ are trademarks of Overland Storage, Inc.

All other brand names or trademarks are the property of their respective owners.

The names of companies and individuals used in examples are fictitious and intended to illustrate the use of the software. Any resemblance to actual

companies or individuals, whether past or present, is coincidental.

PROPRIETARY NOTICE

All information contained in or disclosed by this document is considered proprietary by Overland Storage. By accepting this material the recipient agrees

that this material and the information contained therein are held in confidence and in trust and will not be used, reproduced in whole or in part, nor its

contents revealed to others, except to meet the purpose for which it was delivered. It is understood that no right is conveyed to reproduce or have

reproduced any item herein disclosed without express permission from Overland Storage.

Overland Storage provides this manual as is, without warranty of any kind, either expressed or implied, including, but not limited to, the implied

warranties of merchantability and fitness for a particular purpose. Overland Storage may make improvements or changes in the product(s) or programs

described in this manual at any time. These changes will be incorporated in new editions of this publication.

Overland Storage assumes no responsibility for the accuracy, completeness, sufficiency, or usefulness of this manual, nor for any problem that might arise

from the use of the information in this manual.

FW 4.0.061

®

, Protection OS®, REO®, REO 4000®, REO Series®, Snap Appliance®, Snap Care® (EU only), SnapServer®, StorAssure®, Ultamus®, VR2®,

®

are registered trademarks of Overland Storage, Inc.

Overland Storage, Inc.

9112 Spectrum Center Blvd.

San Diego, CA 92123

U.S.A.

Tel: 1.877.654.3429 (toll-free U.S.)

Tel: +1.858.571.5555, Option 5 (International)

Fax: +1.858.571.0982 (general)

Fax: +1.858.571.3664 (sales)

www.overlandstorage.com

10400455-001 December 2013 Dec 2013 ©Overland Storage, Inc. ii

Audience and Purpose

This guide is intended for system and network administrators charged with installing and

maintaining a SnapScale cluster running RAINcloudOS 4.0 on their network. It provides

information on the installation, configuration, security, and maintenance of the SnapScale

cluster and nodes.

Product Documentation

SnapScale product documentation and additional literature are available online, along with

the latest release of the RAINcloudOS 4.0 software.

Point your browser to:

http://docs.overlandstorage.com/snapscale

Follow the appropriate link on that page to download the latest software file or document.

Preface

For additional assistance, search at http://support.overlandstorage.com.

Overland Technical Support

For help configuring and using your SnapScale cluster, email our technical support staff at:

techsupport@overlandstorage.com.

You can get additional technical support information on the Contact Us web page at:

http://docs.overlandstorage.com/support

For a complete list of support times based on your type of coverage, visit our website at:

http://docs.overlandstorage.com/care

Software Updates

The latest release of the RAINcloudOS software can be obtained from the Downloads and

Resources (SnapScale Solutions) page at the Overland Storage website:

http://docs.overlandstorage.com/snapscale

Follow the appropriate instructions to download the latest software file.

For additional assistance, search at http://support.overlandstorage.com/.

10400455-001 Dec 2013 ©Overland Storage, Inc. iii

SnapScale/RAINcloudOS 4.0 Administrator’s Guide Preface

Conventions

This document exercises several alerts and typographical conventions.

Alerts

Convention Description & Usage

IMPORTANT An Important note is a type of note that provides information essential to

the completion of a task or that can impact the product and its function.

CAUTION A Caution contains information that the user needs to know to avoid

damaging or permanently deleting data or causing physical damage to

the hardware or system.

WARNING

ADVERTISSEMENT

Typographical Conventions

A Warning contains information concerning personal safety. Failure to

follow directions in the warning could result in bodily harm or death.

Un Canadien avertissement comme celui-ci contient des informations

relatives à la sécurité personnelle. Ignorer les instructions dans

l'avertissement peut entraîner des lésions corporelles ou la mort.

Convention Description & Usage

Button_name Words in this special boldface font indicate the names of command

buttons found in the Web Management Interface.

Ctrl-Alt-r This type of format details the keys you press simultaneously. In this

example, hold down the Ctrl and Alt keys and press the r key.

NOTE A Note indicates neutral or positive information that emphasizes or

supplements important points of the main text. A note supplies

information that may apply only in special cases, for example, memory

limitations or details that apply to specific program versions.

Menu Flow

Indicator (>)

Courier Italic A variable for which you must substitute a value.

Courier Bold

Information contained in this guide has been reviewed for accuracy, but not for product

warranty because of the various environments, operating systems, or settings involved.

Information and specifications may change without notice.

Words with a greater than sign between them indicate the flow of actions

to accomplish a task. For example, Setup > Passwords > User

indicates that you should press the Setup button, then the Passwords

button, and finally the User button to accomplish a task.

Commands you enter in a command-line interface (CLI).

Japanese Voluntary Control Council for Interference (VCCI)

10400455-001 Dec 2013 ©Overland Storage, Inc. iv

Preface

Chapter 1 - Overview

SnapScale Conventions ......................................................................................................................................1-1

SnapScale Node Requirements ..........................................................................................................................1-2

RAINcloudOS Specifications ...............................................................................................................................1-3

RAINcloudOS 4.0 Features ...................................................................................................................................1-4

Client and Storage Networks .............................................................................................................................. 1-5

Node/Switch Cabling Example .....................................................................................................................1-5

Node Port Configurations ....................................................................................................................................1-6

X2 Node Configurations ................................................................................................................................. 1-6

X4 Node Configurations ................................................................................................................................. 1-7

Chapter 2 - Setup and Configuration

Connecting for the First Time ..............................................................................................................................2-1

Connect Using the Node Name ...................................................................................................................2-1

Connect Using SSM .........................................................................................................................................2-2

Create a New SnapScale Cluster (via Wizard) .................................................................................................2-3

Step 1 – Select SnapScale Nodes .................................................................................................................2-3

Step 2 – Client Network Configuration Overview .......................................................................................2-5

Step 3 – Choose Client Network Static TCP/IP Settings ..............................................................................2-5

Step 4 – Configure Node Static IP Addresses ..............................................................................................2-6

Step 5 – Basic SnapScale Properties .............................................................................................................2-7

Step 6 – Set Date and Time ...........................................................................................................................2-8

Step 7 – Summary Verification & Cluster Creation .....................................................................................2-9

Join an Existing SnapScale Cluster (via Wizard) ..............................................................................................2-12

Web Management Interface ...........................................................................................................................2-13

Alert Messages ..............................................................................................................................................2-15

Site Map .........................................................................................................................................................2-16

Contact Information ....................................................................................................................................2-17

Contents

Chapter 3 - SnapScale Settings

SnapScale Properties ...........................................................................................................................................3-2

Date/Time ..............................................................................................................................................................3-3

Configure Date and Time Settings Manually ...............................................................................................3-3

Configure Date and Time Settings for Automatic Synchronization .......................................................... 3-4

SSH ..........................................................................................................................................................................3-5

UPS ..........................................................................................................................................................................3-5

Edit UPS Properties ..........................................................................................................................................3-6

Procedure to Configure UPS Protection .......................................................................................................3-7

Add Network UPS Device ..............................................................................................................................3-7

10400455-001 Dec 2013 ©Overland Storage, Inc. v

SnapScale/RAINcloudOS 4.0 Administrator’s Guide Contents

Change Network UPS Device .......................................................................................................................3-8

Delete Network UPS Device ..........................................................................................................................3-8

Chapter 4 - Network Access

View Network Information ...................................................................................................................................4-2

Client Network Information ............................................................................................................................4-2

Storage Network Information ........................................................................................................................4-4

TCP/IP Networking ................................................................................................................................................4-5

Bonding Options .............................................................................................................................................4-6

Guidelines in TCP/IP Configuration ...............................................................................................................4-7

Edit Storage Network Properties ...................................................................................................................4-8

Utility IP Address ..............................................................................................................................................4-9

Windows/SMB Networking .................................................................................................................................4-10

Support for Windows/SMB Networking .......................................................................................................4-11

Support for Windows Network Authentication ..........................................................................................4-12

Connect from a Windows Client ...............................................................................................................4-13

Connect a Mac OS X Client Using SMB .....................................................................................................4-13

Configure Windows/SMB Networking .........................................................................................................4-13

NFS Access ..........................................................................................................................................................4-16

Support for NFS ..............................................................................................................................................4-17

NFS Share Mounting .....................................................................................................................................4-17

NIS Domains ........................................................................................................................................................4-18

Guidelines for Configuring NIS .....................................................................................................................4-18

FTP/FTPS Access ..................................................................................................................................................4-19

Supported FTP Clients ...................................................................................................................................4-19

SNMP Configuration ...........................................................................................................................................4-20

Default Traps .................................................................................................................................................4-21

Supported Network Manager Applications and MIBs .............................................................................4-22

Configure SNMP ............................................................................................................................................4-22

Web Access ........................................................................................................................................................4-23

Configuring HTTP/HTTPS ................................................................................................................................4-23

Using Web Root to Configure the SnapScale as a Simple Web Server ..................................................4-24

iSNS Configuration ..............................................................................................................................................4-27

Chapter 5 - Storage Options

Peer Sets ................................................................................................................................................................5-1

Peer Sets and Recovery .................................................................................................................................5-2

Peer Set Utilization ...........................................................................................................................................5-4

Peer Set Basics .................................................................................................................................................5-4

Peer Sets Page ................................................................................................................................................5-5

Spare Disks Page .............................................................................................................................................5-6

Spare Distributor ..............................................................................................................................................5-7

Data Balancer .................................................................................................................................................5-9

Volumes ...............................................................................................................................................................5-11

Volume Overview .........................................................................................................................................5-11

Creating Volumes .........................................................................................................................................5-12

Edit Volume Properties .................................................................................................................................5-14

Deleting Volumes ..........................................................................................................................................5-14

Quotas .................................................................................................................................................................5-15

Default Quotas ..............................................................................................................................................5-16

Quotas for Volume Page .............................................................................................................................5-17

10400455-001 Dec 2013 ©Overland Storage, Inc. vi

SnapScale/RAINcloudOS 4.0 Administrator’s Guide Contents

Add Quota Wizard .......................................................................................................................................5-18

Editing or Removing Quotas ........................................................................................................................5-20

Snapshots ............................................................................................................................................................5-21

Snapshots Overview .....................................................................................................................................5-22

Creating Snapshots ......................................................................................................................................5-22

Adjusting Snapshot Space ...........................................................................................................................5-25

Accessing Snapshots ....................................................................................................................................5-26

Scheduling Snapshots ..................................................................................................................................5-26

Edit Snapshot Properties ...............................................................................................................................5-27

iSCSI Disks .............................................................................................................................................................5-27

Configuring iSCSI Initiators ...........................................................................................................................5-27

iSCSI Configuration on the SnapScale .......................................................................................................5-27

Create iSCSI Disks .......................................................................................................................................... 5-29

Edit iSCSI Disk Properties ...............................................................................................................................5-31

Delete an iSCSI Disk ......................................................................................................................................5-32

Configuring VSS/VDS for iSCSI Disks ............................................................................................................5-32

Nodes ...................................................................................................................................................................5-35

Nodes Overview ...........................................................................................................................................5-35

Edit Node Properties .....................................................................................................................................5-36

Node Drives ...................................................................................................................................................5-36

Adding Nodes ...............................................................................................................................................5-37

Removing Nodes ..........................................................................................................................................5-41

Node Identification ......................................................................................................................................5-42

Disks ......................................................................................................................................................................5-43

Replacing Drives ...........................................................................................................................................5-43

Adding Drives ................................................................................................................................................5-44

Chapter 6 - Security Options

Overview ...............................................................................................................................................................6-1

Guidelines for Local Authentication .............................................................................................................6-2

User and Group ID Assignments .................................................................................................................... 6-3

Security Guides ..................................................................................................................................................... 6-3

Windows Active Directory Security Guide ...................................................................................................6-4

Entire Volume Security Guide ........................................................................................................................6-5

Folder on Volume Security Guide .................................................................................................................6-5

Shares .....................................................................................................................................................................6-5

Share Security Overview ................................................................................................................................6-6

Create Shares ..................................................................................................................................................6-6

Edit Share Properties .......................................................................................................................................6-8

Delete Shares ..................................................................................................................................................6-9

Configuring Share Access .............................................................................................................................6-9

Local Users ...........................................................................................................................................................6-16

Create a User ................................................................................................................................................6-16

Edit User Properties .......................................................................................................................................6-17

Local User Password Policies .......................................................................................................................6-19

Assign User to Group ....................................................................................................................................6-20

Delete Local User ..........................................................................................................................................6-21

Local Groups .......................................................................................................................................................6-22

Create New Group .......................................................................................................................................6-22

Edit Group Properties ...................................................................................................................................6-23

Specify Users in Group ..................................................................................................................................6-24

10400455-001 Dec 2013 ©Overland Storage, Inc. vii

SnapScale/RAINcloudOS 4.0 Administrator’s Guide Contents

Delete Group ................................................................................................................................................6-24

Security Models ................................................................................................................................................... 6-25

Managing Volume Security Models ...........................................................................................................6-25

ID Mapping .........................................................................................................................................................6-25

Add Mapping ................................................................................................................................................6-26

Change Mapping .........................................................................................................................................6-29

Auto Mapping ...............................................................................................................................................6-31

Remove Mappings .......................................................................................................................................6-33

Remove Missing ID Mappings .....................................................................................................................6-36

Filesystem Updates .......................................................................................................................................6-37

Home Directories ................................................................................................................................................6-37

Configure Home Directories ........................................................................................................................6-38

Chapter 7 - System Monitoring

System Status .........................................................................................................................................................7-2

SnapScale Status ............................................................................................................................................7-2

Activity ...................................................................................................................................................................7-3

Active Users .....................................................................................................................................................7-3

Open Files ........................................................................................................................................................7-4

Network Monitor .............................................................................................................................................7-4

Event Log ...............................................................................................................................................................7-7

Filter the Log ....................................................................................................................................................7-7

Protocol Manager ................................................................................................................................................ 7-8

SnapScale Settings ...............................................................................................................................................7-9

Tape .....................................................................................................................................................................7-11

Chapter 8 - Maintenance

Shutdown and Restart .........................................................................................................................................8-2

Manually Powering Nodes On and Off ........................................................................................................8-2

Data Import ...........................................................................................................................................................8-2

Setting Up a Data Import Job .......................................................................................................................8-4

Stopping an Import Job .................................................................................................................................8-6

Recreating an Import Job .............................................................................................................................8-6

Preserving Permissions ....................................................................................................................................8-6

OS Update ............................................................................................................................................................. 8-7

Update the RAINcloudOS Software .............................................................................................................8-8

Software Update Notification .......................................................................................................................8-8

Configuring Update Notification ..................................................................................................................8-9

Manually Checking for Updates ...................................................................................................................8-9

Support ..................................................................................................................................................................8-9

Phone Home Support ...................................................................................................................................8-10

Registering Your Cluster ...............................................................................................................................8-12

Tools ......................................................................................................................................................................8-13

Email Notification ..........................................................................................................................................8-14

Host File Editor ...............................................................................................................................................8-15

Delete SnapScale Cluster ............................................................................................................................8-16

Chapter 9 - Misc. Options

Home Pages – Web/Admin .................................................................................................................................9-1

Web Home ......................................................................................................................................................9-2

Administration .................................................................................................................................................9-3

10400455-001 Dec 2013 ©Overland Storage, Inc. viii

SnapScale/RAINcloudOS 4.0 Administrator’s Guide Contents

SnapExtensions ......................................................................................................................................................9-5

Snap EDR .........................................................................................................................................................9-5

Snap Finder ...........................................................................................................................................................9-6

Edit Snap Finder Properties ............................................................................................................................9-7

Change Password ................................................................................................................................................ 9-8

Changing Your Password ...............................................................................................................................9-8

Management Interface Settings ........................................................................................................................9-9

Appendix A - Backup Solutions

Backup and Replication Solutions ..................................................................................................................... A-1

Snap Enterprise Data Replicator ....................................................................................................................... A-1

Snap EDR Usage ............................................................................................................................................ A-2

Configuring Snap EDR for RAINcloudOS .....................................................................................................A-2

Scheduling Jobs in Snap EDR ....................................................................................................................... A-3

Backup via SMB or NFS ........................................................................................................................................ A-3

Backup via Agent or Media Server ................................................................................................................... A-3

Utility IP Address ............................................................................................................................................. A-3

Appendix B - Security and Access

Security Model Rules ............................................................................................................................................B-1

Security Model Management .............................................................................................................................B-2

Special Share Options ..........................................................................................................................................B-2

Hiding Shares ...................................................................................................................................................B-2

Where to Place Shares ...................................................................................................................................B-3

File and Share Access ..........................................................................................................................................B-3

Cumulative Share Permissions .......................................................................................................................B-3

Snapshot Shares and On Demand File Recovery .......................................................................................B-3

Creating a Snapshot Share ...........................................................................................................................B-3

File-level Security ..................................................................................................................................................B-4

Security Personalities and Security Models ..................................................................................................B-4

Windows ACLs .................................................................................................................................................B-4

Appendix C - RAINcloudOS Ports

Appendix D - Troubleshooting

LED Indicators ....................................................................................................................................................... D-1

SnapScale X2 Node LEDs .............................................................................................................................. D-1

SnapScale X4 Node LEDs .............................................................................................................................. D-3

Network Reset ...................................................................................................................................................... D-3

Master Glossary & Acronym List

Index

10400455-001 Dec 2013 ©Overland Storage, Inc. ix

Chapter 1

Overview

SnapScale is a flexible, scalable, low-maintenance network-attached storage cluster

composed of a redundant array of independent nodes running RAINcloudOS. This guide

applies to SnapScale nodes running RAINcloudOS version 4.0.

Offering user-selectable levels of data redundancy, SnapScale uses File-level Striping to

write data across multiple nodes and drives simultaneously for instant protection and high

availability. With a SnapScale cluster, volumes can be configured, created, provisioned, and

grown on demand. Special features such as Data Balancer redistributes files to optimize

performance and Spare Distributor evenly distributes spare drives across nodes. Files can be

accessed either through NFS or CIFS/SMB protocols. SnapScale Flexible Volumes

automatically adjust capacity so they only occupy as much space as their data requires.

Topics in Overview:

• SnapScale Conventions

• SnapScale Node Requirements

• RAINcloudOS Specifications

• RAINcloudOS 4.0 Features

• Client and Storage Networks

• Node Port Configurations

SnapScale Conventions

The SnapScale cluster supports three or more nodes hosting redundant sets of data for data

protection. An Administrator can configure, add, or remove nodes on demand to change

storage requirements. The overall storage system is able to easily grow from three nodes to

meet your needs.

Peer sets are created using two or three drives (based on redundancy choices) located on

different nodes. Each peer set member has the same data and metadata as its peers.

10400455-001 Dec 2013 ©Overland Storage, Inc. 1-1

SnapScale/RAINcloudOS 4.0 Administrator’s Guide SnapScale Node Requirements

There are three different states for SnapScale nodes:

• Uninitialized node – an independent node that has not yet been joined to a

SnapScale cluster.

• SnapScale node – a healthy node that is a member of a fully-configured SnapScale

cluster. Both 2U nodes with up to 12 drives and 4U nodes with 36 drives are available.

• Management node – a SnapScale node with special duties involved in managing the

cluster. The Management node is selected automatically by the RAINcloudOS when

the cluster boots. Should that management node fail, another currently available node

is automatically chosen to become the new Management node. This Management node

also hosts peer sets with metadata and data just like all other SnapScale nodes.

Other key concepts include:

• Management IP – the IP address through which the administrator accesses the Web

Management Interface of the current Management node.

• Peer set – a set of two or three disks (each on a separate node) that have mirrored

data for redundancy.

• Cluster Name – the name visible to network clients and used to connect to the cluster

(similar to a server name), and resolvable to node IP addresses via round robin DNS.

• Cluster Management Name – the hostname resolvable to the Management IP for

Web Management Interface access or Snap EDR configuration.

• Data Replication Count – an administrator-specified, cluster-wide count of the

number of mirrored copies of data within the cluster. The Data Replication Count can

be either “2” or “3” and determines the number of drives in a peer set.

A SnapScale cluster consists of two separate networks:

• Client Network – used exclusively for client access. Clients can connect to any node

to access data anywhere on the cluster.

• Storage Network – an isolated network used exclusively by the cluster for inter-node

communications. This includes:

• Heartbeat (node health/presence) sensing.

• Synchronization of peer set members.

• Data transfer between nodes to facilitate clients reading from and writing to files.

SnapScale Node Requirements

The following table details the basic requirements for cluster nodes:

Requirement Detailed Description

Minimum number of nodes A SnapScale cluster must have a minimum of three (3) nodes to

No expansion units A SnapScale node cannot have any expansion units attached to it.

Minimum number of disks per

node

Maximum size of file on cluster While the system reports total free space across the entire cluster, the

operate normally.

Each node must have a minimum of four disks. Additional disks can be

added as needed.

maximum file size at any given time is dictated by free space on the

least-utilized peer set. This is reported in the Web Management

Interface.

10400455-001 Dec 2013 ©Overland Storage, Inc. 1-2

SnapScale/RAINcloudOS 4.0 Administrator’s Guide RAINcloudOS Specifications

Requirement Detailed Description

Common Storage network To form or join a SnapScale cluster, each Uninitialized node must be

connected to the same Storage network as the other nodes.

Storage network links To form or join a SnapScale cluster each Uninitialized node must have

connectivity (active link) on both Storage network ports.

Storage network usage Only a single cluster can use a given Storage network.

Client network separate from

Storage network

Nodes must be running same

RAINcloudOS version

Adding nodes When adding nodes to an existing cluster, the number of nodes added

Disk requirements All disks in the cluster must be the same type of disk (such as SAS)

The Client and Storage networks must be on different (independent)

networks, and the Storage network must be isolated from all other

networks.

To form a SnapScale cluster, all nodes must be running the same

version of RAINcloudOS.

To join an already configured SnapScale cluster, an Uninitialized node

must have the same version of RAINcloudOS as the other SnapScale

nodes:

• If the Uninitialized node has an older version of the RAINcloudOS,

the Uninitialized node must be upgraded to the later version.

• If the Uninitialized node has a newer version of the RAINcloudOS,

then all SnapScale nodes must be upgraded to the later version.

(The node can be reinstalled with a version matching the cluster if

the hardware supports it.)

at one time should be at least the same number as the Data

Replication Count. This ensures the new nodes and cluster are

efficiently utilizing increased storage space.

and same rotational speed.

RAINcloudOS Specifications

These specifications apply to all SnapScale nodes running RAINcloudOS 4.0:

Feature Specification

Network Transport Protocols TCP/IP (Transmission Control Protocol/Internet Protocol)

UDP/IP (User Datagram Protocol/Internet Protocol)

Network File Protocols Microsoft Networking (CIFS/SMB)

UNIX Network Filesystem (NFS) 3.0 only

Hypertext Transfer Protocol (HTTP/HTTPS)

Network Security • Microsoft Active Directory Service (ADS) (member server)

• UNIX Network Information Service (NIS)

• File and Folder Access Control List (ACL) Security for Users and

Groups

• Secure Sockets Layer (SSL v2/3) 128-bit Encryption

• SMTP Authentication and support for email encryption (STARTTLS

and TLS/SSL encryption protocols)

10400455-001 Dec 2013 ©Overland Storage, Inc. 1-3

SnapScale/RAINcloudOS 4.0 Administrator’s Guide RAINcloudOS 4.0 Features

Feature Specification

Network Client Types Microsoft Windows 2000 SP4/2003/2003 R2/2008 SP2/2008 R2

/XP SP3/Vista SP2/7/8/2012

Mac OS X 10.5/10.6/10.7/10.8 (via CIFS/SMB)

Sun Solaris 10 and 11

HP-UX 11

AIX 5.3/6

Red Hat Enterprise Linux (RHEL) 4.x/5.x/6.x

Novell SuSE Linux Enterprise Server (SLES) 10.x/11.x

Data Protection • Snapshots for immediate or scheduled point-in-time images of the

cluster filesystem

• Support for network backup via CIFS/SMB

• Support for Symantec Backup Exec 2010/2012 and NetBackup 7.5.

• APC® brand Uninterruptible Power Supply (UPS) with Network

Management Cards, a USB interface, or a serial interface (with USBto-Serial adapter) are supported for graceful system shutdown

System Management • Browser-based administration tool called the Web Management

Interface

• Read-only CLI support

• Environmental monitoring

• Email event notification

• Data importation (migration)

• SNMP (MIB II and Host Resource MIB)

• User disk quotas for Windows, UNIX/Linux, FTP/FTPS

• NIS Group disk quotas for UNIX/Linux

DHCP Support Only supports Dynamic Host Configuration Protocol (DHCP) in an

Uninitialized node for configuring or adding to a cluster.

RAINcloudOS 4.0 Features

NOTE: For details and descriptions of all the new features and a list of other improvements to the

operating system, see the Product Release Notes on the Overland SnapScale website.

With the release of RAINcloudOS 4.0, the following features and functionality are now

available:

Feature New Functionality

iSCSI Support SnapScale can now create and host iSCSI disk targets on

the cluster file system. These iSCSI disks can register with

an iSNS server, and can also be managed by Windows

VSS/VDS.

SMB2, FTP/FTPS, and SNMP

Support Added

Improved Network Monitoring The Network Monitor page provides additional information

Added User/Group Quotas per

Volume

10400455-001 Dec 2013 ©Overland Storage, Inc. 1-4

SMB2, FTP/FTPS, and SNMP are all now supported in

RAINcloudOS.

including high-water marks, network activity for the whole

cluster, and clearer labels.

Storage consumption and file count quotas can now be

configured for users and NIS groups per volume.

SnapScale/RAINcloudOS 4.0 Administrator’s Guide Client and Storage Networks

Feature New Functionality

Data Balancer & Spare

Distributor Improved

Client and Storage Networks

SnapScale requires two separate networks to function correctly: A public network (Client)

and a private network (Storage). To support failover, two Storage network switches must be

connected together (using a 1GbE or 10GbE cable between the switches). Each of the two

Storage network ports on the node need to be connected to a different Storage switch.

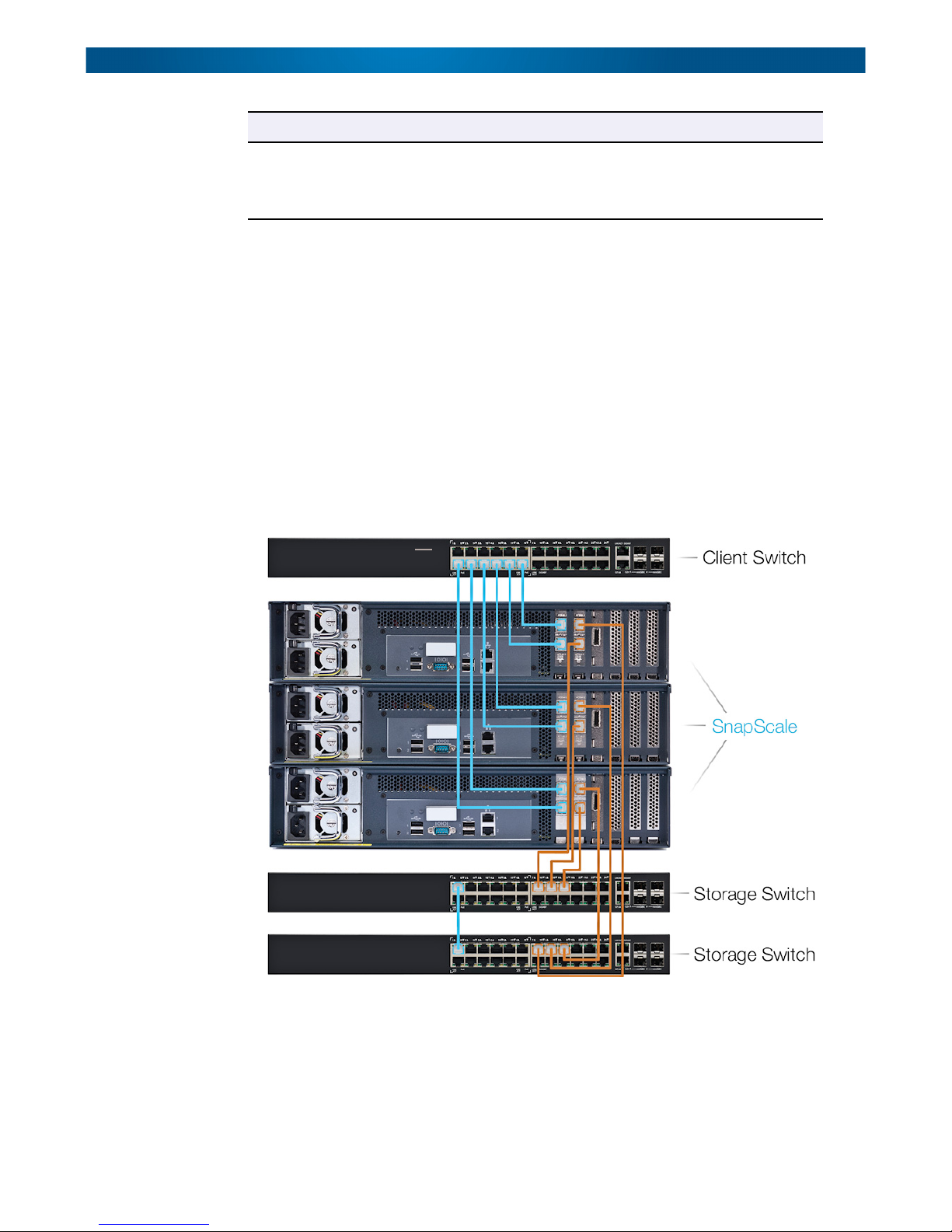

Node/Switch Cabling Example

The following example shows three dual 10GbE card X2 nodes and how to connect them to

the network switches. The cables used to connect to the Client side of the network (blue)

originate from the Client 10GbE card in slot 1. Two cables are used to connect both ports of

each node to the Client switch.

Data Balancer (formerly Capacity Balancer) redistributes

files to optimize performance. Spare Distributor (formerly

the Spare Disk Balancer) evenly distributes spare drives

across nodes. Both have been improved for faster results.

The cables used to connect to the Storage side of the network (orange) originate from the

Storage 10GbE card in slot 2. For each node, one cable is used to connect a one Storage port

of each note to one of the two Storage switches used for failover.

For connections between 10GbE cards and 10GbE switches, use either direct-attached

copper cables or fibre cables with SFP+ modules pre-installed in the card and switch ports.

10400455-001 Dec 2013 ©Overland Storage, Inc. 1-5

SnapScale/RAINcloudOS 4.0 Administrator’s Guide Node Port Configurations

IMPORTANT: If using fibre cables, you must use Overland-approved SFP+ modules. With the

cluster powered OFF, insert the modules into the card and switch ports. Connect the fibre

cable between the two SFP+ modules and restore power to the cluster.

Node Port Configurations

Both the X2 and X4 nodes come in three different configurations: 1GbE ports (both Client

and Storage ports), a single 10GbE card (with 1GbE Client ports), and dual 10GbE cards.

NOTE: If desired, optional 10GbE cards can be added later to upgrade the node.

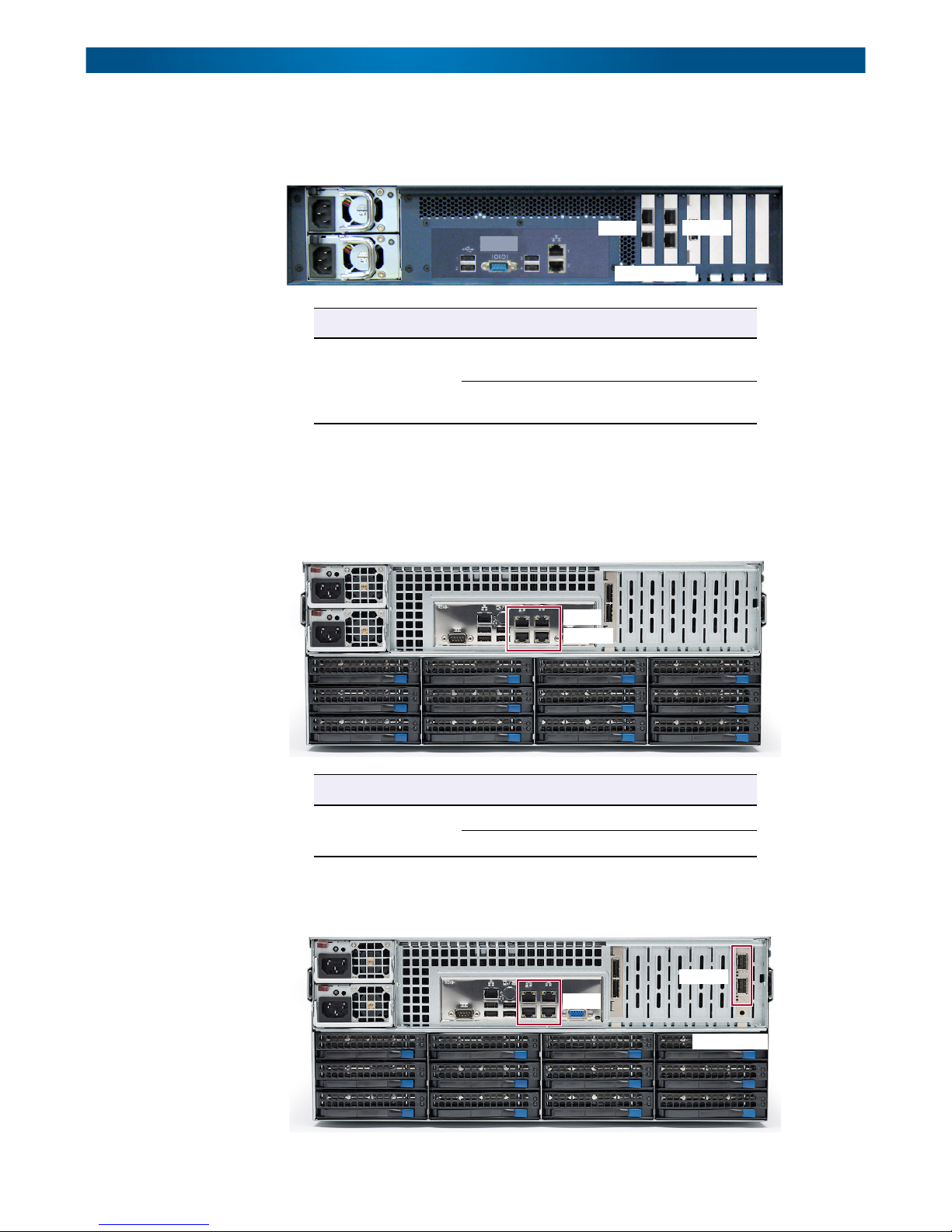

X2 Node Configurations

Basic 1GbE. At the rear of the X2 node, the 1GbE ports connected directly to the

motherboard are configured to access the Client and Storage networks.

Storage

1

Client

2

Configuration Node GbE Ports Network Switch

Basic 1GbE X2 Ports 1 & 2 Client (public)

Slot 2 1GbE Card

(ports 3 & 4)

Storage (private)

3

4

1GbE Card

Single 10GbE. The single-card 10GbE configuration uses the two 1GbE ports for the Client

connection and the two 10GbE ports on the card for the Storage connections.

Storage

1

Client

2

Configuration Node GbE Ports Network Switch

Single 10GbE X2 Ports 1 & 2 Client (public)

Slot 2 10GbE Card

(ports 3 & 4)

Storage (private)

3

4

10GbE Card

10400455-001 Dec 2013 ©Overland Storage, Inc. 1-6

SnapScale/RAINcloudOS 4.0 Administrator’s Guide Node Port Configurations

Dual 10GbE. The dual-card configuration uses the left 10GbE card ports for the Client

connections and the right 10GbE card ports for the Storage connections. The 1GbE ports are

not used.

1

3

Client

10GbE Cards

Configuration Node GbE Ports Network Switch

Storage

4

2

Dual 10GbE X2 Slot 1 10GbE Card

X4 Node Configurations

Basic 1GbE. At the rear of the 1GbE configuration, there are two sets of 1GbE ports

connected directly to the motherboard for connecting to the switches. The top two are for the

Client network; bottom two for Storage.

Configuration Node GbE Ports Network Switch

(ports 1 & 2)

Slot 2 10GbE Card

(ports 3 & 4)

Client

341

2

Storage

Client (public)

Storage (private)

Basic 1GbE X4 Ports 1 & 2 Client (public)

Single 10GbE. The single-card 10GbE configuration uses the two 1GbE ports for the Client

connection and the two 10GbE ports on the card for the Storage connections.

10400455-001 Dec 2013 ©Overland Storage, Inc. 1-7

Ports 3 & 4 Storage (private)

Storage

341

Client

2

10GbE Card

5

6

SnapScale/RAINcloudOS 4.0 Administrator’s Guide Node Port Configurations

Configuration Node GbE Ports Network Switch

Single 10GbE X4 Ports 1, 2, 3, & 4 Client (public)

Slot 7 10GbE Card

(ports 5 & 6)

Storage (private)

Dual 10GbE. The dual-card configuration uses the left 10GbE card ports for the Client

connections and the right 10GbE card ports for the Storage connections. The 1GbE ports are

not used.

Client

Unused

341

2

10GbE Cards

567

8

Storage

Configuration Node GbE Ports Network Switch

Dual 10GbE X4 Slot 6 10GbE Card

(ports 7 & 8)

Slot 7 10GbE Card

(ports 5 & 6)

Client (public)

Storage (private)

10400455-001 Dec 2013 ©Overland Storage, Inc. 1-8

This section covers the initial setup and configuration of an individual SnapScale node

running RAINcloudOS 4.0. It also addresses how to use that node to set up a SnapScale

cluster of three or more nodes, or to add the node to an existing SnapScale cluster.

NOTE: For information concerning the installation and wiring of the SnapScale node hardware,

refer to either the SnapScale X2 Node Quick Start Guide or the SnapScale X4 Node Quick

Start Guide.

Topics in Setup and Configuration:

• Connecting for the First Time

• Create a New SnapScale Cluster (via Wizard)

• Join an Existing SnapScale Cluster (via Wizard)

• Web Management Interface

Connecting for the First Time

Setup and Configuration

NOTE: Uninitialized nodes are configured to acquire their IP address from a DHCP server. If no

DHCP server is found on the network, the node defaults to an IP address in the range of

169.254.xxx.xxx and is labeled in SnapServer Manager (SSM) as “ZeroConf”. You may not

be able to see Uninitialized nodes on your network until you discover them using either the

default node name or the SSM utility and optionally assign them an IP address.

Connect Using the Node Name

This procedure requires that name resolution services (via DNS or an equivalent service) be

operational.

NOTE: Any node that is selected to be part of a cluster can be used to create the cluster.

1. Find the node name of an Uninitialized node that is to be used to create a new

SnapScale cluster.

A SnapScale node name is of the format “Nodennnnnnn,” where nnnnnnn is the node

chassis number. The node number is a unique, numeric-only string that appears on a

label affixed to the bottom of the appliance.

2. In a web browser, enter the URL to connect to the node.

For example, enter “http://Nodennnnnnn” (using the node name).

3. Press Enter to connect to the Web Management Interface.

10400455-001 Dec 2013 ©Overland Storage, Inc. 2-1

SnapScale/RAINcloudOS 4.0 Administrator’s Guide Connecting for the First Time

4. In the login dialog box, enter admin as the user name and admin as the password

(the system defaults), then click OK.

5. Complete the Initial Setup Wizard to either create a new SnapScale cluster or join

an existing cluster.

Connect Using SSM

1. Launch SnapServer Manager (SSM).

SSM discovers all SnapServers, SnapScale clusters, and SnapScale nodes on its local

network segment and displays their names, IP addresses, and other information in the

main console. If you do not have a DHCP server, there might be a delay before the node

appears on the network.

NOTE: To distinguish multiple SnapServers or SnapScale nodes, you may need to find their

default names as explained in Connect Using the Node Name on page 2-1.

2. If using a DHCP server, proceed to Step 3; otherwise, assign an IP address to one of

the nodes to be configured in the cluster.

NOTE: Only one node needs to be configured with an IP address in order to create the cluster.

a. In SSM, right-click the node name.

b. Select Set IP Address.

c. Enter an IP address and a subnet mask, then click OK.

3. In SSM, right-click the node name and select Launch Web Administration.

4. Log into the Web Management Interface.

In the login dialog box, enter admin as the user name and admin as the password

(the system defaults), then click OK.

5. Complete the Initial Setup Wizard to either create a new SnapScale cluster or join

an existing cluster.

10400455-001 Dec 2013 ©Overland Storage, Inc. 2-2

SnapScale/RAINcloudOS 4.0 Administrator’s Guide Create a New SnapScale Cluster (via Wizard)

Create a New SnapScale Cluster (via Wizard)

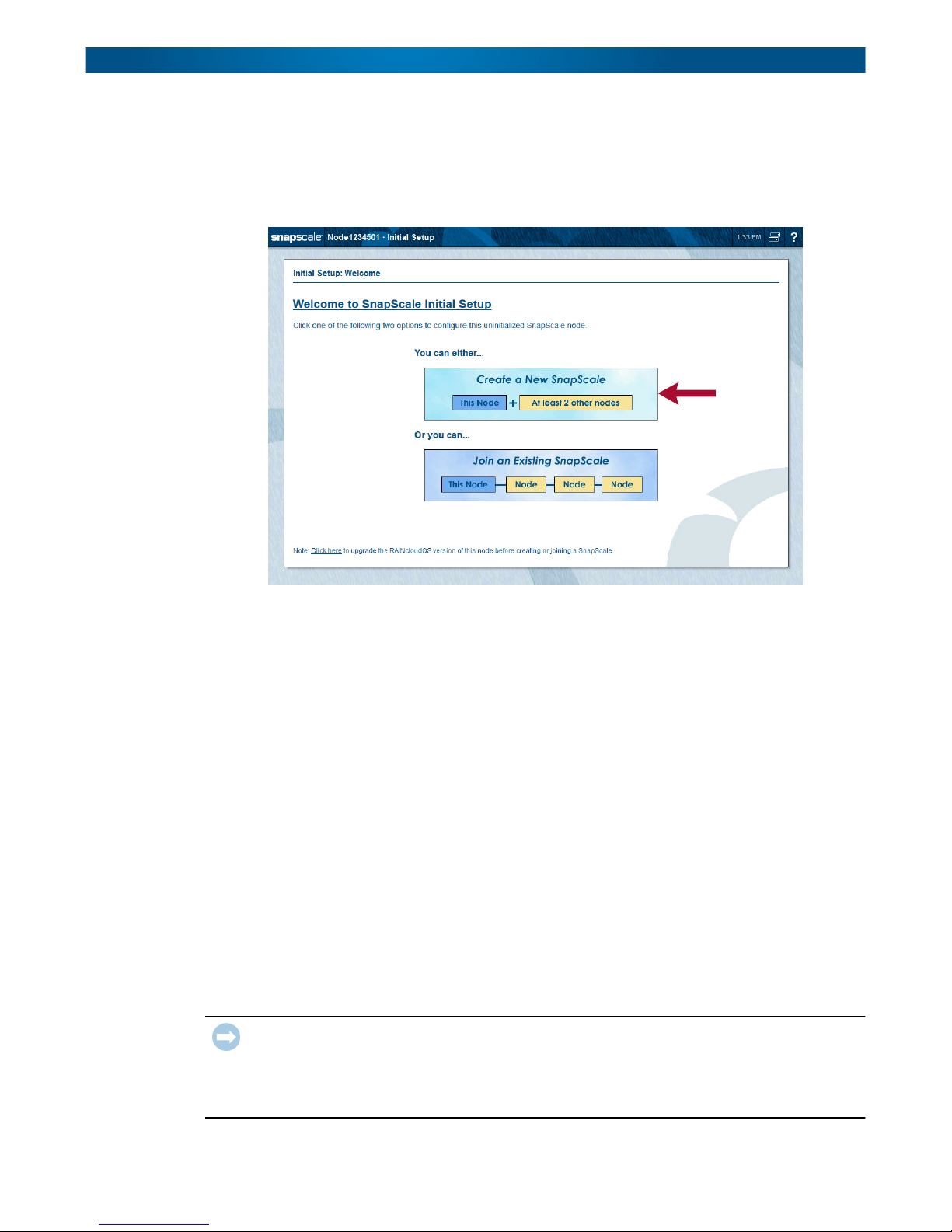

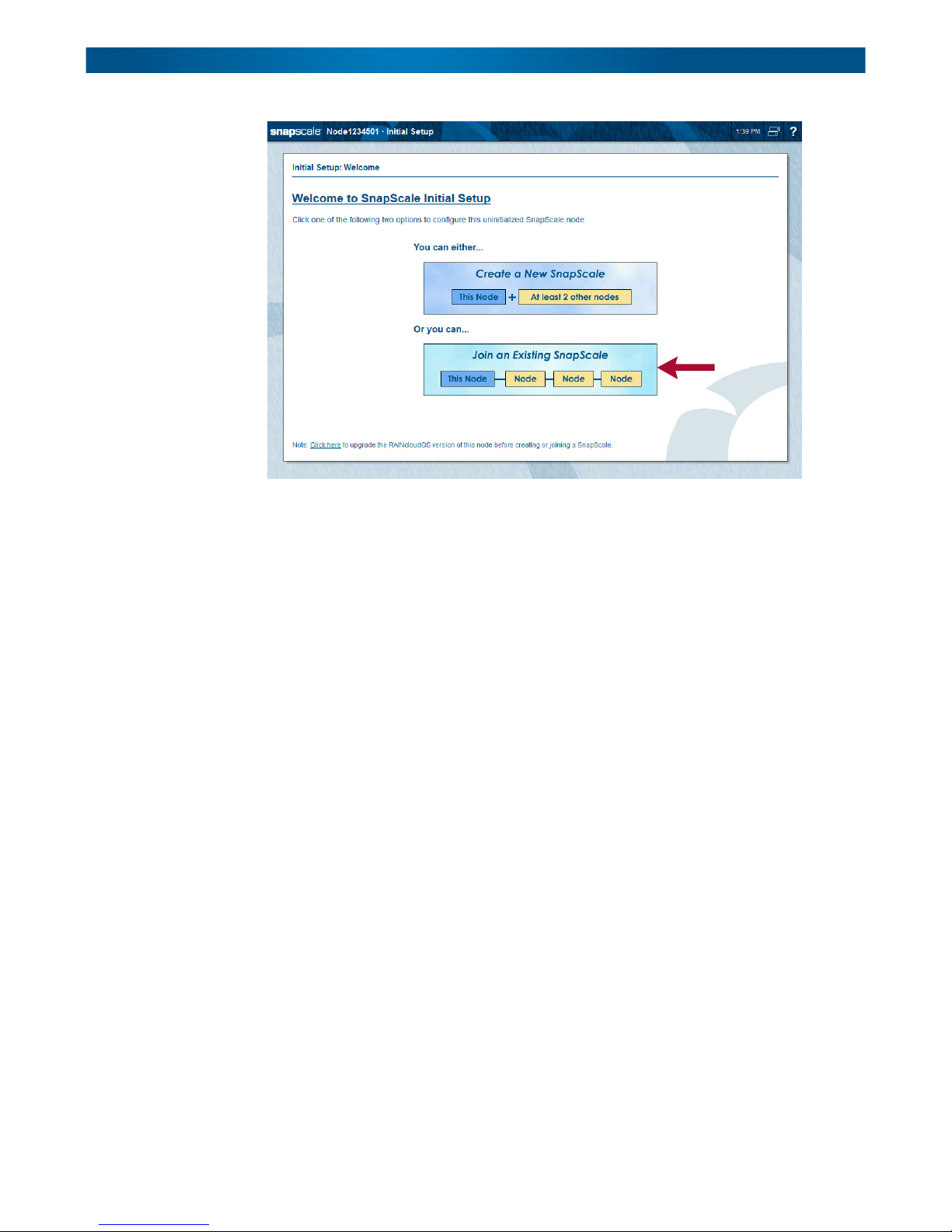

On a new node, once you log in, the Initial Setup Wizard runs displaying the Welcome page.

From the Initial Setup Wizard, you can use this node to create a new SnapScale cluster by

connecting to two or more other nodes. Click Create a New SnapScale to start the wizard.

The Initial Setup Wizard for creating a new SnapScale cluster consists of seven steps:

Step 1: Select the nodes to be included in the cluster.

Step 2: Review the Client network information.

Step 3: Choose the static TCP/IP settings for the Client network.

Step 4: Populate the Static IP addresses for the nodes.

Step 5: Enter the basic SnapScale properties.

Step 6: Set the date and time.

Step 7: Verify the settings and create a SnapScale cluster.

NOTE: After the cluster is created, you are asked to configure the Administrator’s password as part

of Step 7.

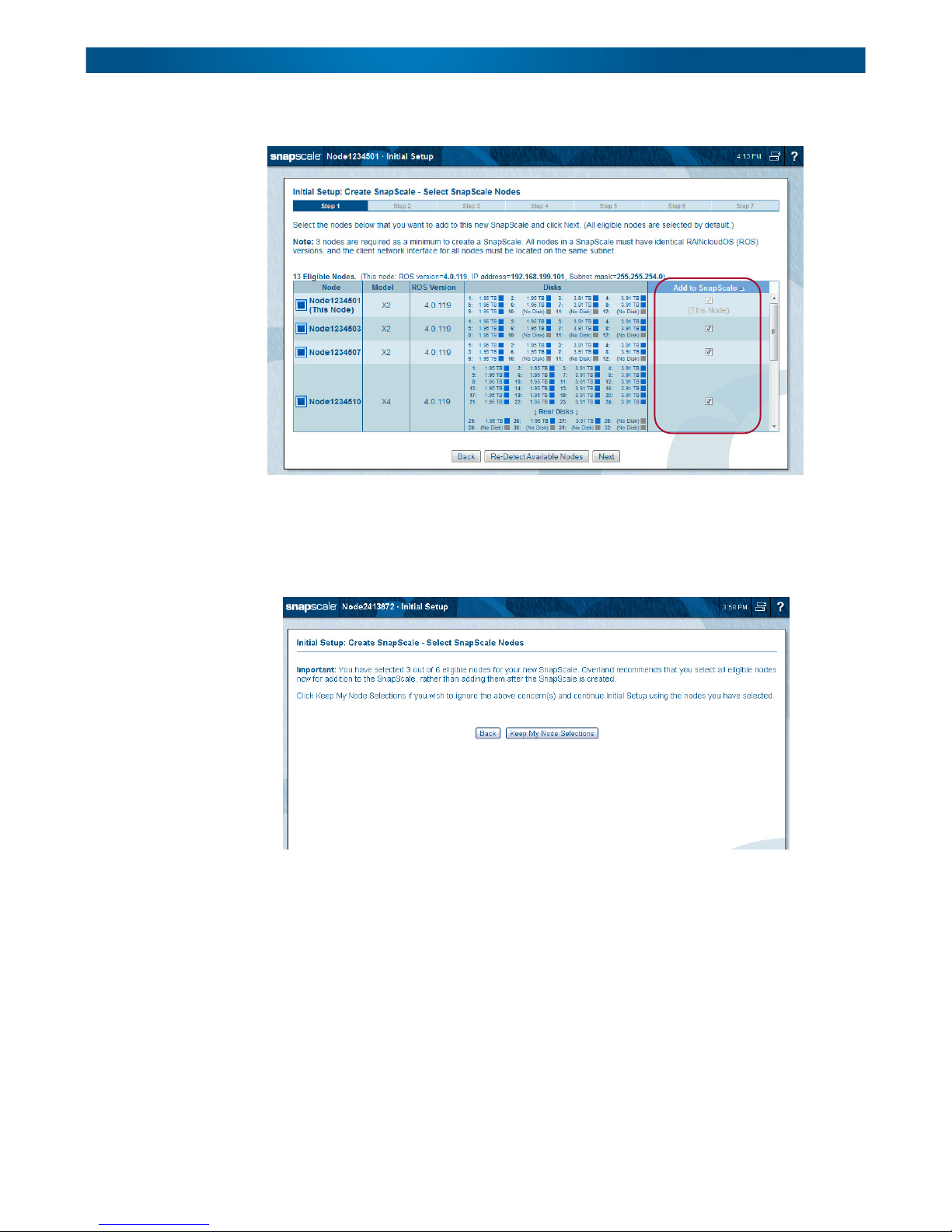

Step 1 – Select SnapScale Nodes

Select the nodes you want to use from the list of eligible nodes.

IMPORTANT: At least three nodes are required to create a SnapScale clustered network. All

nodes must have the identical version of RAINcloudOS (ROS) and be on a subnet that does not

contain an existing cluster. The Client network interfaces for all the nodes must be located on the

same public network subnet, and the Storage network interfaces for all nodes must be located on

the same private Storage network subnet. The nodes cannot have any expansion units attached.

10400455-001 Dec 2013 ©Overland Storage, Inc. 2-3

SnapScale/RAINcloudOS 4.0 Administrator’s Guide Create a New SnapScale Cluster (via Wizard)

Any combination of node types (X4 and X2) can be used to create a cluster.

Verify that the boxes in the Add to SnapScale column for the nodes you want to use are

checked. Click Re-Detect Available Nodes to refresh the list. When ready, click Next.

NOTE: If you deselect one or more of the detected nodes, when you click Next a message page is

displayed recommending that you add all the nodes at once.

10400455-001 Dec 2013 ©Overland Storage, Inc. 2-4

SnapScale/RAINcloudOS 4.0 Administrator’s Guide Create a New SnapScale Cluster (via Wizard)

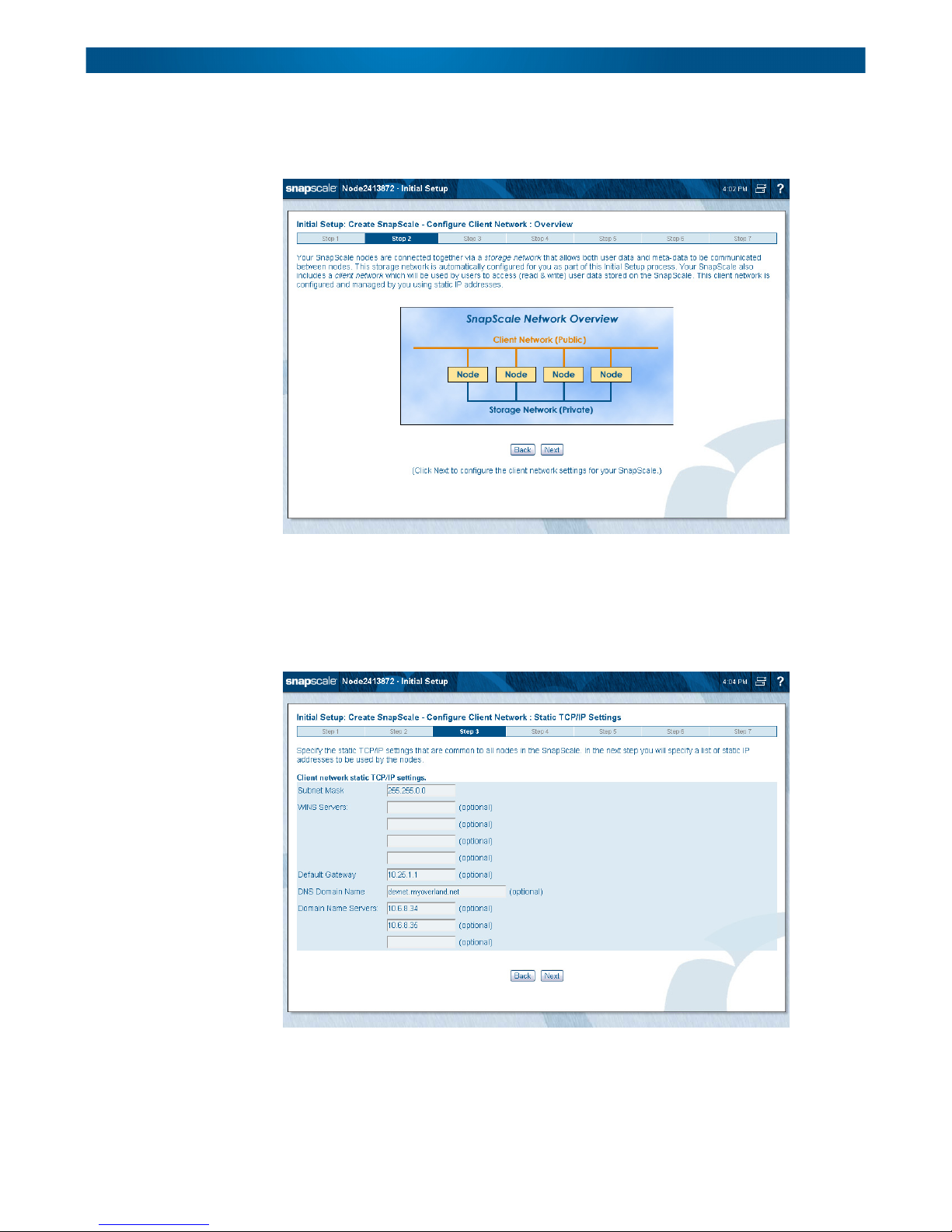

Step 2 – Client Network Configuration Overview

Review the information about setting up your Client network. Click Next to continue.

Step 3 – Choose Client Network Static TCP/IP Settings

Use this step to specify the static TCP/IP settings that will be common to all nodes in the

cluster. Then click Next to continue to the next page to set the actual node static IP

addresses.

10400455-001 Dec 2013 ©Overland Storage, Inc. 2-5

SnapScale/RAINcloudOS 4.0 Administrator’s Guide Create a New SnapScale Cluster (via Wizard)

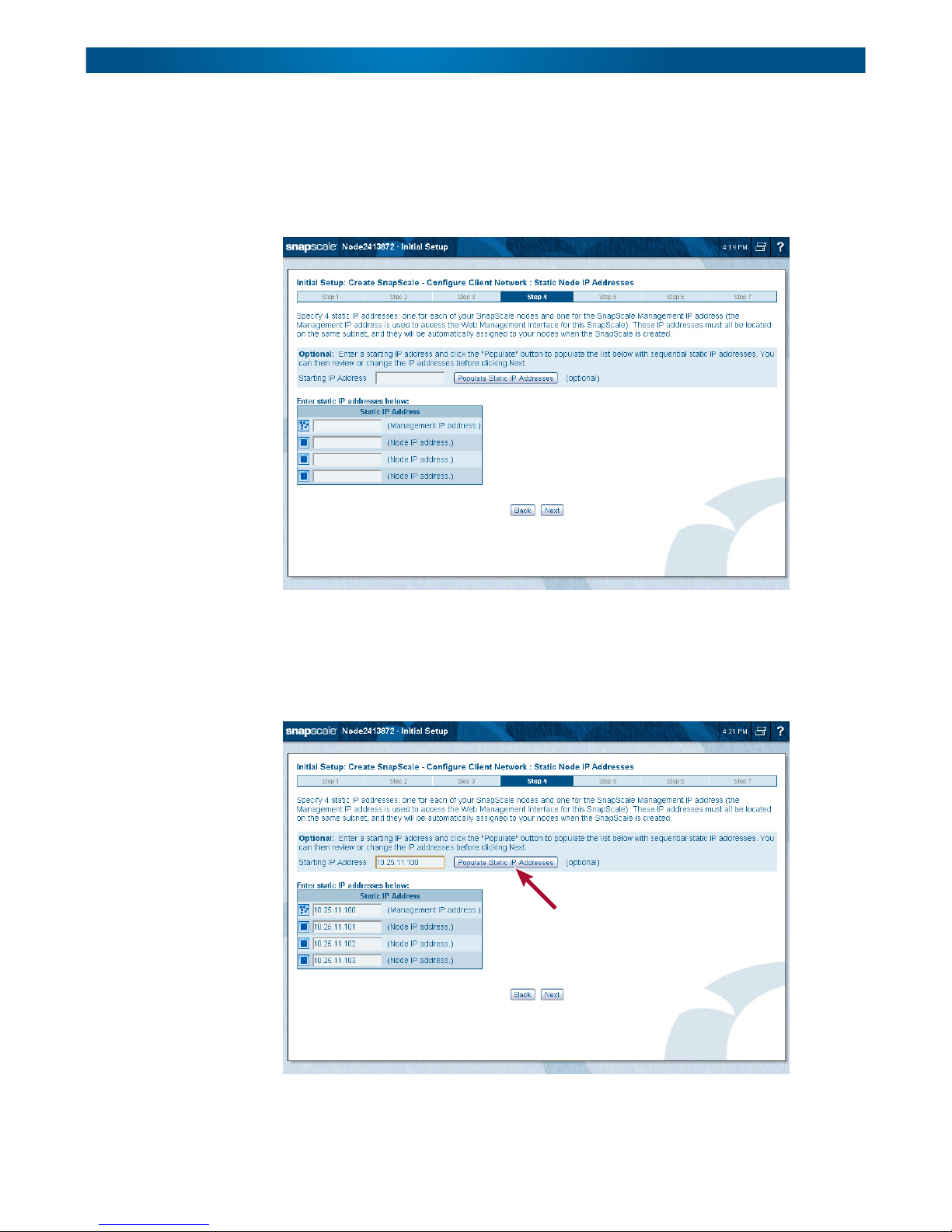

Step 4 – Configure Node Static IP Addresses

A SnapScale cluster requires a set of static IP addresses: one for each node, and one for the

Management IP. Use this page to specify the static IP addresses for each of your nodes and

for the SnapScale Management IP address used to access the Web Management Interface for

this cluster.

These IP addresses must all be located on the same subnet. They are automatically assigned

to your nodes when the SnapScale cluster is created.

The Populate Static IP Addresses button can be used to automatically enter a sequential list

of static IP addresses. Just enter an IP address on the subnet and click Populate Static IP

Addresses

. The fields below it are automatically populated.

Click Next to continue.

10400455-001 Dec 2013 ©Overland Storage, Inc. 2-6

SnapScale/RAINcloudOS 4.0 Administrator’s Guide Create a New SnapScale Cluster (via Wizard)

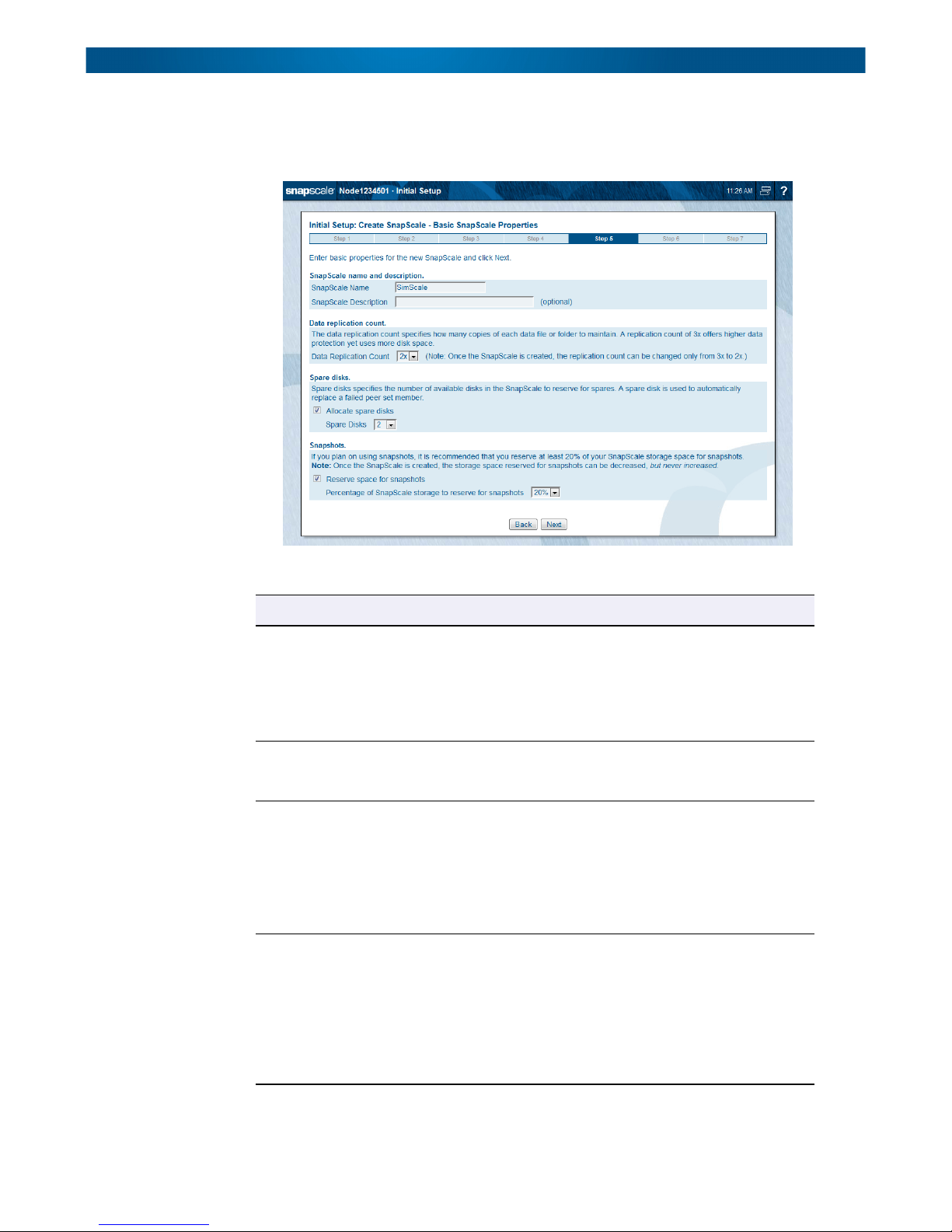

Step 5 – Basic SnapScale Properties

Use this step to enter the basic properties for your new SnapScale cluster, then click Next.

This table lists and describes the basic options:

Option Description

SnapScale Name Either accept the default name or enter an alphanumeric

name up to 15 characters in length. Network clients use this

name with round robin DNS name resolution to connect to

the cluster.

The default name is “Scalennnnnnn” (where nnnnnnn is the

appliance number of the node used to create the cluster).

SnapScale Description This optional field provides a place to define the cluster in the

overall scheme of your network and better identify the cluster

on a LAN.

Data Replication Count The data replication count establishes the level of data

redundancy in the cluster. The setting specifies how many

disks are in a peer set and as a result how many copies of

each data file or folder to maintain. A count of 3x offers

higher data protection but uses more disk space.

Once the cluster is created, the count can only be decreased

from 3x to 2x. It cannot be increased from 2x to 3x.

Spare Disks Allocation Check the box and select the number of spare disks you want

to reserve. A spare disk is used to automatically replace a

failed Peer Set member.

If there are unused drives remaining after allocating the

number of spares requested, they are used for other peer

sets. If there is an insufficient number of drives left to create

a final peer set, the drives are configured as additional

spares.

10400455-001 Dec 2013 ©Overland Storage, Inc. 2-7

SnapScale/RAINcloudOS 4.0 Administrator’s Guide Create a New SnapScale Cluster (via Wizard)

Option Description

Reserve Space for

Snapshots

IMPORTANT: If you uncheck the box for reserving space for snapshots, an alert is displayed to

remind you that the feature will be permanently disabled for the cluster.

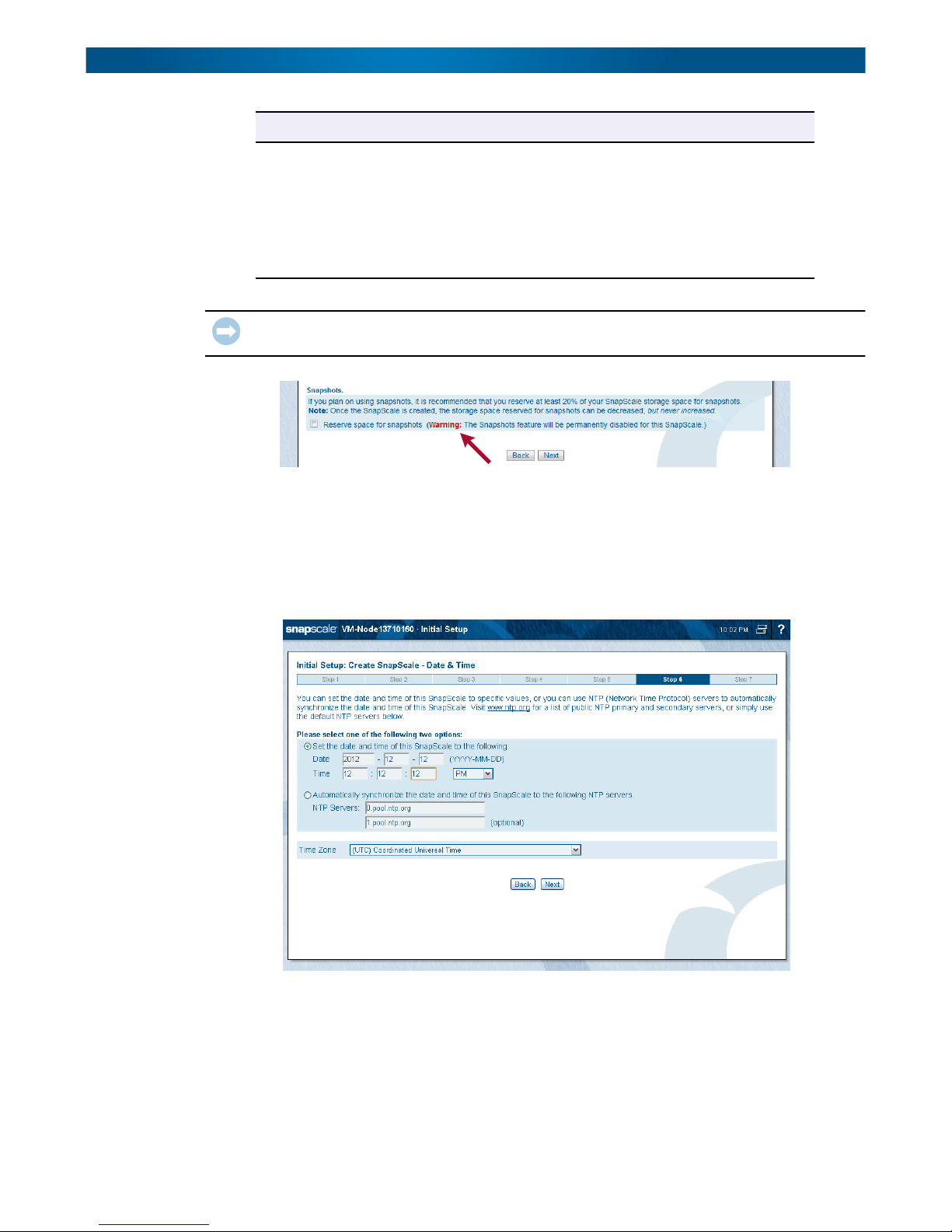

Step 6 – Set Date and Time

Nodes automatically synchronize time with one another. You can either manually set the

date and time to specific values, or you can use NTP (Network Time Protocol) servers to

automatically synchronize the date and time. Visit www.ntp.org for a list of public NTP

primary and secondary servers, or simply use the default NTP servers below.

Check the box and select the percentage of the storage

space you want to reserve for snapshots. It is recommended

that at least 20% of your SnapScale storage space be set

aside for snapshots.

NOTE: Once the SnapScale cluster is created, the storage

space reserved for snapshots can only be

decreased. It can never be increased.

If you intend to join the cluster to a Windows domain, configure the cluster using the manual

settings to set the date and time. Otherwise, configure the cluster to synchronize with up to

two NTP servers.

NOTE: NTP cannot be used if you are joining a Windows Active Directory domain.

Default NTP servers automatically populate the server fields. The Time Zone is set

automatically to UTC time but can be changed using the drop-down list.

10400455-001 Dec 2013 ©Overland Storage, Inc. 2-8

SnapScale/RAINcloudOS 4.0 Administrator’s Guide Create a New SnapScale Cluster (via Wizard)

Click Next to continue.

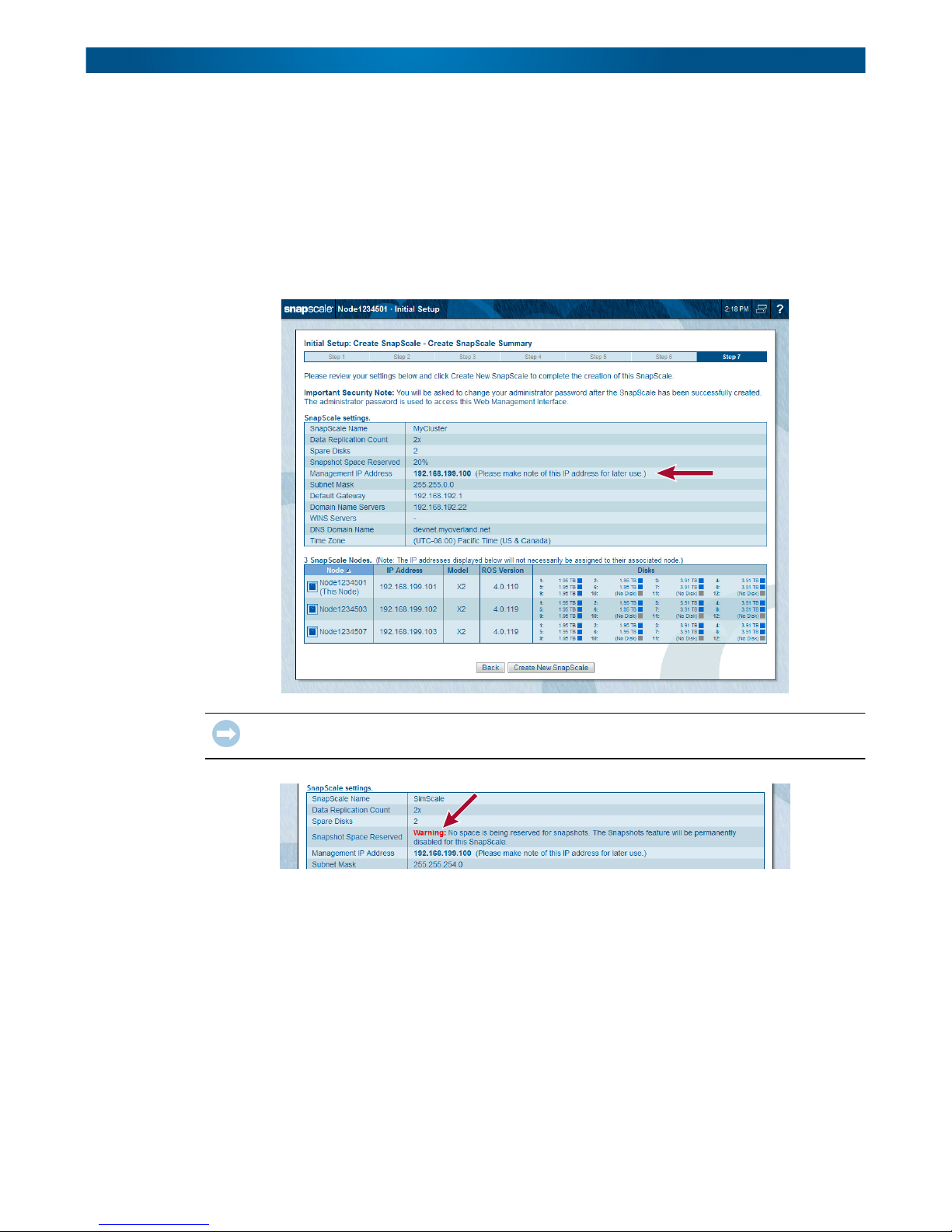

Step 7 – Summary Verification & Cluster Creation

At this step, review the current settings and go back if you need to make changes.

NOTE: Make note of the Management IP address for later use. Also, both the Client and Storage

network bond types can be changed after the cluster is created. See TCP/IP Networking in

Chapter 4.

IMPORTANT: If you uncheck the box for reserving space for snapshots, an alert is displayed to

remind you that the feature will be permanently disabled for the cluster.

10400455-001 Dec 2013 ©Overland Storage, Inc. 2-9

SnapScale/RAINcloudOS 4.0 Administrator’s Guide Create a New SnapScale Cluster (via Wizard)

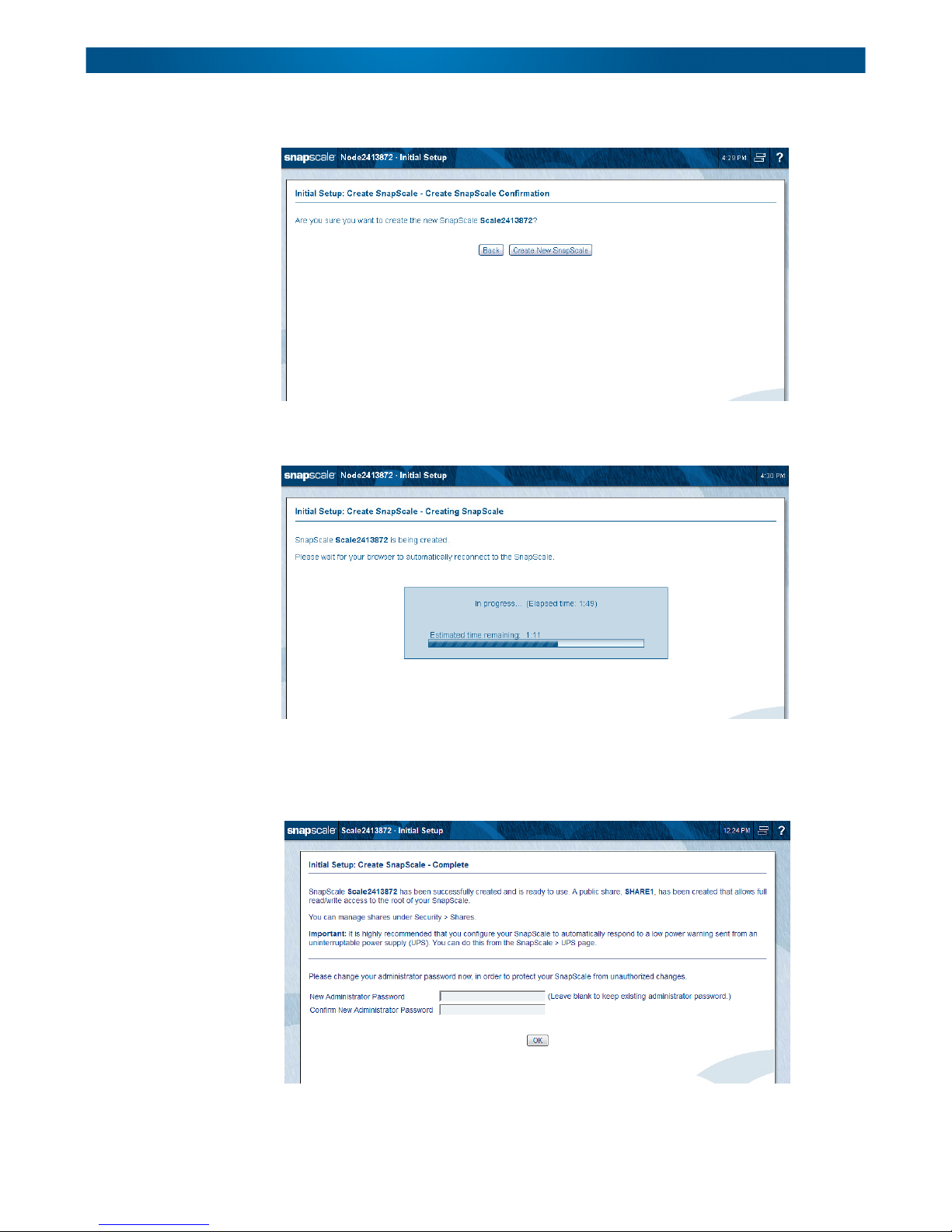

Click Create New SnapScale to complete the process. A confirmation page is shown.

Click Create New SnapScale again to create the cluster. A progress bar is displayed as the

SnapScale cluster is created.

Once the cluster is created and the system changes the uninitialized node IP addresses from

DHCP to the configured static IP address, a completion page is displayed stating that a

share was created and suggesting UPS units be enabled. To enhance security, you are asked

to change the default administrator password after the cluster has been successfully created:

It is highly recommended that you use the password fields at the bottom of the page to

change the Administrator’s password for the cluster.

10400455-001 Dec 2013 ©Overland Storage, Inc. 2-10

SnapScale/RAINcloudOS 4.0 Administrator’s Guide Create a New SnapScale Cluster (via Wizard)

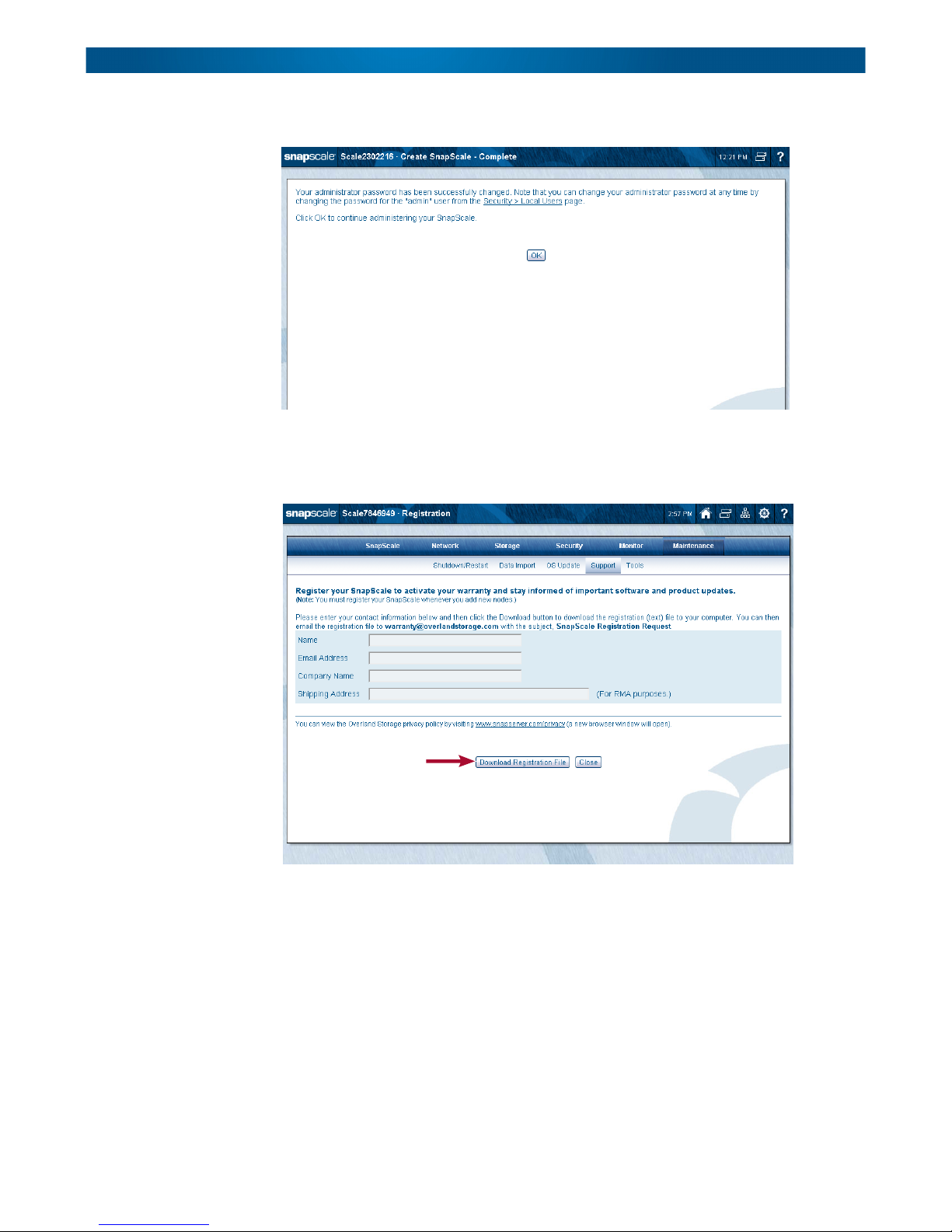

After changing the Administrator’s password and clicking OK, a success page is shown:

Click OK to continue. The Login page is shown. Log in using the new password.

After changing the password and logging back in, the Registration page is displayed to

facilitate activating your warranty:

Complete the registration fields and then click Download Registration File. Email that file

(SnapScaleRegistration.csv) to Overland Storage Service (warranty@overlandstorage.com)

using the subject line “SnapScale Registration Request” to initiate your warranty coverage.

(See To Register Your Cluster in Chapter 8.)

Click Close. You will receive a confirmation email to confirm and complete the registration.

10400455-001 Dec 2013 ©Overland Storage, Inc. 2-11

SnapScale/RAINcloudOS 4.0 Administrator’s Guide Join an Existing SnapScale Cluster (via Wizard)

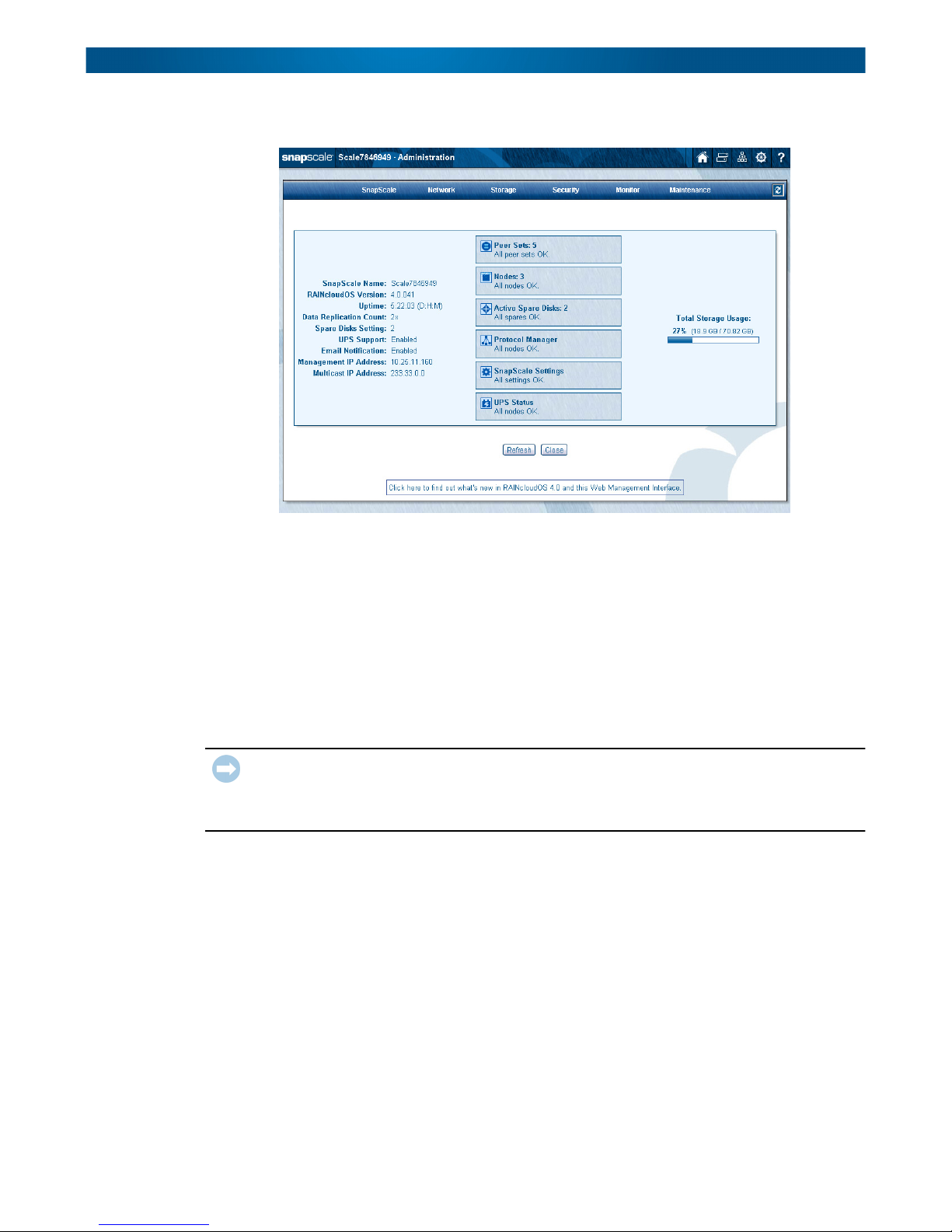

When you close that page, the Administration page is displayed:

It is recommended that you configure your DNS in your network so clients can resolve the

cluster using round-robin name resolution:

• Add a host record for the cluster management name (<clustername>–mgt) to resolve to

the Management IP address.

• Add multiple host records for the cluster name resolving to each of the node IP

addresses. The DNS resolves lookups for the cluster name via round robin.

Join an Existing SnapScale Cluster (via Wizard)

IMPORTANT: While the Initial Setup Wizard can be used to add one or more new nodes to an

existing cluster, it is recommended that you log into the existing cluster’s Web Management

Interface and add the nodes using the Add Nodes function (Storage > Nodes > Add Nodes).

Refer to Adding Nodes in Chapter 5 for more information.

At any time, one or more new nodes can be added to the cluster to expand the storage pool.

NOTE: To create new peer sets to expand cluster storage, it is recommended that the number of

new nodes you add is equal to the Data Replication Count being used (2x or 3x) and they all

be added at the same time.

When you log into any of the new, uninitialized nodes, the Initial Setup Wizard launches

displaying the Welcome page and its two options. To add this and other nodes to an existing

SnapScale cluster, click Join an Existing SnapScale.

10400455-001 Dec 2013 ©Overland Storage, Inc. 2-12

SnapScale/RAINcloudOS 4.0 Administrator’s Guide Web Management Interface

The Initial Setup Wizard then redirects you to the Add Nodes page in the Web Management

Interface where this node (and all other discovered/new nodes) can be easily added to the

cluster. (See Adding Nodes in Chapter 5 for more information.) You are then directed to select

the nodes to add, set the static IP addresses, and confirm the settings.

NOTE: If no existing SnapScale cluster is detected, a warning is displayed. Verify that the node is

on the same Storage network as the other nodes in the cluster, then click Re-Detect

SnapScale.

Web Management Interface

SnapScale nodes use a web-based graphical user interface (GUI), called the Web

Management Interface, to administer and monitor the cluster. It supports most common web

browsers. JavaScript must be enabled in the browser for it to work.

When connecting to the cluster with a web browser, the Web Home page (see Web Home in

Chapter 9) of the Web Management Interface is displayed. This page shows any shares at

the top, the three primary options below the shares list, and has special navigation buttons

displayed on the right side of the title bar (see the next table).

NOTE: If you have not gone through the initial setup or authentication is required, you may be

prompted to log in when you first access the Web Management Interface.

10400455-001 Dec 2013 ©Overland Storage, Inc. 2-13

SnapScale/RAINcloudOS 4.0 Administrator’s Guide Web Management Interface

The Web Home page displays the following icons and options:

Icons & Options Description

Change Password Click this icon to access the password change page. Passwords

are case sensitive. Use up to 15 alphanumeric characters.

Switch User Click this icon to log out and open the login dialog box to log in as

a different user.

Administration Click this icon to administer the node. If you are not yet logged in,

you are prompted to do so.

Navigation Buttons: The following navigation buttons are present in the upper right on

every Web Management Interface page:

Home – Click this icon to switch between the Web Home page

and the Admin Home page. If you have not yet logged in to the

Admin Home page, only the Web Home page is available.

Snap Finder – Click this icon to view a list of all SnapServers,

SnapScale clusters, and Uninitialized nodes on your network, and

to specify a list of remote servers that can access these servers,

clusters, and nodes on other subnets. You can access these

servers, clusters, and nodes by clicking the listed name or IP

address.

SnapExtensions – Click this to view the SnapExtensions page,

where you can acquire licenses for and configure third-party

applications.

Site Map – Click this icon to view a Site Map of the available

options in the Web Management Interface, where you can

navigate directly to all the major utility pages. The current page is

shown in orange text.

Help – Click this icon to access the web online help for the Web

Management Interface page you are viewing.

10400455-001 Dec 2013 ©Overland Storage, Inc. 2-14

SnapScale/RAINcloudOS 4.0 Administrator’s Guide Web Management Interface

Icons & Options Description

UI Appearance Click the Mgmt. Interface Settings link in the Site Map to

choose a background for the Web Management Interface. You

can select either a solid-colored background or a textured-graphic

background.

When logged in to the Administration page, details about the cluster’s health are shown:

The same icons are available at the top of the page plus a refresh icon ( ) for auto-refresh

pages located on the tab bar. For more information, see Web Home in Chapter 9.

Alert Messages

Alert messages are displayed on Administrator-level Web Management Interface pages that

display a menu. Some alerts (such as Spare Distributor and Data Balancer) have clickable

options:

• [Later] - Hides the alert for 24 hours or until after feature is run, whichever is first.

• [Hide] - Suppresses the alert. It will not be shown again until after the feature called

out in the alert is run and a new alert for that feature is generated.

10400455-001 Dec 2013 ©Overland Storage, Inc. 2-15

SnapScale/RAINcloudOS 4.0 Administrator’s Guide Web Management Interface

When a cluster is restarted, the Web Management Interface shows the status while the

cluster is booting. Because some components are not immediately available, an alert

message is displayed showing the percent done and as a reminder that the process is not

complete, some nodes may appear offline, and so forth. Some of the status boxes may show

warnings.

Site Map

The RAINcloudOS site map ( ) provides links to all the web pages that make up the Web

Management Interface. All the pages are each covered in detail in the following chapters.

To close the site map, click either Close or outside the map.

10400455-001 Dec 2013 ©Overland Storage, Inc. 2-16

SnapScale/RAINcloudOS 4.0 Administrator’s Guide Web Management Interface

Contact Information

From the Web Management Interface, click the SnapScale logo in the upper left corner of the

Web Management Interface to display the pertinent hardware, software, and contact

information:

Scroll down to view additional contact information. Click either Close or outside the box to

dismiss.

10400455-001 Dec 2013 ©Overland Storage, Inc. 2-17

Chapter 3

SnapScale Settings

This section covers the initial setup and configuration of a SnapScale cluster of three or more

nodes. The four basic options for cluster settings are found under the SnapScale tab. They

can also be accessed using the site map icon ( ).

Topics in SnapScale Settings:

• SnapScale Properties

• Date/Time

• SSH

• UPS

10400455-001 Dec 2013 ©Overland Storage, Inc. 3-1

SnapScale/RAINcloudOS 4.0 Administrator’s Guide SnapScale Properties

SnapScale Properties

These basic options are found under SnapScale Properties:

This table details the options on the

Option Description

SnapScale Name and

Description

Description This optional field provides a place to define the cluster in the

Data Replication Count The data replication count establishes the level of data

Spare Disks Check the box and select the number of spare disks you want

Storage Utilization Use the two drop-down lists to select the percentage of

Either accept the default cluster name or enter an

alphanumeric name up to 15 characters in length. Network

clients can use this name along with round robin DNS name

resolution to connect to the cluster.

The default name is “Scalennnnnnn” (where nnnnnnn is the

appliance number of the node used to create the cluster).

overall scheme of your network and better identify the cluster

on a LAN.