Page 1

Novell Business Continuity Clustering 1.1 for NetWare Administration Guide

Novell Business Continuity Clustering 1.1 for NetWare

ADMINISTRATION GUIDE

February 15, 2008

novdocx (en) 11 December 2007

www.novell.com

Page 2

Legal Notices

Novell, Inc., makes no representations or warranties with respect to the contents or use of this documentation, and

specifically disclaims any express or implied warranties of merchantability or fitness for any particular purpose.

Further, Novell, Inc., reserves the right to revise this publication and to make changes to its content, at any time,

without obligation to notify any person or entity of such revisions or changes.

Further, Novell, Inc., makes no representations or warranties with respect to any software, and specifically disclaims

any express or implied warranties of merchantability or fitness for any particular purpose. Further, Novell, Inc.,

reserves the right to make changes to any and all parts of Novell software, at any time, without any obligation to

notify any person or entity of such changes.

Any products or technical information provided under this Agreement may be subject to U.S. export controls and the

trade laws of other countries. You agree to comply with all export control regulations and to obtain any required

licenses or classification to export, re-export or import deliverables. You agree not to export or re-export to entities on

the current U.S. export exclusion lists or to any embargoed or terrorist countries as specified in the U.S. export laws.

You agree to not use deliverables for prohibited nuclear, missile, or chemical biological weaponry end uses. See the

Novell International Trade Services Web page (http://www.novell.com/info/exports/) for more information on

exporting Novell software. Novell assumes no responsibility for your failure to obtain any necessary export

approvals.


Copyright © 2007-2008 Novell, Inc. All rights reserved. No part of this publication may be reproduced, photocopied,

stored on a retrieval system, or transmitted without the express written consent of the publisher.

Novell, Inc., has intellectual property rights relating to technology embodied in the product that is described in this

document. In particular, and without limitation, these intellectual property rights may include one or more of the U.S.

patents listed on the Novell Legal Patents Web page (http://www.novell.com/company/legal/patents/) and one or

more additional patents or pending patent applications in the U.S. and in other countries.

Novell, Inc.

404 Wyman Street, Suite 500

Waltham, MA 02451

U.S.A.

www.novell.com

Online Documentation: To access the latest online documentation for this and other Novell products, see

the Novell Documentation Web page (http://www.novell.com/documentation).

Page 3

Novell Trademarks

For Novell Trademarks, see the Novell Trademark and Service Mark list (http://www.novell.com/company/legal/trademarks/tmlist.html).

Third-Party Materials

All third-party trademarks are the property of their respective owners.


Page 4


Page 5

Contents

About This Guide 9

1 Overview 11

1.1 Disaster Recovery Implications . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 11

1.2 Disaster Recovery Implementations . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 11

1.2.1 Stretch Clusters vs. Cluster of Clusters. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 12

1.2.2 Novell Business Continuity Clusters . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 15

1.2.3 Usage Scenarios . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 16

2 Installation and Setup 19

2.1 Requirements . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 19

2.1.1 NetWare 6.5 SP 5 or SP 6 (OES 1 SP2 or SP3 NetWare) . . . . . . . . . . . . . . . . . . . . 19

2.1.2 Novell eDirectory 8.8 . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 20

2.1.3 Novell Cluster Services 1.8.2 for NetWare . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 20

2.1.4 OpenSLP. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 20

2.1.5 OpenWBEM . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 21

2.1.6 BASH. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 21

2.1.7 LIBC . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 21

2.1.8 autoexec.ncf File . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 21

2.1.9 Shared Disk Systems . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 21

2.1.10 Link Speeds. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 21

2.2 Installing Identity Manager . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 21

2.3 Installing Novell Business Continuity Cluster Software . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 23

2.3.1 Business Continuity Cluster Licensing . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 23

2.3.2 Running the Business Continuity Cluster Installation Program . . . . . . . . . . . . . . . . . 23

2.3.3 Business Continuity Cluster Component Locations . . . . . . . . . . . . . . . . . . . . . . . . . 25

2.4 Configuring File System Mirroring . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 25

2.4.1 Configuring NSS Mirroring . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 26

2.4.2 Configuring SAN-Based Mirroring . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 29

2.4.3 LUN Masking. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 29

2.5 Setting Up Novell Business Continuity Cluster Software . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 29

2.5.1 Configuring Business-Continuity-Specific IDM Drivers . . . . . . . . . . . . . . . . . . . . . . . 29

2.5.2 Configuring Clusters for Business Continuity . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 35

2.5.3 Configuring Cluster Resources for Business Continuity . . . . . . . . . . . . . . . . . . . . . . 41

2.6 Managing a Novell Business Continuity Cluster . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 43

2.6.1 Migrating a Cluster Resource to Another Cluster . . . . . . . . . . . . . . . . . . . . . . . . . . . 44

2.6.2 Changing Cluster Peer Credentials. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 45

2.6.3 Viewing the Current Status of a Business Continuity Cluster . . . . . . . . . . . . . . . . . . 46

2.6.4 Generating a Cluster Report . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 46

2.6.5 Disabling Business Continuity Cluster Resources. . . . . . . . . . . . . . . . . . . . . . . . . . . 47

2.6.6 Business Continuity Cluster Console Commands . . . . . . . . . . . . . . . . . . . . . . . . . . . 47

2.7 Business Continuity Cluster Failure Types . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 49

2.7.1 SAN-based Mirroring Failure Types and Responses . . . . . . . . . . . . . . . . . . . . . . . . 50

2.7.2 Host-Based Mirroring Failure Types and Responses . . . . . . . . . . . . . . . . . . . . . . . . 51


3 Upgrading BCC 53

3.1 Upgrading BCC 1.0 to BCC 1.1 for NetWare. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 53

3.1.1 Upgrading NetWare. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 53


Page 6

3.1.2 Installing or Upgrading IDM . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 54

3.1.3 Installing BCC 1.1 . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 54

3.1.4 Resetting BCC Administrative User Credentials . . . . . . . . . . . . . . . . . . . . . . . . . . . . 54

3.1.5 Authorizing the BCC Administrative User . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 54

3.1.6 Verifying SAN Scripts. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 55

3.1.7 Deleting and Re-Creating BCC IDM Drivers . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 55

3.2 Upgrading BCC 1.0 to BCC 1.1 for Linux . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 55

4 Troubleshooting BCC 1.1 57

4.1 Cluster Connection States . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 57

4.2 Driver Ports. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 59

4.3 Excluded Users . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 59

4.4 Security Equivalent User . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 60

4.5 Certificates . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 61

4.6 Clusters Can’t Communicate . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 61

4.7 BCC Startup Flags . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 62

4.7.1 Using BCC Startup Flags . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 62

4.8 Problems With BCC Installation on NetWare . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 62

4.9 IDM Drivers for Cluster Synchronization Do Not Start . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 63

4.10 IDM Drivers Do Not Synchronize Objects from One Cluster to Another. . . . . . . . . . . . . . . . . . 63

4.11 Tracing IDM . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 64

4.12 Peer Cluster Communication Not Working . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 65

4.13 Administration of Peer Clusters Not Functional . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 65

4.14 Resource Does Not Migrate to Another Cluster . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 65

4.15 Resource Cannot Be Brought Online . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 65

4.16 Dumping Syslog on NetWare . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 66

4.17 Slow Failovers . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 66

4.18 Resource Script Search and Replace Functions Do Not Work . . . . . . . . . . . . . . . . . . . . . . . . 66

4.19 Virtual NCP Server IP Addresses Won’t Change . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 66

4.20 IP Address, Virtual Server DN, or Pool Name Does Not Appear on the iManager Cluster Configuration Page . . . . . . . . . . 67

4.21 Blank Error String iManager Error Appears While Bringing a Resource Online. . . . . . . . . . . . 68

4.22 Best Practices. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 68

4.23 Mapping Drives in Login Scripts . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 68

4.24 Mapping Drives to Home Directories Using the %HOME_DIRECTORY Variable . . . . . . . . . . 69

4.25 BCC Error Codes . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 70


5 Virtual IP Addresses 73

5.1 Virtual IP Address Definitions and Characteristics . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 73

5.1.1 Definitions . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 73

5.1.2 Characteristics . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 74

5.2 Virtual IP Address Benefits. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 74

5.2.1 High Availability . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 74

5.2.2 Unlimited Mobility . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 77

5.3 Other Added Features . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 77

5.3.1 Support for Host Mask . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 77

5.3.2 Source Address Selection for Outbound Connections . . . . . . . . . . . . . . . . . . . . . . . . 77

5.4 Reducing the Consumption of Additional IP Addresses . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 78

5.5 Configuring Virtual IP Addresses . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 79

5.5.1 Displaying Bound Virtual IP Addresses . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 80


Page 7

A Implementing a Multiple-Tree BCC 81

A.1 Copying User Objects Using IDM . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 81

A.2 Configuring User Object Synchronization . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 81

A.3 Creating SSL Certificates . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 82

A.4 Synchronizing IDM Drivers . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 83

A.5 Preventing IDM Synchronization Loops. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 83

A.6 Migrating Resources to Another Cluster . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 85

B Setting Up Auto-Failover 87

B.1 Enabling Auto-Failover . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 87

B.2 Creating an Auto-Failover Policy . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 88

B.3 Refining the Auto-Failover Policy. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 88

B.4 Adding or Editing Monitor Configurations . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 89

C Security Considerations 91

C.1 Security Features. . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 91

C.2 Security Configuration . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 91

C.2.1 BCC Configuration Settings . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 92

C.2.2 Security Information for Other Products . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 95

C.3 Other Security Considerations . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 96


D Documentation Updates 97

D.1 February 15, 2008 (Updates). . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 97

D.1.1 Installation and Setup . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 97

D.1.2 Troubleshooting BCC 1.1 . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 98


Page 8


Page 9

About This Guide


This guide describes how to install, configure, and manage Novell® Business Continuity Clustering for NetWare®. The guide is divided into the following sections:

Chapter 1, “Overview,” on page 11

Chapter 2, “Installation and Setup,” on page 19

Chapter 3, “Upgrading BCC,” on page 53

Chapter 4, “Troubleshooting BCC 1.1,” on page 57

Chapter 5, “Virtual IP Addresses,” on page 73

Appendix A, “Implementing a Multiple-Tree BCC,” on page 81

Appendix B, “Setting Up Auto-Failover,” on page 87

Appendix C, “Security Considerations,” on page 91

Audience

This guide is intended for anyone involved in installing, configuring, and managing Novell Cluster Services.

Feedback

We want to hear your comments and suggestions about this manual and the other documentation

included with this product. Please use the User Comments feature at the bottom of each page of the

online documentation, or go to www.novell.com/documentation/feedback.html and enter your

comments there.

Documentation Updates

The latest version of this Novell Business Continuity Clustering for NetWare Administration Guide is available on the BCC 1.1 Documentation Web site (http://www.novell.com/documentation/bcc11/index.html).

Documentation Conventions

In Novell documentation, a greater-than symbol (>) is used to separate actions within a step and

items in a cross-reference path.

A trademark symbol (®, TM, etc.) denotes a Novell trademark. An asterisk (*) denotes a third-party trademark.


Page 10


Page 11

1 Overview

As corporations become more international, fueled in part by the reach of the World Wide Web, the requirement for service availability has increased. Novell® Cluster Services™ offers corporations the ability to maintain 24x7x365 data and application services to their users while still being able to perform maintenance and upgrades on their systems.

In the past few years, natural disasters (ice storms, earthquakes, hurricanes, tornadoes, and fires) have caused unplanned outages of entire data centers. In addition, US federal agencies have realized the disastrous effects that terrorist attacks could have on the US economy when corporations lose their data and the ability to perform critical business practices. This has resulted in initial recommendations for corporations to build mirrored or replicated data centers that are geographically separated by 300 km or more (the minimum acceptable distance being 200 km).

Many companies have built and deployed geographically mirrored data centers. The problem is that setting up and maintaining the two or more centers is a very manual process that takes a great deal of planning and synchronizing. Even configuration changes have to be carefully planned and replicated. One mistake and the redundant site is no longer able to effectively take over in the event of a disaster.

1.1 Disaster Recovery Implications

The implications of disaster recovery are directly tied to your data. Is your data mission critical? In

many instances, critical systems and data drive the business. If these services stop, the business

stops. When calculating the cost of downtime, some things to consider are

File transfers and file storage

E-mail, calendaring and collaboration

Web hosting

Critical databases

Productivity

Reputation

Continuous availability of critical business systems is no longer a luxury; it is a competitive business requirement. The Gartner Group estimates that 40% of enterprises that experience a disaster will go out of business in five years, and that only 15% of enterprises have a full-fledged business continuity plan that goes beyond core technology and infrastructure.

1.2 Disaster Recovery Implementations

There are two main Novell Cluster Services implementations that you can use to achieve your

desired level of disaster recovery. These include a stretch cluster and a cluster of clusters. The

Novell Business Continuity Cluster product automates some of the configuration and processes used

in a cluster of clusters.

Section 1.2.1, “Stretch Clusters vs. Cluster of Clusters,” on page 12

Section 1.2.2, “Novell Business Continuity Clusters,” on page 15


Page 12

Section 1.2.3, “Usage Scenarios,” on page 16

1.2.1 Stretch Clusters vs. Cluster of Clusters

“Stretch Clusters” on page 12

“Cluster of Clusters” on page 12

“Implementation Comparison” on page 14

Stretch Clusters

A stretch cluster consists of one cluster in which the nodes in the cluster are located in

geographically separate areas. All nodes in the cluster must be in the same eDirectory™ tree. In this

architecture, the data is mirrored between two data centers that are geographically separated. All the

machines in both data centers are part of one cluster, so that if a disaster occurs in one data center,

the other automatically takes over.

Figure 1-1 Stretch Cluster

novdocx (en) 11 December 2007

Building A Building B

Ethernet Switch Ethernet Switch

Server 2

Fibre Channel

Switch

Fibre Channel

Disk Array

Site 1

Cluster of Clusters

Server 3Server 1 Server 4

8-node cluster stretched

between two sites

WAN

Cluster

Heartbeat

SAN

Disk blocks

Server 6 Server 7Server 5 Server 8

Fibre Channel

Switch

Fibre Channel

Disk Array

Site 2

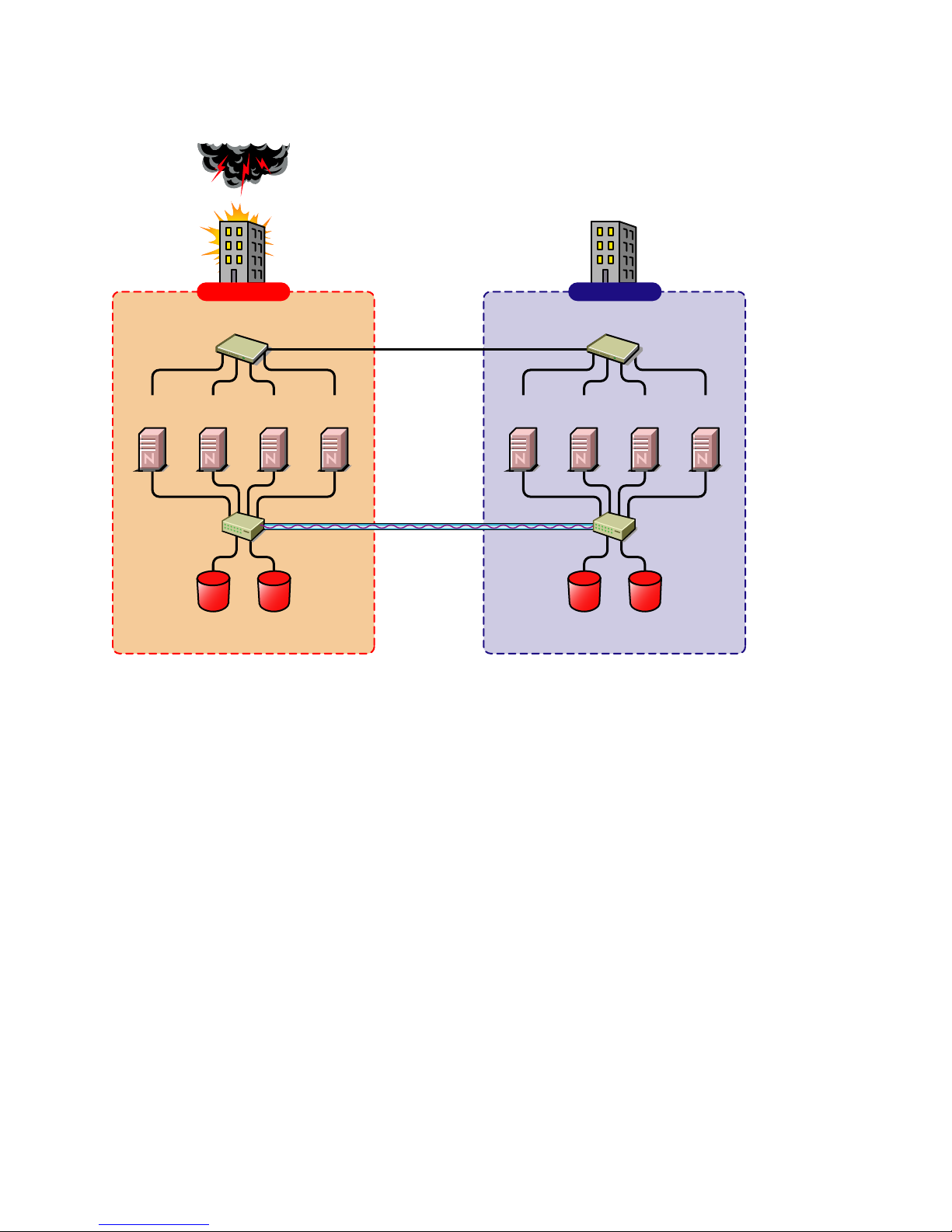

A cluster of clusters consists of two or more clusters in which each cluster is located in a

geographically separate area. A cluster of clusters provides the ability to fail over selected cluster

resources or all cluster resources from one cluster to another cluster. Typically, replication of data

blocks between SANs is performed by SAN hardware, but it can be done by host-based mirroring

for synchronous replication over short distances.


Page 13

Figure 1-2 Cluster of Clusters

[Figure: two independent clusters at geographically separate sites. Cluster Site 1 (Building A, Servers 1A through 4A) and Cluster Site 2 (Building B, Servers 1B through 4B) each have an Ethernet switch, a Fibre Channel switch, and Fibre Channel disk arrays. Labels on the links between the sites: WAN (eDirectory, DirXML) and SAN (disk blocks).]

Page 14

Implementation Comparison

Table 1-1 Disaster Recovery Implementation Comparison

Advantages

Stretch Cluster:

It automatically fails over.

It is easier to manage than separate clusters.

Cluster of Clusters:

The chance of LUNs at both locations becoming primary is minimized.

eDirectory partitions don't need to span the cluster.

Each cluster can be in a separate eDirectory tree.

IP addresses for each cluster can be on different IP subnets.

It accommodates more than two sites and cluster resources can fail over to separate clusters (multiple-site fan-out failover support).

SBD partitions are not mirrored between sites.

Disadvantages

Stretch Cluster:

Failure of site interconnect can result in LUNs becoming primary at both locations (split brain problem) if host-based mirroring is used.

An SBD partition must be mirrored between sites.

It accommodates only two sites.

All IP addresses must reside in the same subnet.

The eDirectory partition must span the cluster.

Cluster of Clusters:

Resource configurations must be kept in sync manually.

Page 15

Other Considerations

Stretch Cluster:

Host-based mirroring is required to mirror the SBD partition between sites.

Link variations can cause false failovers.

You could consider partitioning the eDirectory tree to place the cluster container in a partition separate from the rest of the tree.

The cluster heartbeat must be increased to accommodate link latency between sites. You can set this as high as 30 seconds, monitor cluster heartbeat statistics, and then tune down as needed.

Because all IP addresses in the cluster must be on the same subnet, you must ensure that your routers handle gratuitous ARP. Contact your router vendor or consult your router documentation for more information.

Cluster of Clusters:

Depending on the platform used, storage arrays must be controllable by scripts that run on NetWare® or Linux if the SANs are not SMI-S compliant.
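The comparison notes that a stretch cluster requires all IP addresses to reside in the same subnet, while a cluster of clusters does not. The following is a minimal planning sketch of that check using Python's standard ipaddress module. The function name, the node addresses, and the subnet are hypothetical values chosen for illustration; they are not part of any Novell tool.

```python
import ipaddress


def all_in_same_subnet(addresses, subnet):
    """Return True if every address falls inside the given subnet."""
    network = ipaddress.ip_network(subnet)
    return all(ipaddress.ip_address(addr) in network for addr in addresses)


# Hypothetical node addresses at two sites (illustrative values only).
site1_nodes = ["10.10.1.11", "10.10.1.12"]
site2_nodes = ["10.10.1.21", "10.10.2.22"]

# A stretch cluster needs every node in one subnet; 10.10.2.22 is outside
# 10.10.1.0/24, so this plan would not qualify as a stretch cluster.
stretch_ok = all_in_same_subnet(site1_nodes + site2_nodes, "10.10.1.0/24")
print("Stretch cluster subnet requirement met:", stretch_ok)
```

A plan that fails this check could still be deployed as a cluster of clusters, because each cluster can then use its own IP subnet.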

1.2.2 Novell Business Continuity Clusters

Novell Business Continuity Clusters is a cluster of clusters similar to what is described above,

except that the cluster configuration, maintenance, and synchronization have been automated by

adding specialized software.

Novell Business Continuity Clustering software is an integrated set of tools to automate the setup

and maintenance of a Business Continuity infrastructure. Unlike competitive solutions that attempt

to build stretch clusters, Novell Business Continuity Clustering utilizes a cluster of clusters. Each

site has its own independent clusters, and the clusters in each of the geographically separate sites are

each treated as "nodes" in a larger cluster, allowing a whole site to do fan-out failover to other

multiple sites. Although this can currently be done manually with a cluster of clusters, Novell

Business Continuity Clustering automates the system using eDirectory and policy-based

management of the resources and storage systems.

Novell Business Continuity Clustering software provides the following advantages:

Integrates with SAN hardware devices to automate the failover process using standards-based mechanisms such as SMI-S.

Utilizes Novell Identity Manager technology to automatically synchronize and transfer cluster-

related eDirectory objects from one cluster to another.

Provides the capability to fail over as few as one cluster resource, or as many as all cluster

resources.


Page 16

Includes intelligent failover that lets you do site failover testing as a standard practice.

Provides scripting capability for enhanced control and customization.

Provides simplified business continuity cluster configuration and management using the

browser-based iManager management tool.

Runs on Linux and NetWare.
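The idea of treating each cluster as a "node" in a larger cluster, with fan-out failover of one, some, or all resources, can be pictured with a small data model. The sketch below is purely illustrative: the Cluster class, the fan_out_failover function, and the site and resource names are hypothetical and do not correspond to any Business Continuity Clustering API or console command.

```python
from dataclasses import dataclass, field


@dataclass
class Cluster:
    """A whole cluster, treated as one 'node' of the business continuity cluster."""
    name: str
    resources: list = field(default_factory=list)


def fan_out_failover(failed, assignments, clusters, selected=None):
    """Move resources from the failed cluster to their assigned peer clusters.

    assignments maps a resource name to the name of its target peer cluster.
    selected optionally limits the failover to a subset of resources.
    """
    moved = {}
    for resource in list(failed.resources):
        if selected is not None and resource not in selected:
            continue
        target = clusters[assignments[resource]]
        failed.resources.remove(resource)
        target.resources.append(resource)
        moved[resource] = target.name
    return moved


# Hypothetical three-site layout (names are illustrative only).
clusters = {c.name: c for c in (
    Cluster("site1", ["mail", "web", "db"]),
    Cluster("site2"),
    Cluster("site3"),
)}
plan = {"mail": "site2", "web": "site3", "db": "site2"}

moved = fan_out_failover(clusters["site1"], plan, clusters)
print(moved)
```

Passing a set such as selected={"web"} would model failing over a single resource instead of the whole site.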

1.2.3 Usage Scenarios

There are several Business Continuity Clustering usage scenarios that can be used to achieve the

desired level of disaster recovery. Three possible scenarios include:

A Two-Site Business Continuity Cluster Solution

A Multiple-Site Business Continuity Cluster Solution

A Low-Cost Business Continuity Cluster Solution

Two-Site Business Continuity Cluster Solution

The two-site solution can be used in one of two ways:


A primary site in which all services are normally active, and a secondary site which is

effectively idle, with the data mirrored at it and the applications and services ready to load if

needed.

Two active sites each supporting different applications and services. Either site can take over

for the other site at any time.

The first option is typically used when the purpose of the secondary site is primarily testing by the

IT department. The second option is typically used in a company that has more than one large site of

operations.


Page 17

Figure 1-3 Two-Site Business Continuity Cluster

[Figure: two independent clusters at geographically separate sites. Cluster Site 1 (Building A, Servers 1A through 4A) and Cluster Site 2 (Building B, Servers 1B through 4B) each have an Ethernet switch, a Fibre Channel switch, and Fibre Channel disk arrays. Labels on the links between the sites: WAN (eDirectory, DirXML) and SAN (disk blocks).]

Multiple-Site Business Continuity Cluster Solution

This is a large Business Continuity Cluster solution capable of supporting up to 32 nodes per site

and more than two sites. Services and applications can do fan-out failover between sites. Replication

of data blocks is typically done by SAN vendors, but can be done by host-based mirroring for

synchronous replication over short distances. The illustration below depicts a four-site business

continuity cluster.


Page 18

Figure 1-4 Multiple-Site Business Continuity Cluster

[Figure: four independent clusters in geographically separate sites. Cluster Site 1 (Building A, Servers 1A through 4A) and Cluster Sites 2, 3, and 4 (Buildings B, C, and D, Servers 1B through 4B, 1C through 4C, and 1D through 4D) each have an Ethernet switch, a Fibre Channel switch, and Fibre Channel disk arrays. Labels on the links between the sites: WAN (eDirectory, DirXML) and SAN (disk blocks).]

Using the Novell Portal Services, iChain®, and ZENworks® products, all services, applications, and data can be rendered through the Internet, allowing for loss of service at one site but still providing full access to the services and data by virtue of the ubiquity of the Internet. Data and services continue to be available from the other mirrored sites. Moving applications and services to the Internet frees corporations from the restrictions of traditional LAN-based applications. Traditional LAN applications require a LAN infrastructure that must be replicated at each site, and might require relocation of employees to allow the business to continue. Internet-based applications allow employees to work from any place that offers an Internet connection, including homes and hotels.

Low-Cost Business Continuity Cluster Solution

The low-cost business continuity cluster solution is similar to the previous two solutions, but

replaces Fibre Channel arrays with iSCSI arrays. Data block mirroring can be accomplished either

with iSCSI-based block replication, or host-based mirroring. In either case, snapshot technology can

allow for asynchronous replication over long distances. However, the lower cost solution does not

necessarily have the performance associated with higher-end Fibre Channel storage arrays.


Page 19

2 Installation and Setup

This section covers the following information to help you install, set up, and configure Novell®

Business Continuity Clustering for your specific needs:

Section 2.1, “Requirements,” on page 19

Section 2.2, “Installing Identity Manager,” on page 21

Section 2.3, “Installing Novell Business Continuity Cluster Software,” on page 23

Section 2.4, “Configuring File System Mirroring,” on page 25

Section 2.5, “Setting Up Novell Business Continuity Cluster Software,” on page 29

Section 2.6, “Managing a Novell Business Continuity Cluster,” on page 43

Section 2.7, “Business Continuity Cluster Failure Types,” on page 49

2.1 Requirements


The requirements in this section must be met prior to installing Novell Business Continuity Cluster

software.

Section 2.1.1, “NetWare 6.5 SP 5 or SP 6 (OES 1 SP2 or SP3 NetWare),” on page 19

Section 2.1.2, “Novell eDirectory 8.8,” on page 20

Section 2.1.3, “Novell Cluster Services 1.8.2 for NetWare,” on page 20

Section 2.1.4, “OpenSLP,” on page 20

Section 2.1.5, “OpenWBEM,” on page 21

Section 2.1.6, “BASH,” on page 21

Section 2.1.7, “LIBC,” on page 21

Section 2.1.8, “autoexec.ncf File,” on page 21

Section 2.1.9, “Shared Disk Systems,” on page 21

Section 2.1.10, “Link Speeds,” on page 21

2.1.1 NetWare 6.5 SP 5 or SP 6 (OES 1 SP2 or SP3 NetWare)

NetWare® 6.5 Support Pack 5 or 6 (the same as OES 1 Support Pack 2 or 3 NetWare) must be installed and running on all servers that will be part of a business continuity cluster.

Business Continuity Clustering requires the NetWare 6.5 post-Support Pack 5 Update. See TID # 2974185 (http://support.novell.com/cgi-bin/search/searchtid.cgi?/2974185.htm). If you have installed NetWare 6.5 Support Pack 6, the Support Pack 5 Update is not required.

See the OES NetWare Installation Guide for information on installing and configuring NetWare 6.5.


2.1.2 Novell eDirectory 8.8

eDirectory 8.8 is supported with Business Continuity Clustering 1.1 Support Pack 1. See the

eDirectory 8.8 documentation (http://www.novell.com/documentation/edir88/index.html) for more

information.

2.1.3 Novell Cluster Services 1.8.2 for NetWare

You need two to four clusters, each with Novell Cluster Services™ 1.8.2 (the version that ships with NetWare 6.5 Support Pack 5) or later installed and running on every node in the cluster.

Each cluster must have a unique name, even if the clusters reside in different Novell eDirectory™

trees, and clusters must not have the same name as any of the eDirectory trees in the business

continuity cluster.
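The naming rules above lend themselves to a quick check before installation. The following Python sketch is illustrative only: the function name, the case-insensitive comparison, and the sample names are assumptions and are not part of the product.

```python
def check_cluster_names(cluster_names, tree_names):
    """Return a list of problems found in a proposed set of cluster names."""
    problems = []
    # The guide calls for two to four clusters in a business continuity cluster.
    if not 2 <= len(cluster_names) <= 4:
        problems.append("a business continuity cluster needs two to four clusters")
    # Assumption: names are compared without regard to case.
    trees = {name.lower() for name in tree_names}
    seen = set()
    for name in cluster_names:
        key = name.lower()
        if key in seen:
            problems.append(f"duplicate cluster name: {name}")
        seen.add(key)
        if key in trees:
            problems.append(f"cluster name matches an eDirectory tree name: {name}")
    return problems


# Hypothetical names, for illustration only.
print(check_cluster_names(["cluster1", "cluster2"], ["EXAMPLE_TREE"]))
```

An empty list means the proposed names satisfy both rules.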

See the OES Novell Cluster Services 1.8.2 Administration Guide for NetWare for information on

installing and configuring Novell Cluster Services.

The hardware requirements for Novell Business Continuity Cluster software are the same as for

Novell Cluster Services. For more information, see “Hardware Requirements” and “Shared

Disk System Requirements” in the OES Novell Cluster Services 1.8.2 Administration Guide for

NetWare.

Some SAN vendors require you to purchase or license their CLI (Command Line Interface)

separately. The CLI for the SAN might not initially be included with your hardware.


Also, some SAN hardware may not be SMI-S compliant and can’t be managed using SMI-S

commands.


You must have the nwcs18pt3 or later cluster patch. See TID # 2974985 (http://support.novell.com/

cgi-bin/search/searchtid.cgi?/2974985.htm). This patch can only be applied to NetWare 6.5 Support

Pack 5. If you have upgraded to NetWare 6.5 Support Pack 6, this patch is not required.
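The Support Pack dependencies described in Section 2.1.1 and in this section can be summarized in a small lookup. This is a sketch under stated assumptions: the function name is hypothetical, and it covers only the two updates whose requirement depends on the Support Pack level (the OpenWBEM and LIBC patches are listed separately in this chapter).

```python
def support_pack_dependent_updates(support_pack):
    """List the updates this guide requires for a given NetWare 6.5 Support Pack."""
    if support_pack == 5:
        return [
            "NetWare 6.5 post-Support Pack 5 Update (TID 2974185)",
            "nwcs18pt3 or later cluster patch (TID 2974985)",
        ]
    if support_pack == 6:
        # Per the guide, neither update is required on Support Pack 6.
        return []
    raise ValueError("this guide covers NetWare 6.5 Support Pack 5 or 6 only")


for update in support_pack_dependent_updates(5):
    print(update)
```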

The recommended configuration of a business continuity cluster is to have each Novell Cluster

Services cluster be in the same eDirectory tree. You can have a business continuity cluster with

clusters in separate eDirectory trees. See Appendix A, “Implementing a Multiple-Tree BCC,” on

page 81 for more information.

In order to add or change cluster peer credentials, you must access iManager on a server that is in the

same eDirectory tree as the cluster you are adding or changing peer credentials for.

2.1.4 OpenSLP

You must have SLP (Service Location Protocol) set up and configured properly. See Configuring OpenSLP for eDirectory (http://www.novell.com/documentation/edir873/edir873/data/aksbdp5.html#aksbdp5) in the Novell eDirectory 8.7.3 Administration Guide.


2.1.5 OpenWBEM

OpenWBEM must be running and configured to start in autoexec.ncf. See the OpenWBEM Services Administration Guide for OES (http://www.novell.com/documentation/oes/cimom/data/front.html#front).

For the required OpenWBEM patch, see TID # 5004180 (http://www.novell.com/support/

search.do?cmd=displayKC&docType=kc&externalId=InfoDocument-patchbuilderreadme5004180&sliceId=&dialogID=34683729&stateId=0%200%2034689728).

2.1.6 BASH

BASH must be installed on all nodes that participate in the BCC. The BASH shell does not need to

be running, only installed.

2.1.7 LIBC

You must have the latest LIBC patch installed. This is currently libcsp6X. See TID # 5003460 (http://www.novell.com/support/search.do?cmd=displayKC&docType=kc&externalId=InfoDocumentpatchbuilder-readme5003460&sliceId=&dialogID=36100275&stateId=0%200%2036102542).


2.1.8 autoexec.ncf File

The sys:\system\autoexec.ncf file must be modified so that the call to sys:/bin/unixenv.ncf is before the calls to openwbem.ncf and ldbcc.ncf.
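The ordering requirement can be verified against a copy of the file. The following Python sketch is one possible check, not a Novell utility: the function name is hypothetical, the treatment of lines beginning with "#", ";", or "rem" as comments is an assumption, and the sample fragment is illustrative rather than taken from a real server.

```python
REQUIRED_FIRST = "unixenv.ncf"
MUST_FOLLOW = ("openwbem.ncf", "ldbcc.ncf")


def unixenv_called_first(autoexec_text):
    """Return True if unixenv.ncf is called before openwbem.ncf and ldbcc.ncf."""
    first_seen = {}
    for index, raw_line in enumerate(autoexec_text.splitlines()):
        line = raw_line.strip().lower()
        # Assumption: lines starting with "#", ";", or "rem" are comments.
        if not line or line.startswith(("#", ";", "rem ")):
            continue
        for name in (REQUIRED_FIRST,) + MUST_FOLLOW:
            if name in line:
                first_seen.setdefault(name, index)
    if REQUIRED_FIRST not in first_seen:
        return False
    return all(
        first_seen[REQUIRED_FIRST] < first_seen[name]
        for name in MUST_FOLLOW
        if name in first_seen
    )


# Illustrative fragment; the lines in a real autoexec.ncf may differ.
sample = "sys:/bin/unixenv.ncf\nopenwbem.ncf\nldbcc.ncf\n"
print(unixenv_called_first(sample))
```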

2.1.9 Shared Disk Systems

For Business Continuity Clustering 1.1 on NetWare, a shared disk system (Storage Area Network or

SAN) is required for each cluster in the business continuity cluster. See “Shared Disk System

Requirements” in the OES Novell Cluster Services 1.8.2 Administration Guide for NetWare.

2.1.10 Link Speeds

For real-time mirroring, link speeds should be 1 GB or better, the fibre-channel cable length between

sites should be less than 200 kilometers, and the links should be dedicated.

Many factors should be considered for distances greater than 200 kilometers, some of which

include:

The amount of data being transferred

The bandwidth of the link

Whether or not snapshot technology is being used
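To see how distance and bandwidth interact, a rough estimate can help. The sketch below is a back-of-the-envelope calculation, not a sizing tool: it assumes light travels through optical fiber at roughly 200,000 kilometers per second, ignores switch and protocol overhead, and uses hypothetical function names and sample values.

```python
FIBER_KM_PER_SECOND = 200_000.0  # assumption: roughly two-thirds of the speed of light


def round_trip_ms(distance_km):
    """Propagation delay, in milliseconds, for one round trip over the link."""
    return 2 * distance_km / FIBER_KM_PER_SECOND * 1000


def transfer_seconds(data_gigabytes, link_gigabits_per_second):
    """Time to move a given amount of data, ignoring protocol overhead."""
    return data_gigabytes * 8 / link_gigabits_per_second


# Hypothetical values: a 200 km link and 100 gigabytes of changed data.
print(f"round trip: {round_trip_ms(200):.1f} ms")
print(f"transfer:   {transfer_seconds(100, 1):.0f} s")
```

Because a synchronous write is not complete until the remote site acknowledges it, each write carries at least one round trip of added delay, which is one reason distance matters for real-time mirroring.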

2.2 Installing Identity Manager

DirXML® is now called Identity Manager (IDM). IDM 2.0.2 is part of the IDM 2 Bundle Edition

(formerly referred to as a starter pack) that is included with OES NetWare and OES Linux Support

Pack 2.


The same installation program used to install the Identity Manager engine is also used to install the

Identity Manager drivers and management utilities. See Installing Identity Manager on NetWare

(http://www.novell.com/documentation/dirxml20/admin/data/abaa2oj.html) in the Novell Nsure

Identity Manager 2.0.1 Administration Guide.

NOTE: The IDM 2.0.1 documentation also applies to IDM 2.0.2.

The Identity Manager (IDM) 2.0.2 engine and eDirectory driver (or a later version of IDM 2.x) must be installed on one node in each cluster. The node where the Identity Manager engine and eDirectory driver are installed must have an eDirectory replica with at least read/write access to all eDirectory objects that will be synchronized between clusters (see the list in the Important note below). This requirement does not extend to every eDirectory object in the tree.

The IDM 2.0.2 management utilities must also be installed, and can be installed on a cluster node,

but installing them on a non-cluster node is the recommended configuration. The management

utilities should be installed on the same server as iManager. See “Business Continuity Cluster

Component Locations” on page 25 for specific information on where to install IDM components.

NOTE: Filtered eDirectory replicas are not supported with this version of Business Continuity

Cluster software. Full replicas are required.


IMPORTANT: The eDirectory replica must have at least read/write access to the following

containers:

The Identity Manager driver set container.

The container where the Cluster object resides.

The container where the Server objects reside.

If server objects reside in multiple containers, this must be a container high enough in the tree

to be above all containers that contain server objects.

Best practice is to have all server objects in one container.

The container where the cluster pool and volume objects will be placed when they are

synchronized to this cluster. This container is referred to as the landing zone. The NCP server

objects for the virtual server of a BCC enabled resource will also be placed in the landing zone.

If the eDirectory replica does not have read/write access to the containers listed above,

synchronization will not work properly.

Installing Identity Manager 3 Bundle Edition

IDM 3.0.1 is supported with BCC 1.1 Support Pack 1 and is included with the IDM 3.0.1 Bundle

Edition. Instructions for installing and configuring the IDM 3.0.1 Bundle Edition can be found with

the IDM 3.0.1 Bundle Edition Documentation (http://www.novell.com/documentation/oes/implgde/

index.html?page=/documentation/oes/implgde/data/b4dgr2g.html#b4dgr2k).


2.3 Installing Novell Business Continuity Cluster Software

It is necessary to run the Novell Business Continuity Clustering installation program when you want

to:

Install the Business Continuity Cluster engine software on cluster nodes for the clusters that

will be part of a business continuity cluster.

Install the iManager snap-ins for Business Continuity on either a NetWare 6.5 server or a

Windows server.

When you run the installation program to install the Business Continuity Cluster engine software,

the eDirectory schema is automatically extended in the eDirectory tree where the engine software is

installed.

The Business Continuity Cluster installation program installs to only one cluster at a time. You must run the installation program again for each NetWare cluster.

The Business Continuity Cluster installation program is run from a Windows workstation. Prior to

running the installation program, the Windows workstation must have the latest Novell Client™

software installed and you must be authenticated to the eDirectory tree where the cluster resides.


Information for installing BCC 1.1 is contained in the following sections:

Section 2.3.1, “Business Continuity Cluster Licensing,” on page 23

Section 2.3.2, “Running the Business Continuity Cluster Installation Program,” on page 23

Section 2.3.3, “Business Continuity Cluster Component Locations,” on page 25

2.3.1 Business Continuity Cluster Licensing

Novell Business Continuity Cluster software requires a paper license agreement for each business

continuity cluster.

2.3.2 Running the Business Continuity Cluster Installation Program

To install Novell Business Continuity Clustering, download and copy the software to a directory on

your Windows workstation, then complete the following steps:

1 From the directory on your Windows workstation where you just copied the Business

Continuity Clustering software, run install.exe.

2 Continue through the installation wizard until you get to the page that prompts you to select the

components to install.

3 Select at least one of the Identity Manager templates for iManager installation options and the

Novell Business Continuity Cluster component, then click Next.

Selecting Identity Manager Templates for NetWare iManager Servers installs the templates on

a NetWare server. The templates add functionality to iManager so you can manage your

Business Continuity Cluster. You will be asked to specify the NetWare server where the

templates will be installed later in the installation.


Selecting the Identity Manager Templates for Windows iManager Servers installs the templates

on the local Windows server. You must have iManager installed on the Windows server before

installing the templates. The templates add functionality to iManager so you can manage your

business continuity cluster. You will be asked to specify the path to Tomcat (a default path is

provided) on the Windows server later in the installation.

The Business Continuity Cluster component contains the core software engine files that make

up the Business Continuity Cluster product. The Business Continuity Cluster software must be

installed on all nodes in each cluster that will be part of a Business Continuity Cluster.

4 Do one of the following:

If you chose to install the IDM iManager templates on a NetWare server, specify the name

of the eDirectory tree and the fully distinguished name for the server where you want to

install the templates. Then click Next.

If you don’t know the fully distinguished name for the server, you can browse and select

it.

If you chose to install the IDM iManager templates on a Windows server, specify the path

to Tomcat (a default path is provided) on the server. Then click Next.

You must have iManager installed on the Windows server before installing the templates.

5 Continue through the Upgrade Reminder screen and then specify the name of the eDirectory

tree and the fully distinguished name for the cluster where you want to install the core software

files.

If you don’t know the fully distinguished name for the cluster, you can browse and select it.


6 Select the servers in the cluster where you want to install the core software files for the

Business Continuity Cluster product.

All servers currently in the cluster you specified are listed and are selected by default.

You can choose to automatically start Business Continuity Cluster software on each selected

node after the installation is complete. If Business Continuity Cluster software is not started

automatically after the installation, you can start it manually later by rebooting the cluster

server or by entering LDBCC at the server console.

7 Enter the name and password of an eDirectory user (or browse and select one) with sufficient

rights to manage your BCC. This name should be entered in eDirectory dot format. For

example, admin.servers.novell.

This user should have at least Read and Write rights to the All Attribute Rights property on the

Cluster object of the remote cluster.

8 Continue through the final installation screen and then restart the cluster nodes where IDM is

running and where you have upgraded libc.nlm.

Restarting the cluster nodes can be performed in a rolling fashion in which one server is

restarted while the other servers in the cluster continue running. Then another server is

restarted, and then another, until all servers in the cluster have been restarted.

This lets you keep your cluster up and running and lets your users continue to access the

network while cluster nodes are being restarted.

9 Repeat the above procedure for each cluster that will be part of the business continuity cluster.
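The rolling restart described in Step 8 amounts to a simple loop: restart one node, wait for it to rejoin the cluster, then move to the next. The Python sketch below illustrates only that ordering. The two callbacks and the server names are hypothetical stand-ins; nothing here calls a real NetWare or Novell Cluster Services interface.

```python
def rolling_restart(nodes, restart_node, wait_until_rejoined):
    """Restart nodes one at a time, waiting for each to rejoin the cluster."""
    for node in nodes:
        restart_node(node)         # hypothetical callback that restarts one server
        wait_until_rejoined(node)  # hypothetical callback that blocks until it is back


# Stand-in callbacks that only record what would happen.
log = []
rolling_restart(
    ["server1A", "server2A", "server3A"],
    restart_node=lambda node: log.append(f"restart {node}"),
    wait_until_rejoined=lambda node: log.append(f"rejoined {node}"),
)
print(log)
```

Waiting for each node to rejoin before restarting the next is what keeps the remaining servers available to users throughout the procedure.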


2.3.3 Business Continuity Cluster Component Locations

The following figure illustrates where the various components needed for a business continuity

cluster are installed.

Figure 2-1 Business Continuity Cluster Component Locations

[Figure 2-1: Cluster Site 1 (Building A) and Cluster Site 2 (Building B), linked by a WAN (eDirectory and IDM synchronization) and by the SAN (disk blocks). Each site has an Ethernet switch, a Fibre Channel switch, and Fibre Channel disk arrays. Cluster nodes (Servers 1A, 2A, and 3A at Site 1; Servers 1B, 2B, and 3B at Site 2) run NW 6.5, NCS, and BCC eng.; one node in each cluster also runs IDM eng. and the IDM eDir Driver. A separate server at each site (Server A and Server B) runs NW 6.5 with iManager, the IDM Management Utilities, and the BCC iManager plug-ins.]

Figure 2-1 uses the following abbreviations:

BCC eng. -- Business Continuity Cluster engine

NCS -- Novell Cluster Services

IDM eng. -- Identity Manager engine

IDM eDir Driver -- Identity Manager eDirectory Driver

NW 6.5 -- NetWare 6.5

2.4 Configuring File System Mirroring

Several different methods and scenarios for mirroring data between geographically separate sites exist. Each method has its own strengths and weaknesses. After considering the different methods, you will need to choose either host-based mirroring or SAN-based mirroring (also called array-based mirroring) and whether you want the mirroring to be synchronous or asynchronous.


SAN-based synchronous mirroring is preferred and is provided by SAN hardware manufacturers.

Host-based synchronous mirroring functionality is included with the NSS file system (NSS

mirroring) that is part of NetWare 6.5.

NOTE: The Business Continuity Cluster 1.1 product does not perform data mirroring. You must

configure either SAN-based mirroring or host-based mirroring separately.

NSS mirroring is a checkpoint-based synchronous mirroring solution. Data blocks are written

synchronously to multiple storage devices. It is an alternative to SAN array-based synchronous

replication options.

IMPORTANT: NSS Snapshot technology does not work in a business continuity cluster.

Figure 2-2 Synchronous Mirroring

[Figure 2-2: Synchronous mirroring between Building A (Servers 1 through 4) and Building B (Servers 5 through 8). In each building, the servers connect through an Ethernet switch and a Fibre Channel switch to a Fibre Channel disk array. Changes flow from the original disk array to the mirror disk array, and completion is returned.]

2.4.1 Configuring NSS Mirroring

NSS partitions must be mirrored after they are created. If you have an existing partition that you

want to mirror, you can either create another partition of equal size on another device to mirror the

first partition to, or let the mirroring software automatically create another partition of equal size on

another device.

When you create a Novell Cluster Services system that utilizes shared storage space (a Storage Area

Network or SAN), it is important to remember that all servers attached to the shared device, whether

in the cluster or not, have access to all of the volumes on the shared storage space unless you

specifically prevent such access. Novell Cluster Services arbitrates access to shared volumes for all

cluster nodes, but cannot protect shared volumes from being corrupted by noncluster servers.


NOTE: Software included with your SAN can be used to mask LUNs or provide zoning capabilities

that will prevent shared volumes from being corrupted by noncluster servers.

Creating and Mirroring NSS Partitions on Shared Storage

Prior to creating and mirroring NSS partitions on shared storage, ensure that you have

All servers in the cluster connected to a shared storage system

One or more drive arrays configured on the shared storage system

The drives on the shared storage system marked as shared.

To create and mirror NSS partitions:

1 Start NSSMU by entering NSSMU at the server console of a cluster server.

2 Select Partitions from the NSSMU main menu.

3 Press the Insert key and select the device on your shared storage system where you want to

create a partition.

With a device marked as sharable for clustering, all partitions on that device are automatically

sharable.


Device names are not changeable and might be labeled something like 0x2 or 0x1.

4 Select NSS as the partition type, then specify the partition size and, if desired, an NSS pool

name and label.

If you specify a pool name, a pool by that name will automatically be created on the partition. If

no pool name is specified, you will have to create a pool on the partition later.

4a If you chose to create a pool, choose whether you want the pool to be activated and cluster

enabled when it is created.

The Activate on Creation feature is enabled by default. This causes the pool to be

activated as soon as it is created. If you choose not to activate the pool, you will have to

manually activate it later before it can be used.

The Cluster Enable on Creation feature is also enabled by default. If you want to cluster

enable the pool at the same time it is created, accept the default entry (Yes) and continue

with Step 4b. If you want to cluster enable the pool at a later date, see the OES Novell

Cluster Services 1.8.2 Administration Guide for NetWare for more information.

4b Specify the Virtual Server Name, IP Address, Advertising Protocols and, if necessary, the

CIFS Server Name.

When you cluster-enable a pool, a virtual Server object is automatically created and given

the name of the Cluster object plus the cluster-enabled pool. For example, if the cluster

name is cluster1 and the cluster-enabled pool name is pool1, then the default virtual server

name will be cluster1_pool1_server. You can edit the field to change the default virtual

server name.

Each cluster-enabled NSS pool requires its own IP address. The IP address is used to

provide access and failover capability to the cluster-enabled pool (virtual server). The IP

address you assign to the pool remains assigned to the pool regardless of which server in

the cluster is hosting the pool.

You can select one or all of the advertising protocols. NCP™ is the protocol used by

Novell clients, CIFS is the protocol used by Microsoft clients, and AFP is the protocol


used by Macintosh* clients. Selecting any of the protocols causes lines to be added to the

pool resource load and unload scripts to activate the selected protocols on the cluster. This

lets you ensure that the cluster-enabled pool you just created is highly available to all your

clients.

If you select CIFS as one of the protocols, a CIFS Server Name is also required. This is the

server name CIFS clients see when they browse the network. A default server name is

listed, but you can change the server name by editing the text in the field.

4c Select Create to create and cluster-enable the pool.

5 Select the partition you want to mirror (this might be the partition you just created) and press

the F3 key.

6 Select the device with free space or the partition you want to mirror to, then select YES to

mirror the partition.

To ensure disaster recovery, the device you select to mirror should be in another storage array

in the other data center.
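Two details from Step 4b are easy to get wrong when planning several pools: the default virtual server name and the rule that each cluster-enabled pool needs its own IP address. The Python sketch below models both. The function names, pool names, and addresses are hypothetical; the naming pattern follows the cluster1_pool1_server example given in Step 4b.

```python
from collections import Counter


def default_virtual_server_name(cluster_name, pool_name):
    """Build the default name: cluster name, pool name, then 'server'."""
    return f"{cluster_name}_{pool_name}_server"


def shared_ip_addresses(pool_ips):
    """Return IP addresses assigned to more than one cluster-enabled pool."""
    counts = Counter(pool_ips.values())
    return sorted(ip for ip, count in counts.items() if count > 1)


print(default_virtual_server_name("cluster1", "pool1"))

# Hypothetical plan in which two pools were given the same address by mistake.
plan = {"pool1": "10.0.0.10", "pool2": "10.0.0.11", "pool3": "10.0.0.10"}
print(shared_ip_addresses(plan))
```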

Creating an NSS Pool and Volumes

After an NSS partition has been created and mirrored, if you have not already done so, you must create an NSS pool and volume on that partition. To do this, follow the instructions in “Creating NSS Pools” in the “Installation and Setup” section of the Novell Cluster Services 1.8.2 Administration Guide for NetWare.


Novell Cluster Services Configuration and Setup

After configuring NSS mirroring and creating a pool and volume on the mirrored NSS partition, if you did not cluster-enable the NSS pool on the mirrored partition when you created it, do so by following the instructions in the “Installation and Setup” section of the Novell Cluster Services 1.8.2 Administration Guide for NetWare.

When you cluster-enable a shared disk pool, the commands to start and stop the pool resource are

automatically added to the resource load and unload scripts.

Checking NSS Volume Mirror Status

After you have configured NSS mirroring with Novell Cluster Services, you should check to ensure

that it is working properly in a cluster environment.

1 Ensure that the volumes on the cluster-enabled pool are mounted on the assigned server by

entering volumes at the server console.

2 Check the mirror status of the mirrored partition by entering mirror status at the server

console of the server where the NSS pool on the mirrored partition is active.

After entering mirror status, you should see a message indicating that mirror status is 100

percent or a message indicating that the mirrored object is fully synchronized.

3 Migrate the pool to another server in the cluster and again check to ensure the volumes on the

pool are mounted by entering volumes at the server console.

4 Check the mirror status of the partition again by entering mirror status at the server

console.


IMPORTANT: If you create or delete a pool or partition on shared storage that is part of a business

continuity cluster, you must run the cluster scan for new devices command on a server

in each of the other clusters that belong to the business continuity cluster.

2.4.2 Configuring SAN-Based Mirroring

Consult your SAN vendor or SAN vendor documentation for instructions on configuring SAN-based mirroring.

2.4.3 LUN Masking

We recommend that you implement LUN masking in your business continuity cluster for data

protection. LUN masking is provided by your SAN vendor.

LUN masking is the ability to exclusively assign each LUN to one or more host connections. With it

you can assign appropriately sized pieces of storage from a common storage pool to various servers.

See your SAN vendor documentation for more information on configuring LUN masking.
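Conceptually, a LUN mask is a table that maps each LUN to the host connections allowed to see it. The Python sketch below models that idea only, to make the definition concrete. It does not configure anything: real masking is set up with your SAN vendor's tools, and the LUN and server names here are hypothetical.

```python
# Hypothetical mask table: each LUN lists the hosts permitted to access it.
lun_masks = {
    "LUN0": {"server1A", "server2A", "server3A"},
    "LUN1": {"server1B", "server2B", "server3B"},
}


def can_access(host, lun, masks):
    """Return True if the mask table assigns the LUN to this host."""
    return host in masks.get(lun, set())


def visible_luns(host, masks):
    """List the LUNs a host is allowed to see."""
    return sorted(lun for lun, hosts in masks.items() if host in hosts)


print(can_access("server1A", "LUN0", lun_masks))
print(visible_luns("server2B", lun_masks))
```

A server that is not listed for a LUN never sees it, which is how masking keeps noncluster servers from corrupting shared volumes.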


2.5 Setting Up Novell Business Continuity Cluster Software

After you have installed and configured IDM and the Business Continuity Cluster software, and you have configured file system mirroring, you need to set up Novell Business Continuity Cluster software. This section contains the following setup instructions:

“Configuring Business-Continuity-Specific IDM Drivers” on page 29

“Configuring Clusters for Business Continuity” on page 35

“Configuring Cluster Resources for Business Continuity” on page 41

2.5.1 Configuring Business-Continuity-Specific IDM Drivers

The IDM preconfigured templates for iManager that were installed when you ran the Business

Continuity Cluster (BCC) installation must be configured so you can properly manage your business

continuity cluster. The preconfigured templates include a template for cluster resource

synchronization and a template for User object synchronization. Cluster resource synchronization

must always be configured, even in a single-tree BCC. User object synchronization is necessary

only if you have more than one eDirectory tree in your business continuity cluster. See Appendix A,

“Implementing a Multiple-Tree BCC,” on page 81 for more information.

IMPORTANT: The Identity Manager engine and eDirectory driver must be installed on one node in

each cluster. The node where Identity Manager is installed must have an eDirectory replica with at

least read/write access to all eDirectory objects that will be synchronized between clusters. This

does not apply to all eDirectory objects in the tree.

The eDirectory replica must have at least read/write access to the following containers:

The Identity Manager driver set container.

The container where the Cluster object resides.


The container where the server objects reside.

If server objects reside in multiple containers, this must be a container high enough in the tree

to be above all containers that contain server objects.

Best practice is to have all server objects in one container.

The container where the cluster pool and volume objects will be placed when they are

synchronized to this cluster. This container is referred to as the landing zone. The NCP server

objects for the virtual server of a BCC enabled resource will also be placed in the landing zone.

If the eDirectory replica does not have read/write access to the containers listed above,

synchronization will not work properly.

NOTE: Filtered eDirectory replicas are not supported with this version of Business Continuity

Cluster software. Full replicas are required.

To configure the IDM drivers/templates:

1 Start your Internet browser and enter the URL for iManager.


The URL is http://server_ip_address/nps/iManager.html. Replace server_ip_address with the

IP address or DNS name of the NetWare server that has iManager and the DirXML

preconfigured templates for iManager installed.

2 Enter your username and password.

3 In the left column, click DirXML Utilities, then click the New Driver link.

4 Choose to place the new driver in a new driver set, then click Next.

Both the User Object Synchronization Driver and the Cluster Resource Synchronization Driver

can be added to the same driver set.

5 Specify the driver set name, context, and the server that the driver set will be associated with.

The server is the same server where you installed the IDM engine and eDirectory driver.

6 Choose to not create a new partition for the driver set, then click Next.

7 Choose to import a preconfigured driver from the server, select the DirXML preconfigured

template for cluster resource synchronization, then click Next.

The template name is BCCClusterResourceSyncronization.XML.

8 Fill in the values on the wizard page as prompted, then click Next.

Each field contains an example of the type of information that should go into the field.

Descriptions of the information required are also included with each field.

Additional information for the wizard page fields includes:

Driver name: Specify a unique name for this driver that will identify its function. For

example, Cluster1SyncCluster2. If you use both preconfigured templates, you must

specify different driver names for each driver template.

Name of SSL Certificate: If you do not have an SSL certificate, leave this value set to the

default. The certificate will be created later in the configuration process. See Step 1 on

page 32 for instructions on creating SSL certificates.

DNS name of other IDM node: Specify the DNS name or IP address of the IDM server

in the other cluster.


Port number for this driver: If you have a business continuity cluster that consists of

three or four clusters, you must specify unique port numbers for each driver template set.

The default port number is 2002.

You must specify the same port number for the same template in the other cluster. For

example, if you specify 2003 as the port number for the resource synchronization

template, you must specify 2003 as the port number for the resource synchronization

template in the peer driver for the other cluster.

Full Distinguished Name (DN) of the cluster this driver services: For example,

Cluster1.siteA.Novell.

Fully Distinguished Name (DN) of the landing zone container: Specify the context of

the container where the cluster pool and volume objects in the other cluster will be placed

when they are synchronized to this cluster. This container is referred to as the landing

zone. The NCP server objects for the virtual server of a BCC enabled resource will also be

placed in the landing zone.

IMPORTANT: The context must already exist and must be specified using dot format

without the tree name. For example, siteA.Novell.

Prior to performing this step, you could create a separate container in eDirectory specifically for these cluster pool and volume objects. You would then specify the context of the new container in this step.

The IDM Driver object must have sufficient rights to create, modify, and delete objects and

attributes in the following containers:


The Identity Manager driver set container.

The container where the Cluster object resides.

The container where the Server objects reside.

If server objects reside in multiple containers, this must be a container high enough in the

tree to be above all containers that contain server objects.

Best practice is to have all server objects in one container.

The container where the cluster pool and volume objects will be placed when they are

synchronized to this cluster.

This container is referred to as the landing zone. The NCP server objects for the virtual

server of a BCC enabled resource will also be placed in the landing zone.

You can do this by making the IDM Driver object security equivalent to another user object

with those rights. See Step 9 on page 31.

If you choose to include User object synchronization, exclude the Admin User object from

being synchronized. See Step 7 on page 83.

9 Make the IDM Driver object security equivalent to an existing User object.

9a Click Define Security Equivalences, then click Add.

9b Browse to and select the desired User object, then click OK.

9c Click Next, then click Finish.

10 Repeat Step 1 through Step 9 above on the other clusters in your business continuity cluster.

This includes creating a new driver and driver set for each cluster.
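The port number rule in Step 8 can be checked on paper before you create the drivers. The following is a minimal sketch, not part of the product: it assumes you record each planned driver as a (cluster, peer cluster, template, port) tuple, which is a hypothetical layout chosen for illustration. It reads "unique port numbers for each driver template set" as "no two driver pairs share a port", and it flags a driver pair whose two sides use different ports.

```python
from collections import defaultdict


def check_port_plan(drivers):
    """Return a list of problems found in a planned set of driver ports.

    `drivers` is a list of (cluster, peer_cluster, template, port) tuples,
    one tuple per driver. This layout is hypothetical.
    """
    problems = []

    # Group the ports by driver pair: the two clusters plus the template.
    ports_by_pair = defaultdict(set)
    for cluster, peer, template, port in drivers:
        ports_by_pair[(frozenset((cluster, peer)), template)].add(port)

    pairs_by_port = defaultdict(list)
    for (pair, template), ports in ports_by_pair.items():
        label = f"{template} between {' and '.join(sorted(pair))}"
        # Both sides of a driver pair must use the same port.
        if len(ports) > 1:
            problems.append(f"{label}: peers use different ports {sorted(ports)}")
        for port in ports:
            pairs_by_port[port].append(label)

    # Assumption: each driver pair needs a port that no other pair uses.
    for port, labels in sorted(pairs_by_port.items()):
        if len(labels) > 1:
            problems.append(f"port {port} is shared by: {', '.join(sorted(labels))}")
    return problems
```

An empty list means the plan satisfies both checks. The cluster and template names you pass in are your own labels; the function does not contact eDirectory or IDM.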


IMPORTANT: If you have upgraded to IDM 3 and click either the cluster synchronization driver or

the user synchronization driver, a message will be displayed prompting you to convert the driver to a

new architecture. Click OK to convert the driver.

Creating SSL Certificates

It is recommended that you create an SSL certificate for the Cluster Resource Synchronization

Driver. Creating one certificate creates that certificate for a driver pair. For example, creating an SSL

certificate for the Cluster Resource Synchronization Driver creates the certificate for the Cluster

Resource Synchronization Drivers on both clusters.

To create an SSL certificate:

1 Start your Internet browser and enter the URL for iManager.

The URL is http://server_ip_address/nps/iManager.html. Replace server_ip_address with the

IP address or DNS name of the server that has iManager and the IDM preconfigured templates

for iManager installed.

2 Enter your username and password.


3 In the left column, click DirXML Utilities, then click NDS-to-NDS Driver Certificates.

4 Specify the requested driver information for this cluster, then click Next.

You must specify the driver name (including the context) you supplied in Step 8 on page 30 for

this cluster. Use the following format when specifying the driver name:

DriverName.DriverSet.OrganizationalUnit.OrganizationName

Ensure that there are no spaces (beginning or end) in the specified context, and do not use the

following format:

cn=DriverName.ou=OrganizationalUnitName.o=OrganizationName

5 Specify the requested driver information for the driver in the other cluster, then click Next and

then Finish.

Use the same format specified in Step 4 above.
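The driver name format required in Step 4 and Step 5 can be checked before you type it into iManager. The following is a minimal sketch under stated assumptions: the function name is hypothetical, the example driver and driver set names are invented, and the check ignores escaped dots inside eDirectory names.

```python
def driver_name_problems(name):
    """Return a list of format problems in a driver name with context.

    Expected form: DriverName.DriverSet.OrganizationalUnit.OrganizationName
    """
    problems = []

    # The guide requires no spaces at the beginning or end.
    if name != name.strip():
        problems.append("leading or trailing space")

    parts = name.strip().split(".")

    # Typed names such as cn=DriverName.ou=Unit.o=Org are not accepted.
    if any("=" in part for part in parts):
        problems.append("typed name (cn=, ou=, o=) used; use typeless dot format")

    # Two dots in a row, or a leading or trailing dot, leave an empty part.
    if any(part.strip() == "" for part in parts):
        problems.append("empty name component")

    # A bare driver name carries no context at all.
    if len(parts) < 2:
        problems.append("no context given")

    return problems
```

For example, `driver_name_problems("ClusterSync.BCCDrivers.siteA.Novell")` returns an empty list, while the typed form `cn=ClusterSync.ou=siteA.o=Novell` is reported as a problem.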

Synchronizing IDM Drivers

If you are adding a new cluster to an existing business continuity cluster, you must synchronize the

BCC-specific IDM drivers after you create the drivers and SSL certificates. If the BCC-specific IDM

drivers are not synchronized, clusters cannot be enabled for business continuity. Synchronization is

not necessary in any other case.

To synchronize BCC-specific IDM drivers:

1 Start your Internet browser and enter the URL for iManager.

The URL is http://server_ip_address/nps/iManager.html. Replace server_ip_address with the

IP address or DNS name of the server that has iManager and the IDM preconfigured templates

for iManager installed.

2 Enter your username and password.

3 In the left column, click DirXML, then click the DirXML Overview link.

4 Search for and find the BCC driver set.


5 Click the red Cluster Sync icon for the driver you want to synchronize, then click the Migrate

from eDirectory button.

6 Click Add, browse to and select the cluster object for the new cluster you are adding, then click

OK.

Selecting the cluster object will cause the BCC-specific cluster IDM drivers to synchronize.

If you have multiple eDirectory trees in your BCC, see Section A.4, “Synchronizing IDM Drivers,”

on page 83.

Preventing IDM Synchronization Loops

If you have three or more clusters in your business continuity cluster, you should set up IDM user

object and cluster resource object synchronization in a manner that prevents IDM synchronization

loops. IDM synchronization loops can cause excessive network traffic and slow server

communication and performance.

For example, in a three-cluster business continuity cluster, an IDM synchronization loop occurs

when Cluster One is configured to synchronize with Cluster Two, Cluster Two is configured to

synchronize with Cluster Three, and Cluster Three is configured to synchronize back to Cluster One.

This is illustrated in Figure 2-3 below.


Figure 2-3 Three-Cluster IDM Synchronization Loop

(Figure: Cluster One, Cluster Two, and Cluster Three, with each pair of clusters connected by a DirXML Sync link.)

A preferred method is to make Cluster One an IDM synchronization master: Cluster One synchronizes

with both Cluster Two and Cluster Three, and Cluster Two and Cluster Three each synchronize only

with Cluster One. This is illustrated in Figure 2-4 below.


Figure 2-4 Three-Cluster IDM Synchronization Master

(Figure: Cluster One connected to Cluster Two and to Cluster Three by DirXML Sync links.)

You could also have Cluster One synchronize with Cluster Two, Cluster Two synchronize with

Cluster Three, and Cluster Three synchronize back to Cluster Two as is illustrated in Figure 2-5

below.

Figure 2-5 Alternate Three-Cluster IDM Synchronization Scenario

(Figure: Cluster One connected to Cluster Two, and Cluster Two connected to Cluster Three, by DirXML Sync links.)
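A planned synchronization layout can be checked for loops before the drivers are configured. The following is a minimal sketch, assuming each synchronization link is a two-way driver pair between two clusters, so the layout is treated as an undirected graph. It uses a union-find structure; the function name and the way links are written down are hypothetical.

```python
def find_sync_loop(links):
    """Return the first link that closes a loop, or None if there is no loop.

    `links` is a list of (cluster_a, cluster_b) pairs, one per driver pair.
    List each pair of clusters only once; a repeated pair counts as a loop.
    """
    parent = {}

    def find(node):
        # Follow parent pointers to the root of the group, shortening
        # the path along the way.
        parent.setdefault(node, node)
        while parent[node] != node:
            parent[node] = parent[parent[node]]
            node = parent[node]
        return node

    for a, b in links:
        root_a, root_b = find(a), find(b)
        if root_a == root_b:
            # Both clusters are already connected, so this link closes a loop.
            return (a, b)
        parent[root_a] = root_b
    return None
```

With the three layouts described in this section, the loop layout (One to Two, Two to Three, Three to One) is reported at the third link, while the master layout (One to Two, One to Three) and the alternate layout (One to Two, Two to Three) both return `None`.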

To change your BCC synchronization scenario:

1 In the Connections section of the Business Continuity Cluster Properties page, select one or

more peer clusters that you want a cluster to synchronize to, then click Edit.

In order for a cluster to appear in the list of possible peer clusters, that cluster must:

Have Business Continuity Cluster software installed.

Have IDM installed.

Have business continuity-specific IDM drivers configured and running.

Be enabled for business continuity.
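If an expected cluster is missing from the list of possible peer clusters, the four requirements above can be worked through as a checklist. The following is a minimal sketch: the `ClusterStatus` record and both function names are hypothetical, and the values are filled in by hand from what you observe in iManager, not read from the product.

```python
from dataclasses import dataclass


@dataclass
class ClusterStatus:
    """Hypothetical record of one cluster's state, filled in by hand."""
    name: str
    bcc_installed: bool
    idm_installed: bool
    bcc_drivers_running: bool
    bcc_enabled: bool


def missing_requirements(cluster):
    """Return the peer-list requirements that the cluster does not meet."""
    checks = [
        (cluster.bcc_installed, "Business Continuity Cluster software installed"),
        (cluster.idm_installed, "IDM installed"),
        (cluster.bcc_drivers_running, "BCC-specific IDM drivers configured and running"),
        (cluster.bcc_enabled, "enabled for business continuity"),
    ]
    return [label for ok, label in checks if not ok]


def eligible_peers(clusters):
    """Return the names of clusters that meet all four requirements."""
    return [c.name for c in clusters if not missing_requirements(c)]
```

Calling `missing_requirements` on a cluster that does not appear in the list names the requirements still to be met.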


2.5.2 Configuring Clusters for Business Continuity

This procedure consists of the following tasks:

“Enabling Clusters for Business Continuity” on page 35

“Adding Cluster Peer Credentials” on page 35

“Adding Resource Script Search and Replace Values” on page 36

These tasks must be performed on each separate cluster that you want to be part of the business

continuity cluster.

NOTE: Identity Manager must be configured and running before configuring clusters for business

continuity.

Enabling Clusters for Business Continuity

If you want to enable a cluster to fail over selected resources or all cluster resources to another

cluster, you must enable business continuity on that cluster.


1 Start your Internet browser and enter the URL for iManager.

The URL is http://server_ip_address/nps/iManager.html. Replace server_ip_address with the

IP address or DNS name of the server that has iManager and the IDM preconfigured templates

for iManager installed. This server should be in the same eDirectory tree as the cluster you are

enabling for business continuity.

2 Enter your username and password.

3 Ensure that the Business Continuity-specific IDM drivers are running.

3a In the left column, click DirXML, and then click the DirXML Overview link.

3b Search the eDirectory Container or tree for the Business Continuity-specific DirXML

drivers.

3c Click the upper right corner of the driver icon(s) to see if the driver is started or stopped.

If the driver is stopped, you can start it by choosing Start.

4 In the left column, click Clusters, then click the Cluster Options link.

5 Specify a cluster name or browse and select one.

6 Click the Properties button, then click the Business Continuity tab.

7 Ensure that the Enable Business Continuity Features check box is selected.

8 Repeat Step 1 through Step 7 for the other cluster that this cluster will migrate resources to.

9 Continue with Step 1 in the Adding Cluster Peer Credentials section below.

Adding Cluster Peer Credentials

In order for one cluster to connect to a second cluster, the first cluster must be able to authenticate to

the second cluster. To make this possible, you must add the username and password of the user that

the selected cluster will use to connect to the selected peer cluster.


IMPORTANT: In order to add or change cluster peer credentials, you must access iManager on a

server that is in the same eDirectory tree as the cluster you are adding or changing peer credentials

for.