
Deployment Guide: Network Convergence with Emulex LP21000 CNA and VMware® ESX Server

How to deploy Converged Networking with VMware ESX Server Using Emulex FCoE Technology


Table of Contents

Introduction

Drivers of Network Convergence

Benefits of FCoE Enabled Network Convergence

FCoE Technology Overview

Introduction to Emulex LP21000 CNA

Converged Network Deployment models

Converged Networking with FCoE in an ESX Environment

CNA Instantiation Model in ESX

Configuring the LP21000 as a Storage Adapter

Configuring the LP21000 for Multipathing in ESX

Configuring the LP21000 as a Network Adapter

Configuring the LP21000 for NIC Teaming in ESX

Scaling to Enterprise Manageability

Conclusion


Introduction

Fibre Channel over Ethernet (FCoE) technology enables convergence of storage and LAN networks in

enterprise data centers. The key benefits of FCoE include cost savings and improved operating efficiencies,

but the technology is new and deployment configurations are less well understood than proven Fibre

Channel solutions.

Server virtualization using VMware ESX enables consolidation of server resources by allowing multiple

virtual machines to exist on a single physical server. This level of consolidation increases the demand for I/O

bandwidth and the need for multiple I/O adapters. FCoE-based converged networking addresses the

requirement for increased bandwidth while significantly simplifying the I/O infrastructure.

This deployment guide introduces network convergence using FCoE technology and outlines how to realize its maximum benefits in a VMware ESX Server environment.

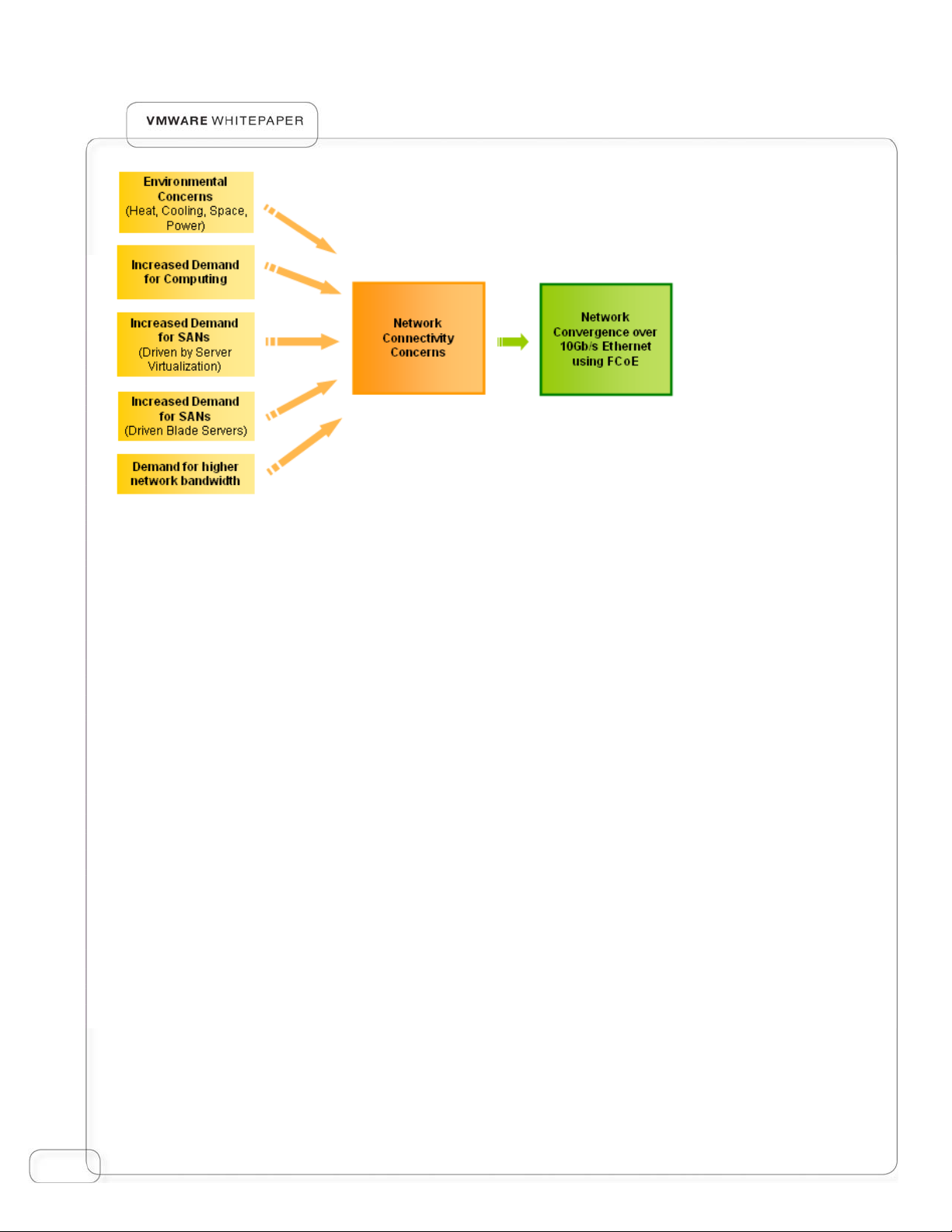

Drivers of Network Convergence

Enterprises rely on their computing infrastructure to provide a broad array of services. As enterprises

continue to scale their computing infrastructure to meet the demand for these services, they inherently face

an infrastructure sprawl fueled by the proliferation of servers, networks and storage. This infrastructure

growth typically results in increased capital expenses for equipment and operating expenses for power,

cooling and IT management, reducing the overall efficiency of operations.

To overcome these challenges, the industry is moving toward technologies that enable consolidation in the data center. Server virtualization and blade server technologies help consolidate physical servers and address rising rack densities. A related trend associated with server virtualization and blade server deployments is the expanded use of higher I/O bandwidth and the adoption of external storage, both of which enable consolidation and mobility.

Since the late 1990s, data centers have maintained two sets of networks: a Fibre Channel storage area network (SAN) for storage I/O traffic and a local area network (LAN) for data networking traffic. SAN adoption, accelerated by blade servers and server virtualization, is expected to keep growing.

While IT managers can continue to maintain two separate networks, in reality this increases overall costs by

multiplying the number of adapters, cables, and switch ports required to connect every server directly with

supporting LANs and SANs. Further, the resulting network sprawl hinders the flexibility and manageability of

the data center.


Figure 1: Key factors driving network convergence.

Network convergence, enabled by FCoE, helps address the network infrastructure sprawl while fully

complementing server consolidation efforts and improving the efficiency of the enterprise data center.

Benefits of FCoE Enabled Network Convergence

An FCoE enabled converged network provides the following key benefits to IT organizations:

• Lower total cost of ownership through infrastructure simplification

A converged network based on FCoE lowers the number of cables, switch ports and adapters required to maintain both SAN and LAN connectivity. Fewer adapters make smaller servers practical, and expanded offload to the adapter improves CPU efficiency, which reduces power and cooling costs.

• Consolidation while protecting existing investments

FCoE lets organizations phase in converged networks gradually. FCoE-based network convergence drives consolidation in new deployments without affecting the existing server and storage infrastructure or the processes required to manage and support existing applications. For example, a common management framework across Fibre Channel and FCoE-connected servers protects existing investments in management tools and processes and lowers the long-term operating cost of the data center.


• Increased IT efficiency and business agility

A converged network streamlines, and in many cases eliminates, repeated administrative tasks such as server and network provisioning through a "wire once" deployment model. The converged network also improves business agility, letting data centers respond dynamically and rapidly to requests for new or expanded services, new servers and new configurations. The converged network fully complements server virtualization in addressing the on-demand requirements of the next-generation data center, where applications and infrastructure are provisioned on the fly.

• Seamless extension of Fibre Channel SANs in the data center

Fibre Channel is the predominant storage protocol deployed by enterprise data centers. With the

adoption of blade servers and server virtualization, there is an increased demand for Fibre Channel

SANs in these environments. FCoE addresses this requirement by using 10Gb/s Ethernet to extend proven Fibre Channel SAN benefits. The lightweight encapsulation used by FCoE also keeps FCoE gateways less compute-intensive, which helps maintain high levels of storage networking performance.

• Alignment with existing operating models and administrative domains

FCoE-based converged networks give IT architects the ability to design a data center architecture that aligns with the existing organizational structure and operating models. This minimizes the need to unify or significantly overhaul the operating procedures used by storage and networking IT staff.

FCoE Technology Overview

FCoE is the industry standard that drives network convergence in the enterprise data center. The FCoE

technology leverages lossless Ethernet infrastructure to carry native Fibre Channel traffic over Ethernet.

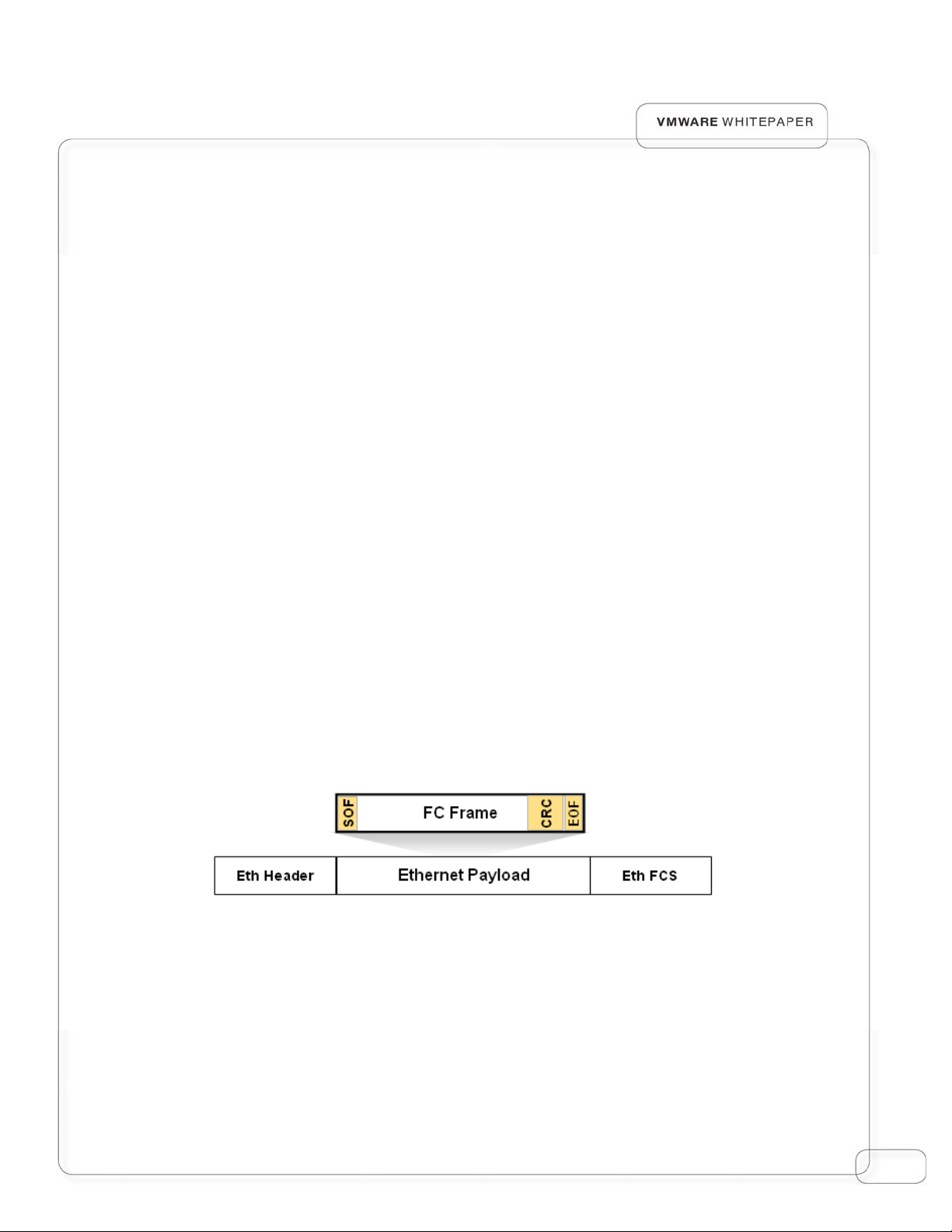

Figure 2: FCoE encapsulates complete Fibre Channel frames into Ethernet frames
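To make the encapsulation in Figure 2 concrete, the following minimal Python sketch wraps one complete Fibre Channel frame in an Ethernet frame. It assumes the FC-BB-5 layout (EtherType 0x8906, a 14-byte FCoE header ending in a start-of-frame byte, a 4-byte trailer starting with an end-of-frame byte); the SOF/EOF code points, the default priority of 3, and the function names are illustrative assumptions, not Emulex or VMware APIs. The Ethernet FCS is left to the adapter hardware.

```python
import struct
from typing import Optional

FCOE_ETHERTYPE = 0x8906  # EtherType assigned to FCoE
VLAN_TPID = 0x8100       # IEEE 802.1Q tag protocol identifier

# Assumed SOF/EOF code points (SOFi3 and EOFt); verify against FC-BB-5.
SOF_I3 = 0x2E
EOF_T = 0x42

FC_MIN = 24 + 4          # FC header + CRC, empty payload
FC_MAX = 24 + 2112 + 4   # FC header + maximum payload + CRC


def mac_to_bytes(mac: str) -> bytes:
    """Convert 'aa:bb:cc:dd:ee:ff' into 6 raw bytes."""
    raw = bytes.fromhex(mac.replace(":", ""))
    if len(raw) != 6:
        raise ValueError(f"bad MAC address: {mac}")
    return raw


def build_fcoe_frame(dst_mac: str, src_mac: str, fc_frame: bytes,
                     vlan_id: Optional[int] = None, priority: int = 3,
                     sof: int = SOF_I3, eof: int = EOF_T) -> bytes:
    """Encapsulate one complete FC frame (header, payload and CRC) in an
    Ethernet frame. The Ethernet FCS is left for the adapter to append."""
    if not FC_MIN <= len(fc_frame) <= FC_MAX:
        raise ValueError(f"FC frame must be {FC_MIN}..{FC_MAX} bytes")
    eth = mac_to_bytes(dst_mac) + mac_to_bytes(src_mac)
    if vlan_id is not None:
        # 802.1Q tag: 3-bit priority (PCP), DEI bit = 0, 12-bit VLAN ID
        tci = ((priority & 0x7) << 13) | (vlan_id & 0xFFF)
        eth += struct.pack("!HH", VLAN_TPID, tci)
    eth += struct.pack("!H", FCOE_ETHERTYPE)
    # FCoE header: 4-bit version (0) and 100 reserved bits, then SOF: 14 bytes
    header = bytes(13) + bytes([sof])
    # FCoE trailer: EOF followed by 3 reserved bytes
    trailer = bytes([eof]) + bytes(3)
    return eth + header + fc_frame + trailer


# Example MAC addresses are arbitrary placeholders.
frame = build_fcoe_frame("0e:fc:00:01:02:03", "00:00:c9:aa:bb:cc",
                         bytes(FC_MAX), vlan_id=100)
print(len(frame))  # 2176; the adapter's 4-byte FCS makes it 2180
```

The arithmetic shows why FCoE links need "baby jumbo" frame support: a maximum-size Fibre Channel frame grows to 2180 bytes on the wire once tagged and encapsulated, well above the standard 1518-byte Ethernet maximum.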

Leveraging 10Gb/s Ethernet to carry both the data networking and storage networking traffic enables

convergence in the data center networks and thus reduces the number of cables, switch ports and adapters,

which lowers the overall power and cooling requirements and the total cost of ownership.

(Diagram: applications running over a TCP/IP networking stack and a Fibre Channel stack of SCSI, FCP, FC-2 and FCoE mapping, both carried on lossless Ethernet.)

Figure 3: Networking and Fibre Channel stacks leverage a common Enhanced Ethernet to achieve convergence in the data center

By continuing to maintain Fibre Channel as the upper layer protocol, the FCoE technology also fully leverages existing investments in Fibre Channel infrastructure.

Introduction to Emulex LP21000 CNA

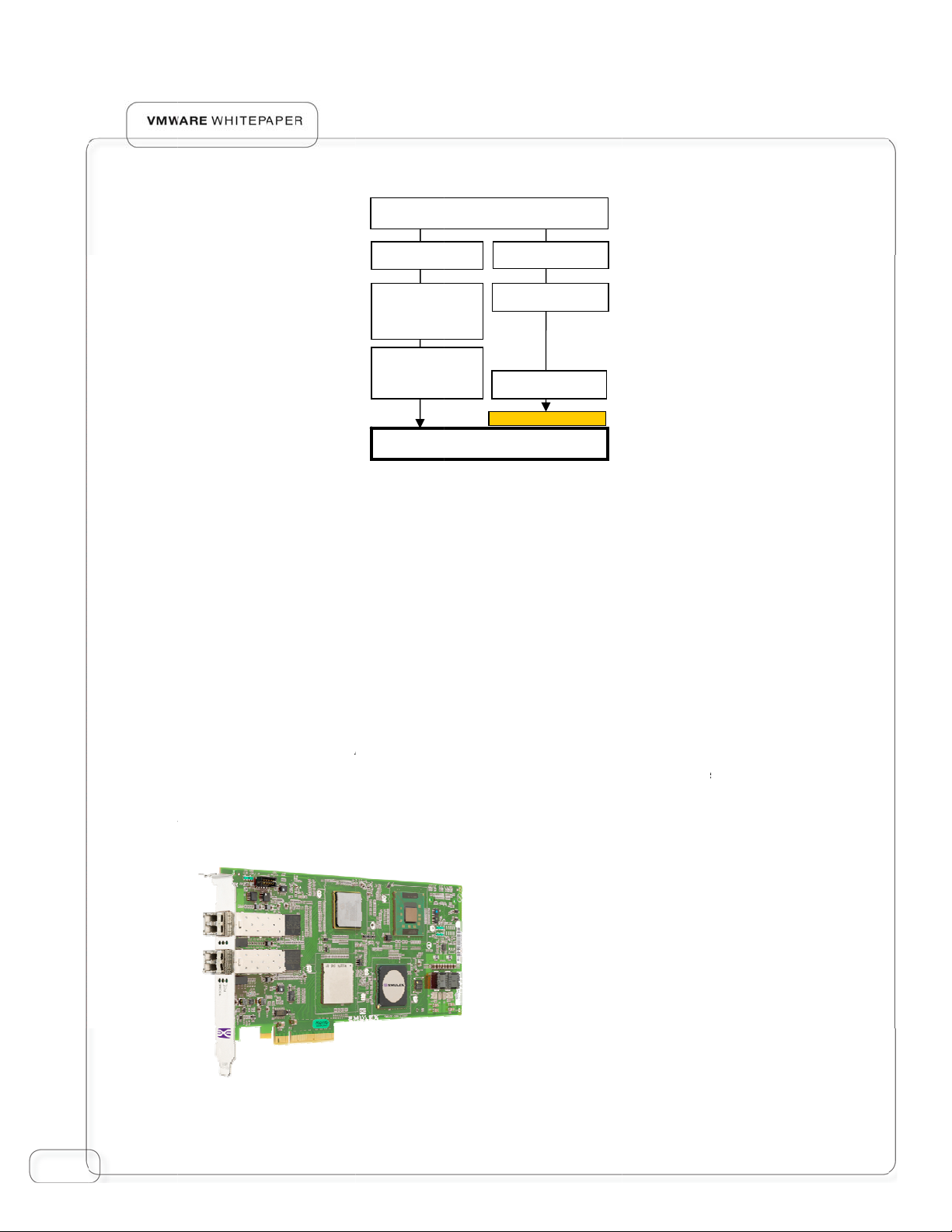

Emulex is one of the leading proponents and developers of FCoE technology. The Emulex LP21000 Converged Network Adapter (CNA) product family is designed to support high-performance storage and networking applications in enterprise data centers. The LP21000 simplifies network deployment by converging networking and storage connectivity while providing enhanced performance for virtualized server environments.

Figure 4: Emulex LP21000 CNA


Servers supporting PCI Express (x8 or higher) can use the full potential of the Emulex LP21000's two 10Gb/s Ethernet interfaces, balancing storage and networking I/O requirements. Priority flow control (PFC) pauses traffic on an individual priority so that lossless traffic such as FCoE is not dropped during congestion, and enhanced transmission selection (ETS) enables bandwidth partitioning and guaranteed service levels for different traffic types.
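The key property of priority flow control (IEEE 802.1Qbb) is that a pause applies to one priority only. The toy Python model below illustrates this with a hypothetical `PfcPort` class: pausing the priority carrying FCoE holds those frames in their queue instead of dropping them, while LAN traffic on other priorities keeps flowing. The round robin scheduler is a simplifying assumption; real adapters implement PFC and scheduling in hardware.

```python
from collections import deque
from typing import Deque, List, Optional


class PfcPort:
    """Toy port with eight 802.1p priority queues and per-priority pause."""

    def __init__(self) -> None:
        self.queues: List[Deque[str]] = [deque() for _ in range(8)]
        self.paused = [False] * 8
        self._next = 0  # round robin pointer (simplified scheduler)

    def enqueue(self, priority: int, frame: str) -> None:
        self.queues[priority].append(frame)

    def pause(self, priority: int) -> None:
        self.paused[priority] = True   # peer sent a PFC frame for this priority

    def resume(self, priority: int) -> None:
        self.paused[priority] = False  # pause time expired or was cleared

    def transmit(self) -> Optional[str]:
        """Send the next frame from a non-paused, non-empty queue."""
        for step in range(8):
            prio = (self._next + step) % 8
            if not self.paused[prio] and self.queues[prio]:
                self._next = (prio + 1) % 8
                return self.queues[prio].popleft()
        return None  # everything queued is paused: frames wait, none dropped


port = PfcPort()
port.enqueue(3, "fcoe-1")  # FCoE assumed to be mapped to priority 3
port.enqueue(0, "lan-1")
port.pause(3)
print(port.transmit())     # lan-1: LAN traffic is unaffected by the pause
print(port.transmit())     # None: the FCoE frame is held, not discarded
port.resume(3)
print(port.transmit())     # fcoe-1
```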

The LP21000 fully leverages the proven Emulex LightPulse® architecture and thus extends the reliability,

performance and manageability characteristics of proven Fibre Channel host bus adapters (HBAs) to the

converged network adapter.

Converged Network Deployment models

Emulex is closely partnering with Convergence Enhanced Ethernet (CEE) switch manufacturers to develop

the next generation of FCoE based converged network infrastructure. The combination of lossless Ethernet

switches and Emulex CNAs will help facilitate the adoption of FCoE and pave the way for 10Gb/s Ethernet

as the converged network fabric in the enterprise data center.

The lossless Ethernet switch, typically provisioned with native Fibre Channel ports and an FCoE to Fibre Channel forwarder, provides all the services of a Fibre Channel switch. The switch can connect directly to a Fibre Channel storage array through an F-port, or to another Fibre Channel switch or a Fibre Channel director (using E-port or NPIV mode), which in turn connects to the rest of the SAN.

Figure 5: Converged network deployment and access to SAN


Converged Networking with FCoE in an ESX Environment

Server virtualization is one of the key drivers of FCoE deployment. FCoE-enabled network convergence significantly reduces the number of cables, switch ports and adapters, as shown in Figure 6.

(Diagram. Left: separate LAN and SAN connectivity with NICs and HBAs, using dedicated links for Virtual Networks 1 to 3, VMotion, Administration/VMRC on a server NIC, backup links for each virtual network and for VMotion, and Fibre Channel SAN A and SAN B. Right: converged networking with CNAs carrying the same traffic.)

Figure 6: Effects of Convergence using a CNA in a Rack-mount Server running ESX

The following sections provide a detailed description of how to deploy and configure an Emulex LP21000 in

a VMware ESX Server environment.

CNA Instantiation Model in ESX

Although the CNA is a single physical entity, the operating system sees it as an HBA and a NIC (Network Interface Card). This transparency ensures that the CNA is configured and managed in the same way as current standalone NIC and HBA devices.

Extending this concept to VMware ESX, the Emulex LP21000 CNA is recognized by ESX as both an HBA and a NIC. The ESX server in turn provides networking and storage networking access for the virtual machines it hosts. The ESX server is configured and managed through VMware vCenter® Server (typically residing on a separate management server).

Figure 7: The hypervisor/VMKernel views the CNA as an HBA and a NIC

When an Emulex LP21000 CNA is installed on an ESX server, the adapter is visible in the vCenter

Configuration Tab in the storage adapter view as well as the network adapter view. When deploying

multiple CNAs for failover and load balancing, both Fibre Channel multipathing and NIC teaming must be

configured on both CNAs.

Configuring the LP21000 as a Storage Adapter

Upon installation, the LP21000 appears as a storage adapter, just as an HBA does (see Figure 8). Each LP21000 is associated with a standard 64-bit Fibre Channel WWN (World Wide Name), and all LUNs visible to that adapter are displayed in the details area for the selected LP21000. LUN masking and zoning, which are required for LUN visibility, are configured in the same way as for a Fibre Channel HBA.
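Because zoning and LUN masking are usually keyed on WWNs, it helps to read their structure. The sketch below parses a 64-bit WWN and extracts the Network Address Authority (NAA, the top 4 bits) and the embedded IEEE OUI. The field positions assume the NAA 1, 2 and 5 formats as recalled from the Fibre Channel framing standard; other NAA types are not decoded. The `Wwn` class and the sample WWNs are hypothetical illustrations.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Wwn:
    """A 64-bit Fibre Channel World Wide Name (WWNN or WWPN)."""
    value: int

    @classmethod
    def parse(cls, text: str) -> "Wwn":
        digits = text.replace(":", "").replace("-", "")
        if len(digits) != 16:
            raise ValueError(f"a WWN has 16 hex digits (64 bits): {text!r}")
        return cls(int(digits, 16))

    @property
    def naa(self) -> int:
        """Network Address Authority: the top 4 bits of the WWN."""
        return self.value >> 60

    @property
    def oui(self) -> Optional[str]:
        """IEEE OUI embedded in the WWN, for the NAA formats handled here."""
        if self.naa in (1, 2):   # IEEE 48-bit address in the low 48 bits
            oui = (self.value >> 24) & 0xFFFFFF
        elif self.naa == 5:      # IEEE Registered: OUI follows the NAA nibble
            oui = (self.value >> 36) & 0xFFFFFF
        else:
            return None
        return ":".join(f"{b:02x}" for b in oui.to_bytes(3, "big"))

    def __str__(self) -> str:
        return ":".join(f"{b:02x}" for b in self.value.to_bytes(8, "big"))


wwpn = Wwn.parse("10:00:00:00:c9:12:34:56")  # illustrative value only
print(wwpn.naa, wwpn.oui)                    # 1 00:00:c9
```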


Figure 8: Two LP21000 CNAs appear as Fibre Channel storage adapters

By selecting Storage from within the Configuration tab (see Figure 9), the Add Storage Wizard can be used to create the data stores and select either the Virtual Machine File System (VMFS) or Raw Device Mapping (RDM) access method (for more information, refer to the VMware ESX configuration guide). Both access methods can be used in parallel by the same ESX server using the same physical HBA or CNA.

Figure 9: Use the "Add Storage Wizard" to create the datastore


IT organizations can also leverage the Emulex Virtual HBA technology, based on the ANSI T11 N_Port ID

Virtualization (NPIV) standard. This virtualization technology allows a single physical CNA or HBA port to

function as multiple virtual ports (VPorts), with each VPort having a separate identity in the Fabric.

NPIV support began with ESX Server 3.5 and requires use of RDM. Using NPIV, each virtual machine can

attach to its own VPort which consists of the combination of a distinct Worldwide Node Name (WWNN)

and multiple Worldwide Port Names (WWPNs), as shown in Figure 10. Again, the procedures for using this

technology are the same as those for Fibre Channel HBAs, including full support for virtual machine and

virtual port migration by VMotion.

Configuring the LP21000 for Multipathing in ESX

Multipathing is a common technology used by data centers to protect against adapter, switch, or cable failure. Enabling multipathing with the CNA typically means providing link redundancy, either with dual-port configurations or with two single-port CNAs, to support failover. As with HBA multipath configuration, each of the CNA ports used for multipathing must have visibility to the same LUNs, including proper physical connectivity, LUN masking, zoning, and any other array configuration. When each LP21000 has the required visibility to the same LUNs and an ESX data store has been appropriately created on those LUNs, ESX provides path switching (multipathing) to that data store, giving VMs a redundant path to their storage. No special drivers are required, since the multipathing functionality is part of ESX.
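The visibility requirement above can be checked mechanically before relying on failover. The sketch below assumes you have already collected, per CNA port, the set of LUN numbers that port can see after zoning and masking (for example, from the storage adapter view); the function name, the adapter names and the two-path threshold are hypothetical choices for illustration.

```python
from typing import Dict, List, Set


def under_protected_luns(port_luns: Dict[str, Set[int]],
                         datastore_luns: Set[int],
                         min_paths: int = 2) -> List[int]:
    """Return the data store LUNs that fewer than `min_paths` ports can see."""
    short = []
    for lun in sorted(datastore_luns):
        paths = sum(1 for luns in port_luns.values() if lun in luns)
        if paths < min_paths:
            short.append(lun)  # a single port failure would cut off this LUN
    return short


# Hypothetical view: LUN 2 is missing from the second port's masking/zoning.
visible = {"vmhba1": {0, 1, 2}, "vmhba2": {0, 1}}
print(under_protected_luns(visible, {0, 1, 2}))  # [2]
```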

A single dual-port CNA can provide port redundancy with each port connecting to a different CEE switch.

(Diagram: a server with an LP21002 dual-port CNA, each port connected to a different FCoE-enabled switch, one leading to SAN A and the other to SAN B.)

Figure 10: Port level redundancy using a single Dual-Ported CNA

Although the setup above provides multiple data paths to the storage and active-standby links for networking, the single CNA remains a single point of failure. Installing two CNAs in one host removes this single point of failure and is the recommended configuration for high availability converged networking.


(Diagram: a server with two LP21000 CNAs, each connected to a different FCoE-enabled switch, one leading to SAN A and the other to SAN B.)

Figure 11: CNA level redundancy using two Single-Ported CNAs

In this configuration, even in the extreme case of a CNA failure, the storage and networking links can be failed over immediately with no interruption to applications.

Within ESX, multipathing is configured from the Configuration tab by selecting Storage and then opening the properties of the appropriate data store. In the data store properties window, selecting Manage Paths reveals the multiple paths to the data store and the multipathing policy (fixed, most recently used, or round robin; see Figure 12). Once multipathing has been configured for the CNAs on an ESX server, redundancy for their storage functionality is complete. The network side of the CNAs, however, still requires configuration (NIC teaming).

Figure 12: CNA initiated multipathing accessing a data store
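The three multipathing policies differ mainly in what happens when a failed path returns. The simplified Python model below (a hypothetical `PathSelector`, not the ESX multipathing code) captures the commonly described behavior: fixed fails back to its preferred path, most recently used stays on the surviving path, and round robin rotates across live paths. Rotating on every call is an assumption made to keep the sketch short; ESX switches paths according to its own I/O-count settings.

```python
from typing import Dict, List, Optional


class PathSelector:
    """Simplified model of the fixed, MRU and round robin path policies."""

    POLICIES = ("fixed", "mru", "round_robin")

    def __init__(self, paths: List[str], policy: str,
                 preferred: Optional[str] = None) -> None:
        if policy not in self.POLICIES:
            raise ValueError(f"unknown policy: {policy}")
        self.paths = list(paths)
        self.policy = policy
        self.preferred = preferred or self.paths[0]
        self.current = self.preferred
        self.alive: Dict[str, bool] = {p: True for p in self.paths}
        self._rr = 0

    def set_state(self, path: str, alive: bool) -> None:
        self.alive[path] = alive

    def select(self) -> str:
        live = [p for p in self.paths if self.alive[p]]
        if not live:
            raise RuntimeError("no live path to the data store")
        if self.policy == "round_robin":
            # Rotate over the live paths, one path per call in this model
            path = live[self._rr % len(live)]
            self._rr += 1
            return path
        if self.policy == "fixed" and self.alive[self.preferred]:
            self.current = self.preferred   # fixed fails back when it returns
        elif not self.alive[self.current]:
            self.current = live[0]          # fail over to a surviving path
        return self.current                 # MRU stays on the current path


mru = PathSelector(["path-A", "path-B"], "mru")  # hypothetical path names
mru.set_state("path-A", False)
print(mru.select())   # path-B
mru.set_state("path-A", True)
print(mru.select())   # still path-B: MRU does not fail back
```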


Configuring the LP21000 as a Network Adapter

Upon installation, the LP21000 appears as a 10 Gigabit NIC in the network adapter view of the Configuration tab. Figure 13 shows two LP21000s appearing as two 10 Gigabit network adapters, along with two 100 Mbps NICs built into the server motherboard.

Figure 13: Two LP21000 CNAs appear as two 10 Gigabit network adapters

It is recommended that service console traffic run on a separate physical network using the 100 Mbps links. This improves security for the service console and leaves the CNA link bandwidth available to be shared by multiple virtual machines. Virtual machines reach the external network through the virtual network (which consists of one or more vSwitches), which in turn is linked to the physical adapters.

To create a virtual network and assign it to a virtual machine, select Networking from the Configuration tab and then select Add Networking to start the Add Networking Wizard. Select Create a virtual switch, and select the network adapters (which represent the CNAs) from the list to map them as uplinks to the virtual switch. Because multiple virtual machines with a variety of traffic types now share the 10Gb/s link, it is also recommended that Virtual LANs (VLANs) be used to segregate traffic from different virtual machines. The VLAN information can be configured as part of the Port Group properties under the Connection Settings screen of the Add Networking Wizard.
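When planning port groups, the VLAN ID entered in the wizard also selects the tagging mode. The sketch below assumes the usual ESX convention (0 for no vSwitch tagging, 1 to 4094 for vSwitch tagging, 4095 for passing tags through to the guest) and flags VLAN IDs reused across port groups, which may be intentional but removes segregation between those groups. The function names and port group names are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List


def vlan_tagging_mode(vlan_id: int) -> str:
    """Map an ESX port group VLAN ID to its tagging mode."""
    if vlan_id == 0:
        return "EST"   # vSwitch adds no tag; the physical switch assigns VLAN
    if 1 <= vlan_id <= 4094:
        return "VST"   # vSwitch tags and untags frames for this port group
    if vlan_id == 4095:
        return "VGT"   # tags pass through to the guest OS
    raise ValueError(f"VLAN ID out of range: {vlan_id}")


def shared_vlans(port_groups: Dict[str, int]) -> Dict[int, List[str]]:
    """Return vSwitch-tagged VLAN IDs used by more than one port group."""
    users: Dict[int, List[str]] = defaultdict(list)
    for name, vlan_id in port_groups.items():
        if vlan_tagging_mode(vlan_id) == "VST":
            users[vlan_id].append(name)
    return {vid: names for vid, names in users.items() if len(names) > 1}


groups = {"VM-A": 10, "VM-B": 20, "VM-C": 10, "Guest-Trunk": 4095}
print(shared_vlans(groups))  # {10: ['VM-A', 'VM-C']}
```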


Configuring the LP21000 for NIC Teaming in ESX

NIC teaming occurs when multiple uplink adapters are associated with a single vSwitch to form a team. A

team can share the load of traffic between physical and virtual networks or provide passive failover in the

event of a hardware failure or network outage. Figure 14 shows the configuration of two vSwitches within

an ESX server. The service console traffic is assigned to a vSwitch that in turn is connected to a 100 Mbps

NIC. The second vSwitch provides networking for the VMkernel service and for a virtual machine. On the uplink side, this vSwitch is connected to two LP21000 CNAs, which provide redundancy through NIC teaming.
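The teaming behavior in Figure 14 can be pictured as choosing an uplink for each virtual port from the team's active adapters. The sketch below uses a simple modulo over virtual port IDs as a stand-in for the vSwitch's port-ID-based load balancing (the real placement algorithm is not reproduced here); the uplink names `vmnic2` and `vmnic3` for the two CNAs are hypothetical.

```python
from typing import Dict, List


def select_uplink(virtual_port_id: int, uplinks: List[str],
                  link_up: Dict[str, bool]) -> str:
    """Pick an uplink for a virtual port from the team's active uplinks."""
    active = [u for u in uplinks if link_up.get(u, False)]
    if not active:
        raise RuntimeError("no active uplink in the NIC team")
    # Modulo stands in for the vSwitch's port-ID-based placement
    return active[virtual_port_id % len(active)]


def place_ports(port_ids: List[int], uplinks: List[str],
                link_up: Dict[str, bool]) -> Dict[int, str]:
    """Map every virtual port to an uplink under the current link states."""
    return {pid: select_uplink(pid, uplinks, link_up) for pid in port_ids}


team = ["vmnic2", "vmnic3"]
print(place_ports([10, 11], team, {"vmnic2": True, "vmnic3": True}))
# {10: 'vmnic2', 11: 'vmnic3'}: load is spread across both CNAs
print(place_ports([10, 11], team, {"vmnic2": False, "vmnic3": True}))
# {10: 'vmnic3', 11: 'vmnic3'}: traffic fails over to the surviving CNA
```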


Figure 14: A network configuration where a vSwitch is uplinked with two CNAs for NIC teaming

Scaling to Enterprise Manageability

As FCoE-based convergence makes its way into enterprise data centers, one of the key requirements is the

ability to manage both the FCoE and Fibre Channel SAN infrastructure without increasing the overall

management complexity. Emulex provides software applications that streamline deployment, simplify

management and virtualization as well as provide multipathing capabilities in mission critical environments.

Emulex HBAnyware is a unified management platform for Emulex LightPulse Fibre Channel HBAs and FCoE

CNAs. It provides a centralized console to manage Emulex HBAs and CNAs on local and remote hosts.

Some new features of HBAnyware 4.0 include:

• Unified management of Emulex Fibre Channel HBAs and CNAs

• VPort monitoring and configuration

• Online boot from SAN configuration

• Online WWN management

• Flexible, customizable reporting capability

The availability of graphical user interface (GUI) and command line interface (CLI) options provides greater

flexibility with HBAnyware. The application is available for a broad set of operating systems including Linux,

Windows, Solaris and VMware ESX platforms and supports cross-platform SANs. Some of the highly valued

capabilities of HBAnyware that make it more attractive for enterprise scalability and high availability are

firmware management, with the ability to update or “flash” firmware without having to bring down the CNA

or HBA, and the ability to flash a set of adapters on remote servers with just a few mouse clicks.

Figure 15: HBAnyware 4.0 common Management Application for HBAs and CNAs that can be local or remote

Of additional value for data center management is the common driver architecture where the same driver

can be used with all generations of Emulex HBAs and CNAs. This reduces driver update errors and

simplifies administration.

HBAnyware and the Emulex common driver architecture let existing data centers seamlessly extend their Fibre Channel management processes to FCoE-attached servers, improving efficiency in a multiprotocol, multi-operating-system enterprise SAN. HBAnyware can also assign bandwidth to individual traffic types by apportioning a bandwidth share to each priority group, as shown in Figure 15.
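Priority group bandwidth assignments follow the enhanced transmission selection model (IEEE 802.1Qaz): each group is guaranteed its share when it needs it, and bandwidth a group leaves idle can be used by the others. The minimal Python sketch below models that behavior as a simple water-filling allocation. It is not the HBAnyware or adapter scheduler, and the group names, shares and demands in the example are hypothetical.

```python
from typing import Dict


def ets_allocate(link_gbps: float, shares: Dict[str, int],
                 demand_gbps: Dict[str, float]) -> Dict[str, float]:
    """Split link bandwidth among priority groups: each group is offered its
    ETS share, and bandwidth a group does not need goes to the others."""
    if sum(shares.values()) != 100:
        raise ValueError("ETS shares must add up to 100 percent")
    alloc = {group: 0.0 for group in shares}
    remaining = link_gbps
    hungry = {g for g in shares if shares[g] > 0 and demand_gbps.get(g, 0.0) > 0}
    while hungry and remaining > 1e-9:
        total = sum(shares[g] for g in hungry)
        # Offer the remaining bandwidth in proportion to the hungry groups' shares
        grants = {g: min(remaining * shares[g] / total,
                         demand_gbps[g] - alloc[g]) for g in hungry}
        for g, amount in grants.items():
            alloc[g] += amount
        remaining -= sum(grants.values())
        hungry = {g for g in hungry if demand_gbps[g] - alloc[g] > 1e-9}
    return alloc


# 10Gb/s port, 60/40 split; FCoE currently needs only 3Gb/s.
print(ets_allocate(10.0, {"FCoE": 60, "LAN": 40}, {"FCoE": 3.0, "LAN": 9.0}))
# {'FCoE': 3.0, 'LAN': 7.0}: LAN borrows the idle part of the FCoE share
```

When both groups are saturated, the same call returns the guaranteed 6Gb/s and 4Gb/s split, which is the service-level guarantee the priority group settings express.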


Conclusion

Server virtualization has been one of the key drivers of converged networks in the data center. A converged

network fully complements server virtualization and enables the roll out of on-demand services where

applications and network services are provisioned on the fly. Fibre Channel over Ethernet technology

enables network convergence in enterprise data centers and provides benefits such as infrastructure

simplification, investment protection and lowered total cost of ownership. FCoE extends these benefits

without disrupting existing architectures and operating models.

Emulex has partnered with VMware to enable FCoE-based network convergence for ESX Server environments. This document outlined how to deploy this emerging technology in an ESX environment.


VMware, Inc. 3401 Hillview Drive Palo Alto CA 94304 USA Tel 650-427-5000

www.vmware.com

Emulex Corp. 3333 Susan Street Costa Mesa CA 92626 USA Tel 714-662-5600

www.emulex.com

© Emulex Corporation. All Rights Reserved. No part of this document may be reproduced by any means or translated to any

electronic medium without prior written consent of Emulex.

Information furnished by Emulex is believed to be accurate and reliable. However no responsibility is assumed by Emulex for its use;

or for any infringements of patents or other rights of third parties which may result from its use. No license is granted by

implication or otherwise under any patent, copyright or related rights of Emulex. Emulex, the Emulex logo, LightPulse and SLI are

trademarks of Emulex.

VMware, the VMware “boxes” logo and design, Virtual SMP and VMotion are registered trademarks or trademarks of VMware, Inc.

in the United States and/or other jurisdictions. All other marks and names mentioned herein may be trademarks of their respective

companies.

09-1387
