Intel 100SWE48UF1 User Manual

INTEL® OMNI-PATH FABRIC BUILDERS CATALOG

INTEL® OMNI-PATH HOST FABRIC ADAPTER 100 SERIES

INTEL® OMNI-PATH EDGE SWITCHES 100 SERIES

INTEL® OMNI-PATH DIRECTOR CLASS SWITCHES 100 SERIES

VERSION 2.0, MAY 2017

About |

Product Overview |

Partners |

TABLE OF CONTENTS

About this Document

Product Overview

Fabric Builder Partners

Intel® Fabric Builder Partners by Category

ISV/OSV Interoperability Quick Reference

HW and Services Vendor Support Quick Reference

Partner Profiles

Document Revision History:

Revision B, Initial Release, May 2017

Contact: Lester Dimit (Fabric Ecosystem PME): lester.dimit@intel.com

Contact: Susan Bobholz (Fabric Ecosystem Manager): susan.c.bobholz@intel.com

ABOUT THIS DOCUMENT

This Fabric Builders Catalog is a resource for Intel® Omni-Path stakeholders. It gives an overview of Intel’s Omni-Path Architecture products and a brief overview of the Intel OPA-supported products and services offered by Fabric Builder partners.

Summary of What Changed

Oct. 2016: Intel® Omni-Path Fabric Builders Catalog (Version 1.0)

Preface

This catalog contains Fabric Builder ecosystem partner information for Intel® Omni-Path switches and adapters. Fabric Builder partner information has been gathered from Intel Fabric Builder records, partner websites, collateral documentation, and suppliers.

Intended Audience

This guide is a resource for developers and consultants who want to provide Intel-powered products, solutions, and services to their customers. It allows them to identify the certified high performance computing (HPC) components best suited to their needs.

How to Use this Guide

The information in this guide was collected from Intel Corporation partners and is organized as follows:

•Section 1, Product Overview: This section describes the Intel Omni-Path Architecture product line. It also lists Fabric Builder participants by category and provides a quick reference for all of the Fabric Builders in this guide.

•Section 2, Intel Fabric Builders Catalog: This section lists the Intel Fabric Builder partners alphabetically.

Different Fabric Builder partners offer different solutions, test criteria, and environments; therefore, the interoperability matrices vary from section to section.

Intel® Omni-Path Fabric Builders Catalog |

2 |


PRODUCT OVERVIEW

Intel® Omni-Path products provide world-class, cost-effective HPC Fabric solutions.

This section describes the following:

INTEL® OMNI-PATH HOST FABRIC ADAPTER 100 SERIES

INTEL® OMNI-PATH EDGE SWITCHES 100 SERIES

INTEL® OMNI-PATH DIRECTOR CLASS SWITCHES 100 SERIES


INTEL® OMNI-PATH HOST FABRIC ADAPTER 100 SERIES

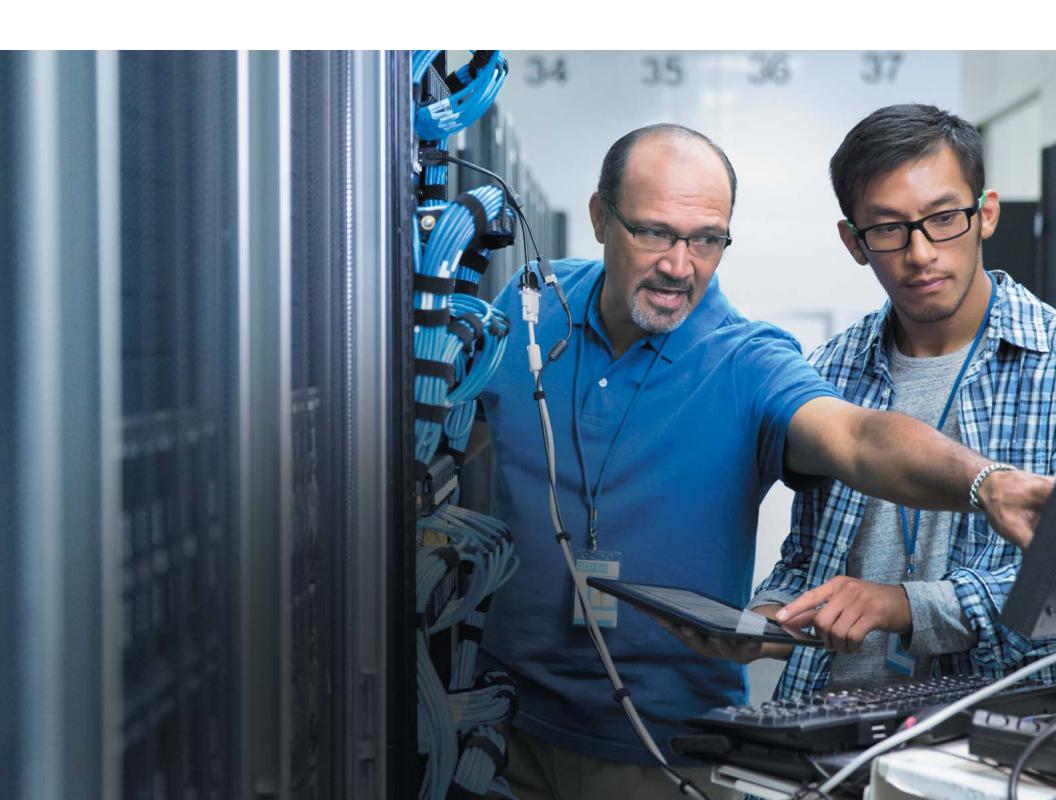

High Performance Computing (HPC) solutions require the highest levels of performance, scalability, and availability to power complex application workloads. Designed specifically for HPC, Intel® Omni-Path Host Fabric Interface (HFI) adapters, an element of the Intel® Scalable System Framework, use an advanced “on-load” design that automatically scales fabric performance with rising server core counts, making these adapters ideal for today’s increasingly demanding workloads.

Multiple Performance Levels

Two Intel® Omni-Path Host Fabric Adapter models are available to help fabric designers balance performance against cost for diverse requirements. The PCIe* x16 model supports the full 100 Gbps line rate. The PCIe x8 model runs the same 100 Gbps link rate, but its narrower PCIe connection limits actual data rates to 56 Gbps.
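As a rough illustration of why the x8 model tops out below the link rate, the sketch below compares the per-direction bandwidth of a PCIe Gen3 slot with the 100 Gbps OPA link. The PCIe figures (8 GT/s per lane, 128b/130b encoding) are standard PCIe Gen3 parameters assumed here rather than values from this catalog, and the function names are illustrative only.

```python
# Minimal sketch: compare PCIe Gen3 slot bandwidth with the 100 Gbps OPA link.
# Assumptions (not from this catalog): PCIe Gen3 runs at 8 GT/s per lane with
# 128b/130b line encoding. PCIe protocol overhead is deliberately NOT modeled.

PCIE_GEN3_GT_PER_LANE = 8.0      # giga-transfers per second, per lane, per direction
PCIE_GEN3_ENCODING = 128 / 130   # 128b/130b encoding efficiency
OPA_LINK_GBPS = 100.0            # Intel OPA link rate from the catalog


def pcie_gen3_raw_gbps(lanes: int) -> float:
    """Per-direction PCIe Gen3 bandwidth after line encoding, before protocol overhead."""
    return PCIE_GEN3_GT_PER_LANE * PCIE_GEN3_ENCODING * lanes


def bottleneck(lanes: int) -> str:
    """Report whether the host slot or the fabric link limits throughput."""
    return "PCIe" if pcie_gen3_raw_gbps(lanes) < OPA_LINK_GBPS else "OPA link"


for lanes in (16, 8):
    print(f"x{lanes}: {pcie_gen3_raw_gbps(lanes):.1f} Gbps raw, limited by {bottleneck(lanes)}")
```

The sketch stops at line encoding: an x8 slot provides about 63 Gbps per direction, and the further drop to the catalog's 56 Gbps effective rate would presumably come from PCIe protocol overhead, which is not modeled here.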

Advanced Quality of Service (QoS)

Intel Omni-Path Host Fabric Interface adapters provide the foundation for powerful and efficient traffic control. Data is segmented into 65-bit Flow Control Digits (FLITs), which are assembled into much larger Link Transfer Packets (LTPs) for efficient wire transfer. By managing traffic at the FLIT level, Intel® Omni-Path Architecture (Intel® OPA) Edge and Director switches are able to make extremely granular switching decisions to optimize latency, throughput, and resiliency more effectively for all traffic types.
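The FLIT/LTP relationship can be made concrete with a small framing calculation. This is a sketch, not Intel's implementation: the split of each 65-bit FLIT into 64 data bits plus 1 type bit, the 16 FLITs per LTP, and the 16 bits of per-LTP CRC and credit sideband are assumptions drawn from general Intel OPA architecture descriptions rather than from this catalog, and header FLITs are ignored. The name `frame` is hypothetical.

```python
# Minimal sketch of FLIT/LTP framing arithmetic (assumed parameters, see lead-in).
import math
from typing import Tuple

FLIT_BITS = 65            # FLIT size stated in the catalog
FLIT_DATA_BITS = 64       # assumption: 64 data bits + 1 type bit per FLIT
FLITS_PER_LTP = 16        # assumption: FLITs bundled into one LTP
LTP_SIDEBAND_BITS = 16    # assumption: 14-bit CRC + 2-bit credit sideband per LTP


def frame(payload_bytes: int) -> Tuple[int, int, int]:
    """Return (flits, ltps, wire_bits) for a payload; a partially filled LTP counts as full."""
    flits = math.ceil(payload_bytes * 8 / FLIT_DATA_BITS)
    ltps = math.ceil(flits / FLITS_PER_LTP)
    wire_bits = ltps * (FLITS_PER_LTP * FLIT_BITS + LTP_SIDEBAND_BITS)
    return flits, ltps, wire_bits


flits, ltps, wire_bits = frame(4096)          # a 4 KB payload, matching one MTU option
print(flits, ltps, wire_bits)                 # 512 32 33792
print(f"payload efficiency: {4096 * 8 / wire_bits:.3f}")  # 0.970
```

Under these assumptions a 4 KB payload occupies 512 FLITs in 32 LTPs, so framing costs roughly 3 percent of wire bandwidth.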

High Reliability and Resilience

With their on-load design, Intel Omni-Path HFI adapters eliminate the need for data path firmware and external memory, while maintaining all connection state information in host memory. This reduces the potential for data errors and makes the fabric inherently more resilient to adapter and fabric failures. Additional protection against errors and downtime is provided by ECC protection on all internal SRAMs and parity checking on all internal buses.

Investment Protection

Great care was taken to ease the transition from previous-generation fabric solutions to Intel OPA. The proven Open Fabrics Alliance* (OFA) software stack “just works” with the vast majority of existing HPC applications and provides an ideal foundation for future development. The on-load architecture also delivers increasing value over time by allowing fabric performance to scale automatically with ongoing advances in Intel® Xeon® processors and Intel® Xeon Phi™ coprocessors.


HFI SPECIFICATIONS

HFI Specifications and Interfaces

Bus interface

•PCI Express* Gen3 x16 or PCI Express Gen3 x8

Device type

•End point

Advanced interrupts

•MSI-X

•INTx

ASIC

•Single Intel® OP HFI ASIC

Max Data Rate

•100 Gbps – PCIe x16

•56 Gbps – PCIe x8 (effective rate of 56 Gbps determined by the PCIe x8 interface; the Intel OP link operates at up to 100 Gbps)

Virtual Lanes

•Configurable from one to eight VLs plus one management VL

MTU

•Configurable MTU size of 2 KB, 4 KB, 8 KB, or 10 KB

Interfaces

•Supports QSFP28 quad small form factor pluggable passive copper, optical transceivers, and active optical cables

Physical Specifications

Port

•One Intel OP 4X Host Fabric Interface QSFP28

LED

•Link status indicator (Green)

Software Operating Systems

•Red Hat* Enterprise Linux*

•SUSE* Linux Enterprise Server*

•CentOS*

•Scientific Linux*

•Contact your Intel representative for others

| Feature | 100HFA016LS (x16) | 100HFA018LS (x8) |
|---|---|---|
| Total adapter bandwidth (bi-dir) | 25 GB/s (100 Gb link speed) | Up to 15 GB/s (100 Gb link speed) |
| Dimensions (w x h): card | 2.713" x 6.6" | 2.713" x 6.6" |
| Dimensions (w x h): standard | 0.725" x 4.725" | 0.725" x 4.725" |
| Dimensions (w x h): low profile | 0.725" x 3.118" | 0.725" x 3.118" |
| Connector | QSFP28 | QSFP28 |
| Power (typ./max), W DC: copper | 7.4/11.7 W | 6.3/8.3 W |
| Power (typ./max), W DC: optical (Class 4 optics, 3 W max) | 10.6/14.9 W | 9.5/11.5 W |

| Intel SKU | Intel MM# | Description |
|---|---|---|
| 100HFA016LS | 948159 | Intel® Omni-Path Host Fabric Interface Adapter 100 Series 1 Port PCIe* x16 Low Profile 100HFA016LS |
| 100HFA018LS | 945670 | Intel Omni-Path Host Fabric Interface Adapter 100 Series 1 Port PCIe x8 Low Profile 100HFA018LS |


INTEL® OMNI-PATH FABRIC EDGE SWITCHES 100 SERIES

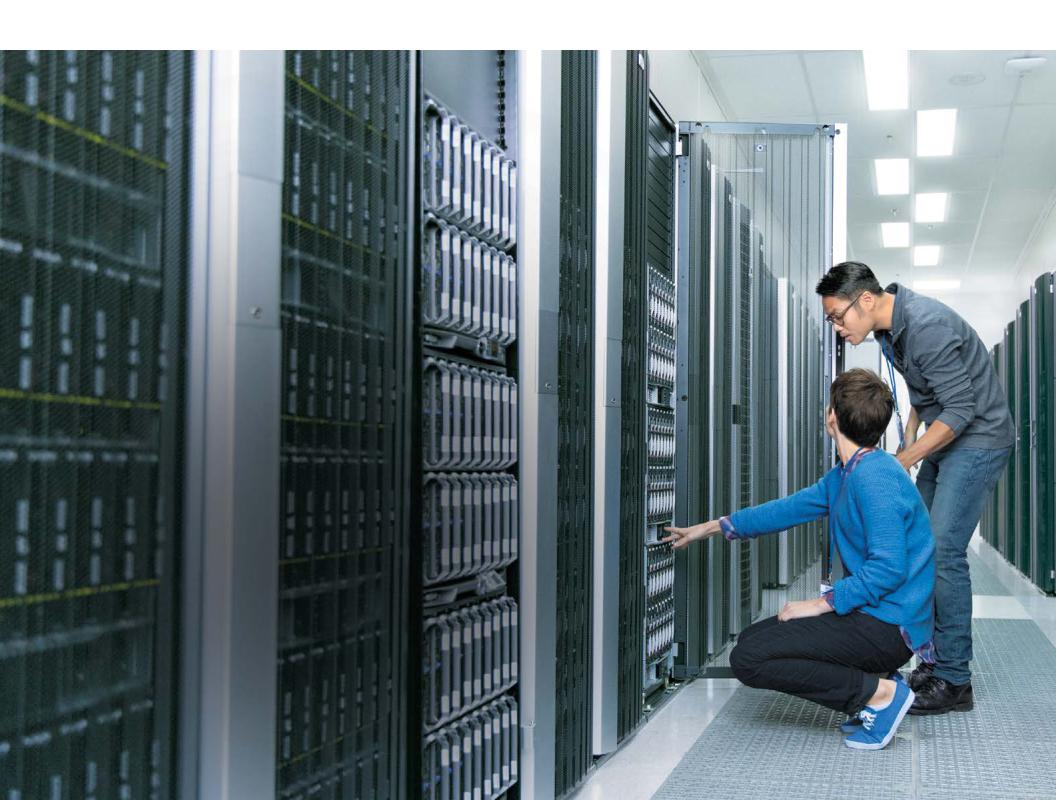

Intel® Omni-Path Fabric Edge Switches, an element of the Intel® Scalable System Framework, are part of an end-to-end product family for HPC fabrics that delivers high performance with breakthrough value. Intel® Omni-Path Architecture (Intel® OPA) builds on proven technologies from Intel® True Scale Architecture, the Cray Aries* interconnect, and open source software to provide an evolutionary on-ramp to revolutionary new fabric capabilities.

Higher Performance at Lower Cost

Intel Omni-Path Fabric Edge Switches deliver 100 Gbps port bandwidth with latency that stays low even at extreme scale. Built on second-generation Intel fabric switch silicon with a market-leading 48-port radix, these switches can lower fabric acquisition costs by as much as 50 percent, while simultaneously reducing space and power requirements.1

With these savings, you can potentially achieve higher total cluster performance within the same hardware budget to expand and accelerate your research.

Flexible Fabrics at Every Scale

Intel Omni-Path Fabric Edge Switches support HPC clusters of all sizes, from entry-level systems to supercomputers with tens of thousands of server nodes. You can use these switches in combination with Intel® Omni-Path Director Class Switches to build low-latency, multi-tier fabrics with an exceptional set of features for high-speed networking.
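One way to see how a 48-port radix reaches "tens of thousands of server nodes" is the standard fat-tree sizing rule, in which each additional switch tier multiplies reach by half the radix. The sketch assumes a full-bisection folded-Clos (fat-tree) topology built from identical switches; `max_hosts` is a hypothetical helper, and real Intel OPA deployments may use other topologies or oversubscription that change these numbers.

```python
# Minimal sketch: maximum end nodes in a full-bisection fat tree (assumed topology).


def max_hosts(radix: int, tiers: int) -> int:
    """Maximum end nodes in a fat tree of `tiers` levels built from radix-port switches."""
    if radix < 2 or radix % 2:
        raise ValueError("radix must be an even number >= 2")
    if tiers < 1:
        raise ValueError("tiers must be >= 1")
    # Top-tier switches use all ports downward; every lower tier splits its ports
    # half down / half up, so reach grows by radix/2 per tier: 2 * (radix/2)**tiers.
    return 2 * (radix // 2) ** tiers


for tiers in (1, 2, 3):
    print(tiers, max_hosts(48, tiers))
```

For 48-port switches this yields 48, 1,152, and 27,648 nodes for one, two, and three tiers, which is consistent in scale with the multi-tier fabrics described above.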

High Availability

Intel Omni-Path Fabric Edge Switches provide integrated support for high availability with advanced features such as power redundancy, component-level diagnostics and alarming, and out-of-band management. Innovative features take fabric resilience and availability to new heights without sacrificing performance. Packet Integrity Protection (PIP), for example, provides high packet reliability with latency-free error checking and link-level recovery. Dynamic Lane Scaling (DLS) maintains 75 percent of link bandwidth if a physical lane fails, so HPC workloads can complete gracefully to keep project efforts on track.
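The 75 percent figure for Dynamic Lane Scaling follows from the 4X link width: losing one of four lanes leaves three. The sketch below assumes bandwidth scales linearly with the number of active lanes; the catalog only states the single-lane-failure case, so the behavior for more failed lanes is an assumption, and `degraded_bandwidth` is a hypothetical name.

```python
# Minimal sketch of Dynamic Lane Scaling arithmetic.
# Assumptions: a 4X link (four physical lanes) whose bandwidth scales linearly
# with the number of active lanes.

LANES_PER_LINK = 4
LINK_GBPS = 100.0


def degraded_bandwidth(failed_lanes: int) -> float:
    """Bandwidth (Gbps) remaining after `failed_lanes` lanes drop out of a 4X link."""
    if not 0 <= failed_lanes < LANES_PER_LINK:
        raise ValueError("failed_lanes must be between 0 and 3")
    active = LANES_PER_LINK - failed_lanes
    return LINK_GBPS * active / LANES_PER_LINK


print(degraded_bandwidth(1))  # 75.0 Gbps, i.e. 75 percent of the link
```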

SWITCH SPECIFICATIONS

•Based on Intel® Omni-Path Switch Silicon 100 Series 48 Port ASIC

•100 Gbps per port bidirectional

•Virtual lanes: Configurable from one to eight VLs plus one management VL

•Configurable MTU size of 2 KB, 4 KB, 8 KB, or 10 KB

•Maximum multicast table size: 8,192 entries

•Maximum unicast table size: 49,151 entries

•Supports QSFP28 Quad Small Form Factor Pluggable cabling

•Passive copper or active fiber cables

Management Features

•Management Card (Optional)

•Built-in Fabric Manager

•Subnet Management Agent (SMA)

•Performance Management Agent (PMA)

•Enables Command Line Interface and Chassis Management GUI through 10/100/1000 Base-T Ethernet

•Enables Serial Console through USB Serial Port

•Supports Embedded Subnet Manager (ESM) and Performance Manager (PM)

•Enables Network Time Protocol (NTP), SNMP/MIBs, and LDAP

•FastFabric Toolset

•Fabric Management GUI

LED Status

•Twenty-four (24) or forty-eight (48) link status indicators, one per QSFP28 port (Green)

•Two (2) Ethernet indicators (Off = 10 Mb/s; Green = 100 Mb/s; Orange = 1 Gb/s)

•Two (2) Chassis indicators (Green – Status; Amber – Attention)

•Two (2) Power Supply indicators, one per supply (Green)

•One (1) Q7 Management Installed indicator (Green – Installed)

•One (1) External Management Capable indicator (Green – one or more ports can be directly connected to a Fabric Manager)

•Airflow direction (Green – front-to-back airflow)


| Feature | 100SWE48Q | 100SWE48U | 100SWE24Q | 100SWE24U |
|---|---|---|---|---|
| 100 Gb ports | 48 | 48 | 24 | 24 |
| Total system bandwidth (bi-dir) | 1.2 TB/s | 1.2 TB/s | 0.6 TB/s | 0.6 TB/s |
| Dimensions (w x h x d) | 17.3" x 1.72" x 17.15" | 17.3" x 1.72" x 17.15" | 17.3" x 1.72" x 17.15" | 17.3" x 1.72" x 17.15" |
| Reversible fan modules | 1 | 1 | 1 | 1 |
| Mgmt. modules | 1 | 0 | 1 | 0 |
| Power supplies (fixed), min/AC | 1/2 | 1/2 | 1/2 | 1/2 |
| Power (typ./max), input 100-240 VAC 50-60 Hz, copper | 189/238 W | 189/238 W | 146/179 W | 146/179 W |
| Power (typ./max), all optical, max 3 W QSFP | 356/408 W | 356/408 W | 231/264 W | 231/264 W |
| Weight, fully loaded | 6.7 kg | 6.7 kg | 6.2 kg | 6.2 kg |
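The Total System Bandwidth row appears to be simple arithmetic: ports × 100 Gbps × 2 directions, converted from bits to bytes with decimal prefixes. The sketch below reproduces the table values under that assumption; `system_bandwidth_tbytes` is an illustrative name, not an Intel tool.

```python
# Minimal sketch: reproduce "Total System Bandwidth (bi-dir)" from the port count.
# Assumption: bandwidth = ports x 100 Gbps x 2 directions, decimal (SI) units.

PORT_GBPS = 100  # per-port link rate from the switch specifications


def system_bandwidth_tbytes(ports: int) -> float:
    """Aggregate bidirectional bandwidth in TB/s for `ports` 100 Gbps ports."""
    gbits = ports * PORT_GBPS * 2   # both directions, Gb/s
    return gbits / 8 / 1000         # bits -> bytes, then GB/s -> TB/s


for model, ports in (("100SWE48", 48), ("100SWE24", 24)):
    print(model, system_bandwidth_tbytes(ports), "TB/s")  # 1.2 and 0.6 TB/s
```

The same rule gives 0.025 TB/s (25 GB/s) for a single port, matching the Total Adapter Bandwidth entry for the x16 host adapter.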

| Intel SKU | Intel MM# | Description |
|---|---|---|
| 100SWE48QF2 | 948588 | Intel® Omni-Path Edge Switch 100 Series 48 Port Managed Forward 2 PSU 100SWE48QF2 |
| 100SWE48UF2 | 948678 | Intel® Omni-Path Edge Switch 100 Series 48 Port Forward 2 PSU 100SWE48UF2 |
| 100SWE24QF2 | 945654 | Intel® Omni-Path Edge Switch 100 Series 24 Port Managed Forward 2 PSU 100SWE24QF2 |
| 100SWE24UF2 | 945655 | Intel® Omni-Path Edge Switch 100 Series 24 Port Forward 2 PSU 100SWE24UF2 |
| 100SWE48QF1 | 945662 | Intel® Omni-Path Edge Switch 100 Series 48 Port Managed Forward 1 PSU 100SWE48QF1 |
| 100SWE48UF1 | 945663 | Intel® Omni-Path Edge Switch 100 Series 48 Port Forward 1 PSU 100SWE48UF1 |
| 100SWE24QF1 | 945664 | Intel® Omni-Path Edge Switch 100 Series 24 Port Managed Forward 1 PSU 100SWE24QF1 |
| 100SWE24UR1 | 945669 | Intel® Omni-Path Edge Switch 100 Series 24 Port Reverse 1 PSU 100SWE24UR1 |
| 100SWEQ7CN1 | 945775 | Intel® Omni-Path Edge Switch Management Card 100 Series 100SWEQ7CN1 |
| 100SWEIKIT1 | 945820 | Intel® Omni-Path Edge Switch Installation Kit 100 Series 100SWEIKIT1 |

|

|

|

1Internal cost analysis based on 256-node to 2048-node clusters configured with Mellanox* FDR and EDR InfiniBand* products. Mellanox component pricing from kernelsoftware.com. Prices as of November 3, 2015. Compute node pricing based on Dell PowerEdge R730 server from www.dell.com. Prices as of May 26, 2015. Intel® OPA (x8) utilizes a 2:1 oversubscribed fabric. Intel® OPA pricing based on estimated reseller pricing using projected Intel MSRP pricing on day of launch.


INTEL® OMNI-PATH DIRECTOR CLASS SWITCHES 100 SERIES

Intel® Omni-Path Director Class Switches, an element of the Intel® Scalable System Framework, are part of an end-to-end product family for HPC fabrics that delivers high performance with breakthrough cost models. Intel® Omni-Path Architecture (Intel® OPA) builds on proven technologies from Intel® True Scale Architecture,2 the Cray Aries* interconnect, and open source software to provide an evolutionary on-ramp to revolutionary new fabric capabilities.

Higher Performance at Lower Cost

Intel Omni-Path Director Class Switches deliver 100 Gbps port bandwidth with latency that stays low even at extreme scale. Based on new Intel 48-port switch silicon, these switches can lower fabric acquisition costs by as much as 50 percent,2 while simultaneously reducing space and power requirements.3 With these savings, you can potentially achieve higher total cluster performance within the same hardware budget to expand and accelerate your research.

Flexible Fabrics at Every Scale

Intel Omni-Path Director Class Switches support HPC clusters of all sizes, from mid-level clusters to supercomputers with tens of thousands of servers. You can use these switches in combination with Intel Omni-Path Fabric Edge Switches to build low-latency, multi-tier fabrics with an exceptional set of features for high-speed networking.

Modular Design

Intel OPA leaf, spine, power, cooling, and management modules are common across chassis sizes, providing the flexibility needed to deploy and grow HPC environments efficiently and cost effectively.

High Availability

Intel OPA switches provide integrated support for high availability with advanced features such as power, fabric, and management module redundancy, component-level diagnostics and alarming, and out-of-band management. Innovative features take fabric resilience and availability to new heights without sacrificing performance. Packet Integrity Protection (PIP), for example, provides high packet reliability with latency-free error checking and link-level recovery. Dynamic Lane Scaling (DLS) maintains 75 percent of link bandwidth if a physical lane fails, so HPC workloads can complete gracefully to keep research efforts on track.

SWITCH SPECIFICATIONS

•Based on Intel® Omni-Path Switch Silicon 100 Series 48 Port ASIC

•100 Gbps per port bidirectional

•Virtual lanes: Configurable from one to eight VLs plus one management VL

•Configurable MTU size of 2 KB, 4 KB, 8 KB, or 10 KB

•Maximum multicast table size: 8,192 entries

•Maximum unicast table size: 49,151 entries

•Supports QSFP28 Quad Small Form Factor Pluggable cabling

•Passive copper or active fiber cables

Management Features

•Management Module with optional redundancy

•Built-in Fabric Manager

•Subnet Management Agent (SMA)

•Performance Management Agent (PMA)

•Enables Command Line Interface and Chassis Management GUI through 10/100/1000 Base-T Ethernet

•Enables Serial Console through USB Serial Port

•Supports Embedded Subnet Manager (ESM) and Performance Manager (PM)

•Enables Network Time Protocol (NTP), SNMP/MIBs, and LDAP

•FastFabric Toolset

•Fabric Management GUI

2Intel technologies’ features and benefits depend on system configuration and may require enabled hardware, software or service activation. Learn more at Intel.com, or from the OEM or retailer.

3Internal cost analysis based on 256-node to 2048-node clusters configured with Mellanox* FDR and EDR InfiniBand* products. Mellanox component pricing from kernelsoftware.com. Prices as of November 3, 2015. Compute node pricing based on Dell PowerEdge R730 server from www.dell.com. Prices as of May 26, 2015. Intel® OPA (x8) utilizes a 2:1 oversubscribed fabric. Intel® OPA pricing based on estimated reseller pricing using projected Intel MSRP pricing on day of launch.


| Feature | 100SWD24 | 100SWD06 |
|---|---|---|
| 100 Gbps ports | 32 up to 768 | 32 up to 192 |
| Total system bandwidth (bi-dir) | 19.2 TB/s | 4.8 TB/s |
| Dimensions (W x H x D) | 17.6" x 35.0" x 29.5" | 17.6" x 12.2" x 29.5" |
| Leaf modules (hot swap) | 1-24 | 1-6 |
| Spine modules (hot swap) | Up to 8 | Up to 2 |
| Fan modules (hot swap) | 9 | 3 |
| Mgmt. modules (hot swap) | 1/2 | 1/2 |
| Power supplies (hot swap), min/DC/AC | 6/7/12 | 2/3/4 |
| Power (typ./max), input 180-260 VAC 50-60 Hz, copper | 6.8/8.9 kW | 1.8/2.3 kW |
| Power (typ./max), all optical (Class 4, 3 W max) | 9.5/11.6 kW | 2.4/3 kW |
| Weight, fully loaded | 265 kg | 86 kg |
| Status LEDs** (Ethernet/DC_On) | 1/2 | 1/2 |

**Status LEDs: Ethernet Activity (Green), Ethernet Speed (Green/Orange) / DC_On (Green on push button)
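Because a director's port count scales with its installed leaf modules, both the port range and the Total System Bandwidth row can be derived from the leaf count. This sketch assumes 32 ports per 100SWDLF32Q leaf module (as its SKU description states) and the same ports × 100 Gbps × 2 directions bandwidth rule; `director_capacity` and the chassis keys are illustrative, not part of any Intel tool.

```python
# Minimal sketch: director ports and bandwidth as a function of installed leaf modules.
from typing import Tuple

PORTS_PER_LEAF = 32   # 100SWDLF32Q: "Leaf Module 100 Series 32 port"
PORT_GBPS = 100
LEAF_SLOTS = {"100SWD24": 24, "100SWD06": 6}  # leaf-module slots per chassis, from the table


def director_capacity(chassis: str, leaves: int) -> Tuple[int, float]:
    """Return (ports, bidirectional TB/s) for a chassis with `leaves` leaf modules installed."""
    slots = LEAF_SLOTS[chassis]
    if not 1 <= leaves <= slots:
        raise ValueError(f"{chassis} accepts 1-{slots} leaf modules")
    ports = leaves * PORTS_PER_LEAF
    return ports, ports * PORT_GBPS * 2 / 8 / 1000


for chassis, slots in LEAF_SLOTS.items():
    # minimum (one leaf) and fully populated configurations
    print(chassis, director_capacity(chassis, 1), director_capacity(chassis, slots))
```

A fully populated 24-slot chassis yields 768 ports and 19.2 TB/s, and a 6-slot chassis yields 192 ports and 4.8 TB/s, matching the table; a single leaf gives the 32-port minimum.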

| Intel SKU | Intel MM# | Description |
|---|---|---|
| 100SWD06B1N | 945676 | Intel® Omni-Path Director Class Switch 100 Series 6 Slot Base 1MM 100SWD06B1N |
| 100SWD24B1N | 945677 | Intel® Omni-Path Director Class Switch 100 Series 24 Slot Base 1MM 100SWD24B1N |
| 100SWDMGTSH | 945776 | Intel® Omni-Path Director Switch Management Module 100 Series 100SWDMGTSH |
| 100SWDLF32Q | 945777 | Intel® Omni-Path Director Switch Leaf Module 100 Series 32 port 100SWDLF32Q |
| 100SWDSPINE | 945778 | Intel® Omni-Path Director Switch Spine Module 100 Series 100SWDSPINE |
| 100SWDFAN01 | 945779 | Intel® Omni-Path Director Switch Fan Module 100 Series 100SWDFAN01 |
| 100SWDPS001 | 945780 | Intel® Omni-Path Director Switch Power Supply Module 100 Series 100SWDPS001 |
| 100SWDLFFPN | 945781 | Intel® Omni-Path Director Switch Leaf Filler Panel 100 Series 100SWDLFFPN |
| 100SWDSPFPN | 945834 | Intel® Omni-Path Director Switch Spine Filler Panel 100 Series 100SWDSPFPN |
| 100SWDMSFPN | 945835 | Intel® Omni-Path Director Switch Management Filler Panel 100 Series 100SWDMSFPN |
| 100SWDPSFPN | 945870 | Intel® Omni-Path Director Switch Power Supply Filler Panel 100 Series 100SWDPSFPN |
| 100SWDIKT06 | 945832 | Intel® Omni-Path Director Switch Installation Kit 100 Series 6 Slot 100SWDIKT06 |
| 100SWDIKT24 | 945833 | Intel® Omni-Path Director Switch Installation Kit 100 Series 24 Slot 100SWDIKT24 |


FABRIC BUILDER PARTNERS

PARTNERS BY CATEGORY

ISV/OSV INTEROPERABILITY QUICK REFERENCE

HW AND SERVICES VENDOR SUPPORT QUICK REFERENCE

PARTNER PROFILES


INTEL® FABRIC BUILDER PARTNERS BY CATEGORY

This table lists the alliance partners by category, including each partner's vertical market type and applications.

| Vertical market type | Fabric Builder partner | Application |
|---|---|---|
| HW | | |
| Cabling & Connectivity | Amphenol FCI | UltraPort 100G QSFP ganged & stacked board connectors |
| | | 100G QSFP Omni-Path copper cable assemblies |
| | | 100G QSFP Omni-Path Active Optical Cables (AOCs) |
| | | Cable direct QSFP to LEC flyover cable assembly |
| | | PwrBlade ULTRA® |
| | | PwrMAX® |
| Cabling & Connectivity | Molex | zQSFP* Omni-Path Cages and Cables |
| | | Impact* Backplane System |
| | | EXTreme Power* |
| Cabling & Connectivity | TE Connectivity | STRADA Whisper* |
| Storage | Data Direct Networks (DDN) | SFA14K*/SFA14KE* High Performance Block Storage |
| | | DDN ES14K – Scale Out Lustre* Appliance |
| | | DDN GS14K – Scale Out Spectrum Scale (GPFS) Appliance |
| Storage | Mangstor | NX-Series* of storage array products |
| Storage | SGI | SGI ICE* |
| | | SGI Rackable* |
| | | SGI UV* |
| Storage | Seagate | ClusterStor L300N |
| | | ClusterStor L300 |
| | | ClusterStor G300N |
| OEM | Cray | Cray CS400-AC™ and CS400-LC™ Cluster Supercomputers |
| OEM | Dell | Dell Switch* |
| | | Dell PowerEdge Server* |
| OEM | Hewlett Packard Enterprise | HPE ProLiant Gen 9 Apollo servers* |
| | | HPE ProLiant Gen 9 DL servers* |


INTEL® FABRIC BUILDER PARTNERS BY CATEGORY, CONTINUED

| Vertical market type | Fabric Builder partner | Application |
|---|---|---|
| HW, continued | | |
| OEM | Lenovo | Lenovo System x servers* |
| | | Lenovo NeXtScale servers* |
| | | Lenovo ThinkServer* |
| | | Lenovo Solution for GPFS Storage Server* (GSS) |
| | | Lustre*-based storage solution |
| | | Lenovo Scalable Infrastructure* |
| OEM | Penguin Computing | Relion and Tundra ES server solutions* |
| OEM | Supermicro | TwinPro Server Solution* |
| | | FatTwin Server Solution* |
| | | Intel® Xeon Phi™ processor Server Solution |
| Channel | Advanced Clustering Technologies | Custom, turnkey HPC clusters, servers, storage solutions, and workstations |
| Channel | Aspen Systems | HPC Cluster solutions (Compute, Storage) and Workstations |
| Channel | Atipa Technologies | Atipa Altezza AT228* server series |
| | | Atipa Visione series* KNL-based accelerator servers |
| Channel | Calligo Technologies | HPC Cluster solutions (Compute, Storage, Fabric) |
| | | Intel® Lustre*-based Storage systems |
| | | Code Modernization |
| | | Workshops on Parallel Programming (Basic/Advanced) |
| | | Intel Code Modernization Workshop |
| | | Intel® Lustre* Parallel File System |
| | | Intel® Omni-Path Architecture |
| Channel | Cirrascale | Cirrascale GX4 |
| | | Cirrascale GX8 |
| | | Cirrascale GB5600 |
| | | Cirrascale VB1600 |
| Channel | Colfax International | Intel® Omni-Path Host Fabric Adapter |
| | | Intel® Omni-Path Edge Switch |
| Channel | Core Information Technology (COIT) | HPC Cluster Systems based on Intel solutions |


INTEL® FABRIC BUILDER PARTNERS BY CATEGORY, CONTINUED

| Vertical market type | Fabric Builder partner | Application |
|---|---|---|
| HW, continued | | |
| Channel | Dalco | DALCO High Performance Cluster |
| | | DALCO GPU AI and deep learning |
| | | DALCO Scalable Flash Storage Modules |
| | | DALCO Storage Solutions |
| | | DALCO Workstations |
| Channel | E4 Computer Engineering | E4 1/2/3/4U Servers |
| | | E4 Workstation |
| | | E4 High Density Server |
| | | E4 GPU Server |
| | | E4 Switches |
| Channel | Exalit | G10 cluster, R2608, X4000, X8000 |
| Channel | Exxact | Exxact Intel® Xeon Phi™ processors, HPC, and GPU Clusters |
| Channel | HPC Korea | Thunderbolt™ Cluster Solution |
| Channel | Megware | SlideSX® - HPC Compute Platform |
| | | Intel Xeon Server and Twin² Compute Nodes |
| | | Megware HPC Compute Cluster with Cluster Ready |
| Channel | MyungIn Inno | HPC Cluster Systems based on Intel solutions |
| Channel | PSSC Labs | CloudOOP Big Data Servers |
| | | PowerServe HPC Servers |
| | | CloudSeek Database Servers |
| | | SureStore Storage Servers |
| | | PowerWulf Cluster |
| | | Parallux Storage Cluster |
| Channel | RAID Media Systems GmbH | HPC clusters and servers, storage and backup systems |
| | | Intel® Compute Blocks |
| Channel | Sandia Systems | Sandia Tiger* Cluster System |
| Channel | Tera Tec | HPC Cluster Systems based on Intel solutions |


INTEL® FABRIC BUILDER PARTNERS BY CATEGORY, CONTINUED

| Vertical market type | Fabric Builder partner | Application |
|---|---|---|
| HW, continued | | |
| Channel | Tyrone Systems | Tyrone® Servers with Intel® Xeon® and Intel® Xeon Phi™ Processors |
| | | HPC Cluster Solutions |
| | | PFS Storage Solutions |
| ISV (Middleware) | | |
| Automotive/Aerospace | Software Cradle | scSTREAM/HeatDesigner*, SC/Tetra/scFLOW* |
| Manufacturing | Altair | HyperWorks*, PBSWorks* |
| Manufacturing | Ansys | CFX, Fluent |
| Manufacturing | COMSOL | Multiphysics, Server |
| Manufacturing | Livermore Software Technology Corp (LSTC) | LS-DYNA R9.1.0 revision 113698 |
| Manufacturing | Siemens PLM | STAR-CCM+ v12.04 and later |
| Energy | Flow Science | FLOW-3D/MP |
| File System | BeeGFS | BeeGFS |
| Management | Bright Computing | Bright Cluster Manager* for HPC |
| Programming Interface | Fraunhofer ITWM | GPI |
| Simulation/Modeling | CST | CST Studio Suite |
| OSV | | |
| OS | Red Hat | Red Hat Enterprise Linux* |
| OS | SUSE | SUSE Linux Enterprise Server* |
| Services | | |
| Consulting | System Fabric Works | System integrator and reseller for HPC solutions using Debian Linux* |
| Consulting | Whiteklay | HPC Consulting Services |


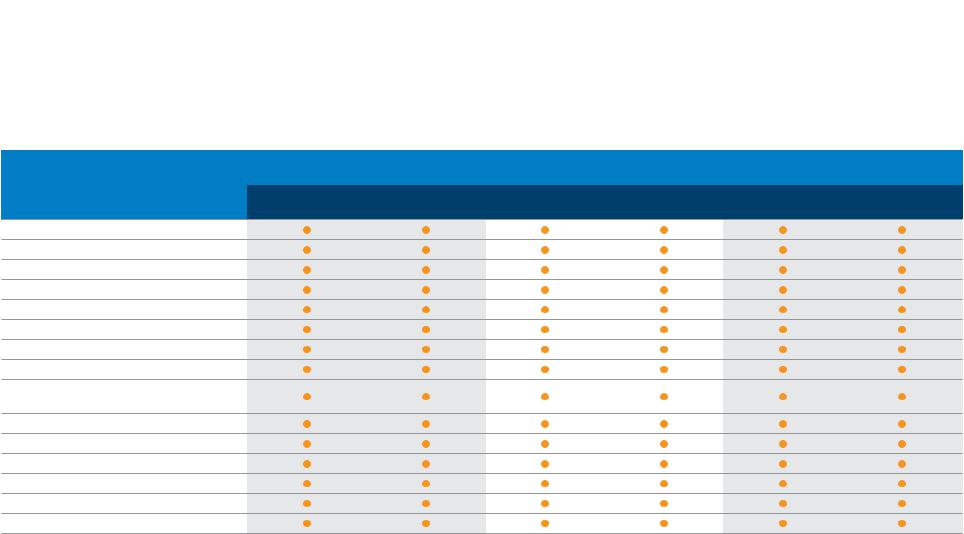

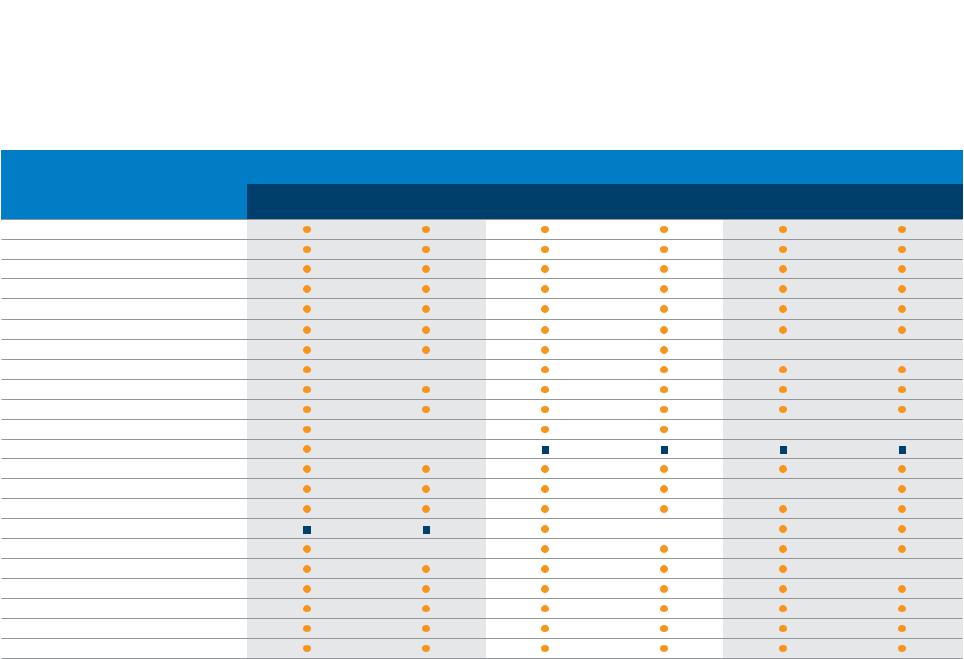

ISV/OSV INTEROPERABILITY QUICK REFERENCE

This table provides a summary of the ISV/OSV interoperability found in this catalog.

Product columns:

•Intel® Omni-Path Host Fabric Adapter: HFA 100 Series 1 Port PCIe* x16; HFA 100 Series 1 Port PCIe x8

•Intel® Omni-Path Edge Switch: 100 Series 48 Port; 100 Series 24 Port

•Intel® Omni-Path Director Class Switches: 100 Series 24 Slot; 100 Series 6 Slot

Fabric Builder partner:

Altair

Ansys

BeeGFS

Bright Computing

COMSOL

CST (Computer Simulation Technology)

Flow Science

Fraunhofer ITWM

Livermore Software Technology Corporation (LSTC)

Red Hat

Siemens PLM

Software Cradle Co., Ltd.

SUSE

System Fabric Works

Whiteklay


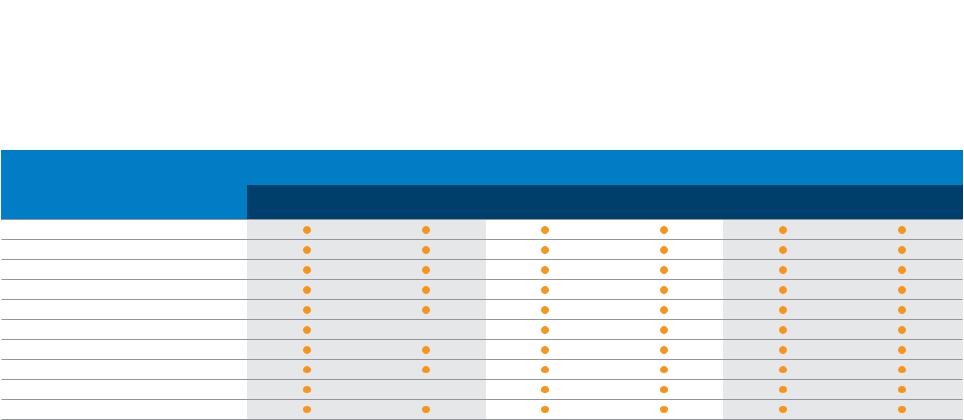

HW AND SERVICES VENDOR SUPPORT QUICK REFERENCE

This table provides a summary of HW Vendor support of Intel OPA found in this catalog.

Product columns:

•Intel® Omni-Path Host Fabric Adapter: HFA 100 Series 1 Port PCIe* x16; HFA 100 Series 1 Port PCIe x8

•Intel® Omni-Path Edge Switch: 100 Series 48 Port; 100 Series 24 Port

•Intel® Omni-Path Director Class Switches: 100 Series 24 Slot; 100 Series 6 Slot

Fabric Builder partner:

Advanced Clustering Technologies Inc.

Amphenol FCI

Aspen Systems LLC

Atipa Technologies

Calligo Technologies

Cirrascale

Colfax Customized Solutions

Core Information Technology (COIT)

Cray

Dalco

DDN (Data Direct Networks)

Dell

E4 Computer Engineering

Exalit

Exxact

Hewlett Packard Enterprise

HPC Korea

Lenovo

Mangstor

Megware

Molex

MyungIn Inno

OEM Branded

OEM Branded


HW AND SERVICES VENDOR SUPPORT QUICK REFERENCE, CONTINUED

This table provides a summary of HW Vendor support of Intel OPA found in this catalog.

Product columns:

•Intel® Omni-Path Host Fabric Adapter: HFA 100 Series 1 Port PCIe* x16; HFA 100 Series 1 Port PCIe x8

•Intel® Omni-Path Edge Switch: 100 Series 48 Port; 100 Series 24 Port

•Intel® Omni-Path Director Class Switches: 100 Series 24 Slot; 100 Series 6 Slot

Fabric Builder partner:

Penguin Computing

PSSC Labs

RAID Media Systems GmbH

Sandia Systems

Seagate

SGI

Supermicro

TE Connectivity

Tera Tec

Tyrone Systems


CATEGORY: HARDWARE

VERTICAL: CHANNEL

ADVANCED CLUSTERING TECHNOLOGIES

About Advanced Clustering Technologies

Advanced Clustering Technologies builds customized, turn-key high-performance computing (HPC) solutions to meet a wide array of computational challenges. For 15 years Advanced Clustering has been developing systems for some of the most prestigious national laboratories, universities, and corporations.

Whether you need a cluster, server, storage solution, or workstation, Advanced Clustering’s technical and sales teams can build the ideal system for your needs. Our team has more than 50 years of combined industry experience and comprehensive knowledge in the areas of cluster topologies and cluster configurations.

Advanced Clustering Technologies also offers cloud HPC services with ACTnowHPC, the on-demand, pay-as-you-go cloud solution that features the latest technology on bare metal hardware.

Advanced Clustering Technologies and Intel

Advanced Clustering Technologies offers custom, turnkey HPC clusters, servers, storage solutions and workstations featuring Intel® Omni-Path Architecture.

Advanced Clustering Technologies and Intel® OPA Interoperability Matrices

The solutions in the following matrix have been provided by or obtained from Advanced Clustering Technologies.

COMPONENT | DETAIL
Advanced Clustering Technologies Products | We offer custom, turnkey HPC clusters, servers, storage solutions, and workstations
Operating Systems | Linux*
Intel® OPA Host Fabric Adapters | Intel® Omni-Path Host Fabric Interface Adapter 100 Series 1 Port PCIe* x16
 | Intel® Omni-Path Host Fabric Interface Adapter 100 Series 1 Port PCIe x8
Intel® OPA Switches | Intel® Omni-Path Edge Switch 100 Series 48 port
 | Intel® Omni-Path Edge Switch 100 Series 24 port

Advanced Clustering Technologies Product Interoperability with Intel® Omni-Path Host Fabric Adapters and Switches Matrix


Contact Name: Jim Paugh

Address: 3148 Roanoke Road, Kansas City, MO 64111

Email: jpaugh@advancedclustering.com

Phone: 913-643-0310

Web: www.advancedclustering.com


CATEGORY: ISV | VERTICAL: MANUFACTURING

ALTAIR (HYPERWORKS)

About Altair

Altair is focused on the development and broad application of simulation technology to synthesize and optimize designs, processes, and decisions for improved business performance. Privately held with more than 2,600 employees, Altair is headquartered in Troy, Michigan, USA and operates more than 45 offices throughout 22 countries. Today, Altair serves more than 5,000 corporate clients across broad industry segments.

Altair and Intel

Altair® HyperWorks® is the most comprehensive, open-architecture CAE simulation platform in the industry, offering the best technologies to design and optimize high-performance, weight-efficient, and innovative products. HyperWorks includes best-in-class modeling, linear and nonlinear analysis, structural optimization, fluid and multi-body dynamics simulation, visualization, and data management solutions.

AcuSolve is Altair’s most powerful Computational Fluid Dynamics (CFD) tool, providing users with a full range of physical models. Simulations involving flow, heat transfer, turbulence, and non-Newtonian materials are handled with ease by AcuSolve’s robust and scalable solver technology. These well-validated physical models are delivered with unmatched accuracy on fully unstructured meshes. This means less time spent building meshes and more time spent exploring your designs.

FEKO is a comprehensive computational electromagnetics (CEM) software package used widely in the telecommunications, automotive, aerospace, and defense industries. FEKO offers several frequency- and time-domain EM solvers under a single license. Hybridization of these methods enables the efficient analysis of a broad spectrum of EM problems, including antennas, microstrip circuits, RF components, and biomedical systems, the placement of antennas on electrically large structures, the calculation of scattering, and the investigation of electromagnetic compatibility (EMC).

RADIOSS is a leading structural analysis solver for highly nonlinear problems under dynamic loadings. It is used across all industries worldwide to improve the crashworthiness, safety, and manufacturability of structural designs. For over 20 years, RADIOSS has established itself as a leader and an industry standard for automotive crash, drop and impact analysis, terminal ballistics, blast and explosion effects, and high-velocity impacts.

OptiStruct is an industry-proven, modern structural analysis solver for linear and nonlinear problems under static and dynamic loadings. It is the market-leading solution for structural design and optimization. Based on finite-element and multi-body dynamics technology, and through advanced analysis and optimization algorithms, OptiStruct helps designers and engineers rapidly develop innovative, lightweight, and structurally efficient designs.

Contact Name: Piush Patel

Address: 1820 East Big Beaver Road, Troy, Michigan 48083

Email: ppatel@altair.com

Phone: 512-470-7209

Fax: 248-614-2411

Web: www.altair.com

Altair and Intel® OPA Interoperability Matrices

The solutions in the following matrix have been provided by or obtained from Altair.

COMPONENT | DETAIL
Altair Products | AcuSolve
 | FEKO
 | RADIOSS
 | OptiStruct
Operating Systems | RHEL 6.X, SLES 11.X, and CentOS 6.X
Intel® OPA Host Fabric Adapters | Intel® Omni-Path Host Fabric Interface Adapter 100 Series 1 Port PCIe* x16
 | Intel® Omni-Path Host Fabric Interface Adapter 100 Series 1 Port PCIe x8
Intel® OPA Switches | Intel® Omni-Path Edge Switch 100 Series 48 port
 | Intel® Omni-Path Edge Switch 100 Series 24 port
 | Intel® Omni-Path Director Class Switches 100 Series 24 Slot
 | Intel® Omni-Path Director Class Switches 100 Series 6 Slot

Altair Product Interoperability with Intel® Omni-Path Host Fabric Adapters and Switches Matrix


CATEGORY: ISV | VERTICAL: MANUFACTURING

ALTAIR (PBS WORKS)

About Altair

Altair is focused on the development and broad application of simulation technology to synthesize and optimize designs, processes, and decisions for improved business performance. Privately held with more than 2,600 employees, Altair is headquartered in Troy, Michigan, USA and operates more than 45 offices throughout 22 countries. Today, Altair serves more than 5,000 corporate clients across broad industry segments.

Altair and Intel

The PBS Works workload management suite is used by thousands of organizations worldwide to simplify the administration and use of HPC clusters, clouds, and supercomputing environments. The suite comprises PBS Professional, a job scheduler and workload manager; Compute Manager and Display Manager, web applications for job management and remote visualization; PBS Analytics, a web-based portal for visualizing historical HPC resource usage to optimize ROI; and Altair Software Asset Optimization, which enables visualization and analysis of global software inventories and utilization rates.

PBS Professional software optimizes job scheduling and workload management in high-performance computing (HPC) environments—clusters, clouds, and supercomputers—improving system efficiency and people’s productivity. Built by HPC people for HPC people, PBS Pro™ is fast, scalable, secure, and resilient, and supports all modern infrastructure, middleware, and applications.

Contact Name: Piush Patel

Address: 1820 East Big Beaver Road, Troy, Michigan 48083

Email: ppatel@altair.com

Phone: 512-470-7209

Fax: 248-614-2411

Web: www.altair.com

Altair and Intel® OPA Interoperability Matrices

The solutions in the following matrix have been provided by or obtained from Altair.

COMPONENT | DETAIL
Altair Products | PBS Professional SW
Operating Systems | RHEL 7.X, SLES 12.X, and CentOS 7.X
Intel® OPA Host Fabric Adapters | Intel® Omni-Path Host Fabric Interface Adapter 100 Series 1 Port PCIe* x16
 | Intel® Omni-Path Host Fabric Interface Adapter 100 Series 1 Port PCIe x8
Intel® OPA Switches | Intel® Omni-Path Edge Switch 100 Series 48 port
 | Intel® Omni-Path Edge Switch 100 Series 24 port
 | Intel® Omni-Path Director Class Switches 100 Series 24 Slot
 | Intel® Omni-Path Director Class Switches 100 Series 6 Slot

Altair Product Interoperability with Intel® Omni-Path Host Fabric Adapters and Switches Matrix


CATEGORY: HARDWARE | VERTICAL: CABLING & CONNECTIVITY

AMPHENOL

About Amphenol

At Amphenol, we design and manufacture a wide array of high-tech connector solutions for market applications including data/telecommunications, data storage and servers, consumer electronics, medical, industrial and instrumentation, automotive and motorized vehicles, and renewable energy.

Amphenol and Intel

UltraPort Board Connectors
Amphenol’s UltraPort™ QSFP+ board connector system consists of 38-position, 0.8 mm pitch connectors in each port, designed to support four lanes of 25G signal transmission. Resonance-dampening features in the design result in superior signal integrity performance. Connectors are offered in both ganged and stacked configurations with light pipes and heat sinks.

100G QSFP Omni-Path Cables
Both 100G QSFP passive copper cables and Omni-Path-compliant active optical cable (AOC) portfolios are offered, addressing cabling interconnect needs from 0.5 meters up to 100 meters. Copper cables are available in 30 and 26 AWG sizes to optimize signal integrity while balancing the need to minimize cable bulk and increase system airflow. The AOCs are available in lengths from 1 meter up to 100 meters and offer low power consumption and superior data transmission properties.

Amphenol and Intel® OPA Interoperability Matrices

The solutions in the following matrix have been provided by or obtained from Amphenol.

COMPONENT | DETAIL
Amphenol Products | UltraPort 100G QSFP ganged and stacked board connectors
 | 100G QSFP Omni-Path copper cable assemblies
 | 100G QSFP Omni-Path Active Optical Cables (AOCs)
 | Cable Direct QSFP to LEC flyover cable assembly
 | PwrBlade ULTRA®
 | PwrMAX®
Intel® OPA Host Fabric Adapters | Intel® Omni-Path Host Fabric Interface Adapter 100 Series 1 Port PCIe* x16
 | Intel® Omni-Path Host Fabric Interface Adapter 100 Series 1 Port PCIe x8
Intel® OPA Switches | Intel® Omni-Path Edge Switch 100 Series 48 port
 | Intel® Omni-Path Edge Switch 100 Series 24 port
 | Intel® Omni-Path Director Class Switches 100 Series 24 Slot
 | Intel® Omni-Path Director Class Switches 100 Series 6 Slot

Amphenol Product Interoperability with Intel® Omni-Path Host Fabric Adapters and Switches Matrix

Contact Name: Jim David

Address: 825 Old Trail Road

Etters, PA 17319

Email: jim.david@fci.com

Phone: 1-717-938-7841

Fax: 1-717-938-7883

Web: www.fci.com

IFP Flyover Cable Assembly
A flyover cable assembly supporting 50G PAM4 performance, using an LEC connector as the CPU interface and a Cable Direct QSFP (CD-QSFP) connector to mate with an industry-standard QSFP external plug. Cable lengths from 5 inches to 17 inches are offered in 30 AWG.

PwrBlade ULTRA®
The PwrBlade ULTRA® connector is the newest addition to the PwrBlade® product line. This new design offers an overall height reduction of 24% to reduce airflow impedance in high-density power supplies. Three contact choices are available: high power, low power, and signal. The use of ultra-high-conductivity materials and a new conductive plating results in the lowest-profile power distribution connector capable of delivering more than 200 amps per linear inch.

PwrMAX®
The PwrMAX® connector family offers a compact means of connecting up to 100 A of DC power in modern system architectures that require high density and high current. Configurations include coplanar, backplane, mezzanine, and orthogonal. The PwrMAX® family includes some of the highest-density power-plus-signal blind-mate connectors on the market today.
